News & Media

Archive

Latest coverage from the Starling Lab.

Data Integrity in the AI Era: Key 2026 Legislation to Watch

Navigate the complex landscape of AI transparency with our guide to upcoming synthetic media laws. Learn how the EU, US, and China are regulating deepfakes and content provenance.

May 11, 2026

Welcoming Fred Grinstein as a 2026 Starling Lab Fellow

We are delighted to announce that Fred Grinstein has joined Starling Lab as a 2026 Fellow, where he continues his research at the intersection of documentary practice, generative AI, and media authenticity.

Mar 3, 2026

After the Rubicon: AI, Creators, and the Design of Authenticity in Documentary Media

AI is now embedded across the non-fiction content ecosystem: in historical documentary broadcast series, festival films, independent shorts, and throughout the contemporary creator economy spanning YouTube, Instagram, TikTok, X, and other social video platforms. What was once speculative has become common practice. The question has shifted from whether these tools belong in documentary to how they are being used, by whom, and with what kinds of disclosure and care.

Mar 3, 2026

How AI Is Accelerating Our Flawed Relationship With Documentary Truth

The discomfort many of us are experiencing now is not simply about fake videos. It reflects a growing awareness that the infrastructure we assumed was holding non-fiction media together may never have fully existed. What sustained trust instead was a fragile system of consensus built from institutions, conventions, and shared habits of interpretation. It went unexamined because it worked well enough.

Mar 3, 2026

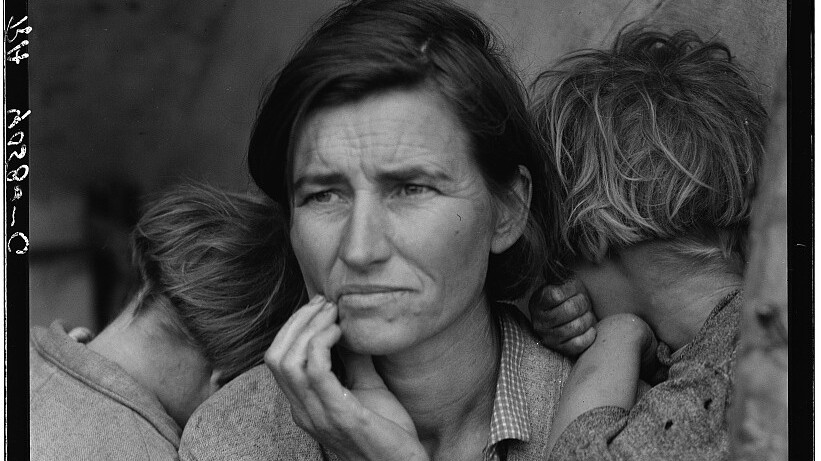

World Model News: Walking Around Inside a Photograph with Google DeepMind Genie 3

I fed a 1930s Dust Bowl photograph of migrant workers by Dorothea Lange into the latest iteration of Google DeepMind’s Project Genie and within seconds I was walking around inside it. A shack lay ahead. I turned around and the scene behind me (a scene that doesn't exist in the original photograph) was already there, rendered in dusty period-accurate detail, all in keeping with the source photo.

Feb 2, 2026

UN Report Cites Starling Lab Submission on AI Surveillance Risks

A new UN position paper references our research on the dangers of AI in counter-terrorism, recognizing our call for ethical guardrails in authenticity infrastructure.

Feb 2, 2026

Apple SHARP: When Any Photograph Becomes 3D

I've been experimenting with 3D reconstruction from archival photographs for the past year at Spatial Lab, and when we started in early 2025, I wasn't sure high-quality single-image-to-3D was even possible. Gradually I developed processes with diffusion models that created convincing 3D scenes from 2D images, but they were painstaking manual processes that took hours to complete. Now, with Apple's SHARP, there is a fast, open-source, image-to-3D process freely available.

Jan 29, 2026

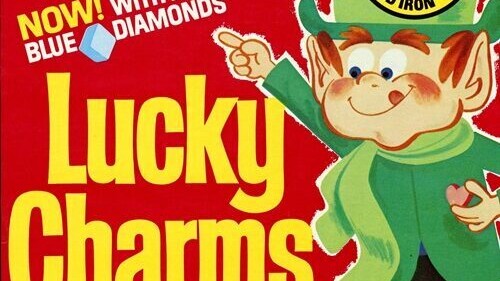

What Should a Nutrition Label for Synthetic Media Say?

At Starling Lab, we've been prototyping what we call a "nutrition label" for synthetic media, intended to be a standardized way of communicating what audiences are actually looking at. After months of experiments and dead ends, here's where our thinking has landed.

Jan 29, 2026

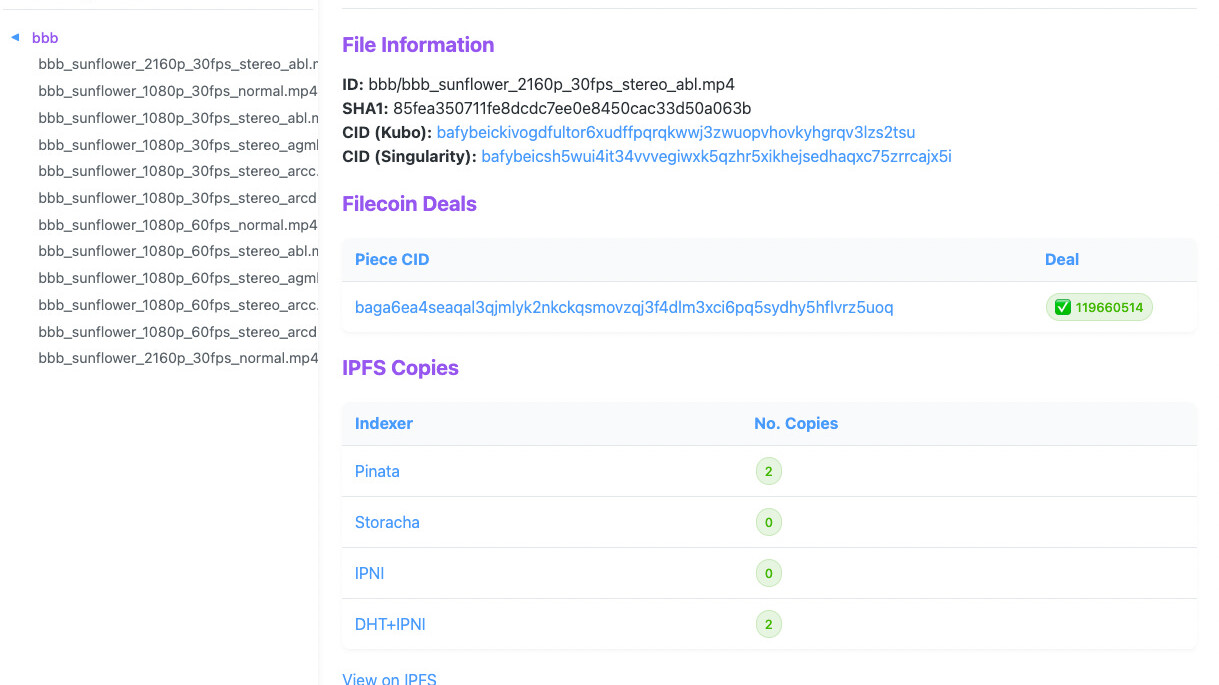

Preserving Harvard Public Health on the Distributed Web

The Distributed Press Clone API preserved a decade of Harvard Public Health journalism, archiving the entire site on IPFS and Hypercore for permanent, resilient public access.

Jan 23, 2026

Jan 20, 2026 Event: Thomas Friedman and Craig Mundie on AI

Starling Lab co-hosts 'Entering a New Era of Human Civilization' at Stanford with HAI and FSI. Featuring Craig Mundie, Thomas Friedman, and John Hennessy, the event explores the intersection of AI, journalism, and history. Learn how cryptographic data integrity and technical frameworks are essential for verifying facts and restoring trust in the digital age.

Jan 20, 2026

The Post-Photographic Age Is Here. What Does That Mean for Trust?

For over 180 years, photographs have served as evidence. A photograph was understood to be a mechanical recording of light that existed in a specific place at a specific time. In other words, proof of the existence of an object or event. This evidentiary assumption underpins journalism, law enforcement, insurance claims, scientific research, and countless other domains. With the advent of AI-generated synthetic imagery, that assumption is now obsolete.

Jan 13, 2026

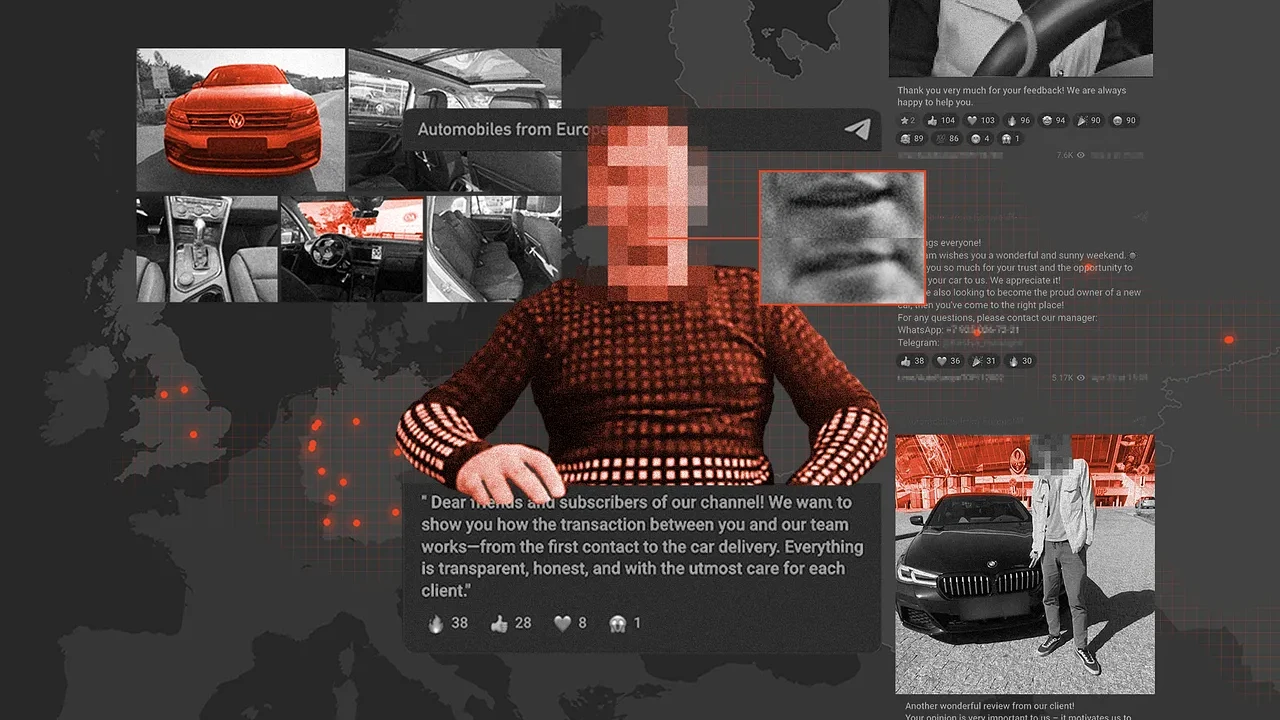

Pilot Project on Making Web Preservation Easy for Investigative Journalism

Tracking the illicit flow of cars from Europe to Russia, reporters found that the supposed sanctions-evasion scheme was a red herring: a sophisticated scam targeting Russians, complete with deepfaked videos and paid actors.

Nov 22, 2025

Safeguarding History: Preserving Armenian Cultural Heritage on the Decentralized Web

Preserving 'digital twins' of endangered Armenian cultural heritage sites in Nagorno-Karabakh for 20 years on the decentralized USC Filecoin Node.

Nov 10, 2025

Training British Barristers in Interrogating Authenticated Digital Evidence

A mock trial at Inner Temple tests new standards for authenticating open-source material – and reveals what happens when cryptographic metadata meets centuries-old legal tradition.

Sep 18, 2025

Finding Your Files in Decentralized Storage Just Got Easier

Our new CAR Content Locator solves the challenge of tracking individual files in large archives across Filecoin and IPFS networks.

Aug 10, 2025

Legal Experts Are Racing to Keep International Courts Ahead of AI

With US leadership waning and AI technology advancing rapidly, international legal scholars gather at Leiden University to draft urgent guidance for judges confronting synthetic evidence.

Jul 22, 2025

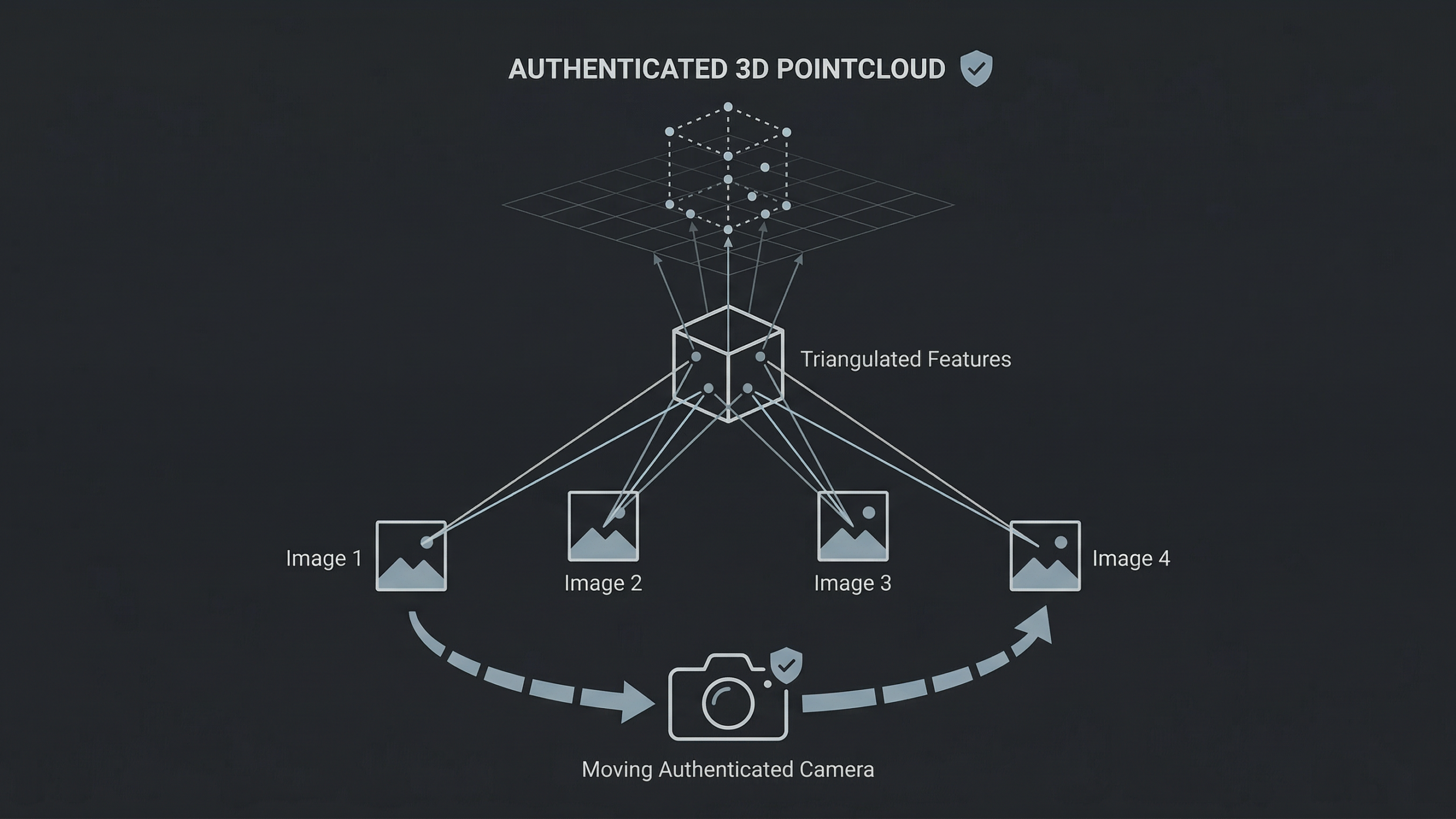

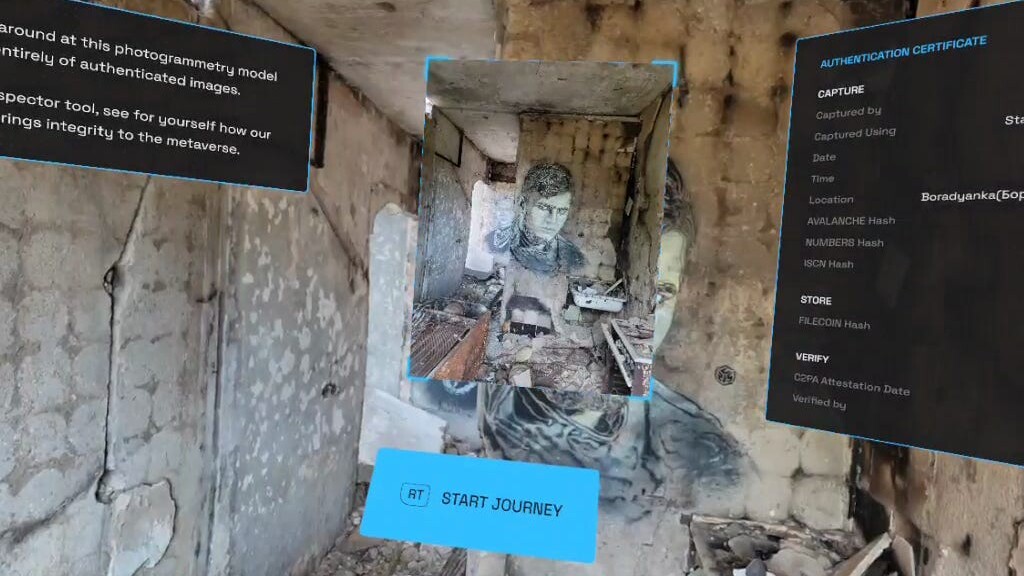

Exploring Authenticity in NeRF and Gaussian Splatting

Why researchers must explore “authenticity” in realistic VR technologies like NeRF and Gaussian Splatting

Jun 10, 2025

Submission to the UN Special Rapporteur on Human Rights Defenders from our Brazil Coverage

Our contribution to the UN Special Rapporteur's report to the Human Rights Council

Mar 19, 2025

Building Trust in the Age of AI – A Starling & HAI Conference at Stanford

From cryptographic proofs to newsroom policy—key takeaways and speaker highlights from our recent event on campus with Stanford HAI

Dec 1, 2024

Event Roundup: Evidence & International Justice In a Generative AI World

Takeaways from our international law and technology experts roundtable at Georgetown, with Hala Systems and the U.S. State Department’s Office for Global Criminal Justice.

Sep 1, 2024

Public Records and Human Rights Conference Track

Discover how the Public Records and Human Rights Conference at IPFS Camp 2024 brought together experts to discuss decentralized technologies for data integrity and human rights protection.

Aug 29, 2024

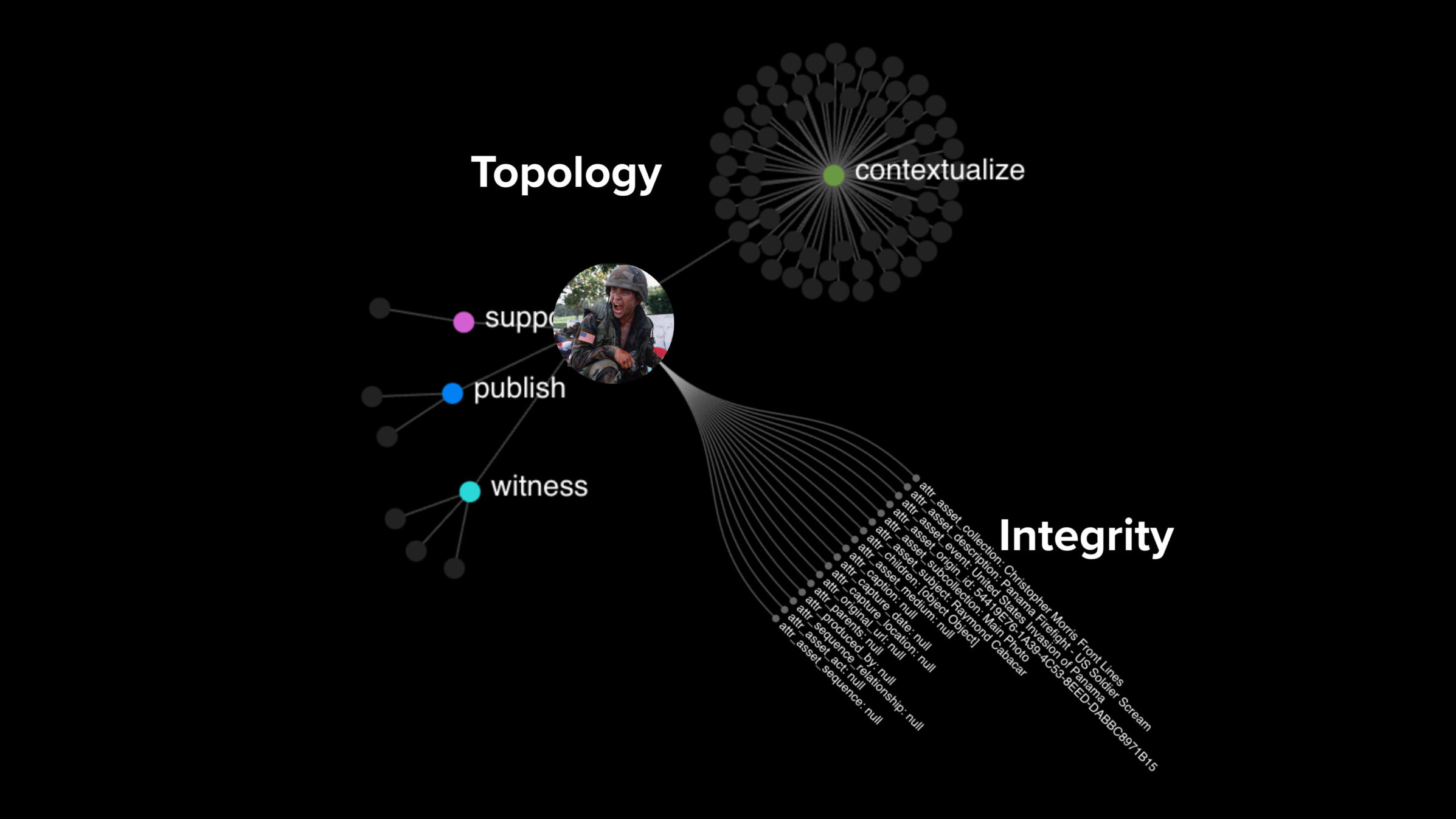

Working on Authenticated Data with Authenticated Attributes

We’re focusing on Authenticated Attributes, a new initiative to enhance the authenticity and trustworthiness of digital media using cryptographic tools. This novel backend, featuring Hyperbee, supports verification workflows and authenticated metadata storage, aiding journalists and legal investigators in working with verifiable data.

Aug 22, 2024

Event Roundup: “Shall We Press Play?” – Navigating AI and Evidence in the Digital Age

Insights and Discussions on Ensuring Authenticity in Digital Evidence

Jul 17, 2024

Navigating AI’s Challenges in Courtrooms: New Insights from Starling Lab & TRUE Project in Opinio Juris

Addressing the risks and solutions for authenticating open-source imagery in the emerging age of synthetic media.

Jul 1, 2024

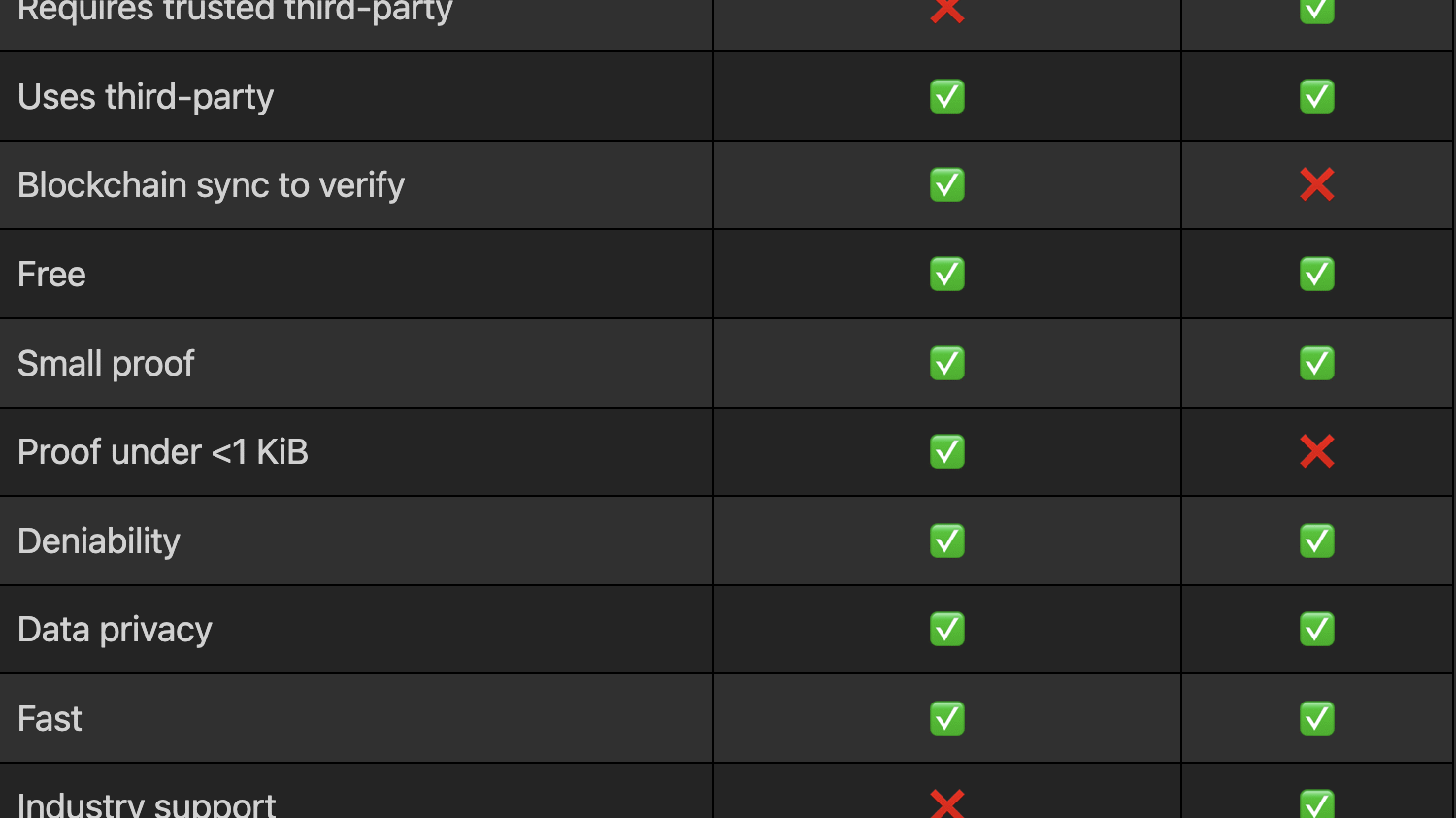

Time for Trusted Timestamping

Explore the importance of trusted timestamping in verifying data authenticity, integrity, and the timing of digital signatures.

Jun 3, 2024

Authenticated Attributes: An Alternative to Deepfake Detection

Upstream, not downstream: Our approach to establishing the source and history of digital media to build trust

Apr 9, 2024

Proposing An ‘Index For Accountability’ To UN Human Rights Chief

Starling Lab and Hala Systems offer the UN OHCHR a decentralized approach to sharing evidence, the “Index for Accountability.”

Mar 9, 2024

Starling’s Call to Action for the UN Commission of Inquiry on Ukraine

Our joint submission to the United Nations Commission of Inquiry on Ukraine includes evidence of displacement of Ukrainian children as well as an original nuclear monitoring dataset.

Jan 25, 2024

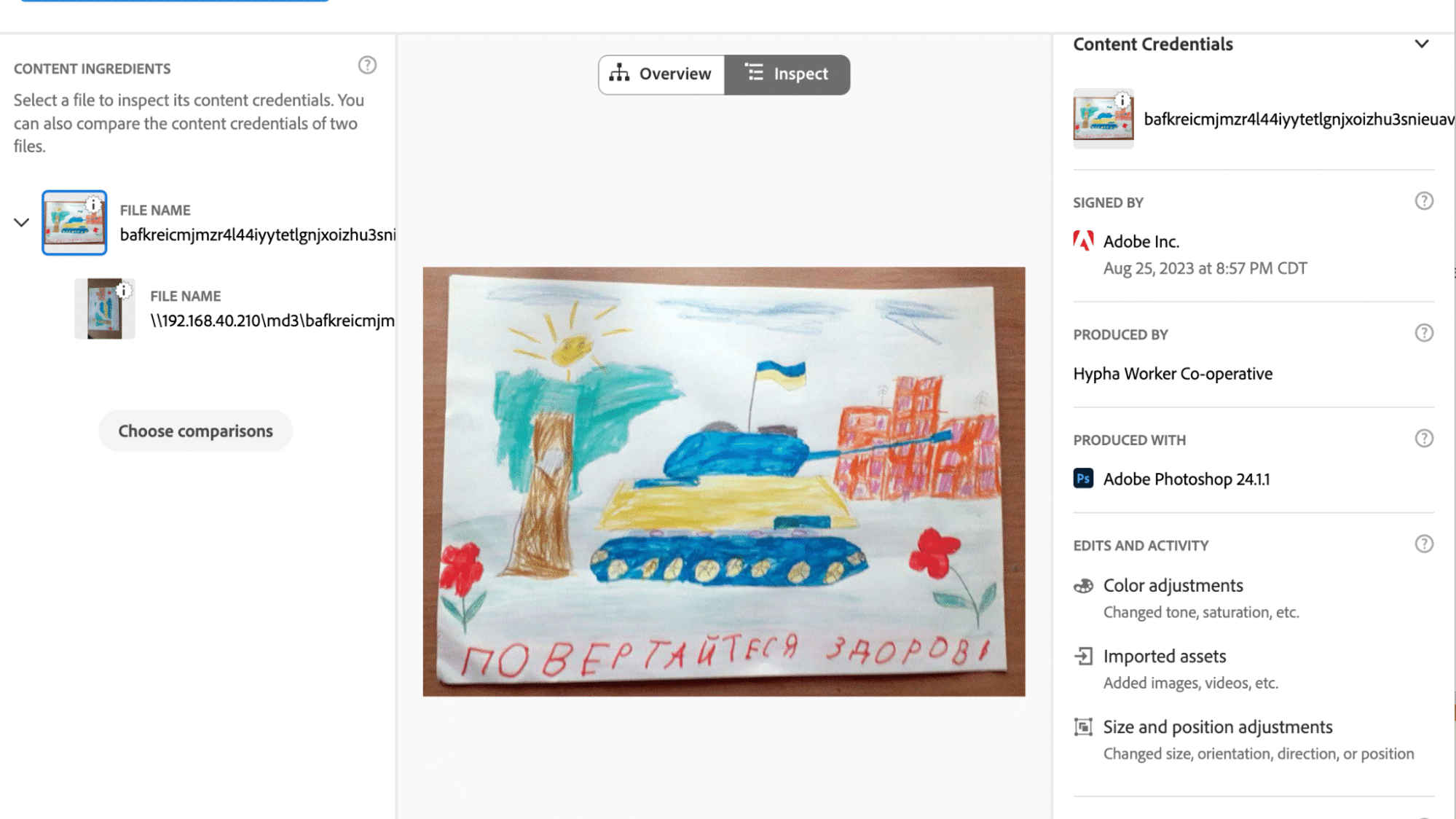

Mom, I See War: A Digital Archive by Numbers Protocol and Starling Lab

Mom, I See War (MISW) is the first known digital archive to document the way children experience war by preserving high-resolution JPEG scans of their drawings and metadata about the drawings. MISW has a collection of 10,026 single-image assets from Russia’s invasion of Ukraine, which Starling Lab has registered on the NEAR blockchain and archived on distributed web protocols.

Jan 22, 2024

Creating Human Records that Stand the Test of Time

Preserving digital evidence for the long term using ledgers

Dec 12, 2023

“New Frontiers in Evidence” OSINT event at the Inner Temple, London

The "New Frontiers in Evidence" conference at the Inner Temple brought together leading experts to discuss the challenges and advancements in the use of user-generated evidence in legal contexts. Highlights included keynote insights from Eliot Higgins of Bellingcat and sessions on digital evidence authentication, ethical considerations, and innovative investigative methodologies.

Dec 1, 2023

Starling Explores What Blockchain Can Do for Democracy

Starling Lab explores the role of blockchain and distributed ledger technology (DLT) in strengthening democracy, enhancing trust in digital media against deepfakes, and creating secure digital evidence for human rights documenters and courts.

Nov 1, 2023

Starling-Hala joint legal methodology recognised by UN Human Rights Council

A new report, presented to the United Nations Human Rights Council, cites the work of the Starling Lab for Data Integrity and Hala Systems as an emerging good practice to protect the universal right to education.

Jul 15, 2023

‘Designing for trust through authentication’ publication at MIT Knowledge Futures Group

A review of the landmark "Paths Into the Future of Trust" series, which gathers journalists, technologists, and philosophers to find a way forward through our modern information crisis.

Jun 15, 2023

Archiving 10,000 Web Pages of Weaponized Narratives in support of the DFRLab

Ahead of a DFRLab report studying pro-Kremlin news articles, we preserved this evidence base for later review and potential accountability. This archiving run tested the scale at which we could forensically preserve web pages.

Feb 1, 2023

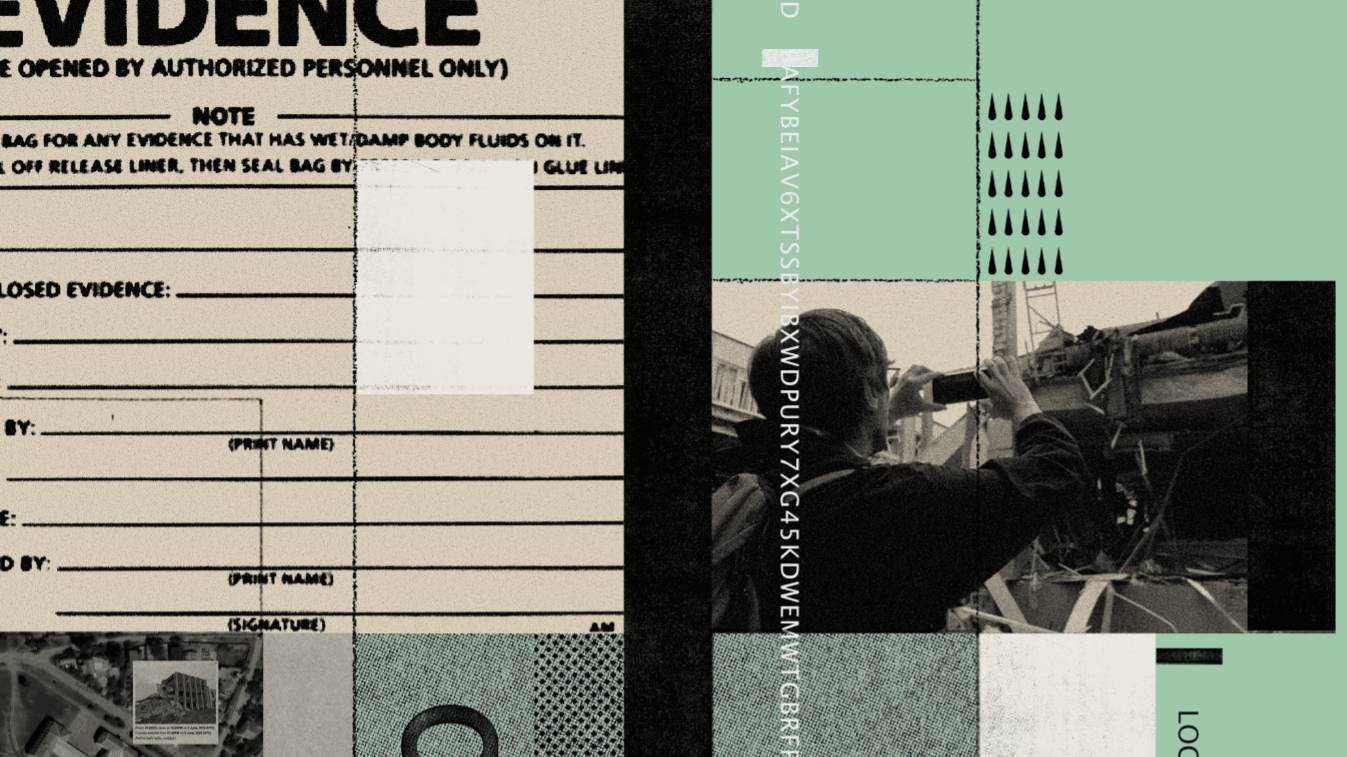

Publication of our whitepaper on Best Practices for Admissibility of Web Archives

Screenshots aren't enough. Secure court-ready web evidence with verifiable metadata, hashing, and an unbreakable chain of custody.

Nov 1, 2022

Supervisory Testimony as a Novel Tool for Accountability

The testimony of a primary observer’s supervisor could help bridge the evidentiary gap. Supervisory testimony would consolidate the institutional knowledge of the chain of custody of specific pieces of digital evidence in a single person.

Jul 1, 2021