World Model News: Walking Around Inside a Photograph with Google DeepMind Genie 3

I fed a 1930s Dust Bowl photograph of migrant workers by Dorothea Lange into the latest iteration of Google DeepMind’s Project Genie and within seconds I was walking around inside it. A shack lay ahead. I turned around and the scene behind me (a scene that doesn’t exist in the original photograph) was already there, rendered in dusty period-accurate detail, all in keeping with the source photo.

Beyond the borders and viewpoint of the original photograph, none of it was real. Everything else was generated by the Genie 3 world model, which used the photo as a jumping-off point. As I navigated, the scene looked like an extension of the photographic record of that place, but it was in fact synthetic. The distinction between the real and the synthesized is becoming a pressing issue in spatial media as generative AI models like Genie 3 become more capable and produce ever more realistic imagery.

Genie 3 is the first public release of Google DeepMind’s Project Genie world model, which launched on January 29, 2026 for AI Ultra subscribers in the United States. It followed two earlier versions, Genie 1 in February 2024 and Genie 2 in December 2024, that were demonstrated but never released to the public.

The technical approach is fundamentally different from reconstruction methods like Gaussian splatting. Where a splat scene derives its geometry and color from dozens or hundreds of real photographs or video frames of a real place, Genie 3 generates frames autoregressively: one at a time, each conditioned on what came before and on your movement inputs, building up the world around you as you explore. Feed it one image or a text prompt and it produces a navigable environment in real time. The world springs into existence as you move through it; areas you never visit or turn to look at are never generated.

This is not a pre-rendered video. It’s an AI model predicting in real time what is likely to be around the next corner based on the user’s movements and what it’s learned about how similar worlds look.
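To make the autoregressive idea concrete, here is a minimal toy sketch in Python. It is not Genie 3's architecture or API. `ToyWorldModel`, `predict_next`, `rollout`, and the `context_len` parameter are all hypothetical names, and a hash function stands in for the neural network. The only point is the shape of the loop: each new frame depends on the frames so far plus the latest user action, and nothing exists until it is asked for.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class ToyWorldModel:
    """Hypothetical stand-in for a learned world model (not Genie 3)."""

    context_len: int = 8  # assumed: how many past frames condition the next one

    def predict_next(self, history: list[str], action: str) -> str:
        # A real model would run a neural network here. This toy version
        # hashes the recent frames plus the action, so the "frame" it
        # returns depends on both, which is the autoregressive property.
        context = "|".join(history[-self.context_len:]) + "|" + action
        return hashlib.sha256(context.encode()).hexdigest()[:12]


def rollout(model: ToyWorldModel, first_frame: str, actions: list[str]) -> list[str]:
    """Generate one frame per action, each conditioned on what came before."""
    frames = [first_frame]
    for action in actions:
        frames.append(model.predict_next(frames, action))
    return frames


if __name__ == "__main__":
    model = ToyWorldModel()
    # The source photo is the only "real" frame; everything after is generated.
    for frame in rollout(model, "source_photo", ["forward", "turn_left", "forward"]):
        print(frame)
```

Two different sequences of actions from the same starting image produce two different worlds, and a view the user never requests is never computed.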

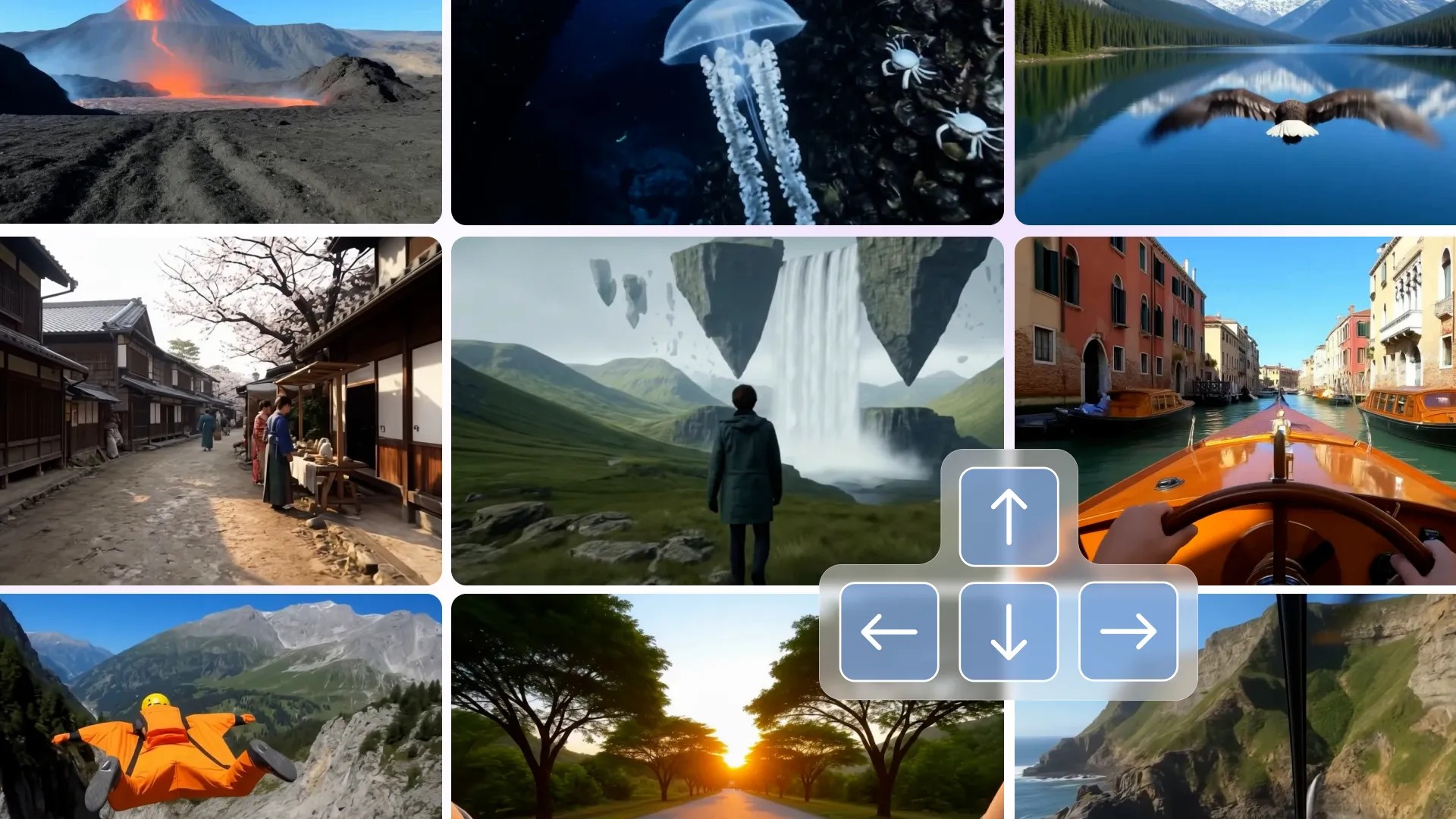

Google DeepMind Genie 3 Output

The fidelity was high enough to be uncomfortable. For the first thirty seconds or so, the Dust Bowl scene felt spatially coherent with consistent lighting, plausible architecture, and realistic period detail. It degraded as I continued navigating, with textures softening and geometry losing confidence, but the initial impression was convincing enough that a casual viewer could easily mistake it for a real place.

Genie 3’s realism and believability are the problem. Nothing in the output distinguishes what came from the source photograph from what the model fabricated. There is no seam, no visual indicator, no metadata. The landscape to my left might have been derived from pixel data in the original image, or it might have been entirely hallucinated from the model’s training set. I could tell which was which only because I knew the photograph the model had been prompted with; without that knowledge, it would have been impossible.

Apple’s SHARP also generates a 3D scene from a single image, but its output is strictly limited to what is visible in the input photo, with 3D depth added. It’s possible to move around the scene, but the illusion breaks as soon as you step significantly outside the bounds of the input image. Genie 3 is doing something more radical: it’s continuing the world beyond the frame, inventing spaces the camera never saw. The results look similar, but the implications for trust are very different. SHARP augments a photo within the bounds of the original image. Genie blasts straight through the edges of the photo.

Apple SHARP Output

Genie 3 is not a research demo anymore. It’s a product. And it’s not alone. Fei-Fei Li’s World Labs Marble shipped its commercial API and closed a $1 billion funding round. Tencent’s HunyuanWorld is an open source world model that lets anyone create their own virtual worlds from prompts, but it requires a computer with a professional-grade CPU, GPU, and memory. The capability to generate convincing, navigable 3D worlds from a flat image is now a commodity available to anyone with a credit card or, in Tencent’s case, a professional-grade GPU.

And none of these tools produce provenance metadata. There is no content credential attached to a Genie 3 world. No C2PA manifest. No indication of what was source material and what was synthesized. If someone records a walkthrough of a generated Dust Bowl scene and posts it without context, there is currently nothing in the file itself that would help a viewer, a journalist, or an archivist distinguish it from footage of a real place.
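As a rough illustration of what such metadata could record, here is a minimal Python sketch. It is not the C2PA manifest format and not something any of these tools emit today. The function `build_provenance_label`, its field names, and the per-frame `source_pixel_fraction` value are all hypothetical, and the sketch simply assumes a generator could report how much of each frame derives from the source image.

```python
import hashlib
import json


def build_provenance_label(
    source_image: bytes, generator: str, source_fractions: list[float]
) -> dict:
    """Build a hypothetical provenance label for a generated walkthrough.

    source_fractions[i] is the assumed share (0.0 to 1.0) of frame i that
    derives from the source image rather than from model synthesis.
    """
    for fraction in source_fractions:
        if not 0.0 <= fraction <= 1.0:
            raise ValueError(f"fraction out of range: {fraction}")
    return {
        # The hash lets an archivist match the label to the original photo.
        "source_sha256": hashlib.sha256(source_image).hexdigest(),
        "generator": generator,
        "frames": [
            {
                "index": i,
                "source_pixel_fraction": fraction,
                # Any frame not fully derived from the source is flagged.
                "synthetic": fraction < 1.0,
            }
            for i, fraction in enumerate(source_fractions)
        ],
    }


if __name__ == "__main__":
    label = build_provenance_label(
        b"placeholder image bytes", "hypothetical-world-model", [1.0, 0.4, 0.0]
    )
    print(json.dumps(label, indent=2))
```

Even a label this simple would let a viewer see that only the first frame matches the source photograph and that everything after it is partly or wholly invented.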

Genie 3 access currently requires a US location and a Google AI Ultra subscription ($250/month). Sessions cap at 60 seconds, though you can re-enter your world afterward. If you’d rather not pay, Odyssey has a free web demo worth exploring, and there are open-source options (HY-WorldPlay, lingbot-world) you can run locally.

The difference between 3D reconstructions of real spaces and plausible AI-generated ones is getting harder to see from the outside. That’s why Starling Lab has been working on authenticity for emerging technologies, including a “nutrition label” for synthetic media. World models like Genie 3 pose an even greater challenge because the output isn’t a single image but an entire explorable environment generated in real time, different for every user session and never the same twice.

Spatial Lab is a publication of Starling Lab, a joint initiative of Stanford University and USC focused on data integrity. We cover spatial intelligence technologies for journalism, law, and historical documentation.