How AI Is Accelerating Our Flawed Relationship With Documentary Truth

Authenticity, Semiotics, and the Collapse of the Myth of Objectivity

Fred Grinstein is a documentary producer, media executive, and educator working at the intersection of non-fiction storytelling and emerging technology. His work spans legacy television, independent documentary, and research collaborations focused on media authenticity and synthetic media.

Through the Looking Glass (Again)

We’ve been somewhere like this before.

In 1896, early audiences for the Lumière brothers’ films reportedly recoiled when a projected image of a train appeared to rush toward them. Whether or not the story is literally true, it captures something enduring about how new media can briefly destabilize perception. Early cinema collapsed the boundary between representation and reality, and over time audiences adapted. They developed a form of media literacy: an ability to read the frame, recognize artifice, and distinguish cinematic depiction from the world itself.

That arc — shock, adaptation, literacy — has become the story we tell ourselves about every new medium that emerges. It reassures us that confusion is temporary, and that our understanding will catch up.

In the summer of 2025, a short AI-generated video depicting bunnies bouncing on a trampoline circulated widely online. Many viewers initially assumed it was real. The reaction wasn’t panic or awe, but something quieter: casual plausibility. The video did not feel extraordinary. It felt believable. And unlike early cinema, there was no stable grammar for audiences to learn from — no consistent cues to decode, no shared visual language that reliably signaled illusion.


This is where the Lumière analogy begins to break down. We are no longer dealing with a medium that can simply be “read” into literacy. We are through the looking glass, in a media environment where the signals that once helped us distinguish reality from artifice are themselves synthetic, automated, and endlessly recombinable.

The discomfort many of us are experiencing now is not simply about fake videos. It reflects a growing awareness that the infrastructure we assumed was holding non-fiction media together may never have fully existed. What sustained trust instead was a fragile system of consensus built from institutions, conventions, and shared habits of interpretation. It went unexamined because it worked well enough.

That system has been under strain for some time. Artificial intelligence did not introduce the pressure, but it is accelerating it to the point where the strain can no longer be denied. Media literacy remains important. But unlike earlier media shifts, synthetic media now evolves too quickly, too adaptively, and at too great a scale for shared norms to stabilize, making the emergence of a universally reliable literacy far less certain.

Part I: A Moment of Duality

AI is producing both inspiring and troubling outcomes at the same time. It is opening up creative possibilities, lowering barriers to entry, enabling stories that were previously too expensive, dangerous, or fragmented to tell, and expanding who gets to participate in cultural production. At the same time, it is amplifying disinformation, collapsing visual trust, and normalizing distortion at unprecedented speed.

It’s overly simplistic to frame this as a debate about whether AI is “good” or “bad.” A better question is: why was our media ecosystem so unprepared to absorb its effects?

One possibility is that we overestimated the strength of the trust systems we thought were already in place.

This strain did not emerge entirely by accident. Alongside scale, habit, and technological change, shifts in incentives and distribution in television, streaming, and social media gradually loosened the connection between credibility signals and shared accountability. What AI does is make that loosening impossible to ignore.

The Semiotics Problem

Non-fiction media has always relied on semiotics — visual and narrative signals that communicate seriousness, authority, and credibility. Camera framing, lower thirds, interview posture, typography, tone. These cues did not guarantee truth, but they created a workable proxy for trust.

Reality television played a significant role in blurring these signals. Journalistic tropes were reused as entertainment grammar across the genre. Sit-down interviews with competition reality contestants gave an air of “newsworthiness” to their presentation. Documentary filmmaking techniques once reserved for meaty topics like war zone coverage were deployed to bring dramatic weight to unusual workplaces, or to the personal lives of fabulous housewives. And without a newsroom and its journalistic standards, these “vérité”-style productions openly used scripts and direction to deliver for the viewer. This was not deception by design; it was optimization for scale, legibility, and production efficiency. At the time, it felt harmless.

I remember once describing a show I worked on as “basically like wrestling,” meaning that viewers watch with indifference to the authenticity of the action in front of them. The comment stayed with me, not because it felt wrong in the moment, but because, in retrospect, it captured something quietly shifting. We were teaching audiences that the language of credibility could be separated from the obligation to truth.

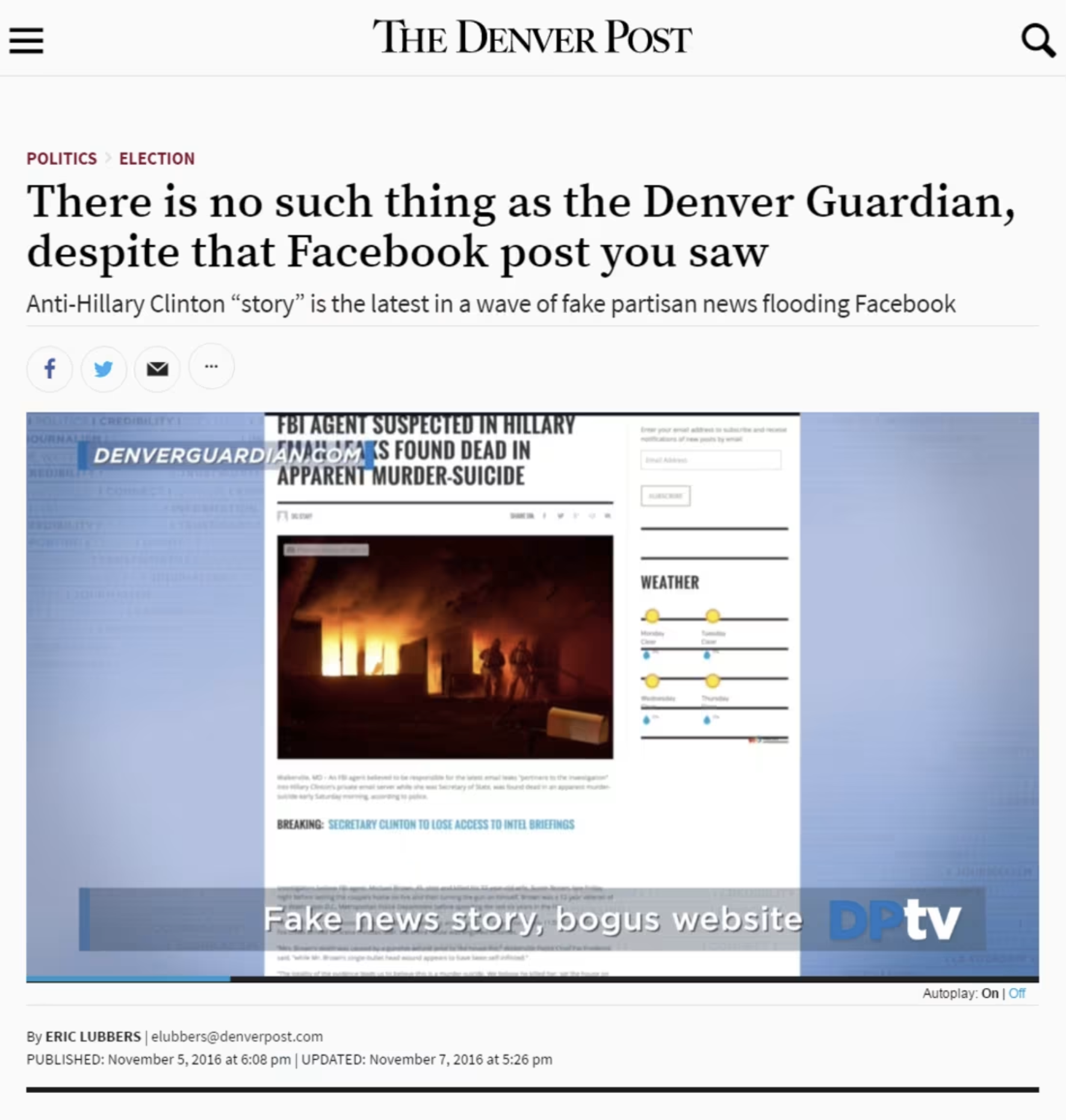

This dynamic was not confined to reality television. Social media platforms soon abstracted the same signals even further. Fake newspapers succeeded not because their claims were persuasive, but because their fonts, layouts, mastheads, and column structures looked right. Credibility work was being done before content was even processed. Semiotics detached from institutional accountability.

You might even argue that the fake documentary style of TV sitcoms like The Office or Modern Family, or the success of found-footage horror films like The Blair Witch Project and Paranormal Activity, also diluted trust signals along the way, each one innocently exploring new entertainment territory.

AI completes this trajectory. Today it automates credibility aesthetics at scale. Semiotics made credibility legible, platforms made it viral, and AI makes it effortless.

The Collapse of Top-Down Narratives

For much of the twentieth century, non-fiction media operated within top-down structures. Broadcast news, major publications, and editorial gatekeeping created shared reference points. Some reflect with nostalgia on an era when newsmen like Walter Cronkite offered a broadly accepted standard for the daily record. In retrospect, it’s also generally acknowledged that these same individuals and the newsrooms they fronted lacked the representation we’ve come to expect — whether in terms of gender, race, or lifestyle. These systems were imperfect and exclusionary, but they provided consensus.

That architecture has fractured. Information now flows through preference-driven feeds, citizen journalism, influencers, and self-published media. This decentralization has expanded representation — a real and necessary benefit — but it has also dissolved shared baselines.

The warts of this system are uncomfortable. In a fully decentralized media environment, young creators with large audiences and no editorial oversight can publicly speculate about the Holocaust, not through evidence, but through the performance of doubt. This is not an argument against free speech. It is an acknowledgment of what unfettered access to broadcast power includes.

The problem is not that more people can speak publicly; it’s that we never designed shared norms, literacy, or infrastructure for a world in which everyone can.

Avatars are the logical endpoint of this shift. As authority fragments, trust migrates to interface, to the affect of a trustworthy performance, and to always-on availability. Synthetic personalities scale certainty and intimacy far more efficiently than institutions ever could.

AI did not cause this collapse. It accelerated it.

The Myth of Objectivity

Documentary has never been objective. That idea was always more aspiration than reality.

Filmmakers from Errol Morris to Werner Herzog have long argued that documentary media itself is not a transparent window onto the world, but a construction shaped by choices, context, and interpretation.

We see this tension across the documentary canon itself. Michael Moore’s films openly foreground his perspective, collapsing the distance between journalist and participant. Films like Exit Through the Gift Shop and Author: The JT LeRoy Story openly embrace sleight of hand as a technique, one their filmmakers see as a method for unpacking deeper, more compelling truths about their dynamic subjects. Even highly polished “authorized” documentaries (celebrity-driven biographical series like Beckham, where the subject participates as an executive producer in shaping the narrative) carry the aesthetic weight of documentary while transparently advancing a point of view.

Audiences have long understood this. We don’t watch these works expecting neutral arbitration; we watch them expecting interpretation. Documentary has always contained this elasticity between reportage and argument, between record and persuasion. Truth, in this tradition, emerges through investigation and transparent process — not through the camera alone.

AI makes this impossible to ignore. When images, voices, and scenes can be generated or altered, the fantasy of mechanical objectivity collapses. What remains is accountability.

Part II: From Authenticity to Infrastructure

If debates about documentary truth are to move forward, they must move past an obsession with detecting fakery after the fact.

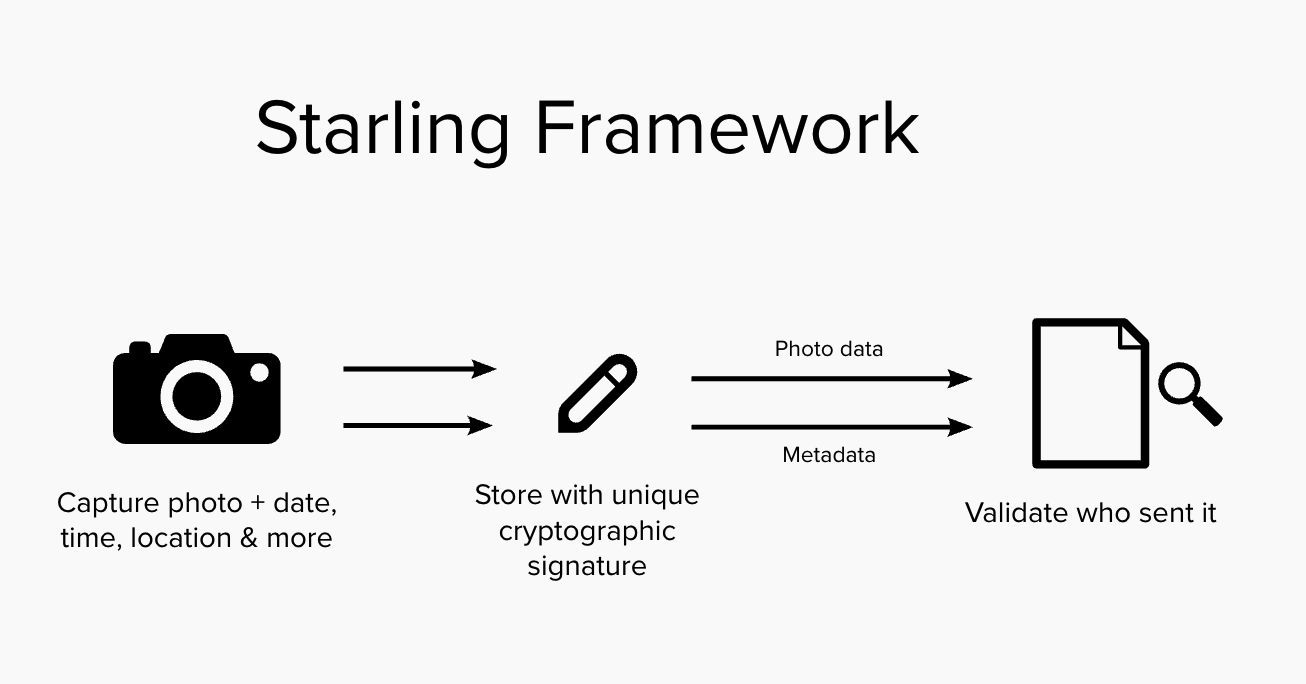

This is where the work of Starling Lab becomes instructive. Rather than attempting to identify synthetic media once it has already circulated, Starling focuses on infrastructure for authenticity: methods to capture, store, and verify media across its lifecycle.

This provenance-first approach emphasizes establishing a verifiable chain of custody from the moment media is created, anchoring metadata such as time, location, and source at capture, and preserving it through secure storage and verification. The goal is not to define truth, but to reliably distinguish documented reality from simulation.

In the Starling Framework, a Root of Trust is the foundational source within a system where cryptographic trust is first established, ensuring the authenticity and integrity of digital records. Establishing a clear “root” is essential for creating a verifiable audit trail that tracks the creation and entire lifecycle of content, regardless of whether it is AI-created synthetic media or traditional content filmed with a camera. The Root of Trust serves as the bedrock for a “trustless” system, where any third party can independently confirm a digital asset’s origin and history without relying solely on the reputation of a centralized authority.

Capture

The Starling Framework prioritizes establishing this Root of Trust at the Capture phase — or, in the case of generative AI work, the Creation phase — as close to the original event as possible. Ideally, this is anchored in the hardware or firmware of a device, such as a camera’s sensor, a smartphone’s secure enclave, or a computer’s GPU, to bind “birth certificates” of authenticity to an asset before it can be manipulated at the user level. By systematically hashing and signing digital media at this earliest stage, the framework creates an immutable provenance trail that remains with the data throughout its entire lifecycle.

In the Starling Framework, both “real” content created with an actual camera and AI-generated synthetic content are treated equally. Both get “birth certificates” of authenticity. The framework provides the transparency necessary for audiences to evaluate what they are seeing from an informed position.
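To make the idea of a “birth certificate” concrete, here is a minimal sketch of hashing and signing an asset at the moment of capture or creation. It is an illustration under stated assumptions, not Starling’s implementation: the function names, the field names, and the `DEVICE_KEY` value are all hypothetical, and HMAC from the Python standard library stands in for the asymmetric, hardware-backed signature a real device would use.

```python
import hashlib
import hmac
import json

# Hypothetical device secret. A real capture device would keep an asymmetric
# private key in secure hardware; HMAC is only a standard-library stand-in.
DEVICE_KEY = b"demo-device-key"


def make_birth_certificate(media: bytes, origin: str, captured_at: str, location: str) -> dict:
    """Hash the media, attach capture metadata, and sign the combined claims."""
    claims = {
        "sha256": hashlib.sha256(media).hexdigest(),
        "origin": origin,  # e.g. "camera" or "generative-ai"; both are treated alike
        "captured_at": captured_at,
        "location": location,
    }
    payload = json.dumps(claims, sort_keys=True).encode("utf-8")
    signature = hmac.new(DEVICE_KEY, payload, hashlib.sha256).hexdigest()
    return {"claims": claims, "signature": signature}


def certificate_matches(media: bytes, certificate: dict) -> bool:
    """Check that the signature is valid and the media still has the signed hash."""
    payload = json.dumps(certificate["claims"], sort_keys=True).encode("utf-8")
    expected = hmac.new(DEVICE_KEY, payload, hashlib.sha256).hexdigest()
    signature_ok = hmac.compare_digest(expected, certificate["signature"])
    hash_ok = hashlib.sha256(media).hexdigest() == certificate["claims"]["sha256"]
    return signature_ok and hash_ok
```

Because the hash covers every byte of the asset and the signature covers the hash together with the metadata, changing either the media or its claimed time, place, or origin causes the check to fail. With a shared-secret HMAC, only a holder of the key can run that check; a public-key signature is what would allow any third party to do so.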

Store

Digital assets naturally decay due to hardware failure, media obsolescence, or malicious attacks, making resilient preservation essential. Documentary filmmaking, journalism, and other disciplines also require edits and adjustments to content as part of standard workflow: interviews are edited for brevity and clarity, photos are cropped and toned, and historical images are restored and repaired.

Starling addresses this by leveraging tamper-evident data structures and constant audits to demonstrate integrity over time and immediately expose changes. Integrity proofs from the capture step are registered on consensus-based ledgers (blockchain), creating an immutable record of the content and its time of creation that remains accessible even if original hardware fails.
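One common tamper-evident data structure is a hash chain, in which each entry commits to the one before it. The sketch below is a simplified, single-machine illustration of that general technique, not a description of the ledgers Starling actually uses; the record fields and function names are assumptions made for the example.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, record: dict) -> str:
    """Hash a record together with the hash of the entry before it."""
    body = json.dumps({"prev": prev_hash, "record": record}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append(log: list, record: dict) -> None:
    """Add a record to the end of the log, linking it to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"prev": prev_hash, "record": record, "hash": entry_hash(prev_hash, record)})


def audit(log: list) -> int:
    """Return the index of the first broken entry, or -1 if the chain is intact."""
    prev_hash = GENESIS
    for i, entry in enumerate(log):
        if entry["prev"] != prev_hash or entry["hash"] != entry_hash(prev_hash, entry["record"]):
            return i
        prev_hash = entry["hash"]
    return -1
```

An edit such as a trim or a color correction is appended as a new entry rather than overwriting an old one, so legitimate changes stay visible in the history while a silent rewrite of any earlier entry is caught by the audit.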

Verify

Starling Lab embraces both human and technological approaches to verification, creating digital audit trails akin to a notary’s physical ledger.

Starling workflows surface cryptographic integrity evidence and immutable registrations, allowing audiences to evaluate the evidence for themselves rather than relying on blind trust. These methods automate complex parts of the verification cycle, removing opportunities for human error and providing real-time authentication.

Ultimately, this enables current and future inspectors to definitively understand the provenance and integrity of digital media throughout its entire history.
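As a rough illustration of what surfacing the evidence could look like, the sketch below compares a file against a previously registered hash and returns the comparison itself rather than a bare verdict. The `Registration` record and the `verify` function are hypothetical names for this example, and the lookup of the registration on a ledger is assumed to have happened elsewhere.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class Registration:
    """Hypothetical ledger entry: a content hash and the time it was registered."""
    sha256: str
    registered_at: str


def verify(media: bytes, registration: Registration) -> dict:
    """Recompute the media hash and report it alongside the registered value."""
    actual = hashlib.sha256(media).hexdigest()
    return {
        "expected_sha256": registration.sha256,
        "actual_sha256": actual,
        "registered_at": registration.registered_at,
        "intact": actual == registration.sha256,
    }
```

Returning both hashes and the registration time lets an inspector see what was checked and repeat the check independently.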

The Starling Framework’s provenance and verification methods do not create trust, nor do they resolve meaning or ethics. But they help stabilize the preconditions for trust by establishing a shared and verifiable record of what happened, when, and where.

Without baseline agreement on those fundamentals, discourse collapses into endless skepticism. With them, we can argue productively about interpretation, responsibility, and impact.

In other words, authenticity infrastructure allows us to move the conversation up the stack.

The Opportunity

AI did not break the documentary. It revealed the fragility of relying on habit, authority, and convention to convey authenticity rather than on designed systems.

That realization is unsettling, and understandably produces fear and pessimism. But it is also clarifying.

The opportunity now is not to restore a mythic past of objectivity, and not to surrender to relativism, but to build infrastructure that acknowledges how non-fiction actually works: as a negotiated, interpretive, consensus-driven practice.

Today’s audiences expect credits at the end of a documentary that list the director, producers, music credits, and all the people who participated in creating the work. What we are suggesting would be an extension of those credits, functioning similarly to an ingredients list on food packaging. But instead of words printed on a cardboard box, it would be addresses on a blockchain ledger pointing to the primary sources used to create the documentary. Further entries could list the modifications made to those sources in the making of the film. In this way, audiences would know not only who made the documentary, but what raw ingredients went into it, and how they were processed to arrive at the final result.
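The “ingredients list” described above could be represented as a small structured manifest. The following is one possible shape, offered as a sketch: the class names, the fields, and the `ledger:0xabc` style of address are all invented for illustration and do not correspond to any existing standard or ledger.

```python
import hashlib
import json
from dataclasses import dataclass, field, asdict


@dataclass
class Source:
    title: str
    ledger_address: str  # hypothetical pointer to the registered primary source
    modifications: list = field(default_factory=list)  # e.g. "trimmed for length"


@dataclass
class Manifest:
    film: str
    sources: list = field(default_factory=list)

    def to_json(self) -> str:
        """Serialize the manifest in a stable key order so it can be hashed."""
        return json.dumps(asdict(self), sort_keys=True, indent=2)

    def digest(self) -> str:
        """Fingerprint of the whole manifest; any change to it changes this value."""
        return hashlib.sha256(self.to_json().encode("utf-8")).hexdigest()


# Example usage with made-up values.
manifest = Manifest("Example Film")
manifest.sources.append(Source("Interview A", "ledger:0xabc", ["trimmed for length"]))
```

Publishing the manifest’s digest alongside the film would let a viewer confirm that the list of sources and modifications they are reading is the one the filmmakers registered.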

Documentary sits at the center of this moment not because it is uniquely threatened, but because it has always operated at the boundary between fact and meaning. AI simply makes that boundary visible.

What we choose to build there — soberly, optimistically, and intentionally — will determine whether non-fiction remains a space for shared understanding, or dissolves into noise.

Limits of the Argument

This essay does not argue that artificial intelligence is the sole or primary cause of declining trust in non-fiction media, nor does it attempt to resolve the political, economic, or psychological forces shaping this moment. It also does not claim that new forms of media literacy will not emerge, or that decentralization is inherently harmful. Rather, it focuses on a narrower observation: long-standing weaknesses in how non-fiction media relied on consensus, semiotics, and institutional habit are now being accelerated by synthetic media at a scale that challenges individual cognition. The infrastructure questions raised here are not presented as sufficient solutions, but as necessary conditions for meaningful discourse to continue.