Combating Racism as a Public Health Crisis

Black Voice News & Starling Lab analyze 35+ California RPHC declarations and use verifiable data to track government action and long-term accountability.

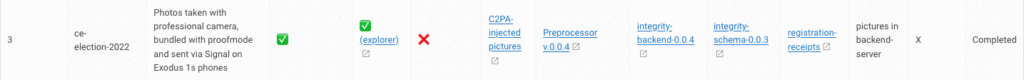

Background

In the modern era, data is a vital resource that all organizations and communities need to measure and effect change. Marginalized communities can face unique challenges in accessing data, compounding long-standing inequities.

In 2015, Black Voice News launched Mapping Black California (MBC) as a project to better understand, report on, and visualize data important to Black Californians. The initiative uses geospatial technology to enhance community news and storytelling, and it equips the Black community with data-driven tools to address regional and local systemic inequities.

The MBC team began looking for data to support visualizations in its public health reporting. Its coverage sought to explore the profound impacts of racism on social determinants of health, including housing, education, and employment. All too often, however, the team found that the available data pertaining to Black communities was too broad or omitted key information.

In the wake of George Floyd’s murder in 2020, a nationwide movement pushed elected officials and other government leaders to reckon with racism in their communities. Public outcry specifically called for the acknowledgment of structural racism in law enforcement, healthcare, and community conditions. Official responses varied, ranging from simple acknowledgment of the problem to more extensive commitments to provide funding and take measurable action. Many took the form of declarations that racism is a “public health crisis.” This presented an opportunity for MBC to collect its own data about these structural commitments, mindful of a long history of failed reforms.

BVN Executive Editor Stephanie Williams explained:

“The uprising of 2020 is reflective of the historical uprisings across decades and centuries, just as the disproportionate impacts of COVID-19 on Blacks was reflective of the same experiences the community suffered during the great flu epidemic of 1918. Though separated by 100 years, racism drove the response in both cases.

This project is meaningful and important because the recorded commitments for structural and institutional change made in the declarations of racism as a public health crisis and captured in the dashboard and Web3 authentication technology and decentralized protocols, will provide leverage for activists and others to hold officials accountable in the years to come even as the events of 2020 fade from memory. If not, 100 years from now we may find ourselves experiencing the same traumas, telling the same stories and experiencing the same results.”

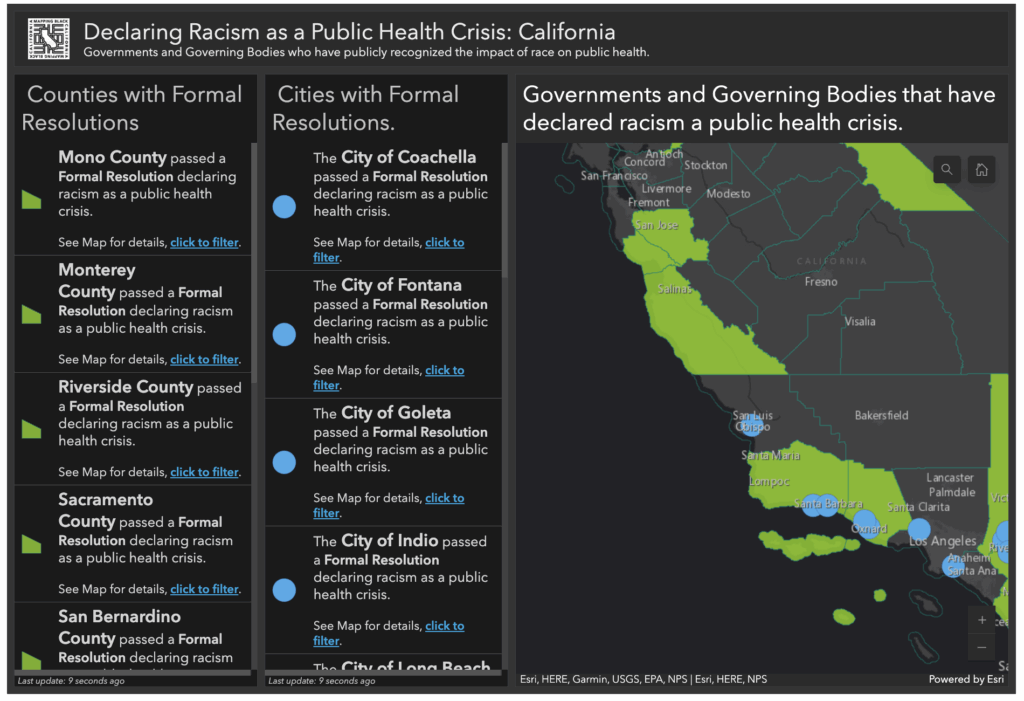

Public entities across the state of California – county supervisors and commissions, city councils, and various other government associations – soon issued nearly 35 separate declarations related to racism as a public health crisis, sometimes abbreviated RPHC.

This fellowship aimed to quantify and qualify what political promises were made, to measure what action was in fact taken, and to document both in ways that could support long-term accountability.

Scope

For this project, Starling Lab, Black Voice News, and Esri Mapping teamed up to develop a new and improved data dashboard and a series of articles. Each would incorporate web archives (i.e., preserved web pages), verified as authentic through cryptographic methods, to create an evidence-based resource that could be used as a tool to measure and effect social change. The archives included data available on government and other public websites, which together help assess and quantify the efforts of different jurisdictions.

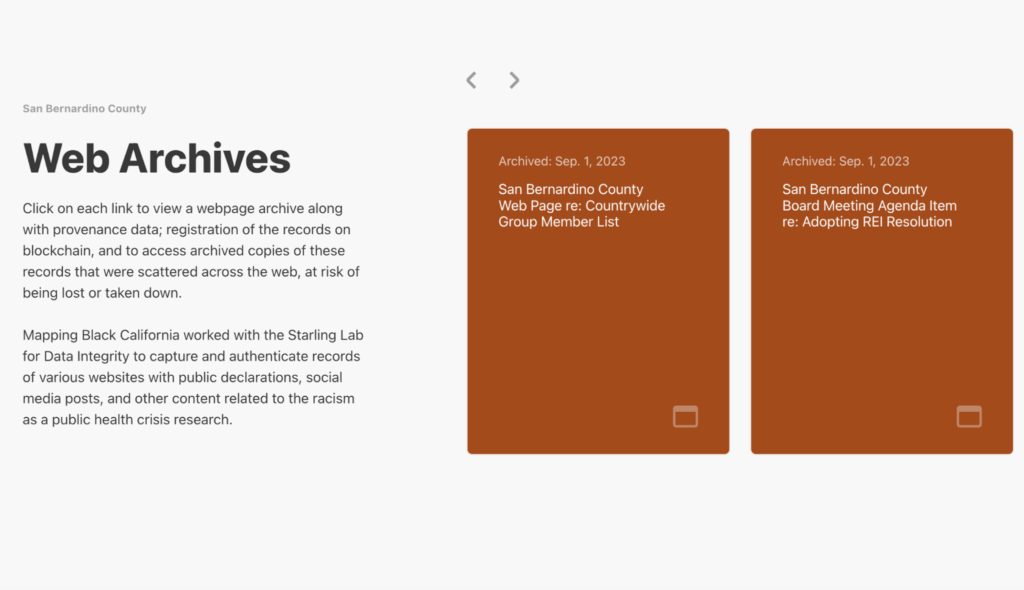

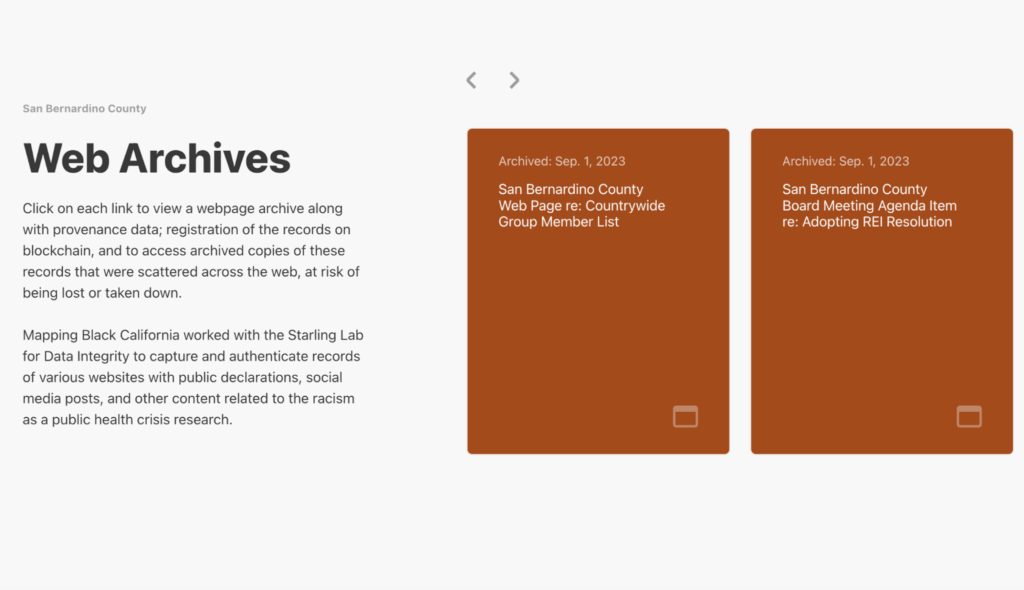

The outcome of this project includes decentralized preservation of authenticated web archives, encompassing roughly 350 different web pages (roughly 10 per formal government declaration). This body of evidence for the reporting was published using a specially developed WordPress plugin that invites readers to verify pages as they existed on official channels.

Dr. Paulette Brown-Hinds, publisher of Black Voice News and founder of Mapping Black California, explained the focus:

“From my perspective, the project with Starling Lab allowed us to experiment with a new technology to address an old challenge: how to keep important records accessible to the public, transparent, and available indefinitely.”

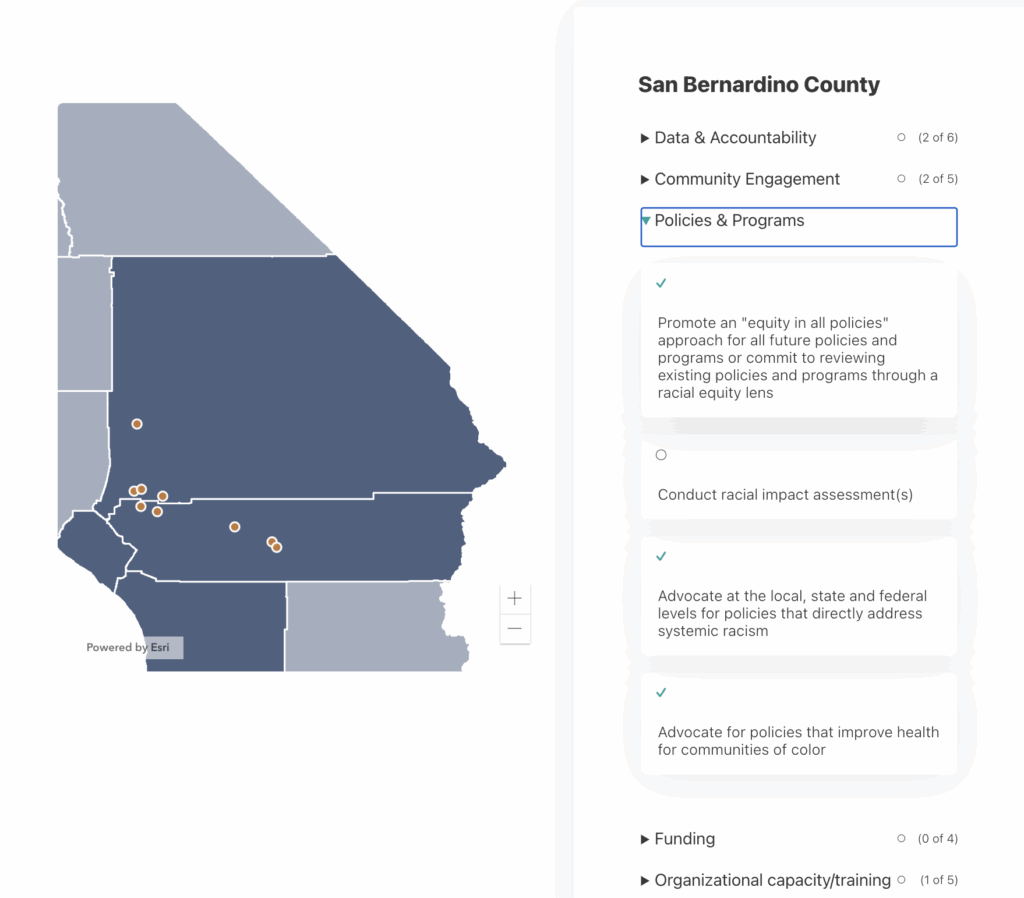

Toward these goals, Black Voice News created the Combating Racism as a Public Health Crisis data dashboard. This accountability tool and content aggregator gives users a more detailed view of nearly three dozen official resolutions, along with demographic data, progress reports on promises, and a comprehensive collection of other data sources about these resolutions.

Researchers analyzed each jurisdiction’s resolution and mapped its commitments to a comprehensive rubric. The rubric was initially based on a template from the American Public Health Association's analysis of RPHC resolutions across the nation. It was then augmented to reflect high-need areas specific to California, namely COVID-19 tracking metrics and the inclusion of race-specific language (Black, African American, Hispanic, Latino, etc.).

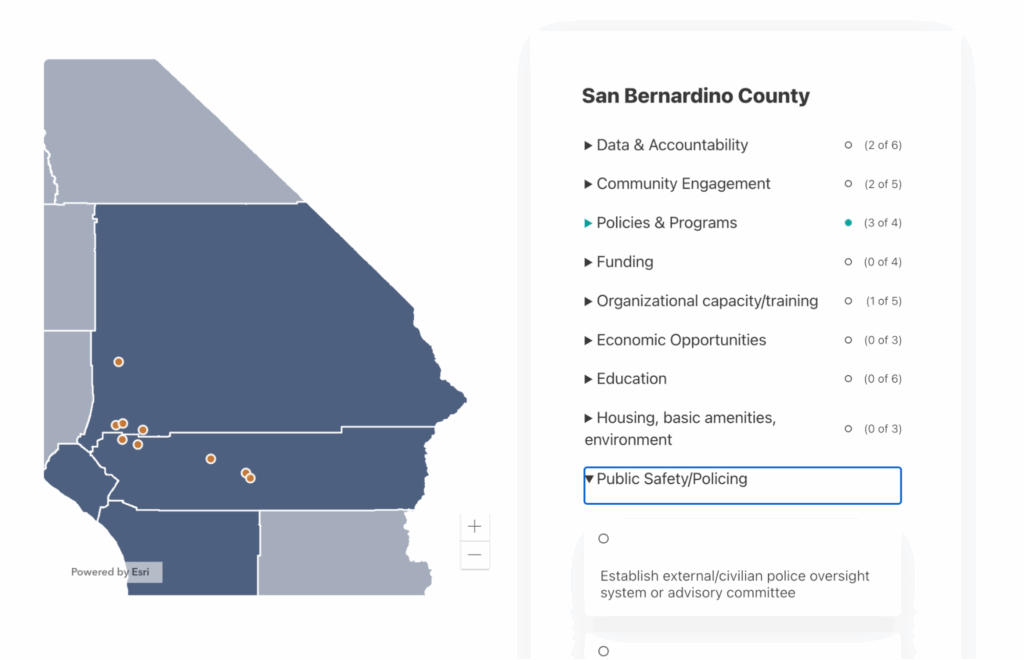

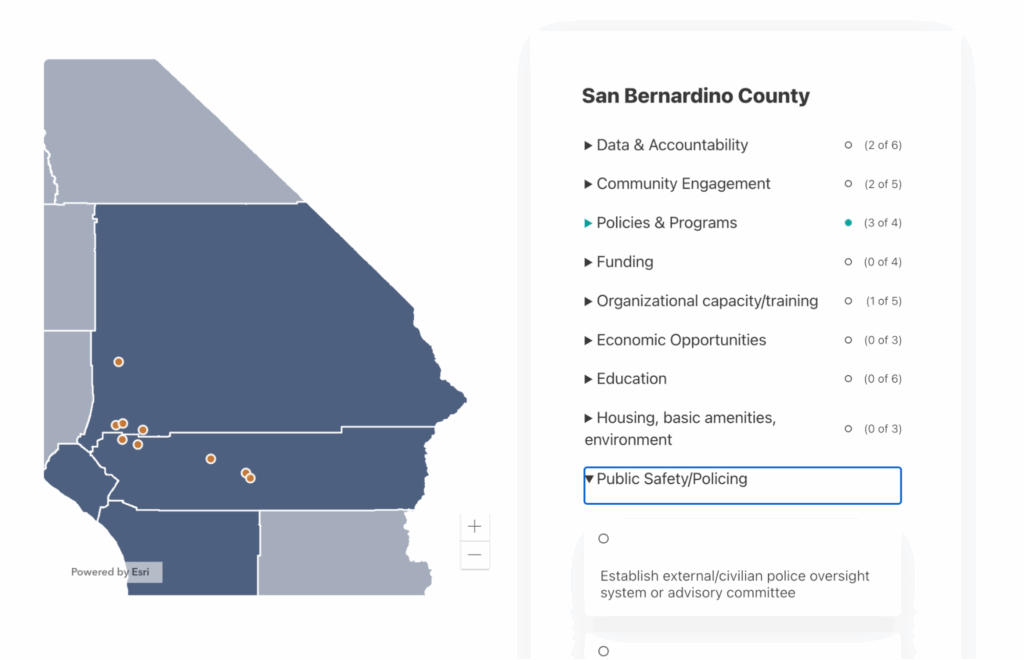

This data dashboard establishes categories, and sets of commitments within each category, allowing tracking and comparison between jurisdictions. The categories are Data & Accountability; Community Engagement; Policies & Programs; Funding; Organizational Capacity/Training; Economic Opportunities; Education; Housing, Basic Amenities & Environment; and Public Safety/Policing.

These nine general categories are further divided into 39 strategic actions, making it possible to quantify how many of these actions each jurisdiction’s public pledges promised to address.

In addition to categorizing and adding quantitative measurements, this data dashboard aimed to:

- Establish a baseline of commitments for each jurisdiction.

- Set a quantitative standard for markers of progress toward the goals.

- Utilize web tools to track, measure, clarify and report on the progress of commitments made by declarative entities.

- Use this data to publish data-informed stories to Black Voice News and external newsrooms.

- Inform local advocates, leaders, community-based organizations (CBOs), and the general community about progress on commitments to address the real-life, day-to-day impacts of structural racism in their localities.

In each category, the government organizations were rated on a scale of 0-9. Zero means none of the strategic actions in that category were addressed, and nine means all strategic actions in that category were covered. Most of the organizations’ pledges cover between three and six strategic actions.
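The per-category counting described above can be sketched as a simple tally. This is a hypothetical illustration, not Mapping Black California's scoring code: the category names come from the dashboard's list, but the strategic-action IDs, the small subset of the rubric shown, and the data layout are invented for the example.

```python
from collections import Counter

# Hypothetical rubric excerpt: strategic-action ID -> dashboard category.
# The IDs are invented for illustration; the real rubric defines 39 actions.
RUBRIC = {
    "publish-disaggregated-data": "Data & Accountability",
    "annual-progress-report": "Data & Accountability",
    "community-listening-sessions": "Community Engagement",
    "dedicated-equity-budget": "Funding",
    "staff-equity-training": "Organizational Capacity/Training",
}


def score_pledge(addressed_actions: set[str]) -> dict[str, int]:
    """Count how many rubric actions a declaration addresses in each category."""
    unknown = addressed_actions - set(RUBRIC)
    if unknown:
        raise ValueError(f"actions not in rubric: {sorted(unknown)}")
    counts = Counter(RUBRIC[action] for action in addressed_actions)
    # Report every category, including those the pledge does not touch at all.
    return {category: counts[category] for category in sorted(set(RUBRIC.values()))}


# Example: a declaration that commits to three of the listed actions.
example = {"publish-disaggregated-data", "annual-progress-report", "community-listening-sessions"}
print(score_pledge(example))
# {'Community Engagement': 1, 'Data & Accountability': 2, 'Funding': 0, 'Organizational Capacity/Training': 0}
```

Reporting zero-count categories explicitly keeps every jurisdiction's row the same shape, which is what makes side-by-side comparison on a dashboard straightforward.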

This project required extensive research to identify pertinent government and social media web pages, both of which are particularly susceptible to link rot. The original pages live on a variety of platforms, many maintained by counties or cities that do not have comprehensive archiving or data preservation policies. Yet this vulnerable set of information is likely to be exactly what underrepresented groups need as evidence to point to for accountability purposes. That made it an ideal case for prototyping authenticated archives, as Alex Reed, project manager of Mapping Black California, explains:

“The incorporation of encrypted and distributed archiving into our content aggregation platform is what will ensure its longevity decades to come. The documents, images, and web pages that we were able to Archive will never be destroyed and will serve as a reference point for researchers, analysts, journalists, and the public at Large for as long as the internet exists.”

Using Mapping Black California’s dashboard as the basis for her investigative series, reporter Breanna Reeves examined four of the many local governments that passed resolutions declaring racism a public health crisis.

In the first article of the Combating Racism as a Public Health Crisis series, Holding Leaders Accountable, Reeves provides an overview of the events that led many jurisdictions across California to sign resolutions and make declarations. Her reporting focused on local governments with some of the most comprehensive declarations, meaning they addressed nearly all of the strategic actions. It also examined local governments that made declarations but have been slow to turn them into action.

The second article of the series, Santa Cruz County’s Inclusive Resolution, highlights Santa Cruz County as having a very inclusive resolution that utilized community engagement to bring many of their pledges to life.

The third, Oakland Addresses Systemic Racism with Data-driven Approach, focuses on the City’s 2022 resolution. Oakland also has a comprehensive resolution that acknowledged white supremacy as the root cause of racism. While their resolution may not meet all criteria outlined in Mapping Black California’s dashboard, the reporting suggests they were ahead of the curve in addressing racism as a public health crisis with their Department of Race & Equity, which was created in 2016.

The fourth and final article of the initial series, Riverside and San Bernardino Counties Take Action Against Racism as a Public Health Crisis, analyzes jurisdictions with resolutions that are less comprehensive – or even make no mention of taking action. Specifically, the coverage explores how San Bernardino County was the first jurisdiction in the state to pass a resolution in 2020, but in the last three years has been slow to act.

This series showcases the power of data and verifiable evidence, and it demonstrates a new methodology, and a new standard, for evidence-based data reporting. As Reeves said of the experience:

“Collaborating with Starling Lab and Mapping Black California to publish the Combating Racism as a Public Health Crisis is perhaps one of the most important projects I've done thus far. As a reporter, my job is to hold those in power accountable. I believe this project did exactly that by analyzing promises made by dozens of local governments and inquiring on behalf of the communities these promises were made to.”

Framework

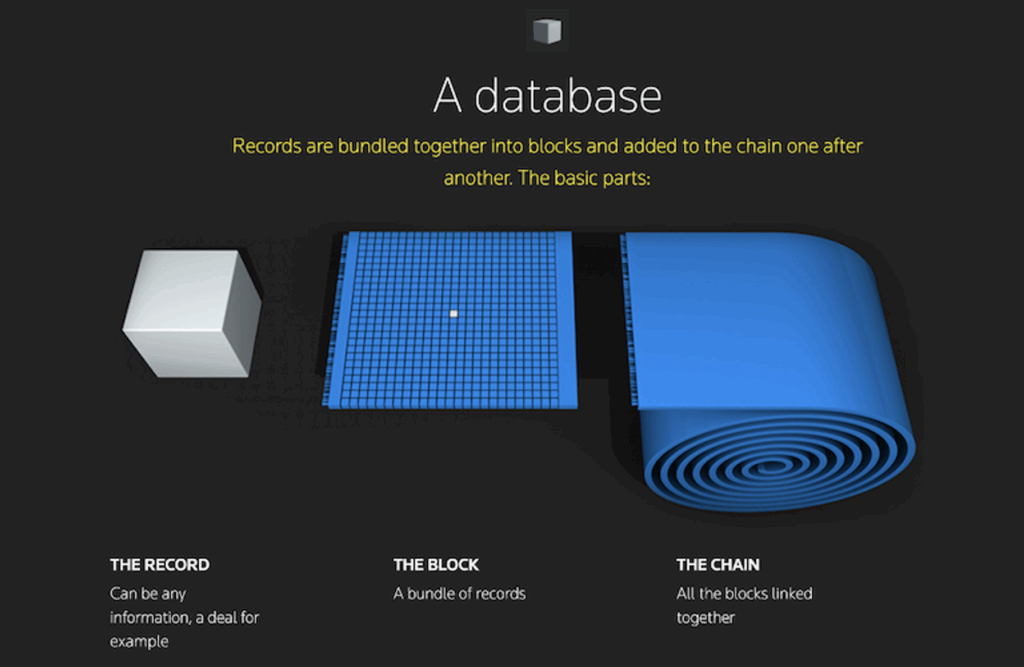

Mapping Black California had previously published an interactive map of official RPHC declarations by local and regional governments. This map, however, was out of date, lacked ways to present verification information about the data, and needed quantifiable measurements and comparisons. We saw this as an opportunity to implement the Starling Framework: Capture, Store, Verify.

The Challenge

Working with Starling Lab, the Black Voice News (BVN) investigative team set out to update the site and to archive and analyze data available on government websites, social media, public databases, and recordings of meetings and events.

The original version of the map linked to public records scattered across the web, each at risk of disappearing whenever a site owner changes what is hosted at a given URL. When Pew Research looked at web pages from 2013 to see whether they were still available a decade later, it found that 38% had disappeared by 2023. The same study found that a quarter of all pages that existed online at some point between 2013 and 2023 were no longer accessible. Beyond pages going offline, redirect links can also lead users to dead ends: Google announced that it will soon shut down its URL shortening service, breaking all of its historical links.

Throughout the reporting done for the original interactive map, an important theme emerged: Members of the public wanted to hold their leaders accountable for pledges and promises made after these resolutions were passed. Oftentimes, records which are supposed to be publicly available are in reality difficult and time-intensive to access.

It’s easy to assume that information about these official commitments would be kept available by the government itself. On paper, California has several good public records laws. In practice, entities all over the state have faced criticism for their inability, or even refusal, to provide a variety of public records.

Even outside of government, all too often digital records are kept by singular, powerful organizations or services that can remove or modify the information at will – or charge users exorbitant fees. Vows by officials made on social media posts can be deleted or entire accounts made private.

Since this map and data were meant to be a public-facing reference, an interface accessible to a general audience was needed. The tool should present an understandable, quantifiable summary of efforts to combat racism as a public health crisis. The original map didn’t show quantifiable progress or data comparing one region to another, so it was difficult for the public to gain meaningful insight through cross-comparison. A view that enables comparison of different jurisdictions’ efforts, with evidence to support the quantified data, is an important step toward understanding progress and holding governing bodies accountable.

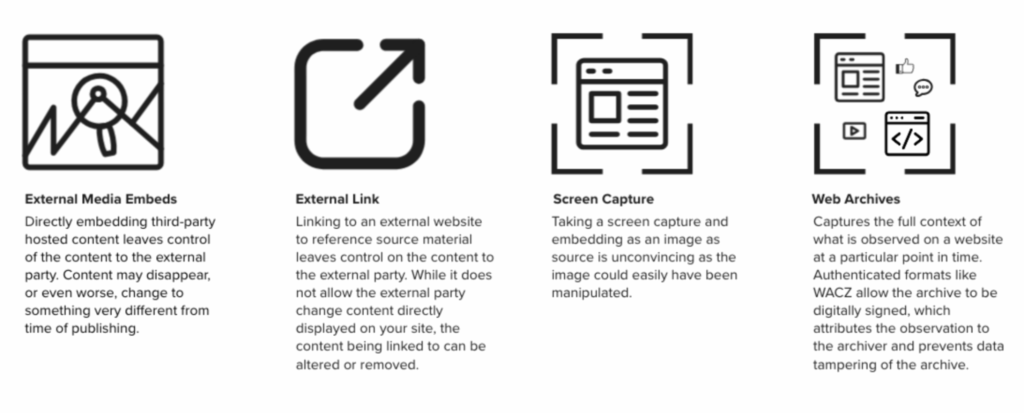

Finally, in addition to official documents, the team wanted to capture content from YouTube, social media, and other places where evidence might be published online. This supporting material is essential for journalists and investigators looking for corroborating information and context around a story or investigation. It must also be preserved and displayed in a way that holds up against the standards of evidence it may be subject to in the future, a necessary step when public records are maintained by fragile, poorly resourced public jurisdictions.

The Prototype

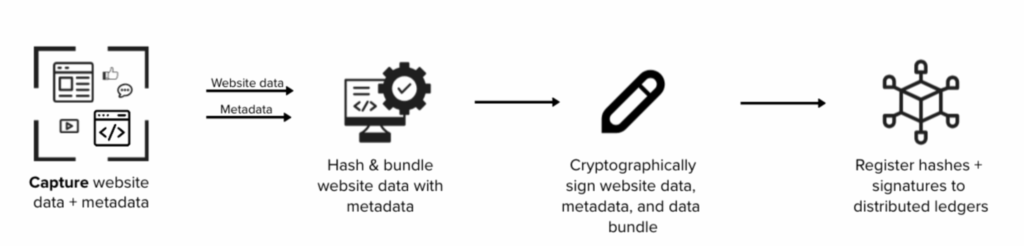

Using the Starling Framework, we can preserve cryptographically secured versions of these documents and records to build a better data resource. By capturing, preserving, and displaying complete versions of these webpages we are able to demonstrate rich context as part of a new integrity workflow, and to showcase the value of using cryptographic signatures and registered hashes to secure provenance of captured content. This project resulted in a verifiable body of evidence, with integrity, for holding leaders accountable through reporting on facts.

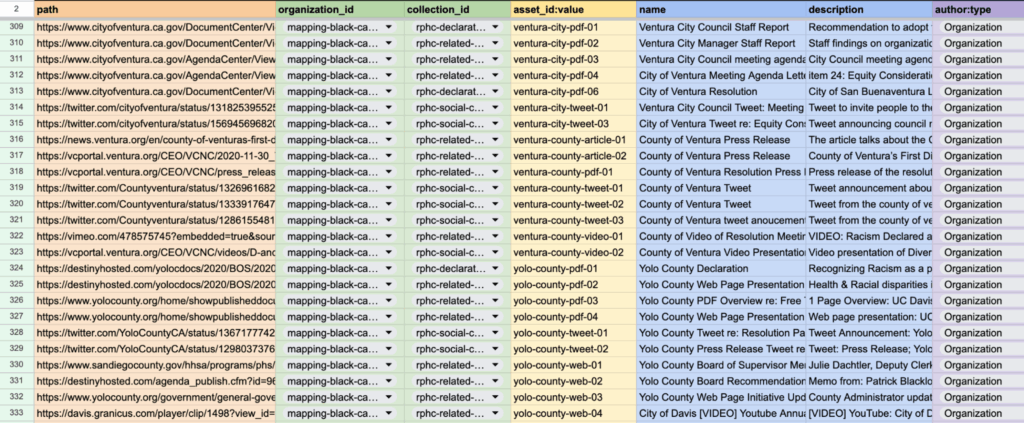

For this project, Starling Lab teamed up with Black Voice News to collect about 350 web pages, including social media posts, along with metadata files for each archive (a total of over 1,100 records), to support the data shown in the interactive dashboard. Because this was such a large body of evidence, we needed a streamlined way to capture and preserve the pages containing evidence of pledges, promises, and statements. Using Browsertrix, a web archiving tool developed by the Webrecorder team, in combination with Starling Integrity tools, we prototyped a new workflow that journalists and investigators can use to capture and preserve public data from the web.

To present the captured information, BVN and Starling Lab worked with the Esri special projects team to build an interactive data dashboard that brings a large body of data together in one place. The dashboard aggregates and analyzes information about progress in different categories of combating racism as a public health crisis, backed by records whose authenticity Starling Lab preserved. Having this information in one comprehensive tool enables others to understand, compare, and hold jurisdictions accountable. To ensure data redundancy and availability, the evidence files are distributed on IPFS and archived on Filecoin, which allows them to be retrieved, and verified, from multiple sources in the future.

In addition to preserving and distributing replayable web archives as evidence and sources, a complete package of the data was hashed and signed at time of capture. These cryptographic hashes serve as fingerprints registered on several distributed ledgers, creating an immutable index of what exactly existed, who witnessed it, and when the data archiving occurred.

This prototype is an experiment in turning web content from hundreds of sources into an authentic public record, with special attention to preserving original source information from capture to presentation. Through this project, the team hopes to inspire new standards in journalism and other investigative research.

Technology

Capture

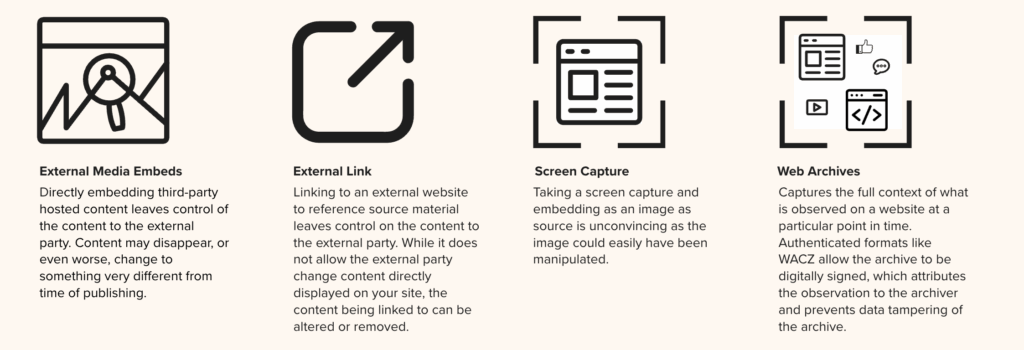

Archiving Content on the Web

Black Voice News and Starling Lab took an unusual approach to web capture. Much of the data was collected from local government websites that may self-host video and databases, which are hard to search and access without specialized investigative techniques. This content is also subject to takedown and link rot as budgets change, staff turn over, and systems go unmaintained. To address the lack of access and the likely disappearance of this content, we created authenticated web archives.

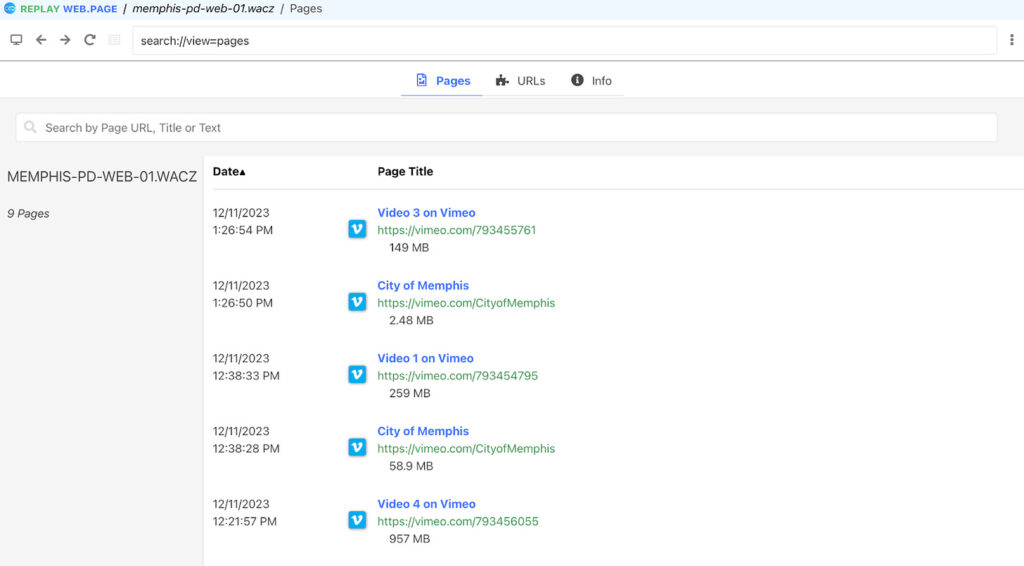

Web archives created with the Webrecorder suite of tools enabled the team to capture the full context of everything that existed on the web page in a zip archive called a WACZ file. The information collected includes all content on a webpage, such as articles, comments, likes, and multimedia files such as audio and video. A WACZ file is a copy of the code and media that makes up that webpage as it appeared, including an index of what content was captured. When users later display (or “replay”) the page using certain tools, it remains fully interactive like it was at the time of capture.
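Because a WACZ file is an ordinary ZIP archive, its index can be inspected with standard tools. The sketch below, assuming the layout described in the WACZ specification (a `datapackage.json` at the root whose `resources` entries each carry a `path` and a `sha256:`-prefixed `hash`), re-checks every recorded file hash. The function name is ours and is not part of any Webrecorder tool.

```python
import hashlib
import json
import zipfile


def check_wacz_resources(wacz_file) -> dict[str, bool]:
    """Recompute the SHA-256 of every resource listed in a WACZ's datapackage.json.

    `wacz_file` may be a path or a binary file object. Returns a mapping of
    resource path -> True if the recomputed hash matches the recorded one.
    """
    results = {}
    with zipfile.ZipFile(wacz_file) as zf:
        package = json.loads(zf.read("datapackage.json"))
        for resource in package.get("resources", []):
            path = resource["path"]
            algorithm, _, expected = resource["hash"].partition(":")
            if algorithm != "sha256":
                raise ValueError(f"unsupported hash algorithm {algorithm!r} for {path}")
            actual = hashlib.sha256(zf.read(path)).hexdigest()
            results[path] = actual == expected
    return results
```

For example, `check_wacz_resources("archive.wacz")` returns one entry per archived file; any `False` value means that file no longer matches the manifest packaged with it.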

Most of the web archives were created with an automated tool called Browsertrix (on a custom instance operated by Starling Lab). Some were created manually with a Chrome extension called ArchiveWeb.page when websites were too complex to crawl with a bot. Both tools load a site in a browser and record all the content loaded during the browsing session, including site code and assets.

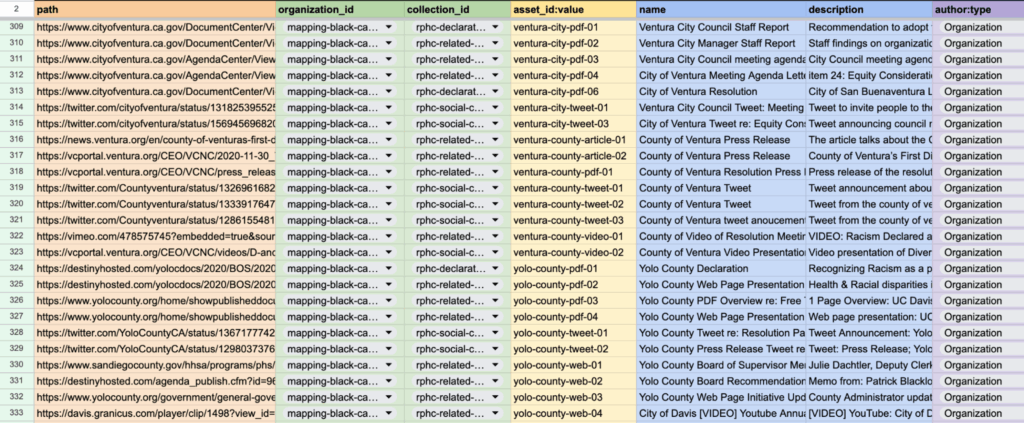

The Black Voice News investigative team researched and compiled the list of URLs to crawl, along with the other metadata fields. In addition to a name for each web archive, we included several other metadata fields. Each URL was assigned a jurisdiction and a geographic identifier so it could later be matched to the correct region in the interactive map created by Esri.

A custom Python script took the spreadsheet listing all the URLs and metadata fields and used it to automate the Browsertrix web crawls via its API. The resulting archives preserve the data so that, when replayed, each part of the website appears as it was witnessed by the Browsertrix crawler, along with authenticity information about the web archive that the end user can inspect.
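The project's script is not published, so the following is only a hypothetical sketch of its first half: turning spreadsheet rows into one crawl-job payload per URL while carrying the jurisdiction and geographic identifier along. The column names and the payload fields (`name`, `tags`, `config` with `seeds`) are assumptions modeled loosely on a crawl-configuration request; the Browsertrix API documentation is the authority on the real schema.

```python
import csv
import io

# Hypothetical spreadsheet columns; the actual BVN sheet layout is not published.
REQUIRED_COLUMNS = {"archive_name", "url", "jurisdiction", "geo_id"}


def build_crawl_jobs(csv_text: str) -> list[dict]:
    """Turn spreadsheet rows into one crawl-job payload per URL.

    The payload shape is an assumption, not the documented Browsertrix schema;
    check the API documentation before sending anything.
    """
    reader = csv.DictReader(io.StringIO(csv_text))
    missing = REQUIRED_COLUMNS - set(reader.fieldnames or [])
    if missing:
        raise ValueError(f"spreadsheet is missing columns: {sorted(missing)}")
    jobs = []
    for row in reader:
        jobs.append({
            "name": row["archive_name"].strip(),
            # Jurisdiction and geographic ID travel with the crawl so the finished
            # WACZ can later be matched to the right region on the map.
            "tags": [row["jurisdiction"].strip(), row["geo_id"].strip()],
            "config": {"seeds": [{"url": row["url"].strip()}]},
        })
    return jobs
```

Validating the columns up front means a malformed spreadsheet fails before any crawl is launched. Sending each payload to the crawler's API with an HTTP client is the remaining step and is not shown here.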

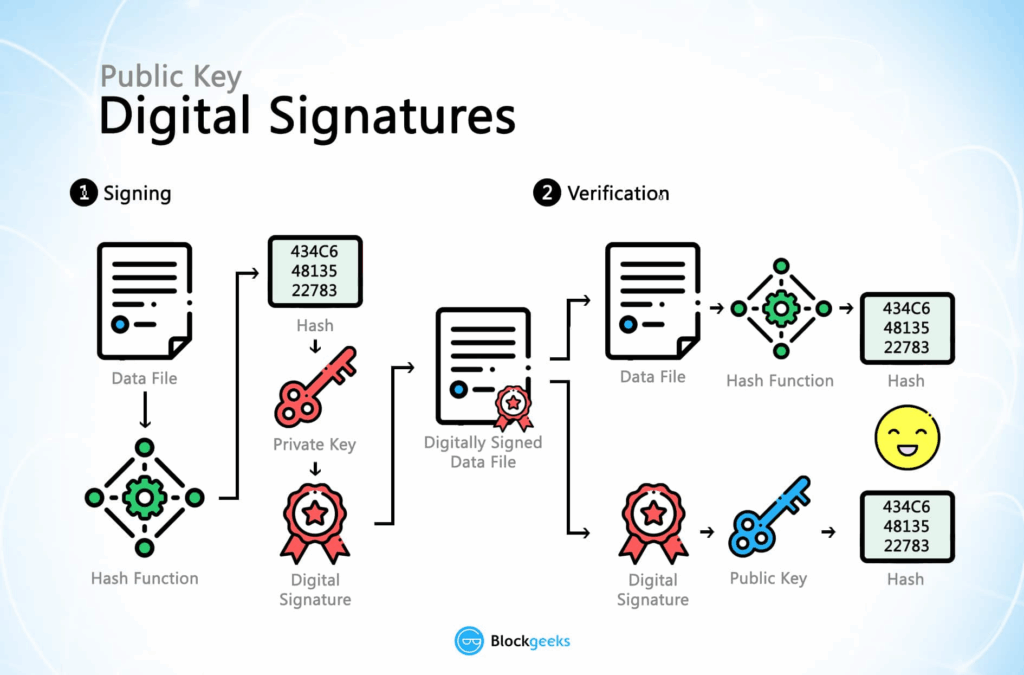

Signing and Verification of Web Archives

When a WACZ record of a website was created, a digital ‘fingerprint’ called a hash was also generated. If any single byte of the data changes, be it a pixel of an image or the timestamp of when it was collected, the hash computed from that copy will change as well. It is important for a newsroom like BVN to establish this fingerprint in case the provenance and integrity of a web archive are ever called into question. If that happens, there is a reliable record of the hash as it was at the time of capture.
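The fingerprint property described above is easy to demonstrate. This sketch assumes SHA-256, the algorithm the WACZ specification uses for its resource hashes; the sample bytes are invented for illustration.

```python
import hashlib


def fingerprint(data: bytes) -> str:
    """SHA-256 digest of in-memory bytes, as a hex string."""
    return hashlib.sha256(data).hexdigest()


def fingerprint_file(path: str, chunk_size: int = 1 << 20) -> str:
    """SHA-256 of a file, read in chunks so large WACZ files need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


original = b"<p>Resolution No. 2020-01</p>"
altered = original.replace(b"01", b"02")  # change exactly one byte

print(fingerprint(original) == fingerprint(original))  # True: same bytes, same hash
print(fingerprint(original) == fingerprint(altered))   # False: one byte differs
```

Reading in chunks gives the same digest as hashing the whole file at once, so the file variant is the practical choice for multi-gigabyte archives.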

The hash representing the crawled data in the WACZ file was also cryptographically signed with one of Starling Lab’s Let’s Encrypt certificates, since Starling Lab operates the Browsertrix server. This signature is an attestation of what web content was observed and when, and it ties the capture to a known authority via its domain name. This cryptographic provenance is packaged into the web archive file, as described in the WACZ specification and the WACZ Signing and Verification specification.

For manual crawls using ArchiveWeb.page, the WACZ files were signed with a keypair generated locally by the Chrome extension. The tool signs the crawled content with the user's private key, and the corresponding public key identifies the ArchiveWeb.page user who made the capture.

For each crawled URL, the hashed, signed, and timestamped provenance bundle was packaged alongside the crawled web content into a ZIP file with a .wacz extension in the file name, and verifiable with viewers developed by Webrecorder.
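Inside a signed WACZ, the hashes form a chain: each archived file's hash is listed in `datapackage.json`, and `datapackage-digest.json` records the hash of `datapackage.json` itself, which is what the signature covers. A minimal sketch of checking that link, assuming the file and field names from the WACZ Signing and Verification specification (`datapackage-digest.json` with a `hash` and an optional `signedData` block). Verifying the signature itself requires ECDSA and certificate checks and is deliberately left to Webrecorder's own tools.

```python
import hashlib
import json
import zipfile


def check_package_digest(wacz_file) -> bool:
    """Check that datapackage-digest.json records the hash of datapackage.json.

    Only the hash chain is checked; the signature inside "signedData" is not
    verified here.
    """
    with zipfile.ZipFile(wacz_file) as zf:
        package_bytes = zf.read("datapackage.json")
        digest = json.loads(zf.read("datapackage-digest.json"))
    expected = "sha256:" + hashlib.sha256(package_bytes).hexdigest()
    if digest.get("hash") != expected:
        return False
    signed = digest.get("signedData")
    # When a signature block is present, it should cover the same package hash.
    return signed is None or signed.get("hash") == expected
```

Combined with per-file checks of `datapackage.json`, this ties every archived byte back to the single hash that was signed at capture time.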

Timestamping and Registering Web Archives

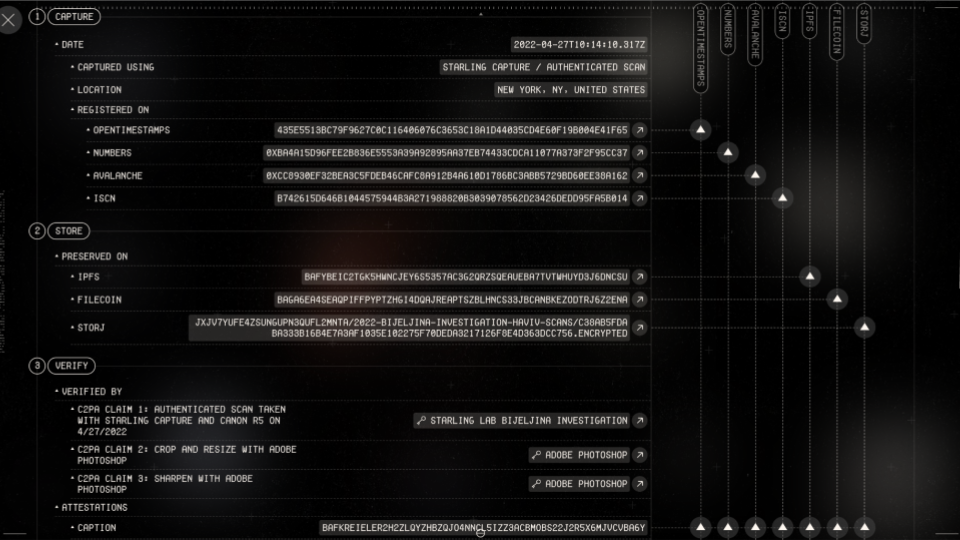

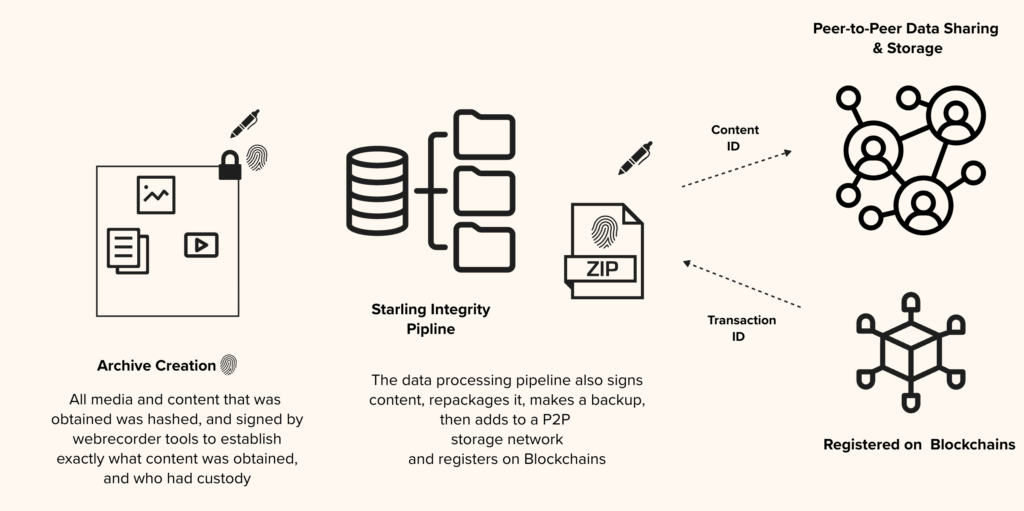

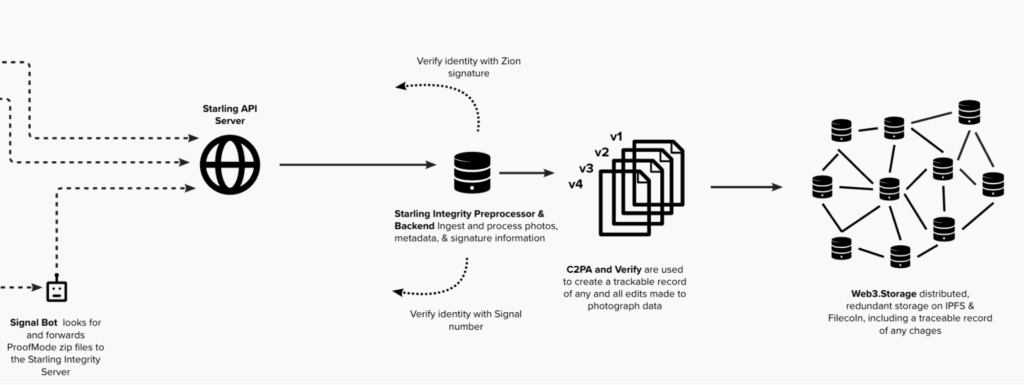

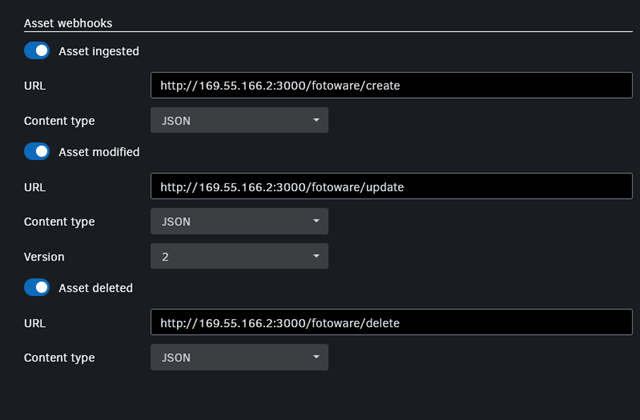

Once an authenticated web archive is produced, it is uploaded to the Starling Integrity pipeline.

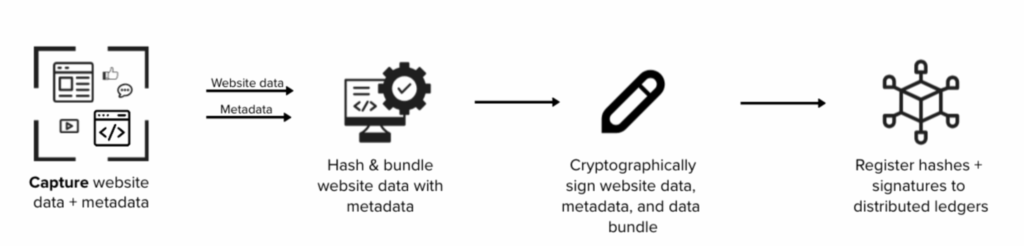

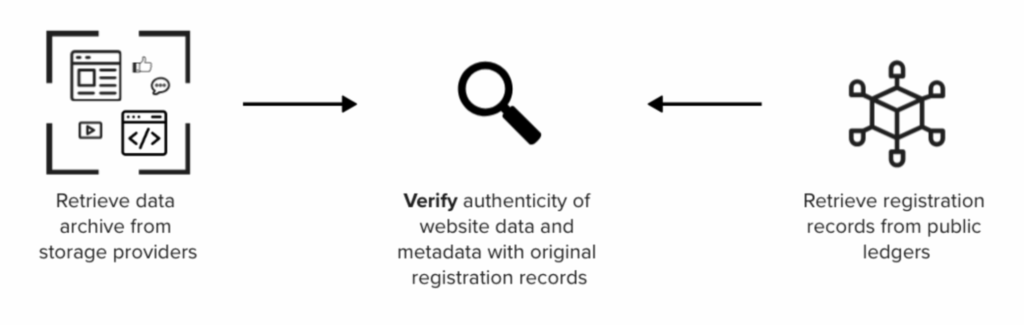

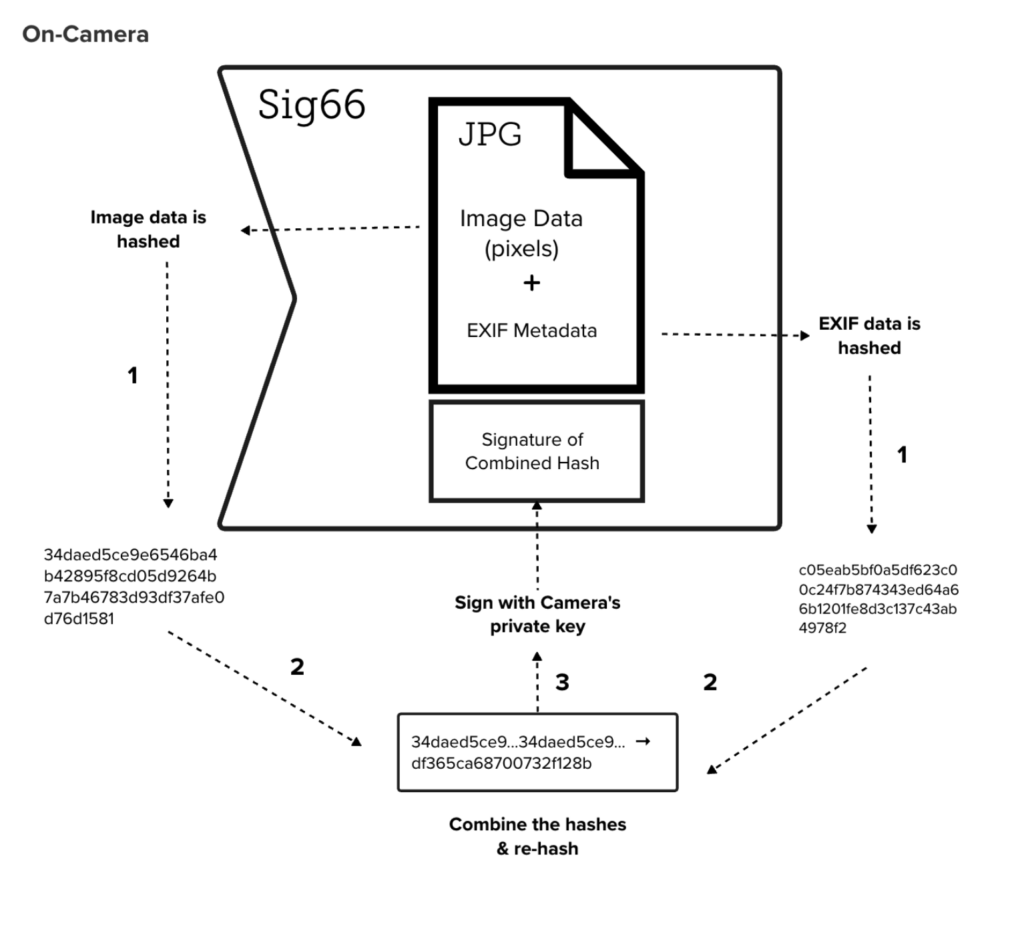

The Starling Integrity pipeline streamlines the complex workflow of ingesting digital media and hashing, signing, timestamping, and registering it to establish records of provenance for newsrooms like BVN. Without the pipeline, all 350 records would have had to be processed manually with multiple tools. The following diagram illustrates the registration process.

As part of the Starling Integrity workflow, the hash of each WACZ file was submitted to OpenTimestamps servers to obtain a proof of existence at the time of registration.
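OpenTimestamps calendar servers do not write every submitted hash to Bitcoin individually; they aggregate many digests into a Merkle tree and commit only the root, and each submitter later receives a path from its hash to that root. The sketch below shows that general aggregate-and-prove technique using SHA-256 and a simple rule of duplicating the last node on odd-sized levels. It illustrates the idea only; it is not the OpenTimestamps proof format or client library.

```python
import hashlib


def _sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_root_and_proofs(leaves: list[bytes]):
    """Aggregate leaf digests into one Merkle root plus an inclusion proof per leaf.

    Each proof is a list of (side, sibling) steps; `side` says whether the
    sibling is hashed on the "left" or the "right" on the way up to the root.
    """
    if not leaves:
        raise ValueError("need at least one leaf")
    proofs = [[] for _ in leaves]
    positions = list(range(len(leaves)))  # index of each leaf's ancestor in the current level
    level = list(leaves)
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # duplicate the last node on odd-sized levels
        for leaf, pos in enumerate(positions):
            if pos % 2 == 0:
                proofs[leaf].append(("right", level[pos + 1]))
            else:
                proofs[leaf].append(("left", level[pos - 1]))
            positions[leaf] = pos // 2
        level = [_sha256(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0], proofs


def verify_inclusion(leaf: bytes, proof, root: bytes) -> bool:
    """Recompute the path from a leaf to the root and compare."""
    node = leaf
    for side, sibling in proof:
        node = _sha256(sibling + node) if side == "left" else _sha256(node + sibling)
    return node == root


wacz_hashes = [hashlib.sha256(f"archive-{i}".encode()).digest() for i in range(5)]
root, proofs = merkle_root_and_proofs(wacz_hashes)
print(verify_inclusion(wacz_hashes[3], proofs[3], root))  # True
```

The design choice is economy: one on-chain commitment can timestamp thousands of hashes, while each proof grows only logarithmically with the number of hashes aggregated.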

File hashes and metadata, including tool and process information specified in the crawl spreadsheet, were then registered on distributed ledgers to establish a public record of the web archives' creation. This provenance information was stored and distributed with IPFS, a peer-to-peer data sharing system, while the WACZ files themselves are stored separately, so that users who gain access to the web archives can verify their integrity against the blockchain registrations.

The provenance data were registered on three different blockchains: Numbers, Avalanche, and LikeCoin. These registrations form a public, immutable index that anyone using the Combating Racism data dashboard can inspect and verify against if the authenticity of the web content is called into question, or if another newsroom wants a reliable source of information for a story about the data in this project.

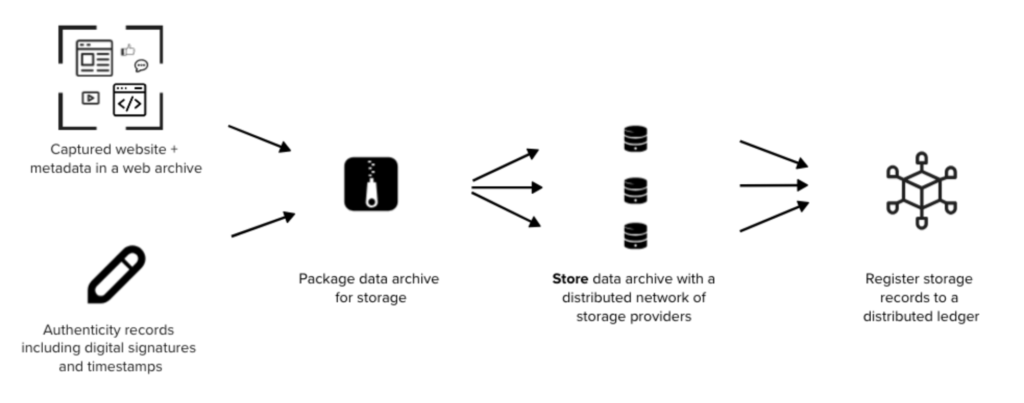

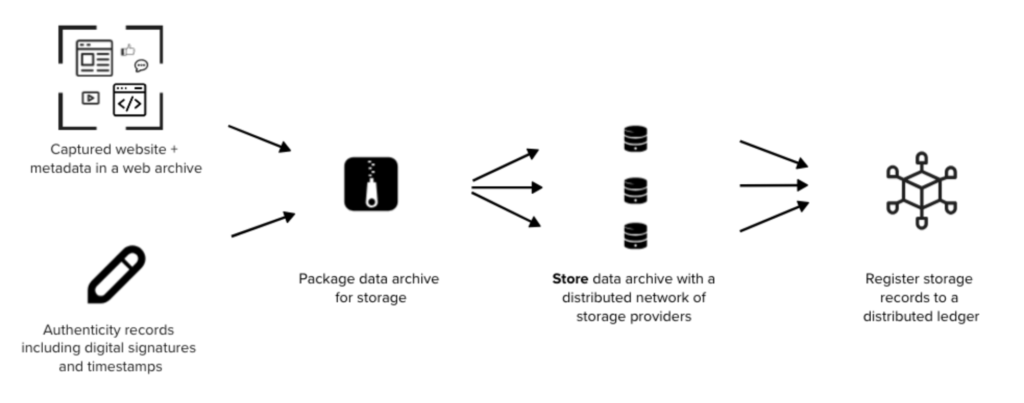

Store

Preserving Web Archives with Distributed Storage

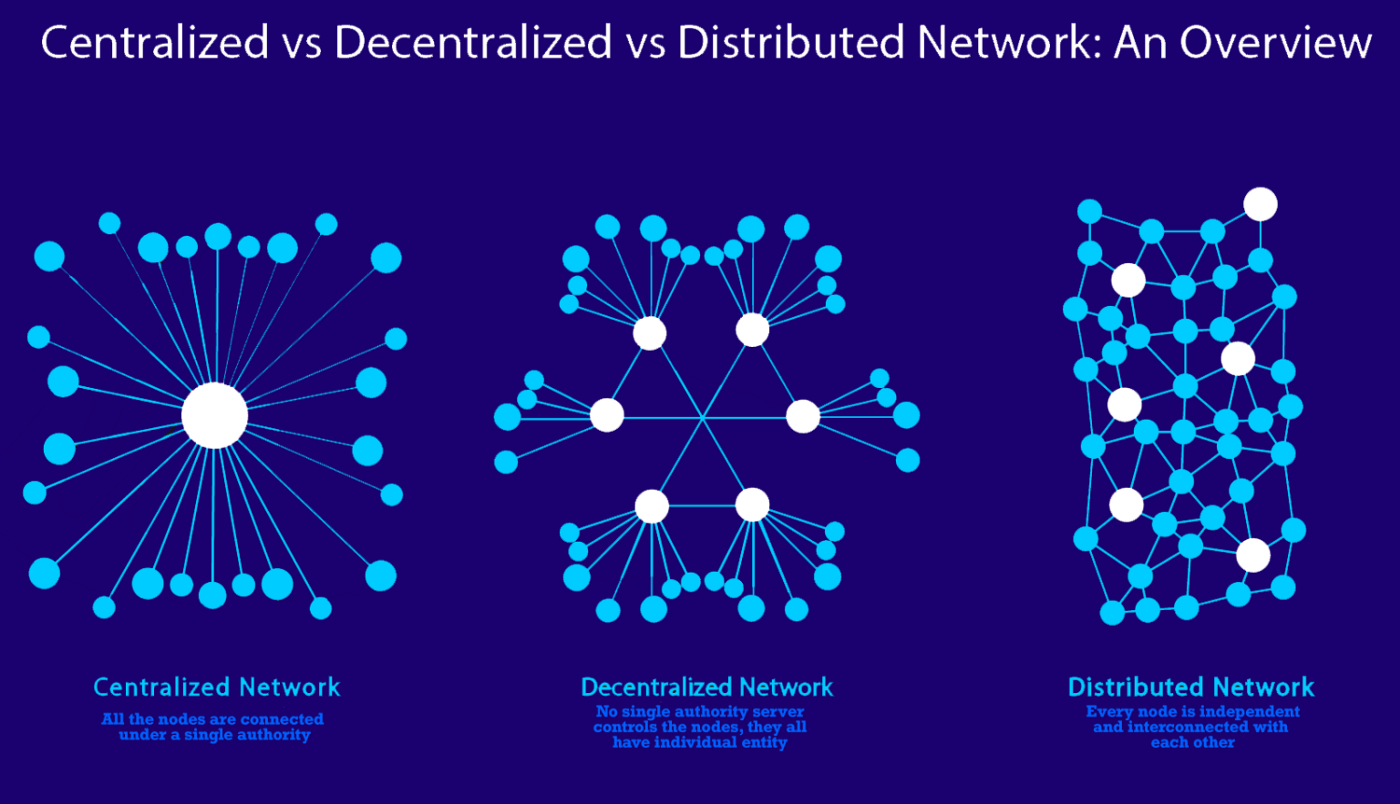

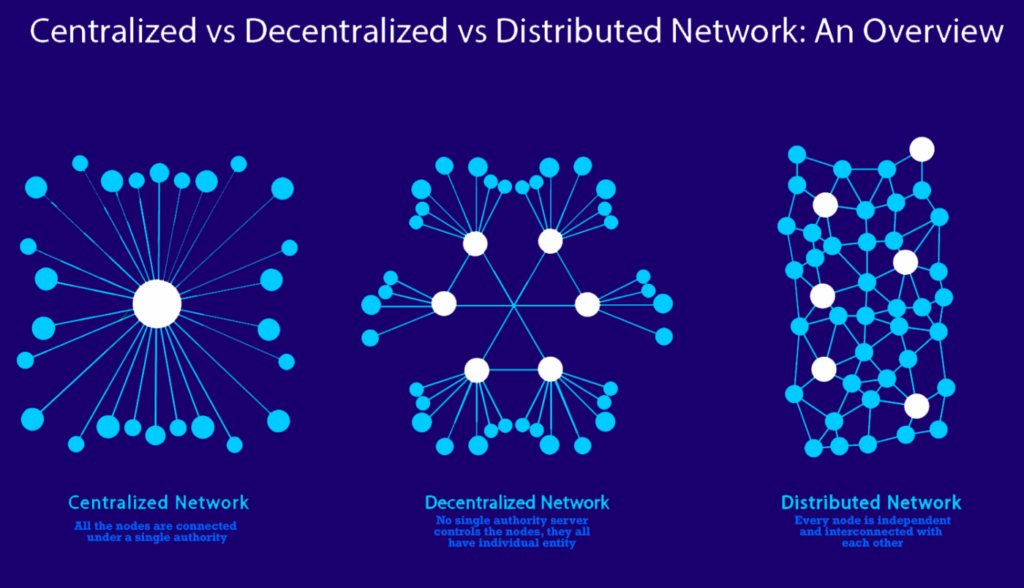

While the Starling Integrity pipeline locks the authenticity information of the web archives into immutable public ledgers, the next step was to ensure that the WACZ files themselves are properly preserved. Redundant storage is crucial to keeping at-risk content from disappearing from the web, so Starling preserves its archives on decentralized storage networks in addition to centralized systems.

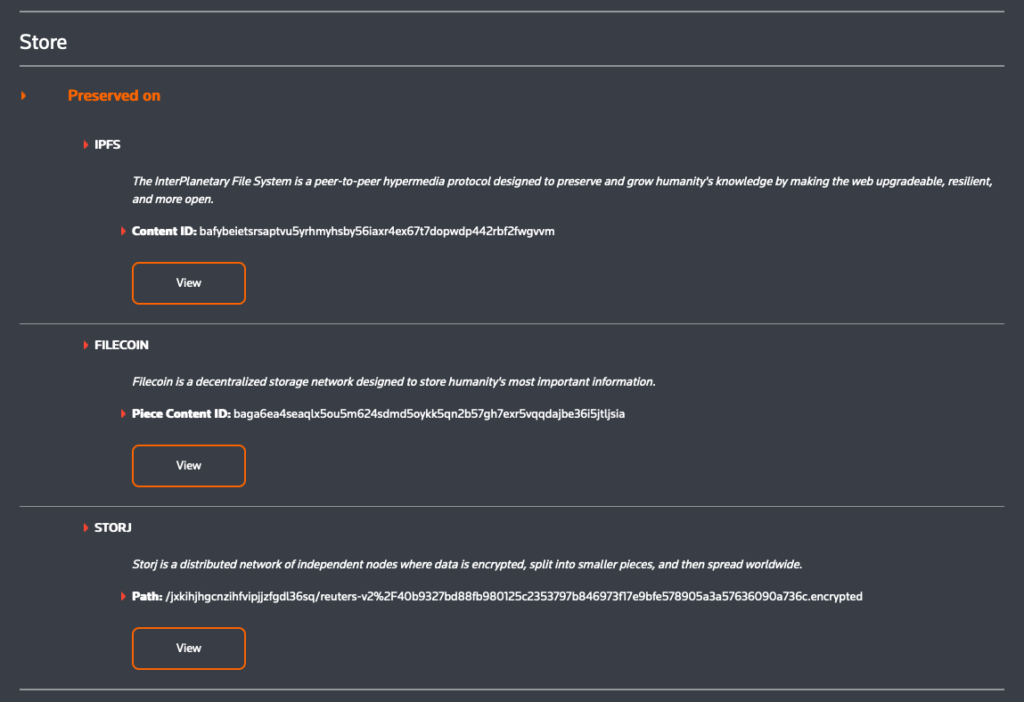

The WACZ files are identified by content identifiers (CIDs), which are derived from the exact data of each file, and pinned to IPFS, the peer-to-peer data sharing system, using Web3.Storage. This allows the files to be downloaded from different servers, but IPFS is not a long-term preservation system: the servers make no promise to host the files for any particular amount of time.
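How a CID is derived from content can be shown with the simplest kind of CID: version 1, the `raw` codec (0x55), and a sha2-256 multihash (code 0x12, 32-byte digest), encoded as lowercase base32 with the multibase prefix `b`. This is an illustrative sketch only. Files uploaded through Web3.Storage are typically chunked into a UnixFS DAG, so the CID shown for a real WACZ file will usually not match what this function returns for the same bytes.

```python
import base64
import hashlib


def raw_cidv1(data: bytes) -> str:
    """CIDv1 for a single raw block: <version><codec><multihash>, base32-encoded.

    Every code used here is below 0x80, so each one fits in a single varint byte.
    """
    digest = hashlib.sha256(data).digest()
    multihash = bytes([0x12, len(digest)]) + digest  # sha2-256 code + digest length (32)
    cid_bytes = bytes([0x01, 0x55]) + multihash       # CID version 1 + raw codec
    # Multibase prefix "b" = RFC 4648 base32, lowercase, without padding.
    return "b" + base64.b32encode(cid_bytes).decode("ascii").lower().rstrip("=")


print(raw_cidv1(b"hello").startswith("bafkrei"))  # True for every raw sha2-256 CIDv1
```

Because the identifier embeds the content's hash, any node that serves bytes under a given CID can be checked by recomputing it.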

Decentralized preservation of the files relies on the Filecoin network. In addition to pinning data on IPFS, Web3.Storage stores uploaded data on Filecoin nodes operated by several storage providers, who make collateral-backed promises to preserve the data over a specific time period.

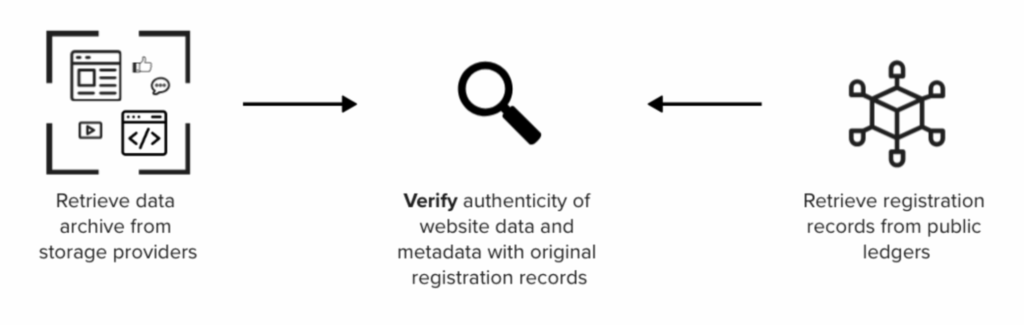

Regardless of where one retrieves the web archives, whether that be IPFS, Filecoin, a centralized host or other decentralized networks, the authenticity of the files can be verified against blockchain registration records and/or WACZ-bundled signatures such that each crawl can be attributed to its archiver.
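Because the registered hash, not the host, establishes authenticity, retrieval logic can treat every copy as untrusted until it checks out. A minimal sketch, assuming SHA-256 as the registered hash and using stand-in fetch functions; the source names and fetchers are hypothetical, and real retrieval from IPFS gateways or Filecoin would use an HTTP client or dedicated tooling.

```python
import hashlib
from typing import Callable, Iterable


def fetch_verified(sources: Iterable[tuple[str, Callable[[], bytes]]],
                   expected_sha256: str) -> tuple[str, bytes]:
    """Try each (name, fetch) source in turn; return the first copy whose hash matches.

    A source that errors out or returns altered bytes is skipped, so any mirror
    is acceptable as long as its copy matches the registered hash.
    """
    problems = []
    for name, fetch in sources:
        try:
            data = fetch()
        except OSError as exc:  # network failures, timeouts, missing files
            problems.append(f"{name}: {exc}")
            continue
        if hashlib.sha256(data).hexdigest() == expected_sha256.lower():
            return name, data
        problems.append(f"{name}: hash mismatch")
    raise LookupError("no source returned a verified copy: " + "; ".join(problems))
```

Ordering the sources from fastest to most durable (for example, a web server first and a long-term archive last) keeps retrieval quick without ever weakening the integrity check.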

Verify

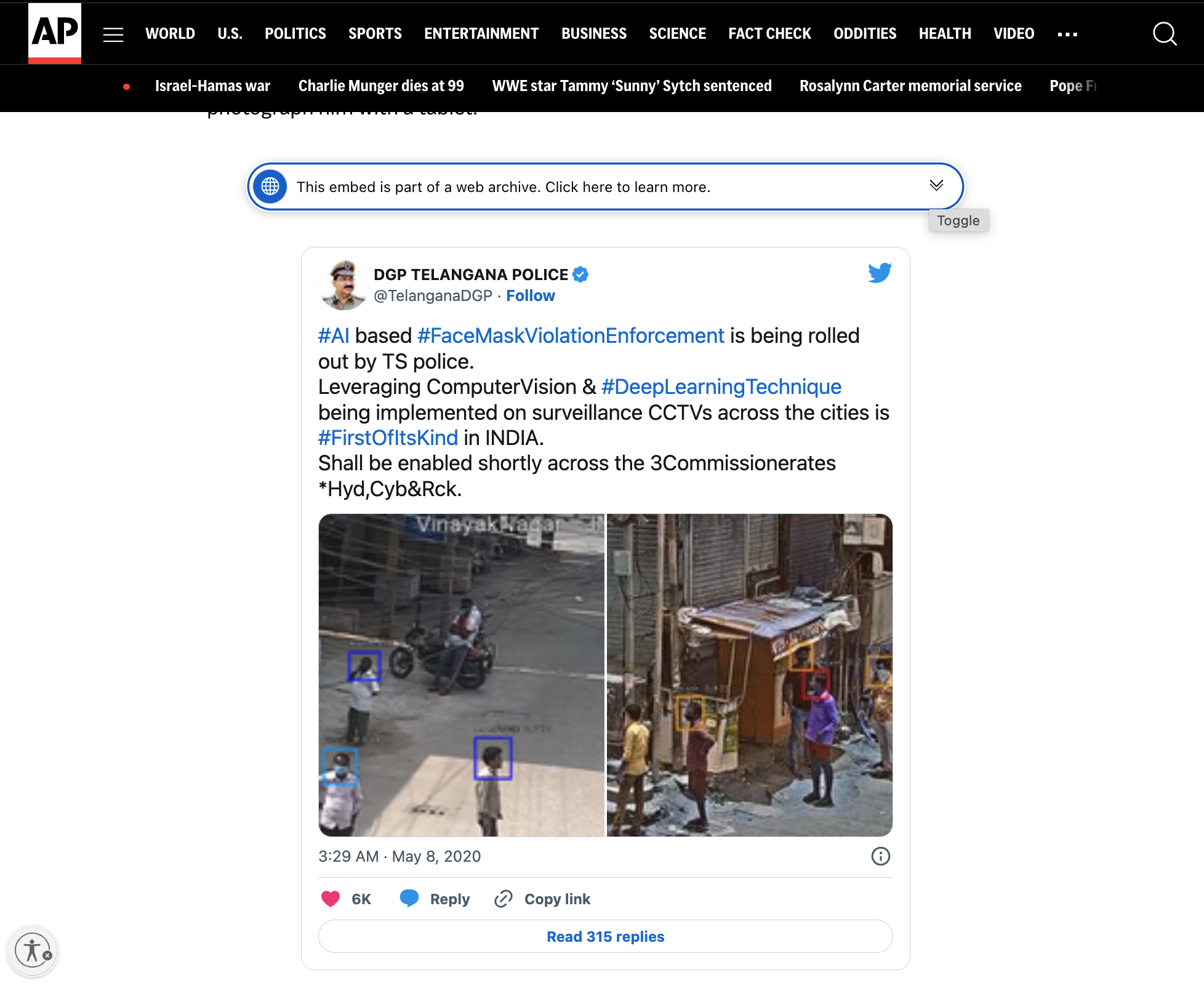

Screenshots are simply pixel reproductions of what an observer saw on a website, and they carry no audit trail recording what the observer captured. Embedded social media posts can also be taken down or changed at any time, because the actual content is still hosted by the website or company that originally published it. This puts embedded web content at risk of disappearing, erasing the sources a journalist is trying to cite in their reporting.

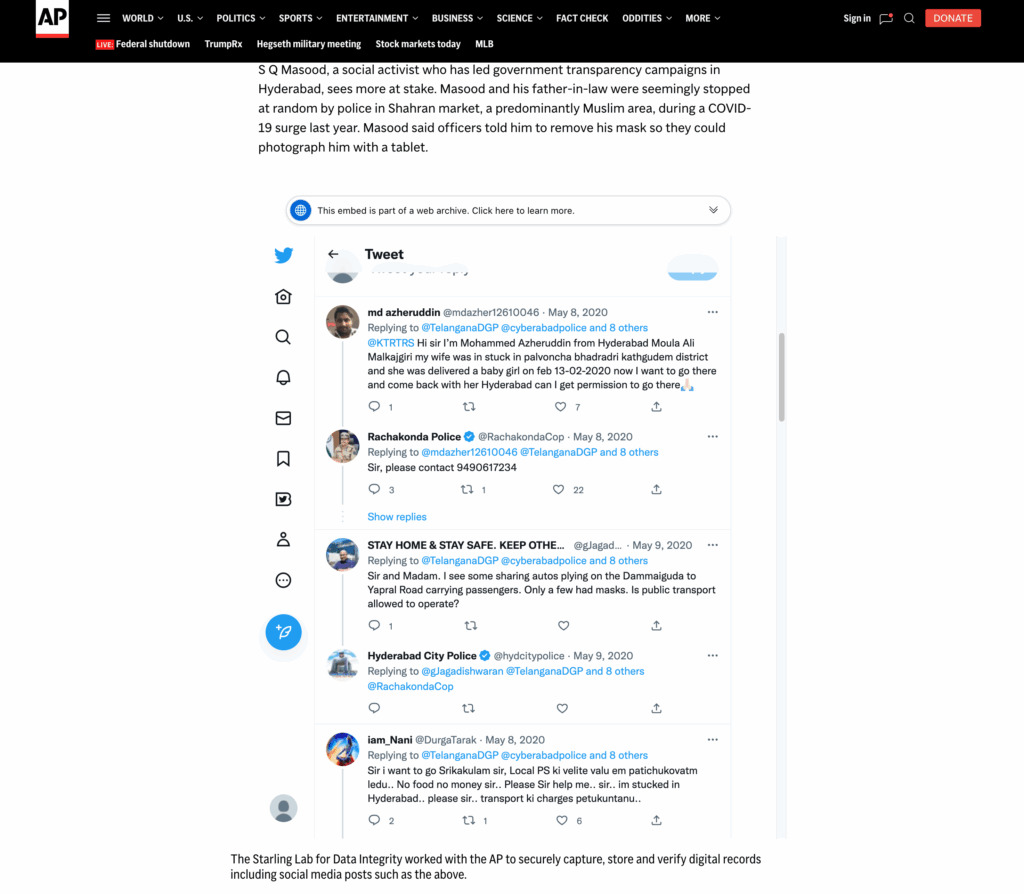

Self-contained WACZ files containing provenance information can be preserved and distributed without the constraints and risks of embedding third-party-hosted web content. This case study showcases the embedding of self-hosted (and redundantly preserved) WACZ files alongside additional authenticity information, which enables readers to reliably inspect the rich context captured in these web archives, as they were seen by the observers who digitally signed their crawls.

In the following sections, we discuss the general design of the displays created to show web archives and related metadata. We then discuss implementation details for each of the two places these designs were used: a data dashboard and news articles on a separate website.

Display Design for Web Archives and Metadata

For this project, web archives needed to be presented on two different sites, the Mapping Black California interactive data dashboard and the Black Voice News website, which are hosted on separate WordPress instances. The data dashboard is a microsite built by the Esri team, and the news website is managed by Newspack.

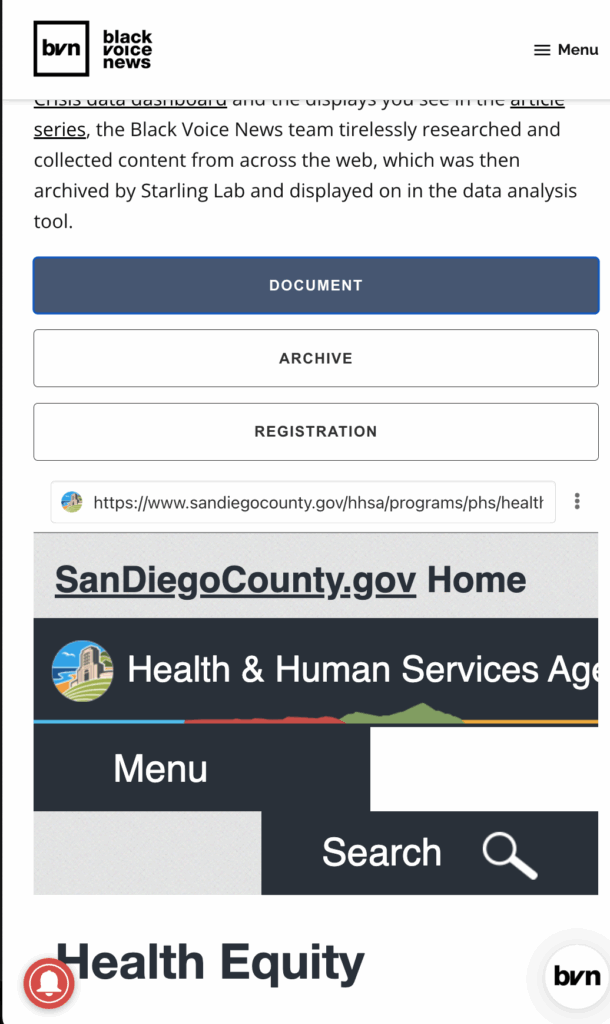

To provide the body of evidence presented in the Authenticated WACZ Display on the Black Voice News website and in the displays of the Combating Racism as a Public Health Crisis data dashboard, the Black Voice News team tirelessly researched and collected content from across the web. That content was then archived and integrated into a custom web component we called the wacz-lightbox.

The Starling Lab team, alongside developer Giacomo Boscani-Gilroy and Esri’s Joe Allen, prototyped a new kind of visual display for both web archives and their accompanying authenticity information. Two versions of the component were implemented. One version of this display was integrated into the data dashboard’s WordPress source code as a web component. The second version, used on the Newspack-managed news website, incorporated the web component as a WordPress plugin.

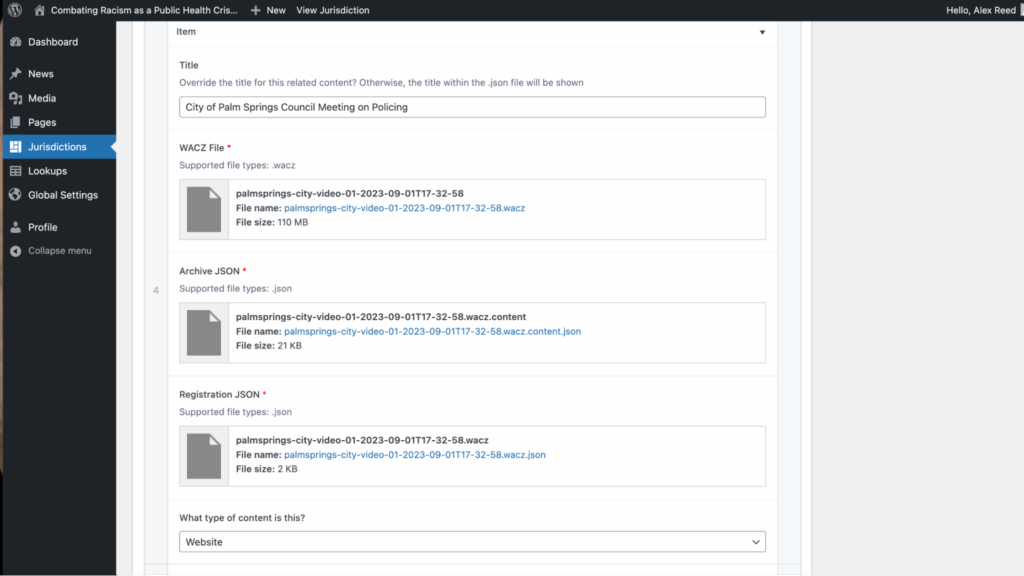

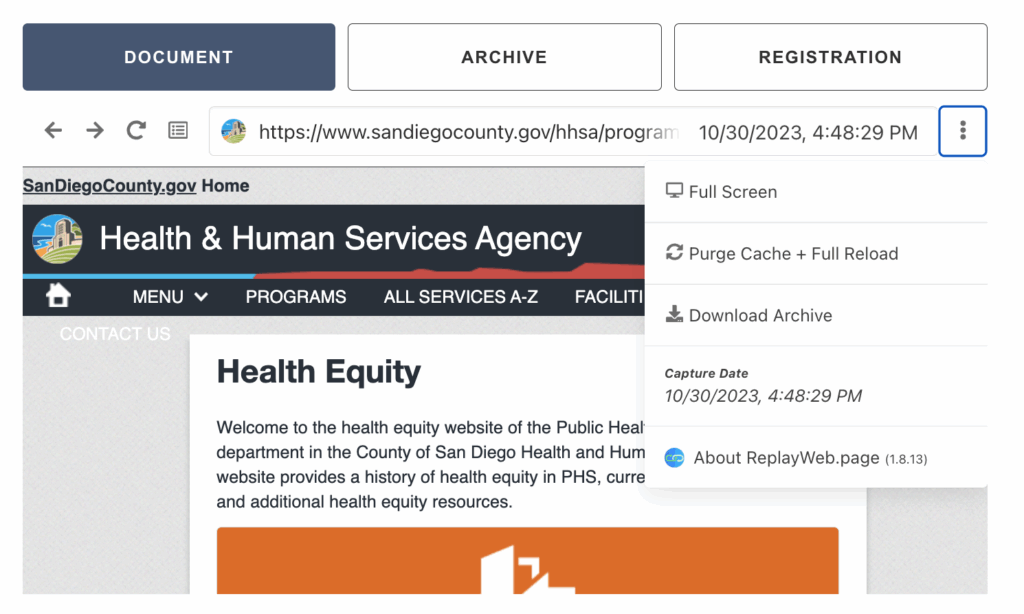

This component could not only display the explorable WACZ file but also incorporate the metadata files related to each web archive. Each web archive has two associated metadata files. The first contains file metadata, such as a description with the crawled URL, the crawler identity, and the date and time the site was crawled.

The second metadata file contained information about the publicly registered authenticity records of the web archive, such as the hashes of the WACZ file and the blockchain registration information.
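A display like this has to merge the two metadata files into one set of fields for each archive card. The sketch below shows one way to do that; every JSON key it reads (`name`, `url`, `crawled_at`, `observer`, `sha256`, `registrations`, `chain`, `tx_id`) and the `ArchiveCard` type are hypothetical, since the project's actual metadata schema is not documented here.

```python
import json
from dataclasses import dataclass, field


@dataclass
class ArchiveCard:
    archive_name: str
    original_url: str
    archived_on: str
    observed_by: str
    package_hash: str
    registrations: dict[str, str] = field(default_factory=dict)  # chain -> transaction ID


def load_card(file_metadata: str, authenticity_metadata: str) -> ArchiveCard:
    """Merge one archive's two metadata files into the fields a display card shows.

    Every JSON key used here is hypothetical, not the project's documented schema.
    """
    meta = json.loads(file_metadata)
    auth = json.loads(authenticity_metadata)
    return ArchiveCard(
        archive_name=meta["name"],
        original_url=meta["url"],
        archived_on=meta["crawled_at"],
        observed_by=meta["observer"],
        package_hash=auth["sha256"],
        registrations={r["chain"]: r["tx_id"] for r in auth.get("registrations", [])},
    )
```

Keeping file metadata and authenticity records in separate files lets the registration details be added after the pipeline finishes, without touching the description written at crawl time.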

Displaying Web Archives in a Data Dashboard

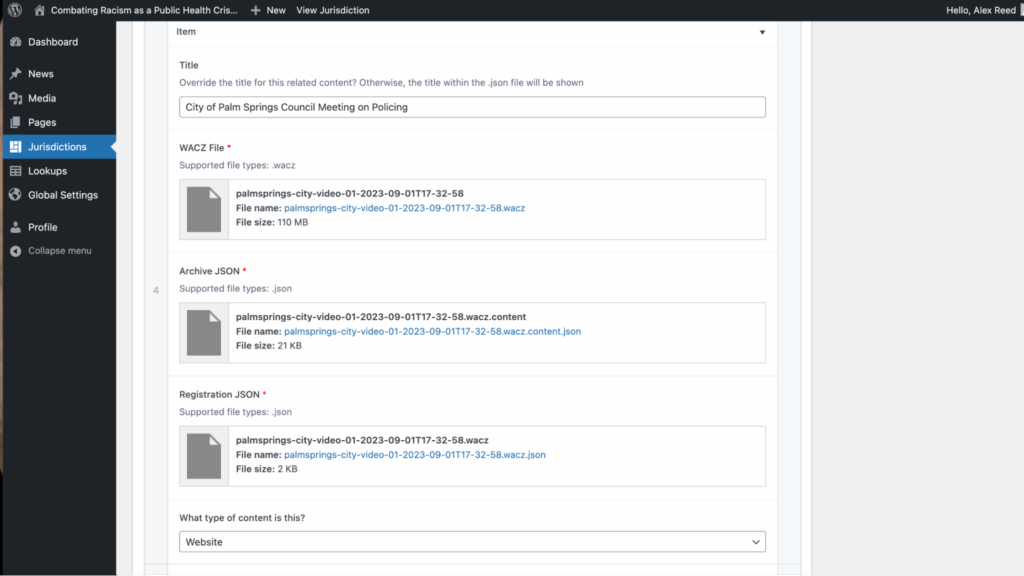

Using the design for the display, Joe Allen from Esri developed a customization in the Combating Racism as a Public Health Crisis data dashboard WordPress instance that enabled us to add the web component created by Giacomo Boscani-Gilroy.

This customization enabled us to upload a WACZ file with two JSON metadata files as media, create “Jurisdictions” posts corresponding to areas of the California map, and filter the data and web archives shown in the interactive dashboard. This was accomplished by modifying the PHP files of the WordPress instance to embed the web component used for displaying explorable web archives.

Once web archives are added, the reader can use the data dashboard to filter down to specific jurisdictions to reveal the relevant archives. Clicking on a web archive card will reveal an explorable interface for archived content and the WACZ file’s metadata, giving the reader all the necessary information to verify the preserved records.

Displaying Web Archives in a Managed WordPress News Site

The data dashboard built by Esri for this project was referenced in a five-part series of articles titled Combating Racism as a Public Health Crisis, published in November 2023. The team implemented a display for the web archives on the Black Voice News WordPress website, which is managed by Newspack. Unlike the data dashboard, whose source code was edited directly, Newspack’s managed WordPress platform does not allow direct code edits. To work around this, Starling Lab developed a WordPress plugin called starling-replay-web-page, which Newspack could add to its managed sites.

The plugin enabled the use of a shortcode containing a media ID to embed an explorable web archive onto a news article.

Once the shortcode is rendered on the Black Voice News website, the web archive and its associated metadata are displayed for readers to explore and verify.

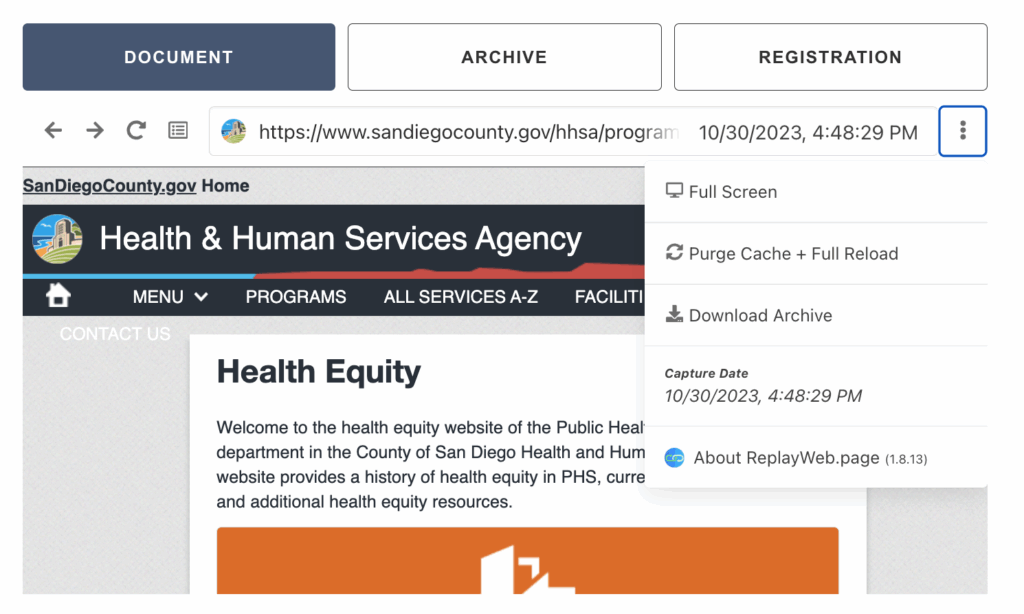

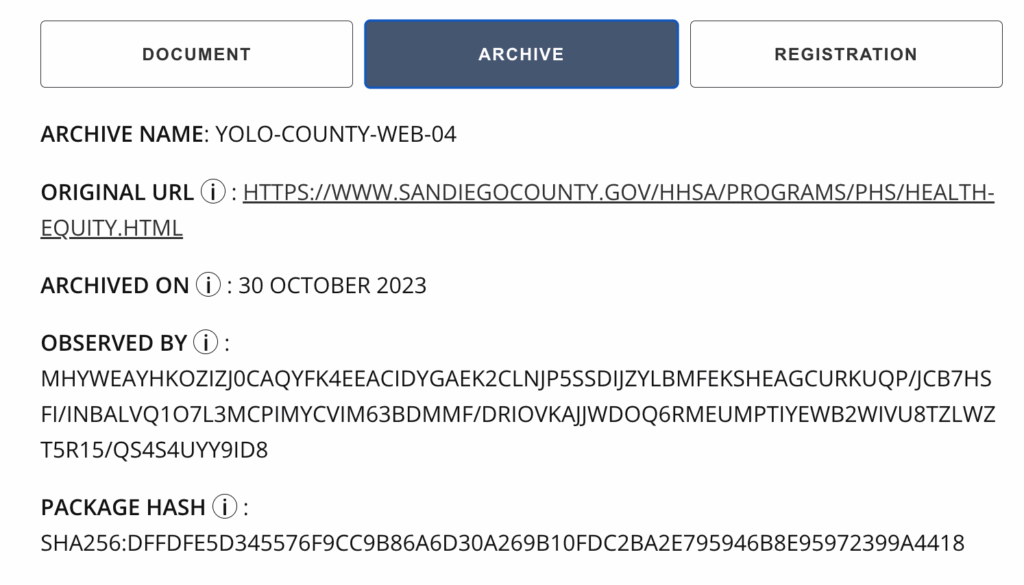

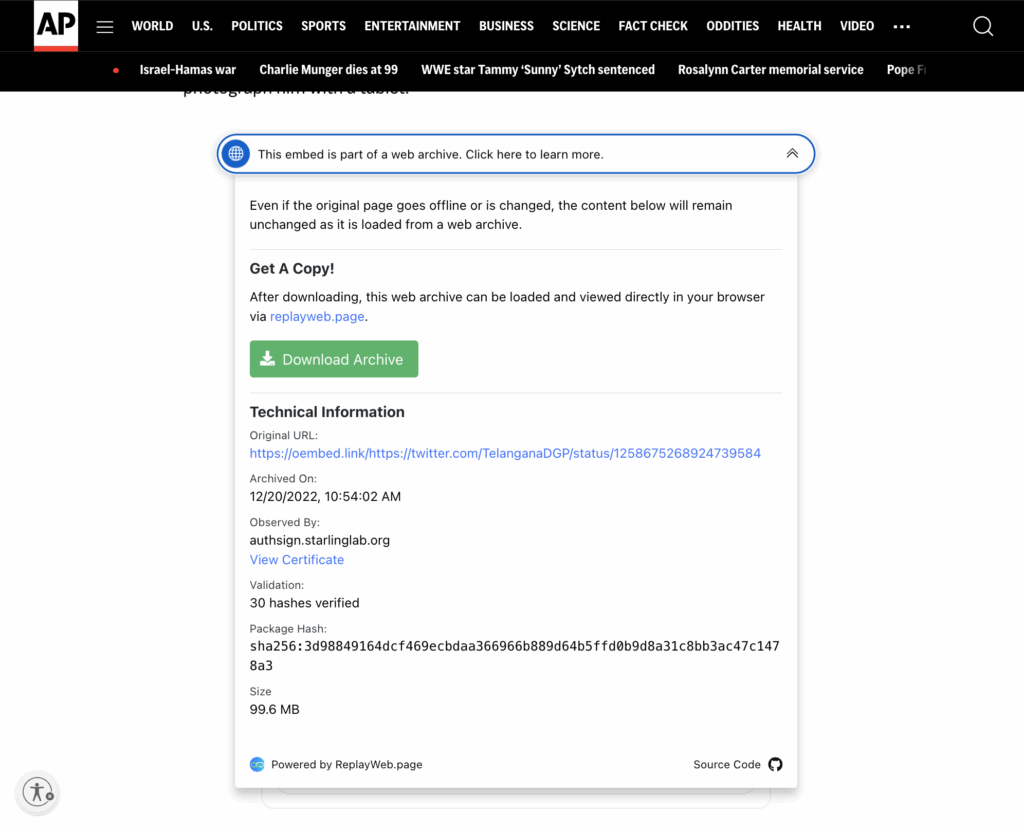

Verifying Metadata of a Web Archive

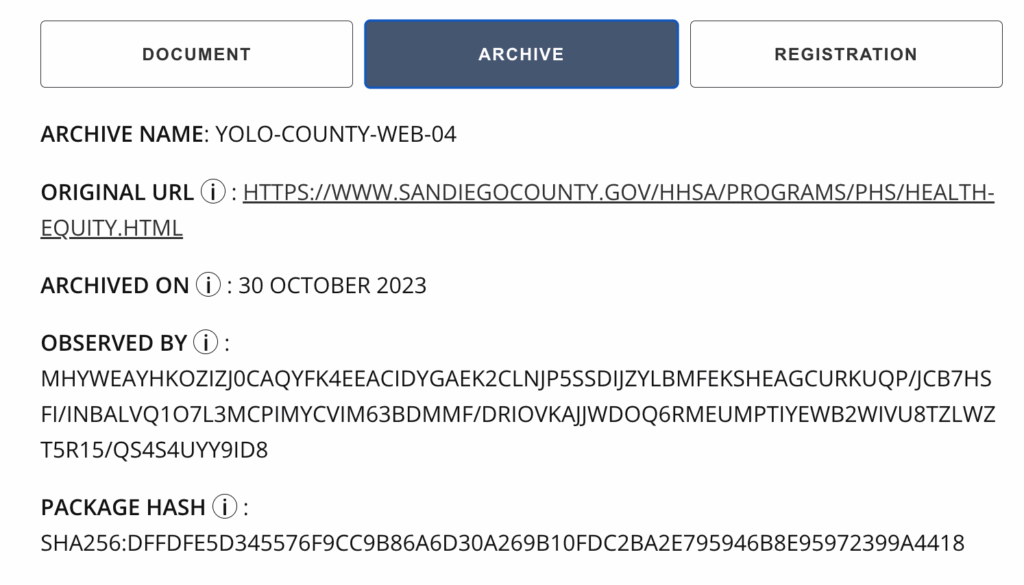

A reader can navigate to the “Archive” tab to view verification information related to the web archive itself.

Here is what the fields represent:

- Archive Name – The human-readable name given to the web archive based on the jurisdiction and type of content

- Original URL – The original link these webpages were archived from

- Archived On – The date that the website archive was crawled

- Observed By – The signing identity of the observer, which may be a Starling Lab SSL certificate or a public key associated with the ArchiveWeb.page user

- Package Hash – The hash of the web archive

An important part of any investigative process is the ability to cross-reference information. Creating a useful data dashboard and reporting on racism as a public health crisis requires auditable records of evidence. Adding information such as a human-readable name, the original URL, and who observed the page and when makes it easier to cross-reference similar information and track down supporting evidence.

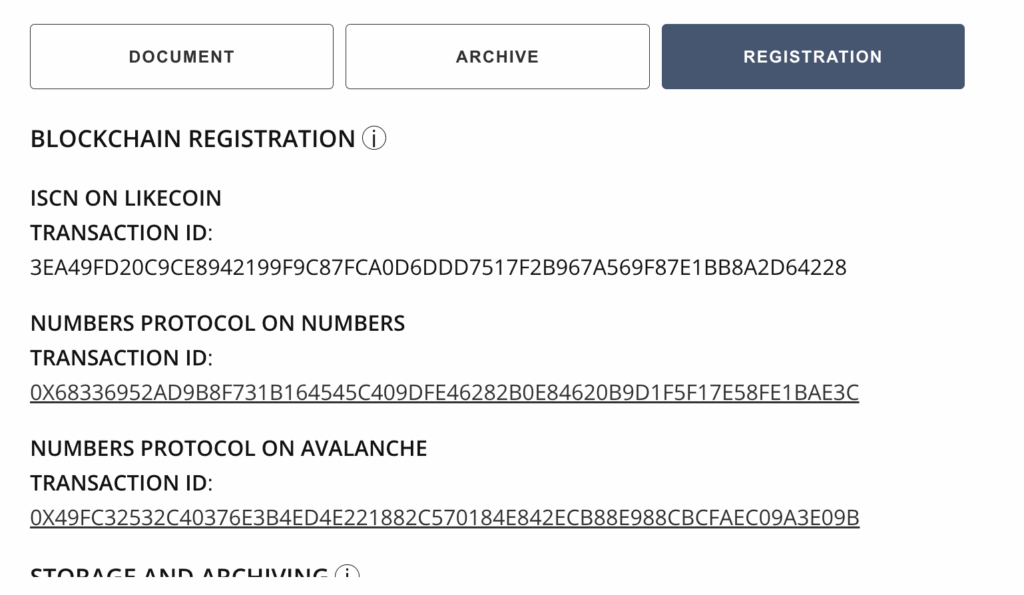

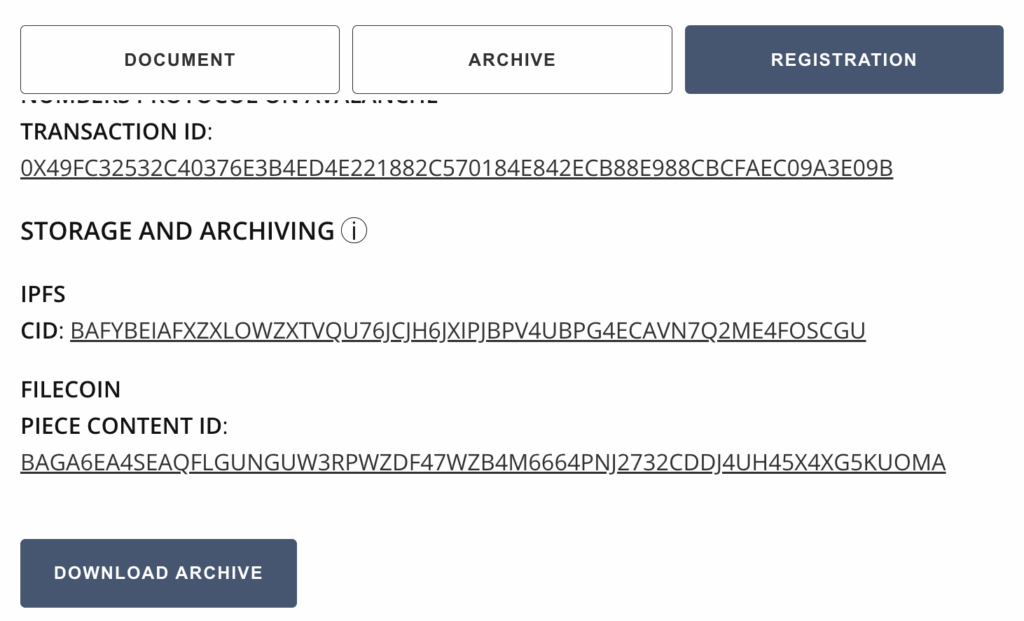

Verifying Authenticity Information of a Web Archive

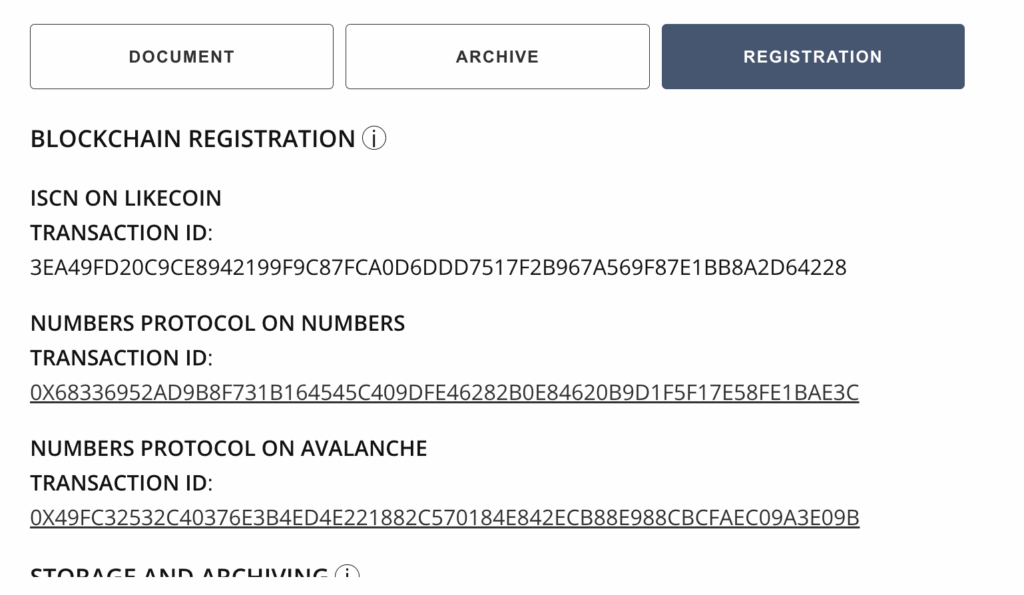

A reader can navigate to the “Registration” tab to view blockchain registration and preservation information about the web archive.

The blockchain registrations contain information specifically about the provenance of the web archives. They allow readers to navigate to records on several public blockchains to verify when the web archives were established and whether a WACZ file they have (which may be downloaded from other sources) is the authentic version crawled by Starling Lab and BVN.

Here is what the fields represent:

- Blockchain Registration – Hashes of the web archives & metadata about the archive are registered on different blockchains to establish an immutable record of what was captured and when

- ISCN on LikeCoin – Registrations on LikeCoin can be explored on ISCN, searching by the Transaction ID

- Numbers Protocol on Numbers – Registrations on Numbers can be explored with Numbers Explorer, searching by the Transaction ID

- Numbers Protocol on Avalanche – Registrations on Avalanche can be explored on Snowtrace, searching by the Transaction ID

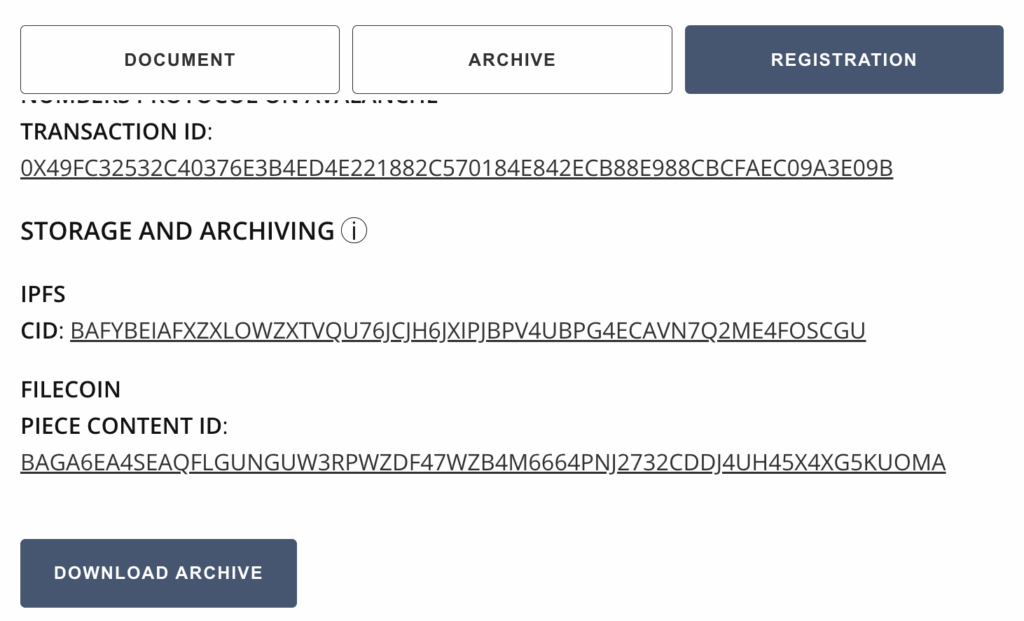

- Storage and Archiving – Copies of these web archives were stored in a resilient, peer-to-peer system (IPFS), and archived in a long term crypto-incentivized distributed storage system (Filecoin)

- IPFS CID – The hash-based content identifier of the web archive, if even a tiny detail (from a pixel to a character in a document) in the WACZ file changes, this identifier will change

- Filecoin Piece CID – A unique identifier that can be used to locate the web archive stored on Filecoin

- Download Archive – This enables readers to download the WACZ file, which they can use to produce a hash and verify against the blockchain records
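For readers who download a WACZ file, the check described in the Download Archive field can be sketched in Python as below. This is a minimal sketch under two assumptions. First, the registration record exposes a SHA-256 digest of the whole WACZ file. Second, that digest may be written with a `sha256:` prefix. The case study does not specify the exact format, and `matches_registration` is a hypothetical helper name.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in fixed-size chunks so large WACZ files need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_registration(path: Path, registered_hash: str) -> bool:
    """Compare a local file's SHA-256 with a hex digest copied from a registration record.

    Hypothetical helper: assumes the record shows the digest as bare hex or with a
    "sha256:" prefix, in either letter case.
    """
    expected = registered_hash.strip().lower().removeprefix("sha256:")
    # compare_digest avoids timing differences; both arguments are ASCII hex strings.
    return hmac.compare_digest(sha256_file(path), expected)
```

A `True` result means the downloaded file is byte-for-byte identical to the registered one. Any re-encoding, trimming, or edit yields `False`.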

If a reader chooses to download the WACZ file, they can explore it with ReplayWeb.page without visiting the BVN website. Should the BVN website become unavailable in the future, readers can still explore the web archive for their own investigative or reporting work. They can also use the blockchain registrations to establish the provenance of the archives.


Technology

Capture

Archiving Content on the Web

The approach to web capture taken by Black Voice News and Starling Lab was unique. Much of the data came from local government websites, which often self-host video and databases that are hard to search and access without specialized investigative techniques. This content is also vulnerable to takedowns and link rot as budgets change, staff turn over, and systems go unmaintained. To address both the lack of access and the likely disappearance of this content, the team created authenticated web archives.

Web archives created with the Webrecorder suite of tools enabled the team to capture the full context of each web page in a ZIP-based archive called a WACZ file. The information collected includes all content on a webpage, such as articles, comments, likes, and multimedia files such as audio and video. A WACZ file is a copy of the code and media that make up the webpage as it appeared, along with an index of what was captured. When users later display (or “replay”) the page with a compatible viewer, it remains fully interactive, just as it was at the time of capture.
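Because a WACZ is an ordinary ZIP file, its contents can be inspected with standard tools. The minimal Python sketch below uses only the standard library. It lists the top-level folders of a WACZ and the resources declared in its `datapackage.json`. The folder names (`archive/`, `indexes/`, `pages/`) and the `resources`/`path` fields reflect our reading of the WACZ 1.x specification and should be treated as assumptions. `summarize_wacz` is a hypothetical helper, not part of any Webrecorder tool.

```python
import json
import zipfile
from collections import Counter


def summarize_wacz(path) -> dict:
    """Hypothetical helper: summarize a WACZ's layout and declared resources.

    Assumes the WACZ 1.x layout (datapackage.json at the root, WARC data under
    archive/, indexes under indexes/, page lists under pages/).
    """
    with zipfile.ZipFile(path) as zf:
        names = zf.namelist()
        # Count entries per top-level folder, e.g. {"archive": 1, "indexes": 1, ...}.
        folders = Counter(name.split("/", 1)[0] for name in names if "/" in name)
        package = json.loads(zf.read("datapackage.json"))
    return {
        "folders": dict(folders),
        "declared_resources": [r.get("path") for r in package.get("resources", [])],
    }
```

Comparing `declared_resources` with the actual ZIP entries is a quick sanity check before handing a file to a replay tool.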

Most of the web archives were created with an automated tool called Browsertrix, running on a custom instance operated by Starling Lab. Some were created manually with a Chrome extension called ArchiveWeb.page when websites were too complex for a bot to crawl. Both tools visit a site and record all the content loaded during the browsing session, including site code and assets.

The list of URLs to crawl, along with the other metadata fields, was researched and populated by the Black Voice News investigative team. In addition to a name for each web archive, the team included several other metadata fields. Each URL was assigned a jurisdiction and a geographic identifier so that it could later be matched to the correct region in the interactive map created by Esri.

A custom Python script read the spreadsheet listing all the URLs and metadata fields and automated the Browsertrix crawls through its API. The resulting archives preserve the data so that, when replayed, each part of the website appears as the Browsertrix crawler witnessed it. Replay also includes authenticity information that the end user can inspect.
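The spreadsheet-driven step can be sketched in Python as follows. This minimal version turns a CSV export of the spreadsheet into one crawl job per URL and skips incomplete rows. The column names (`url`, `archive_name`, `jurisdiction`, `geo_id`) and the `CrawlJob`/`load_jobs` names are hypothetical. The actual call to the Browsertrix API is deliberately omitted, because this case study does not describe the endpoint or payload used by Starling Lab's instance.

```python
import csv
import io
from dataclasses import dataclass


@dataclass
class CrawlJob:
    """One crawl request plus the metadata that travels with the resulting WACZ."""
    url: str
    archive_name: str
    jurisdiction: str
    geo_id: str


# Hypothetical column names; the real spreadsheet's headers are not documented here.
REQUIRED = ("url", "archive_name", "jurisdiction", "geo_id")


def load_jobs(csv_text: str) -> list[CrawlJob]:
    """Parse the spreadsheet export, keeping only rows where every required field is filled."""
    jobs = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        values = {key: (row.get(key) or "").strip() for key in REQUIRED}
        if all(values.values()):
            jobs.append(CrawlJob(**values))
    return jobs
```

Each `CrawlJob` would then be submitted to the crawler. The `geo_id` is kept with the job so that the resulting archive can later be matched to a region on the map.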

Signing and Verification of Web Archives

When a WACZ record of a website was created, a digital ‘fingerprint’ called a hash was also generated. If any single byte of the data changes, be it a pixel of an image or the timestamp of when it was collected, the hash computed over that data changes as well. It is important for a newsroom like BVN to establish this fingerprint in case the provenance and integrity of a web archive are called into question. If that happens, the newsroom has a reliable record of the hash as it was originally recorded.
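The fingerprint property described above is easy to demonstrate. The sketch below uses SHA-256, a common choice; the case study does not name the hash algorithm, so treat it as an assumption. It flips a single bit in one byte of some sample page bytes and compares the two digests.

```python
import hashlib


def fingerprint(data: bytes) -> str:
    """Return the SHA-256 hex digest (SHA-256 is an assumed choice of algorithm)."""
    return hashlib.sha256(data).hexdigest()


original = b"<html><body>Resolution declaring racism a public health crisis</body></html>"
# Flip the lowest bit of a single byte to simulate a one-byte edit.
tampered = bytearray(original)
tampered[10] ^= 0x01

print(fingerprint(original))
print(fingerprint(bytes(tampered)))
print(fingerprint(original) == fingerprint(bytes(tampered)))  # False
```

The two 64-character digests share no visible pattern, which is why a recorded hash is a practical way to detect any alteration.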

The hash representing the crawled data in the WACZ file was also cryptographically signed using one of Starling Lab’s Let’s Encrypt certificates, because the Lab operates the Browsertrix server. This signature is an attestation to the web content as it was observed and when, and it ties the capture to a known authority through that authority's domain name. This cryptographic provenance is packaged into the web archive file, as described in the WACZ specification and the WACZ Signing and Verification specification.

For manual crawls made with ArchiveWeb.page, the WACZ files were signed by a keypair generated locally by the Chrome extension. The extension signs the crawled content with its private key, and the matching public key identifies the ArchiveWeb.page user who made the capture.

For each crawled URL, the hashed, signed, and timestamped provenance bundle was packaged alongside the crawled web content into a ZIP file with a .wacz extension. The resulting file can be verified with viewers developed by Webrecorder.

Timestamping and Registering Web Archives

Once an authenticated web archive is produced, it is uploaded to the Starling Integrity pipeline.

For newsrooms like BVN, the Starling Integrity pipeline streamlines the complex steps of ingesting, hashing, signing, timestamping, and registering digital media to establish records of provenance. Without the pipeline, all 350 records would have had to be processed manually with multiple tools. The following diagram illustrates the registration process.

As part of the Starling Integrity workflow, the hash of each WACZ file was added to OpenTimestamps servers for a proof of existence at the time of registration.
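OpenTimestamps calendar servers aggregate many submitted hashes into a Merkle tree and anchor only the tree's root in a Bitcoin transaction. Each submitter keeps a short proof path from its own hash up to that root. The Python sketch below illustrates that general idea with SHA-256 and a simple rule that pairs an odd node with itself. It is not the OpenTimestamps proof format or client library, and the function names are hypothetical.

```python
import hashlib


def h(data: bytes) -> bytes:
    """SHA-256 digest used for both leaves and interior nodes."""
    return hashlib.sha256(data).digest()


def merkle_root_and_proofs(leaves: list[bytes]) -> tuple[bytes, list[list[tuple[str, bytes]]]]:
    """Aggregate leaf digests into one root and return an inclusion proof for each leaf.

    A proof is a list of (side, sibling) steps; side tells the verifier whether the sibling
    goes on the left or right when hashing upward. An odd node at any level is paired with itself.
    """
    if not leaves:
        raise ValueError("need at least one leaf")
    proofs: list[list[tuple[str, bytes]]] = [[] for _ in leaves]
    positions = list(range(len(leaves)))  # where each leaf's ancestor sits on the current level
    level = list(leaves)
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])
        for i, pos in enumerate(positions):
            sibling = pos ^ 1
            proofs[i].append(("left" if sibling < pos else "right", level[sibling]))
        positions = [pos // 2 for pos in positions]
        level = [h(level[j] + level[j + 1]) for j in range(0, len(level), 2)]
    return level[0], proofs


def verify_inclusion(leaf: bytes, proof: list[tuple[str, bytes]], root: bytes) -> bool:
    """Recompute the path from a leaf to the root and compare."""
    node = leaf
    for side, sibling in proof:
        node = h(sibling + node) if side == "left" else h(node + sibling)
    return node == root
```

Only the single `root` needs to be committed to the blockchain. Each WACZ hash then proves its inclusion with a proof whose length grows only logarithmically with the number of submissions.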

File hashes and metadata, including tool and process information specified in the crawl spreadsheet, were then registered on distributed ledgers to establish a public record of each web archive's creation. This provenance information was stored and distributed with IPFS, a peer-to-peer data sharing system. The WACZ files themselves were stored separately, so users who obtain the web archives can verify their integrity against the blockchain registrations.

The provenance data were registered on three different blockchains: Numbers, Avalanche, and LikeCoin. These registrations form a public, immutable index that anyone using the Combating Racism data dashboard can inspect and verify against. This is useful if the authenticity of the web content is called into question, or if another newsroom needs a reliable source for a story about the data in this project.

Store

Preserving Web Archives with Distributed Storage

While the Starling Integrity pipeline locks the authenticity information of the web archives into immutable public ledgers, the next step was to ensure that the WACZ files themselves are properly preserved. Redundant storage is crucial to keeping at-risk content from disappearing from the web, so Starling chose to preserve its archives on decentralized storage networks in addition to centralized systems.

The WACZ files are identified by content identifiers (CIDs), which are derived from the exact data of each file. The files are pinned on IPFS, a peer-to-peer data sharing system, using Web3.Storage. This allows the files to be downloaded from different servers. However, IPFS on its own is not a long-term preservation system, because the servers make no promise to host the files for any particular length of time.
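For a file small enough to be stored as a single raw block, a CIDv1 is simply a version byte, a codec code (raw, `0x55`), and a SHA-256 multihash, encoded in lowercase base32 with the multibase prefix `b`. The sketch below computes that form with the standard library. It is an illustration of how a CID is derived from the data, not the procedure Web3.Storage uses. Larger files, including most WACZ archives, are typically chunked into a UnixFS DAG on upload, so their CIDs are computed differently and will not match this function's output.

```python
import base64
import hashlib

CID_VERSION_1 = 0x01
RAW_CODEC = 0x55        # multicodec code for raw bytes
SHA2_256 = 0x12         # multihash code for SHA-256
SHA2_256_LENGTH = 0x20  # 32-byte digest


def raw_cid_v1(data: bytes) -> str:
    """CIDv1 for a single raw block (an illustration; chunked uploads produce different CIDs)."""
    digest = hashlib.sha256(data).digest()
    multihash = bytes([SHA2_256, SHA2_256_LENGTH]) + digest
    cid_bytes = bytes([CID_VERSION_1, RAW_CODEC]) + multihash
    # Multibase "b" = lowercase RFC 4648 base32 without padding.
    return "b" + base64.b32encode(cid_bytes).decode("ascii").lower().rstrip("=")
```

Because the identifier is computed from the bytes themselves, any change to the file produces a different CID. This is the property the IPFS CID field relies on.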

Decentralized preservation of the files relies on the Filecoin network. In addition to pinning files on IPFS, Web3.Storage stores uploaded data on Filecoin nodes operated by several storage providers, who make collateral-backed commitments to preserve the data over a specific time period.

Regardless of where one retrieves the web archives, whether that be IPFS, Filecoin, a centralized host or other decentralized networks, the authenticity of the files can be verified against blockchain registration records and/or WACZ-bundled signatures such that each crawl can be attributed to its archiver.

Verify

Screen captures are simply pixelated recreations of what an observer saw on a website, and they contain no audit trail that provides a record of what the observer captured. Embeds from social media posts can also be taken down or changed at any time, as the actual content is still hosted by the website or company that originally published it. This puts embedded versions of web content at risk of disappearing, erasing the sources of what a journalist is trying to reference in their reporting.

Self-contained WACZ files containing provenance information can be preserved and distributed without the constraints and risks of embedding third-party-hosted web content. This case study showcases the embedding of self-hosted (and redundantly preserved) WACZ files alongside additional authenticity information, which enables readers to reliably inspect the rich context captured in these web archives, as they were seen by the observers who digitally signed their crawls.

In the following sections, we discuss the general design of the displays created to show web archives and related metadata. We then cover the implementation details for the two places where these designs were used: a data dashboard, and news articles on a separate website.

Display Design for Web Archives and Metadata

For this project, web archives needed to be presented on two different sites, each hosted on a separate WordPress instance: the Mapping Black California interactive data dashboards and the Black Voice News website. The data dashboard is a microsite built by the Esri team, and the news website is managed by Newspack.

The body of evidence shown in the Authenticated WACZ Display on the Black Voice News website, and in the displays on the Combating Racism as a Public Health Crisis data dashboard, came from the Black Voice News team. The team tirelessly researched and collected content from across the web. That content was then archived and integrated into a custom web component we called the wacz-lightbox.

The Starling Lab team, alongside developer Giacomo Boscani-Gilroy and Esri’s Joe Allen, prototyped a new kind of visual display for both web archives and their accompanying authenticity information. Two versions of the component were implemented. One version of this display was integrated into the data dashboard’s WordPress source code as a web component. The second version, used on the Newspack-managed news website, incorporated the web component as a WordPress plugin.

This component displays the explorable WACZ file and also incorporates the metadata files related to each web archive. Each web archive has two associated metadata files. The first contains file metadata, such as a description with the crawled URL, the crawler identity, and the date and time the site was crawled.

The second metadata file contained information about the publicly registered authenticity records of the web archive, such as the hashes of the WACZ file and the blockchain registration information.
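To show how the two metadata files feed the display, the Python sketch below merges them into one record holding the values shown on the "Archive" and "Registration" tabs. It is a minimal sketch of the data shaping only, not the wacz-lightbox component itself, which runs in the browser. Every JSON key it reads (`name`, `url`, `crawled_at`, `observer`, `sha256`, `registrations`, `ipfs_cid`, `filecoin_piece_cid`) is a hypothetical placeholder, since the real schemas are not published here.

```python
import json
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ArchiveCard:
    """Values a display could show on the "Archive" and "Registration" tabs."""
    archive_name: str
    original_url: str
    archived_on: str
    observed_by: str
    package_hash: str
    registrations: dict[str, str] = field(default_factory=dict)  # chain name -> transaction ID
    ipfs_cid: Optional[str] = None
    filecoin_piece_cid: Optional[str] = None


def build_card(file_meta_json: str, auth_meta_json: str) -> ArchiveCard:
    """Combine the file-metadata JSON and the authenticity-record JSON for one WACZ.

    Every key read below is a hypothetical placeholder, not the project's real schema.
    """
    meta = json.loads(file_meta_json)
    auth = json.loads(auth_meta_json)
    return ArchiveCard(
        archive_name=meta["name"],
        original_url=meta["url"],
        archived_on=meta["crawled_at"],
        observed_by=meta["observer"],
        package_hash=auth["sha256"],
        registrations=dict(auth.get("registrations", {})),
        ipfs_cid=auth.get("ipfs_cid"),
        filecoin_piece_cid=auth.get("filecoin_piece_cid"),
    )
```

Required fields raise a `KeyError` when missing, while optional storage identifiers default to `None`. This mirrors a display that can omit a Filecoin link but not the archive's name or hash.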

Displaying Web Archives in a Data Dashboard

Using the design for the display, Joe Allen from Esri developed a customization in the Combating Racism as a Public Health Crisis data dashboard WordPress instance that enabled us to add the web component created by Giacomo Boscani-Gilroy.

This display enabled us to upload a WACZ file with two JSON metadata files as media, create “Jurisdictions” posts which correlated to areas of the California map, and filter the data and web archives shown in this interactive dashboard. This was accomplished by modifying the PHP files of the WordPress instance to embed the web component used for displaying explorable web archives.

Once web archives are added, the reader can use the data dashboard to filter down to specific jurisdictions to reveal the relevant archives. Clicking on a web archive card will reveal an explorable interface for archived content and the WACZ file’s metadata, giving the reader all the necessary information to verify the preserved records.

Displaying Web Archives in a Managed WordPress News Site

The data dashboard built by Esri for this project was referenced in a five-part series of articles titled Combating Racism as a Public Health Crisis, published in November 2023. The team implemented a display for the web archives on the Black Voice News WordPress website, which is managed by Newspack. Unlike the data dashboard, Newspack’s managed platform did not allow direct editing of the source code. Starling Lab therefore developed a WordPress plugin called starling-replay-web-page, which Newspack could add to its managed sites.

The plugin enables a shortcode containing a media ID to embed an explorable web archive in a news article.

On the Black Voice News website, the web archive and its associated metadata are then rendered for readers to explore and verify.


Learnings

Data Preparation and Publishing Workflow

To capture and archive web content, we used the Starling Integrity Backend along with a custom instance of the Browsertrix crawler. A Python script ingested a spreadsheet of URLs and relevant metadata, the crawler produced WACZ web archives, and the pipeline then processed them. This process also generated two JSON metadata files, shown in the Archive and Registration tabs of the web component. These files had to be manually associated with each WACZ file when publishing to WordPress.
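Part of that manual association could be scripted if the pipeline's outputs shared a common file stem. The Python sketch below groups output files into complete bundles and reports WACZ files that are still missing a partner. It assumes a hypothetical naming scheme (`<stem>.wacz`, `<stem>.meta.json`, `<stem>.registration.json`). The case study does not say how the real files are named, so `pair_outputs` and the suffixes are illustrative only.

```python
from pathlib import PurePath

# Hypothetical naming scheme for the three files produced per crawl.
SUFFIXES = {".wacz": "wacz", ".meta.json": "meta", ".registration.json": "registration"}


def pair_outputs(filenames: list[str]) -> tuple[dict[str, dict[str, str]], list[str]]:
    """Group files by shared stem; return (complete bundles, stems missing a file)."""
    bundles: dict[str, dict[str, str]] = {}
    for name in filenames:
        base = PurePath(name).name
        for suffix, role in SUFFIXES.items():
            if base.endswith(suffix):
                stem = base[: -len(suffix)]
                bundles.setdefault(stem, {})[role] = name
                break
    complete = {stem: parts for stem, parts in bundles.items() if len(parts) == len(SUFFIXES)}
    incomplete = sorted(stem for stem, parts in bundles.items() if len(parts) < len(SUFFIXES))
    return complete, incomplete
```

Reporting incomplete bundles before upload catches the most likely publishing error: a WACZ embedded without its registration record.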

The Starling Integrity Backend was designed to archive the files sent to it, but it lacks sophisticated features for supporting publishing workflows. For example, retrieving the WACZ file produced by crawling a particular URL, or querying for every WACZ file with a particular attribute, would be very helpful to analysts and publishers. These are not simple tasks to perform on archival data, however, and finding the right files often required help from the engineering team.

In this case study, the team learned some of the feature requirements for a backend and processing workflow that can handle structuring, processing, and querying data. For future projects, a new database is being developed to hold content and archival metadata. Users will be able to query both metadata auto-generated in the archival process (e.g. blockchain registration transaction IDs) and human-entered metadata fields (e.g. collection name), while maintaining metadata integrity. Unlike in traditional databases, these data are cryptographically signed and timestamped, and additions and changes are tracked. The database will be paired with a CLI tool that supports processing data and updating the metadata associated with a content identifier. Together, they will enable the Lab to create more streamlined and cohesive media collections and archives. The data can also be exported for integration with standard publishing workflows.
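The Lab's database design is not described beyond the paragraph above. The Python sketch below illustrates only one idea it mentions, tracking additions and changes so that tampering is detectable. Each metadata change is appended as an entry that embeds the hash of the previous entry, so rewriting history breaks the chain. Digital signatures and trusted timestamps are left out to keep the example to the standard library, and the timestamp here is self-reported. `MetadataLog` and its methods are hypothetical names.

```python
import hashlib
import json
from datetime import datetime, timezone


def _entry_hash(entry: dict) -> str:
    # Canonical JSON (sorted keys, compact separators) so equal entries always hash the same.
    payload = json.dumps(entry, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class MetadataLog:
    """Hypothetical append-only log of metadata changes, linked by hashes (no signatures)."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, cid: str, field: str, value: str) -> dict:
        entry = {
            "cid": cid,
            "field": field,
            "value": value,
            "timestamp": datetime.now(timezone.utc).isoformat(),  # self-reported, not trusted
            "prev": self.entries[-1]["hash"] if self.entries else None,
        }
        entry["hash"] = _entry_hash(entry)
        self.entries.append(entry)
        return entry

    def current(self, cid: str) -> dict[str, str]:
        """Latest value of each field for one content identifier."""
        state: dict[str, str] = {}
        for e in self.entries:
            if e["cid"] == cid:
                state[e["field"]] = e["value"]
        return state

    def verify(self) -> bool:
        """Recompute every hash and check that each entry points at its predecessor."""
        prev = None
        for e in self.entries:
            body = {k: v for k, v in e.items() if k != "hash"}
            if e["prev"] != prev or _entry_hash(body) != e["hash"]:
                return False
            prev = e["hash"]
        return True
```

Anchoring the hash of the latest entry on a public ledger would extend the same tamper evidence to the whole history.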

Displaying Web Archives for Verification

Because this display is designed to show archives captured in a desktop web browser, it is not very mobile-friendly on the BVN news site. The smaller the screen, the more difficult the embed is to view. It displays a full-size webpage that doesn’t scale down to smaller screens, and the info tooltips describing the metadata and provenance information are also hard to see.

Creating a display that allows experts and readers to inspect and verify content, without overwhelming them with too much information or a confusing interface, continues to be a challenge both for the Lab, and other organizations that are creating authenticated, verifiable content.

Embedded ads and subscription pop-ups on news sites add to the number of elements on a page, which makes it harder to see and assess a web archive. Displaying all of the information made the page feel crowded and overwhelmed readers. An improved design that sets the embed apart on the page and is more mobile-responsive would be a welcome addition to future versions.

The version of the web archive embedded on the Combating Racism data dashboard, however, didn’t have to compete for space with news article text and has a much cleaner display.

WordPress Plugin Development

Initially, the team developed a web component and planned on integrating it directly into the PHP source code for the WordPress websites. However, these sites are managed by Newspack, which requested that we instead bundle the web component as a WordPress plugin. As Starling Lab had previously created WordPress plugins, it was relatively easy to assist Giacomo in implementing the custom web component for displaying WACZ files as a new plugin.

Working with platforms managed by service providers, however, is common for many newsrooms. When creating new features, it is important to understand from the start what restrictions and requirements may come from third parties involved in the development, hosting, and technical maintenance of news sites.


Archive

Original, archived interactive map display: https://mappingblackca.com/project/rcphc/

New updated dashboard: https://combatingracism.com/

Links to News Articles

- Landing Page: Combating Racism as a Public Health Crisis

- Combating Racism as a Public Health Crisis, Part 1: Holding Leaders Accountable

- Combating Racism as a Public Health Crisis, Part 2: Santa Cruz County’s Inclusive Resolution

- Combating Racism as a Public Health Crisis, Part 3: Oakland Addresses Systemic Racism with Data-driven Approach

- Combating Racism as a Public Health Crisis, Part 4: Riverside and San Bernardino Counties Take Action Against Racism as a Public Health Crisis

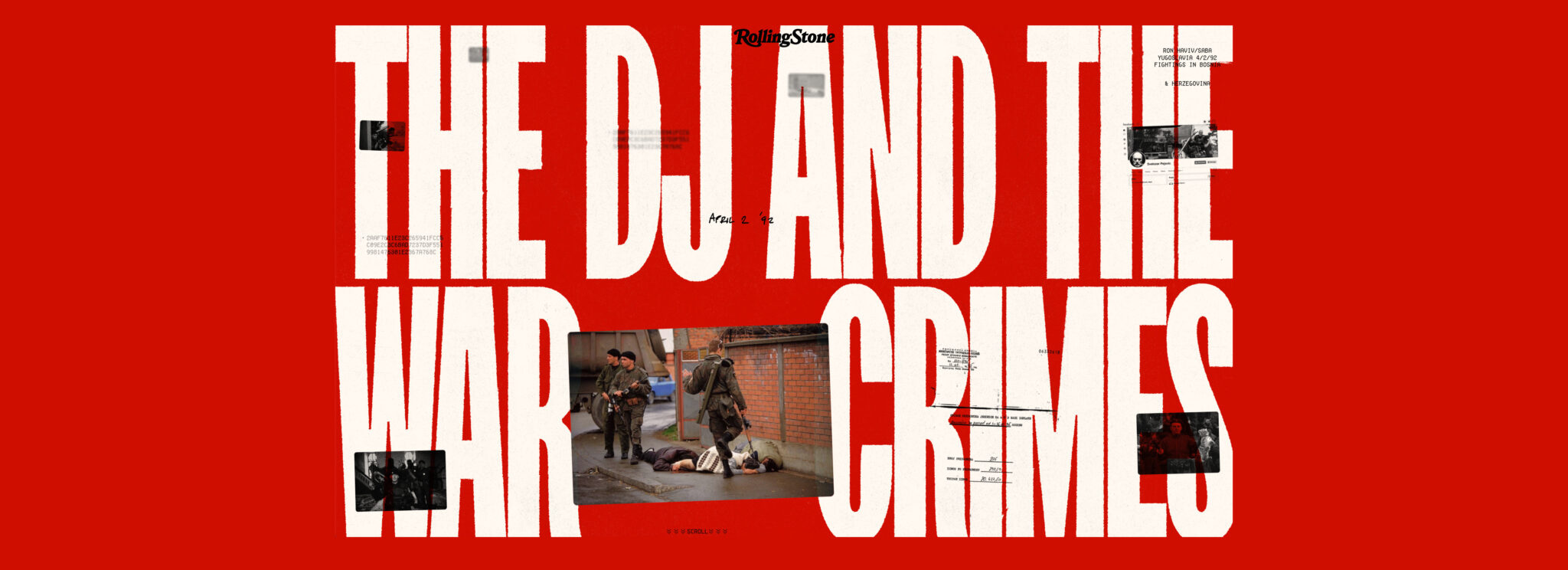

Creating the First Cryptographic Archive for a War Crimes Investigation

The Advanced Tech Breaking Open A War Crimes Investigation

A first-of-its-kind cryptographic archive published by Rolling Stone helped reopen a 30-year-old cold case. Released at the dawn of consumer-available generative AI, it illuminates new paths to overcome a range of modern challenges including denialism, deepfakes, and link rot.

Adam Rose · Reading Time: 10 min


Context

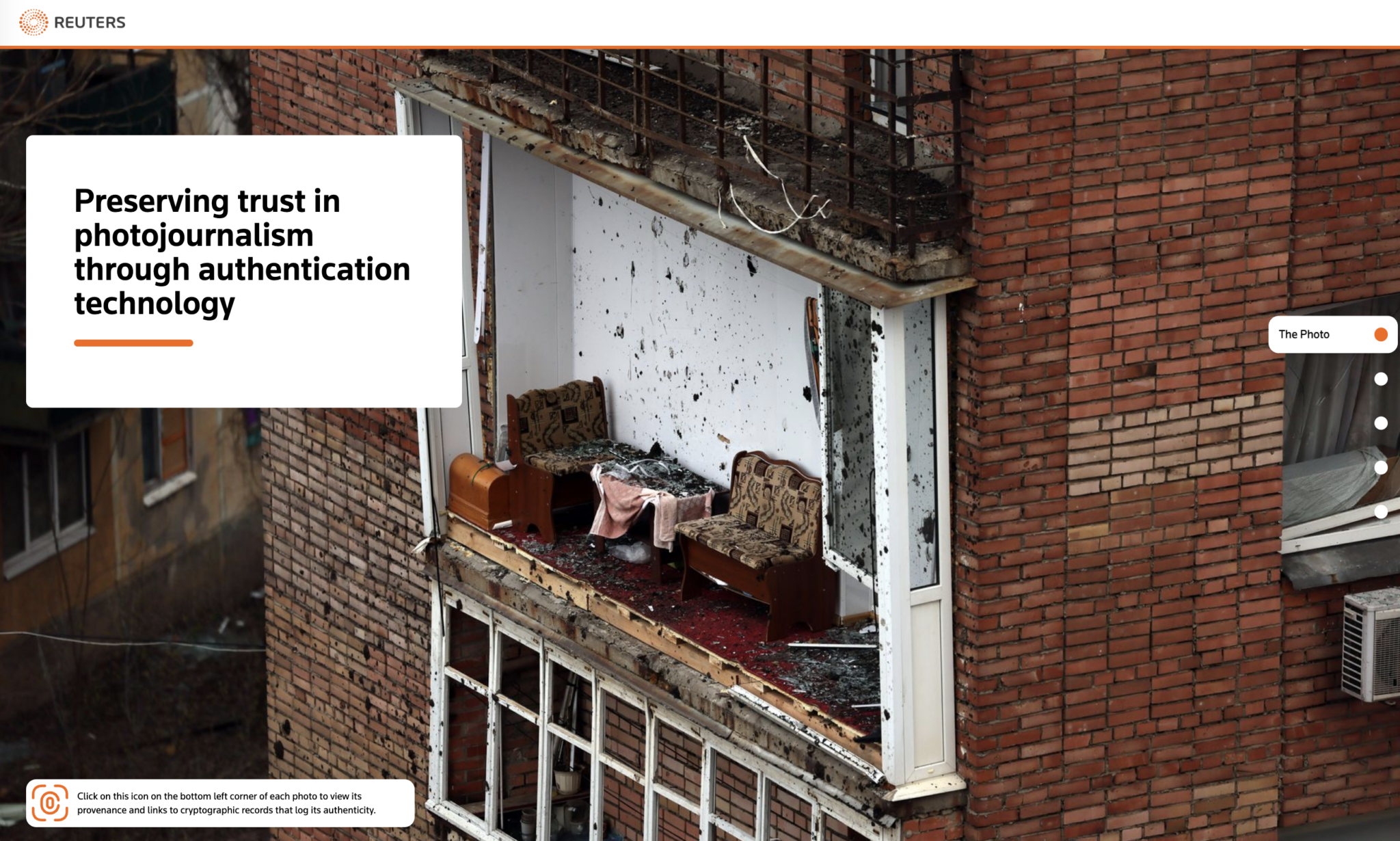

How can you trust that a news photograph is real?

We looked into the future of investigative journalism — by looking into the past.

In 1992, as civilians were killed in the streets, Ron Haviv captured the defining image of the Balkan War. But for some, seeing was not believing.

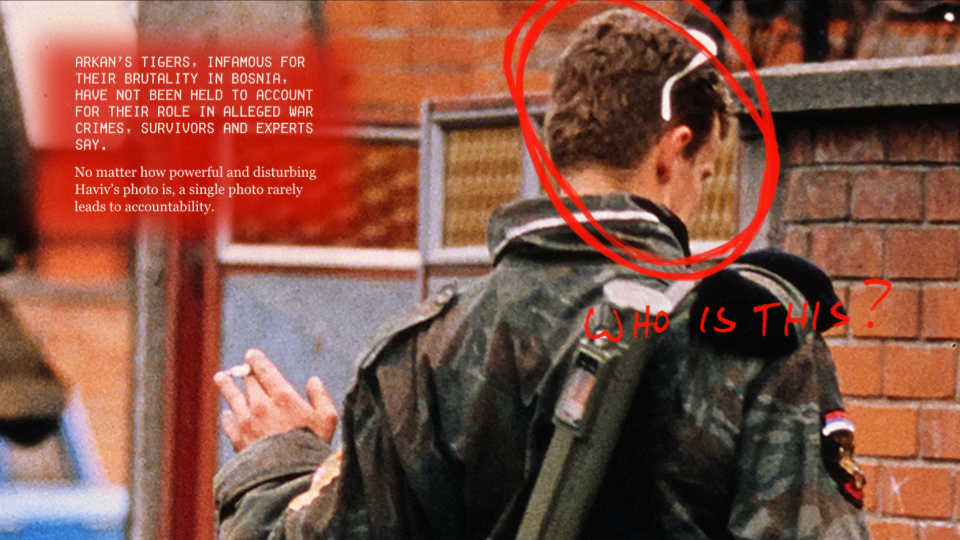

It had been three decades without justice or accountability for the soldier in this frame. He appears callous, flicking a cigarette as his boot – suspended in time – hangs over innocent civilians who were later found dead.

(This case study crops out the more graphic portions of the images.)

The photo has long been subject to denialism. It went “viral” – or what would be the equivalent in 1992 – after it first ran in Time Magazine. The image was soon used in a TV news report to confront the general who oversaw the soldier’s paramilitary unit. He denied that it showed what it clearly did.

More recently, when Russia first invaded Crimea in 2014, the image reemerged online paired with false claims that it depicted a Ukrainian soldier committing war crimes against civilians. This deception through false captions represents a more common twist on the deepfake – the “cheapfake.”

“People have said it's fake. People have said I set it up. People have said these people aren't dying. Captions have been changed. So there must be a way to authenticate what photographers are seeing.” – Ron Haviv

In recent years, society has been introduced to groundbreaking new technologies. Some, like generative AI, have made it even harder to trust our own eyes.

But other technologies can help us restore that trust.

In The DJ and the War Crimes, Rolling Stone published a first-of-its-kind cryptographic archive. It authenticated Haviv’s photos, along with hundreds of other records from the conflict. Classic investigative and documentary journalism was paired with an immersive microsite.

We invited audiences to become the investigator.


Scope

Starling Lab’s collaboration with Rolling Stone exemplifies our work. Along with the world-class investigation, we teamed up to publish a unique front-end journalism archive that lets readers explore evidence in a way never available to audiences before. Meanwhile, the equally innovative back-end ensured that this evidence would be resilient against efforts to undermine its credibility or availability.

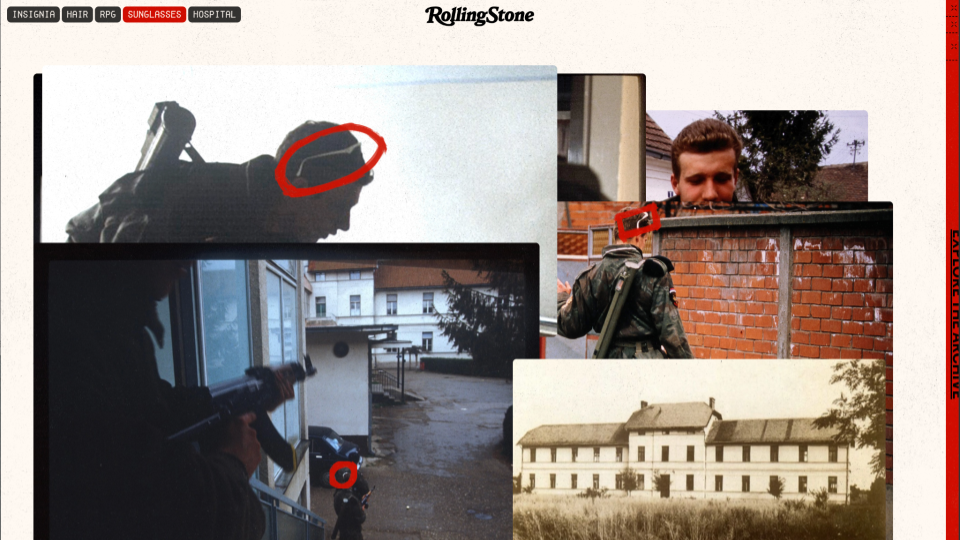

This particular story began with the iconic photo taken by Haviv in Bijeljina, Bosnia. At its core, the journalistic piece is a classic whodunit. The reporting team, led by Sophia Jones, set out to answer a lingering question: “Who is the soldier in this iconic photo depicting an alleged war crime?”

The result included all the classic elements of investigative journalism. Over 11 months, reporters collected a staggering amount of evidence from firsthand witnesses, archives, and on-the-ground reporting.

The scale of the investigation was matched by the team involved. Jones led fellow reporters Nidžara Ahmetašević and Milivoje Pantović, as well as other local freelancers and photographers who opted to remain anonymous for their safety. At least two dozen individuals played a role, including editorial staff at Rolling Stone, contributors and engineers from Starling Lab, and designers from Gladeye.

Haviv always hoped that his photojournalism would lead to accountability. Not only did the investigation focus on his famous image, it included several others from his rolls of film that day which had never been published before.

The group wanted to ensure that ephemeral media – whether aging film slides or social media posts – could be preserved and made available to others along with all the evidence in the investigation. Our accounting identified nearly 2,000 documents (PDFs), 40 key images, and 183 web archives. Web archives are more than screenshots. They are a more robust way of capturing information on the internet, which is very much at risk of deletion or link rot.


Framework

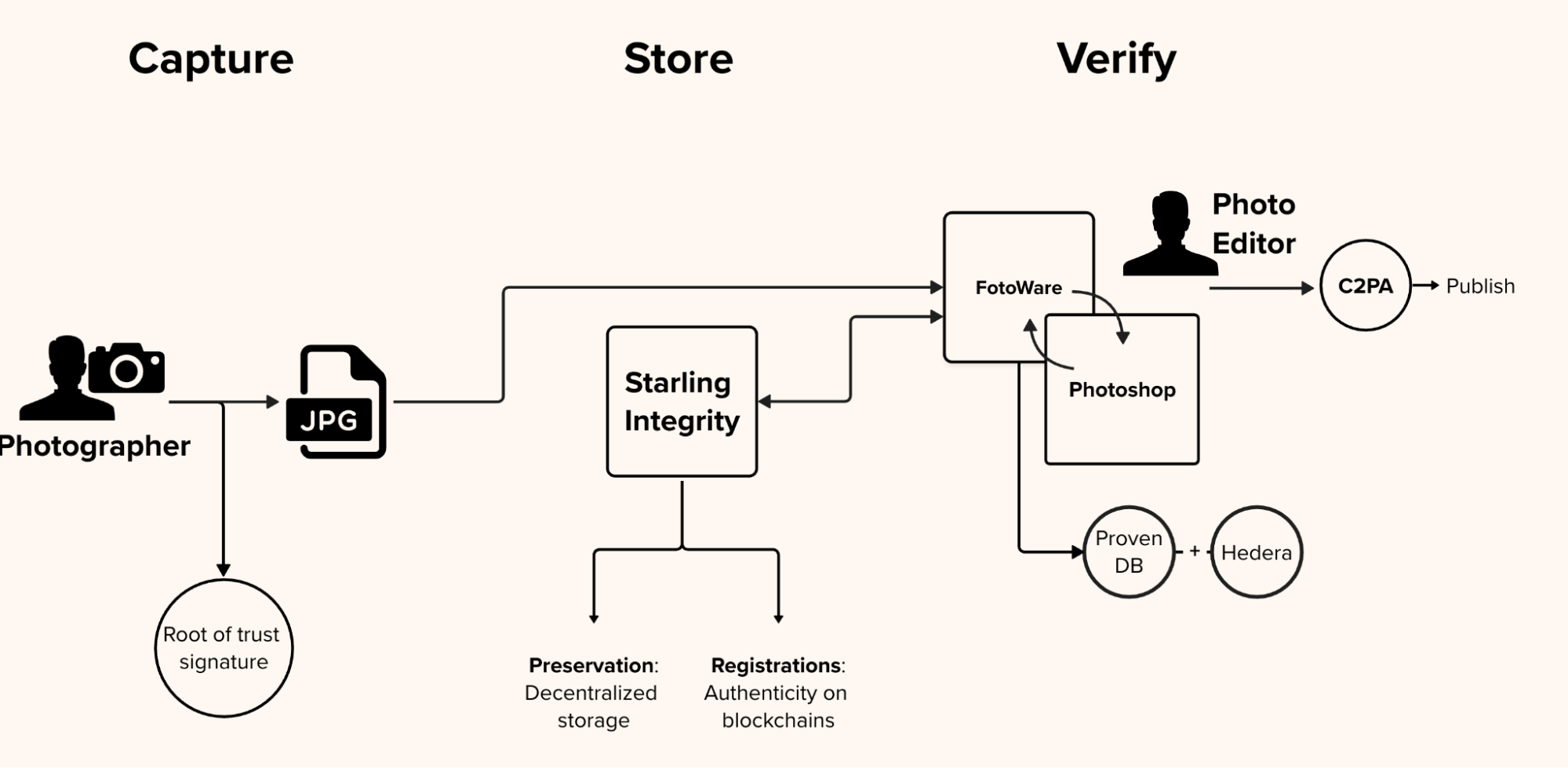

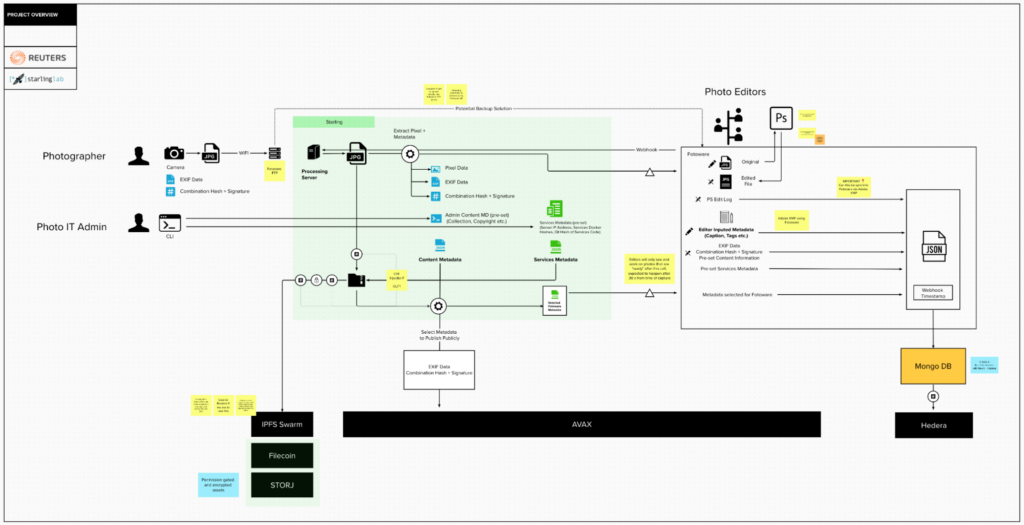

We think about three important stages of a piece of digital media's life cycle – Capture, Store, Verify – and address each through the Starling Framework.

- The Capture phase is based around a cryptographic root of trust. How do we embed verifiable metadata into documents?

- Next, how do we Store this material? Digitization is not the same as preservation.

- Finally, audiences must be able to Verify what they see.

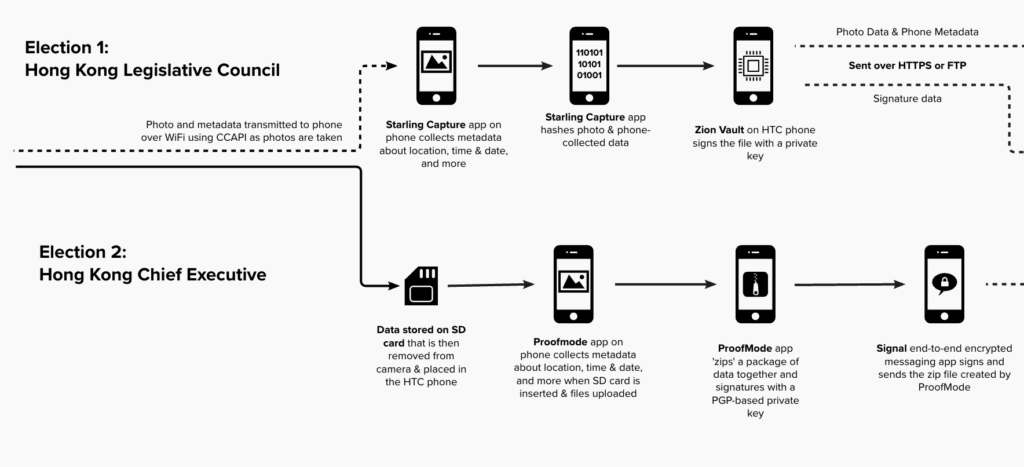

We asked: How might we use advanced cryptographic systems to verify and secure assets and their metadata (like the date and time)?

In the end, we applied over a dozen technologies to capture, store, and verify these digital assets. They included C2PA content credentials, a custom capture app paired with a Canon 1Dx Mark III, the ProofMode authenticated capture app, Webrecorder website archiving, PGP encryption, Authsign, and "ZK" proofs. We also used registration and preservation on blockchains and decentralized storage networks (OTS/Bitcoin, Avalanche, ISCN/Likecoin, IPFS, Filecoin, Storj).


Technology

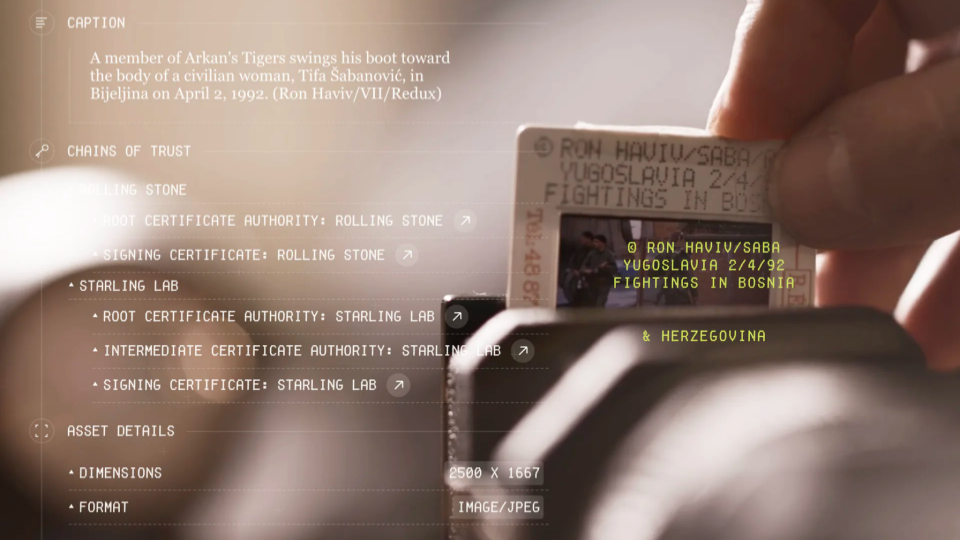

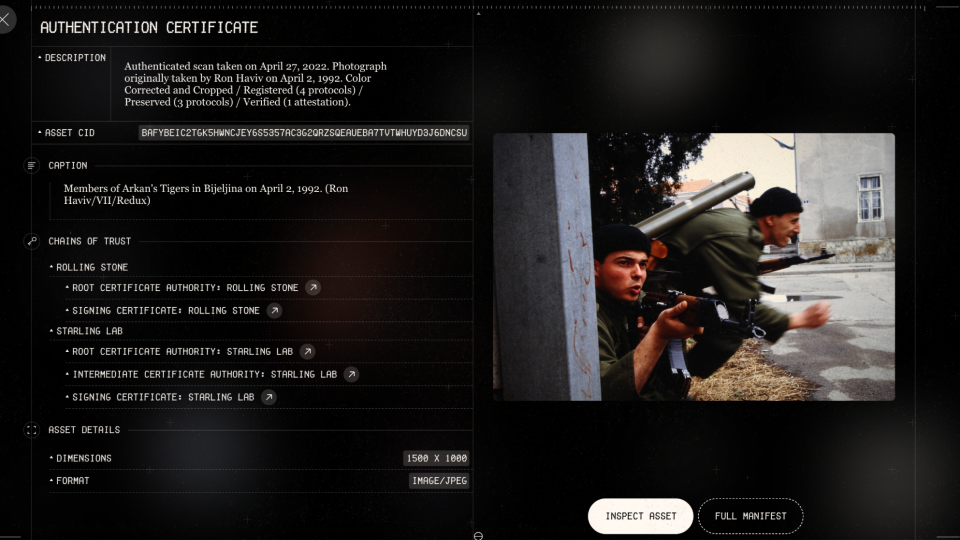

Capture – Film Slides

The physical slide seen below was developed from the actual film in the camera Haviv used in Bijeljina. That’s his hand holding the paper frame. To bring these images into the 21st century, we had to digitize several of them. Haviv is depicted inserting one into physical bellows, which hold it in place for a modern digital camera to capture in high fidelity.

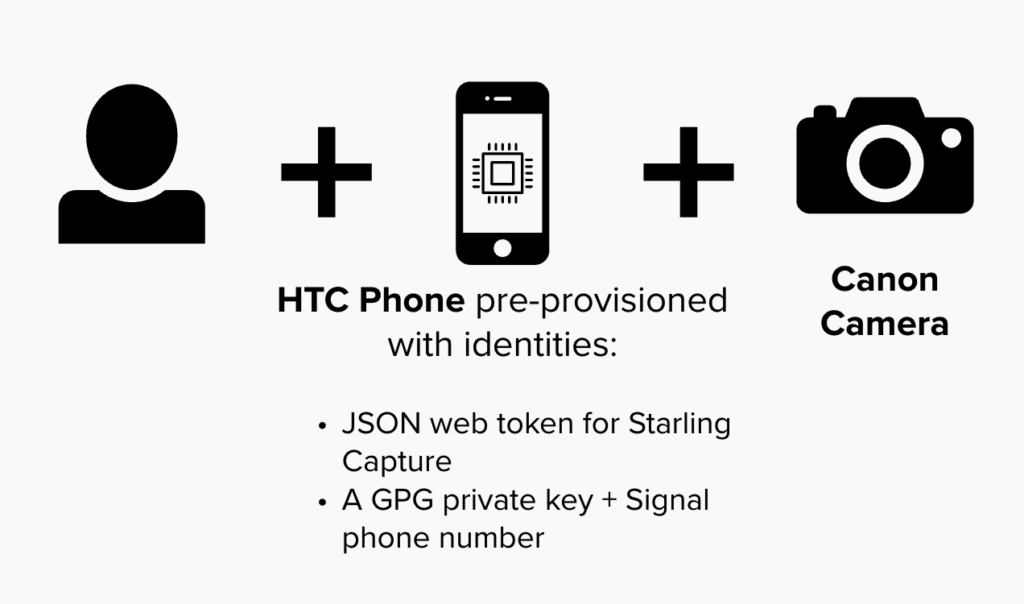

The camera – a Canon 1Dx Mark III – was equipped with special firmware provided by the manufacturer so that we could tether it to a mobile phone.

The phone – an HTC Exodus 1 – came from the manufacturer with a cryptographically secure chip. While the chip was popularized as a “wallet” by cryptocurrency fans, it served a more valuable purpose for our use case: authenticating the data from digitized photos.

A custom app on the phone, developed by Numbers, allowed us to take the digital equivalent of a fingerprint, add our own digital signature, and then record all of it in a virtual fingerprint registry.

In technical terms, we used a secure enclave, hashed and signed each file, and registered the results on a blockchain. This allowed us to establish a cryptographic “root of trust.”

Along the way we wanted to add additional authenticity markers. Fortunately, we had access to the one person who could make an attestation about which version was his own original film slide – not the ones that had been manipulated or lied about on the internet in recent decades.

Haviv’s personal testimony on video is more relatable to audiences than the 1s and 0s under the hood. Even our most modern tech-forward approach can’t exclude humans from the process. To the contrary, we sought ways to include them, their corroborations, and acknowledgements of their role.

https://www.youtube.com/watch?v=4UWieqM_s_Y

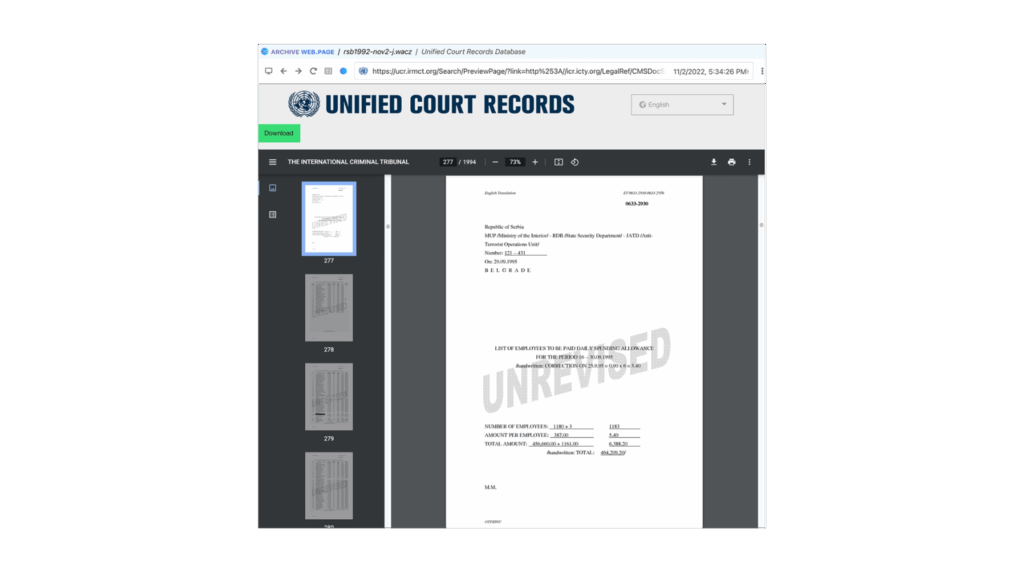

Capture – Payroll PDFs on UN Servers

Another crucial piece of evidence was a collection of payroll records from the paramilitary unit at the center of the alleged atrocity. These first surfaced in a war crimes tribunal, and copies were eventually stored on United Nations servers. Unfortunately, custodians told our reporters that these were “confidential” and declined to release them. Fortunately, those same reporters kept searching and found them sitting in the clear (i.e., unencrypted) on the UN’s public servers. This led to a pair of challenges that technology was able to overcome.

First, the team had to assume that, after publication, the record keepers might respond by taking the documents offline. Beyond mere link rot, the history of denialism around this unit raised legitimate concerns that someone might dismiss our version of the records as “fake.” With AI making it even easier to generate assets, our team was concerned about the general public being conditioned to doubt that anything is real. Scholars call this phenomenon the “liar’s dividend.”

To ensure proper preservation we used Webrecorder, a free and open source tool that works as an extension on any Chrome-based browser. No mere screenshot or “print” feature, it carefully captures all data and metadata during a browsing session. This means you can relive the browsing experience as it was when journalists visited the site – even offline years later. As part of the replay experience, links on a saved webpage can be clicked, and the linked pages can be read (assuming the initial investigator made sure to capture each page). Webrecorder backs this with cryptographically secure proof that the files were from specific servers, which we could use to demonstrate the materials indeed came from the UN’s tribunal archives.

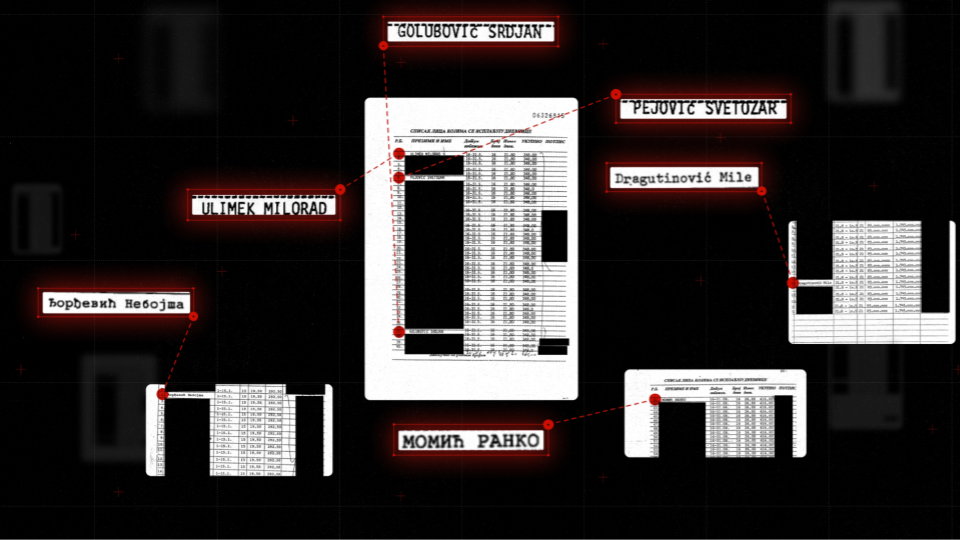

Verify – Redacting Names From Payroll Records

Second, as a matter of good journalistic practice, it was important to redact names from the payroll records if someone wasn’t a subject of the investigation. If a person was a cook or truck driver and had no involvement with the atrocities, it wouldn’t be appropriate to put them through the same level of public scrutiny.

To redact the records, we turned to Trisha Datta, a PhD student at Stanford advised by Professor Dan Boneh, one of Starling’s principal investigators. She implemented a zero-knowledge proof, which uses cryptography to guarantee that the only changes made to the published PDFs were the addition of black boxes over specific pixels. This means that someone with sophisticated expertise (like an expert witness in court) can literally check our math. The check confirms that nothing else was manipulated between the moment the data left UN servers and the time the redacted records appear on your screen.
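The statement such a proof establishes can be written down concretely. The Python sketch below checks it in the clear: the redacted image must equal the original everywhere except inside the redaction boxes, and those boxes must be solid black. A zero-knowledge proof convinces a verifier of this same statement without revealing the original pixels. The sketch does not implement that proof system, and the image representation, box format, and `is_valid_redaction` name are assumptions made for illustration.

```python
Pixel = tuple[int, int, int]  # assumed RGB representation
BLACK: Pixel = (0, 0, 0)


def is_valid_redaction(original: list[list[Pixel]], redacted: list[list[Pixel]],
                       boxes: list[tuple[int, int, int, int]]) -> bool:
    """Check that `redacted` equals `original` except inside the boxes, which must be black.

    Boxes are (top, left, bottom, right) with exclusive bottom/right bounds. This checks the
    statement with the original in hand; a ZK proof would prove it without revealing the original.
    """
    if len(original) != len(redacted):
        return False
    for y, (row_o, row_r) in enumerate(zip(original, redacted)):
        if len(row_o) != len(row_r):
            return False
        for x, (po, pr) in enumerate(zip(row_o, row_r)):
            inside = any(top <= y < bottom and left <= x < right
                         for top, left, bottom, right in boxes)
            if pr != (BLACK if inside else po):
                return False
    return True
```

A redaction that blacks out extra pixels, leaves a name partly visible, or alters anything outside the declared boxes fails this check. A sound proof of the same statement would fail in the same cases.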

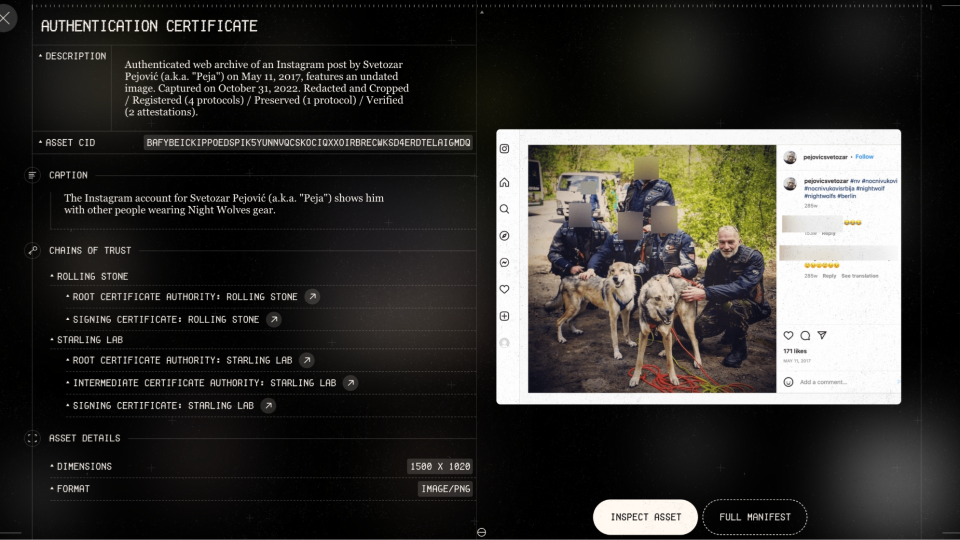

Capture - Social Media (OSINT)

Webrecorder was also a powerful tool to capture social media, like this Instagram post. The one person we didn’t blur out happens to be one of the individuals named on the payroll records. Jones did a masterful job tracking down and identifying the connections that different people from the unit still had in the present day.

Of course, social media can be ephemeral for many reasons. We’ve seen how mercurial CEOs can cause content to disappear. Users may set their accounts to private or delete individual posts. When the story went live, it was a real risk that accounts might go dark and make it challenging for prosecutors to reconstruct who was connected to whom.

The subject in the photo with wolves is one of several individuals who appeared to be leading a life of impunity. He was spotted in photos on various sites associating with the Nightwolves, a notorious Russian motorcycle gang which supported Russia’s initial invasion of Crimea.

Verify - User Experience For The Archive

The story’s archive lets audiences explore the evidence for themselves. All the documents are laid out so that anyone can draw their own connections between them: social media posts, news clippings, payroll records, photographs scanned from 30 years ago, and more recent images captured in the present day. Any of these assets might be vulnerable, but because they are all stored and secured using decentralized technologies (more on that later), we can ensure they survive and resist future denialism.

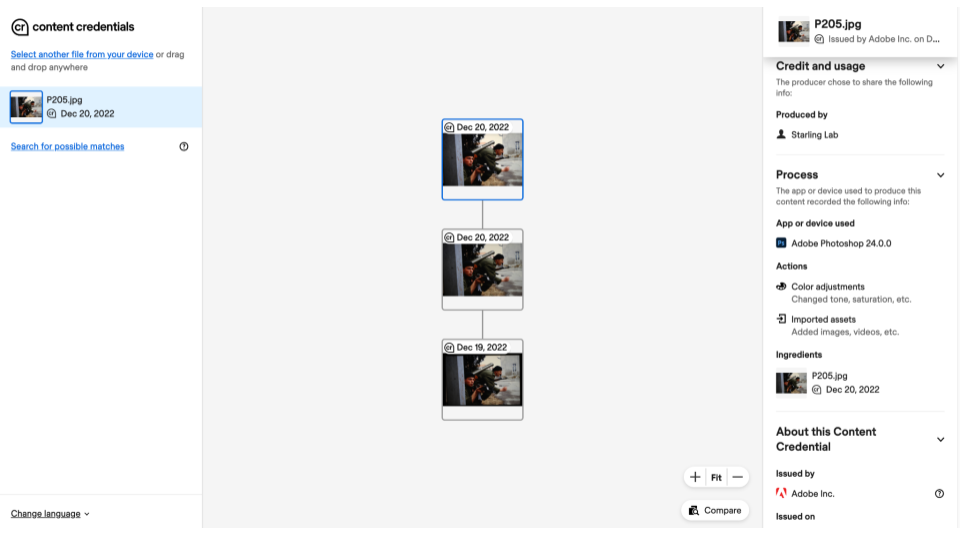

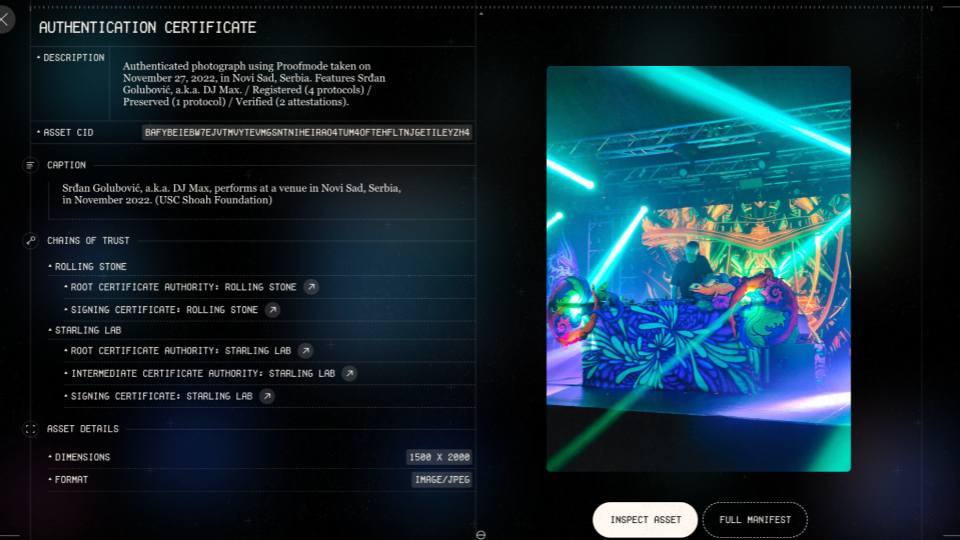

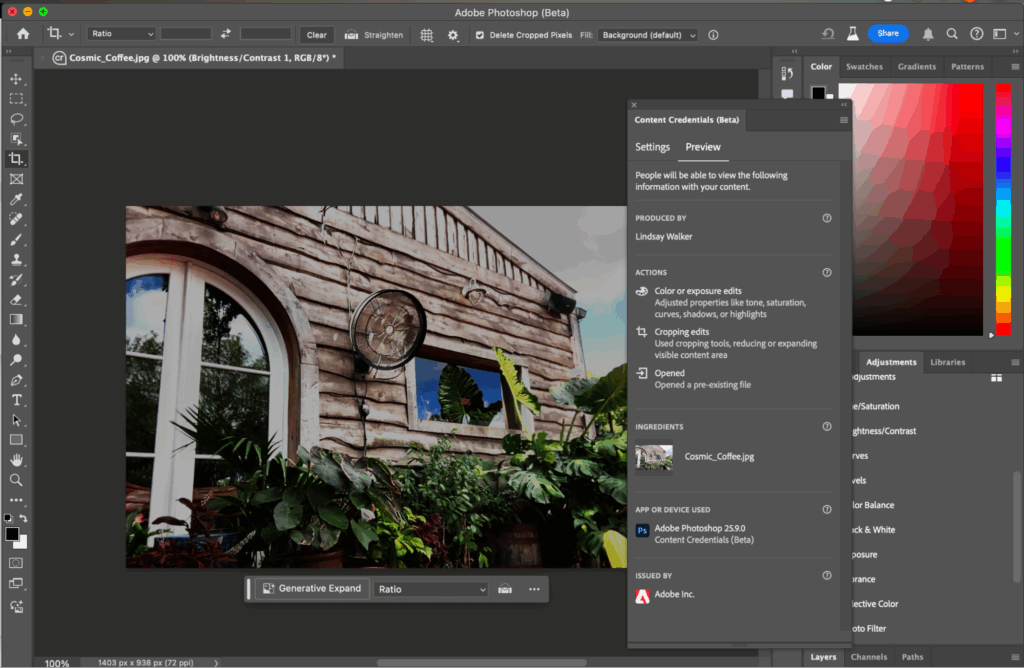

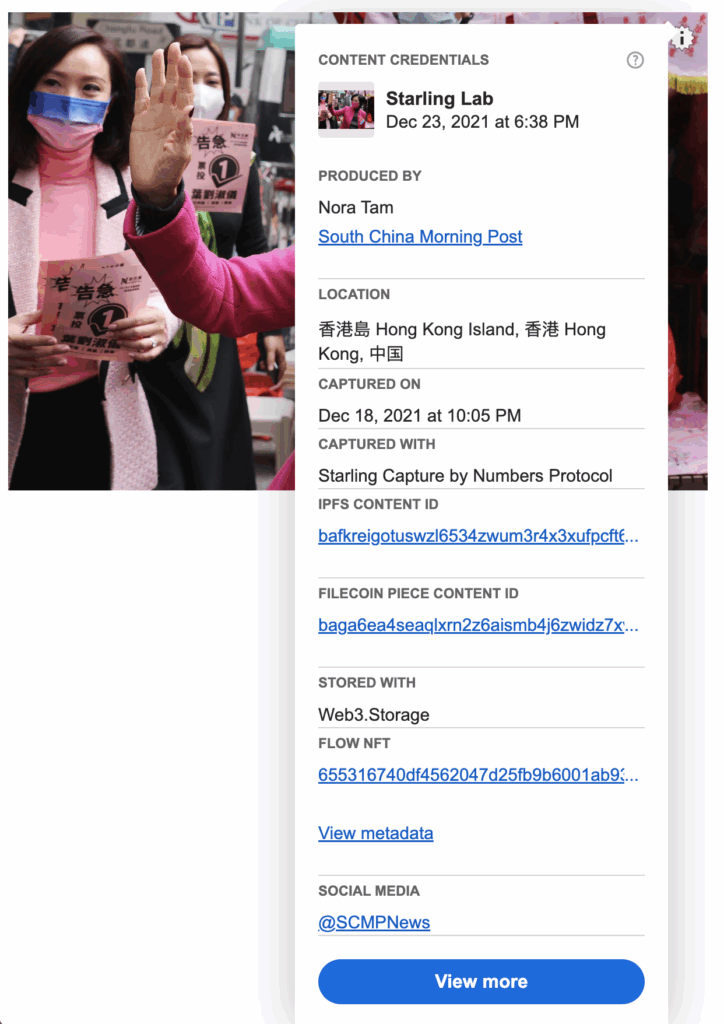

Each of those assets, whether seen in the narrative’s main story, featurettes, or full archive, has an “eye” icon in the lower-left corner that, when clicked, opens an authentication certificate. It’s similar to the Content Credentials being popularized by major industry leaders through the Coalition for Content Provenance and Authenticity (C2PA). This coalition includes Adobe, Google, Meta, Canon, Sony, BBC, and many more hardware manufacturers, software developers, and media companies.

C2PA is a technical standard that defines a “manifest” of authenticity metadata for a digital asset. We were also able to incorporate one into each item for this project.
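To make the idea of a manifest concrete, here is a minimal sketch, assuming a manifest is just descriptive metadata plus a SHA-256 digest of the asset’s bytes. The field names are hypothetical and far simpler than the real C2PA schema, which also embeds the manifest in the file and signs it with a certificate (omitted here).

```python
import hashlib
import json


def build_manifest(asset: bytes, title: str, actions: list[str]) -> dict:
    """Describe an asset and bind that description to its exact bytes.
    Field names are hypothetical, not the C2PA schema."""
    return {
        "title": title,
        "actions": actions,
        "asset_sha256": hashlib.sha256(asset).hexdigest(),
    }


def manifest_matches(asset: bytes, manifest: dict) -> bool:
    """True only if these are the bytes the manifest was built for."""
    return hashlib.sha256(asset).hexdigest() == manifest["asset_sha256"]


photo = b"...image bytes..."
manifest = build_manifest(photo, "Archive photo", ["cropped", "color adjusted"])
print(json.dumps(manifest, indent=2))
print(manifest_matches(photo, manifest))           # True
print(manifest_matches(photo + b"!", manifest))    # False
```

The digest is what ties the description to one specific file: change a single byte of the asset and the manifest no longer applies to it.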

In the authentication certificate you can see how we establish different chains of trust, inspect the asset, and examine additional details. Readers again see the Starling Framework – Capture, Store, Verify – as applied to an individual asset.

In the capture phase, we indicate where the original version was registered on a variety of blockchains – OpenTimestamps, Numbers Avalanche and ISCN. Each is linked so that you can confirm the underlying transaction with an on-chain explorer. (For the non-technical audience, this is simply where we put the fingerprints of each file into a registry – and importantly, the registry is cryptographically secure so that no one can edit it later.)
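The “fingerprint” is a cryptographic hash. The sketch below, assuming SHA-256 and a toy single-machine ledger (the `Registry` class is hypothetical and is not how OpenTimestamps, Avalanche, or ISCN work internally), shows why a hash-linked registry resists later editing: each entry commits to the one before it, so rewriting an old entry breaks every link after it.

```python
import hashlib
from dataclasses import dataclass


def fingerprint(data: bytes) -> str:
    """SHA-256 digest as 64 hex characters: the file's 'fingerprint'."""
    return hashlib.sha256(data).hexdigest()


@dataclass(frozen=True)
class Entry:
    file_hash: str    # fingerprint of the registered file
    prev_hash: str    # entry_hash of the previous entry (links the chain)
    entry_hash: str   # fingerprint over prev_hash + file_hash


GENESIS = "0" * 64


class Registry:
    """Toy append-only, hash-linked registry (hypothetical, single machine)."""

    def __init__(self) -> None:
        self.entries: list[Entry] = []

    def register(self, data: bytes) -> Entry:
        prev = self.entries[-1].entry_hash if self.entries else GENESIS
        file_hash = fingerprint(data)
        entry = Entry(file_hash, prev, fingerprint((prev + file_hash).encode()))
        self.entries.append(entry)
        return entry

    def contains(self, data: bytes) -> bool:
        h = fingerprint(data)
        return any(e.file_hash == h for e in self.entries)

    def verify(self) -> bool:
        """Recompute every link; any edited entry breaks the chain."""
        prev = GENESIS
        for e in self.entries:
            if e.prev_hash != prev:
                return False
            if e.entry_hash != fingerprint((e.prev_hash + e.file_hash).encode()):
                return False
            prev = e.entry_hash
        return True


reg = Registry()
reg.register(b"payroll record, page 1")
reg.register(b"payroll record, page 2")
print(reg.verify(), reg.contains(b"payroll record, page 1"))  # True True

# Rewrite the first entry consistently; the second entry's link no longer matches.
forged = fingerprint(b"forged page")
reg.entries[0] = Entry(forged, GENESIS, fingerprint((GENESIS + forged).encode()))
print(reg.verify())  # False
```

A real blockchain adds the missing piece: many independent parties hold copies of the latest entry, so no one can quietly rewrite the whole chain from the start.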

In the storage phase, copies were preserved using decentralized storage systems like IPFS, Filecoin, and Storj. These are designed to offer a resilient, tamper-evident, and censorship-resistant alternative to common corporate cloud-based systems like Google Drive, Microsoft Azure, Amazon Web Services, or Dropbox.

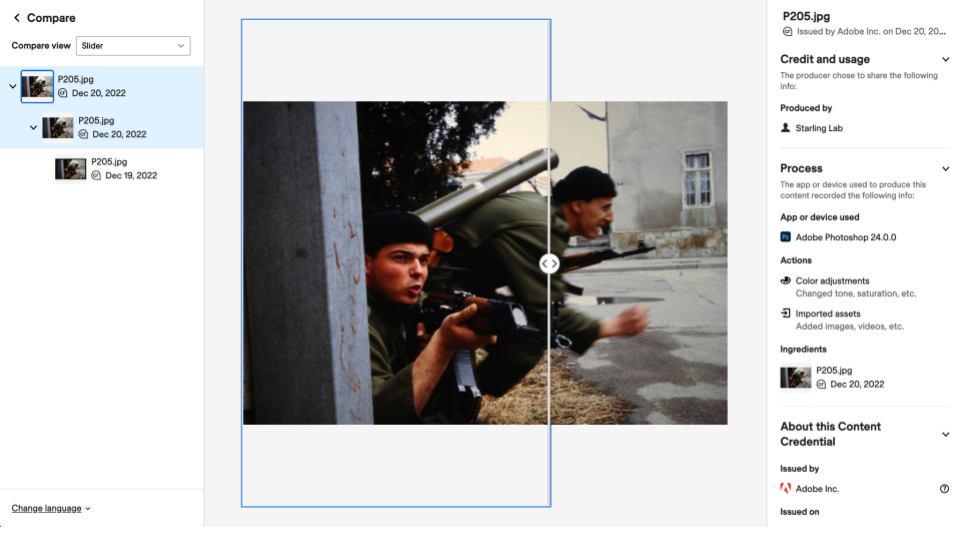

Under the thumbnail of each asset is a pair of buttons: “full manifest” (which includes code for tech-savvy verifiers) and “inspect.” The latter allows audiences to examine changes to the evidence through a verification tool on the Content Credentials site. It provides a visual, user-friendly way of exploring the C2PA metadata and lets anyone audit the edit history of an asset.

Any photo published in the news is likely to have been edited somehow. We’re all used to our phone cameras processing images, often in ways we think of as filters. And in a journalistic project, it’s normal to crop and color-correct. We can think of these as permissible edits, but in journalism they should be transparent.

Adobe Photoshop was used to make all the photo adjustments in this story. Anyone using that software can turn on Content Credentials in its preferences before starting an edit, which generates metadata about the changes, certified by Adobe itself.
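Auditing an edit history can be thought of as checking an unbroken chain from the original file to the published one. This is a minimal sketch, assuming each edit is recorded as the fingerprint of its input and its output (the `EditStep` structure and action labels are hypothetical; real C2PA manifests record richer data and each step is signed by the editing tool, which is omitted here).

```python
import hashlib
from dataclasses import dataclass


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


@dataclass(frozen=True)
class EditStep:
    action: str       # hypothetical label, e.g. "crop" or "color_adjust"
    input_hash: str   # fingerprint of the image before this edit
    output_hash: str  # fingerprint of the image after this edit


def audit_history(original: bytes, published: bytes, steps: list[EditStep]) -> bool:
    """True if the recorded steps lead, link by link, from original to published."""
    expected = sha256_hex(original)
    for step in steps:
        if step.input_hash != expected:
            return False          # a gap: this edit did not start from the previous result
        expected = step.output_hash
    return expected == sha256_hex(published)


scan = b"slide scan with black border"
cropped = b"slide scan"
final = b"slide scan, lighter sky"
history = [
    EditStep("crop", sha256_hex(scan), sha256_hex(cropped)),
    EditStep("color_adjust", sha256_hex(cropped), sha256_hex(final)),
]
print(audit_history(scan, final, history))                        # True
print(audit_history(scan, b"slide scan plus bayonet", history))   # False
```

Note what the check cannot do: it confirms the chain is unbroken, not that a step labeled `color_adjust` only adjusted color. That is why looking at the edits themselves, side by side or with a slider, still matters.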

The Verify tool allows you to explore those verified edits, either side-by-side or overlaid with a slider. Looking closer at the first edit to this image using the side-by-side view, there’s a noticeable black border around the top version – an artifact from the digitization process. The paper frame of film slides, which you’ll recall Haviv held to insert them in the bellows, doesn’t allow light to penetrate. The existence of the border is a clear indicator to the newsroom that the complete slide was digitized – but it’s also something a page designer wants to chop to the virtual cutting room floor.

The second edit to the photo, seen with a slider, confirms that color correction was relatively minor. Notice the sky’s lighter exposure to the left of the slider compared to the right.

Having verified the edits with your own eyes, you can be assured the changes were helpful, not a deceptive wholesale change like adding a bayonet.

Store - Where Does It All Go?

As seen earlier in the authentication certificates, all these assets were stored using decentralized networks like IPFS, Filecoin, and Storj. These offer promising alternatives to entrusting assets with one of the few megacorporations that dominate the commercial storage market.

Traditional digital storage uses “location addressing,” meaning a file can be located only by knowing the specific path through a directory and subdirectories (think of folders nested inside of other folders on your computer). It’s the same concept as a website address structured as www.website.com/directory/subdirectory/subsubdirectory/file.pdf. If “subdirectory” is renamed “subdirectory2,” everything breaks. In contrast, these innovative decentralized systems use “content addressing.” This approach starts by taking a digital fingerprint from the file, resulting in a code that can be about 64 characters long. When you later look for the file by searching for its unique code, the system checks for a matching copy anywhere on the internet. Ultimately you don’t care where it finds that copy, only that it’s a 100% perfect match as proven by cryptography.
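The difference between the two addressing schemes fits in a few lines. This is a toy, in-memory sketch assuming a bare SHA-256 hex digest as the address (`ContentAddressedStore` is a hypothetical class); real IPFS content identifiers (CIDs) also encode which hash function and data format were used, and large files are split into chunks.

```python
import hashlib


class ContentAddressedStore:
    """Toy content-addressed store: a blob's address is the SHA-256 of its bytes."""

    def __init__(self) -> None:
        self._blobs: dict[str, bytes] = {}

    def put(self, data: bytes) -> str:
        address = hashlib.sha256(data).hexdigest()   # 64 hex characters
        self._blobs[address] = data
        return address

    def get(self, address: str) -> bytes:
        data = self._blobs[address]                   # KeyError if nobody holds it
        if hashlib.sha256(data).hexdigest() != address:
            raise ValueError("stored bytes do not match their address")
        return data


# Location addressing: the key is a path, so renaming a folder breaks the lookup.
by_location = {"/directory/subdirectory/file.pdf": b"payroll record"}
by_location = {k.replace("subdirectory", "subdirectory2"): v
               for k, v in by_location.items()}
print("/directory/subdirectory/file.pdf" in by_location)   # False

# Content addressing: the key is derived from the bytes themselves.
store = ContentAddressedStore()
address = store.put(b"payroll record")
print(len(address), store.get(address) == b"payroll record")  # 64 True
```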

The implications are profound. If a file is destroyed at a data center due to a natural disaster or an authoritarian government raid, you can confirm a full and authentic recovery when anyone else – even an unknown stranger – hands you a copy matching the original file’s fingerprint.

Instead of requiring you to place your trust in a centralized (and potentially corruptible) authority, this is considered a “trustless” system.
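The “trustless” recovery described above can be sketched as asking any number of untrusted sources for a file and accepting only a copy whose hash matches the address. This assumes SHA-256 addresses as in a simple content-addressed store; the mirrors are hypothetical stand-in functions, not a real network client.

```python
import hashlib
from typing import Callable, Iterable, Optional

# A "mirror" is any source that may (or may not) hand back bytes for an address.
Mirror = Callable[[str], Optional[bytes]]


def recover(address: str, mirrors: Iterable[Mirror]) -> Optional[bytes]:
    """Return the first copy whose SHA-256 matches `address`; trust no mirror."""
    for mirror in mirrors:
        data = mirror(address)
        if data is not None and hashlib.sha256(data).hexdigest() == address:
            return data
    return None


original = b"scanned payroll record"
address = hashlib.sha256(original).hexdigest()

mirrors = [
    lambda a: None,                  # data center lost in a disaster or raid
    lambda a: b"doctored record",    # a copy that has been altered
    lambda a: original,              # an unknown stranger's intact copy
]
print(recover(address, mirrors) == original)   # True
print(recover(address, mirrors[:2]))           # None
```

The design choice is that trust moves from the source to the math: it does not matter who supplied the copy, only whether its hash matches.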

This fundamental design shift is a potential long-term solution to linkrot and related problems that have plagued newsrooms, archives, and even Supreme Court opinions.

Capture - Live From Novi Sad

The authentication certificate for this final photo shows a colorful scene. It also demonstrates additional ways to authenticate journalistic work in the field.

The person at the center of this modern-day image is the same person in the middle of Haviv’s iconic image of a war crime in progress. In between, he went on to a notable career as a DJ, playing European festivals over the years with crowd sizes comparable to Coachella’s.

This particular image came from an event where he was spinning in Novi Sad, Serbia. It depicts his life of impunity, as there had been no accountability for the war crimes decades before. This was one of several images and videos captured by a freelancer we hired to cover the event. However, we didn’t have a go-to person in Serbia. With the show scheduled days after we caught word of it, how could we quickly develop trust in a total stranger? This became more sensitive when the most promising local freelancer asked to remain anonymous, not wanting his name to be on the radar of his neighborhood war criminal.

Technology once again provided a solution. We decided to use Proofmode, developed by Guardian Project, a free and open source app that can be installed on any iOS or Android phone. It uses software signatures to authenticate each image as it’s taken, including a full C2PA manifest. This solution worked especially well for the freelancer, as it cost nothing to set up and allowed him to operate discreetly with a phone, looking like any other partygoer. When he returned to his car in the parking lot, he uploaded the images with their manifests, allowing us to confirm we were seeing the same moments he had witnessed through the lens of his phone.
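Signing at the moment of capture and verifying on upload can be sketched as follows. To keep the example on Python’s standard library, it uses an HMAC with a shared key as a stand-in for a signature; an app like Proofmode uses public-key signatures instead, so the newsroom needs only the photographer’s public key and never a shared secret, which is what makes trusting a stranger workable. The record layout, key, and timestamp are hypothetical placeholders.

```python
import hashlib
import hmac
import json


def sign_capture(image: bytes, device_key: bytes, captured_at: str) -> dict:
    """At the moment of capture: fingerprint the image and sign that claim."""
    claim = {"sha256": hashlib.sha256(image).hexdigest(), "captured_at": captured_at}
    payload = json.dumps(claim, sort_keys=True).encode()
    return {"claim": claim,
            "signature": hmac.new(device_key, payload, hashlib.sha256).hexdigest()}


def verify_capture(image: bytes, record: dict, device_key: bytes) -> bool:
    """On upload: the claim must be authentic and must describe these exact bytes."""
    payload = json.dumps(record["claim"], sort_keys=True).encode()
    expected = hmac.new(device_key, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, record["signature"]):
        return False
    return record["claim"]["sha256"] == hashlib.sha256(image).hexdigest()


key = b"hypothetical-device-key"
photo = b"...raw JPEG bytes..."
record = sign_capture(photo, key, "2000-01-01T00:00:00Z")   # placeholder timestamp
print(verify_capture(photo, record, key))                   # True
print(verify_capture(photo + b"edit", record, key))         # False
```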

Learnings

Data Can Disappear

Not long before his set in Serbia, the subject of this story had started a new Instagram account and was leaving more of a digital trail than ever. Hours after the story published, he set it to private.

Fortunately, we have most of that account saved thanks to Webrecorder and our content-addressed decentralized archives. All of that evidence remains available to investigators even though it’s no longer available to the public on Instagram.

Those subjects and their relationships, on social media and in real life, are the heart of any investigation. Here we see the primary subject at the top left, still enjoying his cigarettes. On the far right is a younger version of the man seen posing with wolves. Another person in this network carried the casket of the unit’s general at his funeral. They are nodes in a social network and, poignantly, are now captured on nodes in our decentralized archive.

Where There Is Tech, There Is Hope

Days after this story was released, local prosecutors reopened this 30-year-old cold case. Hopefully we will eventually see justice for the victims.

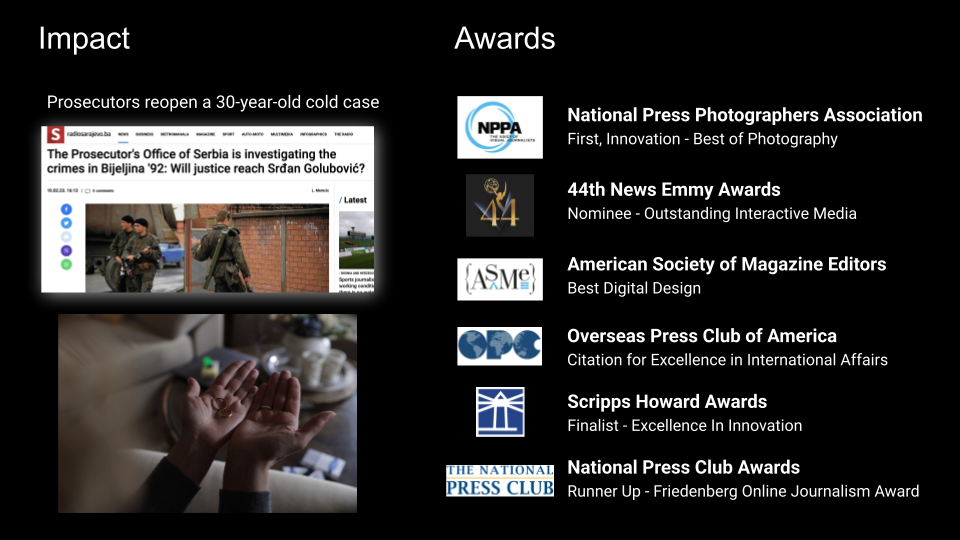

Over the next few months, the story earned a number of journalism industry accolades. Awards juries recognized the fine investigative work, and they also recognized the need to authenticate and archive. Starling’s hope is that these approaches illuminate new paths for all newsrooms to pursue and inspire new implementations that will benefit society and restore trust in journalism.

Archive

Links to News Articles

- Rolling Stone story

- 9-min mini-doc

- Direct link to the archive

- Featurettes: The Photograph • The Document • The Network

- Gladeye case study

Credits

A large team within Rolling Stone was led by Executive Editor Sean Woods, Creative Director Joseph Hutchinson, and Digital Director Lisa Tozzi.

A world-renowned design firm, Gladeye, handled the custom site development, overseen by Tarver Graham and implemented by Nathan Walker.

Starling’s work was spearheaded by Jonathan Dotan, Adam Rose, Benedict Lau, Yurko Jaremko, and Josh Lee.

Narrative Watch

Does bodycam footage promote accountability for police use of force? We built an authenticated survey questioning videos and claims, and archived the original material for the long term.

Starling Lab · Reading time: 5 min

Background

Over the past decade, there’s been a significant push for police departments to add more body cameras, in the hope that they would create accountability and serve as a tool for reform. This collaborative project confronts the difficulty of establishing facts from bodycam footage and interrogating it. It also cryptographically authenticates and archives primary and supporting material to protect vulnerable public records. This case study follows the publication of news articles detailing our findings, and is accompanied by a survey of criminal justice experts investigating the relative absence of the reforms many advocates expected.

Context

Since 2020, the Starling Lab for Data Integrity has awarded fellowships to journalists who collaborate on case studies exploring how to integrate technologies used to capture, store, and publish authenticated media. This project, called Narrative Watch, is one such fellowship collaboration between the Lab and two reporting groups: The Grio and Big Local News.

Launched in 2016, The Grio is an American television network and website with news, opinion, entertainment, and video content geared toward Black Americans. Big Local News, a Stanford University-based team, led the research into problems around access to public records and data for journalists covering policing, public health, and more. The group develops tools to help journalists access, analyze, publish, and archive data.

Narrative Watch culminated in December 2023 with The Grio’s publication of two articles about police body cam footage that leveraged technical authentication tools developed by Starling. Both articles addressed the difficulty of obtaining and using body cam footage from police departments, despite regulations aimed at facilitating police accountability.

While this project was a collaboration between the three teams, the following individuals were most notably involved:

- Big Local News is led by Cheryl Phillips (a 2023 Starling Journalism Fellow), along with Senior Data Scientist Eric Sagara, who acted as technical lead on the project.

- The Grio’s SVP and Chief Content Officer Geraldine Moriba oversaw the project, with additional reporting and editing support provided by Natasha Alford and Josiah Bates.

- Starling’s work was overseen by Journalism Fellowship Director Ann Grimes and Project Manager Lindsay Walker.

- Related work around this subject was done by the California Reporting Project. Additional research and reporting support was provided by Dana Amihere, Dilcia Mercedes, Lisa Seyton, Irene Casado Sanchez, Lisa Pickoff White, and Ananya Tiwari.

The Narrative Watch project made public records requests to police departments for Use of Force cases in which individuals were seriously injured or killed. The team gathered reports, photos, audio, and video, focusing on cases involving claims like "I feared for my life" or "I was attacked." The final project involved three data sets that could be cross-referenced with police reports. A survey of criminal justice experts was also conducted to see whether consensus could be reached on disputed details from body cam footage.

One key set of records, involving the 2023 beating of Tyre Nichols in Memphis, was archived by the Starling Lab for Data Integrity using advanced authentication technologies. These tools preserve vulnerable public records, ensuring their accuracy and availability, which is especially important in combating misinformation. As civil rights attorney Benjamin Crump explained, many states delay video release, leaving families without access to critical evidence. By using cryptographic methods and decentralized systems, public records can now be safeguarded against manipulation, even as the rise of AI makes such concerns more pressing.

Framework