Pilot Project on Making Web Preservation Easy for Investigative Journalism

In the digital age, the “smoking gun” is often a fleeting URL, a social message that gets edited minutes later, or a listing on a grey-market website that vanishes once a sale is made.

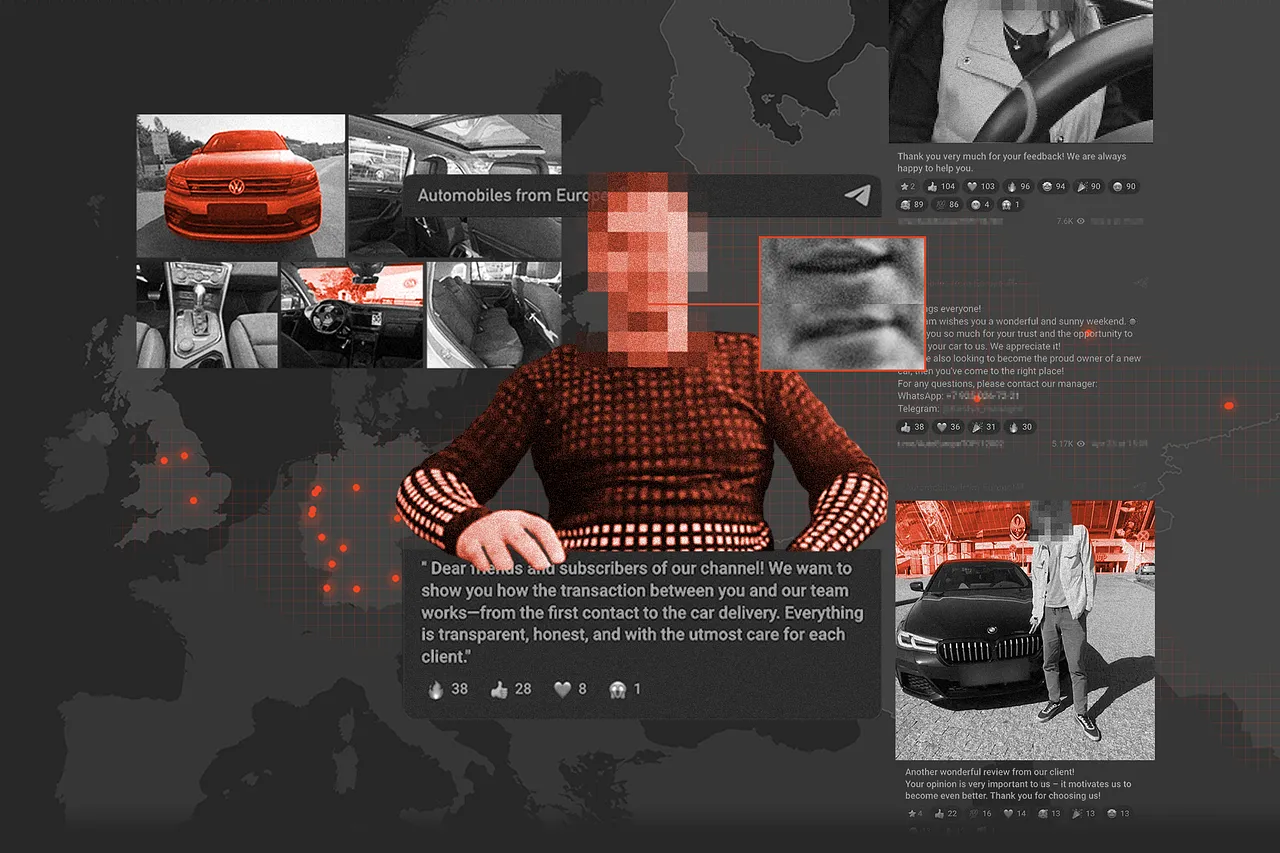

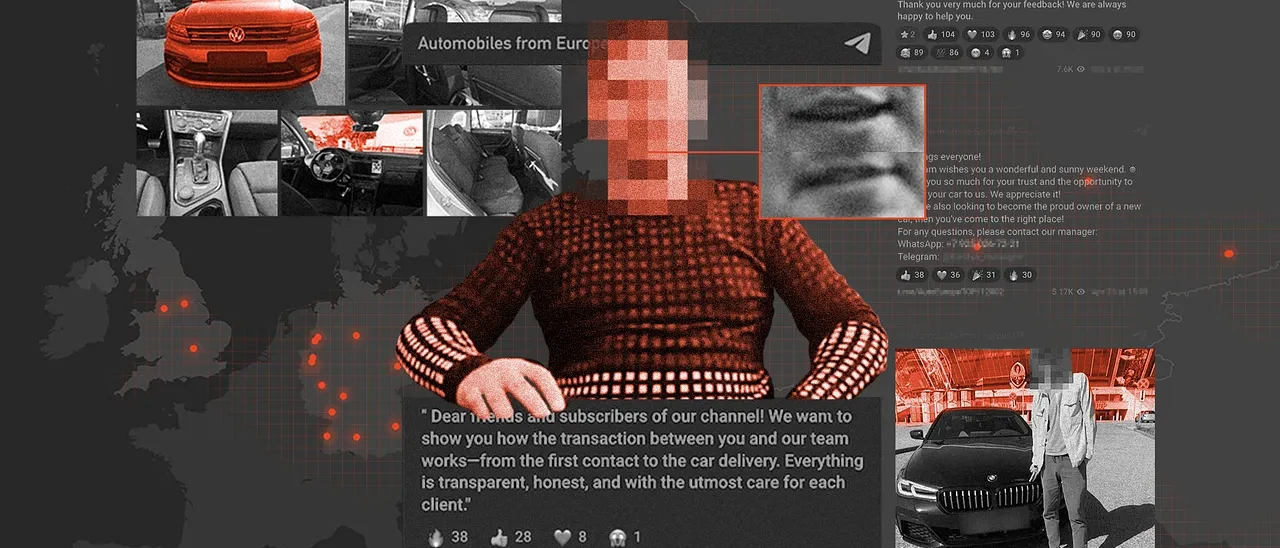

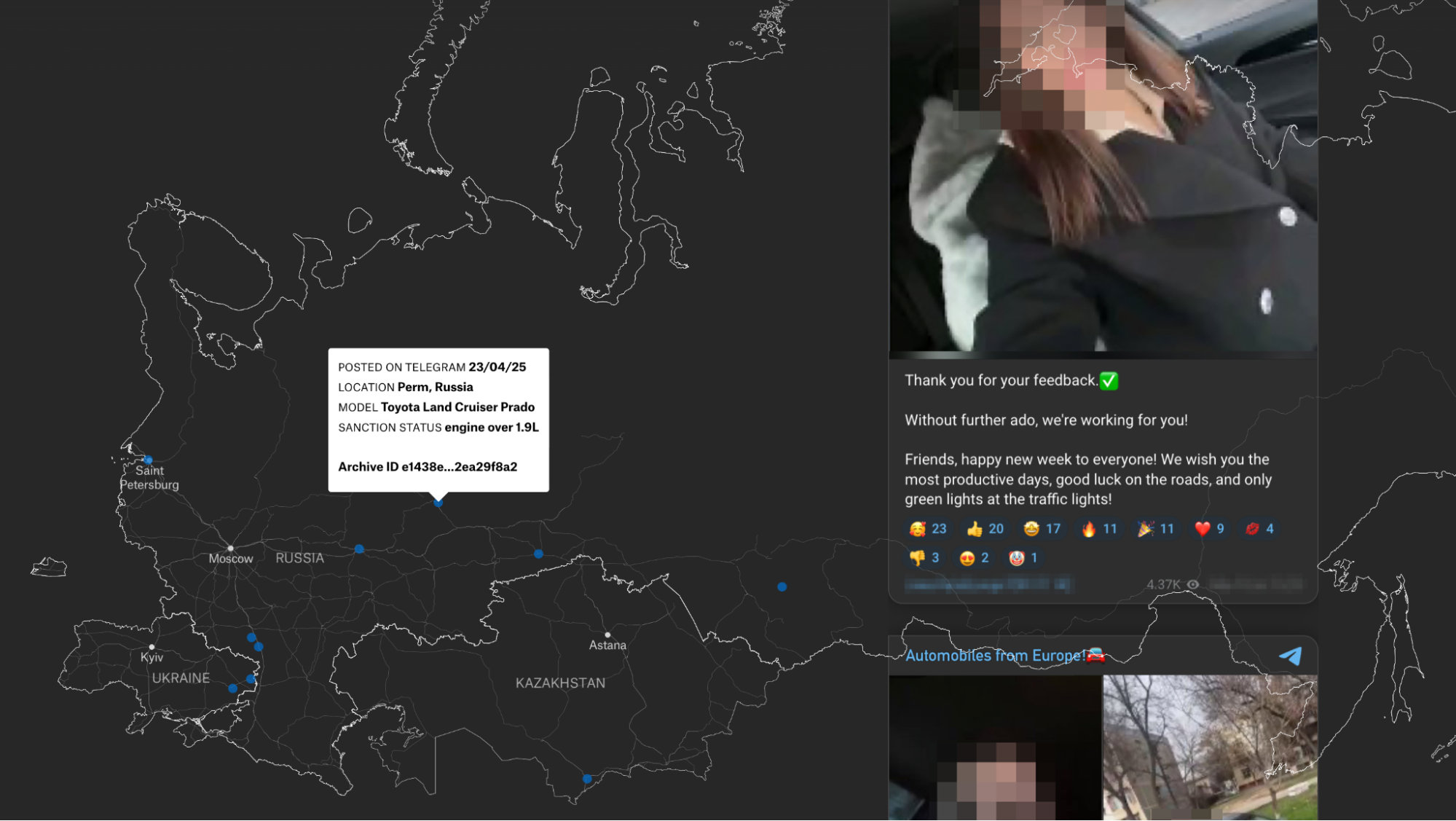

Today, we are proud to announce the publication of “Sanctions, Scams, and Deepfakes,” a major investigation by our partners at Airwars into the illicit flow of luxury cars from Europe to Russia. Starling Lab worked in support of the investigative team to preserve the digital evidence supporting their findings, while the reporters traced the physical movement of vehicles across borders.

At least, that’s what they originally set out to do.

At the center of this investigation into the apparent smuggling of cars into Russia lay a red herring. The investigative team initially believed they were tracking real sanctions-evasion schemes bringing luxury cars from Germany to Russia, but instead discovered the operation was largely a scam targeting Russians themselves. The deepfaked video of a legitimate Russian car dealer explaining the smuggling process, the paid actors posing as satisfied customers, and the geolocated cars in Germany were all elaborate deceptions designed to make the fraud appear credible – with potential victims losing thousands of euros to scammers likely operating from Ukraine rather than to actual smugglers delivering sanctioned vehicles.

The investigation was supported by IJ4EU, a grant scheme for cross-border investigative journalism in Europe. The Starling Lab did not participate in the grant application, nor did it receive any funds. Our support of the investigation was purely pro bono and limited to that project.

Technical Stack Deployed

During the investigative phase, the research and investigative team needed to browse hundreds of online sources, from Belarusian border cam footage to social media and social messages.

The core technical challenge was clear: How do we allow investigators to move fast while maintaining a forensic chain of custody? A simple screenshot is insufficient for legal or historical proof. We needed a system where an investigator could claim, “at this time and date, I browsed this unique URL which contained precisely this content,” and be able to back it up with cryptographic proof.

Fortunately, we have been working with state-of-the-art web archiving tools for years – and have even produced a whitepaper on best practices for web archiving. The approach described in this project aims to ensure the collected material meets the high bar for authenticity and probative value required in legal proceedings. It directly addresses the belief that these tools and techniques can be cumbersome or slow down the investigative process.

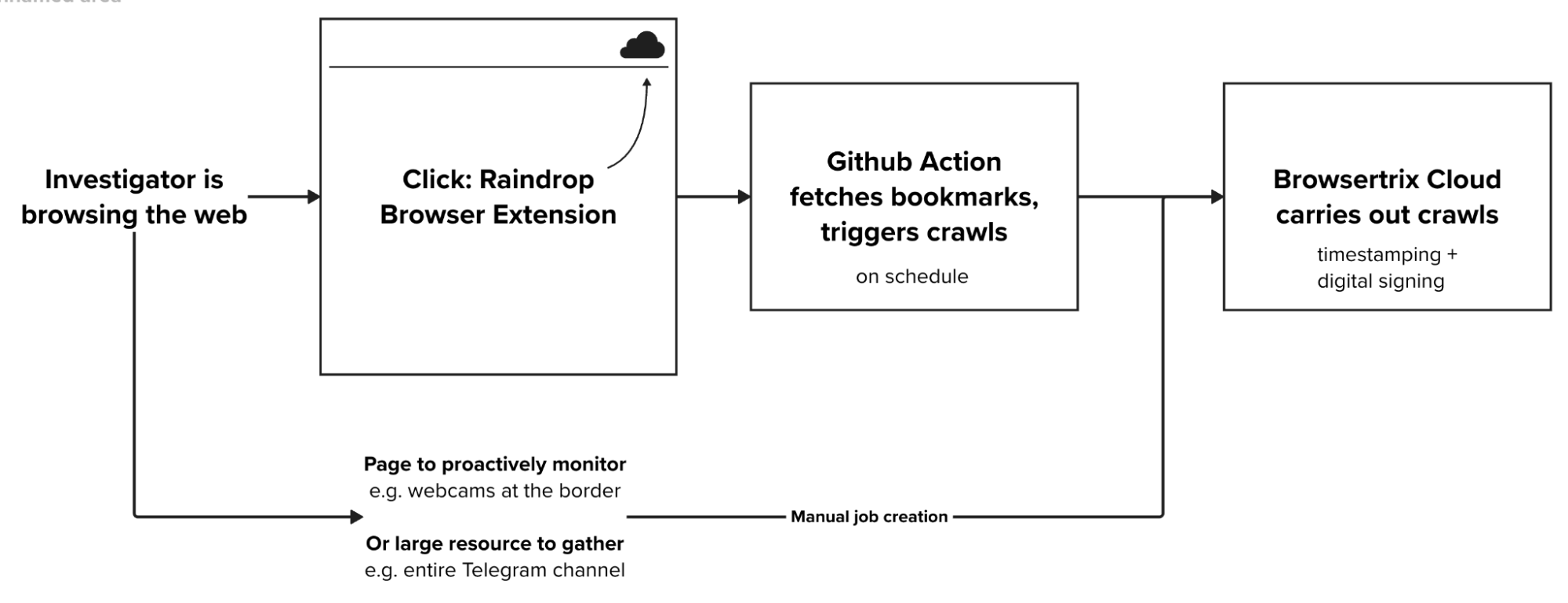

This perception being a key barrier to adoption, we set out to build a bridge between consumer-friendly tools and forensic-grade archiving. We recommended the team use Raindrop.io, a lightweight browser extension, to bookmark relevant links and add annotations. This allowed the investigators to simply “click and save” without leaving their browser.

Behind the scenes, however, a preservation pipeline was built: on a schedule, GitHub Actions would trigger TypeScript scripts that fetched any new bookmarks added to Raindrop and, where appropriate, scheduled a crawl of each one in the Starling Lab Browsertrix Cloud account.
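
The selection step of such a pipeline can be sketched roughly as follows. This is a minimal Python illustration, not the project's TypeScript code: the `Bookmark` shape, the `skip_hosts` rule, and the function name are assumptions made for the example, and the calls to Raindrop and Browsertrix are deliberately left out.

```python
from dataclasses import dataclass
from urllib.parse import urlsplit


@dataclass(frozen=True)
class Bookmark:
    """Hypothetical shape of a saved bookmark (not the Raindrop API schema)."""
    url: str
    note: str = ""


def select_urls_to_crawl(bookmarks, already_crawled, skip_hosts=frozenset()):
    """Return URLs that are new, deduplicated, and not on a skip list."""
    seen = set(already_crawled)
    selected = []
    for bookmark in bookmarks:
        url = bookmark.url.strip()
        host = urlsplit(url).hostname or ""
        if not url or url in seen or host in skip_hosts:
            continue
        seen.add(url)
        selected.append(url)
    return selected
```

Keeping this step a pure function means a scheduled job can be re-run safely: a bookmark that was already queued is simply skipped on the next pass.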

In total, we preserved more than 9,000 unique URLs, totalling 98 GiB in compressed form.

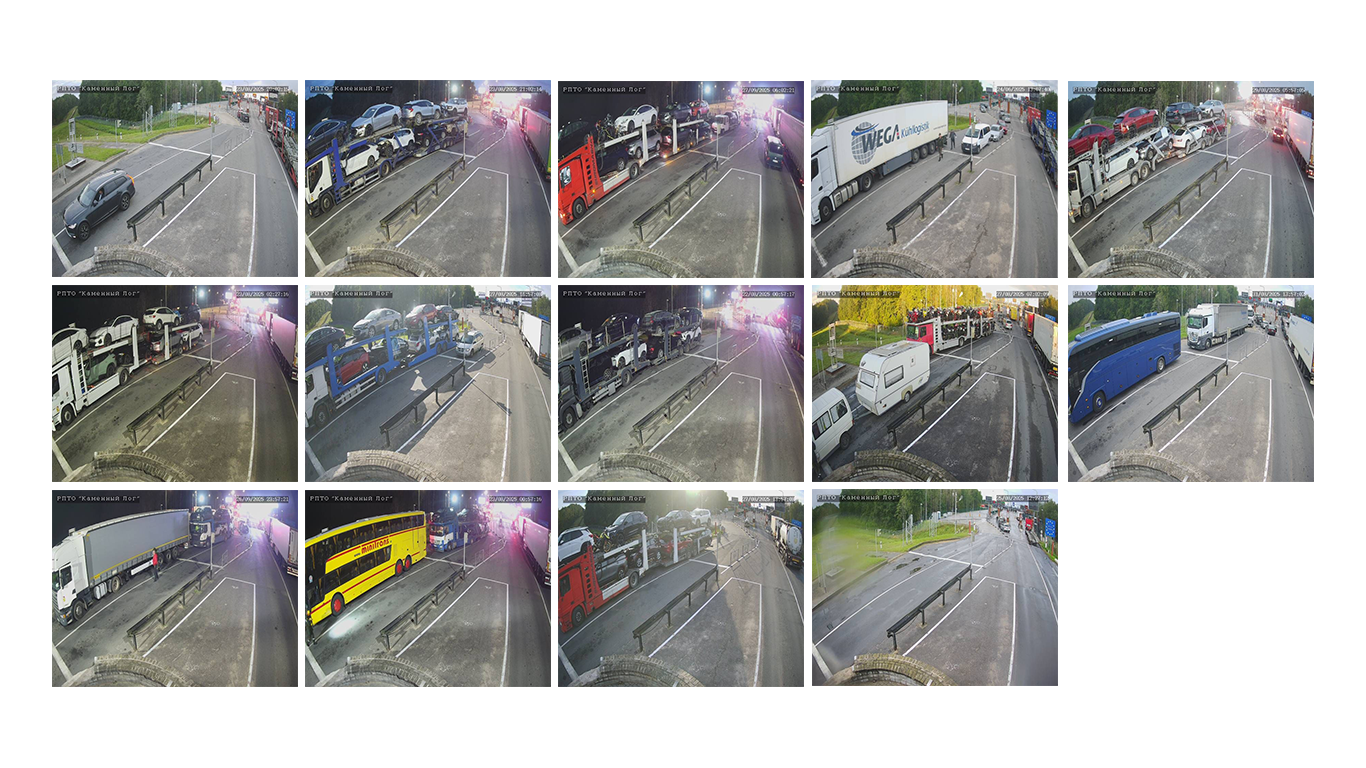

A major early lead was the border crossing between Lithuania and Belarus, which the team understood to be a key milestone in the supply of cars into Russia. The border crossing is monitored by webcams that refresh every 10 minutes or so. By running our crawls on a schedule, we were able to provide the team with a daily collage of captures, in the hope of substantiating that one of the known delivery lorries was making the journey, as the Telegram channel seemed to claim.

Despite monitoring the webcams for a month and a half, reviewing about 3,800 photographs (two shots every 10 minutes most hours of the daytime), we were not able to find one of the known trucks.

We did, however, witness the spectacle of political opposition figure Mikalai Statkevich sitting in this no-man’s land after having been freed from Belarus, reportedly refusing to walk to Lithuania and go into exile.

Learnings

Proactive engagement

The earlier and the more closely we are involved, the better our chances to do good work. This oft-repeated canon of collaboration is worth its salt for a reason.

In the context of this investigation, we think we were able to support the collection of web content to a fair extent. Frequent touchpoints with a team helped identify developing parts of their workflow – for example the need to monitor the webcam photographs, which were being overwritten relatively frequently.

Time and opportunity to engage

As the story pivoted from tracking the supply chain to the realisation that key elements had been fabricated and were deepfakes or shallowfakes, the pressure of a looming deadline led to a tightening of the loop on the investigative team’s side. As a result, material received by investigators in the last days before publication was not shared with us for authentication and preservation.

Furthermore, we had prepared for a planned field trip to dealerships in Lithuania, and ran a short training for reporters on using the Proofmode app to take photographs, cryptographically seal them, and share them on – this trip however never materialized as the story pivoted away.

Seeing as the investigation relates to both forgeries and inauthentic material, we regard these omissions as missed opportunities.

The need for redactions

The pre-publication legal review sought by Airwars led to a cautious treatment of the evidence collected. The story was complex, with a good deal of uncertainty about what was real, and which of the persons and organisations involved were in full possession of the facts.

Although publishing them was technically well within reach, we opted not to publish full embeds of the Telegram posts and messages, and to redact some metadata from them. We are also not making the archive publicly accessible. These measures aim to protect car sellers, dealerships, clients, and anyone else whose photographs might have been used against their will and in support of the scam.

This case study further underscores the need for verifiable redaction technology to be part of the feature set of web archiving tools for journalists. In service of this requirement, we have deployed techniques from the field of Zero-Knowledge Proofs in our Rolling Stone investigation into war crimes in Bosnia. This technology allows publishers to redact sensitive information (such as names in documents or metadata in digital files) while generating a mathematical proof that certifies only specific pixels or data fields were obscured.
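
The general idea of a verifiable redaction can be illustrated with a much simpler construction than a zero-knowledge proof: salted hash commitments over individual fields. The Python sketch below is not the technique used in the Rolling Stone investigation, and every name in it is hypothetical. It only shows how a verifier holding a digest computed before redaction can confirm that the visible fields are unchanged and that nothing beyond the hidden fields was altered.

```python
import hashlib
import json
import secrets


def commit_field(name, value, salt):
    """Salted SHA-256 commitment to one field."""
    payload = json.dumps([name, value, salt], sort_keys=True).encode()
    return hashlib.sha256(payload).hexdigest()


def root_of(commitments):
    """Single digest over all per-field commitments."""
    joined = json.dumps(commitments, sort_keys=True).encode()
    return hashlib.sha256(joined).hexdigest()


def seal(record):
    """Return (salts, root) for a record, computed before any redaction."""
    salts = {name: secrets.token_hex(16) for name in record}
    commitments = {n: commit_field(n, v, salts[n]) for n, v in record.items()}
    return salts, root_of(commitments)


def redact(record, salts, hidden):
    """Publish value and salt for visible fields, only a commitment for hidden ones."""
    published = {}
    for name, value in record.items():
        if name in hidden:
            published[name] = {"commitment": commit_field(name, value, salts[name])}
        else:
            published[name] = {"value": value, "salt": salts[name]}
    return published


def verify(published, root):
    """Recompute the root from the published material and compare."""
    commitments = {}
    for name, entry in published.items():
        if "commitment" in entry:
            commitments[name] = entry["commitment"]
        else:
            commitments[name] = commit_field(name, entry["value"], entry["salt"])
    return root_of(commitments) == root
```

The per-field salt matters: without it, a short or guessable value such as a name could be recovered from its commitment by brute force.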

Scalable

This pilot project allowed us to test and refine a preservation mechanism that is both robust and non-intrusive to the investigative workflow. We are eager to further deploy the processes developed during this collaboration, and this model more broadly, for future investigations and other partnerships with journalistic organizations.

Documenting Stockton’s Homeless

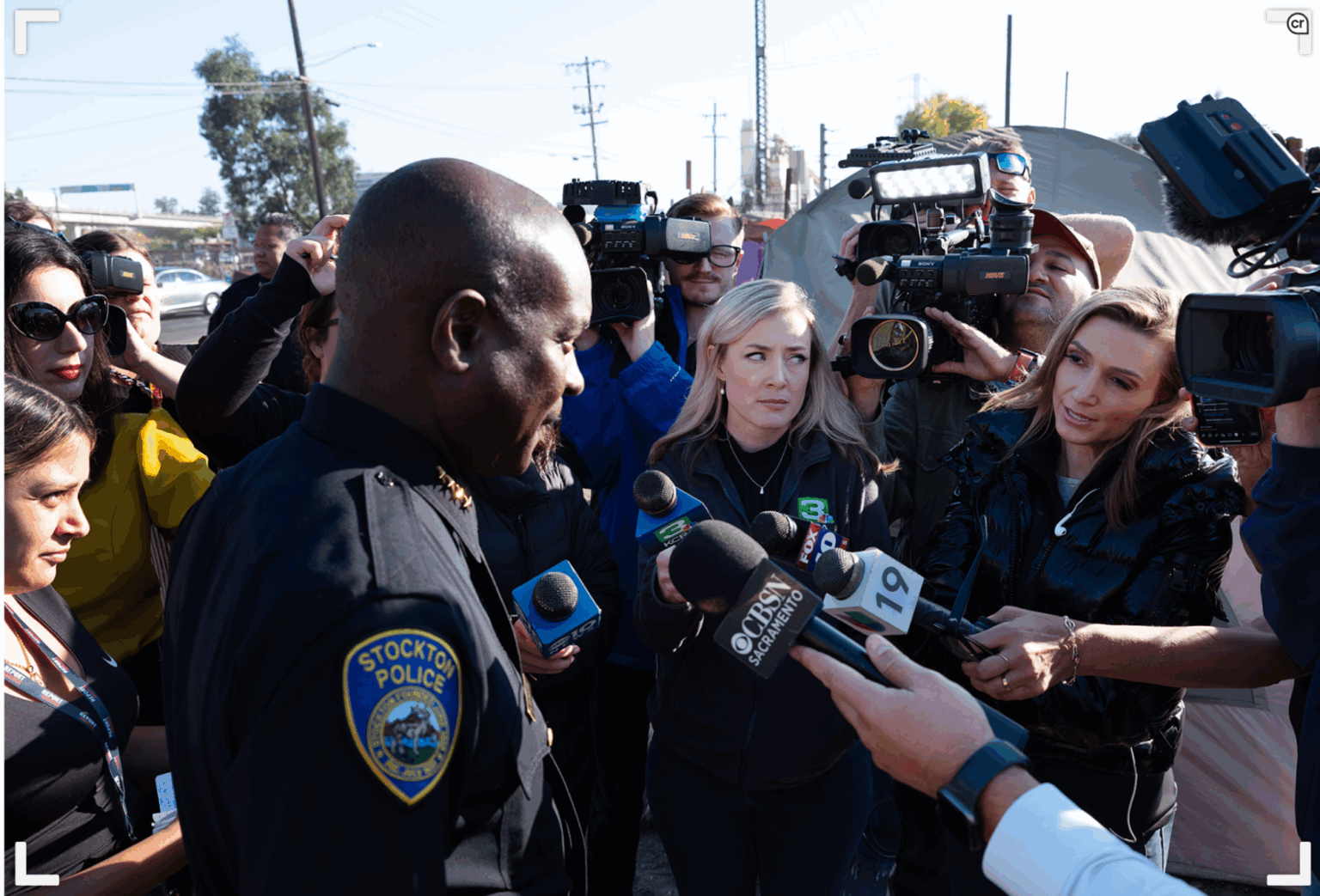

Bay City News and Starling Lab pioneer cryptographic tools to authenticate images and expose the truth of homelessness in California.

Starling Lab

Contents

- Background
- Context
- Framework
- Technology
- Learnings
- Archive

Background

The homelessness crisis in California has escalated dramatically in recent years. Point-in-time counts from 2022 show a nearly 6% rise from 2020, and a 17% increase in homeless but sheltered populations. Against this backdrop, the Bay City News (BCN) series Documenting Stockton’s Homeless was reported over six months, telling diverse stories from the plight of an individual on the street to data analysis quantifying the issue’s growth. It looked into rising living costs, economic disparities, and inadequate mental health and addiction services – all factors in the statewide problem. The series also addressed concerns faced by local business owners, government expenditures, and the availability and challenges of providing housing.

San Francisco’s homelessness issues have been widely reported, but the problem is now expanding to nearby communities like Stockton in San Joaquin County. With a population of over 320,000 as of 2020, it is the county’s largest city. The scenes on the ground there tell the story of a growing problem despite significant resources invested to address it.

Crucially, misinformation has made its way into the public debate about homelessness. Local authorities often provided optimistic assessments that did not match the reality observed, leading to public distrust. This project highlighted these discrepancies. Together with Starling, BCN captured and presented the story of Stockton using advanced photo authentication tools to ensure accuracy.

In May of 2023, before Generative AI became a household term, Fred Ritchin, Dean Emeritus at the International Center of Photography and founder of the Four Corners Project, wrote about the urgent need for photojournalism to provide audiences with more data about their images. This includes context, explanation of ethics, eyewitness statements, and other background. He cautioned, “There is enormous panic in the photojournalistic industry due to the emergence of photorealistic synthetic imagery generated by AI that confuses the public as to what is going on and makes their actual photographs increasingly irrelevant.”

Bay City News also had its eyes on the future, anticipating the problems that arise when photojournalism is called into question in coverage of contentious or high-stakes issues. “It is imperative that local publications like Bay City News reassure readers that facts are verified and images are real,” emphasized BCN Photo Editor Ray Saint Germain.

Context

This collaborative case study was a pioneering experiment for Bay City News and The Starling Lab for Data Integrity on a number of levels. Together, the organizations set out to cover the homelessness crisis in Stockton as it surged in 2022 and 2023, with meticulous documentation to reveal local funding disparities and the gap between reality and official statements.

Bay City News is a local San Francisco and Bay Area news agency that covers the region’s businesses, residents and government agencies. They publish a free, online newspaper called Local News Matters.

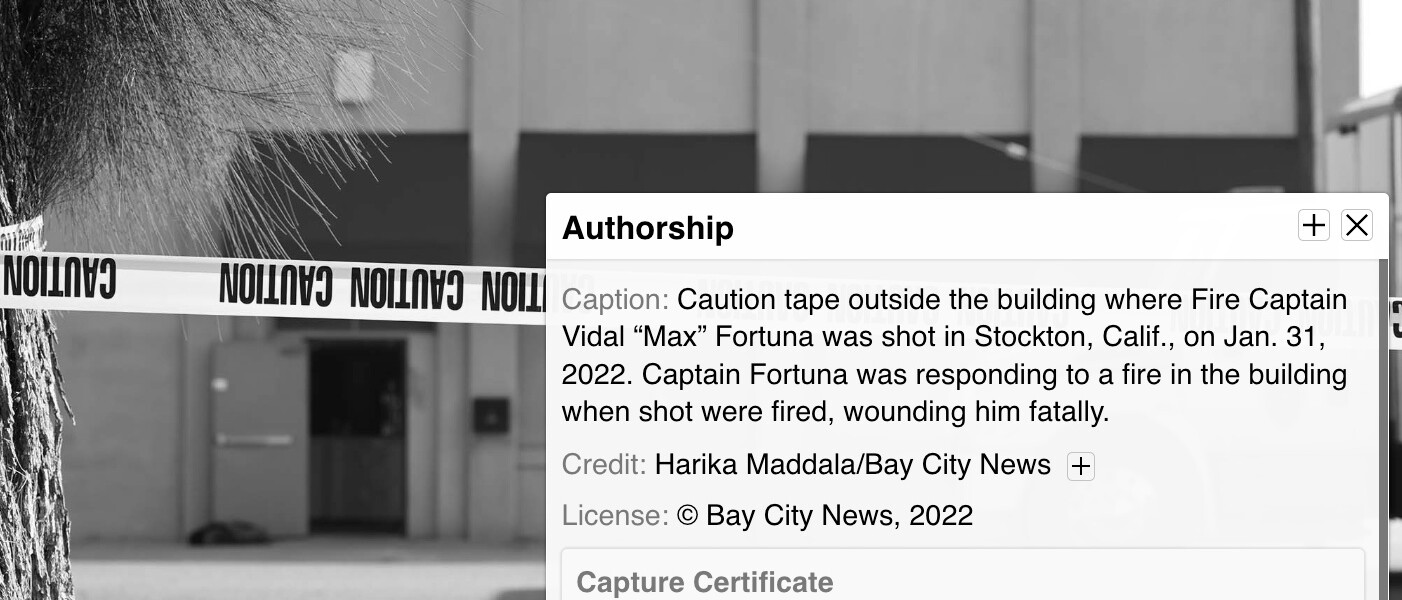

Under the direction of Ray Saint Germain, BCN photojournalists Harika Maddala and Victoria Franco worked for six months in collaboration with Starling to develop and present cutting-edge authentication tools for photojournalism. Starling – which uses innovative approaches to integrate journalists, legal experts, and archivists into the decentralized web – helped the team enhance accuracy and combat misinformation and disinformation, reinforcing the critical role of trustworthy local news.

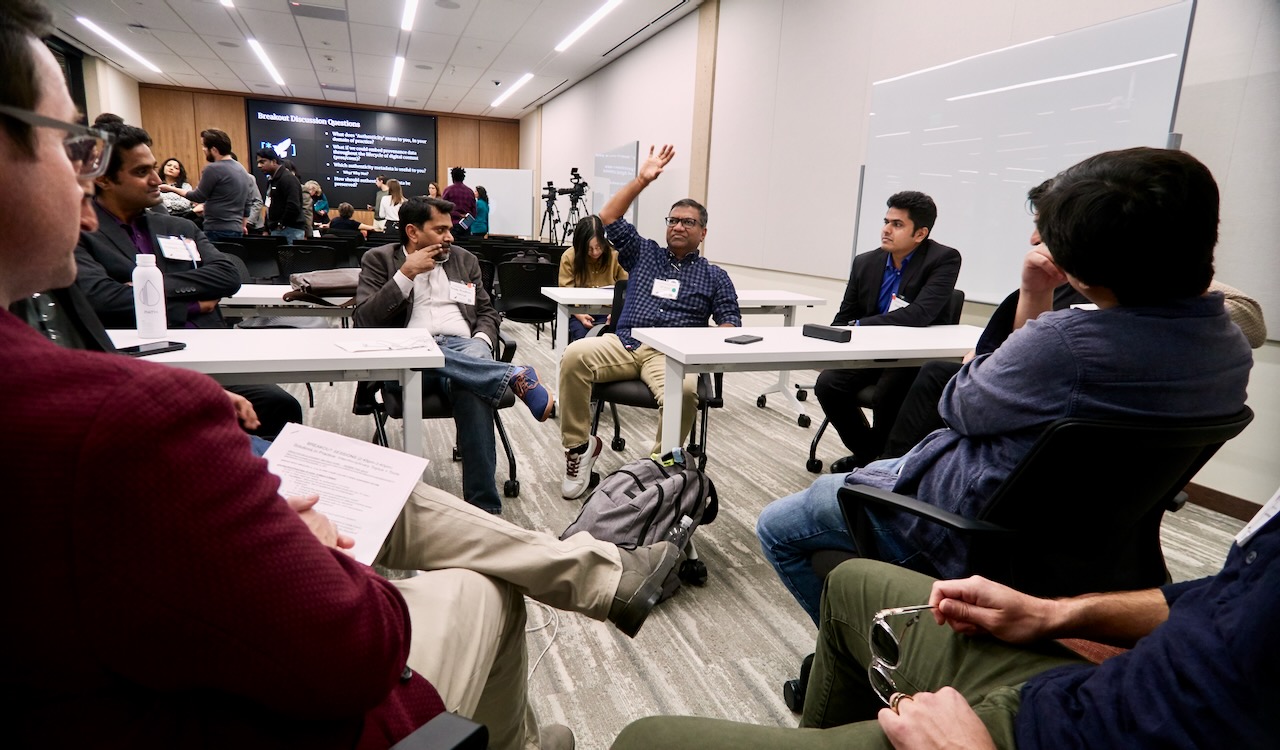

For this project, camera technologies were prototyped to capture and secure photo and contextual information at the point of capture. It was also the first large-scale, real-world deployment of Fred Ritchin’s brainchild—the Four Corners visual display of photographs alongside contextual information, which through this collaboration was further enhanced with C2PA technologies. Furthermore, with the newswire service, we explored the use of non-fungible tokens (NFTs) to publicly track the custody of digital photographs on a public ledger.

United by a vision of capturing accurate representations of what the photographer witnessed, Starling Lab, BCN, and Four Corners began a collaboration to create authenticated “time capsules” of images and contextual metadata. These were secured and presented as published articles on LocalNewsMatters.org, documenting the Bay Area’s homelessness crisis and tracking data from relevant government agencies. These articles feature 35 authenticated photos, with six displayed using Four Corners. This first-of-its-kind initiative brought together several in-development technologies and emerging standards over the long-running implementation, in the hope of showing the art of the possible for authenticity in photojournalism in a world of manufactured images.

The sum of these technologies, along with the articles published on LocalNewsMatters.org, offers learnings for all stakeholders as we look forward to the future of trustworthy local news.

In addition to the BCN team and Starling Lab, collaborators on the project included Four Corners founder Fred Ritchin; Corey Tegeler, Engineering Lead at the Four Corners Project; and Newspack Automattic Manager Daniel Brown. BCN’s content management system is based on WordPress and supported by Newspack.

Framework

The Challenge

Bay City News is a for-profit news agency that serves other media organizations in the Bay Area. It sustains a nonprofit Foundation, which runs LocalNewsMatters.org and provides free local news for readers. BCN strives to reassure readers that their facts are verified and images real. “Being able to prove the origin and authenticity of photos could be useful to professional newsrooms like ours,” said BCN Photo Editor Ray Saint Germain.

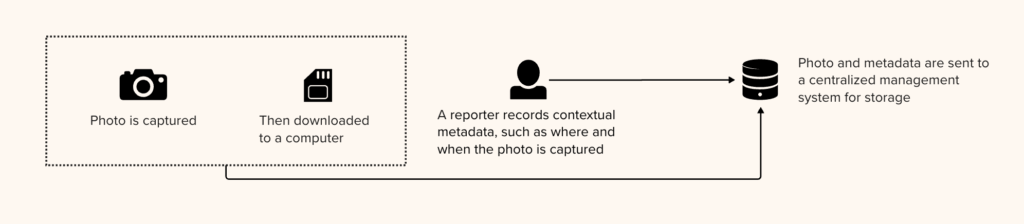

Ordinarily, BCN’s workflow kicks off with a photo assignment, where staff photojournalists shoot with DSLRs and mirrorless cameras. Most reporters use phones, and few of them use DSLR cameras. Breaking news happens fast, and photos need to be delivered to newsrooms quickly. Photos shot with a cell phone are directly sent to the photo editor, whereas photos shot from cameras are transferred to a laptop, then sent in. Non-daily assignments are filed by the end of shifts.

Contextual information recorded by the photographer is later associated with each captured photo, and included in the image file for storage and distribution. For example, on older cameras, the date and time are input manually and may be configured incorrectly at the time of capture (e.g. due to Daylight Saving Time), so the correct times are entered afterwards. Captions are similarly added afterwards.

Once these files are published, their image pixels and embedded contextual information are vulnerable to manipulation. For example, anyone can simply update the timestamp and location recorded in image metadata and redistribute the file. A reputable outlet may even publish the altered photo, completely unaware that it contains tampered metadata, and the unsuspecting reader would receive incorrect contextual information about this photo. Even worse, the image may contain authorship information still showing the name of the BCN photographer, wrongly attributing the disinformation to BCN.

As a newswire service, BCN regularly uploads each photo three times: to a BCN server, a WordPress server, and SmugMug. Any changes to captions have to be made on all three servers, adding to the risk of manual error. Without an audit log, it may be hard to resolve disputes when two copies of a photo with conflicting metadata surface.

Lastly, the contextual metadata embedded in an image is very limited, and there is usually no interface for showing even this limited set of contextual information to readers, especially not in a way that adds value. It would be highly valuable if there were ways to embed rich contextual information, such as links to related photographs and the profile of the photojournalist, so the reader can get a richer experience with these same images.

Our hypothesis is that adding contextual information gives more value to the photo. However, the more information we add, the more there is to manipulate. What is the purpose of embedding a usage license if it can simply be edited by anyone without permission? Therefore, we must also secure all metadata alongside the image pixels. Starling’s approach is to do this via cryptographic methods.

The Prototype

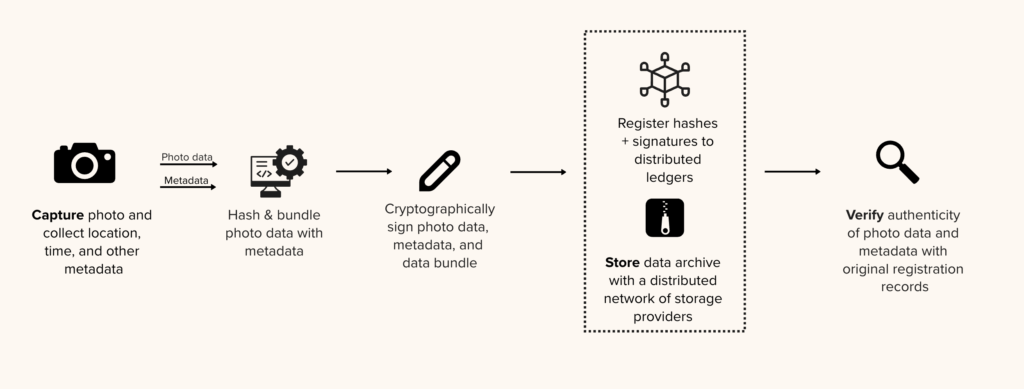

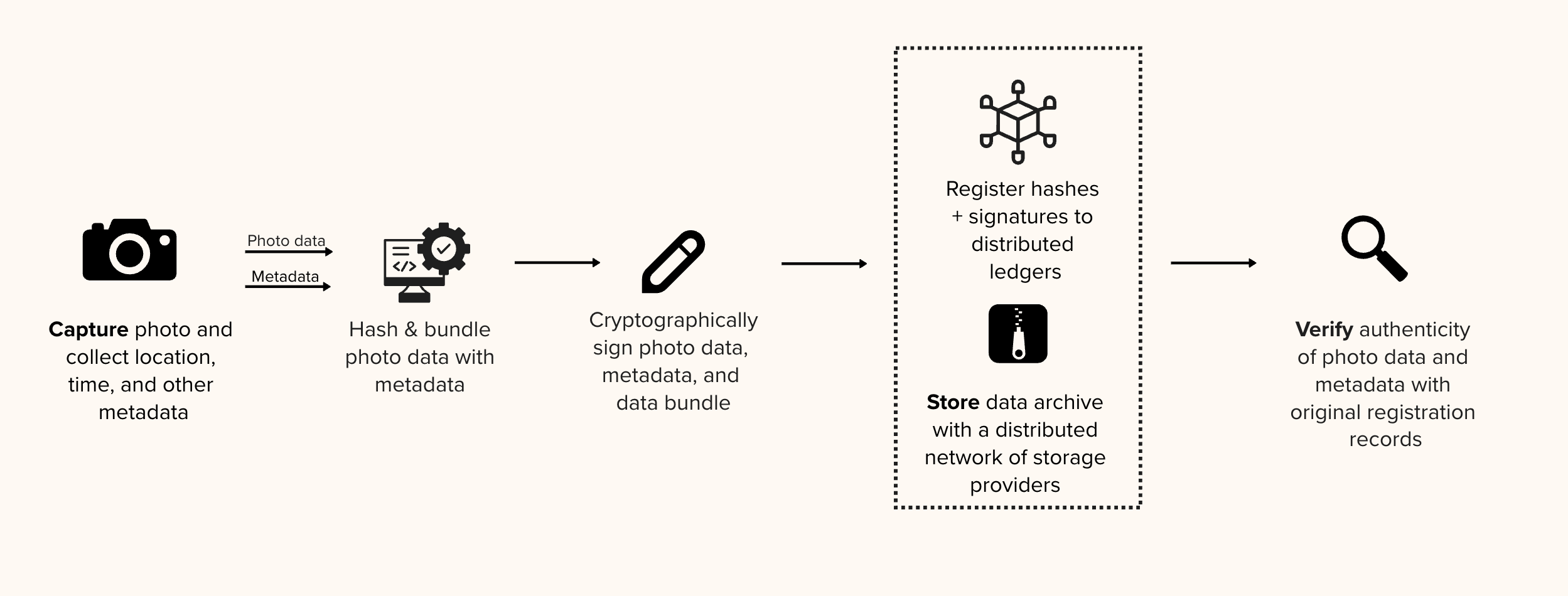

The team was eager to road-test new cryptographic tools that implement Starling’s three-part framework of Capture, Store, Verify.

Starling developed an altered workflow that allowed automated metadata capture and an audit trail of photo edits, but still fit with a standard photo assignment.

Aiming to minimize disruption for photojournalists, the BCN team was equipped with a Canon R5 camera tethered through Wi-Fi to an HTC smartphone loaded with specialized software.

Most importantly, the team focused on how to secure and surface rich contextual metadata for each photo to the reader at the point of publication. They partnered with the Four Corners Project to present these time capsules in such a fashion that readers could visually examine contextual information alongside photographs. This innovative graphical display allows readers to examine a photo’s backstory and metadata – including timestamps, GPS positions, and other provenance markers. This means readers can explore and verify the authenticity of images independently.

At a high level, these steps included:

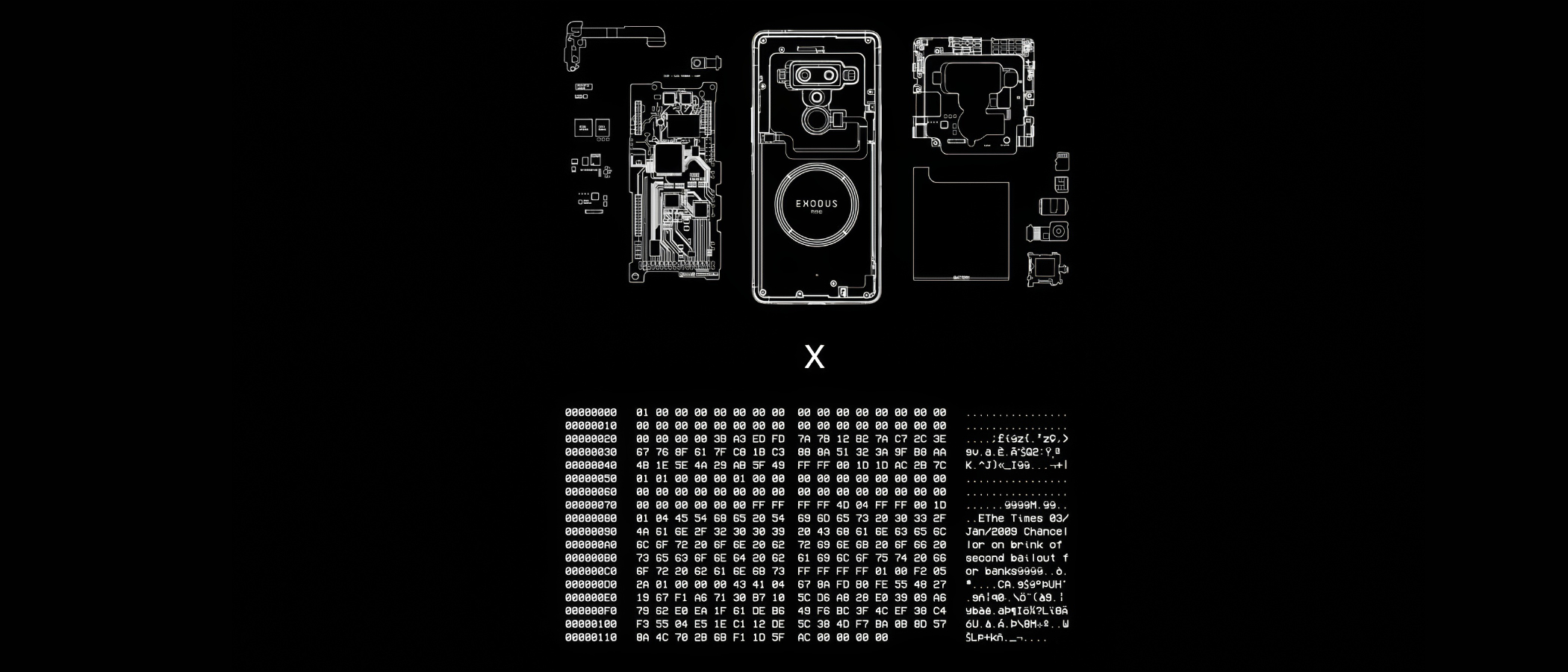

- Capture: Using the Canon R5 camera and HTC Exodus 1S phone, photos and metadata were sent to a Starling-managed server, and were immediately secured through hashing and signing. The authenticity records were registered on blockchains, sealing their proof of existence such that future alterations to pixels or metadata would be evident.

- Store: Images were stored on several BCN and partner systems, both for preservation and distribution. This was least disruptive to BCN’s workflow and copyright policies. The authenticity of the files stored can be verified against blockchain registration records for data integrity (i.e. they were untampered originals).

- Verify: C2PA attestations were made throughout the photo edit chain. In particular, a C2PA schema was developed for Four Corners’ rich contextual metadata, and a WordPress plugin was developed and deployed on the Newspack-operated content management system so the metadata can be surfaced to readers. This allowed the team to test the hypothesis of providing rich metadata to readers through a polished user interface.

A total of 15 stories were published over the course of the Starling Lab project. They are all presented on a LocalNewsMatters.org project page, with 35 authenticated photos, six of which are displayed using Four Corners.

Technology

Capture

The Capture Setup

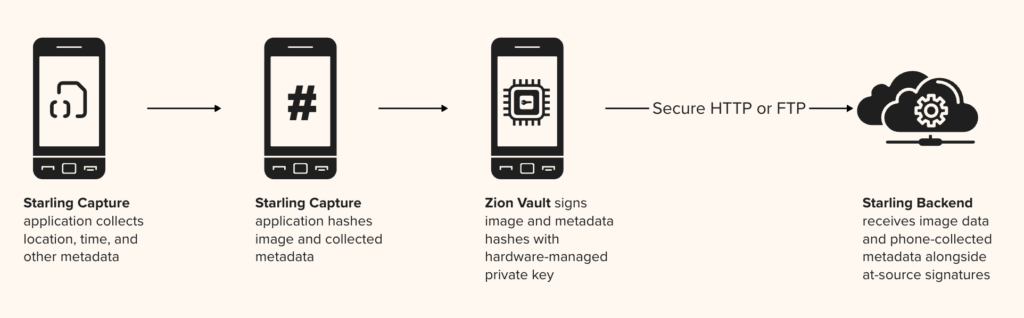

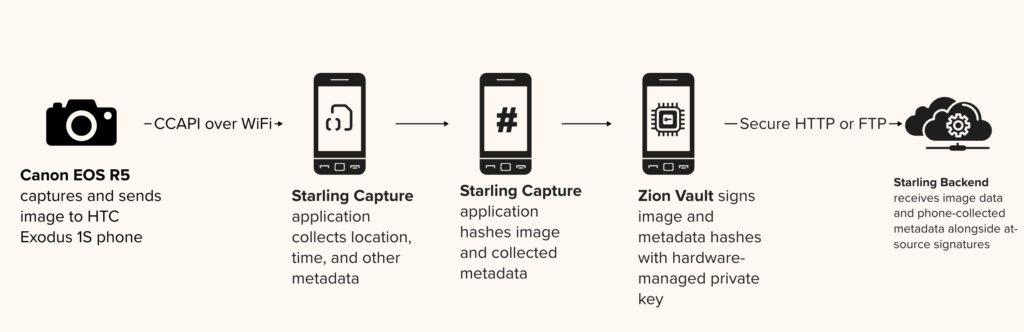

In a standard photo assignment, DSLR-captured photos would be downloaded to a laptop and sent directly to photo editors. However, this does not allow for an opportunity to automatically capture GPS location (as most cameras do not have a GPS module) and other at-source metadata. On the authenticity front, neither the camera nor the delivery endpoints use cryptographic techniques to secure the image and metadata. Therefore, we modified the standard workflow to add an HTC Exodus 1S mobile phone, which was loaded with an application called Starling Capture and pre-authorized to send image files – with their metadata – to a Starling-operated server.

BCN Photojournalist Harika Maddala and Reporter Victoria Franco used a Canon EOS R5 camera. The Starling Capture mobile application, developed by Numbers Protocol, uses Canon’s Camera Control API (CCAPI) to set up a Wi-Fi hotspot for the phone to connect with the camera. With each snap of a photo, the image file is automatically transmitted to the application, where GPS, time, and other metadata are gathered and associated with the photograph. An example of gathered metadata is available on GitHub.

Digital Signing on Device

Upon receiving an image, a cryptographic hash was immediately computed from the image file, serving as a secure and tamper-evident fingerprint to identify the file. Then signing keys pre-configured by Starling on that device were used to sign both the file hash and the metadata, sealing the data “at source” before any data was transmitted to a server.
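
A minimal Python sketch of this hash-then-sign step is shown below. It is an illustration under stated assumptions rather than the Starling Capture implementation: the record layout and function names are hypothetical, and HMAC with a shared secret stands in for the device's signing key, since the Python standard library has no asymmetric signature primitive.

```python
import hashlib
import hmac
import json


def fingerprint(image_bytes):
    """SHA-256 digest of the raw file, used as its tamper-evident identifier."""
    return hashlib.sha256(image_bytes).hexdigest()


def sign_capture(image_bytes, metadata, device_key):
    """Seal the file hash and the metadata together under the device key."""
    record = {"sha256": fingerprint(image_bytes), "metadata": metadata}
    message = json.dumps(record, sort_keys=True, separators=(",", ":")).encode()
    # HMAC is a stand-in here; a real device would use an asymmetric signature.
    record["signature"] = hmac.new(device_key, message, hashlib.sha256).hexdigest()
    return record


def verify_capture(image_bytes, record, device_key):
    """Check that neither the pixels nor the metadata changed after sealing."""
    expected = sign_capture(image_bytes, record["metadata"], device_key)
    return hmac.compare_digest(expected["signature"], record["signature"])
```

Because the signature covers the file hash and the metadata as one message, changing either the image bytes or a single metadata field makes verification fail.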

This digital signing step secures all of the above and protects against unnoticed tampering, even during transmission. Unfortunately, it was not a capability that professional cameras had at the time of this project. In parallel, however, Starling has been working with camera vendors to add such capabilities, and vendors (including Leica, Sony, and Canon) have recently started releasing digital signing features on a handful of models.

Uploading for Registration

After each photography session, the BCN team used a photo gallery within Starling Capture to inspect previews and select images to upload. Uploading to Starling servers required an access token pre-configured on the phone. This gave Starling assurance that the upload request originated from a known source, and specifically that it came from the device provisioned to the BCN team. It also allowed authorship information to be associated with each upload.

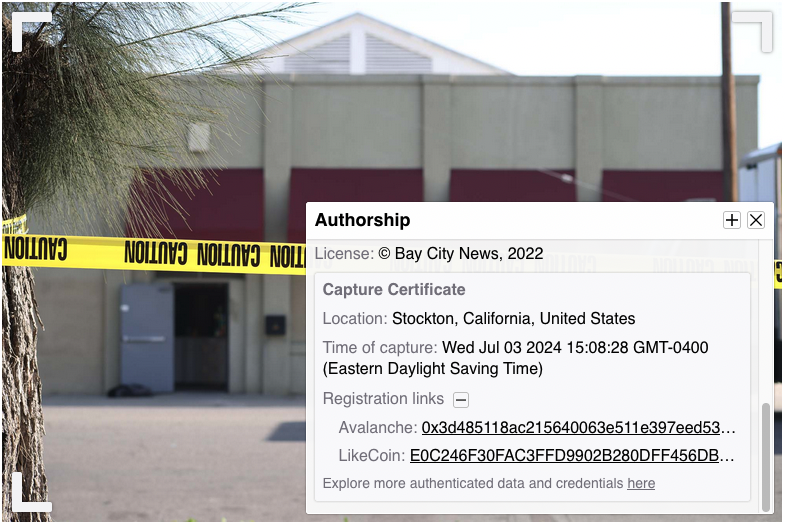

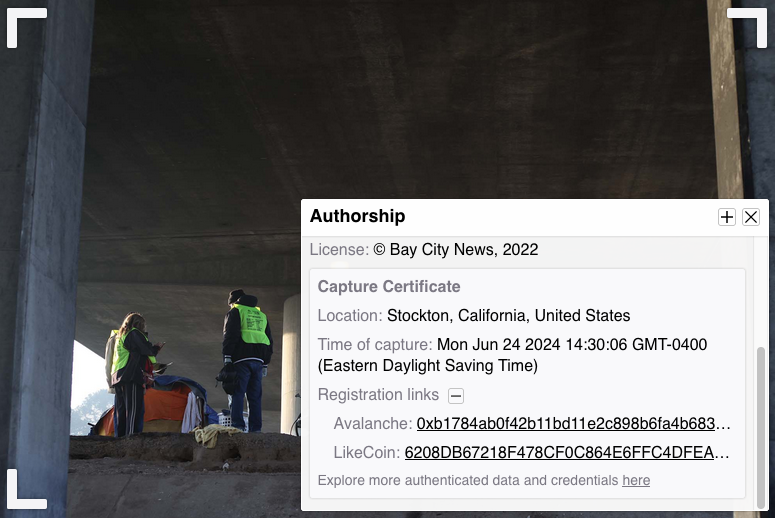

Once the server received an image, it was registered onto blockchains alongside contextual metadata and at-source signature information. These blockchains include Avalanche (a fast, decentralized, open-source blockchain that offers smart contract functionality) and LikeCoin (a chain specialized for decentralized publishing). These public ledgers use cryptography to ensure registration records are tamper evident and immutable. In the Verify section, we will explore how the registration information is presented to readers, so they can independently audit each photo against its registration records.
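
The audit a reader or expert performs against a registration record boils down to recomputing the file's hash and comparing it with what was registered. The Python sketch below uses an in-memory list as a stand-in for a public ledger; `ToyLedger` and `audit` are hypothetical names, and no real blockchain client or API is involved.

```python
import hashlib


class ToyLedger:
    """In-memory stand-in for a public ledger; not a real blockchain client."""

    def __init__(self):
        self._entries = []

    def register(self, file_hash, registered_at):
        """Append a record and return its position as a mock transaction ID."""
        self._entries.append({"sha256": file_hash, "registered_at": registered_at})
        return len(self._entries) - 1

    def lookup(self, tx_id):
        """Return a copy of the record stored under a mock transaction ID."""
        return dict(self._entries[tx_id])


def audit(file_bytes, ledger, tx_id):
    """Return the registration time if the file matches the record, else None."""
    entry = ledger.lookup(tx_id)
    if hashlib.sha256(file_bytes).hexdigest() == entry["sha256"]:
        return entry["registered_at"]
    return None
```

A match shows that this exact file existed no later than the registration time; a mismatch means the file in hand differs from the one that was registered.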

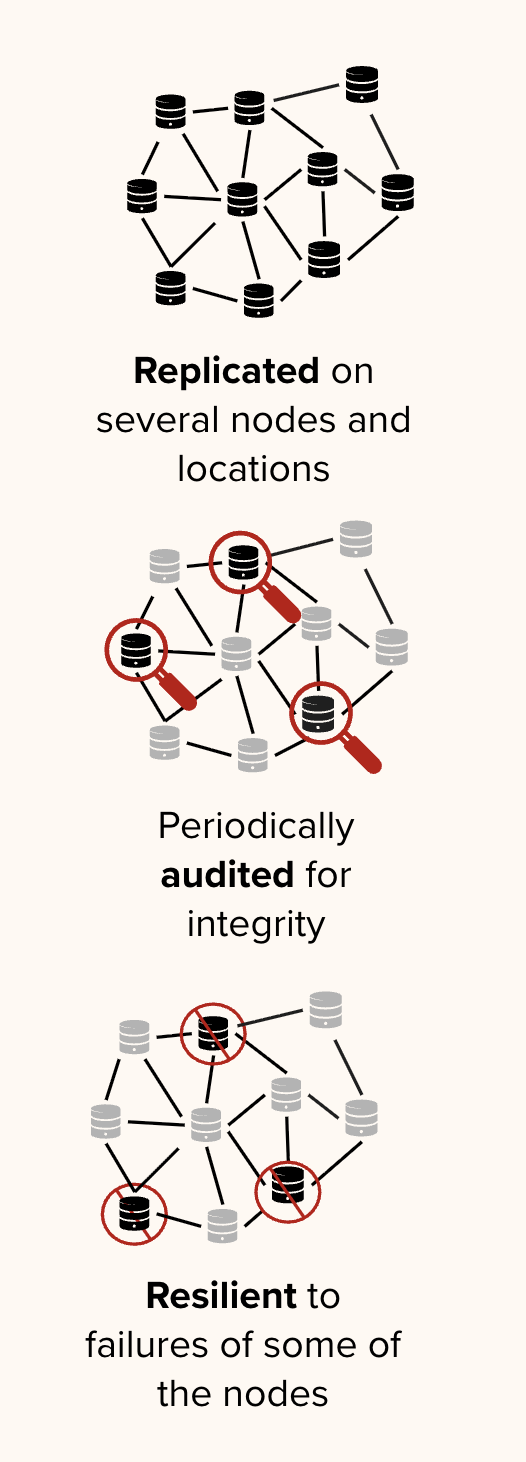

Store

Starling generally advocates storing data in multiple places to protect against various threats of data loss, and oftentimes employs decentralized storage systems as a preservation strategy. This aligns with the industry-standard 3-2-1 backup strategy, which calls for three copies of the data on at least two different types of storage media, with one copy kept offsite.
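
As a small illustration, the 3-2-1 rule can be expressed as a check over a list of copies. The Python sketch below counts distinct storage media, which is how the rule is usually stated; the `Copy` type and its fields are hypothetical and do not describe BCN's actual systems.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Copy:
    location: str   # e.g. "newsroom server"
    medium: str     # e.g. "disk", "cloud object store"
    offsite: bool


def satisfies_3_2_1(copies):
    """True if there are >= 3 copies, >= 2 distinct media, and >= 1 offsite copy."""
    media = {c.medium for c in copies}
    return len(copies) >= 3 and len(media) >= 2 and any(c.offsite for c in copies)
```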

For this project, due to copyright concerns, the storage of this media and data remained on systems managed by BCN and Newspack, but the existing workflow at BCN already has file replication across a BCN server, a WordPress server, and SmugMug.

After Starling’s server received each photo for registration, we aimed to return authenticated versions of the photos to BCN as soon as possible to be minimally disruptive to their workflow. This was done via synchronization to a Dropbox folder with shared access. In this process, the server took the image pixels and metadata, then generated a C2PA manifest (signed by Starling), then bundled it with the image file according to the Content Authenticity Initiative’s specification, and finally returned it to BCN’s photo editors.

Verify

Upon receiving C2PA-authenticated images from the Starling server, the photo editorial process began.

Unlike traditional image files, these already contained metadata such as time, location, and author information that were automatically associated by the tethered phones and validated on the Starling server. From this point onward, Starling worked with BCN through the editorial process to track incremental edits and changes, so the published photo would retain an audit trail to the original photo. This can be referred to as glass-to-glass authentication, meaning from the moment light hits the lens of a camera to the moment it comes out of the audience’s device screen.

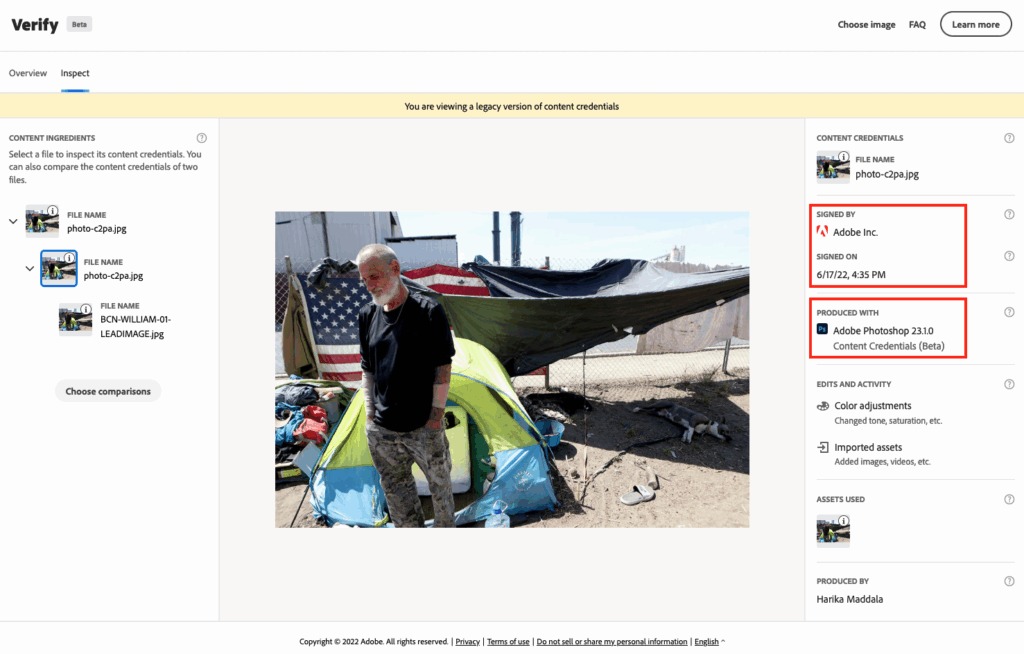

Image Edits with Adobe Photoshop Content Credentials

In order to resize photos, or to make other pixel or metadata changes, the BCN team used a beta feature in Adobe Photoshop called Content Credentials, which is an implementation of the C2PA specification. When the edited image is exported, a second C2PA manifest containing the tracked changes is signed by Adobe Inc., attesting to the fact that Photoshop was used to perform the changes listed under Edits and Activity in the Content Credentials Verifier.

Rich Contextual Metadata with Four Corners and C2PA

In addition to securing provenance information and establishing an audit trail through the photo editing process, the team also set out to prototype a better way to display rich contextual metadata to readers. The hypothesis was that by bundling more context with each photograph, we could provide a more valuable experience and accurate conceptual picture for readers.

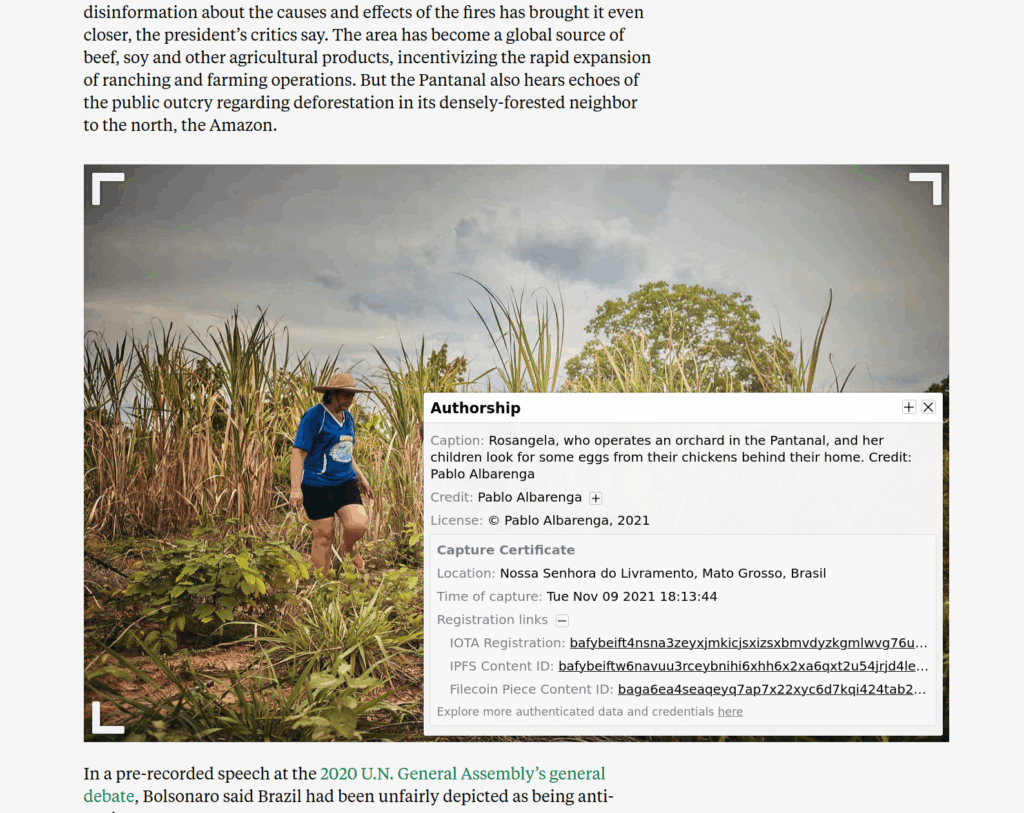

The team elected to use the Four Corners interface, which allows specific information to be toggled upon an interaction in each of a photograph’s corners. With the Four Corners team, we created a WordPress plugin which augments the standard Four Corners with additional features:

- The ability to read and display contextual metadata from C2PA manifests bundled with an image file

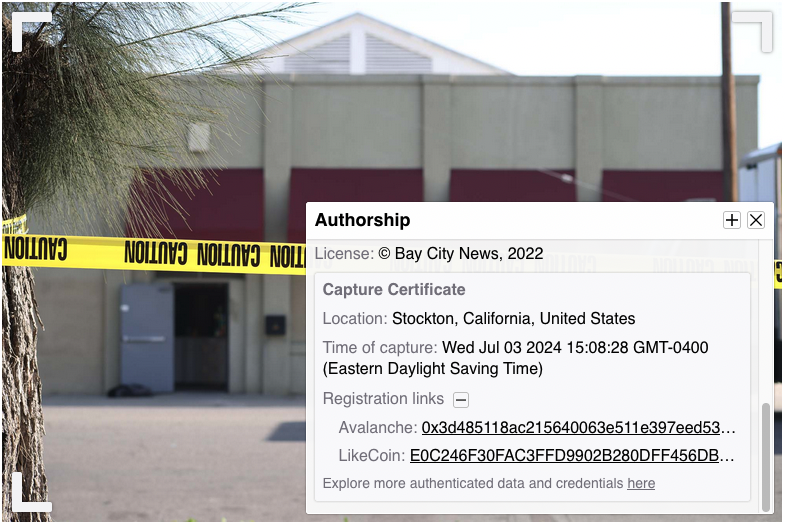

- A Capture Certificate displaying authenticity information at the bottom right corner

This added contextualization highlights the photographer’s authorship and the credibility of the image, and helps prevent misuse of photographs taken out of their original context. The four corner toggles are arranged as follows:

- The lower right toggle shows authorship information as well as the Capture Certificate containing information about the photo’s authenticity.

- The lower left toggle shows the photojournalist's backstory for shooting the image.

- The top left toggle shows other related photos.

- The top right toggle shows additional links related to the photo and story.

To introduce the new feature, the BCN team added a short editor’s note to accompany the stories. The Nuts and Bolts article summarized technical details about Four Corners and the technology used to create the metadata displayed with it.

As part of this work, Starling worked with the Four Corners team to develop a C2PA-compliant metadata schema for bundling rich contextual metadata in C2PA manifests. The schema covers all the metadata shown in each of the Four Corners toggles. This data is included in a C2PA manifest and parsed by the WordPress plugin, which presents the contextual and provenance information on each article.
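
To make the shape of such a schema concrete, the Python sketch below models the four corners as one structured object wrapped in a labelled assertion. The field names and the `org.example.fourcorners` label are invented for illustration and should not be read as the published schema; only the general label-plus-data shape is borrowed from how C2PA assertions are typically written.

```python
from dataclasses import dataclass, field, asdict


@dataclass
class FourCornersMetadata:
    """Hypothetical field names; the published schema may differ."""
    authorship: dict                                     # lower right
    backstory: str                                       # lower left
    related_photos: list = field(default_factory=list)   # top left
    links: list = field(default_factory=list)            # top right


def to_assertion(meta, label="org.example.fourcorners"):
    """Wrap the metadata as a labelled assertion, loosely modelled on C2PA."""
    return {"label": label, "data": asdict(meta)}


def corners_for_display(assertion):
    """Map each corner of the photo to the content a plugin would show."""
    data = assertion["data"]
    return {
        "top_left": data["related_photos"],
        "top_right": data["links"],
        "bottom_left": data["backstory"],
        "bottom_right": data["authorship"],
    }
```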

Capture Certificates & Blockchain Registrations

During the Capture step, at-source metadata was collected by the HTC Exodus 1S phone and sent to the Starling server. This data at capture time was registered to blockchains and bundled with the image for BCN photo editors to process with tracked changes. With the Four Corners interface, Starling added a Capture Certificate to surface this information to the reader. This could be compared to a “certificate of authenticity” and is a precursor to the subsequently introduced concept of Content Credentials.

Exploring Blockchain Registrations

Starling used immutable ledgers to register digital content in this project. This assists experts wanting to audit the provenance and authenticity of the content. The unique digital fingerprint and provenance information of each photo recorded on blockchains serve as a proof of existence, while the digital signature helps establish the identity of who took the picture. All of this is indexed on a publicly explorable blockchain to provide a public-facing record of what exactly was created, and when.

Readers can explore the blockchain registration information in these BCN stories by hovering over an image and clicking on the bottom right corner. Expanding the Registration Links in the Capture Certificate displays blockchain transaction IDs that can be looked up on public explorers for the Avalanche and LikeCoin blockchains.

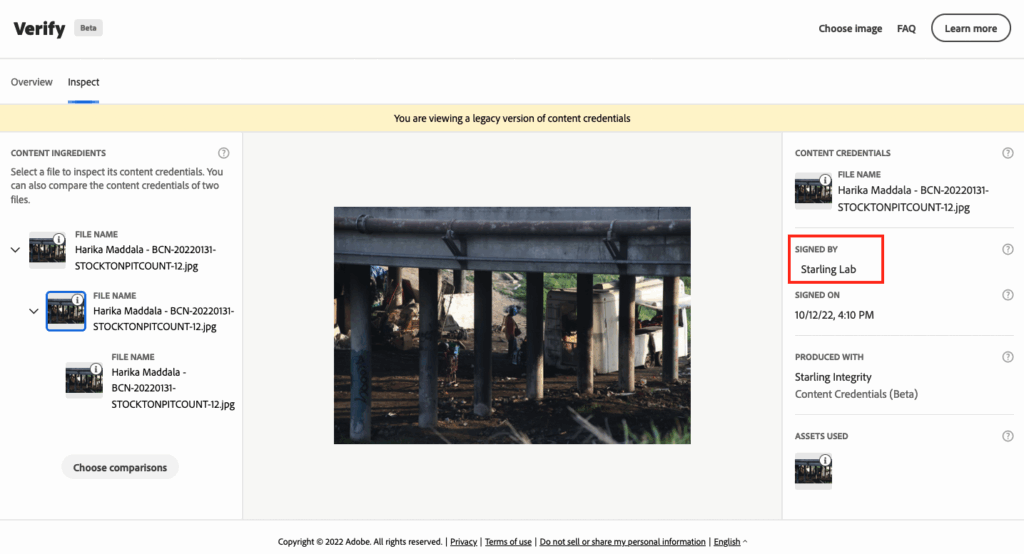

Verifying Content Credentials

Clicking a link in the bottom right text of the Capture Certificate opens up the authenticated image on a browser-based verification interface called Verify.

Verify is a tool created by the Content Authenticity Initiative (CAI) to help make content transparency a standard across the internet, and to make Content Credentials accessible to everyone. Any of the authenticated photos from the published articles can be downloaded and viewed in this tool. As this project was done before the first production version of this tool was released, you need to use the Verify Beta tool to view the images and metadata from this case study.

This interface displays the thumbnail and metadata contained in the C2PA manifests, protected by a cryptographic signature at each of the three steps, detailing the original capture by Harika, followed by photo edits (with Photoshop), and lastly the addition of Four Corners rich contextual metadata. Versions of edited photos can be compared side-by-side or in an overlay with a slider feature.
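
The way successive steps build an audit trail can be sketched as a chain in which every step records the digest of the one before it. This Python example is a deliberate simplification: real C2PA manifests carry signed claims and ingredient references, whereas this sketch omits signatures entirely, and the function names and record layout are hypothetical.

```python
import hashlib
import json


def digest(obj):
    """SHA-256 over a canonical JSON encoding of a step."""
    return hashlib.sha256(json.dumps(obj, sort_keys=True).encode()).hexdigest()


def add_step(chain, action, signer):
    """Append a provenance step that points at the digest of the previous one."""
    parent = digest(chain[-1]) if chain else None
    return chain + [{"action": action, "signer": signer, "parent": parent}]


def chain_is_intact(chain):
    """Every step must reference the digest of the step before it."""
    if chain and chain[0]["parent"] is not None:
        return False
    return all(
        step["parent"] == digest(prev) for prev, step in zip(chain, chain[1:])
    )
```

Altering an earlier step changes its digest, so every later step that points back to it stops matching. That is what lets a verifier notice a rewritten history.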

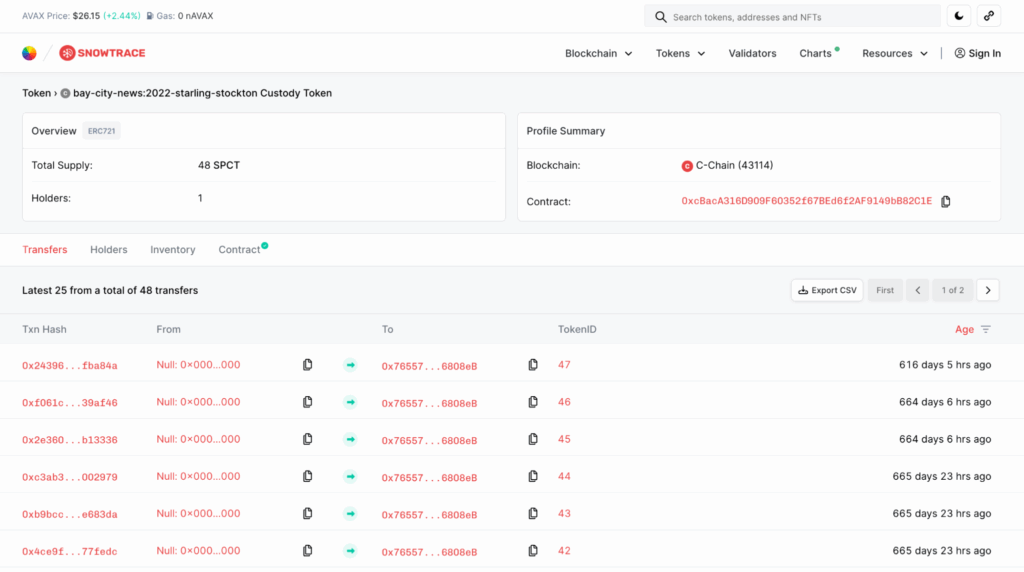

Custody Tokens

As an additional experiment, we minted a non-fungible token (NFT) for each of the 48 original images selected for authentication to publicly track custody of each image asset. The purpose of this experiment was to explore using the transferability of a digital representation of an asset to signal the custodian of the asset.

At the moment, producers of a digital asset, whether it be a photograph or an article, are often expected to be the long-term stewards of the content they produced. But this is not always what happens in practice. Just as notable works often become preserved by museums and estates, it is also possible for photojournalistic work to transfer from one custodian to another. The non-fungibility aspect of an NFT allows for authentic representation of a digital asset, and the token features allow for transferability based on a set of rules.

Note that transfer of the token does not imply moving of the digital asset from one storage system to another, nor any change with respect to the terms of use or distribution strategies. It simply announces to the public who is the primary custodian of the digital asset, empowered to make the decisions listed above.

Readers can explore the collection, where each SPCT token represents an archive containing an authenticated image and metadata.

At the moment, the archives of this project are under the custody of the project team. But if the stewards of the original assets were to change, the tokens can be transferred to another institution for continued stewardship. The transfer history allows the public tracking of the chain of custody, as important assets are not necessarily maintained by a single institution forever. This provides an indication to the public of who to reach out to for access to specific assets.
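
The custody idea can be modelled in a few lines: a token names the current custodian, only that custodian can hand it on, and the transfer history is kept for anyone to read. The Python class below is a toy model with hypothetical names, not the smart contract behind the SPCT tokens.

```python
from dataclasses import dataclass, field


@dataclass
class CustodyToken:
    """Toy model of a transferable custody record; not a smart contract."""
    asset_hash: str
    custodian: str
    history: list = field(default_factory=list)

    def __post_init__(self):
        # The first custodian is the start of the public chain of custody.
        self.history.append(self.custodian)

    def transfer(self, sender, recipient):
        """Only the current custodian may hand the token on."""
        if sender != self.custodian:
            raise PermissionError("only the current custodian can transfer")
        self.custodian = recipient
        self.history.append(recipient)
```

Note that the token only records who is responsible for the asset; as in the project, transferring it moves no files and changes no usage terms.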

Learnings

The Capture Setup in the Field

Several difficulties were encountered using the newly developed camera and phone setup in the field. Having both a camera and phone increased the number of devices a photojournalist needed to carry and interact with. The Starling Capture application allowed only one camera to be connected at a time, limiting the photographer to the lens attached to the connected camera, as switching lenses would require re-establishing the Wi-Fi connection. In some assignments, BCN’s team carried two cameras with different lenses, each tethered to a different HTC Exodus 1S. That increased the number of devices carried by the photojournalists to four, and managing them became overwhelming.

The photo shooting experience also became more challenging. First, the photojournalist needed to ensure that an active Wi-Fi connection existed between the camera and phone. There were also limitations on continuous “burst” shooting, a common practice where tens of photos are captured in a matter of seconds. Not all images were received by the phone when shooting in burst mode: on average, 3 of every 5 photos were transmitted successfully, while the others were skipped. The skipped shots still existed on the camera SD card, but never made their way to the HTC phone.
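
One way such gaps could be detected after a shoot is to compare the filenames on the camera card with those the phone received. This was not part of the project workflow as described; the Python sketch below is only an illustration, and the function names are hypothetical.

```python
def find_skipped(card_files, received_files):
    """Return shots present on the camera card but never received by the phone."""
    received = set(received_files)
    return [name for name in card_files if name not in received]


def transmission_rate(card_files, received_files):
    """Fraction of shots on the card that reached the phone."""
    if not card_files:
        return 1.0
    skipped = len(find_skipped(card_files, received_files))
    return (len(card_files) - skipped) / len(card_files)
```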

Another issue was battery life. The WiFi connection drained the battery on both devices. While camera batteries can easily be swapped out, the HTC phone took a long time to recharge.

Several of these issues could be addressed by integrating similar technologies directly onto cameras, or designing products and systems to address them prior to manufacturing.

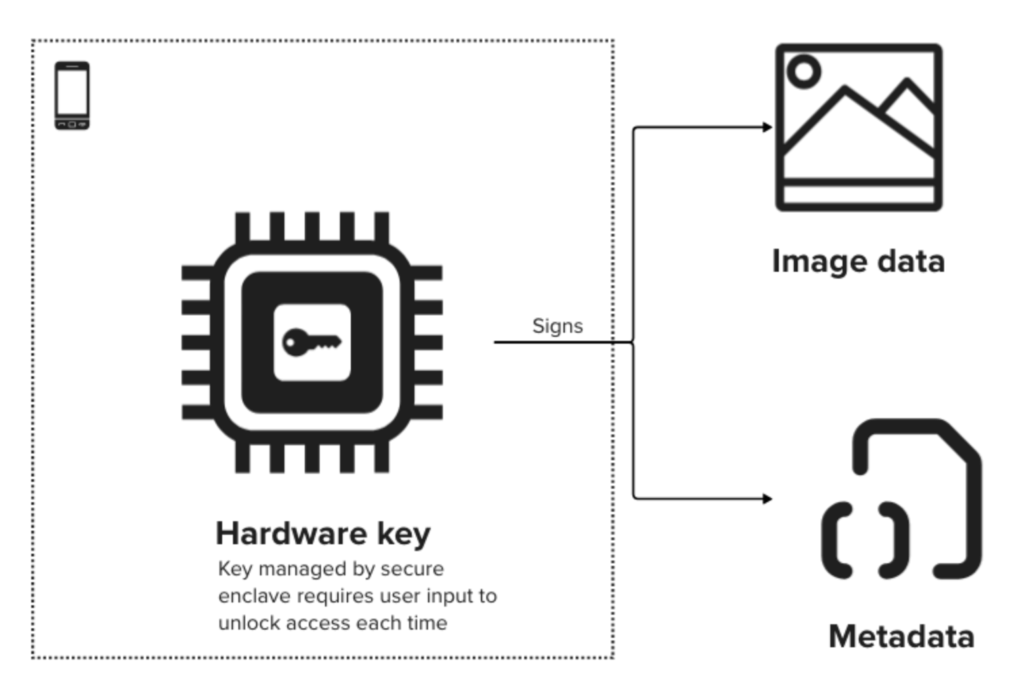

Digital Signing with Secure Enclaves

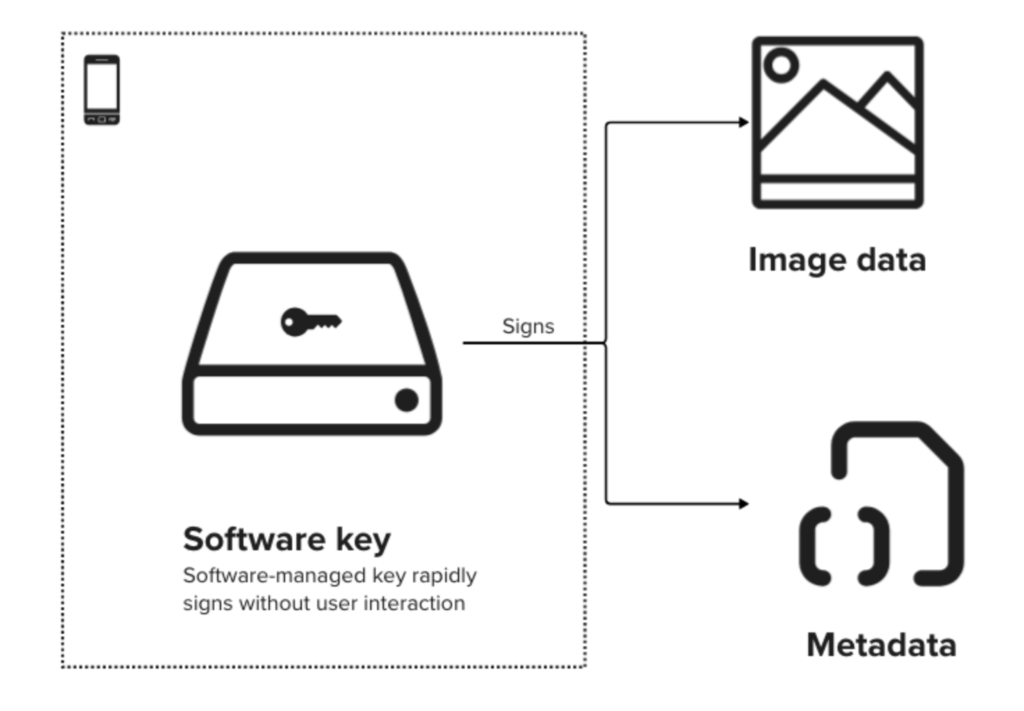

In this project, alongside standard software at-source signatures, the Starling Capture application prototyped a combination signature scheme that leverages the Zion hardware-secured signing key available on the HTC Exodus 1S. This key was generated by the phone hardware while configuring the Starling Capture application, and it served as a unique identifier tied to the secure enclave on that phone. A hardware-generated key of this kind is the strongest form of signing key management available on a field device: the expected signer is known to the servers ahead of time, and signed data received can be verified as originating from that particular device, with very little risk of key compromise.

The straightforward approach would have been to use that hardware key to sign every image and metadata bundle.

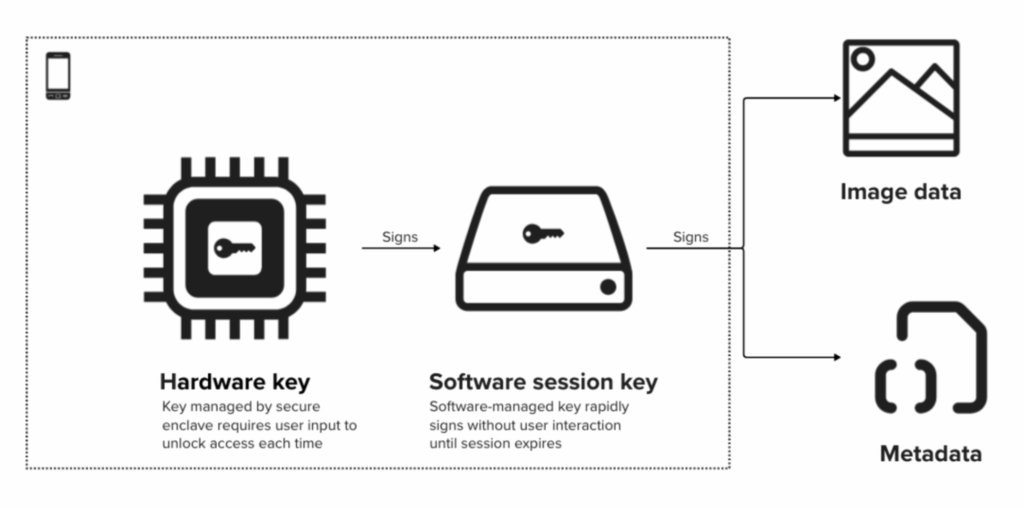

This is impractical, however, as Zion requires the user to type in a password every time the key is used to sign data. A photographer rapidly shoots many photos, sometimes triggering tens of signing events every few seconds. As a result, a temporary session key, not secured in specialized hardware, is used as the signing key.

This presents an opportunity for the design of future phones and cameras with a secure enclave. When designing devices with hardware-based secure enclaves, it is important to consider use cases where requiring user authentication for every signature may be impractical.

For this case study, we worked around these issues by generating a software session key that signs assets quickly for the duration of a single user session. The session key is itself signed by the hardware key when it is generated, giving it a high standard of trust along with improved speed and ease of use for a limited period of time.

For future device design, we recommend natively supporting authenticated sessions that have expiry triggers, thus allowing multiple signatures to occur without separate user authentication. Otherwise these features will be implemented in application software, rendering the overall solution less secure and verification more complex.

To verify the hardware root of trust, both signatures in the two-signature chain must be verified. In other words, one must verify the image and metadata signature made by the software session key, then verify the signature of the software session key made by the hardware key. Unfortunately, in this deployment, a bug in the particular version of Starling Capture meant the hardware key signature was not preserved with each photo. We therefore cannot verify each photo against the hardware-secured root of trust.
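
The two-link check described above can be sketched as follows. This is a minimal illustration rather than the Starling Capture implementation: HMAC-SHA256 from the Python standard library stands in for the asymmetric signatures a real device would produce (a real verifier would hold only public keys), and every name and key value below is hypothetical.

```python
import hashlib
import hmac

def sign(key: bytes, message: bytes) -> bytes:
    """Stand-in signature: HMAC-SHA256. A real system would use asymmetric keys."""
    return hmac.new(key, message, hashlib.sha256).digest()

def verify(key: bytes, message: bytes, signature: bytes) -> bool:
    """Check a stand-in signature in constant time."""
    return hmac.compare_digest(sign(key, message), signature)

def verify_chain(hardware_key, session_key, session_key_sig, payload, payload_sig) -> bool:
    """Both links must hold: hardware key -> session key -> payload."""
    if session_key_sig is None:
        # The link back to the hardware root of trust was not preserved.
        return False
    return (verify(hardware_key, session_key, session_key_sig)
            and verify(session_key, payload, payload_sig))

# Hypothetical keys and payload, for illustration only.
hardware_key = b"hypothetical-hardware-key"
session_key = b"hypothetical-session-key"
session_key_sig = sign(hardware_key, session_key)   # made once per session
payload = b"image-hash-and-metadata"
payload_sig = sign(session_key, payload)            # made for every photo
```

If `session_key_sig` is lost, as happened in this deployment, `verify_chain` fails even though the per-photo signature is still valid on its own.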

In parallel, another software key based on AndroidOpenSSL was used to produce signatures that gave us a redundant at-source signature for the same data, and these signatures were intact across all versions of the app. This static software key was not session based, and was used to sign the data directly.

Since this key was not hardware-managed in a secure enclave, it would be more vulnerable to key compromise on a field device. However, in our controlled deployments with trusted fellows and Starling-provisioned hardware, we have high confidence that the software key is untampered, and we can rely on the digital signatures produced.

This experiment highlights the importance of automated verification of all signatures at every opportunity, especially as data crosses system trust boundaries. For example, when the backend server receives a signed message, it should first discover which signatures are contained in the message, and then proceed to verify all of them. This ensures that all information required for verification has been provided.
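
A server-side check of this kind might look like the sketch below. The message layout (a `payload` plus a `signatures` mapping), the signer names, and the helper functions are assumptions made for illustration, and HMAC-SHA256 again stands in for real asymmetric signatures so the example runs with the standard library alone.

```python
import hashlib
import hmac

def verify_all(message: dict, known_keys: dict, required: set) -> dict:
    """Report the status of every signature that is present or expected."""
    payload = message["payload"]
    found = message.get("signatures", {})
    report = {}
    for signer in required | set(found):
        signature = found.get(signer)
        key = known_keys.get(signer)
        if signature is None:
            report[signer] = "missing"
        elif key is None:
            report[signer] = "unknown signer"
        else:
            expected = hmac.new(key, payload, hashlib.sha256).digest()
            ok = hmac.compare_digest(expected, signature)
            report[signer] = "valid" if ok else "invalid"
    return report

def accept(report: dict) -> bool:
    """Accept a message only if every expected or supplied signature is valid."""
    return all(status == "valid" for status in report.values())
```

Rejecting a message at ingestion when a required signature is missing surfaces the problem while the capture device can still resend it, rather than at audit time.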

Another place where errors may occur is during preservation of these signatures. An incident occurred in another implementation where metadata was signed and transmitted as JSON payloads. The backend system parsed the JSON data, then wrote the data back to disk. However, when the data was read back again for verification, the ordering of the JSON content had been altered. The two JSON documents were semantically equivalent but differed bit for bit, and therefore no longer verified against the original signature. The original ordering as transmitted was lost in this process, making retroactive verification impossible.
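
The failure mode is easy to reproduce. In the sketch below, the payload and field names are invented, and `sort_keys=True` simply simulates a backend that writes keys in a different order; the original incident did not necessarily involve Python.

```python
import hashlib
import json

# Bytes exactly as transmitted and signed (hypothetical example payload).
original = b'{"timestamp": "2022-04-01T12:00:00Z", "device": "phone-1"}'
signed_digest = hashlib.sha256(original).hexdigest()

# The backend parses the payload and writes it back with a different key order.
parsed = json.loads(original)
rewritten = json.dumps(parsed, sort_keys=True).encode("utf-8")
rewritten_digest = hashlib.sha256(rewritten).hexdigest()

same_meaning = json.loads(rewritten) == parsed     # semantically equivalent
same_bytes = rewritten == original                 # but not byte-identical
digest_matches = rewritten_digest == signed_digest # so the signature no longer applies
```

Because a signature covers the hash of the exact bytes, the rewritten file cannot be verified unless the original byte sequence was kept somewhere.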

The method for preventing this type of error has evolved. Early implementations applied a round of binary-to-text encoding (e.g., Base64) to the original data before signing and storage. Later implementations stored canonical representations, such as JCS or CBOR with deterministic ordering of data. Similar challenges exist when data is “chunked” for hashing under certain implementations, and methods that standardize this process, such as BLAKE3, are adopted to provide determinism at verification.
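
Both approaches can be sketched with the Python standard library. The function names are hypothetical, and `canonical_bytes` only approximates the idea behind JCS (RFC 8785) with sorted keys and no insignificant whitespace; it is not a compliant implementation, since JCS also specifies number and string serialization rules.

```python
import base64
import json

def wrap_original(raw: bytes) -> str:
    """Approach 1: keep the exact signed bytes by storing them as Base64 text."""
    return base64.b64encode(raw).decode("ascii")

def unwrap_original(stored: str) -> bytes:
    """Recover the exact bytes that were signed."""
    return base64.b64decode(stored)

def canonical_bytes(obj) -> bytes:
    """Approach 2: serialize deterministically, so key order no longer matters.

    Only an approximation of JCS: sorted keys, compact separators, UTF-8.
    """
    text = json.dumps(obj, sort_keys=True, separators=(",", ":"), ensure_ascii=False)
    return text.encode("utf-8")
```

With the first approach the signer and verifier never reinterpret the payload. With the second, both sides must agree on the same canonical form before hashing.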

Editing with Adobe Content Credentials

The C2PA specification, along with its tooling, was in early beta during this project. Updates to the Content Credentials beta feature in Photoshop v23.1.0 that were not backward-compatible were released after some of the photos were captured and before the editorial team had an opportunity to edit them, leading to incompatibility in the C2PA tracking features during the edit phase.

Additionally, tracking of edits in Photoshop was limited. According to BCN Photo Editor Ray Saint Germain, “We wanted to see more from Adobe’s Content Credentials tool: to record detailed changes to photos including details of what was done with color adjustments – such as curves, hue/saturation, levels, etc. – and list of filters used.”

In other words, the version of Photoshop Content Credentials used at the time could be improved with more robust recording of editing changes showing the values of tonal changes, saturation, and any composition adds or removals.

Adding captions to photos with tracked changes proved to be challenging. Software like Photo Mechanic and Adobe Bridge could not be used because they didn’t have C2PA features to track changes, so captions had to be entered using Photoshop, which did not offer a batch captioning feature and instead required toggling through multiple fields in the program’s interface. We also discovered that when exporting photos (rather than saving them), which was required for using the Content Credentials feature, Photoshop did not include captions with the exported images.

Due to these challenges, the team decided to sign photo edits with our own signing certificate for some of the files rather than to use Photoshop’s built-in Content Credentials. Therefore, for some photos in the project, readers will find edits that are signed by Starling Lab rather than Adobe.

This project used a very early pre-1.0 version of the C2PA Specification. Subsequently, versions 1.0 and 2.0 have been released. Content Credentials as a feature in Adobe Photoshop has also been improved, with more granular change tracking and the ability to add information about the producer (Adobe account owner), among other updates.

Integrating Four Corners with C2PA

This was the first implementation of the Four Corners project with C2PA support. The process of formatting and adding the data that would appear in each of the Four Corners displays was mostly done manually. These displays allowed users to dig deeper to examine metadata and related assets.

Though this manual entry could easily be done for a small set of images, any newsroom that wanted to do this on a larger scale would need a system for ingesting and formatting data so that it displays correctly in the Four Corners WordPress plugin.

Authentication Ecosystem & Business Model

In a world of deepfakes and generative AI, BCN Photo Editor Ray Saint Germain stresses the importance of authenticating all images that Bay City News makes available to its clients.

“We source photos from subjects, agencies and public sites for many of our stories and each photo is vetted for authentication and copyright. Our clients, knowing the photos made available to them have been vetted, is a value we are working on monetizing by subscription and a la carte purchases.”

– Ray Saint Germain, BCN Photo Editor

Saint Germain points out how signed contextual and integrity metadata “could help us monetize our photos if other news organizations, viewers and even commercial clients who care about the integrity of the images are willing to pay a premium for them, knowing that the content is authentic.”

This remains to be tested, however. Saint Germain also states, “as it stands, most of our newswire clients, SF Bay Area print, radio and TV stations, China Press, New York Times and others, say they want photos but few would voluntarily pay for them because they are not concerned about high-quality images. Instead, they rely on user generated content submissions, stock photos, their own archives, or one master source like the Associated Press. So to charge even more for the authenticated photos might be a high bar.”

Importantly, given the related costs of equipment, software developers, blockchain registrations, and staff time, BCN Foundation and other similar-sized newsrooms can't accomplish this without adequate financial and technical support, or an ecosystem of affordable tooling. Cameras, content management, sharing, and editing tools, along with interfaces that allow inspection and exploration by verifiers, are all part of an ecosystem that continues to be built.

Archive

- Lodi shelter participates in local homeless count

- Stockton mourns killing of fire captain as he responded to emergency call

- A look at the day-to-day life of an unsheltered Stockton man and how he copes with regular displacement

- Stockton’s new Respite Center helps addicts get clean and sober on their path to recovery

- A matter of trust: Inside Stockton’s Police Department and the residents they serve

- Article on the process: https://localnewsmatters.org/nuts-and-bolts-the-bay-city-news-starling-lab-authentication-project/

Setting the Record Straight in Brazil’s Burning Wetlands

Documenting the devastation of Brazil's Pantanal wetlands, this story showcases a collaboration between a writer, a photojournalist, and technologists fighting rampant disinformation during Brazil’s presidential election.

Starling Lab

Reading Time: 5 min

Prototypes

Tags

Brazil, Off-grid, Photojournalism

Contents

Background

Context

Framework

Technology

Learnings

Archive

Background

Under President Jair Bolsonaro, climate-driven droughts and burns to clear land spawned more wildfires than ever throughout Brazil, especially in a central wetland region called the Pantanal. More than a quarter of the Pantanal (over 9.6 million acres) burned in 2020 alone, devastating subsistence farmers, small ranchers, and fishers. For years, Bolsonaro downplayed the crisis as “disinformation,” even in speeches to the United Nations General Assembly.

The October 2022 Brazilian presidential election captured the world’s attention with concerns about misinformation as Bolsonaro sought a second term. The incumbent had continued to spread his own disinformation about the causes and effects of the fires that threatened his country’s rare habitats.

Finding a way to prove to the public what was really happening in this vulnerable ecosystem was a high priority for local journalists. On September 30, 2022, Inside Climate News (ICN) published a story headlined “In Brazil, the World’s Largest Tropical Wetland Has Been Overwhelmed With Unprecedented Fires and Clouds of Propaganda.” In addition to the environmental challenges the country had been facing at the time, the story highlighted how Brazil has also been confronting two destructive forces challenging journalism: the contradiction of fact-based information and the denial of climate change.

Bolsonaro lost his bid for reelection the following month to his predecessor, Luiz Inácio Lula da Silva. While Bolsonaro was president, the Amazon had seen the highest deforestation rates in 15 years. In contrast, when “Lula” da Silva was in office from 2003 to 2010, the same region saw a 48% decrease in deforestation rates. The outcome of the 2022 presidential election was celebrated by those hoping to protect sensitive environmental habitats and seen as a victory over denialism.

Whether about climate change or any other high-stakes issue, it is of utmost importance that electorates (and all audiences) can access reliable evidence and make their own informed decisions. We set out to demonstrate how verifiable digital media can serve that function when it includes a provenance trail and cryptographic integrity.

Context

In this project, we deployed new technologies to confront visual disinformation and help combat denial of the climate realities that were affecting Brazilians across the Pantanal.

Independent photojournalist Pablo Albarenga is a documentary photographer and visual storyteller who has documented land conflicts in Latin America involving indigenous people. He is a National Geographic Explorer, a grantee of the Pulitzer Center, and was selected in 2020 as the photographer of the year by the Sony World Photography Awards. In the spring of 2022, Albarenga traveled to Brazil’s largest tropical wetland area, the Pantanal, to document the environmental devastation of the area for a project published with Inside Climate News. The story was written by Jill Langlois, an independent journalist based in São Paulo, Brazil. Her work, which focuses on human rights, has appeared in National Geographic, The New York Times and The Guardian.

Founded in 2007, Inside Climate News is a Pulitzer Prize-winning, nonprofit, nonpartisan news organization that reports on and provides analysis of climate change, energy and the environment. Senior Editor and award-winning photojournalist Michael Kordas oversaw the editorial process, editing Langlois’s story and Albarenga’s photographs. Columnist and Web Producer Katelyn Weisbrod handled production, technical implementation and story layout.

At the Starling Lab for Data Integrity, an interdisciplinary team of journalists/editors and technologists worked on novel ways to bolster trust in this project’s key records. Albarenga worked with the lab’s engineering team, including Chief Technology Officer Benedict Lau and Head of Engineering Yurko Jaremko, to deploy authenticated camera capture tools to better secure metadata at source. This group oversaw development and implementation of photo capture and authentication solutions. They also worked with Four Corners developer Corey Tegeler, who assisted with the final photo presentation. The Lab’s editorial team, led by Journalism Program Director Ann Grimes and former Executive Editor Sophia Jones, also worked with Albarenga, Langlois, and the Inside Climate News editorial and tech teams to implement and integrate new technologies into the news organization’s workflow.

Framework

At the Starling Lab, our guiding objectives include establishing provenance of information and securing the integrity of digital content preserved for long periods. Integrity of digital content is assured through cryptography, allowing current and future verifiers to evaluate the authenticity and trustworthiness of each asset. To accomplish these objectives in this project, the Lab applied our three-step framework of Capture, Store, Verify.

The Challenge

Senior Editor Michael Kordas noted how climate change deniers may dispute the subject matter ICN covers and recognized the value of rigorous verification standards. "With photojournalism as part of my portfolio of responsibilities at ICN, I was excited to use the image verification technologies provided by Starling and the Four Corners template," he said.

Kordas pointed to ICN's commitment to maintaining the highest standards of vetting and verification for their stories. "ICN stories have occasionally been called into question or subjected to attempts to discredit them by climate change skeptics or those who have vested interest in preventing action on climate change," he explained. The integration of authenticity markers for an image is a crucial addition to their verification process in visual storytelling. "During the past few years, ICN has been expanding its photographic report, and being able to provide proof of an investigative photograph’s provenance and authenticity … can help bolster the credibility of the photography the site publishes and increase its impact," Kordas added.

So how might authenticated images disprove the rhetoric of Bolsonaro?

The Prototype

In this project, we sought to deploy new technologies to confront visual disinformation by better authenticating digital content. Photos presented in our coverage provide a clear window into the environmental crisis. They go further by displaying contextual metadata that supports the provenance of what is documented, creating an unalterable “time capsule” of the events captured in the images, displayed using a UX called Four Corners and verification standards established by the Coalition for Content Provenance and Authenticity (C2PA).

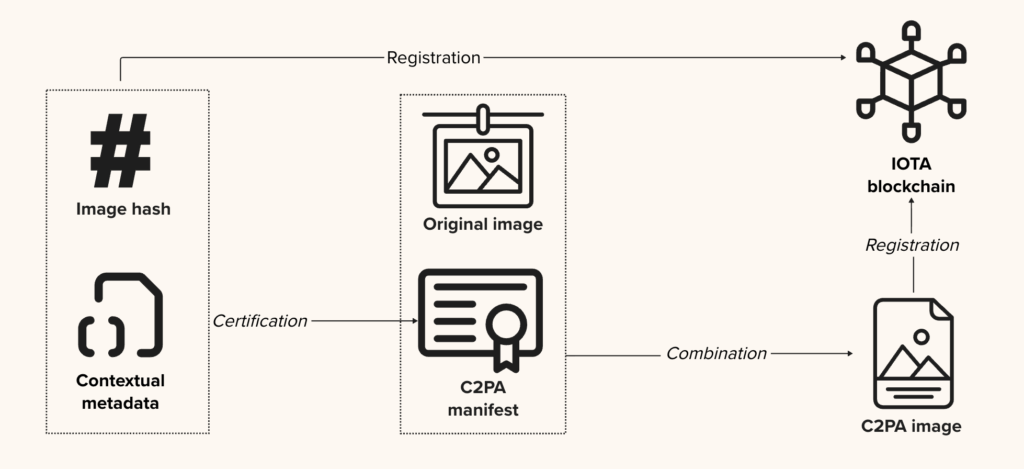

Capture: Starling strives to establish a root of trust in digital media as close to the point of capture as possible. For this purpose, we co-developed a mobile application—Starling Capture, in collaboration with Numbers, a Taiwan-based company that leveraged the hardware signing capability of the HTC Exodus 1S for their media authentication products. This smartphone, paired with a professional Canon camera via Canon’s Camera Control API (CCAPI), can authenticate digital content and metadata at the point of capture. Additionally, integrity information and provenance metadata from the images were registered on the IOTA blockchain.

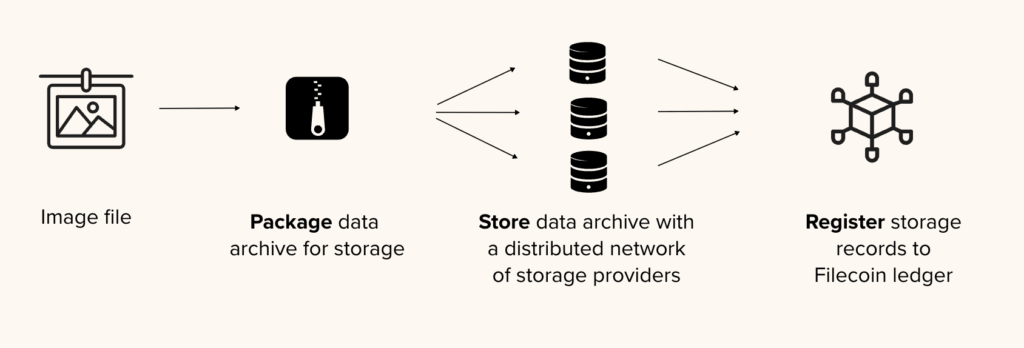

Store: Starling researches how advanced cryptography and decentralized networks can securely preserve and distribute content over time. While integrity records from the time of capture are registered on public ledgers, the images themselves were preserved on the Filecoin storage network and made available over IPFS for peer-to-peer distribution.

Verify: Starling uses immutable public ledgers to register digital content, as well as other techniques to include contextual information that is authenticated. This forms an auditable foundation that enables experts to verify the provenance and evaluate the trustworthiness of that content. With the Four Corners project, we created a unique display for contextual and authenticity information packaged in each image file using the Coalition for Content Provenance and Authenticity (C2PA) specification, and displayed the information in Inside Climate News articles using a custom WordPress plugin.

Technology

Capture

The Capture Setup

The authenticated capture process begins with provisioning of equipment capable of collecting contextual information and cryptographically securing data at the point of capture. In this project, photojournalist Pablo Albarenga used a Canon EOS R5 camera paired with an HTC Exodus 1S phone, which was preconfigured with the open-source Starling Capture mobile application and authorized to send data to the Starling servers.

Uniquely, this smartphone embeds a secure vault for cryptographic keys inside a hardware enclave—permitting digital signing of data using a hardware-protected key. This significantly enhances security by isolating the keys from the main operating system and protecting them from tampering and unauthorized access.

This mobile application is specifically built to function with Canon cameras supporting Canon’s newly-released Camera Control API. The API permits the phone to interact with the camera over WiFi, receiving preview data and captured photos in the application. Once captured, a photograph is wirelessly transferred to the phone automatically, and the application then collects rich contextual metadata surrounding the capture such as location and time. It then computes the image’s unique digital fingerprint—its cryptographic hash. The image hash and contextual metadata are then digitally signed.

This digital signature verifiably ties each photograph to a private key provisioned on the specific device it originated from. Only recently, and long after the completion of this project, have a very limited number of professional cameras become capable of digital signing directly on-camera as part of the capture process.
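
The hashing and bundling step can be sketched as below. This is an illustration under stated assumptions, not the Starling Capture code: SHA-256 is assumed as the hash function (the text above does not name one), and the function and field names are hypothetical. The returned bytes are what a signing key would then sign.

```python
import hashlib
import json

def build_capture_bundle(image_bytes: bytes, latitude: float, longitude: float,
                         captured_at: str) -> bytes:
    """Combine the image fingerprint with contextual metadata into bytes to sign."""
    bundle = {
        # The image's cryptographic hash acts as its unique digital fingerprint.
        "image_sha256": hashlib.sha256(image_bytes).hexdigest(),
        # Contextual metadata collected by the phone at capture time.
        "location": {"lat": latitude, "lon": longitude},
        "captured_at": captured_at,
    }
    # Serialize deterministically so the same inputs always give the same bytes.
    return json.dumps(bundle, sort_keys=True, separators=(",", ":")).encode("utf-8")
```

Because the hash is inside the signed bundle, changing a single pixel or a single metadata field changes the bytes and invalidates the signature.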

Certification and Registration

After the at-source authenticated data bundle was generated, it was then securely uploaded to a backend server managed by Numbers.

The backend server takes the image and metadata to produce a C2PA manifest, which is then combined with the image to create a digitally signed C2PA image. This represents the first version of the image as a C2PA-compliant file where all pixels and metadata are authenticated. These C2PA images are later used to produce the published images. In between, the photographer can use Photoshop to make and track edits to these images, allowing the modifications to be verifiable.

Provenance data of both the original and C2PA images are registered to the IOTA blockchain by means of recording the asset hash and some basic metadata—crucially, the image and most of its metadata are not displayed publicly on a ledger. This allows the photographer to later decide which images and metadata to provide to third parties for future auditing, and does not automatically expose sensitive information or outtakes they might want to keep private.
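
A registration record of this kind might be assembled as in the sketch below. No real IOTA API is called here. The choice of which fields count as “basic metadata” (`PUBLIC_FIELDS`), the use of SHA-256, and the function names are all assumptions made for illustration.

```python
import hashlib

# Hypothetical choice of "basic metadata" that is safe to publish.
PUBLIC_FIELDS = ("captured_at", "mime_type")

def build_registration_record(image_bytes: bytes, metadata: dict) -> dict:
    """Build a public record holding the asset hash, never the image itself."""
    record = {"asset_sha256": hashlib.sha256(image_bytes).hexdigest()}
    for field in PUBLIC_FIELDS:
        if field in metadata:
            record[field] = metadata[field]
    return record  # sensitive fields such as GPS coordinates are left out

def matches_registration(image_bytes: bytes, record: dict) -> bool:
    """An auditor given the image later can check it against the public record."""
    return hashlib.sha256(image_bytes).hexdigest() == record["asset_sha256"]
```

The photographer keeps control over disclosure: the ledger proves that a specific file existed in a specific state, while the file itself is shared only with chosen third parties.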

Store

Content online disappears at alarming rates, and for various reasons: website restructuring, domain name changes, expiration of domain name registrations, dynamic content updates, and server issues. This is the phenomenon known as link rot. In general, there is little guarantee that existing web or even archived content will continue to be stored in the future. To address this issue, Starling retrieved Albarenga’s edited photos from ICN, and stored them on decentralized systems for preservation. That way, no single centralized entity has full control of their long-term preservation, and the digital assets may become more resistant to loss or censorship.

There are several emerging content storage and distribution technologies, such as Filecoin and IPFS, which Starling uses for preservation and publishing of the images. These technologies rely on storing the data in many servers, owned and operated by different people or organizations, all preserving the exact same file to provide redundancy in storage. Filecoin in particular requires participating storage providers to put up a financial collateral, which they would lose to the network should they default or back out of the storage contract before the agreed term.
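
The redundancy described above is useful because any copy can be checked against the content's hash. The sketch below simplifies heavily: a plain SHA-256 hex digest stands in for an IPFS content identifier (real CIDs use multihash encoding and may address chunked data), storage providers are simulated with dictionaries, and all names are hypothetical.

```python
import hashlib

def content_address(data: bytes) -> str:
    """Simplified content address: the SHA-256 digest of the data."""
    return hashlib.sha256(data).hexdigest()

def retrieve_verified(address: str, providers: list):
    """Return the first copy whose hash matches the requested address."""
    for provider in providers:
        data = provider.get(address)
        if data is not None and content_address(data) == address:
            return data
    return None  # no provider returned an intact copy
```

Since the address is derived from the content, a provider cannot silently substitute or corrupt a file without the mismatch being detected by the reader.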

Verify

After the decentralized preservation methods were applied to the photos, additional contextual metadata (such as the photo caption, links to related photos, and background information) was added as a further C2PA manifest. The purpose was to give readers of the published article rich contextual metadata with which to verify and evaluate the authenticity and trustworthiness of the image.

Implementing C2PA with Four Corners

With digital media, in the absence of cryptographic attestations at source, there is little forensic evidence of the origin of an image. When retrieving an image from decentralized stores, even though it can be verified that the image has not been tampered with, there is little information available about its context and provenance. The concern is growing in the face of rapid developments with generative artificial intelligence. To address this, Starling partnered with the Four Corners Project to embed contextual and provenance information into the images, so they can be surfaced in an interactive display for readers to explore.

Records of authenticity, including the ledger where at-source integrity metadata are registered and the storage records where the original image is preserved, are encoded into the image file, attested to via C2PA, then parsed and rendered onto the published story using the Four Corners WordPress plugin, specially developed for this collaboration.

Verifying Photos with the Four Corners Design

The presentation allows specific information to be viewable in each of a photograph’s four corners, where it is available for interested readers to explore by simply clicking on them. This increased contextualization strengthens both the authorship of the photographer and the credibility of the image. In this project, the Four Corners user experience was designed as follows:

- The lower right corner shows the “Capture Certificate” (showing readers how the photo has been authenticated) and the photo’s caption.

- The lower left shows the photographer’s backstory about making the image.

- The top left shows related photos from the story.

- The top right shows related links.

To introduce the new feature, ICN displayed a short Editor’s Note below the author’s byline on the main story. Readers also were invited to learn more about the technology by clicking a link that took them to another page with more information on the technical details.

Learnings

Overall Reception and Distribution

According to the Inside Climate News team, the story was a hit, ranking as the top story on their site within the first four days after its publication, garnering almost 30,000 views. Weisbrod, the columnist and web producer, remarked: “Everything that gets more than 10,000 views is certainly a hit on our site.” The story’s popularity coincided with the Brazilian election run-off, giving both the Starling Lab and ICN a month-long opportunity to share the story widely, including through outlets like Covering Climate Now, Poynter, and others.

In 2023, an interview in the Columbia Journalism Review with Brazilian journalist Gabriela Sá Pessoa linked the story when discussing how the press handled Bolsonaro’s approach to environmental issues. “There was regular imagery, especially on television, of fires in the Amazon and elsewhere—starting in 2020, fires also burned out of control in the tropical wetlands—which I think played a big role in shaping public opinion,” she said. “In fact, I think this coverage partly led to [former and again-president Luiz Inácio Lula da Silva], during last year’s presidential campaign, calling protecting the environment and fighting climate change his top goals.”

Connectivity and Gear Issues

Albarenga experienced several connectivity issues with his camera’s on-board WiFi, which at times required him to reset both phone and camera—a considerable disruption to his workflow in the field. As he put it, "I had to remove the battery of the camera and reset both the camera and the phone, sometimes twice, to make [the tethering connect]."

Additionally, Albarenga reported mixed feelings towards the sound notifications intended to confirm photo authentication. Each picture transfer triggered multiple such notifications, and produced a continuous noise during bursts of consecutive shots. That being said, these audio confirmations were helpful in keeping him focused on what was around him, instead of needing to pull out and look at his phone. Albarenga suggested reducing the number of notifications per image, and using a distinct sound for errors to further assist hands-free field capture and feedback.

Upload and Workflow Efficiency

Uploading images proved to be another significant issue for the photographer, who had to deal with slow internet speeds in remote areas of the country. Albarenga’s first attempt to upload around 200 photographs overnight failed, leaving many not uploaded. Crucially, the mobile application lacked the ability to filter and sort, making the identification and selection of failed uploads a painful endeavor. He said, “after [some of the photos] didn’t upload overnight, I had to [scroll through and] visually check which ones had the checkmark on them.”

Albarenga also remarked that the C2PA-compatible editing workflow through Photoshop was not particularly fit to handle a large field photoshoot, which is a common need for visual journalists. “Captioning through the phone app or one-by-one in Photoshop would take me several days,” he said, noting that he would have preferred bulk editing through tools like Lightroom or Photo Mechanic.

Reflecting on the two issues above, Albarenga saw opportunities in the future: “I think many of these issues will be resolved when the authentication is made in the camera itself.” It would also require broader adoption of C2PA by industry-standard editing tools, in this particular case, ones that photographers commonly rely on for bulk editing.

Presentation Challenges

“The entire ICN masthead was pleased with the way the Four Corners technology finally worked on our site and recognized the value of being able to provide information that verifies the authenticity of the images,” said Senior Editor Kordas. “We’re eager to see how these technologies develop and are adopted by a wide range of news media.”

Enabling readers to dive deeper into the visual assets was especially important for this story. As Weisbrod put it: “With topics related to climate change, which can be scientifically, politically and socially complex, being able to provide more details about the story behind photos can be a valuable way to expand ICN readers’ knowledge.”

Part of the success of the launch was due to the amount of planning and anticipation of technical challenges. This required coordination between teams to address potential glitches. After the Four Corners presentation was readied with all the assets assembled (authorship, ethics statement, backstory, related images, related links, metadata, certification of authenticity), the technical team tackled frontend issues, particularly on iOS and involving the lazy-loading of CAI tools by Four Corners. Kordas expressed hope that eventually these technologies would reach a “plug and play” level, making implementation smoother and less disruptive to the production process.

Archive

Special Thanks to Inside Climate News

Founded in 2007, Inside Climate News is a Pulitzer Prize-winning, nonprofit, nonpartisan news organization that reports on and provides analysis of climate change, energy and the environment. Senior Editor and award-winning photojournalist Michael Kordas oversaw the editorial process, editing Jill Langlois’s story and Pablo Albarenga’s photographs. Columnist and Web Producer Katelyn Weisbrod handled production, technical implementation and story layout. Langlois is an independent journalist who has been based in São Paulo, Brazil since 2010. Her work, which focuses on human rights, has appeared in National Geographic, The New York Times and The Guardian. Pablo Albarenga is a documentary photographer and visual storyteller who has documented land conflicts in Latin America involving indigenous people. He is a National Geographic Explorer, a grantee of the Pulitzer Center, and was selected in 2020 as the photographer of the year by the Sony World Photography Awards.

Submission to the UN Special Rapporteur on Human Rights Defenders from our Brazil Coverage

Journalism

We are honored to see our work featured in Mary Lawlor’s UN Special Rapporteur report to the Human Rights Council: “Out of Sight: Human Rights Defenders in Rural, Remote and Isolated Contexts.”

UPDATE: Since this was published, the UN Human Rights Council adopted a resolution on protecting human rights defenders in the digital age. It addresses several important issues that our Lab has been focused on. Scroll to the end of this page for more details.

The report notes how Starling Lab supported efforts in Brazil’s Pantanal wetlands with tools that work even in low-connectivity environments, which are needed where the “digital divide hits many human rights defenders very hard.”

In a speech this month presenting her findings, Lawlor observed how some of those most at-risk include “journalists covering human rights issues at the local level.” Starling’s methodology was used by journalists to document environmental destruction – and confront climate change denialism. Our submission to Lawlor’s office focused on data authentication, decentralized storage, and cryptographic verification. Together, these ensure documentation remains tamper-evident and accessible, even when governments seek to dismiss the truth.

Her report includes several valuable recommendations to governments and other international actors, two of which resonate with our work:

- “Expand access to the Internet and secure communication tools, including by increasing funding for such digital security resources as encrypted communication applications and secure reporting channels.”

- “Support efforts to enable human rights defenders to store and safeguard their information securely, without fear of unlawful surveillance or data breaches, including putting in place robust legal safeguards to prevent the misuse of digital tools to suppress dissent or target defenders and ensure that their digital rights are protected.”

We appreciate being included among so many other human rights defenders, and remain grateful to Pablo Albarenga for his photojournalism in Brazil, as well as to Inside Climate News for publishing this important coverage.

And we hope this underscores the vital role of trusted digital evidence in defending human rights and environmental justice.

🔗 Read the Special Rapporteur’s remarks;

📄 Read the full UN report;

📕 Don’t miss our own case study;

📰 And the Inside Climate News article

Starling Lab was previously referenced in a report to the UNHRC in 2023 by the Rapporteur on the right to education, who acknowledged similar methods used by the lab as emerging “good practices” for documenting war crimes against civilian objects like schools.

Update (April 16, 2025): Our submission is also echoed in a new resolution from the UN Human Rights Council, which addresses the protection of human rights defenders in the digital age (full text: A/HRC/58/L.27/Rev.1).

The resolution emphasizes “universal connectivity” and “meaningful connectivity” as essential for defenders’ work, calls for “technical solutions for strong encryption and anonymity,” and advocates for secure information storage “without fear of unlawful surveillance.” It specifically recognizes the “growing number of digital attacks” on defenders and acknowledges the “protection gap” caused by lack of accountability.

These address key points from our submission on data integrity and authentication technologies for remote areas.

We’re particularly encouraged by the HRC’s recognition that “new and emerging digital technologies can hold great potential for strengthening democratic institutions and the resilience of civil society, empowering civic engagement and enabling the work of human rights defenders, public participation and the open and free exchange of ideas, and for the exercise of all human rights.” This aligns perfectly with our mission at Starling Lab to harness technology to establish trust in digital records.

Our earlier submission outlined innovative approaches to produce documentary evidence and combat digital denialism. This includes cryptographic methods that authenticate evidence from the point of capture, enabling defenders in remote areas to establish credibility despite connectivity challenges. The submission also referenced ongoing work on telecommunication technologies, including 5G, to further enhance these capabilities.

Battling Link Rot

Brandon Tauszik's journey with decentralized tools to protect his multimedia projects from the pervasive threat of disappearing online content.

Starling Lab | Reading Time: 5 min

Prototypes

Background

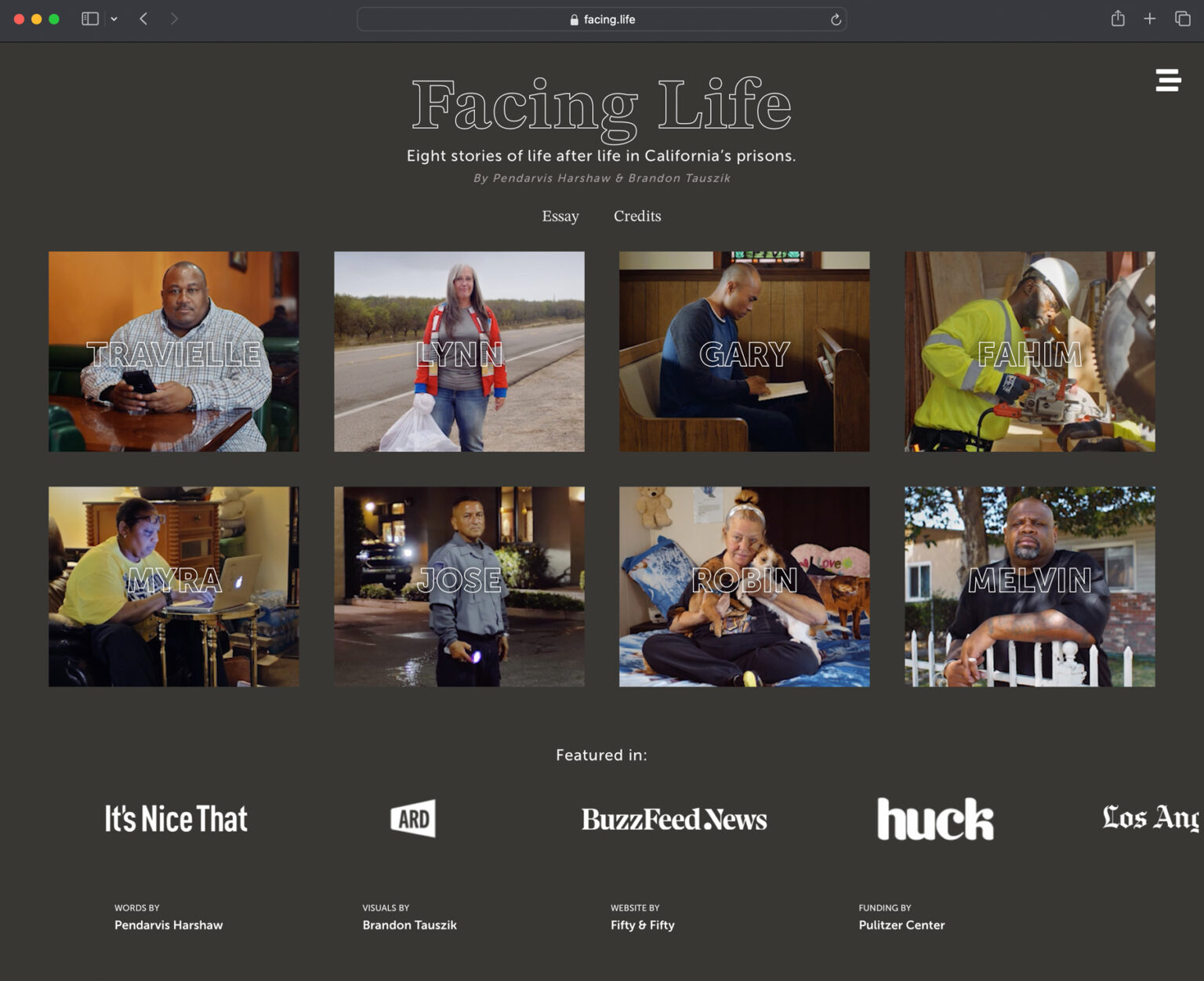

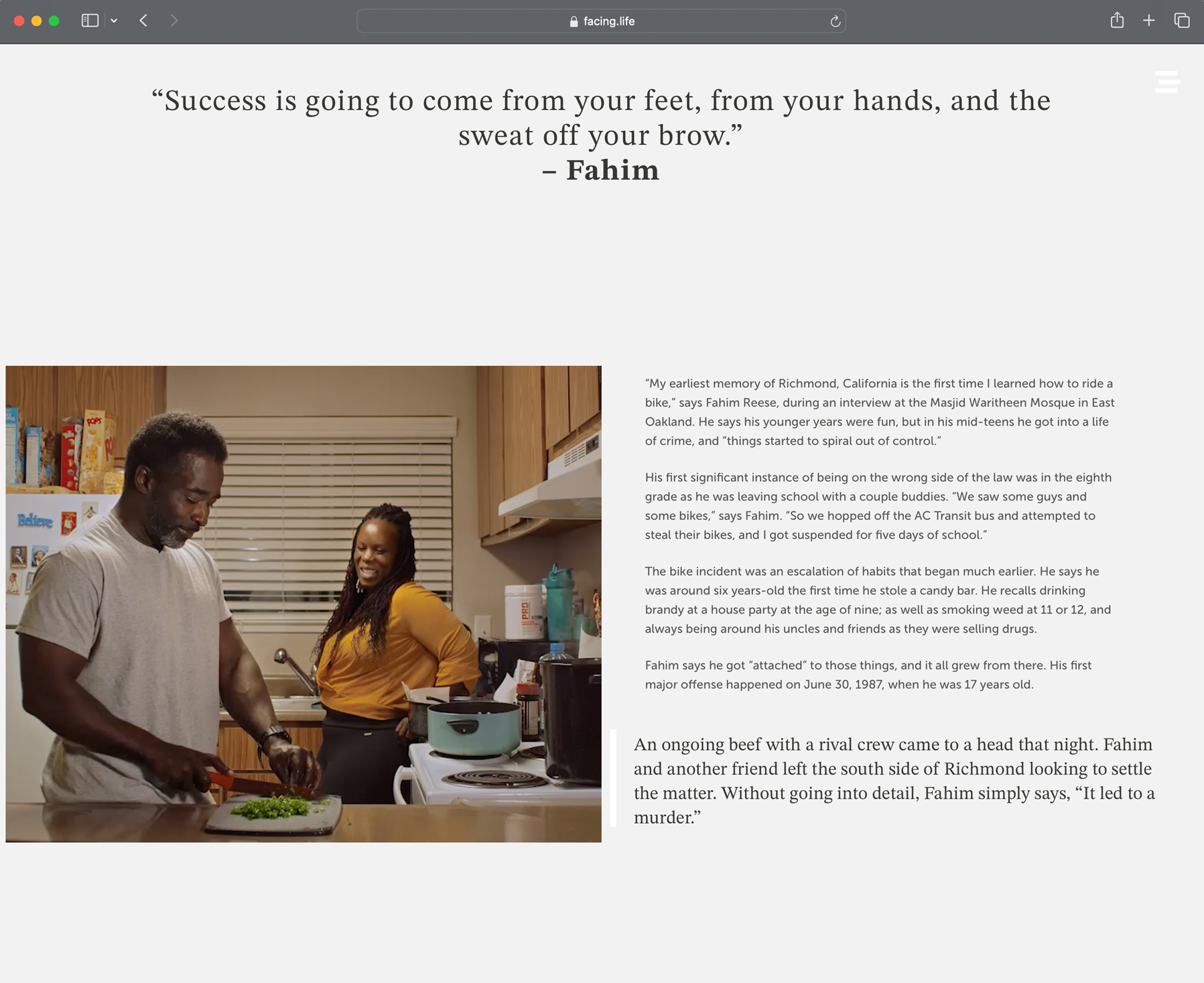

Brandon Tauszik is an independent photographer and filmmaker based in California. His multimedia projects are often built into custom website experiences. Incorporating the largely unexplored medium of cinemagraphs, as well as text, video, and 360 VR, these projects push the boundaries of digital storytelling. The projects in this fellowship include Syria Street (commissioned by the International Committee of the Red Cross) and Facing Life (supported by the Pulitzer Center).

As a freelancer whose work tends to be hosted by a variety of publishers, Tauszik’s pieces, like the work of many freelance journalists, are often vulnerable to disappearing from the internet. Even when published by a large organization, maintaining and hosting the project websites can become a challenge.

To combat link rot, some artists, journalists, and website owners regularly update and maintain their content, which is an expensive and time-consuming task. Web archiving services like the Wayback Machine and Conifer attempt to preserve websites for future reference, but they generally have important limitations. For example, they can struggle to capture access-restricted sites (e.g., pages behind paywalls), and they encounter IP rate limits on some domains. Once a page is archived, users can’t make minor changes or updates, the page cannot be hosted at its original URL, and it cannot be readily ported elsewhere. The archived versions on these services are static copies of the original pages, like a snapshot in time.

When creating a portable website that can move between platforms, it becomes important to have an archive with integrity: that is, one that can be verified, with certainty, to contain the original content, and that provides assurance that the data is not misrepresented. To solve this for content creators, we explored customizable solutions that also integrated information about the provenance and integrity of a portfolio.

How We Got Here

The World Wide Web has no single owner or maintainer, nor even a single entity that governs it. What is on the web, and what remains on it, depends on those who create, serve, and choose to maintain it. Unfortunately, much of what is published on the web eventually disappears, succumbing to a phenomenon known as “link rot.”

The pervasiveness of the problem of link rot has been illustrated by studies over the years:

- A 2023 study by Pew Research found 38% of web pages that existed in 2013 had disappeared within a decade. A quarter of all web pages that were online at some point between 2013 and 2023 are gone.

- An analysis of roughly 2 million links in New York Times articles published from 1996 to 2021 found that 25% of deep links no longer worked. Researchers noted the rot gets worse with age, observing that a staggering 72% of links from 1998 were dead.

Jonathan Zittrain, a Harvard professor who authored several of these studies, wrote in The Atlantic in 2021 about the implications for society: "They represent a comprehensive breakdown in the chain of custody for facts. Libraries exist, and they still have books in them, but they aren’t stewarding a huge percentage of the information that people are linking to, including within formal, legal documents. No one is."

Context

Fellowship

In this collaboration, Brandon Tauszik teamed up with the Starling Lab for Data Integrity to understand how decentralized tools, alternative publishing options, and the Starling Framework of “Capture, Store, Verify” could be used to develop a workflow to future-proof his portfolio from link rot. In collaboration with the Sutty development team, his sites were converted to a portable version, with integrity, to make them more resistant to link rot and easier for Tauszik to maintain.

Tauszik’s past projects live on media-rich websites. Each website is on a different platform and has a separate set of dependencies and assets to maintain. Currently, if Tauszik wants to preserve all the works in his portfolio, he must pay for and maintain a number of platforms. The goal was to keep his works public and available long term, ideally forever. As hosting costs increase along with the volume of projects in his portfolio, it is becoming unsustainable to maintain all his online work.

Furthermore, the older these projects become, the greater their historical significance and usefulness. Currently, if and when Tauszik is no longer around to maintain his online portfolios, all of his hosting and domain solutions will terminate once payment methods cease to be available (e.g., when a credit card expires). This is of great concern, as the passing of an artist should not cause the death of that artist’s work.

Syria Street Takedown

When one of his projects went offline, Tauszik became aware of the fragility of a portfolio that linked to content published across the web. Without notifying the communications department or Tauszik, the International Committee of the Red Cross IT department terminated Syria Street during a broad sweep of their mini-sites. Tauszik realized that ultimately, digitization is not preservation. Awarded a fellowship with Starling Lab in 2023, Tauszik sought to find the longest-term, lowest-cost, and safest way to host his work.

To succeed as independent journalists, practitioners like Tauszik need to be able to take control of their content, away from centralized and brittle platforms. While especially pertinent for freelancers, this applies to all forms of digitally published media, especially in politically unstable times.

Framework

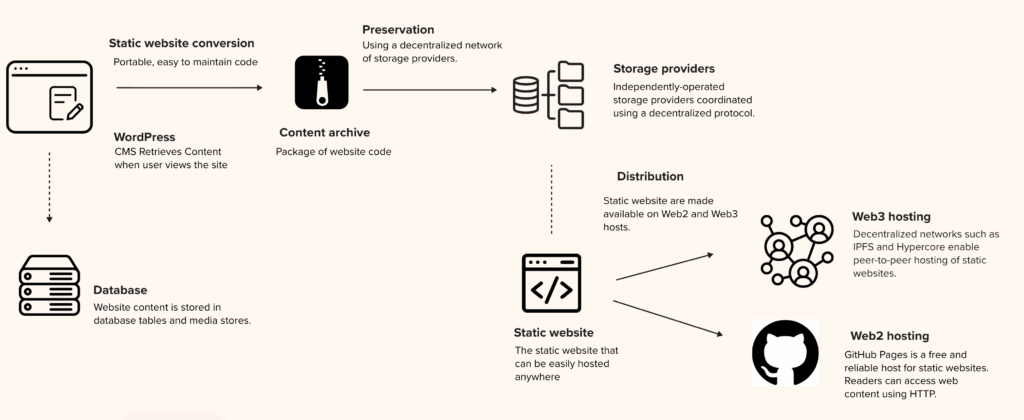

This case demonstrates how Starling’s Capture, Store, Verify framework can protect a freelancer’s portfolio against link rot: by capturing original site states and commit fingerprints; storing redundant copies on decentralized networks; and verifying distribution with content addressing and public registrations.

The result is portability with provenance—sites that can move between platforms while maintaining provable integrity over time.

The Challenge

Link rot is a pervasive problem that threatens the preservation and accessibility of work on the Internet. How might Tauszik’s portfolio avoid the same fate?

First, access to Tauszik’s website had to be optimized for current web systems. This means availability for the longest period of time possible, with minimal maintenance and cost. One of his projects, Facing Life, was self-hosted using Flywheel and WordPress, but the hosting costs were higher than necessary for a relatively static site. Another, Syria Street, was commissioned by the ICRC and hosted on HostGator. Using these platforms for hosting largely unchanging websites without user-generated content was unnecessary and expensive.

Second, Tauszik’s work had to be prepared for future-facing hosting solutions. These include approaches that can be more secure and longer lasting. Using decentralized systems for preservation would avoid control by large tech companies, mitigating risks of vendor lock-in, extensive maintenance, and sites that are not easily portable to other platforms. The Lab also wanted to maintain the integrity of his work. In this context, that means that if the content from the website had to be recovered from archives, there would be a way to prove that the content is the exact, original version preserved as a part of this project (i.e., not edited, censored, or otherwise modified).
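To make the integrity requirement concrete, here is a minimal sketch of how a recovered copy could be checked against a previously recorded fingerprint. It assumes the fingerprint is a plain SHA-256 digest; the function names `sha256_hex` and `matches_recorded_digest` are hypothetical and are not taken from the project's actual tooling.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the given bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


def matches_recorded_digest(recovered: bytes, recorded_hex: str) -> bool:
    """Check whether recovered content hashes to the recorded digest.

    Assumption: the recorded value is a hex-encoded SHA-256 digest.
    hmac.compare_digest performs a constant-time string comparison.
    """
    return hmac.compare_digest(sha256_hex(recovered), recorded_hex.lower())


if __name__ == "__main__":
    # Illustrative content only, not the real site files.
    original = b"<html><body>Example page</body></html>"
    recorded = sha256_hex(original)
    print(matches_recorded_digest(original, recorded))
    print(matches_recorded_digest(original + b" ", recorded))
```

Even a single added byte changes the digest, which is what lets a recorded fingerprint show that recovered content was not edited, censored, or otherwise modified.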

The Prototype

The solution for preservation and availability consisted of a few simple parts. The Starling Framework (Capture, Store, Verify) provided a clear roadmap to address the important phases of the content’s lifecycle.

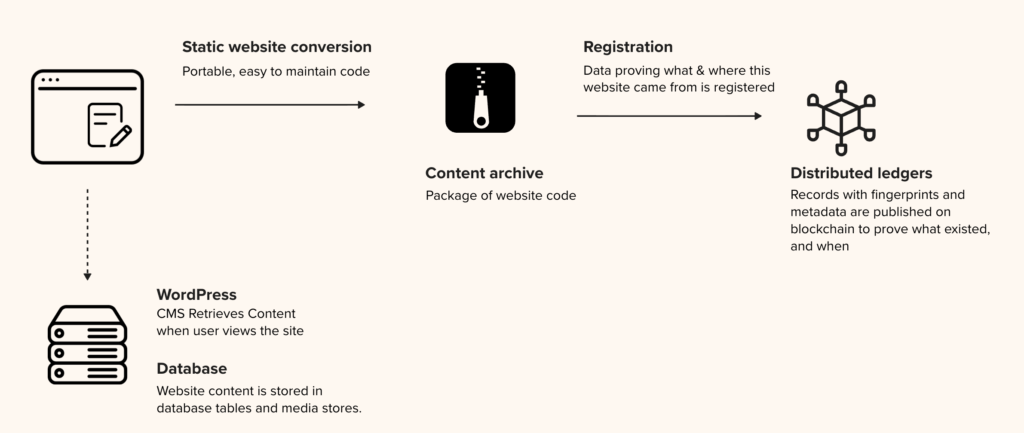

Capture

The team converted Facing Life (which was hosted on WordPress) to a static site using Wget mirror options. The Syria Street website was modified to create static code (a set of HTML, CSS, and assets such as images) that can easily be hosted anywhere. This code was published and managed on GitHub.
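As a rough illustration of the mirroring step, the sketch below assembles a GNU Wget invocation from Python. The specific flags are an assumption: the write-up only says "Wget mirror options" and does not list which ones the team used. The helper names `wget_mirror_command` and `mirror_site` are hypothetical.

```python
import subprocess


def wget_mirror_command(url: str, dest_dir: str) -> list:
    """Build a Wget command line that mirrors a site into dest_dir.

    The flag selection is an assumption for illustration only.
    """
    return [
        "wget",
        "--mirror",            # recursive download with timestamping
        "--page-requisites",   # also fetch images, CSS, and scripts pages need
        "--convert-links",     # rewrite links so the local copy is browsable
        "--adjust-extension",  # save HTML and CSS files with matching extensions
        "--no-parent",         # do not ascend above the starting directory
        f"--directory-prefix={dest_dir}",
        url,
    ]


def mirror_site(url: str, dest_dir: str) -> None:
    """Run the mirror; requires Wget to be installed and network access."""
    subprocess.run(wget_mirror_command(url, dest_dir), check=True)
```

Calling `mirror_site("https://example.org/", "static-copy")` (a placeholder URL and directory) would leave a folder of HTML, CSS, and assets that can be served from any static host.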

In addition, authenticity records were created on three different blockchains. The process is akin to making a fingerprint registry to identify a human, but done for digital content. This was accomplished by taking a cryptographically signed hash (effectively, a digital fingerprint of the site) and registering that data with a blockchain transaction. Blockchains are ledgers designed so that entries cannot be modified after they are added. This process establishes a record of exactly what existed, and when. The blockchain registration is public, allowing anyone to verify what code and assets existed at the time of registration, and to confirm when they existed by pointing to the time of registration recorded on the network of blockchain nodes. The registration records also include signatures from Starling Lab attesting to the authenticity of this information.
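The fingerprinting idea can be sketched as follows. This is an illustration under stated assumptions, not the Lab's registration pipeline: it hashes every file in a static site folder with SHA-256, then hashes a canonical JSON manifest of those per-file digests to get a single site fingerprint. Signing the fingerprint and submitting it in a blockchain transaction are deliberately left out, and the names `file_digest`, `site_manifest`, and `site_fingerprint` are hypothetical.

```python
import hashlib
import json
from pathlib import Path


def file_digest(path: Path) -> str:
    """SHA-256 of one file, read in chunks to handle large media assets."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            h.update(chunk)
    return h.hexdigest()


def site_manifest(root: Path) -> dict:
    """Map each file's path (relative to root) to its SHA-256 digest."""
    files = sorted(p for p in root.rglob("*") if p.is_file())
    return {p.relative_to(root).as_posix(): file_digest(p) for p in files}


def site_fingerprint(root: Path) -> str:
    """Single digest over the whole site.

    Assumption: the manifest is serialized as compact JSON with sorted
    keys so the same files always produce the same fingerprint.
    """
    canonical = json.dumps(
        site_manifest(root), sort_keys=True, separators=(",", ":")
    )
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()
```

Because the fingerprint depends on every file's path and contents, changing, adding, or removing any asset produces a different value, which is what makes a registered fingerprint useful as a record of exactly what existed.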

Store

Once authenticity records of the site assets were recorded on publicly maintained ledgers, the team needed to preserve the assets themselves, in a way that is less prone to removal than records held by a single central entity. To achieve this, we created ‘cold storage’ archives on Filecoin. This distributed storage network facilitates the preservation of the content across many servers called “nodes,” a resilient approach intended to withstand everything from censorship to economic decay. This continued archival storage is backed by an economically incentivized system that includes collateral put up by the network of storage providers.

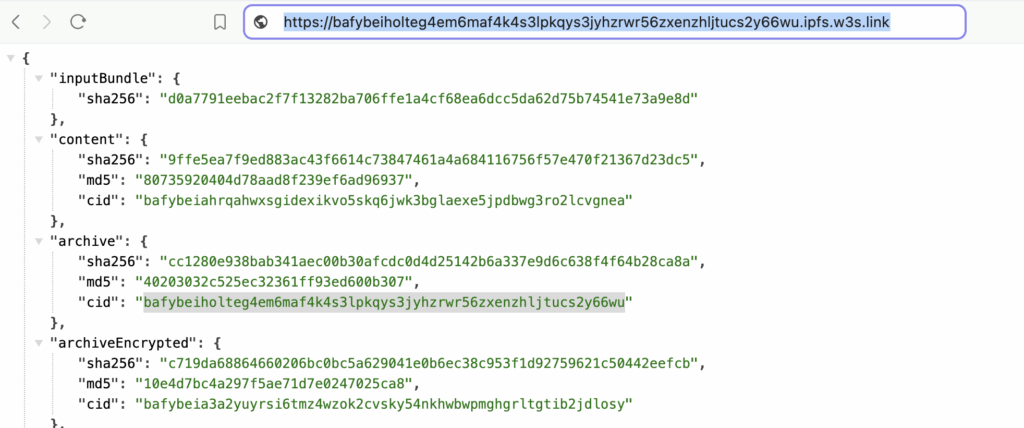

Verify