Comfy C2PA Signer

In Development

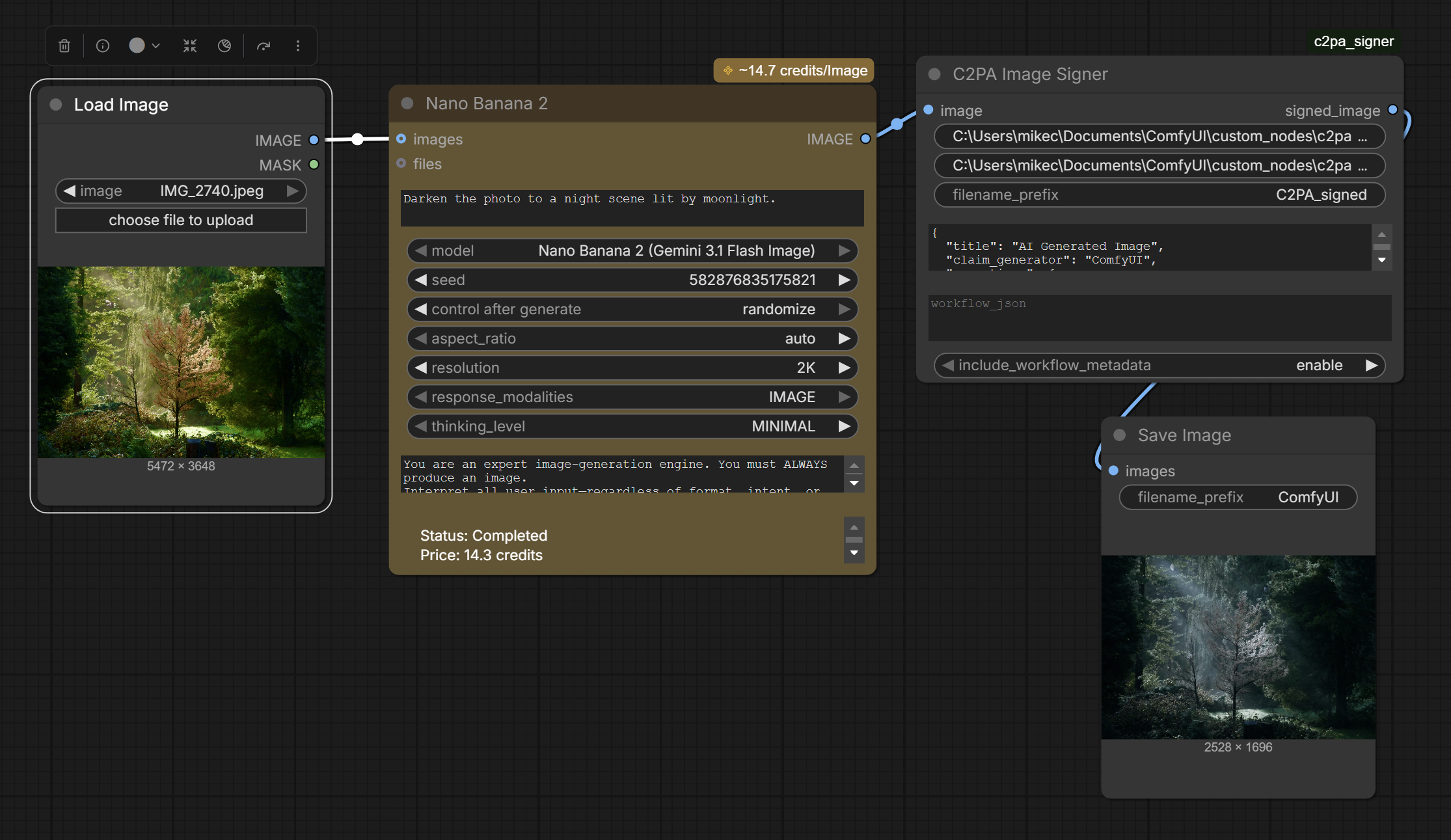

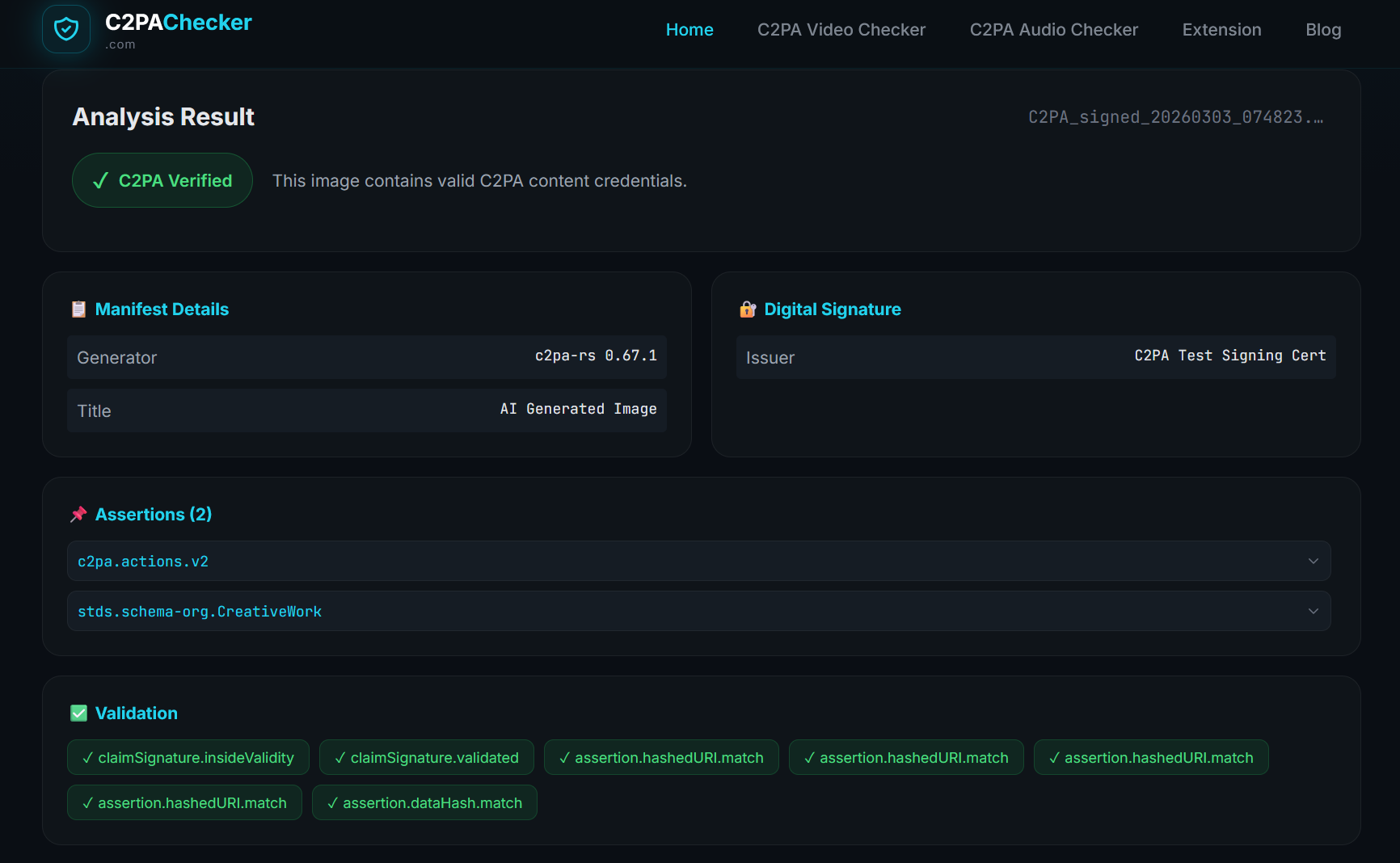

The ComfyUI C2PA Signer is an open-source custom node that integrates cryptographic content credentials directly into generative AI workflows. By signing synthetic media at the moment of generation within the ComfyUI interface, it allows creators to transparently certify that an image was AI-generated, which models and parameters produced it, and who authored it. The node is available on GitHub.

The Problem

Generative AI tools like Stable Diffusion have democratized the creation of hyper-realistic imagery, but they lack native mechanisms for provenance. Responsible creators have no standardized way to disclose that their output is synthetic, leaving their work indistinguishable from both authentic field captures and malicious deepfakes. This gap fuels the “liar’s dividend” – the ability for bad actors to dismiss genuine evidence of their wrongdoing as AI-generated, while at the same time making it difficult for audiences to identify what is real.

The Solution

We built the ComfyUI C2PA Signer to address a gap in the open-source AI creative ecosystem: the absence of any native provenance mechanism in the tools creators actually use. ComfyUI is one of the most widely adopted interfaces for Stable Diffusion workflows, and until now, none of its output carried content credentials.

Our custom node automates the application of C2PA Content Credentials as an image is rendered. It intercepts the output and cryptographically binds a compliant manifest directly to the file, explicitly flagging the content as AI-generated using the C2PA trainedAlgorithmicMedia digital source type, the industry standard assertion for content produced by machine learning models. The signed file returns to the ComfyUI workflow for further processing with its provenance intact.
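As a rough illustration of what such a manifest can carry, the sketch below builds a manifest definition as plain JSON in Python. The `c2pa.actions` assertion label, the `c2pa.created` action, and the IPTC `trainedAlgorithmicMedia` URI come from the C2PA and IPTC vocabularies. The helper name, the `claim_generator` string, and the `org.example.generation` assertion are hypothetical, and the signing step (which needs a certificate and a C2PA SDK) is left out. This is not the node's actual code.

```python
import json

# IPTC digital source type that C2PA uses to flag media produced by a trained model.
TRAINED_ALGORITHMIC_MEDIA = (
    "http://cv.iptc.org/newscodes/digitalsourcetype/trainedAlgorithmicMedia"
)


def build_manifest_definition(title, author, model_name, parameters):
    """Return a manifest definition as a dict (hypothetical helper for illustration)."""
    return {
        "claim_generator": "example-comfyui-signer/0.1",  # placeholder value
        "title": title,
        "assertions": [
            {
                # Standard actions assertion: the asset was created by a trained model.
                "label": "c2pa.actions",
                "data": {
                    "actions": [
                        {
                            "action": "c2pa.created",
                            "digitalSourceType": TRAINED_ALGORITHMIC_MEDIA,
                        }
                    ]
                },
            },
            {
                # Custom assertion (hypothetical label) for author, model and parameters.
                "label": "org.example.generation",
                "data": {
                    "author": author,
                    "model": model_name,
                    "parameters": parameters,
                },
            },
        ],
    }


if __name__ == "__main__":
    definition = build_manifest_definition(
        "render.png", "Example Author", "example-model", {"steps": 20, "seed": 42}
    )
    print(json.dumps(definition, indent=2))
```

A signing library would take a definition like this, together with the image bytes and a signing certificate, and embed the resulting manifest in the output file.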

The node is open-source, installable as a standard ComfyUI custom node, and designed to integrate into existing creative workflows without requiring changes to the generation pipeline itself.

JOURNALISM — Newsrooms increasingly encounter AI-generated images submitted as authentic. A standardized disclosure mechanism at the point of creation shifts the burden from reactive detection to affirmative proof, giving editors a verifiable signal before publication.

HISTORY — Archivists working with digitized collections need to clearly distinguish AI-enhanced or AI-reconstructed materials from unaltered originals. C2PA signing at the generation step prevents synthetic materials from entering the historical record without transparent labeling.

LAW — As AI-generated imagery becomes more photorealistic, legal proceedings require reliable methods to establish whether visual evidence is captured or synthetic. Content credentials applied at generation create an auditable record of origin.

Authenticated Attributes

In Development

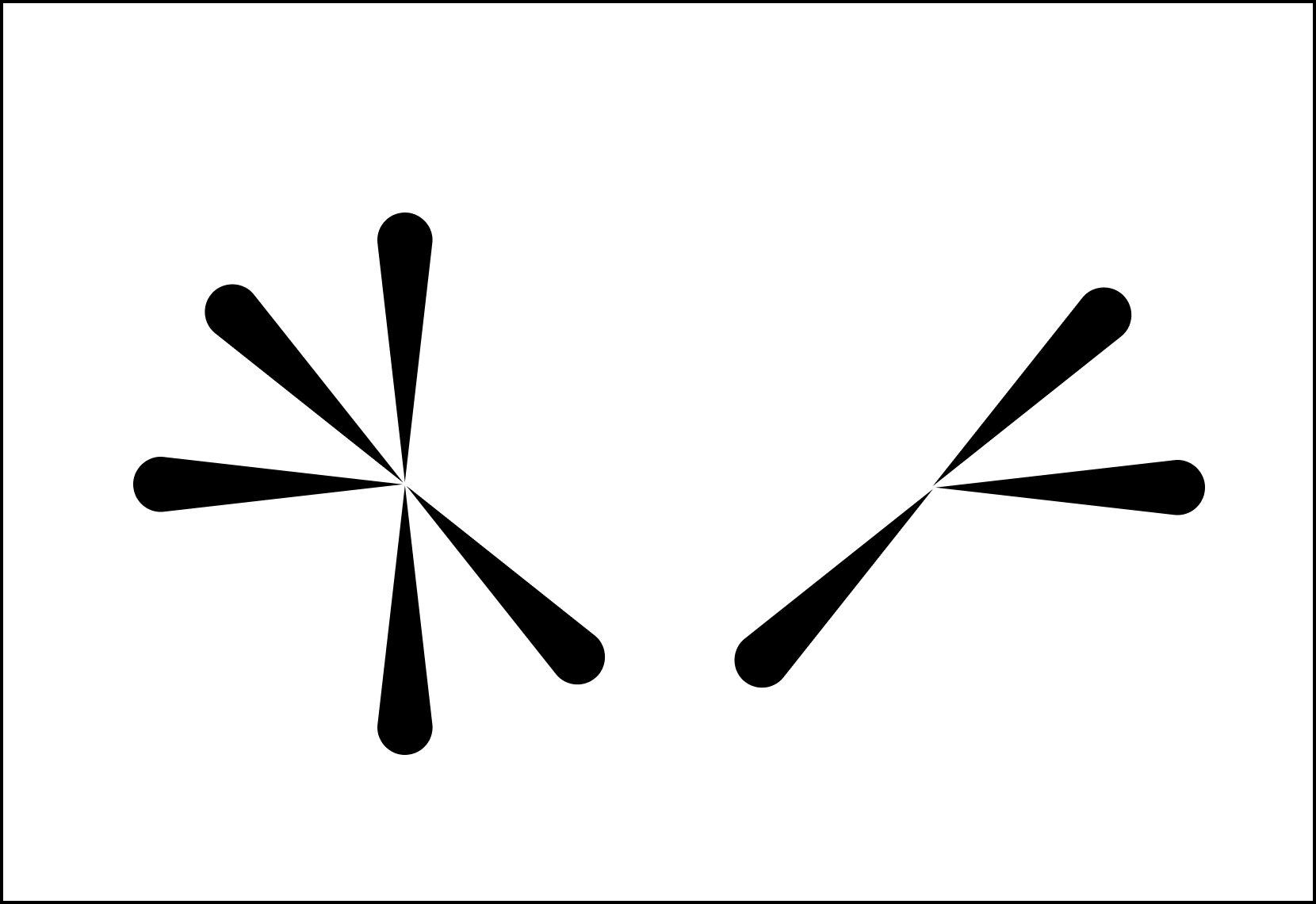

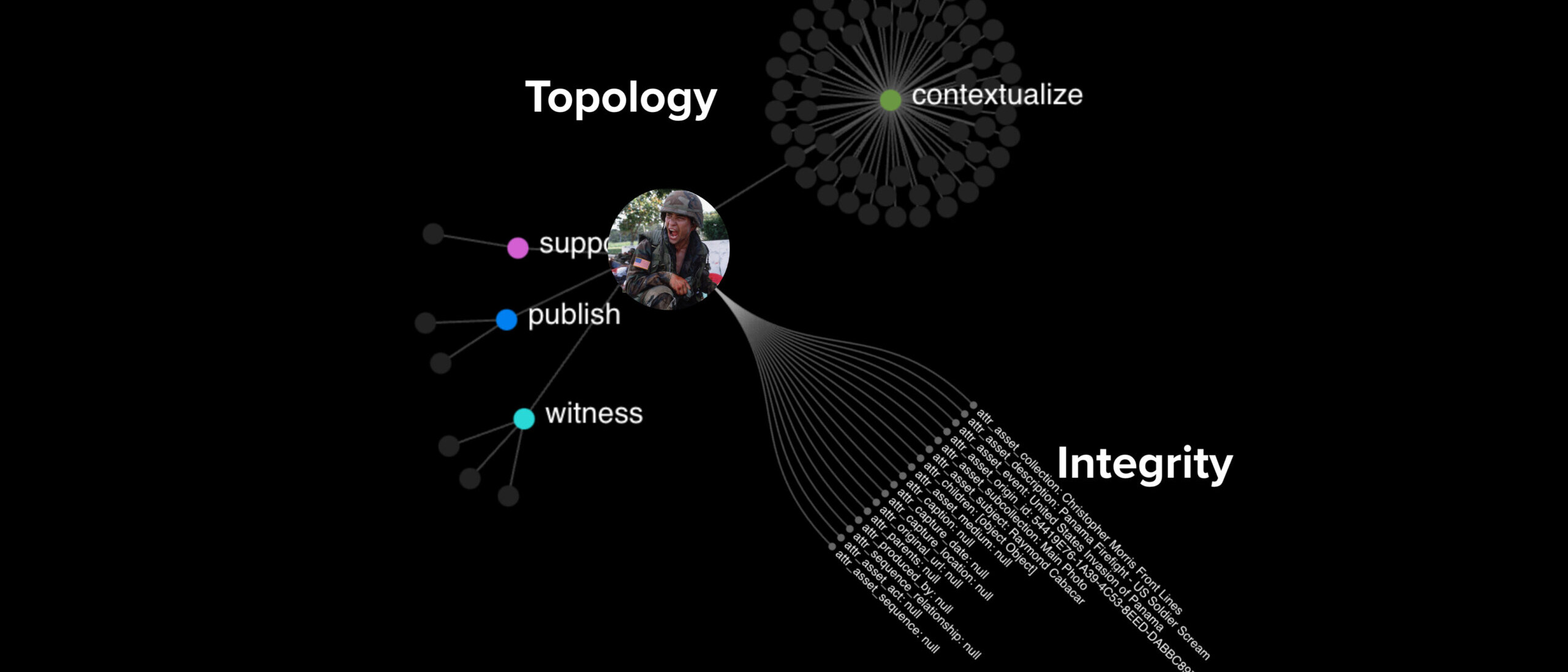

Authenticated Attributes (AA) is a decentralized infrastructure designed to restore trust in digital media by establishing the proven integrity of metadata. Moving beyond traditional, centralized databases, it treats every piece of information as an independently verifiable “atom” of fact, allowing investigators to construct unalterable timelines of evidence.

This concept shifts the verification paradigm from trying to detect AI-generated falsifications (deepfakes) to affirmatively proving authenticity. It anchors digital content to a “proof of existence,” utilizing third-party timestamping to demonstrate exactly when a file was created and ensuring it has not been altered since.

The Problem

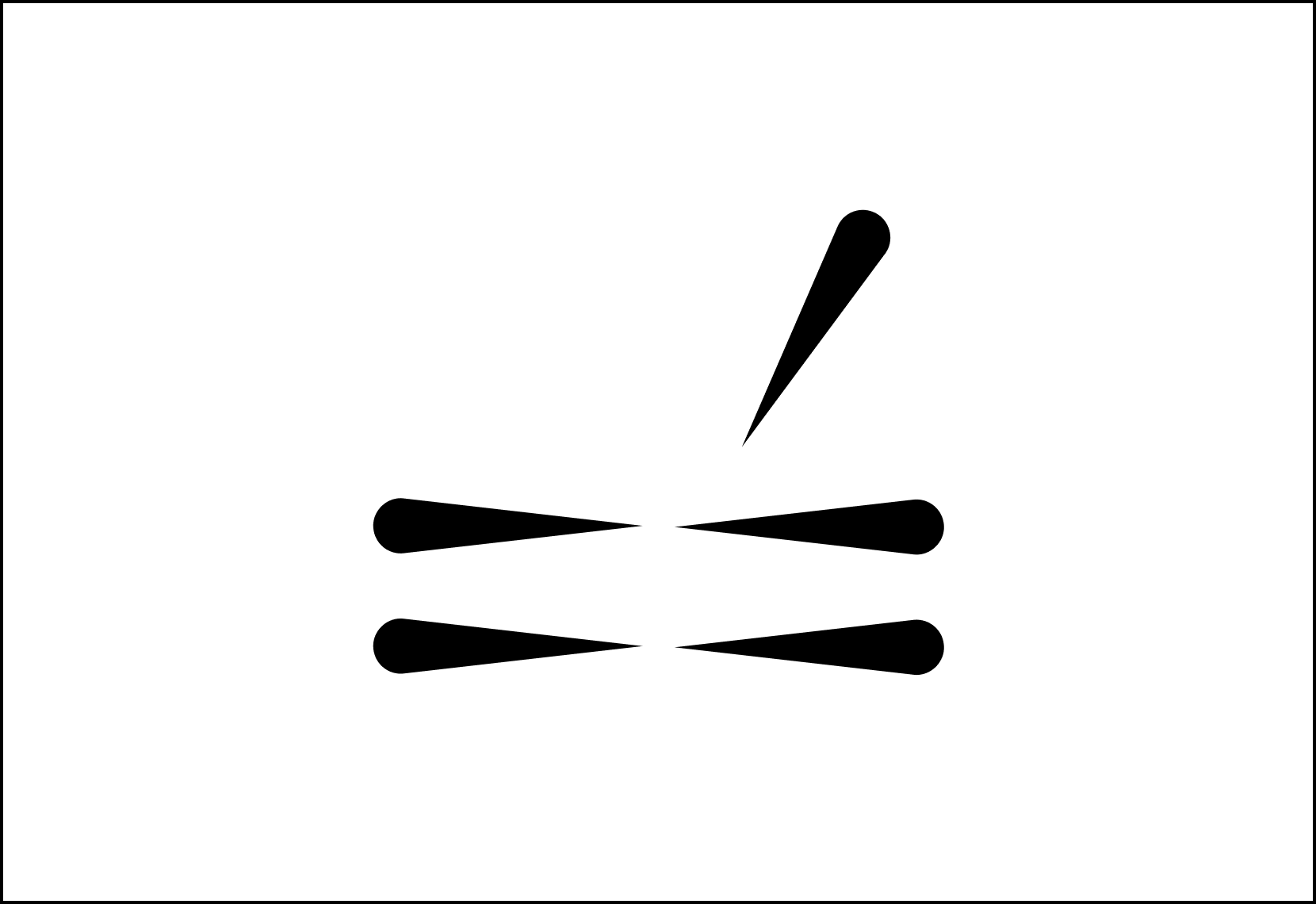

The traditional methods for trusting digital images and records are breaking down due to the rise of AI-generated content (deepfakes) and a general erosion of trust in institutions. Investigators and archivists face several specific challenges:

- There is no standard way to reference the same image or file across different organizations.

- Secondhand information is difficult to verify, and different sources often provide conflicting data.

- Centralized databases are vulnerable to tampering, often requiring strict access controls that limit collaboration.

JOURNALISM

Authenticated Attributes allow newsrooms to cryptographically bind specific details (like location or time) to content so audiences can independently verify the reporting’s origin without compromising sensitive sources.

HISTORY

By attaching unalterable proofs of existence to digital artifacts, AAs ensure that future historians inherit primary sources that are traceable and immune to revisionism.

LAW

AA creates a tamper-evident link between evidence and its metadata, allowing investigators to prove that critical details haven’t been altered since the moment of capture.

The Solution

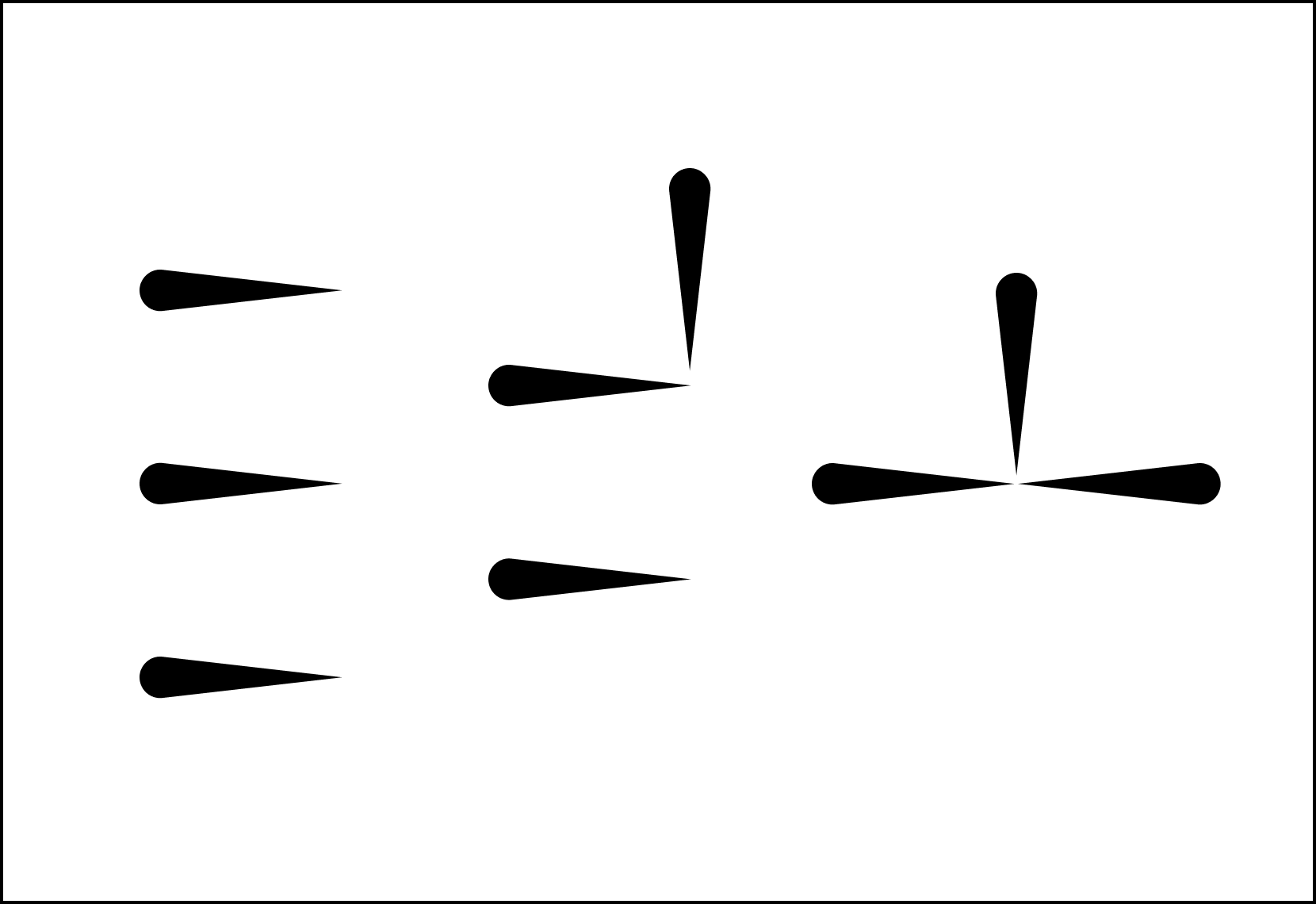

Authenticated Attributes is a tool that allows investigators to verify digital content by sharing authenticated metadata and attestations that cannot be faked or tampered with. It restores trust through a decentralized framework:

- Cryptographic hashes and digital signatures uniquely identify files and authenticate sources.

- Third-party timestamping proves exactly when data existed.

- Flexible reconciliation lets investigators weigh conflicting sources without discarding data.

- Peer-to-peer replication ensures data remains persistent and available.
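Two of these ideas, identifying a file by its hash and reconciling conflicting claims without discarding any, can be sketched in a few lines of Python. The `Attestation` type, the field names, and the `reconcile` helper are hypothetical and are not taken from the Authenticated Attributes codebase. Signatures and timestamps are omitted to keep the sketch short.

```python
import hashlib
from collections import defaultdict
from dataclasses import dataclass


def content_id(data: bytes) -> str:
    """Identify a file by the SHA-256 hash of its bytes."""
    return hashlib.sha256(data).hexdigest()


@dataclass(frozen=True)
class Attestation:
    cid: str        # hash of the file the claim is about
    attribute: str  # for example "location"
    value: str
    source: str     # who made the claim (a signing key in a real system)


def reconcile(attestations, cid, attribute):
    """Group every claimed value by the sources asserting it, discarding none."""
    by_value = defaultdict(list)
    for a in attestations:
        if a.cid == cid and a.attribute == attribute:
            by_value[a.value].append(a.source)
    return dict(by_value)


if __name__ == "__main__":
    cid = content_id(b"example image bytes")
    claims = [
        Attestation(cid, "location", "Kyiv", "org-a"),
        Attestation(cid, "location", "Kyiv", "org-b"),
        Attestation(cid, "location", "Lviv", "org-c"),
    ]
    print(reconcile(claims, cid, "location"))
    # {'Kyiv': ['org-a', 'org-b'], 'Lviv': ['org-c']}
```

Because the identifier is derived from the file's bytes, two organizations holding the same file arrive at the same key without coordinating, and the investigator decides how to weigh the disagreeing sources.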

—

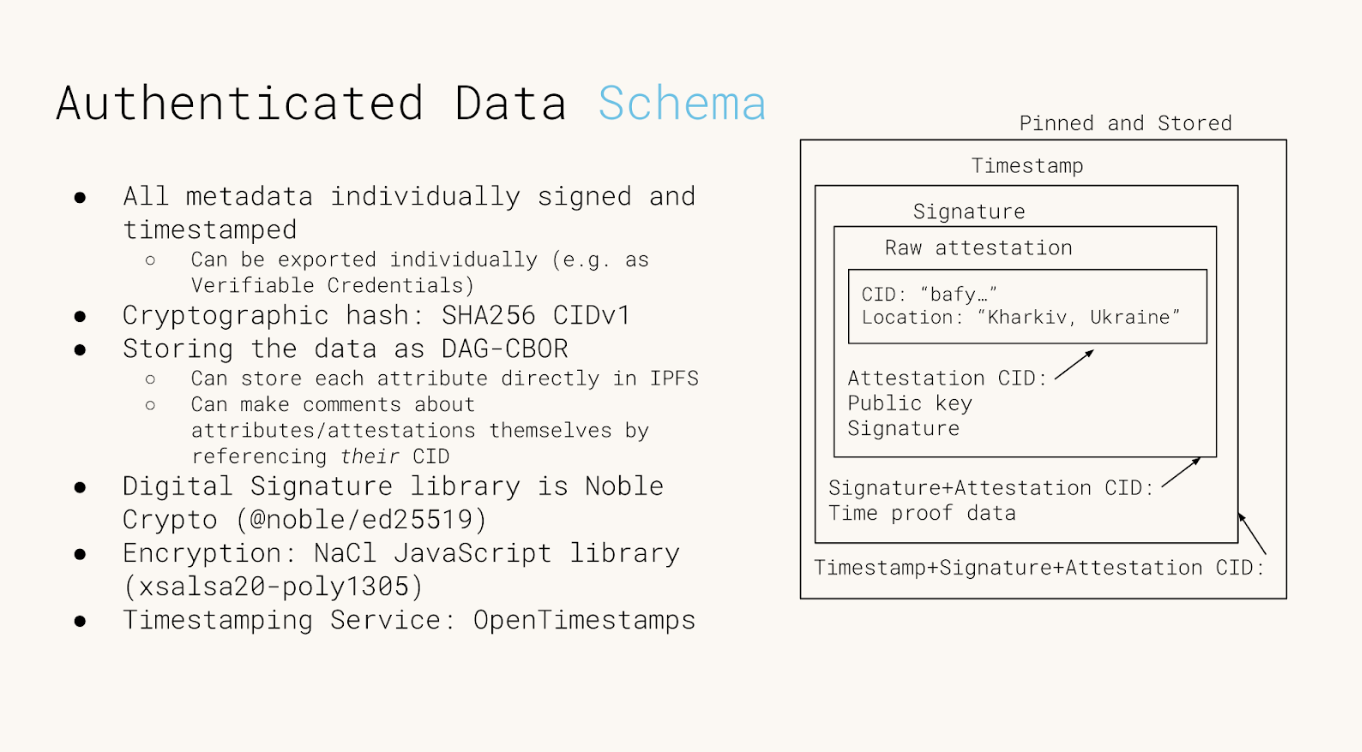

The initial implementation of Authenticated Attributes expresses them using the following data structure (more details in the GitHub repo):

SELECTED WRITING

Web Archives as Evidence (using AA)

Slide deck and YouTube video

Working on Authenticated Data with AA

By Cole Capilongo

Time for (Trusted) Timestamping

By Cole Capilongo

An Alternative to Deepfake Detection

By Kate Sills

UN Submission on ‘an Index for Accountability’, a prototype built on AA

Evidence Collection & Consent

In Development

A verifiable ingestion framework designed to transform multimedia submissions from secure messaging apps into court-admissible evidence.

By integrating decentralized chatbots with platforms like Signal and Telegram, it establishes a tamper-evident chain of custody that cryptographically binds media to the explicit, informed consent of the source.

This approach bridges the gap between high-risk field documentation and the rigorous evidentiary standards of international justice mechanisms, ensuring that humanity’s most critical records remain legally robust and ethically sound.

YEAR

2022

PARTNERS

Guardian Project

ProofMode app

Signal

Telegram

The Problem

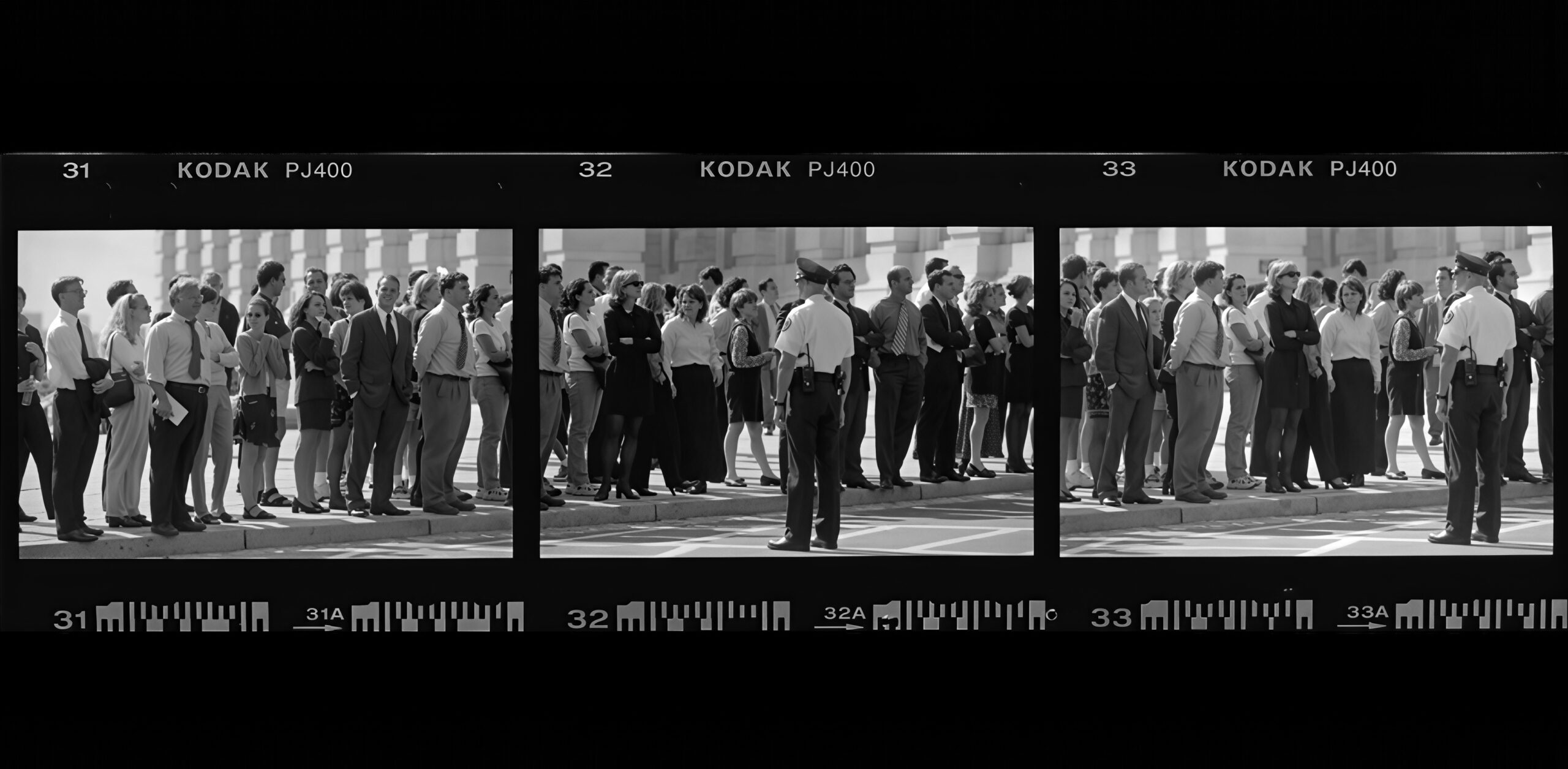

Digital media captured in high-stakes environments, such as war zones or human rights crises, may need to meet the evidentiary standards of criminal trials. While photos and videos are persuasive, they often lack a verifiable “chain of custody”. Traditional messaging services routinely strip critical metadata to protect privacy; however, this decontextualization makes it nearly impossible for investigators to prove origin or authenticity once the file leaves the original device.

Furthermore, without documented, informed consent from the source, such records are often deemed inadmissible, leaving critical survivor testimonies legally invisible.

The Solution

Starling developed a chatbot, integrating with secure messaging services like Signal and Telegram, to automate the authenticated collection of digital evidence. When a source sends media to the bot, the system instantly generates a cryptographic fingerprint (SHA-256 hash) and seals the file alongside its associated metadata in a tamper-evident archive.
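The hashing and sealing step might look roughly like the following Python sketch. The function names, the zip container, and the `manifest.json` layout are assumptions made for illustration; the source does not describe the archive format the chatbot actually uses.

```python
import hashlib
import io
import json
import zipfile
from datetime import datetime, timezone


def seal_submission(media: bytes, filename: str, metadata: dict) -> bytes:
    """Bundle media and metadata into a zip whose manifest records the media hash."""
    manifest = {
        "filename": filename,
        "sha256": hashlib.sha256(media).hexdigest(),
        "received_at": datetime.now(timezone.utc).isoformat(),
        "metadata": metadata,
    }
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w", zipfile.ZIP_DEFLATED) as archive:
        archive.writestr(filename, media)
        archive.writestr("manifest.json", json.dumps(manifest, indent=2))
    return buffer.getvalue()


def verify_submission(sealed: bytes) -> bool:
    """Recompute the media hash and compare it with the one in the manifest."""
    with zipfile.ZipFile(io.BytesIO(sealed)) as archive:
        manifest = json.loads(archive.read("manifest.json"))
        media = archive.read(manifest["filename"])
    return hashlib.sha256(media).hexdigest() == manifest["sha256"]
```

On its own, a hash stored next to the file only reveals changes to the file. For the archive as a whole to be tamper-evident, the manifest would also need to be signed or timestamped by a party the verifier trusts.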

Crucially, the bot leads the documenter through an interactive back-and-forth to record the informed consent of the sender at the moment of ingestion. This consent is cryptographically bound to the media’s unique hash, creating an immutable record of usage permissions. This authentication layer ensures that crowdsourced evidence can be prioritized, processed, and examined by international prosecutors with its legal and ethical integrity fully intact.
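One way to picture this binding is a consent record that embeds the media hash and is then authenticated as a whole. In the Python sketch below, an HMAC stands in for a real digital signature, and the record fields and function names are hypothetical. A production system would more likely use asymmetric signatures so that third parties can verify the record without holding a secret key.

```python
import hashlib
import hmac
import json


def bind_consent(media: bytes, consent: dict, key: bytes) -> dict:
    """Create a consent record tied to the media's SHA-256 hash."""
    record = {
        "media_sha256": hashlib.sha256(media).hexdigest(),
        "consent": consent,
    }
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    # HMAC is a stand-in for a digital signature (assumption of this sketch).
    tag = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"record": record, "tag": tag}


def check_consent(media: bytes, bound: dict, key: bytes) -> bool:
    """Verify the record is unchanged and refers to exactly these media bytes."""
    payload = json.dumps(bound["record"], sort_keys=True).encode("utf-8")
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    same_record = hmac.compare_digest(expected, bound["tag"])
    same_media = bound["record"]["media_sha256"] == hashlib.sha256(media).hexdigest()
    return same_record and same_media
```

Changing either the consent answers or the media bytes makes `check_consent` return `False`, which is the property the paragraph above describes.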

GOING FURTHER

Case Study: Building ProofMode as a Library in a custom, bespoke Signal build

Timestamp Verification

In Development

We use distributed ledgers to establish an immutable “proof of existence” for digital media and its metadata. Anchoring cryptographic fingerprints on public consensus networks creates a tamper-evident record of the moment an asset was first observed.

This “birth certificate” for digital data shifts the verification model from reactive detection to affirmative proof, ensuring that the origin and integrity of critical information remain indisputable against the threats of revisionism and synthetic manipulation.

YEAR

2020-24

PARTNERS

Hedera

ProvenDB

OpenTimestamps

Numbers Protocol

Solana

LINKS

– Time for Trusted Timestamping

– Reuters collaboration, ProvenDB anchored on Hedera

– ‘Mom I See War’, a collection of drawings from Ukrainian children, anchored on the NEAR blockchain using the Numbers Protocol.

The Problem

Establishing the originality of a piece of content, and showing that a given piece of media is the first known version, has been a conundrum since the invention of written communication. Whether one is looking to resolve disputes over which version came first, or to prove that media was created before the advent of certain AI technologies, a timestamp that can be verified with a trustworthy third party can be a helpful solution. Verifiable timestamping can be used as part of the digital media creation, preservation, and editing processes.

JOURNALISM

By anchoring field footage hashes on public ledgers, newsrooms can maintain an indisputable record of truth that survives both link rot and malicious denialism.

HISTORY

By registering high-fidelity fingerprints of historic records before the generative AI inflection point, institutions ensure that primary sources can be definitively distinguished from synthetic noise.

LAW

Third-party ledgers act as record holders from which to derive strong claims about integrity and point of origin of a digital item.

The Solution

Starling has used several Merkle tree-based technologies to efficiently create verifiable timestamps on public ledgers, and has developed workflows using systems such as ProvenDB with Hedera Consensus Service anchors, OpenTimestamps proofs anchored on Bitcoin, and direct registration of media assets on many public blockchains. In all these cases, the block height is used to establish a verifiable timestamp for the registered digital media.
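The efficiency comes from the Merkle tree itself: many file hashes are folded into a single root, only the root is written to the ledger, and each file keeps a short proof linking it to that root. The Python sketch below shows the general technique. The pairing rule (duplicating the last node on odd levels) is one common convention, chosen here as an assumption, and is not necessarily the construction used by OpenTimestamps or ProvenDB.

```python
import hashlib


def sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_root_and_proof(leaves, index):
    """Return the root and the sibling path proving leaves[index] is included."""
    level = [sha256(leaf) for leaf in leaves]
    proof = []
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # duplicate the last node on odd levels
        sibling = index ^ 1  # neighbour in the same pair
        proof.append((level[sibling], sibling < index))  # (hash, sibling_is_left)
        level = [sha256(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return level[0], proof


def verify_inclusion(leaf, proof, root):
    """Rebuild the path from a leaf to the root using the sibling hashes."""
    node = sha256(leaf)
    for sibling, sibling_is_left in proof:
        node = sha256(sibling + node) if sibling_is_left else sha256(node + sibling)
    return node == root


if __name__ == "__main__":
    files = [b"photo-1", b"photo-2", b"photo-3"]
    root, proof = merkle_root_and_proof(files, 2)
    print(root.hex(), verify_inclusion(files[2], proof, root))
```

Only `root` would be anchored on a ledger. Anyone holding a file and its proof can later recompute the root and compare it with the anchored value, without needing the other files.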

Adding an immutable proof of existence backed by distributed ledger consensus serves to establish a first known creation of digital media that is nearly impossible to refute.

READ FURTHER

Further to this work, we have created a reference implementation of timestamped databases in a project called ‘Authenticated Attributes’ that aims to integrate with digital media user interfaces and collaboration tools.

We have also created an offline, SMS-based silent registration prototype that is based on 5G technology and integrates with the latest C2PA-capable cameras.

Witness Servers

In Development

Establishing institutional trust and technical corroboration for web evidence through decentralized, simultaneous crawling and cryptographic signatures.

YEAR

2024

PARTNERS

Webrecorder

FFDW

Harvard Library Innovation Lab

LINKS

– Concept note and Call for Contributions

– Our whitepaper on best practices in web archiving

The Problem

Minute technical and cosmetic errors plague the efforts of open-source monitors who scour the Web and archive its content. In the context of legal investigations, these minor defects pose considerable challenges to the reliability of the artifacts, and thus to the facts they aim to prove. Moreover, small organizations and individual investigators face a greater burden in arguing the probative weight of the material they collect than large, reputable, and established institutions.

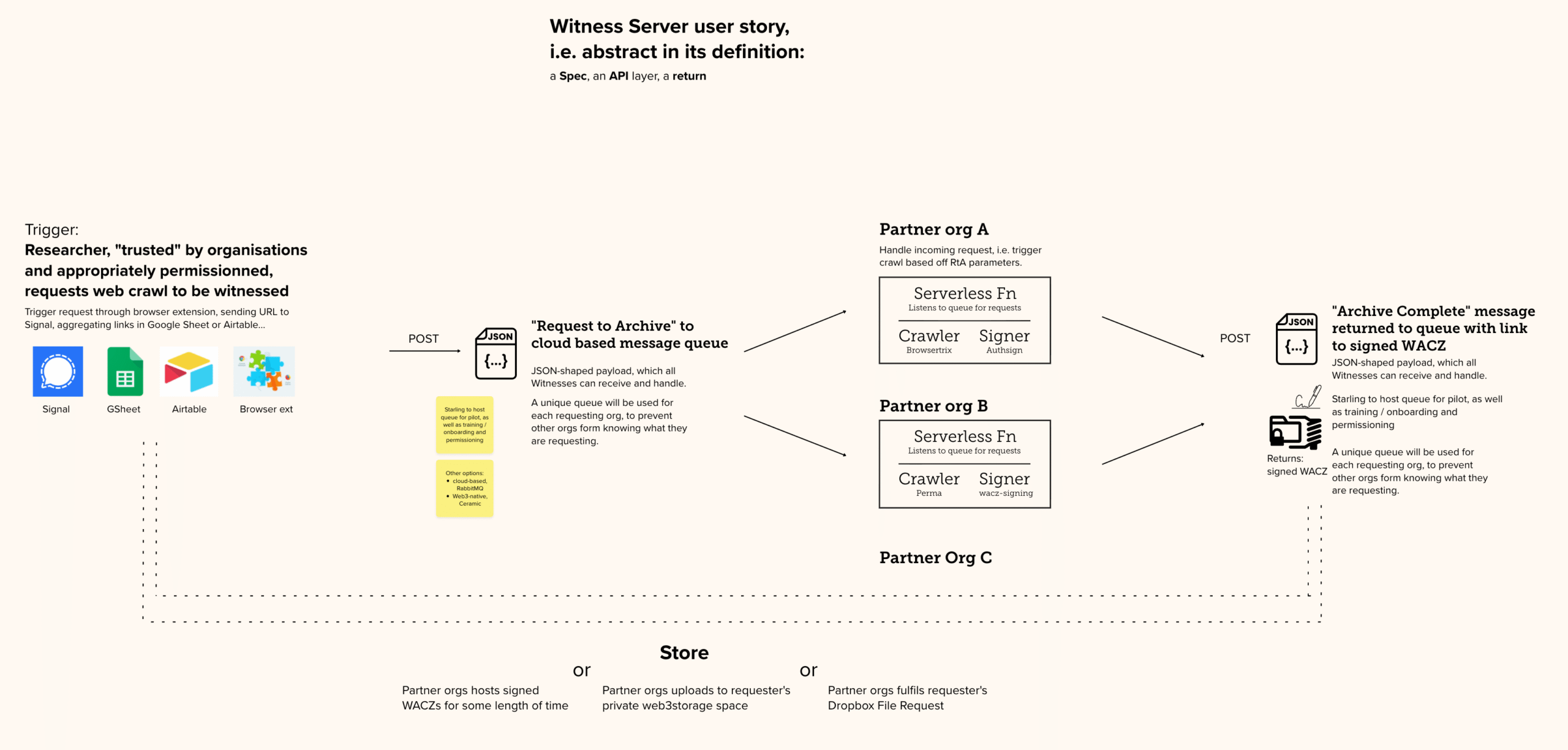

The Solution

We are developing the Witness Server prototype to replicate social trust in digital capture by involving reputable institutions as simultaneous observers of the web.

In short: a Witness Server is a service hosted by an institution that conducts on-demand web crawls. When a researcher initiates a local capture, a request is simultaneously dispatched to several partner Witness Servers (such as Harvard LIL or the Atlantic Council). Each institution performs its own crawl independently using its own infrastructure, creating a multi-perspective record of the same web content at that exact moment.

The prototypes apply what we have learned about web archives, including the use of the open-source WACZ format, which bundles signed and hashed files.

By comparing multiple institutional archives, investigators can explain away non-material “cosmetic” defects (like pop-up banners) and provide overwhelming proof of the core content’s authenticity.
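The comparison step can be pictured as a hash-by-hash diff across witnesses. In the Python sketch below, each capture is simplified to a mapping from URL to response body. Real WACZ archives carry more structure, and the function names and sample data here are hypothetical.

```python
import hashlib
from collections import Counter


def digest(body: bytes) -> str:
    return hashlib.sha256(body).hexdigest()


def compare_captures(captures):
    """captures maps witness name -> {url: response body bytes}.

    Returns (corroborated, divergent): URLs whose content hash matches across
    every witness, and URLs that are missing or differ for at least one.
    """
    all_urls = set().union(*(c.keys() for c in captures.values()))
    corroborated, divergent = {}, {}
    for url in sorted(all_urls):
        hashes = Counter(
            digest(c[url]) if url in c else "missing" for c in captures.values()
        )
        if len(hashes) == 1 and "missing" not in hashes:
            corroborated[url] = next(iter(hashes))
        else:
            divergent[url] = dict(hashes)
    return corroborated, divergent


if __name__ == "__main__":
    captures = {
        "local": {"https://example.org/": b"<p>report</p>",
                  "https://example.org/banner.js": b"v1"},
        "witness-a": {"https://example.org/": b"<p>report</p>",
                      "https://example.org/banner.js": b"v2"},
        "witness-b": {"https://example.org/": b"<p>report</p>"},
    }
    same, different = compare_captures(captures)
    print("corroborated:", list(same))
    print("divergent:", list(different))
```

In this toy example the page body is corroborated by all three observers, while the banner script differs or is missing, which is the kind of non-material defect an investigator could then explain.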

REFERENCE IMPLEMENTATION

On GitHub →

Still Photogrammetry

In Development

Still Photogrammetry integrates authenticated metadata with 3D spatial reconstruction to provide a verifiable record of physical environments and historical sites.

By utilizing a decentralized framework where every source image is treated as an independently verifiable “atom” of fact, this prototype allows investigators to build unalterable, three-dimensional timelines of evidence. It anchors complex digital twins to a cryptographic “proof of existence,” proving that the spatial data has not been tampered with since the moment of capture and restoring trust in digital primary sources for law and journalism.

YEAR

2022-26

PARTNERS

Mike Caronna

Pixelrace

Artem Ivanenko

LINKS

The New Horizon Lab

Starling Lab’s Spatial Digest

The Problem

Techniques such as photogrammetry (and more recently, NeRF and Gaussian Splatting) permit the reconstruction of a space in 3D by stitching together 2D photographs. These tools are key to both extended reality environments and to investigations driven by architectural practices. However, integrity and provenance data is lost in the computing that renders the 3D models.

The Solution

Starling experiments with capture techniques that support 3D reconstruction while including provenance and integrity markers. From smartphones to professional DSLRs, we test the technical constraints against the needs of photogrammetry workflows, which require a large number of photographs of the scanned location.

We are also prototyping user interfaces in virtual reality that aim to bridge the gap between what the viewer can see (the 3D model) and the original location (as per the 2D photographs). The viewer can navigate the space and select the authenticated source photographs as “anchors” to interrogate the model, furthering their trust in the reconstruction.

SELECTED WRITING

Our 2025 paper: Verifiable Reality: Contrasting Approaches to Photorealistic VR Using NeRF Streaming and Gaussian Splatting Technologies

SD Card Encryption

The Problem

Losing control of data can happen when journalists, historians, or legal experts least expect it. SD cards can be seized during border crossings, left behind in a taxi, or stolen from a hotel room. Evidence that has been captured on an SD card, and which has not yet been anonymized, might carry geolocation or identity information that can lead authorities or militants to vulnerable people being photographed or interviewed. Another risk to unencrypted data on an SD card is that it could be manipulated undetected when not in one’s possession, with files erased or modified.

Starling Lab is testing prototypes that both encrypt and hide important data captured by those in the field.

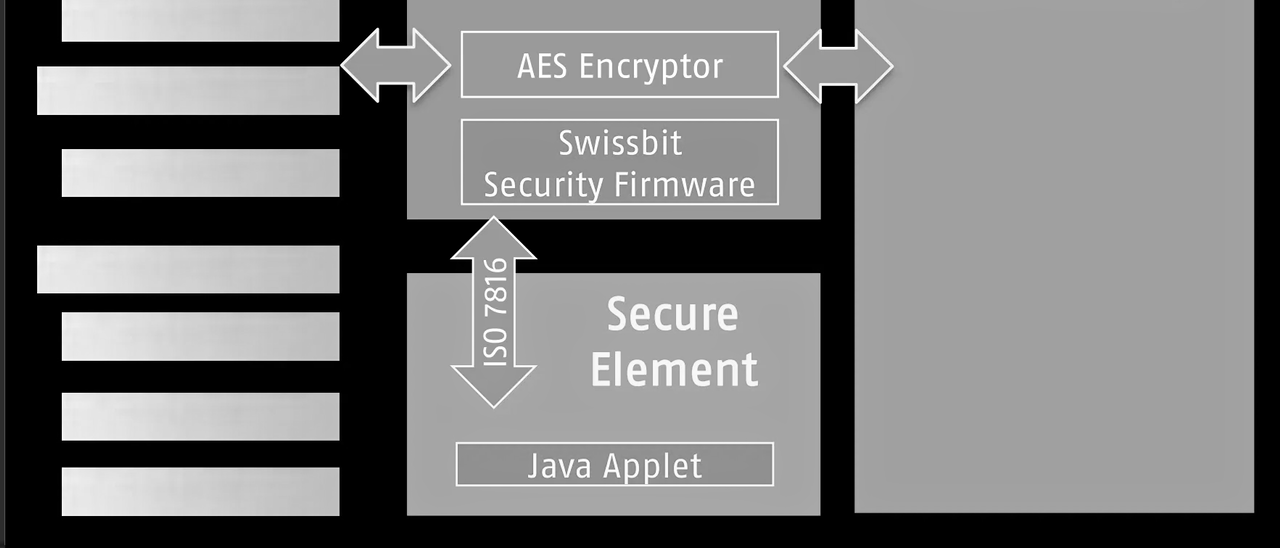

The Solution

Starling Lab is collaborating with industry partners including Swissbit, a leader in secure storage, to deploy hardware-encrypted SD cards that protect media the moment it is written to disk. This prototype ensures that digital assets are secured independently of the camera’s software vulnerabilities.

The solution creates an “encrypted tunnel” between the camera lens and the storage medium. Swissbit’s hardware-based encryption automatically protects image and video data without requiring additional software on the capturing device, ensuring that media cannot be manipulated or viewed in transit.

To protect the safety of practitioners in the field, the prototype relies on hidden partitions on the SD card. Sensitive files are stored in a manner that makes them impossible for unauthorized parties to discover or decrypt (even if the physical card is seized and inspected), while providing a critical layer of plausible deniability.