Authenticity in XR Spaces for Rapid Documentation

Authenticity in XR Spaces for Rapid Documentation in Ukraine

How much should we trust a VR reconstruction? We bring the original, authenticated photographs and metadata front and center in the 3D space. You explore, you decide.

Background

In response to Russia’s large-scale invasion of Ukraine, the Lab started a broad project cutting across all three of our research areas: Journalism, Archiving, and Law. Under the project called Dokaz (“Доказ”, Ukrainian for proof), a loose coalition of organizations has shared material and ideas in support of Ukraine against aggression. In the context of this new large-scale conflict, documentation has been a cornerstone concern of our research into the creation of stronger digital material:

- The Lab’s law program led the submission of originally-collected field and remote evidence to the International Criminal Court;

- On the journalism side, we have supported the first demonstration of at-source cryptographic authentication, directly in-camera, for Reuters photojournalists;

- And we have prototyped the integration of metadata-rich, authenticated photography in Signal Messenger, most notably for the use case of crowdsourced citizen documentation.

In addition to this focus on audio/video documentation, significant external developments have happened in the last couple of years. New immersive storytelling methods using 3D graphics are emerging in news media, enhanced by devices like Meta’s Quest VR headsets and Apple’s Vision Pro mixed reality headset. These devices offer users 360-degree environments and interactive experiences. While consumers are accustomed to 3D environments in video games and CGI in films, the potential of these technologies extends to an interconnected 3D Metaverse, potentially revolutionizing work, play, and social interaction.

Another development worth mentioning is the spread into the public sphere of techniques from the field of architecture, which rely on the reconstruction of physical spaces in 3D environments as a means of questioning a narrative, often an official one, and of bringing new facts to the public discourse. Forensic Architecture, a pioneer of these techniques established at Goldsmiths, University of London, has since been joined by other collectives producing such counter-investigations. Similarly, newsrooms big and small have tried their hand at these new kinds of investigations: see for example the work of the Washington Post Visual Forensics team on an alleged violation in the West Bank, or the reconstruction of an alleged attack on journalists in Lebanon by the monitoring non-profit Airwars and Agence France-Presse (AFP).

Finally, these techniques are also used by groups aiming to capture or preserve objects, buildings, or scenes for posterity, collective memory, or cultural preservation. Of note is the international collective and grassroots effort SUCHO: Saving Ukrainian Cultural Heritage Online. We have also engaged with the Ukrainian company Aero3D, who employ their expertise in advanced scanning and photogrammetric reconstruction to preserve buildings and cultural heritage of their native Ukraine, as well as to document the consequences of the ground invasion of their country.

To us at the Starling Lab, these changes represent new and interesting models of trust, as the result of the reconstruction forms the basis of new understandings of reality. It is through these 3D spaces that we are offered an analysis (in the case of an investigation), or an experience (in the case of cultural preservation). But what exactly are these 3D spaces made of? Can integrity markers in the original material used to create the 3D space persist across computations and reach the reader or viewer? Further, we recognize the potential impact of powerful immersive experiences and the metaverse on how people perceive the world around them: they may increase empathy and understanding, but they may also open a new route to misinformation and manipulation.

In this project we set out to test whether Starling Lab data integrity techniques could be applied to this new breed of immersive media. The questions we sought to answer were:

- Can Starling Lab data integrity techniques used on 2D imagery also be applied to 3D data?

- Can the underlying data and metadata of a 3D reconstructed model of a real-world scene be secured?

- Can secured provenance metadata carry through the reconstruction process such that someone interacting with a 3D model can query and confirm the integrity of the model?

The overarching question we sought to answer was: how can we give news consumers the tools to make informed decisions about what media to trust in the emerging realm of immersive 3D? This project aims to be a receptacle for some of these questions of authenticity and integrity in extended reality (XR) spaces and experiences.

Our collective thanks go to the project partners:

- Mike Caronna, Lab Fellow for Virtual and Extended Reality, on linking with various Ukrainian companies and photographers and helping set the expectations of the project.

- The Ukraine and Pixelrace team, notably Artem Ivanenko on scanning the locations around Kyiv; and Maciej Żemojcin on coordinating the relationship and the production.

- Weide Zhang, creative technologist at the ArtCenter College of Design California, on building the VR experience and UI inside Unity.

- And finally, the entire Starling Lab team, involving notably Basile Simon on project direction and in-country deployment; Alisha Seam on technical advice to the in-country team; and Dean Moro on the production of the VR experience.

Note: Following this project, we were informed that the building containing the murals had been leveled and the location cleaned up. We thought the art was gone in its physical form, and only survived digitally. Two years later, we were excited to find a Google Maps contribution to an exhibition apparently supported by the municipality, and featuring the artworks.

Below, a photograph of the site where the residential building we scanned once stood, as well as a screen recording of the user-contributed video of the exhibition, dated June 2024.

Context

Our search for a project narrative and partner was one of compromises and of navigating constraints, the most important one being access to the field that we could all feel comfortable with. From a technical perspective, the idea was to demonstrate the capture of a scene for photogrammetric reconstruction, and to do so using consumer hardware in a minimal amount of time.

In the end, the team decided to rely on a location known to Artem: a group of residential buildings in Borodyanka (Бородянка, a suburb in the Kyiv Oblast, northwest of the capital), which received artillery fire at the onset of the ground invasion, and notably around the time fighting occurred around Bucha. Following the withdrawal of the Russian troops, several international artists produced artworks on the destroyed buildings. Among them were two stencil artists: Banksy, of international fame, and C215, from Paris. The latter, real name Christian Guémy, has produced several artworks of this kind in Ukraine over trips between March and April 2022.

These artworks were documented by Artem and the Murals team, and the locations in the region are well known to them, as Artem and his team have carried out numerous trips to photograph the pieces, ruins, and surroundings. This familiarity with the location supported our risk assessment by reducing both the time spent in the field and the time spent in unknown locations, a notable challenge when considering wandering around destroyed buildings and rubble.

Artem’s goal was to capture photographs of the ruined apartment hosting the stencil murals from C215, so that the team could later produce a photogrammetric 3D model of the scene. The photos Artem would produce would be strongly authenticated (see Technology section below): they would be accompanied by strong guarantees of integrity and provenance. The 3D model created from these photographs would itself be viewable through a virtual reality (VR) headset, allowing the viewer to navigate inside the ruins, as one would look around an apartment, and to look at the whole scene or its details, rendered photorealistically.

Framework

The Challenge

The missing link in the extended reality trust chain is a byproduct of presenting a 3D model to the viewer through the VR headset. This model is the result of a photogrammetric computation, essentially matching features (e.g. cracks in the wall, furniture edges, etc.) across several photographs.

This 3D model does not account for the extra information we have about the strongly authenticated original photographs. Nowhere can the metadata be presented, and there are no mechanisms to compare the work of the rendering computer against the actual original photographs.

The Prototype

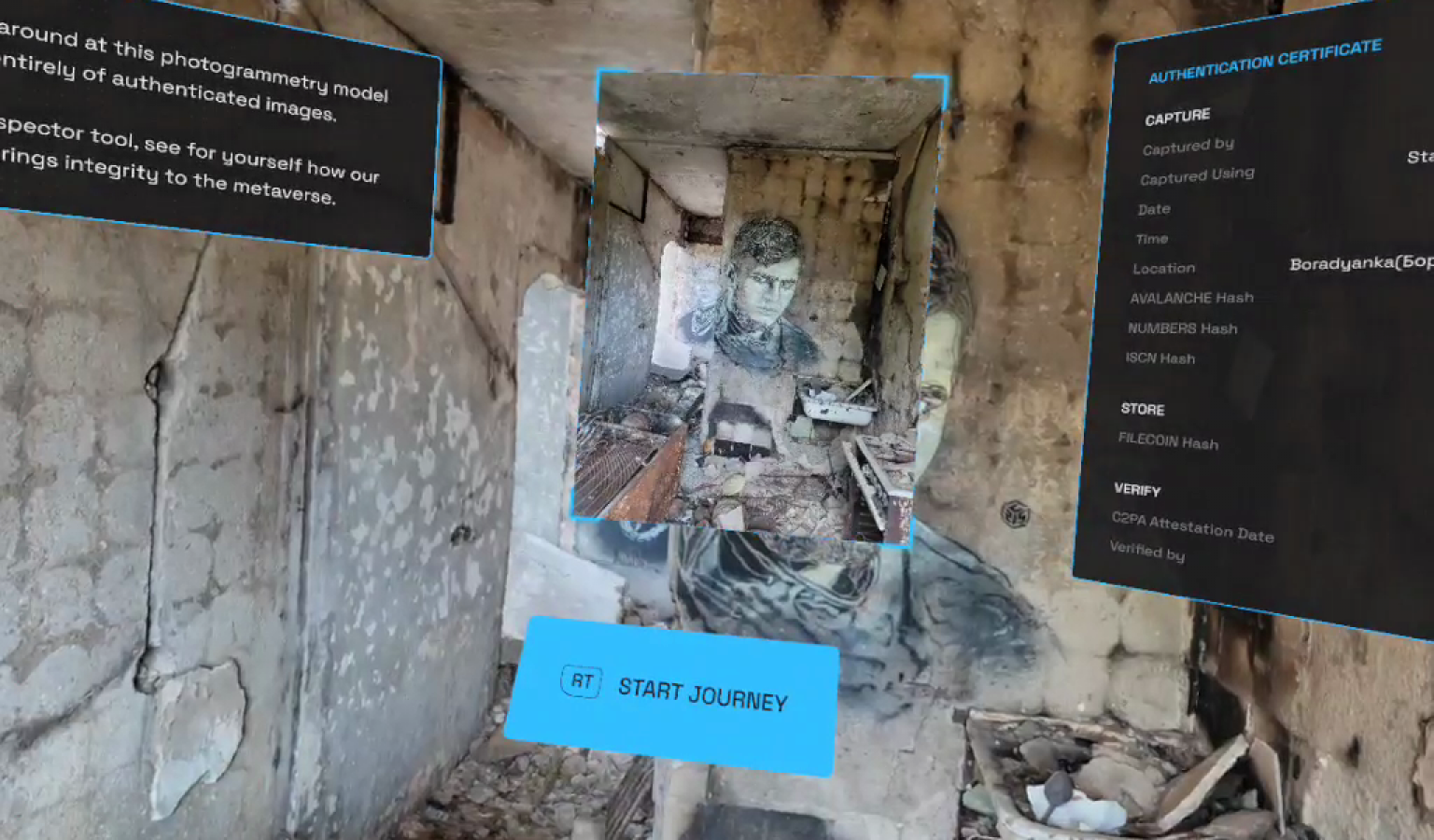

Until now. To bridge this gap between strong, contextualized, metadata-rich photographs and a complex computation, we produced a user interface study and prototype surfacing the integrity data we have available for the original photos.

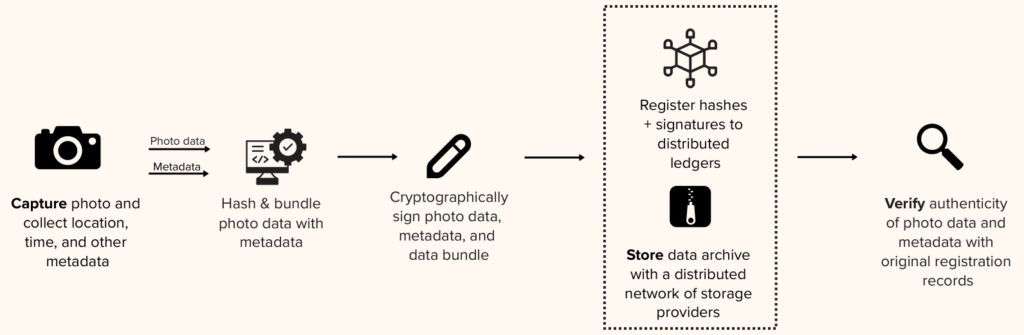

The prototype draws from our cross-disciplinary research in producing and leveraging authenticated data, best summarized by the three-step “Capture, Store, Verify” framework. The last step, Verify, relates to permitting some verifications and inspections of the material, but also to communicating the value of having authentication data about a piece of digital media: What does it mean to me? To what extent does it affect the trust I might have in the material presented to me?

In this prototype, and inside the VR experience, the viewer is presented with means to explore not only the 3D space but also the original photographs, as well as relevant integrity data, in support of our research questions.

From authenticated photographs of the apartment ruins and murals in Ukraine, we produced a photogrammetric model, which we presented inside a Meta Quest VR headset through a Unity render. Inside this 3D space, we gave exploration tools to the viewer accessible from the physical controls linked to the headset.

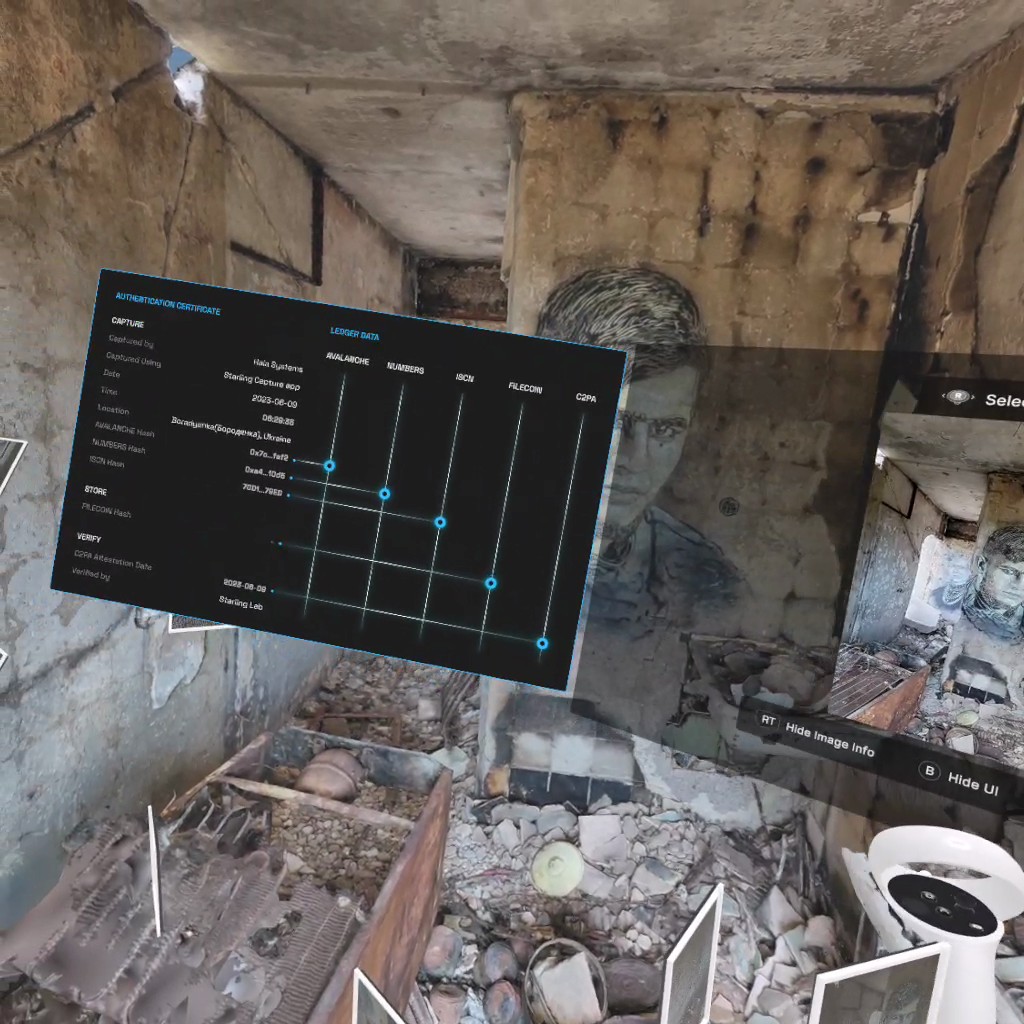

Within this photorealistic reconstruction, viewers now have a virtual heads-up display UI permitting them to trace all the way back to the original photographs, and to inspect relevant integrity data.

Technology

Capture

Artem captured detailed photographs of the site using a Samsung Galaxy Note 20 Ultra, an Android smartphone equipped with a high-end camera lens. The photos were processed through Starling Capture, an early iteration of what would later evolve into the Numbers Capture app. The app, built to be open source and available for public scrutiny, handled both the secure transmission and the cryptographic signing of the images, using a robust flow available on GitHub.

The Starling Capture app collected rich contextual metadata along with each photograph, including timestamp and GPS coordinates. The app then computed a unique cryptographic hash for each image and bundled this metadata for digital signing. The cryptographic signature, which tied the data to a secure, private key on Artem’s device, ensured both the authenticity and the integrity of the photographs. These signed data bundles were then securely transmitted to the Starling server infrastructure for further processing.
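The capture steps described above can be sketched roughly as follows. This is a minimal illustration and not the Starling Capture implementation: the field names, the helper functions (`build_bundle`, `canonical_bytes`, `sign_bundle`, `verify_bundle`), and the canonical JSON encoding are all assumptions. HMAC-SHA256 stands in for the device-held private key signature only so that the sketch runs with the Python standard library; a real app would use an asymmetric signature scheme.

```python
import hashlib
import hmac
import json


def build_bundle(image_bytes: bytes, timestamp: str, lat: float, lon: float) -> dict:
    """Bundle the image hash with contextual metadata (hypothetical field names)."""
    return {
        "sha256": hashlib.sha256(image_bytes).hexdigest(),
        "timestamp": timestamp,
        "gps": {"lat": lat, "lon": lon},
    }


def canonical_bytes(bundle: dict) -> bytes:
    """Serialize deterministically so the same bundle always yields the same bytes."""
    return json.dumps(bundle, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_bundle(bundle: dict, device_key: bytes) -> str:
    """Stand-in for the device signature (assumption: HMAC instead of a private key)."""
    return hmac.new(device_key, canonical_bytes(bundle), hashlib.sha256).hexdigest()


def verify_bundle(bundle: dict, signature: str, device_key: bytes) -> bool:
    """Recompute the signature over the bundle and compare in constant time."""
    return hmac.compare_digest(sign_bundle(bundle, device_key), signature)


# Example with placeholder values (not real capture data).
bundle = build_bundle(b"placeholder image bytes", "2022-01-01T00:00:00Z", 0.0, 0.0)
signature = sign_bundle(bundle, device_key=b"placeholder-device-key")
```

Because the signature covers the image hash together with the metadata, changing either the pixels or a field such as the timestamp invalidates it.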

Store

Upon receipt at Starling, the data bundles were subjected to rigorous validation procedures. The validated images, along with their corresponding cryptographic metadata, were registered on multiple independent cryptographic ledgers. These included the Numbers blockchain, which provided fast and efficient registration; Avalanche, for its flexibility and speed in handling integrity proofs; and LikeCoin, where records were aligned with the International Standard Content Number (ISCN) specification.

The authenticated and signed bundles were then encrypted and stored on Starling’s secure storage pools. These storage servers feature state-of-the-art encryption at rest, protecting the data against unauthorized access or tampering. While the prototype user interface referenced an IPFS hash value—indicating the potential for decentralized storage on the IPFS network—the data was not uploaded to IPFS at this stage.

Verify

Verification of the images involved validating the original cryptographic signatures and associating the photographs with a C2PA stamp. This stamp, issued under the Starling certificate authority, served as an additional layer of authentication, clearly marking the photographs as unaltered and verified by a trusted source. The verified images were then employed to create a photogrammetric model of the scanned location.
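One way to picture the gate between verification and reconstruction is a filter that only lets through photographs whose recomputed hash matches the hash registered at capture time. The sketch below is an assumption about how such a check could look, not the Lab's pipeline; `select_verified` and its inputs are hypothetical, and signature and C2PA validation are not shown.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of a byte string."""
    return hashlib.sha256(data).hexdigest()


def select_verified(photos: dict, registered: dict) -> tuple:
    """Split photo names into (verified, rejected).

    photos:     name -> image bytes as received
    registered: name -> SHA-256 hex digest recorded at capture (hypothetical store)
    """
    verified, rejected = [], []
    for name in sorted(photos):
        if registered.get(name) == sha256_hex(photos[name]):
            verified.append(name)
        else:
            # Either the bytes changed or no record exists for this photo.
            rejected.append(name)
    return verified, rejected
```

Only the names in the first list would then be handed to the photogrammetry software, so that every input to the model has a matching integrity record.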

For presentation purposes, the photogrammetric model was rendered using the Unity 3D engine. A demonstration of this model was showcased through a Meta Quest VR headset, with side-loading used to deploy the model into the VR environment. This immersive setup allowed viewers to explore the rendered location while ensuring that all elements of the model could be traced back to their verified, original sources.

To enhance the credibility of the presented data, the user interface aggregated and displayed various forms of integrity information. The C2PA manifests, cryptographic hashes, and corresponding Content Identifiers (CIDs) were all made available for inspection. Additionally, the UI provided references to the Avalanche, Numbers, and ISCN records, alongside a hash of the Filecoin deal, which documented the agreement for decentralized storage of the original photographs. This multi-layered verification system ensured transparency and the ability to independently confirm the authenticity and integrity of the data.
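As an illustration of what such an aggregated view might hold for each photograph, the sketch below models one integrity record and turns it into short text lines suitable for a heads-up panel. The `IntegrityRecord` class, its field names, and the `shorten` and `panel_lines` helpers are hypothetical, and every value shown is a placeholder rather than a real identifier from the project.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class IntegrityRecord:
    """Integrity data surfaced for one source photograph (hypothetical schema)."""
    photo_name: str
    sha256: str
    cid: str
    c2pa_manifest: str
    avalanche_record: str
    numbers_record: str
    iscn_record: str
    filecoin_deal: str


def shorten(value: str, keep: int = 8) -> str:
    """Abbreviate long identifiers so they fit on a small in-headset panel."""
    if len(value) <= 2 * keep + 3:
        return value
    return value[:keep] + "..." + value[-keep:]


def panel_lines(record: IntegrityRecord) -> list:
    """One 'label: value' line per field, in declaration order."""
    return [
        f"{f.name.replace('_', ' ')}: {shorten(getattr(record, f.name))}"
        for f in fields(record)
    ]


# Placeholder values only; none of these are real records.
example = IntegrityRecord(
    photo_name="IMG_0001.jpg",
    sha256="0" * 64,
    cid="<content-identifier>",
    c2pa_manifest="<c2pa-manifest-reference>",
    avalanche_record="<avalanche-record>",
    numbers_record="<numbers-record>",
    iscn_record="<iscn-record>",
    filecoin_deal="<filecoin-deal-hash>",
)
lines = panel_lines(example)
```

Keeping the full values in the record and abbreviating only at display time means the viewer can still be offered the complete identifier for independent checking.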

Learnings

There are constraints to the use of photogrammetry

In the realm of 3D modeling and mapping, photogrammetry, while powerful, faces several limitations. The technique is inherently time-consuming, often requiring extended periods for data capture and processing. Accessibility to specific locations for on-site imaging can pose challenges, particularly when rapid documentation is critical. Furthermore, photogrammetry struggles with certain types of objects: those with minimal distinctive features, such as white painted walls, reflective surfaces, scenes with extremes of dark and bright areas, or moving targets, often yield unsatisfactory results.

These constraints, however, are being addressed by the advent of Neural Radiance Fields (NeRF) models and 3D Gaussian Splatting (3DGS), which promise significant improvements in ease of capture, efficiency, and versatility in 3D imaging.

Photogrammetry uniquely benefits from quality photographs, and phones aren’t the best

The quality of source photographs is crucial, and smartphones, while convenient, often fall short compared to dedicated cameras. Successful photogrammetry requires sharp, minimally processed, low-noise photos, something that smartphone cameras sometimes struggle to deliver. Using a consumer-grade smartphone involves trade-offs: while accessible, a phone yields slower processing, lower quality results, and requires more effort than a professional camera.

Photorealism and heads-up display

The fidelity and clarity of the 3D model drew comparisons to an augmented reality (AR) experience, in which a virtual user interface is overlaid on the physical environment. Combining a photorealistic rendering with a heads-up display blends digital and real-world elements in a way that enhances user interaction and perception, and this immersive quality is noteworthy in both technical and legal contexts.

Display of the cryptographic proofs

Even in VR, the presentation of cryptographic proofs still very much relies on a user’s understanding of complex concepts, yet there is a noticeable gap in user research and feedback on these designs. This lack of engagement in the user experience and research process raises concerns about effectiveness and accessibility. For a technical and legal audience, it highlights the necessity of bridging this knowledge gap to ensure that users can confidently interact with and understand the security and authenticity mechanisms in place.

On the generation of the 3D models

In the generation of 3D models, there exists a notable lack of clarity regarding their deterministic properties. This ambiguity raises concerns in the legal and forensic spheres, as these properties are crucial in establishing the authenticity and provenance of digital evidence. Current content and metadata authentication systems do not fully capture these nuances, potentially leading to a gap in the chain of provenance. This oversight underscores the need for more robust protocols in the verification and validation of 3D model data in legal and forensic investigations.
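To make the determinism question concrete, one could record a small manifest for each reconstruction run, linking the hash of the output model to the hashes of its source photographs, and then compare runs. The sketch below is only an assumption about how such a check might be framed; `model_manifest`, `same_inputs`, `reproducible`, and the `tool` label are hypothetical names, not part of any existing authentication system.

```python
import hashlib


def model_manifest(model_bytes: bytes, source_hashes: list, tool: str) -> dict:
    """Link a reconstructed model to the photographs and tool that produced it."""
    return {
        "model_sha256": hashlib.sha256(model_bytes).hexdigest(),
        "sources": sorted(source_hashes),  # order-independent list of photo hashes
        "tool": tool,                      # hypothetical label for software and version
    }


def same_inputs(a: dict, b: dict) -> bool:
    """True when two runs used the same photographs and the same tool."""
    return a["sources"] == b["sources"] and a["tool"] == b["tool"]


def reproducible(a: dict, b: dict) -> bool:
    """True when identical inputs also produced a byte-identical model."""
    return same_inputs(a, b) and a["model_sha256"] == b["model_sha256"]
```

If two runs satisfy `same_inputs` but not `reproducible`, the model hash alone cannot anchor provenance, which is one reason to keep the authenticated source photographs explicitly attached to the model.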

Further work

A continuation of this project aims to interrogate the benefits and limitations of this type of reconstruction in legal settings. The use of scene capture is of interest to both law enforcement and first responders for initial rapid documentation, as well as to investigators and trial teams for potential examination and analysis. As the reconstruction work implies computations and, for new algorithms, an element of machine learning and non-deterministic processes, we argue that the use of anchors and the explicit surfacing of the original, strongly authenticated material provide guarantees and clues of explainability, and support the verification of the model.