ComfyUI C2PA Signer

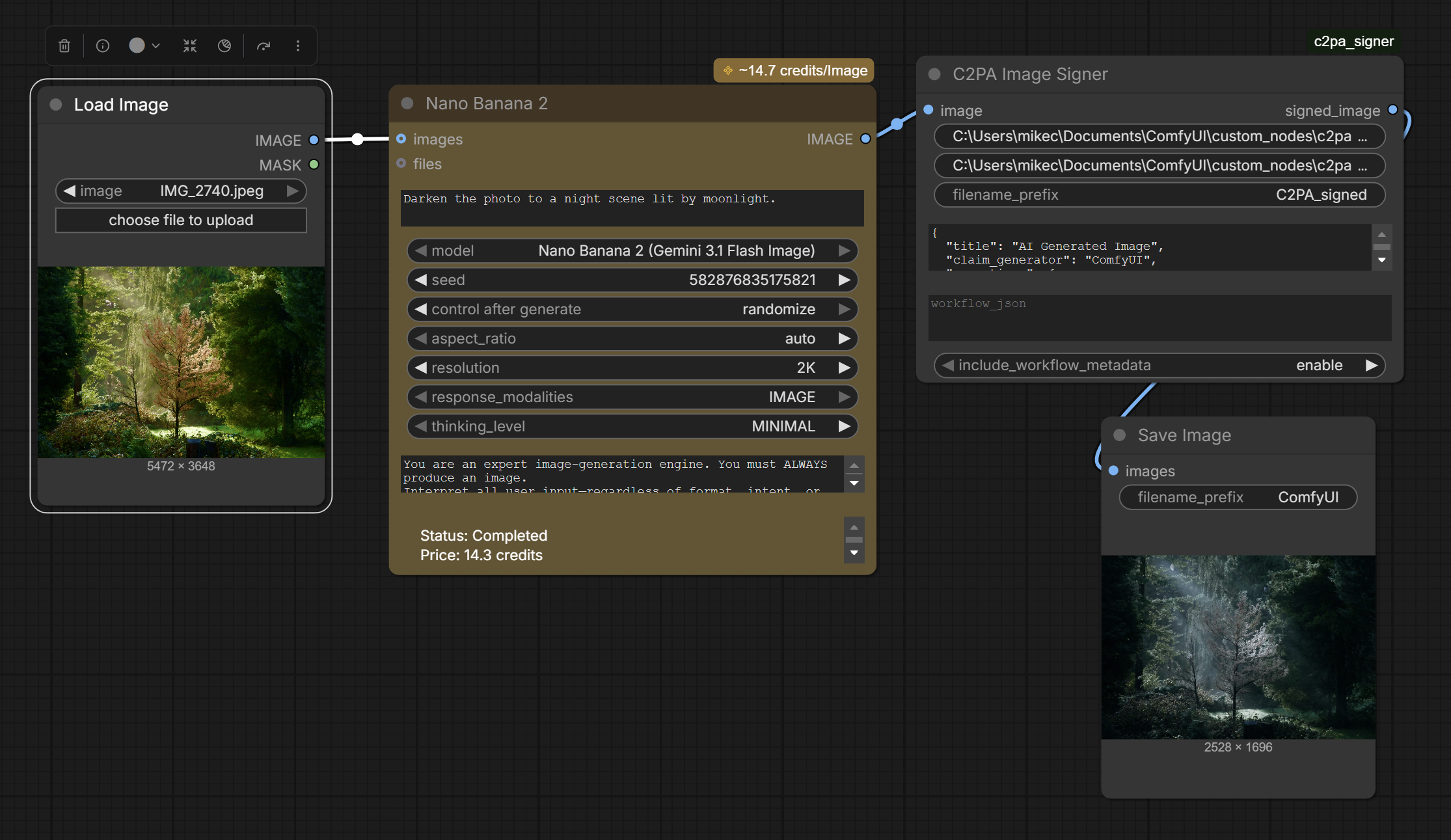

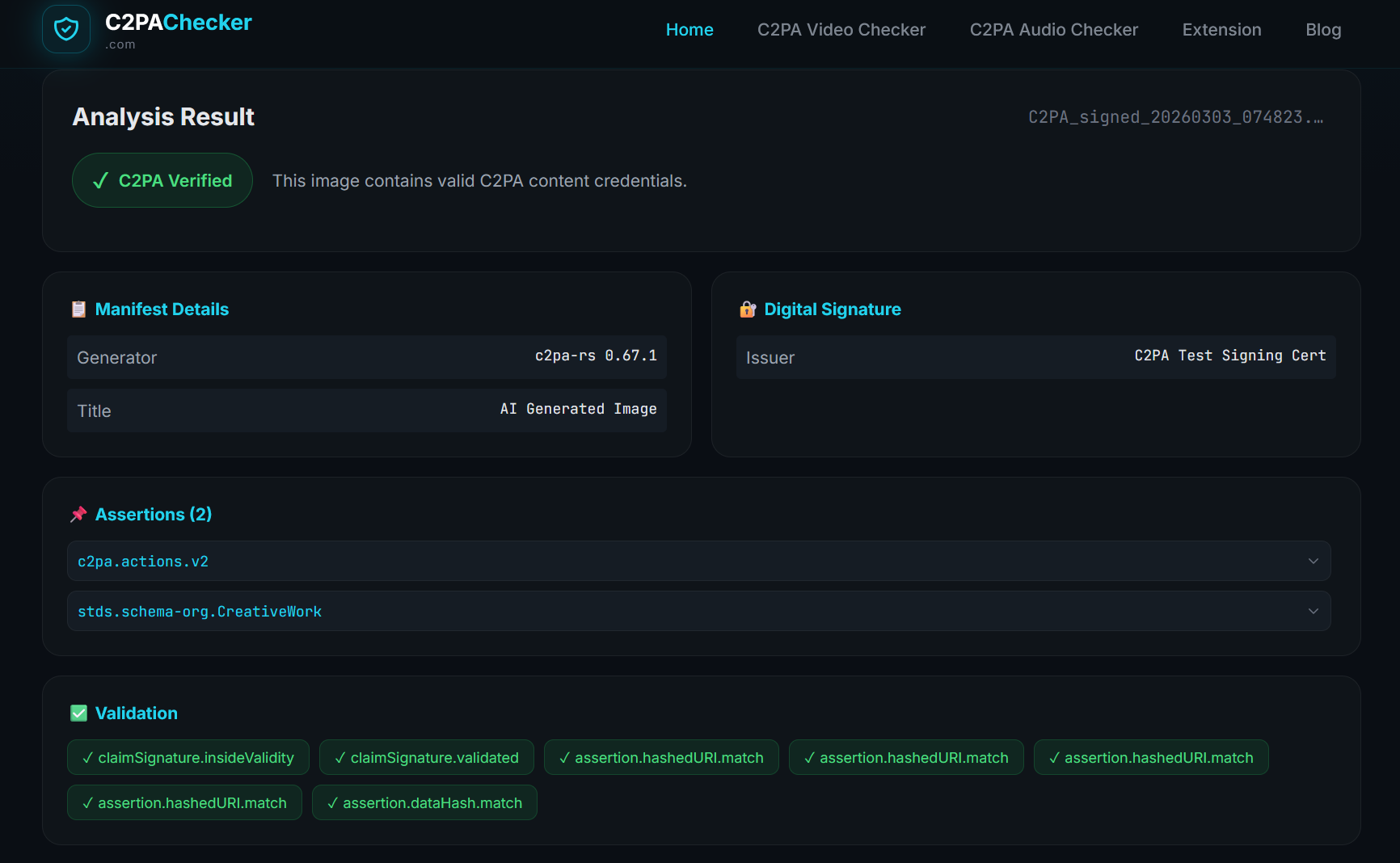

The ComfyUI C2PA Signer is an open-source custom node that integrates cryptographic content credentials directly into generative AI workflows. By signing synthetic media at the moment of generation within the ComfyUI interface, it allows creators to transparently certify that an image was AI-generated, which models and parameters produced it, and who authored it. The node is available on GitHub.

The Problem

Generative AI tools like Stable Diffusion have democratized the creation of hyper-realistic imagery, but they lack native mechanisms for provenance. Responsible creators have no standardized way to disclose that their output is synthetic, leaving their work indistinguishable from both authentic field captures and malicious deepfakes. This gap fuels the “liar’s dividend”: bad actors can dismiss genuine evidence of their wrongdoing as AI-generated, while audiences find it increasingly difficult to identify what is real.

The Solution

We built the ComfyUI C2PA Signer to address a gap in the open-source AI creative ecosystem: the absence of any native provenance mechanism in the tools creators actually use. ComfyUI is one of the most widely adopted interfaces for Stable Diffusion workflows, and until now, none of its output carried content credentials.

Our custom node automates the application of C2PA Content Credentials as an image is rendered. It intercepts the output and cryptographically binds a compliant manifest directly to the file, explicitly flagging the content as AI-generated using the trainedAlgorithmicMedia digital source type, the IPTC-defined value that C2PA uses to mark content produced by machine learning models. The signed file then returns to the ComfyUI workflow for further processing with its provenance intact.
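To make the manifest step concrete, here is a minimal sketch of the kind of manifest definition such a node could assemble before signing. It is an illustration under stated assumptions, not the node's actual code: the function name `build_manifest`, the claim generator string, and the `com.example.generation` assertion are hypothetical, and signing itself is omitted because it depends on the C2PA library and certificate in use. The `c2pa.actions` assertion with a `c2pa.created` action and the IPTC source-type URI follow the layout commonly used in C2PA manifest definitions.

```python
import json

# IPTC digital source type URI for media created by a trained model.
TRAINED_ALGORITHMIC_MEDIA = (
    "http://cv.iptc.org/newscodes/digitalsourcetype/trainedAlgorithmicMedia"
)


def build_manifest(title, author, model_name, parameters):
    """Return a manifest definition (plain dict) for an AI-generated image.

    Hypothetical helper: the real node may structure its manifest differently.
    """
    return {
        # Assumed identifier for the signing software.
        "claim_generator": "hypothetical-comfyui-signer/0.1",
        "title": title,
        "assertions": [
            {
                # Standard actions assertion: the asset was created,
                # and its source type marks it as model-generated.
                "label": "c2pa.actions",
                "data": {
                    "actions": [
                        {
                            "action": "c2pa.created",
                            "digitalSourceType": TRAINED_ALGORITHMIC_MEDIA,
                        }
                    ]
                },
            },
            {
                # Hypothetical custom assertion carrying the model,
                # generation parameters, and author.
                "label": "com.example.generation",
                "data": {
                    "model": model_name,
                    "parameters": dict(parameters),
                    "author": author,
                },
            },
        ],
    }


if __name__ == "__main__":
    manifest = build_manifest(
        "render.png", "Example Author", "example-model", {"steps": 30, "seed": 42}
    )
    print(json.dumps(manifest, indent=2))
```

A signing library would take a definition like this, hash the image, and embed the signed manifest in the output file; a verifier can then read the source type to see that the image was disclosed as AI-generated.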

The node is open source, installable as a standard ComfyUI custom node, and designed to integrate into existing creative workflows without requiring changes to the generation pipeline itself.
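For readers unfamiliar with ComfyUI custom nodes, the sketch below shows the general shape such a node takes: a class that declares its inputs and outputs, plus a module-level mapping that ComfyUI reads when it loads the package. The class name, category, and `author` input are hypothetical, and the signing step is a placeholder; the actual node's interface may differ.

```python
class HypotheticalC2PASignNode:
    """Pass-through node sketch: takes an image, would sign it, returns it."""

    # Where the node appears in the ComfyUI node menu (assumed category name).
    CATEGORY = "provenance"
    # The node outputs a single IMAGE so downstream nodes keep working.
    RETURN_TYPES = ("IMAGE",)
    # Name of the method ComfyUI calls when the node executes.
    FUNCTION = "sign"

    @classmethod
    def INPUT_TYPES(cls):
        # Declares the sockets and widgets the node exposes.
        return {
            "required": {
                "image": ("IMAGE",),
                "author": ("STRING", {"default": ""}),
            }
        }

    def sign(self, image, author):
        # Placeholder: a real implementation would build a manifest,
        # sign the encoded image, and write the credentialed file here.
        return (image,)


# ComfyUI discovers nodes through these module-level mappings.
NODE_CLASS_MAPPINGS = {"HypotheticalC2PASignNode": HypotheticalC2PASignNode}
NODE_DISPLAY_NAME_MAPPINGS = {"HypotheticalC2PASignNode": "C2PA Sign (sketch)"}
```

Because the node takes an image in and hands an image back, it can be wired between an existing decode step and a save step without altering the nodes upstream of it.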

JOURNALISM — Newsrooms increasingly encounter AI-generated images submitted as authentic. A standardized disclosure mechanism at the point of creation shifts the burden from reactive detection to affirmative proof, giving editors a verifiable signal before publication.

HISTORY — Archivists working with digitized collections need to clearly distinguish AI-enhanced or AI-reconstructed materials from unaltered originals. C2PA signing at the generation step prevents synthetic materials from entering the historical record without transparent labeling.

LAW — As AI-generated imagery becomes more photorealistic, legal proceedings require reliable methods to establish whether visual evidence is captured or synthetic. Content credentials applied at generation create an auditable record of origin.