Companion Secure Enclave Authentication

History

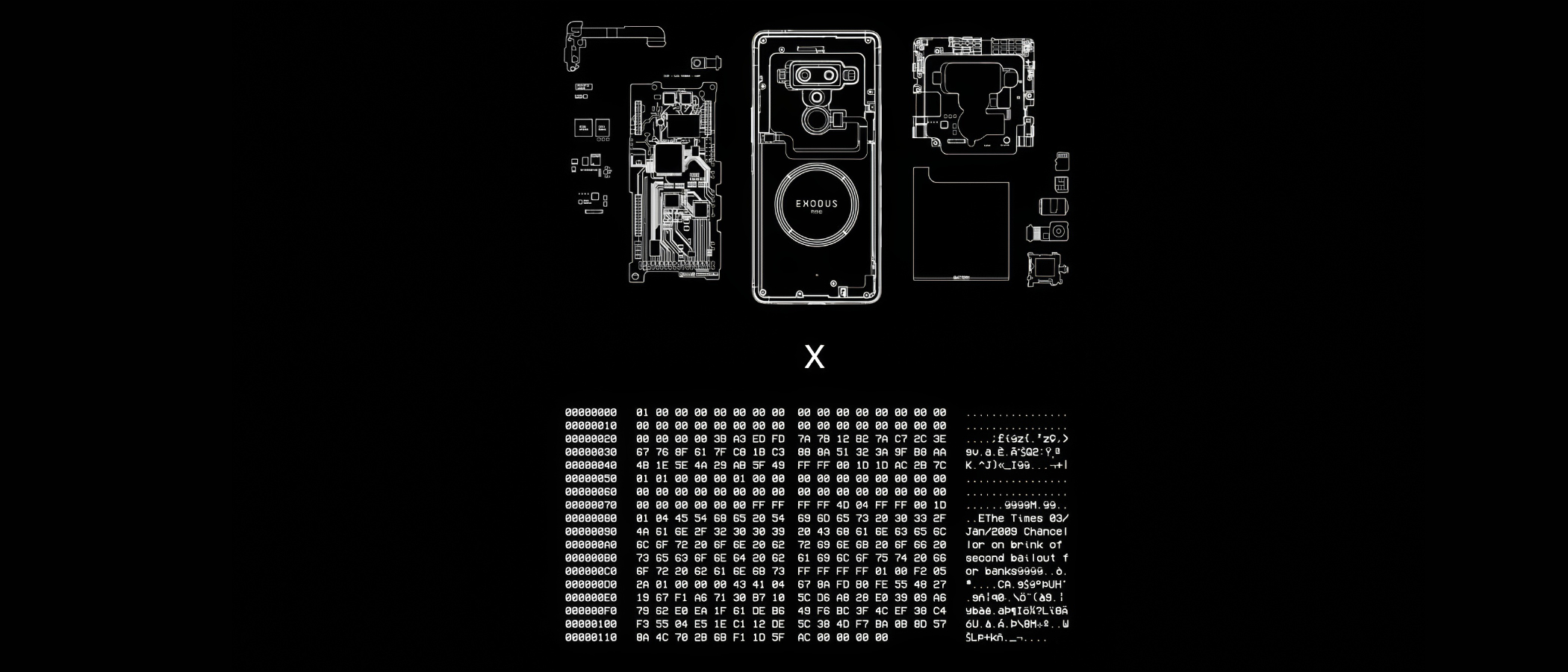

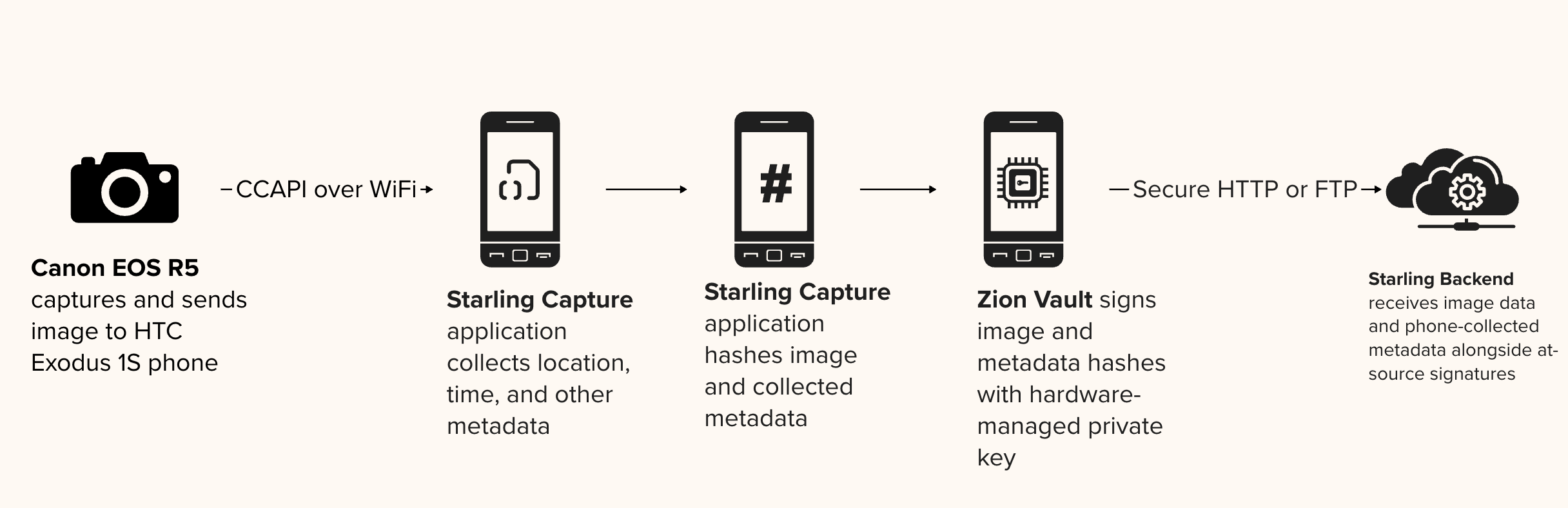

Companion Secure Enclave Authentication provides a “secure bridge” for professional photojournalism by tethering standalone cameras to mobile devices with hardware-level security. By pairing a professional camera with a smartphone’s secure enclave (such as the HTC Zion Vault), this prototype establishes a root-of-trust for images that traditional cameras cannot natively sign.

This method ensures that every photo is cryptographically sealed with a unique digital signature and sensor-rich metadata at the exact location and time of capture, creating an unalterable record of reality.

YEAR

2020-24

PARTNERS

HTC

Inside Climate News

Bay City News

Numbers

The Problem

Most professional cameras used in the field lack the internal hardware necessary to cryptographically sign assets or protect signing keys. Without a tamper-evident seal, digital photographs and their metadata (such as GPS and timestamps) are vulnerable to manipulation by AI tools or bad actors.

As these unverified images circulate, they lose their essential context, making it nearly impossible to determine the original version or defend against cheap- or deepfake allegations that distort the facts reported by photojournalists.

The Solution

Starling Lab pioneered a workflow that utilizes the hardware secure enclave of a companion smartphone to sign media from high-end cameras.

By tethering a professional camera (such as a Canon R5) to an HTC Exodus 1S phone via WiFi or USB, the Starling Capture app (co-developed with Numbers) instantly receives captured media. The phone’s Zion Vault hardware-secured signer then generates a cryptographic hash of the image and its associated sensor data (barometer, gyroscope, and GPS), sealing it with a private key that never leaves the device’s protected silicon.
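As a rough illustration of the hashing step described above, the sketch below binds an image to its sensor readings in a single digest. It is a minimal sketch, not the Starling Capture implementation: the function name `capture_digest`, the field names, and the sample readings are all hypothetical, and the hardware signing performed by the Zion Vault is not shown.

```python
import hashlib
import json


def capture_digest(image_bytes: bytes, sensor_data: dict) -> str:
    """Return a SHA-256 hex digest that binds an image to its sensor readings."""
    image_hash = hashlib.sha256(image_bytes).hexdigest()
    # Canonical JSON (sorted keys, fixed separators) so that the same
    # readings always serialize to the same bytes before hashing.
    record = json.dumps(
        {"image_sha256": image_hash, "sensors": sensor_data},
        sort_keys=True,
        separators=(",", ":"),
    ).encode("utf-8")
    return hashlib.sha256(record).hexdigest()


# Hypothetical readings; the field names are illustrative assumptions.
sensors = {
    "gps": {"lat": 37.9577, "lon": -121.2908},
    "barometer_hpa": 1013.2,
    "gyroscope": [0.01, -0.02, 0.0],
}
digest = capture_digest(b"raw image bytes", sensors)
```

In a workflow like the one described, a digest of this kind is what the secure enclave would sign; changing a single byte of the image or any sensor value produces a different digest.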

CASE STUDIES

Stockton Homelessness: In 2022, Bay City News photojournalists documented the homelessness crisis in Stockton, CA, using Canon R5 cameras paired with HTC devices. These “authenticated time capsules” provided a verifiable record that challenged official statements and misinformation surrounding local funding disparities.

Brazil Pantanal: Photographer Felipe Albarenga documented the 2020 wildfires in the world’s largest wetland. By using the companion secure enclave, Albarenga created a tamper-evident archive of the devastation that could withstand the propaganda and denialism prevalent during the Brazilian presidential election.

Authenticated Web Archives

History

Accurate, reliable, simple to use, and secure workflows for archiving web content.

The Problem

Online content disappears rapidly, erasing critical evidence for investigative journalism, accountability, and cultural preservation. Social media platforms and hosting providers face pressure to implement stricter content moderation, with automated filters and human moderators making rapid decisions about what stays online. Records documenting potential crimes – especially those with violent imagery – risk being permanently deleted. Restoring content is often impossible: original posters may be arrested, lose device access, or no longer be alive when investigations begin.

Existing archiving methods face three challenges: platforms actively block automated crawlers, preserved content lacks the cryptographic verification and chain-of-custody documentation required for legal admissibility, and saved material becomes unsearchable across large collections.

JOURNALISM

Strong web archives provide a tamper-evident way to capture online evidence, safeguarding reporting against censorship and the erosion of digital sources.

HISTORY

These archives create a trustworthy and resilient collection of digital primary sources, ensuring that the ephemeral nature of the web does not erase our collective memory.

LAW

This technology establishes an unbreakable digital chain of custody, transforming fleeting web content into verifiable, court-admissible evidence.

The Solution

Starling is developing workflows using open source software for archiving web content to ensure the preserved archives are accurate and reliable, taking into consideration the sensitivity of the data. We draw from the considerable expertise deployed by national libraries and legal deposits from around the world.

Our case studies have experimented with forensically-sound web archiving, focusing on capturing broad contextual snapshots of web material.

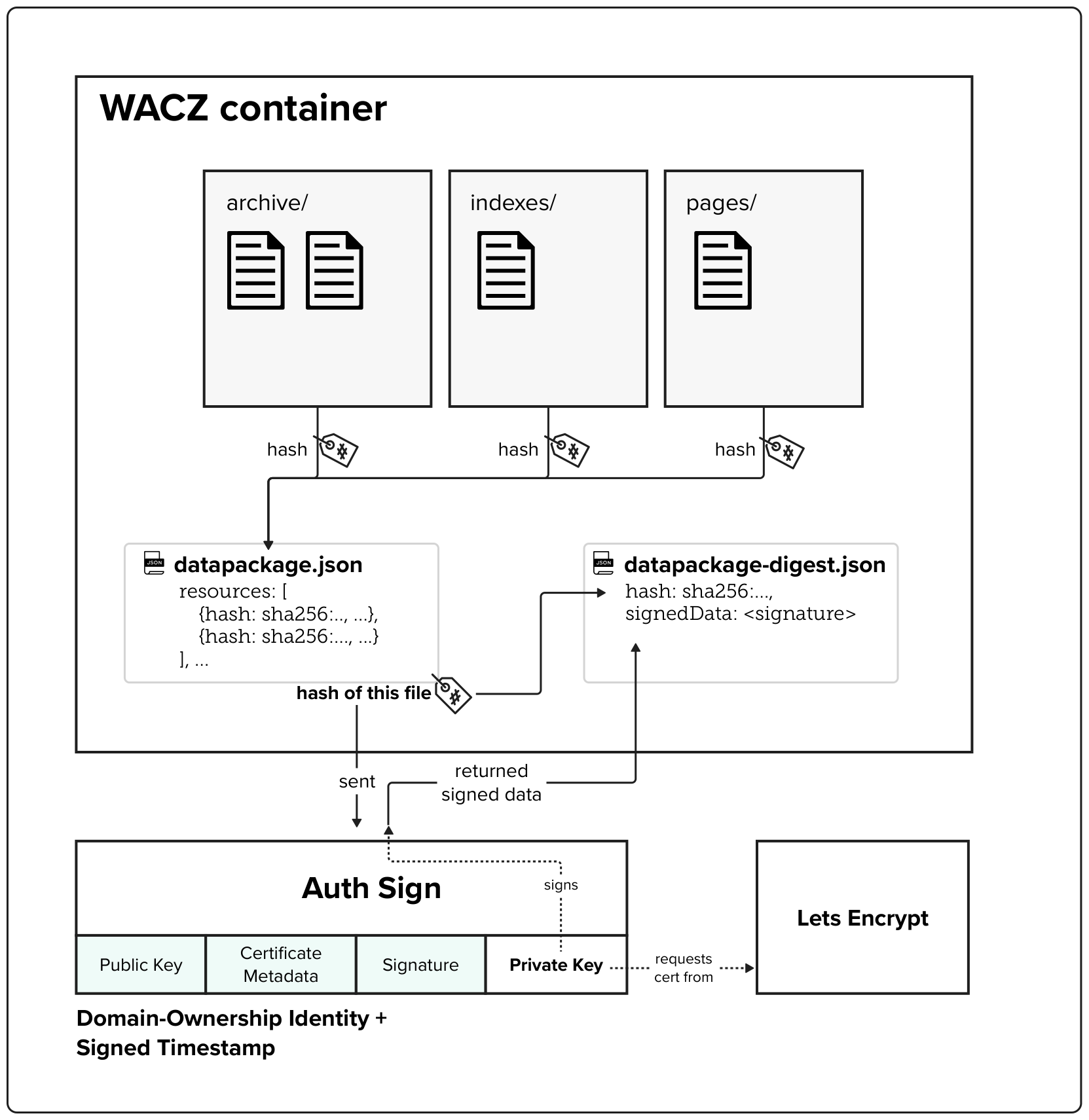

The WACZ standard and file format

The Web Archive Collection Zipped (WACZ) standard provides a portable packaging format for web archives that bundles WARC data, indexes, metadata, and verification information into a single ZIP file. Unlike traditional WARC files that lack contextual information and require complex server infrastructure for viewing, WACZ enables efficient browser-based rendering by organizing content with indexes that allow random access to only the data needed for each page.

Built-in Integrity Through Cryptographic Hashing

Every WACZ file includes a datapackage.json manifest that contains cryptographic hashes of all resources within the archive, providing a verifiable fingerprint to detect any unauthorized modifications. This hash-based integrity checking ensures that archived content remains tamper-evident throughout its lifecycle.
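The integrity check described above can be sketched in a few lines. This is a minimal sketch that assumes the manifest lists each file under a `resources` array with `path` and `hash` fields, where the hash is written as `algorithm:hexdigest`; the function names are hypothetical, and the WACZ specification should be consulted for the authoritative layout.

```python
import hashlib
import io
import json
import zipfile


def verify_wacz(wacz_file) -> dict:
    """Map each resource path listed in datapackage.json to True if its hash matches."""
    results = {}
    with zipfile.ZipFile(wacz_file) as archive:
        manifest = json.loads(archive.read("datapackage.json"))
        for resource in manifest.get("resources", []):
            # Assumed hash format: "<algorithm>:<hex digest>", e.g. "sha256:ab12..."
            algorithm, _, expected = resource["hash"].partition(":")
            try:
                data = archive.read(resource["path"])
            except KeyError:
                # The manifest names a file that is missing from the ZIP.
                results[resource["path"]] = False
                continue
            actual = hashlib.new(algorithm, data).hexdigest()
            results[resource["path"]] = actual == expected
    return results


def build_demo_wacz() -> io.BytesIO:
    """Build a tiny in-memory archive, for demonstration only."""
    payload = b'{"format": "json-pages-1.0"}\n'
    manifest = {
        "profile": "data-package",
        "resources": [{
            "name": "pages.jsonl",
            "path": "pages/pages.jsonl",
            "hash": "sha256:" + hashlib.sha256(payload).hexdigest(),
            "bytes": len(payload),
        }],
    }
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w") as archive:
        archive.writestr("pages/pages.jsonl", payload)
        archive.writestr("datapackage.json", json.dumps(manifest))
    buffer.seek(0)
    return buffer


report = verify_wacz(build_demo_wacz())
```

A result of `False` for any path means the file no longer matches the fingerprint recorded when the archive was created. This check covers only the hashes; it does not validate any signature over the manifest.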

Authentication Through Digital Signatures

The specification adds optional authentication capabilities by allowing creators to digitally sign archives – notably using TLS certificates. These signatures both validate the identity of the entity creating the archive (using X.509 SSL certificates) and establish a trusted timestamp for when the capture occurred.

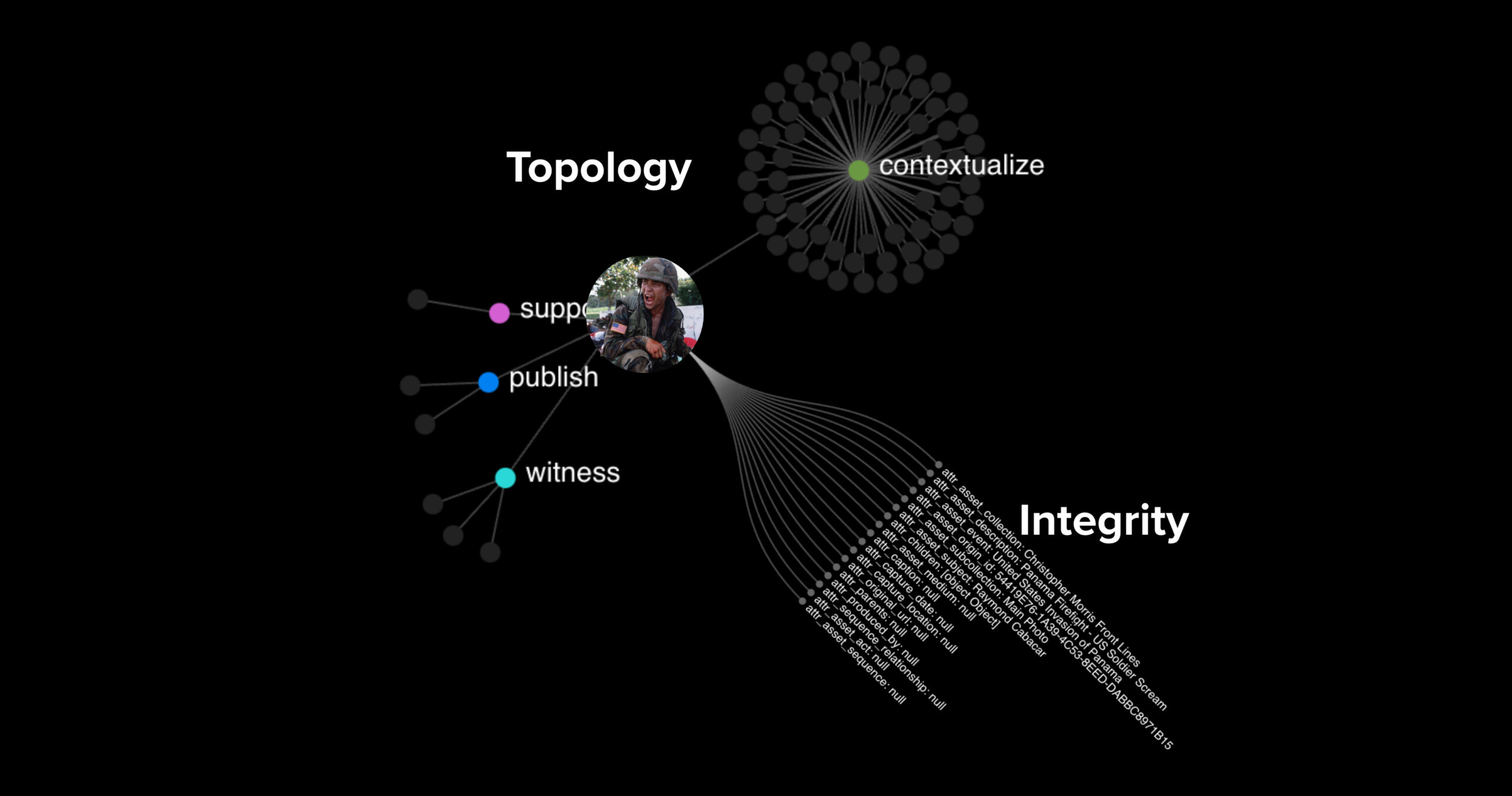

Working on Authenticated Data with Authenticated Attributes

History

Over the coming year, we are excited to focus on our new initiative, Authenticated Attributes, a design and prototype dedicated to enhancing the authenticity and trustworthiness of digital media. This effort addresses the growing challenges posed by AI-generated content, such as deepfakes, which make it increasingly difficult to distinguish between genuine and manipulated media.

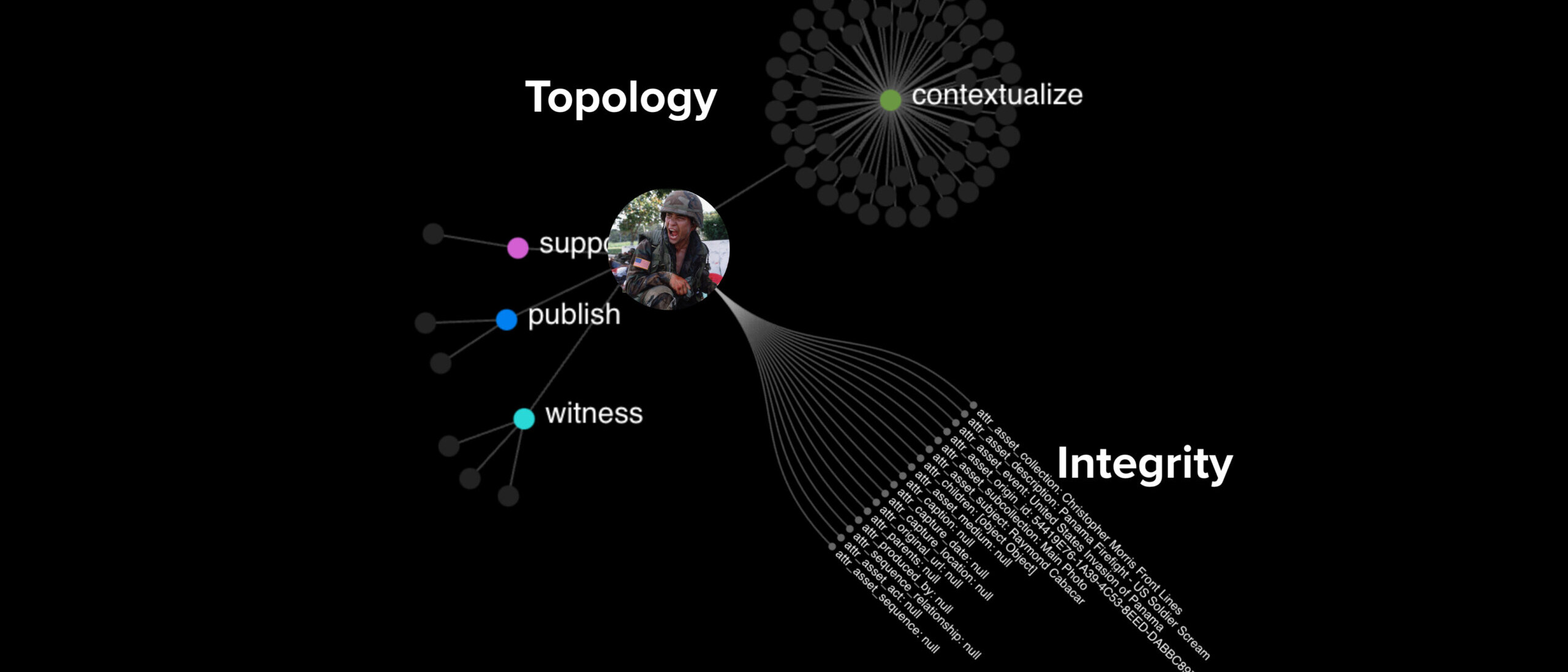

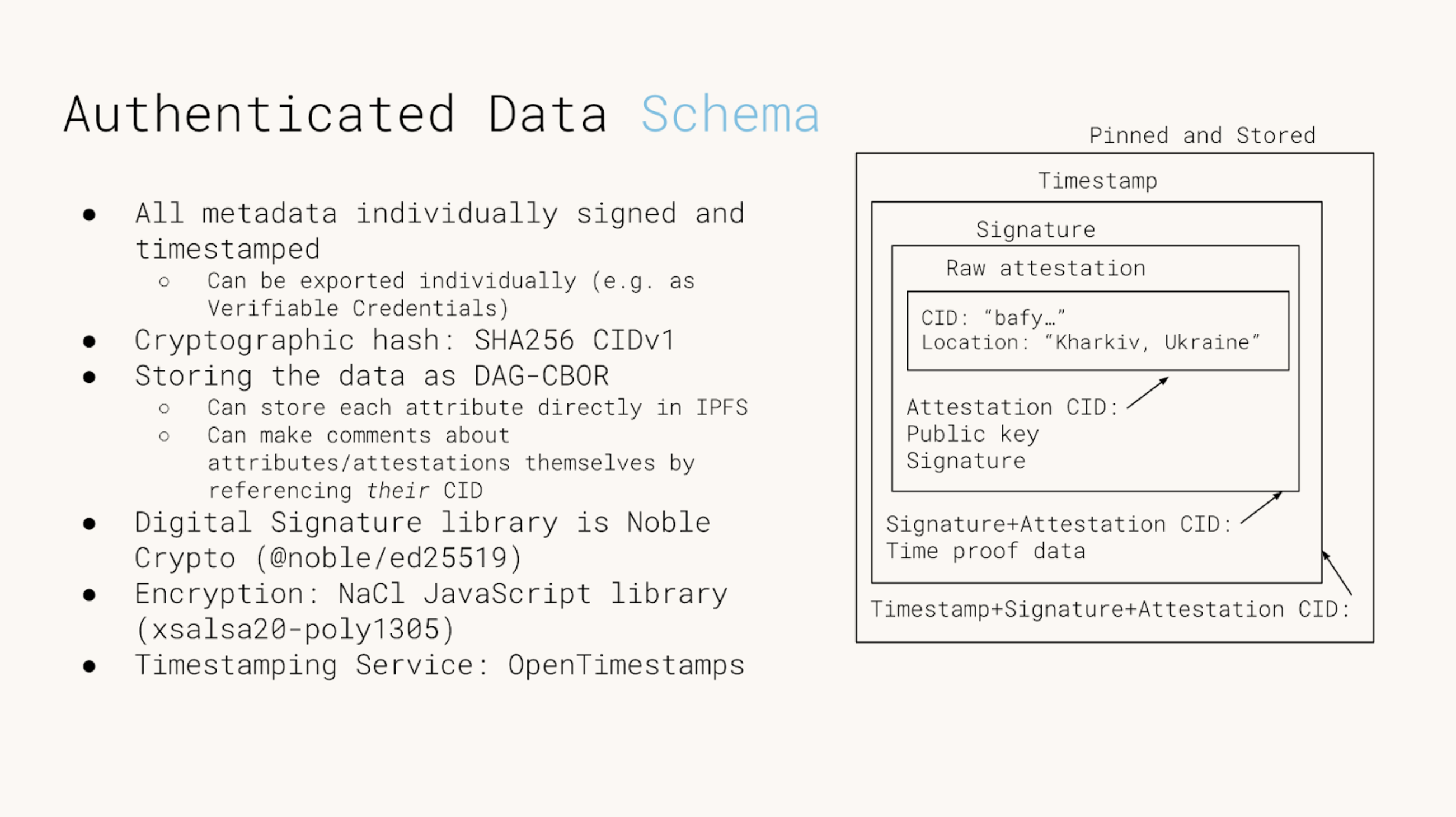

What is Authenticated Attributes?

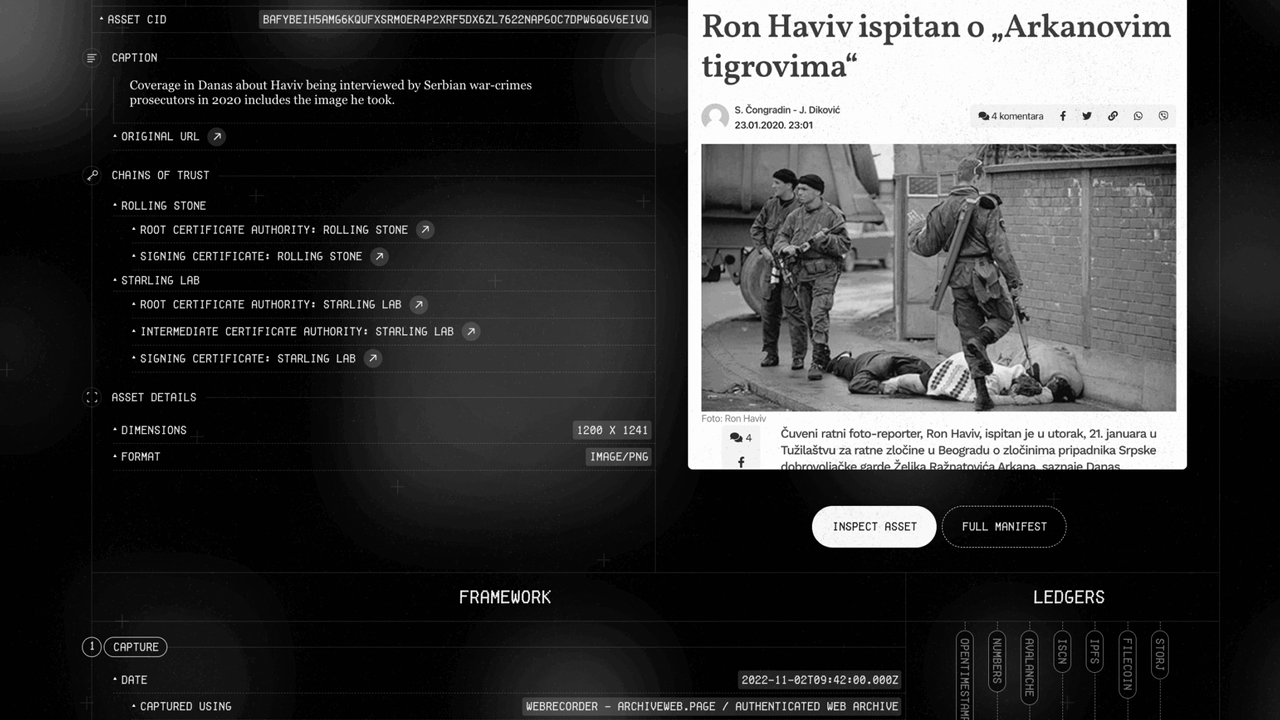

Authenticated Attributes aims to establish the integrity and authenticity of metadata for digital content. This project utilizes cryptographic tools such as hashing, timestamping, and digital signatures to achieve this, focusing on digital media. By leveraging these technologies, the Starling Framework provides a robust process that shifts the burden of proof from detecting falsifications to verifying and supporting authenticity. Authenticated Attributes permits investigators and journalists to understand and produce authenticated data, making their work more accessible and trustworthy.

The Challenge of Deepfake Detection

The current landscape of AI-generated content presents significant challenges. As AI technology advances, so does its ability to create realistic fake images and videos, making traditional detection methods obsolete.

Authenticated Attributes offers an alternative by ensuring that digital media can be verified independently, fostering trust in the content we consume.

The Technical Foundation

Our approach builds on three core cryptographic tools:

- Cryptographic Hashes: These unique identifiers act like digital fingerprints for files. Any alteration to the file results in a different hash, enabling the detection of tampering.

- Timestamping Services: By recording the hash of a file on a third-party service that establishes a trustworthy time (more on that in this dispatch), we can prove that the content existed at a specific time, which is crucial for verifying the authenticity of media.

- Digital Signatures: These provide a way to authenticate the source of information, no matter who provides it. They also ensure that the content has not been altered since it was signed.

So far, we have represented data as bundles composed of a main asset hash (often that of a piece of media), metadata, and integrity proofs. Authenticated Attributes does away with these monoliths of conjoined meaning and promotes a more atomic way to represent data: as atoms of facts related to a principal hash or CID. They’re simpler and more portable, and the relationships between them are much more explicit.
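To make the contrast concrete, the sketch below splits a bundle of metadata into individual facts that each point at the same asset identifier. It is an illustrative data model only: the `Attestation` class, its field names, and the sample values are hypothetical and do not reflect the project's actual schema.

```python
from dataclasses import dataclass
from typing import Any, List


@dataclass(frozen=True)
class Attestation:
    cid: str        # identifier (hash or CID) of the asset the fact is about
    attribute: str  # name of the fact, e.g. "description"
    value: Any      # the fact itself


def bundle_to_attestations(cid: str, metadata: dict) -> List[Attestation]:
    """Turn one monolithic metadata bundle into independent, per-fact records."""
    return [Attestation(cid, key, value) for key, value in sorted(metadata.items())]


# Placeholder identifier and metadata, for illustration only.
atoms = bundle_to_attestations(
    "example-cid",
    {"description": "School building after shelling", "captured_by": "Field team"},
)
```

Because each fact stands alone, it can be signed, timestamped, exported, or withheld independently of the others, which is what makes selective disclosure of sensitive fields practical.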

Verification and claims

Metadata about a piece of media, and its provenance, are verifiable following these steps:

- Acquiring or coming into contact with metadata: Metadata is stored in Authenticated Attributes with explicit reference to a specific media file. It can be retrieved from an Authenticated Attributes instance (e.g. as one would work inside a content-management system) or encountered separately, as an exported file (e.g. as a sidecar to a media file presented online).

- Verifying reference to the original media: The attestation (our term for a single piece of metadata) contains the CID of the specific asset, usually a media file. A potential verifier can hash their own media file, get the CID, and compare it to ensure the attestation they have refers to the same file.

- Verifying provenance and integrity: The verifier can check that the signature is valid, and was made by the entity they expect, such as a specific individual or news organization. This proves the attestation has not been tampered with, and was authored by an entity they trust.

- Verifying the time origin: They can also check the timestamp proof. This operation combines information in the attestation timestamp proof with a calendar server and the Bitcoin blockchain, to prove that the attestation was made before a certain time. If this is valid, then the verifier can know that the attestation (including the signature) was made before a specific time, and has not been modified since. This “locks in” metadata to prevent organizations from secretly altering it in the future.
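The reference and provenance checks in the steps above can be sketched as follows. This is a simplified sketch under stated assumptions: a plain SHA-256 digest stands in for the CID, the attestation is a dictionary with hypothetical keys (`asset_sha256`, `signer`), and signature checking is delegated to a caller-supplied function because the signature scheme is not specified here. Timestamp proof validation is not covered.

```python
import hashlib
from typing import Callable


def verify_attestation(
    attestation: dict,
    media_bytes: bytes,
    trusted_signers: set,
    check_signature: Callable[[dict], bool],
) -> list:
    """Return a list of failed checks; an empty list means every check passed."""
    problems = []
    # Step 2: does the attestation refer to the file we actually hold?
    if hashlib.sha256(media_bytes).hexdigest() != attestation.get("asset_sha256"):
        problems.append("attestation refers to a different file")
    # Step 3: was it signed by an entity we expect, and is the signature valid?
    if attestation.get("signer") not in trusted_signers:
        problems.append("signer is not trusted")
    elif not check_signature(attestation):
        problems.append("signature is invalid")
    return problems


# Illustration with a stub signature checker that accepts everything.
media = b"example media bytes"
attestation = {
    "asset_sha256": hashlib.sha256(media).hexdigest(),
    "signer": "newsroom-key",
    "attribute": "description",
    "value": "Example caption",
}
failures = verify_attestation(attestation, media, {"newsroom-key"}, lambda a: True)
```

Returning the list of failed checks, rather than a single boolean, lets a verifier report exactly which link in the chain did not hold.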

Integration with Asset-Management Systems

Authenticated Attributes supports verification workflows and the consumption of authenticated, verifiable data. It will be integrated into our research and verification processes, allowing for the creation of verifiable records of digital media. This system not only helps in identifying tampered content but also provides a way to preserve the original media’s integrity over time. Researchers, journalists, and the public can rely on this system to verify the authenticity of images, videos, and other digital content.

The original Authenticated Attributes prototype formed the backend of our latest integration with an asset-management system, allowing investigators and journalists to work with and produce authenticated data. We have integrated with Uwazi, a leading human rights product created and maintained by HURIDOCS. This integration makes it easier to work with and consume authenticated data, enhancing the accessibility and reliability of digital media.

Our reference implementation of the Starling Framework is presently undergoing a re-architecture to conform to the Authenticated Attributes design. This is motivated by learnings from the past two years operating in the field, so we can better handle interoperability and selective disclosure of potentially sensitive metadata to different parties.

Technical Details

The Authenticated Attributes project utilizes several sophisticated tools to ensure data integrity and provenance:

- IPFS (InterPlanetary File System): A distributed file system that allows secure and efficient storage and sharing of media.

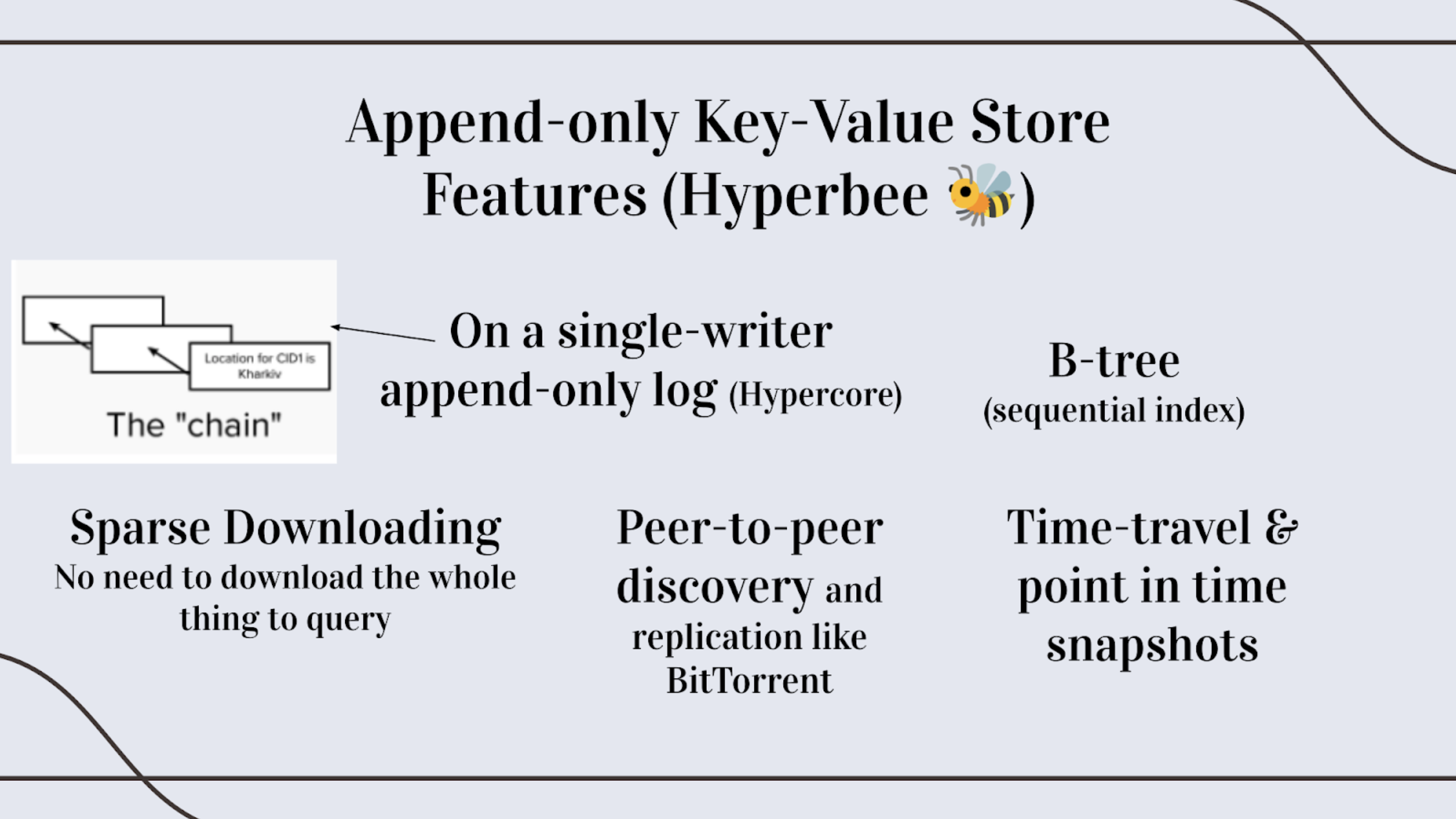

- Hyperbee: A key-value database built on the Hypercore protocol, enabling peer-to-peer interactions. Hyperbee supports a decentralized network of creators and consumers of authenticated data, enhancing the robustness and accessibility of our platform. Its peer-to-peer nature is particularly beneficial for applications like the Index for Accountability, a use case for the United Nations, where a decentralized network can enable more secure and reliable verification and documentation of human rights abuses.

- Verification systems: Asset-management systems, integrated or integrating with Authenticated Attributes to manage and verify digital assets, with integrity proofs packaged in industry standards such as the C2PA Specification and Verifiable Credentials.

For more detailed information about the project and to get involved, visit our GitHub repository and read our detailed documentation here.

Mom, I See War: A Digital Archive by Numbers Protocol and Starling Lab

History

Mom, I See War (MISW) is the first known digital archive to document the way children experience war by preserving high-resolution jpeg scans of their drawings, metadata about the drawings, and encrypted versions of these that contain private, identifying information (the last names) of the children. MISW has a collection of 10,026 single-image assets from Russia’s invasion of Ukraine which Starling Lab has registered on the NEAR blockchain and archived on distributed web protocols.

- First, records of these assets were hashed and registered on the NEAR blockchain using Numbers Protocol.

- Second, versions of these drawings and separate metadata files (with the full names of the children redacted) were stored on IPFS.

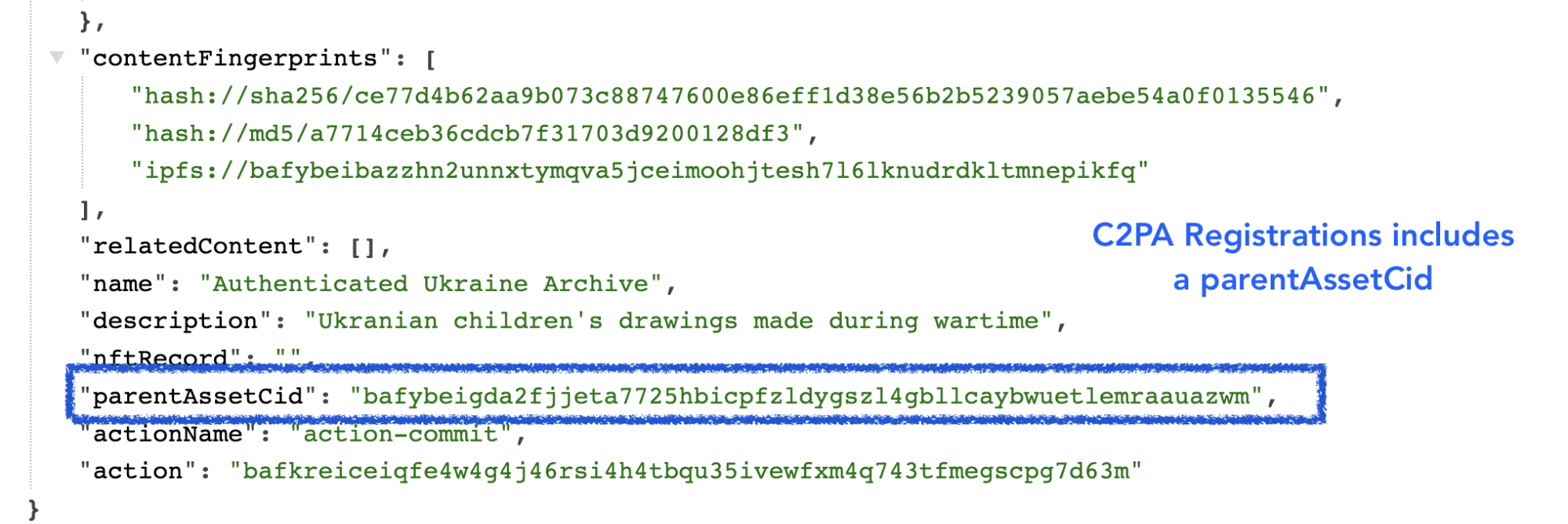

- Copies of the metadata were injected with information according to the C2PA standards, which enables users to inspect and understand more about what’s been done to these records, where it’s been, and who’s responsible.

- An encrypted archive of the drawings (assets) and full metadata were also sealed and preserved as archives on Filecoin.

The four versions of records of these assets each serve a different purpose. The registration of a hash (an immutable ‘fingerprint’ of the drawing and metadata) on the NEAR blockchain network establishes a record of exactly what was stored, and the date on which it was stored, without revealing any other information.

The publication of the full drawings and metadata on IPFS (without individual identifying information like the last name of the child who made the drawing) makes these assets available on a publicly owned, peer-to-peer system which is resilient against infrastructure deprecation and various forms of censorship, and the assets can easily be hosted by anyone.

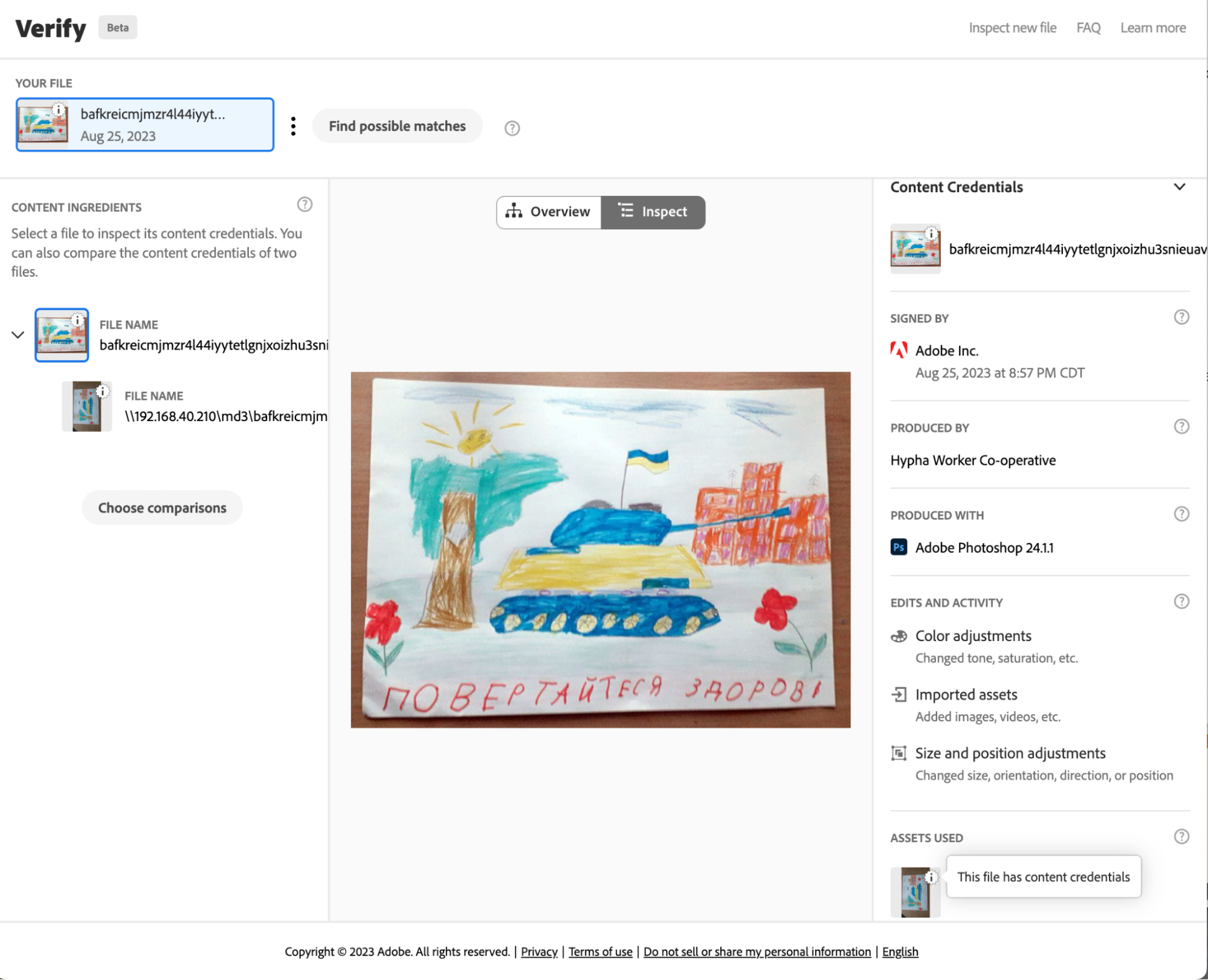

The C2PA version of the registered assets was created according to standards set by a coalition for standards development: The Coalition for Content Provenance and Authenticity (C2PA). These standards guide the development of tools, supported within Adobe products such as Photoshop, that create public and tamper-evident records that can be attached to an image. These images can then be used with different tools for inspecting and making edits that preserve the understanding of what’s been done to modify assets, where it’s been, and who’s responsible.

Finally, the archiving on Filecoin (drawings are bundled with full metadata and encrypted) helps preserve these drawings across multiple storage providers in a way that can outlast current media storage and publishing methods and technology.

Numbers Protocol Registration

Drawing & Metadata Registration

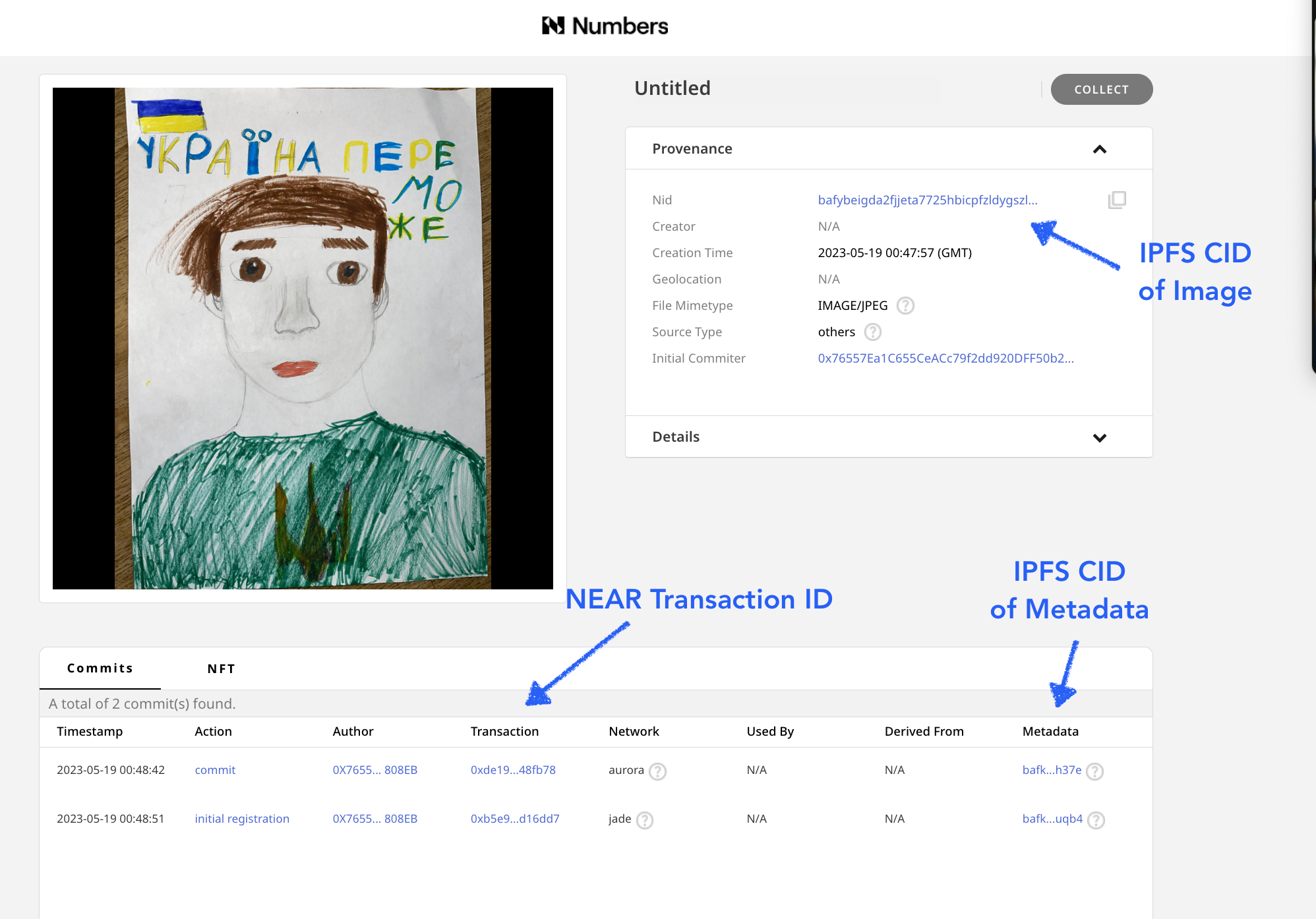

To register these assets, Starling Lab collaborated with the Numbers Protocol and also used the Starling Integrity pipeline to process the data and create publicly available registration records, publishing images and metadata records on IPFS. The records created include images of the drawings, along with a limited (redacted) set of metadata, which did not include certain identifying information, such as the children’s last names. See the example on Numbers Search.

The initial drawing and metadata record were both stored on IPFS, with their IPFS CIDs stored on the NEAR blockchain, and a registration of this record was added to Numbers Mainnet. Using the Numbers Protocol search, we can use the drawing’s IPFS CID to find the transaction containing all the information.

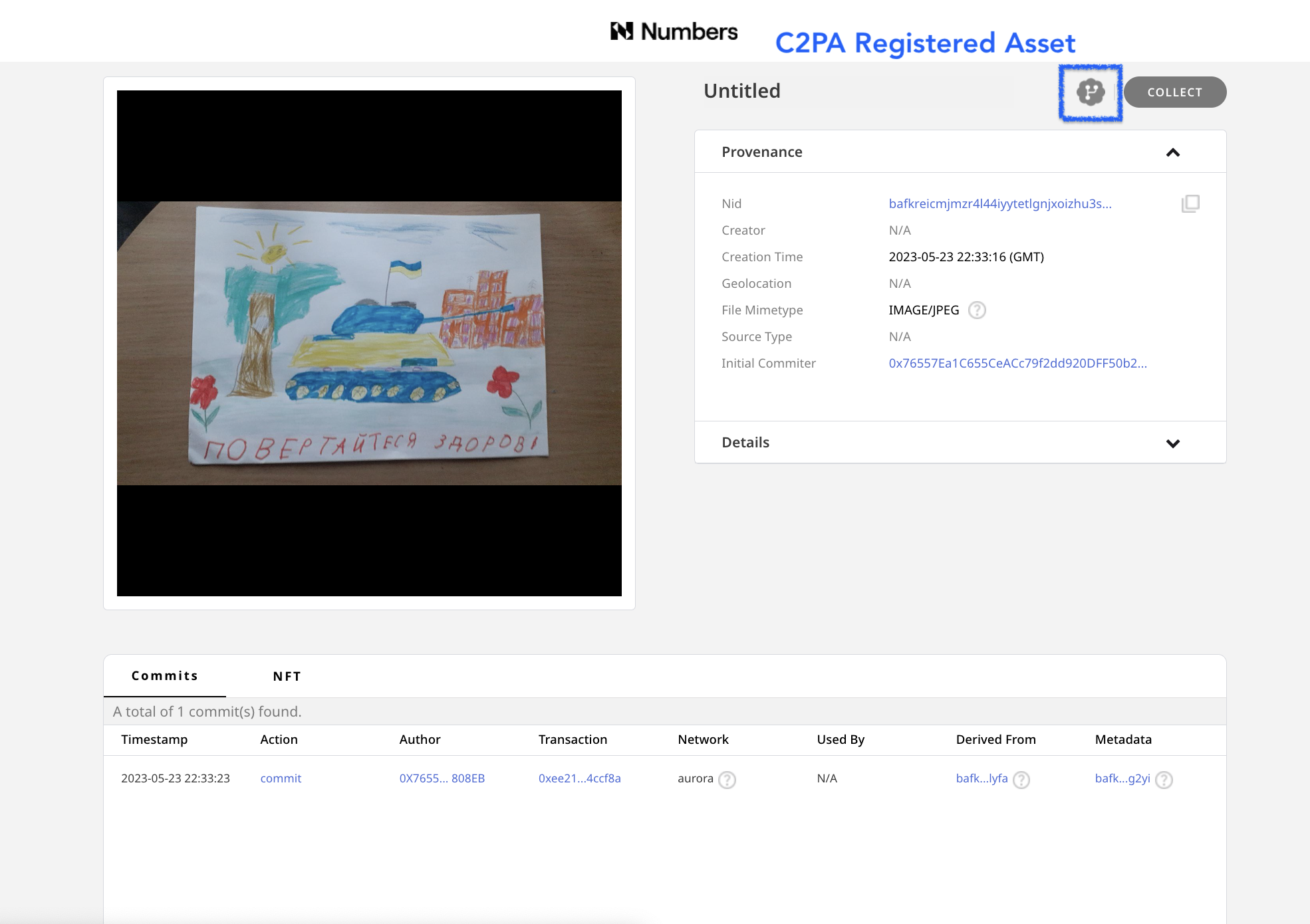

C2PA Registration

Once the regular versions of the drawing and metadata were registered, C2PA-compliant versions of these assets were also created. The presence of an icon next to an asset indicates that it carries C2PA metadata and links back to the initially registered asset. The C2PA standard is a way of adding metadata that points versions of an asset back to the original asset, creating an audit trail.

This makes it possible for anyone who may want to create verifiable, processed or modified versions (such as versions that are cropped or color enhanced for publication) of the drawings to create them, and connect them back to the original image with a record of any changes made.

The C2PA metadata added to the image is a standardized method used by tools such as Adobe Photoshop which allows each incremental edit to add a signed thumbnail and edit log to an image.

Starling Integrity Pipeline

All of the assets for this project were also bundled together, then registered and preserved using the Starling Integrity pipeline. This is a data processing pipeline that Starling Lab uses to bundle images with metadata, add registration certificates, encrypt, and add to various distributed storage and archival systems.

For this particular project, we used the Starling Integrity pipeline to zip complete copies of the image assets with private metadata, including the last names of the children who made the drawings. We then added authsign and OpenTimestamps certificates and encrypted the file bundle. Finally, we stored these signed and encrypted files on Filecoin, a cryptocurrency-collateralized archival storage system. Filecoin stores the files for a duration committed to by the set of storage providers (in this case 18 months) and runs intermittent checks on the content to verify that the data is continuously preserved with the specified redundancy.

The archive itself is registered on NEAR using Numbers Protocol, as well as on the LikeCoin blockchain with ISCN.

Searching and Viewing Assets

The records for each drawing were archived by Starling Lab and the Numbers Protocol. They include the CIDs and NEAR blockchain transaction IDs for the original drawing, the original metadata, and the versions of these that carry C2PA credentials. The C2PA credentials allow one to understand the history and identity data attached to images that may need to be modified or edited.

For each drawing, you can view each of these four records using Numbers Search to:

- See the blockchain registration of these assets using a block explorer for NEAR or Numbers Mainnet.

- Inspect the images and sets of metadata on IPFS using the Numbers IPFS gateway.

- Use Filecoin explorer to see the archive of the assets.

- Download images and view C2PA-credentialed assets with the Verify tool.

Search for a Drawing and Metadata with a CID

In order to search and view the NEAR blockchain registrations with the Numbers Search tool, a user needs to have the asset CID provided from the list of archives. In order to search in a web browser, type in https://nftsearch.site/asset-profile?nid= followed by a CID, such as bafybeibazzhn2unnxtymqva5jceimoohjtesh7l6lknudrdkltmnepikfq

The resulting URL of the above C2PA-credentialed asset would look like: https://nftsearch.site/asset-profile?nid=bafybeibazzhn2unnxtymqva5jceimoohjtesh7l6lknudrdkltmnepikfq
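For anyone looking up many assets, the lookup URL can be assembled programmatically. The sketch below follows the URL pattern given above; the function name is an illustrative choice.

```python
from urllib.parse import urlencode

SEARCH_BASE = "https://nftsearch.site/asset-profile"


def asset_profile_url(cid: str) -> str:
    """Build the Numbers Search URL for an asset CID."""
    # urlencode escapes any characters that are not URL-safe in the query string.
    return f"{SEARCH_BASE}?{urlencode({'nid': cid})}"


url = asset_profile_url("bafybeibazzhn2unnxtymqva5jceimoohjtesh7l6lknudrdkltmnepikfq")
```

For the example CID, this yields the same address as the one shown above.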

Blockchain Registrations

The assets were registered on two blockchains. The basic information, consisting of the CID of the content stored on IPFS plus a hash of the data (a check you can use to see whether content you have, such as a copy of the drawing, is the same as the registered version), was added to the Numbers Jade blockchain network. Next, both the basic registration and a more complete version of the asset’s metadata, which includes C2PA data, were registered on the NEAR Aurora blockchain.

The transactions where these are registered are linked from the Numbers Search interface and can also be found by appending the transaction ID to the following URLs:

- Jade: https://mainnet.num.network/tx/<transaction ID>

- Aurora: https://explorer.mainnet.aurora.dev/tx/<transaction ID>

These registrations provide an immutable, timestamped record of the SHA-256 hash of the content, and can be used to verify whether any copy you may have of a drawing or its metadata is in fact identical to the version that was archived on the blockchain by Starling Lab and Numbers.
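Checking a local copy against a registered hash takes only a few lines. The sketch below assumes the registered value is a hexadecimal SHA-256 digest of the file's raw bytes, copied by hand from the registration record; the function name and arguments are hypothetical.

```python
import hashlib
import hmac


def matches_registered_hash(path: str, registered_sha256: str) -> bool:
    """Return True if the file at `path` has the given SHA-256 hex digest."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # Read in 1 MiB chunks so large scans do not need to fit in memory.
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            digest.update(chunk)
    return hmac.compare_digest(digest.hexdigest(), registered_sha256.strip().lower())
```

A match shows that the copy is byte-for-byte identical to what was registered; even a re-saved or recompressed version of the same drawing will not match.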

Viewing Data on IPFS

IPFS is a peer-to-peer protocol for sharing and hosting data and media. It is a resilient alternative to the client-server, HTTP-based Web2 internet, and it can be accessed from web browsers through a gateway that bridges the HTTP and IPFS networks.

Numbers Protocol hosts a gateway which you can use to view the IPFS copies of the drawings and metadata. To access these copies, you can click on a link in Numbers Search, or type in the gateway address along with the CID in any web browser: https://ipfs-pin.numbersprotocol.io/<CID>

Having this published on IPFS makes it easy to retrieve and inspect the images and metadata that have been registered on the different blockchain networks.

Viewing Filecoin Registration

Filecoin is an archival storage system backed by a cryptocurrency (FIL). Storage on the network is provided by the miners of that cryptocurrency, also known as storage providers. The protocol runs intermittent checks against the archived data, and if data is not being stored, the storage providers lose the FIL collateral they staked when they made the storage deal. The Starling Integrity pipeline used Filecoin to prepare and store the encrypted versions of the archive.

With the Filecoin CID Checker, you can view information about an archive, such as the identity of the node storing the data, the status of that archive, the deal made for the preservation, and the immutable content identifier or the payload that was submitted for storage. See the information about the MISW collection that was archived on Filecoin (Filecoin piece CID baga6ea4seaqdqgywa3n55hughvnfkroou6nm6qisqalzew7vy2vdqe3jfcr6wci), which includes all files, metadata, and a spreadsheet listing all assets.

Viewing C2PA assets with the Verify Tool

The Content Authenticity Initiative (CAI) is a group working together to fight misinformation and add a layer of verifiable trust to all types of digital content. Members of this group include those from media and tech companies, NGOs, academics, and more.

In February 2021, Adobe, Arm, BBC, Intel, Microsoft, and Truepic launched a formal coalition for standards development: The Coalition for Content Provenance and Authenticity (C2PA). These standards guide the development of tools, supported within Adobe editing tools such as Photoshop, that create public and tamper-evident records that can be attached to an image to understand more about what’s been done to it, where it’s been, and who’s responsible. These same standards were used to create metadata and records for the MISW collection.

With the Verify tool, you can download an image via IPFS from the MISW collection (adding a .jpg file extension), drop the image in the online tool, and inspect the image and data added according to the C2PA standards. If you were to edit the image with Photoshop, the hash of this record would change – however, you now have a tool that can tie this image back to the original, as well as show and compare any edits and changes made in Photoshop.

Conclusion

The project demonstrates the archival process for a valuable set of assets that record the war in Ukraine. It helps create a reliable account of the past that future generations can use to understand this pivotal event, make claims, hold individuals and governments accountable, and build a better future for humanity.

This set of tools not only creates an immutable archive of these assets; it also demonstrates how to build an archive that is searchable and verifiable. The resulting assets can be used in journalistic, legal, and historical work. Anyone who wants to share these images with minor edits, such as cropping or adjustments to color, tone, and contrast, can do so in a way that lets the public assess the accuracy and veracity of the copies. Learn more about the collaboration with the Mom I See War Research and Numbers Protocol.

Creating Human Records that Stand the Test of Time

History

Preserving digital evidence for the long term using ledgers

This article was originally published in the FFDW DWeb Digest Issue #1, and is excerpted below. Don’t miss the whole publication, as well as the foreword from the editor, Mike Masnick.

We are ever so grateful to the Foundation for their continued support of our work, and their nudging us to talk about it more 😉

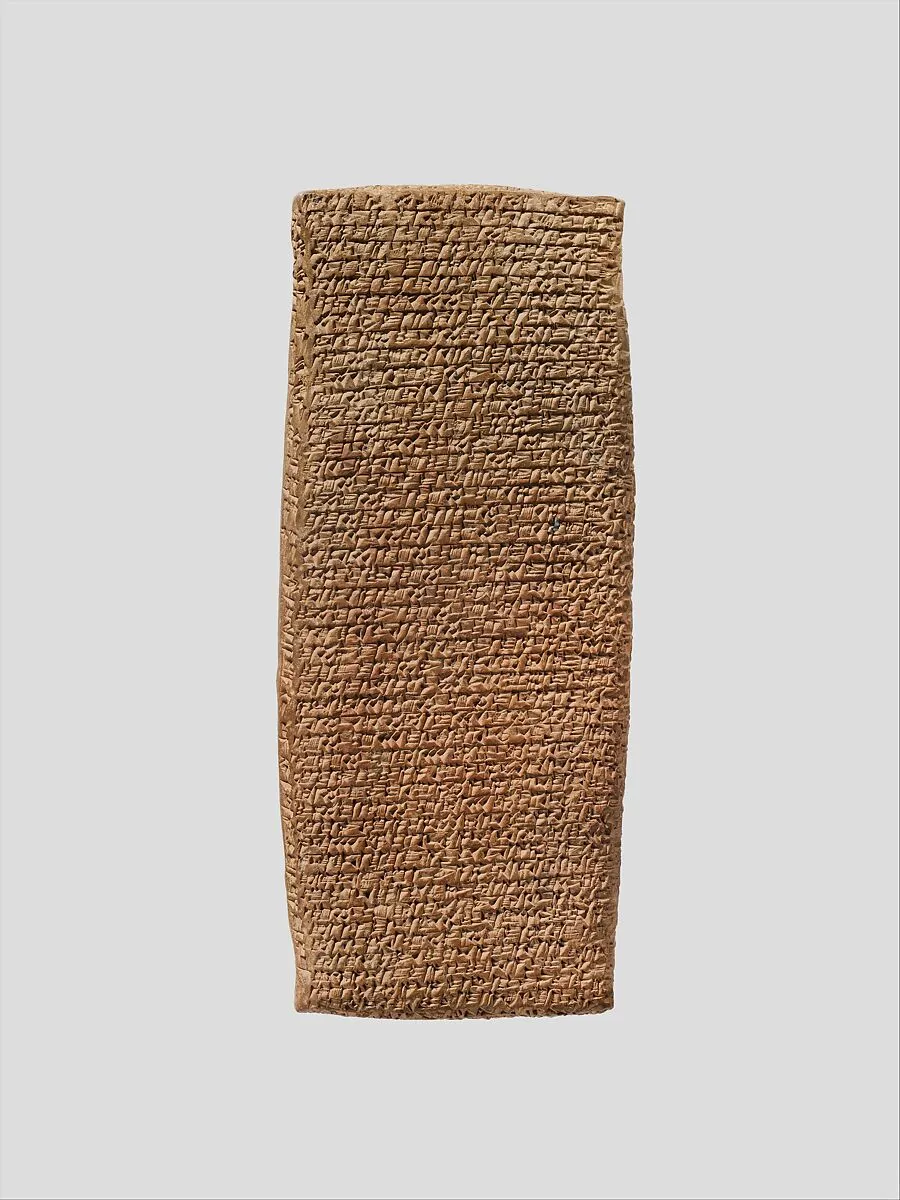

As business rivalries go, the story of Suen-nada and Ennum-Ashur was pretty routine. They both claimed ownership of valuable intellectual property, wrestled over control of accounts, and accused each other of theft.

Their case went to court. Witnesses were present. Testimony was delivered and became crucial evidence.

A fire burned down their Assyrian colony in 1836 B.C., but a record of their testimony survived and is now available for review at The Metropolitan Museum of Art in New York.

We know about the trial because, thousands of years ago, the world’s most advanced technologists figured out how to use a crude implement to etch markings into stone and clay. This data storage breakthrough would be used to record crop yields, trades, weddings, births, deaths, wars, legends, and other data that was critical to evolving human civilization.

While they are museum pieces today, at the time there was a logic to using hard materials for persistence. Even back then, this method wasn’t the fastest way to record information (papyrus was invented at least a millennium before) but it would last and, most importantly, be difficult to alter. Modern technologists still find this approach important — and the efficiency tradeoffs familiar.

(…)

Evidence has always needed to stand the test of time and the test of scrutiny. Long-established concepts can still help, including decentralization and cryptography (which was likely around even in the days of Suen-nada and Ennum-Ashur). A related modern concept can also help: blockchains.

In the spring of 2022, Russian artillery shells ripped through the walls of several schools in Kharkiv, Ukraine. The whole region was under heavy fire from advancing Russian armed forces, but an intentional attack on civilian targets is a war crime. The United Nations specifically defines education as a human right. Turning sanctuaries of learning into a battlefield creates a vicious cycle of illiteracy and poverty.

(…)

Starling Lab set out to authenticate and preserve these vulnerable assets. (…)

Our investigators made web archives of social media posts from Kharkiv, verified using state-of-the-art OSINT techniques. Decentralized storage deals preserve the collection on thousands of servers around the world, and the redundant recording of hashes and cryptographic signatures permits trustless inspection of the items and their audit log.

(…)

Open source intelligence (like social media posts) may still be questioned, so Starling arranged for photographers to visit two of the schools. They used the context-rich capture app ProofMode, from the Guardian Project, to include corroborating metadata (including time, GPS coordinates, surrounding cell network, phone locale, etc.). These bundles were cryptographically sealed with the images and their integrity proofs registered to several blockchains for safekeeping.

Starling Lab has since made a pair of submissions to the Office of the Prosecutor at the International Criminal Court, including an analysis of how these methodologies can establish credibility of the evidence.

(…)

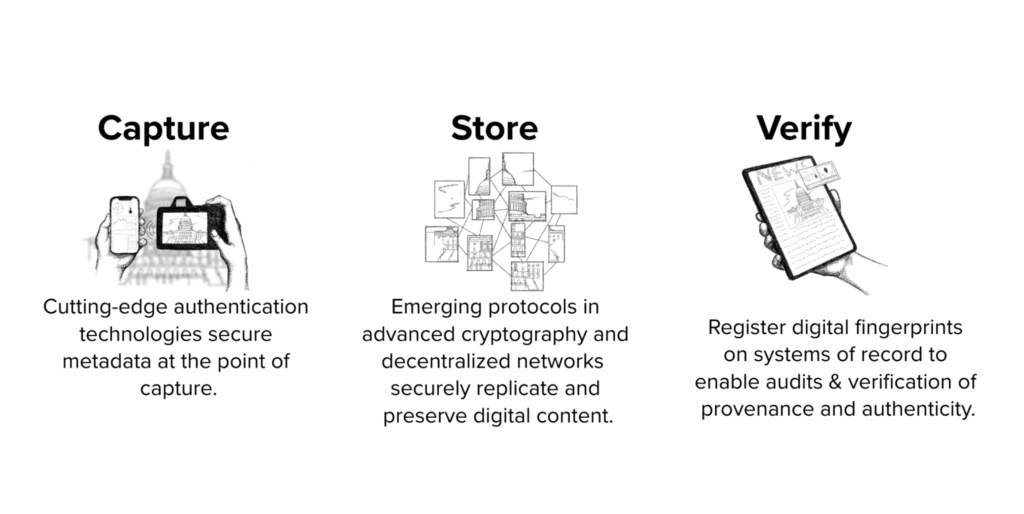

The same approach – using our Starling Framework of Capture/Store/Verify – has helped us to preserve testimonies of Holocaust survivors, store thousands of examples of Russian misinformation, document living conditions for the homeless in California, record promises by politicians about government surveillance, and save examples of climate change impacts in the Amazon.

Cryptography, decentralization, and blockchains are the tools we used to preserve these important records in humanity’s collective memory. These projects have created immutable records to stand up against challenges from the wide-scale adoption of generative AI, sophisticated disinformation campaigns, and changing digital custody practices.

Today’s courts and other civic institutions must confront similar challenges that undermine trust in their own critical records. By embracing similar innovations, there’s a chance for digital evidence to become as resilient as ever – the modern equivalent of being etched in stone.

What to Get Right First


By learning from the shortcomings of its predecessor, Web3 can avoid repeating the same mistakes and create a more just digital future.

Rebecca MacKinnon, Starling Fellow 2021

Reading Time: 5min


History, Journalism


Background

In this piece, our 2021 fellow, Rebecca MacKinnon, lays out a crucial guide for the Web3 community, urging it to learn from the human rights failures of Web2.

At Starling Lab, we believe it's essential to proactively build a more equitable internet. Rebecca contends that the concept of "technological neutrality" is a fallacy that allowed Web2 to amplify societal biases, and calls on Web3 to instead embed human rights considerations into the very fabric of its technology and governance. To achieve this, she advocates for a proactive approach to risk mitigation, a critical examination of business models, and the establishment of robust grievance mechanisms from the outset.

Highlighting the importance of collaboration, this article stresses the need for the Web3 ecosystem to work with civil society and other stakeholders to identify blind spots and ensure accountability, ultimately making a compelling case for a more responsible approach to innovation.

The Single Paved Line Threatening the Achuar People


How the Achuar community of Copataza is defending their ancestral territory against the illegal logging, cultural shifts, and economic forces that accompany a new highway.

Team

Reading Time: 5min

Prototypes


Background

In the heart of the Ecuadorian Amazon, the Achuar people – a community of approximately 6,000 – have long stood as guardians of their ancestral territory. For the Achuar, the jungle is not merely a resource; it is the foundation that molds their culture, spirituality, and daily survival. However, this deep connection is under constant siege. Since the Spanish conquest, the vast resources beneath the Amazon floor have attracted juntas and corporations alike, subjecting Indigenous peoples to a centuries-old fear of expulsion.

Today, this chronic scramble for resources has manifested as deforestation, violent standoffs, and encroachments by multi-billion dollar mining operations. In partnership with Protocol Labs and the USC Shoah Foundation, photojournalist Pablo Albarenga traveled to the community of Copataza in March 2020 to document this collision of worlds—capturing the Achuar’s traditional way of life juxtaposed against the scars of industrial expansion.

The Achuar face a dual threat. The first is physical: the illegal entries, mining projects, and construction of roads that divide their land and invite illegal logging. The second, however, is digital and systemic.

In an era increasingly defined by deepfakes, AI-generated imagery, and the manipulation of digital context, the burden of proof for marginalized communities has become heavier than ever. When Indigenous communities like the Achuar attempt to hold state and corporate actors accountable in the court of law – or the court of public opinion – their visual evidence is often met with skepticism or dismissal.

How can a community prove, beyond a shadow of a doubt, that a specific event happened at a specific time and place? How do we ensure that the digital testimony of the Achuar preserves the same forensic integrity as physical evidence? The challenge was not just to take photos, but to create a chain of custody so robust that the truth of the Achuar’s struggle could never be denied.

Framework

The Challenge

In the remote Amazon, the distance between an incident and a courtroom is measured not just in miles, but in data integrity. For the Achuar, documenting environmental crimes like illegal logging or unauthorized road construction is only half the battle. The greater challenge is proving that these images have not been manipulated. In a legal landscape increasingly wary of digital fabrication and "deepfakes," standard metadata is no longer sufficient. To admit visual evidence into a court of law to defend land rights, the Achuar needed a tool that could guarantee the provenance of every pixel.

The Prototype

To bridge this gap, Starling Lab equipped Pablo Albarenga with the experimental "Capture" framework. This prototype moved verification from the editing room to the moment of capture.

Pablo utilized a modified workflow involving a Canon camera tethered to an HTC EXODUS 1 smartphone. This device was selected for its Trusted Execution Environment (TEE), allowing the team to leverage the Zion Vault: a hardware-backed key management platform that generates and stores keys in an isolated area of the processor, independent of the Android OS.

As he documented the mining operations and a new road in Copataza, the framework cryptographically hashed each image immediately upon capture. It did more than save a photo: it sealed the image with a hardware-generated signature unique to the device, binding it to authenticated sensor data (GPS, time, and orientation).
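As a rough illustration of what "binding" an image to its sensor data can mean, the sketch below hashes the image, places that hash in a record next to the sensor readings, and signs the serialized record. It is a minimal sketch under loud assumptions: `seal_capture`, `verify_capture`, and `DEVICE_KEY` are hypothetical names, the sensor values are placeholders, and an HMAC over a software key stands in for the hardware-backed signature, which in a real secure enclave would use a private key that application code never sees.

```python
import hashlib
import hmac
import json

# ASSUMPTION: stand-in for a hardware-held key. A real enclave would sign
# with a private key that is never exposed to application code like this.
DEVICE_KEY = b"demo-key-not-a-real-enclave-secret"


def _canonical(record: dict) -> bytes:
    # Sorted keys and fixed separators make the serialization deterministic,
    # so the same record always yields the same bytes to sign.
    return json.dumps(record, sort_keys=True, separators=(",", ":")).encode()


def seal_capture(image_bytes: bytes, sensors: dict) -> dict:
    """Bind an image hash to its sensor readings and sign the result."""
    record = {
        "image_sha256": hashlib.sha256(image_bytes).hexdigest(),
        "sensors": sensors,
    }
    signature = hmac.new(DEVICE_KEY, _canonical(record), hashlib.sha256).hexdigest()
    return {"record": record, "signature": signature}


def verify_capture(image_bytes: bytes, sealed: dict) -> bool:
    """Check both the image hash and the signature over the whole record."""
    record = sealed["record"]
    if hashlib.sha256(image_bytes).hexdigest() != record["image_sha256"]:
        return False
    expected = hmac.new(DEVICE_KEY, _canonical(record), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, sealed["signature"])


# Placeholder sensor values for illustration only, not real readings.
image = b"raw image bytes"
sealed = seal_capture(
    image,
    {"lat": 0.0, "lon": 0.0, "time": "2020-03-01T00:00:00Z", "orientation_deg": 0},
)
```

Because the signature covers the image hash and the sensor readings together, changing either the pixels or the metadata after capture makes verification fail.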

This process created a tamper-proof chain of custody anchored in the decentralized web. Content was cryptographically hashed and stored using the InterPlanetary File System (IPFS), ensuring data is content-addressed rather than location-based. This allows independent reviewers to verify the integrity of the files against their original hashes.
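The difference between content addressing and location addressing can be sketched with a toy store in which the lookup key is derived from the bytes themselves. This is an illustration only: `ContentStore` is a hypothetical class, and it uses a bare SHA-256 hex digest as the address, whereas IPFS wraps the digest in a self-describing Content Identifier (CID).

```python
import hashlib


class ContentStore:
    """Toy content-addressed store: the key is the SHA-256 of the value."""

    def __init__(self):
        self._blobs = {}

    def put(self, data: bytes) -> str:
        # The address is computed from the content, not chosen by the caller.
        address = hashlib.sha256(data).hexdigest()
        self._blobs[address] = data
        return address

    def get(self, address: str) -> bytes:
        data = self._blobs[address]
        # Any reader can re-hash the bytes and compare against the address.
        if hashlib.sha256(data).hexdigest() != address:
            raise ValueError("stored bytes no longer match their address")
        return data


store = ContentStore()
address = store.put(b"photo bytes")
```

Storing the same bytes twice yields the same address, and a reviewer who only holds the address can detect any change to the stored bytes.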

Learnings

While the deployment successfully proved the concept of authenticated capture, the field test in the humid, politically charged environment of the Amazon revealed critical tensions between technology and human reality.

The Friction of Verification

Forensic rigour often came at the cost of journalistic agility. The prototype workflow introduced significant friction. Pablo reported delays caused by the app requesting confirmation for every photograph taken. To operate discreetly and avoid drawing attention from authorities, Pablo resorted to dimming the screen and using the lower volume key to trigger the shutter, but the software's mandatory confirmation steps made capturing fleeting or stealthy moments in batches nearly impossible. Furthermore, the hardware struggled with the environment: the phone used for hashing frequently overheated after just minutes of video recording, and the app suffered from stability issues that disrupted the workflow.

The Paradox of Privacy

The most profound learning, however, was ethical. The very feature that made the evidence legally powerful – immutable GPS and metadata logging – posed a severe security risk. In a conflict zone where activists are often targeted, carrying a device that broadcasts an unalterable record of one's exact location can endanger both the journalist and their subjects. Pablo noted that the inability to selectively toggle metadata collection meant that protecting the "truth" of the image potentially compromised the safety of the people in it. This feedback highlighted a crucial need for future iterations: the ability to balance forensic transparency with the human need for obscurity and safety.

Pablo Albarenga’s Dispatch from the Field

It was barely dawn. The first rays of sunshine already tempered the thick foliage of the forest, causing the moisture accumulated during the nightly rains to rise above the treetops, creating a heavenly landscape. There, where the Andes Mountains meet the Ecuadorian Amazon, a unique biome is born that constitutes one of the most biodiverse areas of the planet. The Shuar and Achuar peoples also live there. They were protagonists of the most recent demonstrations that shook Ecuador for several days in October 2019, when thousands of Indigenous people took the city of Quito after the promulgation of a new decree with several economic adjustments agreed with the International Monetary Fund. The protests ended with several deaths and a popular victory.

At that time, the media focused attention on the rise in fuel prices, but this was just one more in the long list of demands brought by the native peoples. For them, the main demand revolved around the defense of ancestral territories, threatened by oil companies, logging companies and projects that did not respect the prior consultation of indigenous peoples regarding decisions involving their territories.

While the city was occupied, a new road, promoted by the local government of Pastaza province, opened up the jungle inland toward the Achuar community of Copataza. It established the first road between Copataza and the city of Puyo and put the whole community to the test in the face of this new challenge. At that time, although the road was still under construction and barely paved, wood logs could already be seen piled up, waiting to be transported and then processed for export. Illegal loggers were the first to arrive whenever a new road opened, as roads were a profitable way to access wood that was well priced on the international market.

Just a few weeks ago, the road finally reached its destination, drawing a winding line that unites, but also divides. "I told my wife that we will only be united until the road arrives (...) and that is coming true," says Aurelio, one of Copataza's elders, as he comments on the different changes the community - founded on the youth of its parents, former nomads - has faced since the road arrived.

The sound landscape of Copataza is no longer the same: the sounds of machines working on the road intermingle with the songs of birds, insects, and chainsaws. The old landing strip that was the only fast way to the city of Puyo is now an abandoned field, crossed by the new road. Two cargo trucks are filled with balsa wood, extracted from the islands surrounding the community, belonging to the Achuar territory. Unknown people, who do not belong to the community, move freely, load and remove the wood.

Since the beginning of the project, the Achuar have analyzed the consequences that it could bring, as well as the advantages. During the Wayusa hours, named for a traditional Achuar drink high in caffeine that is taken in the early morning to purify the body and to discuss important matters, they decided to give a definitive yes to the new road, on the condition that their community be the last point reached by it.

The youngsters are enthusiastic about the benefits that the road promises, since it would allow them to sell their products in a simpler way, as well as to access the city at a low cost, mainly in case of emergencies. In the past, the only access to the city was by an expensive flight or a hard walk: "Before, one would walk for five days through the jungle to get to the city," says Julian Illanes, one of the former leaders of the Achuar Nationality of Ecuador (NAE).

It is unquestionable that the road has advantages for the community in terms of access to the city. The problem is that it also allows the arrival of outsiders interested in the natural resources and, once money becomes a necessity for the community, the fastest way to get it is to sell these resources.

"Now many of us are seeing the economic need. Everyone says 'out of necessity I do this'. Before there was need, but not so much. Before just having the clothes and the machete was enough," says Aurelio.

From the NAE, they clearly see how timber extraction poses a threat to the community, not only in terms of resources but also in terms of culture. "The first impact we face is the logging companies. We have many ideas and alternatives, but the logging company has been quicker to offer people money." Tiyua Uyunkar, President of the NAE, says: "We are following the necessary parameters for the total blockade of these companies, but the Ministry of the Environment has done absolutely nothing. It has said that this commercialization of balsa wood does not have so many restrictions, so we have not had support".

Formerly nomads, hunters and fishermen, today they are settled in a fixed territory. The traditional houses, with their wooden base and thick palm leaf roofs are gradually being replaced by new houses with sheet metal roofs, less insulating during the extreme heat of the Amazon summer and noisier in the rainy season. Agriculture is still practiced in the gardens, but hunting and fishing are gradually disappearing, to be replaced by products brought from the city. "Before there were enough fish to go around, not five or three, but two or three baskets, full. Today it is scarce, just like hunting. Before it was all jungle (...) now I see that in the Pastaza River there is so much motor canoe traffic making a racket every 10 minutes. It scares the fish away," says Aurelio.

Immersed in a rapidly changing context, culture is that intangible territory that absorbs the collateral damage of economic interaction in indigenous communities. This is where traditions are modernized and new ones are imported, but people go on with their lives. However, the greatest threat refers to the bond with the forest, with the territory. From here on, only time will tell whether this remains that common good indispensable for the sustenance of life or simply a finite resource capable of being quickly turned into money; for a short term.

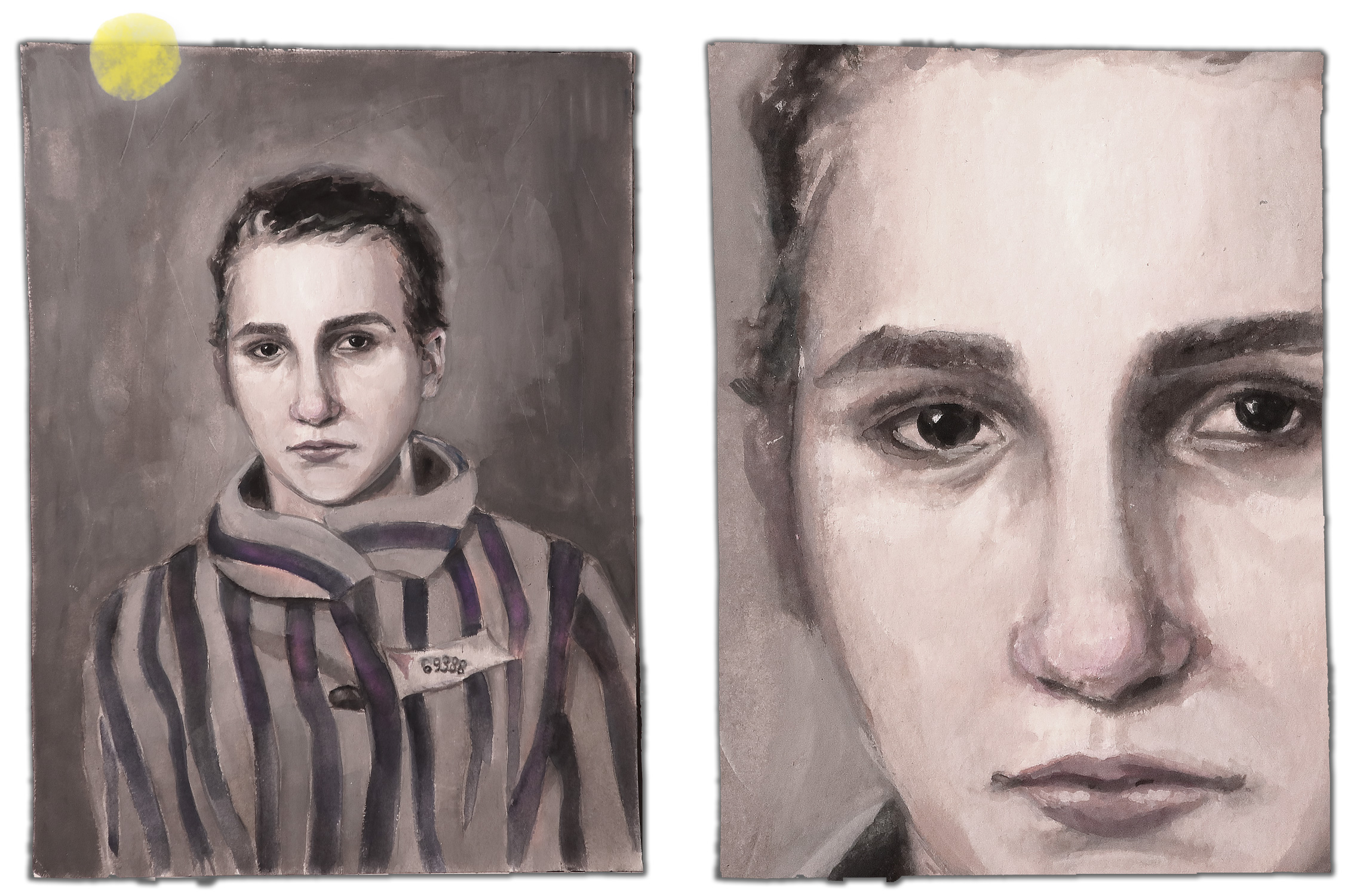

Preserving the Shoah’s Memory with Web3

How do we ensure these digital records survive for future generations?

Team

Reading Time: 5 min

Background

USC’s Shoah Foundation Institute (SFI, or the Shoah Foundation) builds and preserves the Visual History Archive, a critical collection of more than 55,000 video testimonials of experiences of genocide, including the Holocaust and other atrocities. These firsthand accounts serve as powerful educational tools, historical documents, and memorials to those who suffered. The Foundation's work extends beyond archiving to creating educational programs, fostering scholarly research, and promoting dialogue about prejudice and intolerance worldwide.

The Visual History Archive totals four petabytes (PB) of digital data, with multiple copies stored in different geographic locations for redundancy. There’s no single point of physical failure – a concern heightened by a campus located in a region known for earthquakes and wildfires: Southern California. Additionally, the Archive does not rely on a single corporate cloud storage provider. While cloud storage is generally exceptionally reliable, it can also suffer hardware and software failures, and a cloud provider’s owners can change their content policy to limit or refuse access to the data it holds, creating a new risk.

Context

In 2021, the Shoah Foundation decided to go a step further in the protection of this collection by implementing Web3 distributed technologies, which, by their nature, avoid single point of failure risks. We partnered with two key organizations to bring this vision to reality.

Filecoin, a decentralized storage network built on blockchain technology (and a funding and operational partner for the Lab), offers an innovative approach to securing and preserving the Visual History Archive's extensive collection. The distributed storage infrastructure of Filecoin allowed us to ensure the protection of these invaluable testimonies across a global network.

PiKNiK, an enterprise computer infrastructure provider with extensive Web3 experience, joined our initiative as digital preservation specialists, bringing their technical expertise in adapting sensitive archival content for blockchain environments. Their experience with large-scale media collections proved essential as we navigated the complex process of implementing these new protective measures for the Foundation's testimonials.

Framework

The Starling Framework, which was developed at USC and Stanford and is being implemented in projects across both institutions, focuses on three phases of data’s lifecycle: Capture, Store, and Verify. The Framework provides both experts and novices with a guide to establish and evaluate data integrity.

The Challenge: Analog Problems Persist In A Digital Era

The USC Shoah Foundation's Visual History Archive isn't merely a collection of data—it's irreplaceable evidence countering Holocaust denial and preserving genocide survivors' voices. Yet despite its digital format, this 4-petabyte archive faces preservation threats eerily similar to those that plagued analog collections throughout history.

Centralization remains the core vulnerability. Just as the Library of Alexandria's destruction resulted from concentrated knowledge in a single location, today's digital landscape poses parallel risks. Four companies control approximately 67% of cloud infrastructure, creating dangerous points of failure. These repositories, while technologically advanced, remain vulnerable to corporate decisions, outages, and even censorship—as evidenced by content removals in countries from New Zealand to Saudi Arabia.

For the Visual History Archive specifically, the stakes transcend mere data loss. Any corruption, tampering, or inaccessibility directly threatens historical accountability. Traditional digital backups, even when duplicated across multiple systems, still face common modes of failure: bit rot (data corruption due to deterioration of storage media), natural disasters affecting data centers, technical obsolescence, or changing corporate policies.

Southern California's earthquake and wildfire risks further complicate physical preservation of the Foundation's digital assets. Meanwhile, the emerging threat of synthetic media and AI-generated content risks drowning out authentic historical records with false and misleading facsimiles.

Enter decentralized storage networks. With ever more powerful chips at our disposal, recent technological innovations seek to maintain, audit, and certify perfect digital preservation of content across many replicas, distributing risk and increasing resilience.

The Prototype

Modern implementations of cryptography and decentralization can be combined in powerful ways, and are critical as the internet evolves toward a new vision of the Web – an internet where data sovereignty and distributed control replace traditional centralized models.

A copy of the Visual History Archive’s collection was backed up to Filecoin. The decentralized storage network includes over 4,000 independent storage providers (also known as miners, who establish and maintain data storage nodes and win cryptocurrency rewards for proving continued storage). Filecoin has sometimes been referred to as the Airbnb of archival storage, because it allows any provider to sign up and offer space on their computer or other device. This may include traditional companies with large nodes, but can even allow individuals to support the ecosystem with the phone in their pocket. Symbolically, this is a powerful way for people to join in the preservation of humanity’s most critical records, but at scale it can also offer efficiencies. Notably, encryption ensures that the storage providers don’t know the details of any files they hold on their hard drives. All they can see is a string of incomprehensible 1s and 0s.

As a user of this system, the Shoah Foundation has more granular choices with Filecoin than simply putting data in a cloud. We chose to work with PiKNiK, one of those providers, who helped ensure the Visual History Archive collection was encrypted and then replicated across an additional seven of PiKNiK’s peer storage providers on the Filecoin network who met our pre-established criteria. This decentralized approach provides more resiliency, as copies can be distributed across geographies, providers, or servers meeting specific requirements. Instead of a complete photo, video, or document stored as a single file on a single server, the files can be sub-divided into pieces and stored in multiple locations, on multiple storage providers, effectively scattering pieces of each file around the world. This means that even if a storage provider could hypothetically decrypt the data they hold for a user, they only have fragmentary parts of files, further mitigating security concerns. Notably, this method can make storing and retrieving data (commonly referred to as ingress/egress) slow, meaning it’s best suited for “cold storage” of data that is not regularly accessed – perfect for large backups like the Shoah Foundation’s collections that are measured in petabytes.

Technology

The preservation of the USC Shoah Foundation's Visual History Archive (VHA) on a decentralized storage network followed the three-step methodology of the Starling Framework: Capture, Store, and Verify. Each stage ensured the integrity, security, and longevity of the archive’s four-petabyte collection, mitigating risks associated with centralized storage models.

Capture

The first stage, Capture, focused on ensuring the authenticity and integrity of the Visual History Archive’s digital assets before transitioning them to decentralized storage with PiKNiK. Initially, the collection was stored on a combination of the Shoah Foundation's tape-based storage and their Microsoft Azure cloud instance. However, high Azure retrieval costs and concerns about long-term integrity necessitated a migration to Web3 infrastructure.

The process began with the extraction and preparation of the Visual History Archive data. Given the vast amount of data, transmitting the collection over the internet was not an option. Instead, the entire four-petabyte archive was copied from tape storage to individual hard disk drives, and the drives were shipped to PiKNiK in two pallets weighing a total of 795 pounds. Once at PiKNiK, the drives were loaded into racks known in the data storage industry as “JBODs”, which stands for Just a Bunch Of Disks. A rigorous file integrity check was conducted to identify and replace any instances of bit rot or data corruption. Corrupt data was repaired from other existing copies of the material.

Encryption played a critical role in this phase, with all files secured using GNU Privacy Guard with an AES-256 cipher, ensuring that only the Shoah Foundation could access them with their private encryption key. Large files were further sub-divided into smaller chunks, with each assigned a Content Identifier (CID), a unique code for each chunk of data which enables the retrieval of a specific piece of data no matter where it ends up in decentralized networks.
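To make the chunk-and-identify step concrete, here is a minimal sketch that splits already-encrypted bytes into fixed-size chunks and derives an IPFS-style CIDv1 for each one. Several things here are assumptions rather than details from the project: the function names, the tiny chunk size, and the choice of a raw-codec SHA-256 CID. The source does not describe the exact chunking or identifier scheme that was used, and encryption itself is not shown.

```python
import base64
import hashlib

CHUNK_SIZE = 8  # ASSUMPTION: tiny size for illustration; real chunks are far larger


def cid_v1_raw(block: bytes) -> str:
    """Build a CIDv1 (raw codec, sha2-256) for one block of bytes."""
    digest = hashlib.sha256(block).digest()
    # 0x01 = CID version 1, 0x55 = "raw" codec,
    # 0x12 = sha2-256 multihash code, 0x20 = digest length (32 bytes)
    cid_bytes = bytes([0x01, 0x55, 0x12, 0x20]) + digest
    # Multibase prefix "b" marks lowercase, unpadded base32.
    return "b" + base64.b32encode(cid_bytes).decode("ascii").lower().rstrip("=")


def chunk_with_cids(data: bytes, chunk_size: int = CHUNK_SIZE):
    """Split data into chunks and pair each chunk with its identifier."""
    chunks = [data[i:i + chunk_size] for i in range(0, len(data), chunk_size)]
    return [(cid_v1_raw(chunk), chunk) for chunk in chunks]


# Stand-in for bytes that have already been encrypted upstream.
payload = b"encrypted testimony bytes"
manifest = chunk_with_cids(payload)
```

The ordered list of identifiers is enough to request each chunk from wherever it is stored and to reassemble the original bytes in sequence.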

Store

The next stage, Store, focused on securing the collection across a decentralized storage network to prevent loss, corruption, or censorship. The encrypted testimonies were distributed to carefully selected Filecoin storage providers, each chosen based on reliability and geographic diversity. By fragmenting and dispersing the data to spread the risk across multiple groups, this decentralized strategy significantly reduced the chance of loss due to natural disasters, cyberattacks, or institutional policy shifts.

Storage providers within the Filecoin network are bound by two commitments, known as Proof-of-Replication (PoRep) and Proof-of-Spacetime (PoSt), to ensure stored data remains intact. Before they begin storing any data, storage providers must stake cryptocurrency collateral with the Filecoin network. Once they commit to store data, known as a “deal”, every day the Filecoin network tests the integrity of their stored data by challenging storage providers to prove that they have the data agreed in the deal. If they pass the test, providers can earn more cryptocurrency. But if they fail the test, some of their collateral may be taken away as a penalty. In this way Filecoin network participants have a financial incentive to ensure they take good care of the data they promised to preserve.
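The incentive loop described above can be illustrated with a deliberately simplified challenge-response audit. This is an analogy and not Filecoin's actual protocol, whose proofs rely on sealed sectors and zero-knowledge proofs. In this sketch the auditor keeps only a Merkle root, challenges the provider for one chunk plus its Merkle proof, and adjusts a collateral balance. `Provider`, `daily_audit`, and the reward and penalty amounts are all hypothetical.

```python
import hashlib
import secrets


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def build_levels(chunks):
    """Return every level of a Merkle tree, leaf hashes first, root last."""
    level = [h(c) for c in chunks]
    levels = [level]
    while len(level) > 1:
        if len(level) % 2:  # odd count: pair the last node with itself
            level = level + [level[-1]]
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        levels.append(level)
    return levels


def prove(levels, index):
    """Collect the sibling hashes on the path from a leaf to the root."""
    proof = []
    for level in levels[:-1]:
        sibling = index ^ 1
        if sibling >= len(level):
            sibling = index  # node was paired with itself
        proof.append(level[sibling])
        index //= 2
    return proof


def verify(root, chunk, index, proof):
    node = h(chunk)
    for sibling in proof:
        node = h(node + sibling) if index % 2 == 0 else h(sibling + node)
        index //= 2
    return node == root


class Provider:
    def __init__(self, chunks, collateral):
        self.chunks = list(chunks)
        self.levels = build_levels(self.chunks)
        self.collateral = collateral

    def respond(self, index):
        return self.chunks[index], prove(self.levels, index)


def daily_audit(root, provider, index=None, reward=1, penalty=10):
    """Challenge one chunk; reward a valid proof, slash collateral otherwise."""
    if index is None:
        index = secrets.randbelow(len(provider.chunks))
    chunk, proof = provider.respond(index)
    if verify(root, chunk, index, proof):
        provider.collateral += reward
        return True
    provider.collateral -= penalty
    return False


chunks = [b"chunk-a", b"chunk-b", b"chunk-c"]
root = build_levels(chunks)[-1][0]   # all the auditor needs to keep

honest = Provider(chunks, collateral=100)
faulty = Provider(chunks, collateral=100)
faulty.chunks[1] = b"bit-rotted"     # simulate silent corruption
```

An honest provider passes any challenge and earns the reward, while a provider whose copy has degraded fails when the damaged chunk is challenged and loses part of its collateral.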

Since the collection was intended for archival purposes rather than frequent retrieval, the storage model was optimized for cold storage, prioritizing long-term retention over accessibility. Metadata was preserved alongside the encrypted files, maintaining contextual integrity for future retrieval. To sustain decentralized availability, storage contracts required periodic renewals, necessitating ongoing coordination between the Shoah Foundation, PiKNiK, and the network of storage providers.

Verify – Archiving or Publishing

The final stage, Verify, ensured that the archive remained intact, tamper-proof, and accessible over time. Filecoin’s Proof-of-Spacetime validation automatically conducted daily integrity checks, confirming that storage providers continued to maintain the archive. These audits were performed without revealing file contents, preserving privacy and security, by using what is known as a cryptographic hash – a digital fingerprint that identifies a piece of data without revealing its content. The audits took advantage of a useful property of cryptographic hashes – if a piece of data changes in any way, no matter how slightly and whether due to corruption or an intentional edit, its hash changes as well.

PiKNiK developed a custom monitoring dashboard that provided the Shoah Foundation with real-time visibility into the status of stored files, retrieval performance, and hash consistency.

Verification was further strengthened through tamper detection methods that relied on hash comparisons. Upon retrieval, a file would be re-hashed and compared against its original fingerprint. Any alterations or corruption would result in a mismatch, immediately flagging data inconsistencies. This rigorous verification approach safeguarded the archive against the growing threat of synthetic media and AI-generated forgeries, ensuring that the testimonies remained authentic and distinguishable from manipulated content.
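The retrieve, re-hash, and compare check can be sketched in a few lines. This is a minimal illustration assuming SHA-256 fingerprints recorded at ingest time; the helper names `file_sha256` and `matches_fingerprint` are hypothetical and the file is a temporary stand-in, not archive data.

```python
import hashlib
import hmac
import os
import tempfile


def file_sha256(path: str, buf_size: int = 1 << 20) -> str:
    """Hash a file in fixed-size reads so large files never sit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for block in iter(lambda: fh.read(buf_size), b""):
            digest.update(block)
    return digest.hexdigest()


def matches_fingerprint(path: str, original_hex: str) -> bool:
    return hmac.compare_digest(file_sha256(path), original_hex)


with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "testimony.bin")
    with open(path, "wb") as fh:
        fh.write(b"archived testimony bytes")

    fingerprint = file_sha256(path)          # recorded when the file is stored
    intact = matches_fingerprint(path, fingerprint)

    # Flip a single bit in the first byte to simulate corruption or an edit.
    with open(path, "r+b") as fh:
        first = fh.read(1)
        fh.seek(0)
        fh.write(bytes([first[0] ^ 0x01]))

    tampered = matches_fingerprint(path, fingerprint)
```

Here `intact` is true and `tampered` is false: a one-bit change is enough to produce a completely different digest, which is what makes the comparison a reliable tamper flag.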

Learnings

First mover challenges

Preparation for this project began in 2018, preceding Filecoin’s mainnet launch. In this sense, the Shoah Foundation and its partners were testing the frontier of Web3 preservation before the supporting infrastructure was fully mature. The project successfully demonstrated the feasibility of securing the Visual History Archive using decentralized storage. Although the scope was ultimately limited to about half a dozen nodes, this was still an unprecedented achievement at the time, possibly the largest cultural collection ever committed to the decentralized web.

The team met its three-year preservation goal, structured around carefully designed tokenomics, but long-term sustainability depended on the broader decentralized ecosystem. Once the initial contracts concluded, continued storage faced the realities of energy demands, operational costs, and provider incentives. The project deliberately focused on integrity and redundancy of files; aspects like retrieval optimization and cost-of-service sustainability remained outside its scope.

The project used mezzanine (lower-resolution) files for distribution across Filecoin nodes, balancing resilience with feasibility. This approach allowed many providers to participate and helped distribute fragments of the collection worldwide across diverse configurations. However, the decision highlighted the ongoing trade-offs between file size, accessibility, and storage economics in Web3 environments.

Data Preparation is the Critical Phase

In the Filecoin ecosystem, preparing data for storage (rather than the storage itself) represents the greatest challenge. This highlights the value of specialized service providers like PiKNiK who can coordinate between data preparation and storage providers to ensure successful implementation.

While anyone can store files in the Filecoin network, significant data management is still required: mapping file names to CIDs (Content Identifiers); tracking storage deal durations and lifecycle management of files; encrypting and processing raw data into compatible CIDs; handling deal renewal costs; managing storage provider relationships; and providing visibility to file status and retrieval processes. These complex preparation requirements underscore why organizations undertaking large-scale preservation projects benefit from partnerships with technical specialists experienced in Web3 environments.
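Part of this bookkeeping, mapping file names to CIDs and tracking when storage deals lapse, can be sketched with a small in-memory manifest. Everything in the example is hypothetical: the class and function names, the file names, the placeholder CIDs, the provider labels, and the 30-day renewal window. A production system would more likely keep this in a database and query deal state from the network.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class Deal:
    provider: str   # label for the storage provider holding this copy
    expires: date   # date the storage deal ends unless renewed


@dataclass
class ArchivedFile:
    name: str                      # original file name
    cid: str                       # content identifier assigned at ingest
    deals: list = field(default_factory=list)


def deals_needing_renewal(files, today: date, window_days: int = 30):
    """List (name, cid, provider, expiry) for deals ending within the window."""
    cutoff = today + timedelta(days=window_days)
    due = [
        (f.name, f.cid, deal.provider, deal.expires)
        for f in files
        for deal in f.deals
        if deal.expires <= cutoff
    ]
    return sorted(due, key=lambda row: row[3])


# Placeholder entries for illustration only.
manifest = [
    ArchivedFile("testimony-0001.mp4", "cid-placeholder-1", [
        Deal("provider-a", date(2024, 1, 15)),
        Deal("provider-b", date(2024, 6, 1)),
    ]),
    ArchivedFile("testimony-0002.mp4", "cid-placeholder-2", [
        Deal("provider-a", date(2024, 1, 5)),
    ]),
]

due = deals_needing_renewal(manifest, today=date(2024, 1, 1))
```

Sorting by expiry date puts the most urgent renewals first, and keeping the file name beside the CID preserves the link between human-readable catalog entries and content-addressed storage.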

Strategic Planning Supersedes Technical Challenges

The most significant obstacles in the implementation of the preservation solution weren't technical but related to project management, requirements planning, and communication. Organizations should establish clear security frameworks and implementation timelines before beginning similar preservation projects.

Financial Commitment Must Be Well-Defined

Organizations should have stable, well-planned financial frameworks when adopting Web3 preservation technologies. This includes understanding both immediate implementation costs and long-term preservation expenses, especially given the potential volatility of emerging technological ecosystems. Broader ecosystem challenges, including the “crypto winter”, constrained the ability to expand further.

The Need for Cross-Compatibility

One gap that emerged clearly was the lack of S3-class compatibility, a standard that would have made the decentralized system more easily interoperable with existing archival and enterprise tools. Without this, bridging workflows between traditional storage environments and decentralized networks proved more complex than anticipated.

A Positive Experience and Next Steps

Building on the ground-breaking preservation of the Visual History Archive on Filecoin, USC Shoah Foundation is now expanding its Web3 strategy. The Foundation plans to add 2 PiB from its innovative Dimensions in Testimony collection, which features interactive testimonies allowing viewers to converse with recordings of survivors.

Additionally, work is underway to build a dedicated Filecoin Academic Node with a substantial 20 PB storage capacity, enabling the Foundation to not only store its collections but also actively participate in the decentralized network as a storage provider.

Archive

Also by this author

Anita Case Study

Art + Authenticity: A Case Study

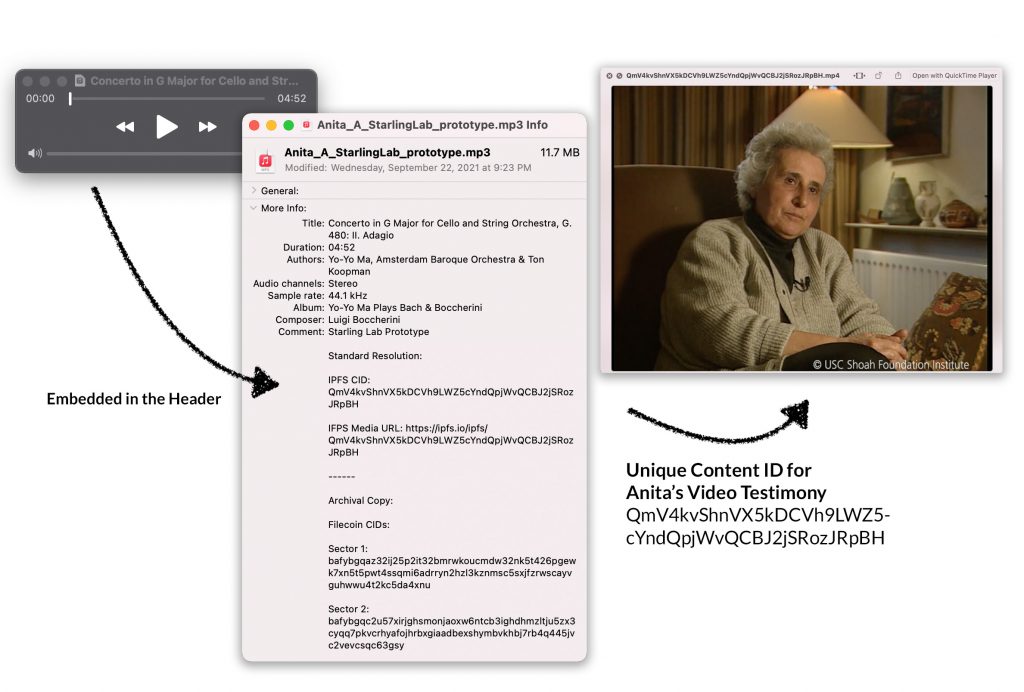

This recording of Yo-Yo Ma’s performance of the Adagio from Boccherini’s Cello Concerto is not just your typical MP3 file.

Starling Lab Staff

Reading Time: 1 min

This MP3 file is a vessel.

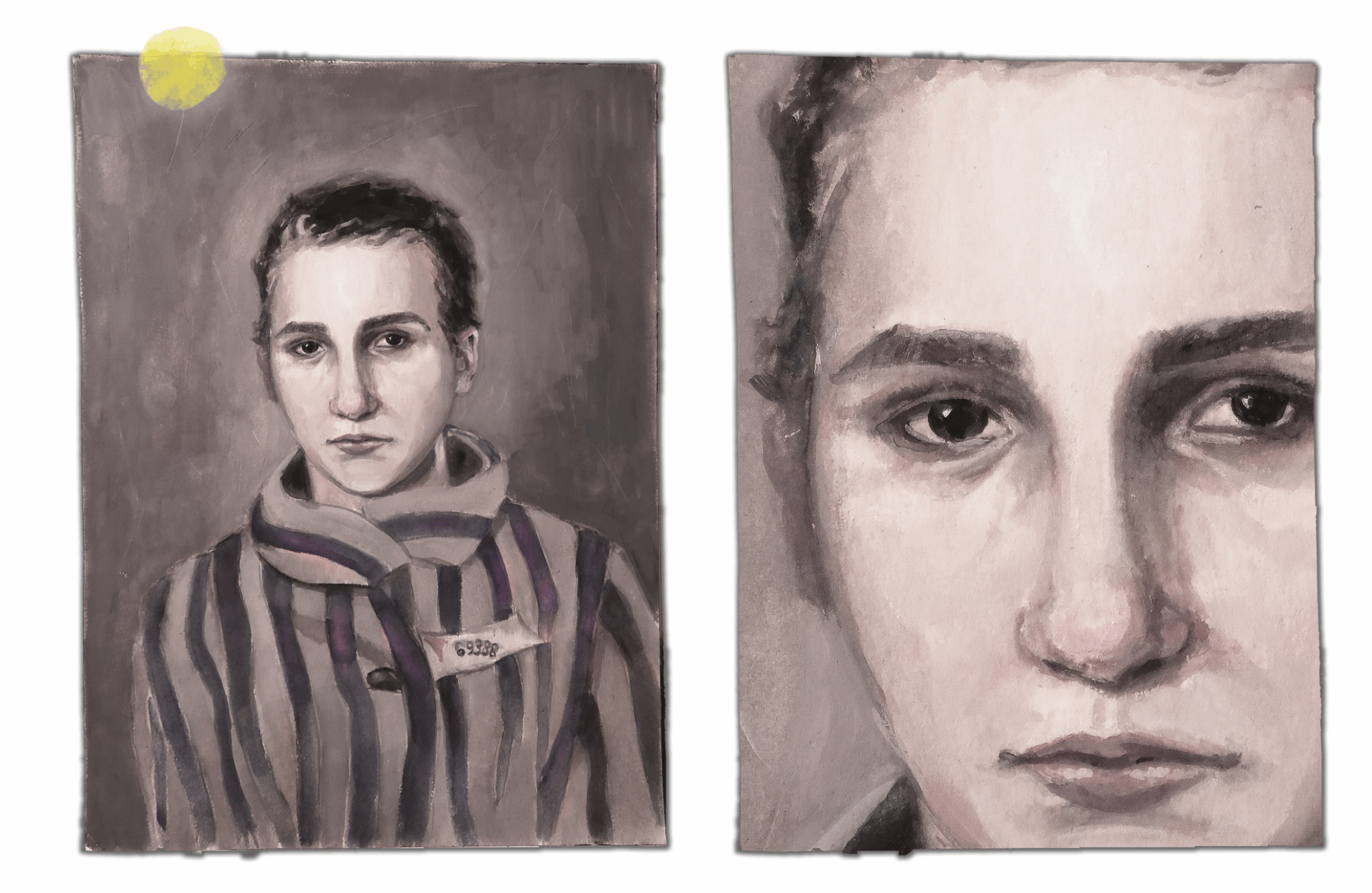

It holds within it, through the language of music, the incredible story of how Anita Lasker-Wallfisch survived the horrors of the Holocaust.

Read our Case Study – and download your own copy of the audio file to preserve the memories it contains.