Safeguarding History: Preserving Armenian Cultural Heritage on the Decentralized Web

Our mission is to securely capture, store, and verify the world’s most vulnerable digital records. Today, we are proud to announce a significant milestone in that mission: the successful preservation of several terabytes of critical data from the TUMO and Armenian Cultural Heritage Institute’s Scanning Project. This data is now securely stored at the USC Digital Repository, part of the USC Libraries – our academic co-anchor.

This collaboration represents more than just data storage: it is a vital effort to protect cultural memory in the face of conflict and erasure.

Preserving “Digital Twins” of Endangered Sites

The data we have preserved consists of high-fidelity 3D scans – including photogrammetry and laser scan data – of Armenian cultural heritage sites located in the Artsakh (Nagorno-Karabakh) region. The collection includes original raw photographs and 3D models of monasteries, churches, and monuments, many of which are currently inaccessible or at risk of destruction due to ethnic cleansing policies carried out by Azerbaijani authorities in the region (see the EU Parliament resolution and Caucasus Heritage Watch at Cornell University for more).

By creating and preserving these “digital twins,” TUMO, the Armenian Cultural Heritage Institute, and Starling Lab are ensuring that even if the physical sites are damaged or erased, accurate and immersive records will survive for future generations of historians, researchers, and the public. This initiative directly counters the threat of cultural erasure by creating an immutable, decentralized record of this heritage.

Powered by World-Class Preservation Infrastructure

To ensure these records are resilient against censorship, data loss, and single points of failure, we have stored them on the USC Filecoin Node.

More than a simple server, this node is in fact a massive 22-petabyte storage facility integrated into both the USC Digital Repository and the Filecoin network. It leverages cryptographic proofs to guarantee data durability while offering the immense scale required for high-fidelity 3D historical archives.

It is operated by us at Starling Lab and the USC Digital Repository, a service of the USC Libraries. While the Filecoin node represents the cutting edge of decentralized storage, it is just one part of the Libraries’ deep expertise in preservation and archiving. By housing this node within a leading research university, we combine the innovation of Web3 protocols with the rigorous preservation standards developed over decades by archivists and librarians.

We have committed to preserving this archive for 20 years, ensuring that the history of Armenian heritage in Artsakh (Nagorno-Karabakh) remains accessible in the long term – at no cost to TUMO, the custodians of that archive.

The Frontier of Spatial Intelligence

This preservation effort also dovetails with our broader research into 3D and spatial intelligence. As we move beyond 2D images, technologies like Neural Radiance Fields (NeRFs) and 3D Gaussian Splatting allow us to transform standard photographs into fully immersive, navigable 3D environments.

By applying our “Authenticity by Design” framework to these 3D datasets, we are not just storing files; we are establishing a root of trust for spatial experiences. This allows us to verify that a 3D reconstruction of a heritage site is faithful to the original scans and has not been manipulated. As we continue to develop these tools, the TUMO collection will serve as a foundational dataset for testing how we can authenticate and experience history in the metaverse.

Preserving such a collection is a powerful demonstration of how decentralized technology can serve humanity. By anchoring these records in decentralized storage backed by cryptographic proofs, we are working to ensure that the history of Armenian heritage in Artsakh (Nagorno-Karabakh) cannot be quietly deleted, denied, or forgotten.

Beyond the Blackout: Documenting Atrocities in Tigray's Forgotten War

Local journalists and healthcare professionals risked their lives to collect fragile digital and analog evidence of the 21st century's deadliest conflict.

Background

In response to the 2020-22 war in the Tigray region of northern Ethiopia and the government-imposed communications blackout and systematic blockade that obscured atrocities from external scrutiny, Tigrayan civil society actors launched a coordinated initiative to preserve digital evidence of war crimes and human rights violations.

Although formal restrictions have technically been lifted, the extensive destruction of telecommunications and power infrastructure, combined with the incomplete implementation of the Pretoria Agreement, has created a sustained, de facto blackout, leaving a significant portion of the population digitally isolated. To counter state censorship and mitigate the risk of evidentiary loss and historical erasure, the initiative employs a deliberate, multi-phased strategy that includes the collection, exfiltration, and archiving of fragile digital materials.

Conceived as both a protective measure and an act of resistance, the project advances three long-term objectives: (1) building a credible evidentiary base for future accountability and transitional justice processes; (2) protecting the historical record of affected communities against denial, manipulation, or revision; and (3) enabling ongoing research, digital forensics, and human rights analysis by scholars, practitioners, and advocates.

Context

The conflict that erupted in November 2020 in Ethiopia’s Tigray region rapidly devolved into one of the deadliest wars of the 21st century. Credible estimates indicate up to 600,000 civilian deaths, the vast majority not through direct combat but through starvation, preventable disease, and the collapse of essential services. More than 2 million people were internally displaced, nearly one million fled as refugees to neighboring countries, millions were subjected to mass starvation, and more than 100,000 were victims of various forms of sexual violence.

From the conflict’s outset, the Ethiopian government, in coordination with the Eritrean Defense Forces (EDF) and Amhara regional forces, imposed a systematic siege that functioned as a strategy of collective punishment. Essential services, including telecommunications, banking, electricity, fuel, and commercial supply chains, were deliberately severed. This weaponization of deprivation was compounded by the routine obstruction of aid convoys, the suspension or expulsion of relief organizations, and the denial of access to independent media and the expulsion of journalists. Bureaucratic restrictions and relentless checkpoint inspections deliberately impeded the delivery of food, medicine, and other life-sustaining resources. These integrated measures cut off vital remittances, halted commerce, prevented civilians from accessing critical care, and enforced a state of utter isolation. The siege was reinforced by a state-imposed information blackout, comprising the longest consecutive internet shutdown on record and coordinated digital disinformation and hate speech campaigns, which successfully obscured the scale of violations and shielded perpetrators from international scrutiny. All parties to the conflict have been accused of war crimes.

The severity of the atrocities has led legal scholars to conclude the conduct of the war may meet the legal threshold for genocide. The Tigrayan community has consistently described the campaign as a deliberate genocide. The pathway to accountability was systematically blocked: the Ethiopian government denied access to the UN's International Commission of Human Rights Experts on Ethiopia (ICHREE), and the Commission’s mandate was ultimately dissolved in October 2023. This outcome left grave crimes unexamined and institutionalized impunity, especially since institutions implicated in the violations retained positions of authority over the national transitional justice process.

It is within this systemic accountability vacuum that Tigrayan civil society actors mobilized to safeguard digital evidence of atrocities. As one civilian journalist stated, "The most precious asset is information now, not gold, and it needs to be protected." This atmosphere of fear, censorship, and violence created an urgent need for an independent, community-based effort to archive and preserve evidence of the atrocities being committed.

In late 2022, a member of our team traveled to Tigray to meet with a healthcare professional and a civilian journalist who had each spent more than a year risking their lives to document atrocities using whatever tools were available to them. Both relied heavily on their mobile phones to capture photos, videos, and testimonies, assuming the devices themselves were a safe form of storage. When asked about their preservation plans, they had none; they had been too focused on survival to consider what might happen if a screen cracked, a battery failed, or a phone was confiscated at a checkpoint. When digital capture wasn’t possible, they turned to paper and pen, methods even more vulnerable to loss.

Their stories were not unique. They reflected a stark and pervasive gap between the extraordinary courage of frontline documentarians and the technical knowledge required to ensure that evidence survives long enough to reach a courtroom. That gap is what gave rise to this initiative. In anticipation of denial, erasure, and the consolidation of state narratives, civil society actors began the systematic collection, exfiltration, and preservation of digital and analog evidence.

Framework

The Challenge

The team’s methodology was forged by the extreme hostility of the operating environment. Working with a small team and limited financial resources, every decision was a direct response to a specific set of constraints designed to prevent evidence from leaving the region. The key challenges included:

A Total Information Blackout

This was not just a matter of slow internet. The team’s data collection took place during the longest consecutive internet shutdown in history, creating a total information blackout that made digital transfer of evidence impossible. This forced a complete reliance on offline-first solutions. As partial connectivity resumed, the aggregation and transport of data began, but the team faced a new set of challenges. The state-controlled ISP actively blocked access to essential security tools, including the Proton suite, specific VPNs, and the Bitwarden password manager. Furthermore, in areas with connectivity like Mekelle, the connection speed was so low that it could not handle multimedia datasets; attempts to upload video evidence (over 1,500 files totaling 450 MB) would consistently time out.

Extreme Physical and Personal Risk

Moving data was a life-threatening activity, exposing the team to different risks across the operational chain:

Local Data Collectors: Their exposure centered on the immediate liability associated with the initial acquisition and transient possession of sensitive material. This vulnerability created a risk of surveillance and arbitrary detention during the collection process if caught.

Central Custodians: These managers, responsible for local data aggregation at a central site, faced a significant, persistent liability. The data was stored temporarily in private residences, meaning there was no secure facility for staging the evidence. Every piece of evidence constituted a constant threat of discovery via raid or accidental operational exposure.

Transporters: Individuals tasked with the physical movement of data for exfiltration encountered the most acute threat. They risked being stopped and searched at checkpoints, where finding a hard drive could lead to imprisonment on fabricated charges of espionage and terrorism. The risk only subsided once the evidence physically left the country.

Severe Resource Scarcity

The war created a severe scarcity of basic technology. Laptops and computers had been widely destroyed or looted, and the few devices that remained were often older models, unable to receive software upgrades and lacking sufficient storage. Key items like external SSDs were not just scarce in Tigray but unavailable for reliable purchase in Ethiopia as a whole. While a secondary market existed, it was too untrustworthy due to the high risk of malware or corrupted hardware. To mitigate this, the team adopted a strict procurement strategy: all equipment was purchased new in the United States. This approach addressed the dual challenges of trust – ensuring devices were not tampered with – and logistics, as it was less of a red flag than a bulk purchase in the region. A team member then transported all hardware personally in their carry-on luggage, maintaining a secure chain of custody and bypassing the risks of confiscation or damage associated with checked baggage.

Ensuring Data Integrity Across a Human Chain

With no digital transfer options, data had to be physically transported via a "human chain of custody." This method was not only a security challenge but also a significant logistical gamble. The entire process was dependent on international travel from the US to Tigray and back – a journey with no direct flights, requiring layovers in Addis Ababa. As a result, the mission was constantly at the mercy of potential flight cancellations, which could trap the data courier and halt the extraction process entirely. The team, therefore, had to ensure not only that the data was protected from tampering, corruption, or loss, but that its perilous physical journey could be completed at all.

The Prototype

The project's methodology was built around a three-phase lifecycle for each piece of evidence: secure capture, long-term preservation, and availability for future analysis – echoing our Starling Framework. This framework was designed to be resilient in a low-tech, high-risk environment.

Secure Capture of Field Data

The data capture strategy prioritized security and ease of use for contributors on the ground. The team recognized that complex security protocols could increase the risk of human error, so user-friendly tools were selected. This approach ensured that journalists and civil society members could focus on documenting events without being hampered by technical complexity. All equipment was successfully transported to Tigray, and the local team received training in encryption, data security best practices, and storage resilience through redundancy.

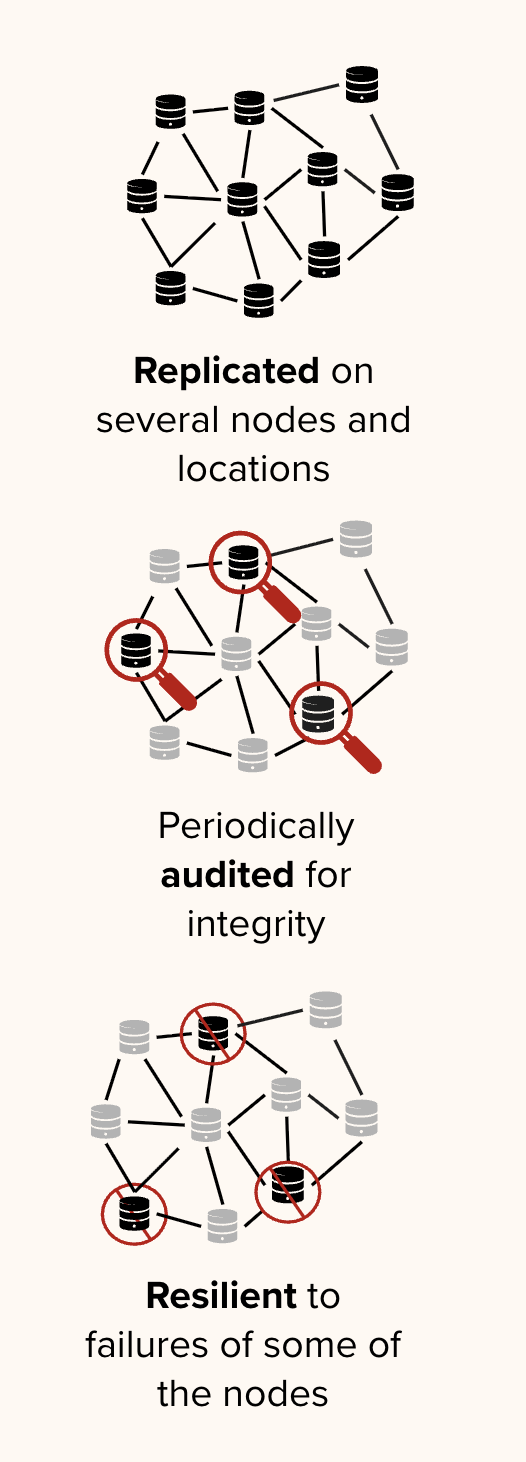

A 3-2-1 Archival Strategy for Preservation

For long-term storage, the team adopted the 3-2-1 archival best practice: maintaining three copies of the data, on two different types of media, with at least one copy stored off-site. This strategy was chosen to mitigate the risks of data loss due to hardware failure, corruption, or physical seizure of any single data store. This distributed approach ensures redundancy and the long-term availability of the evidence.

Technology

The project's technical methodology was designed for an environment of extreme hostility and minimal resources. Every tool and process was chosen to prioritize user safety, data integrity, and resilience, following the Starling Framework principles of Capture, Store, and Verify.

However, it's critical to define the boundaries of this workflow. The cryptographic integrity measures begin the moment a contributor's data is ingested into our central MacBook in Tigray. This starting point was a necessary adaptation to the realities of sourcing; because some data was ingested off-the-record from sensitive sources via direct drive copies or downloads, formal handoffs using digital signatures or email were impossible. The journey of the data before this point – from the original capture device to the contributor – is not cryptographically documented in this process. Our chain of custody therefore covers a "known universe" ranging from ingestion to final archival storage.

This can be visualized as follows:

- Phase 1 (Undocumented): Event Capture → Initial Transfer to Contributor Storage

- Phase 2 (Documented & Verified): Ingestion → Hashing → PGP Signing → Timestamping

- Phase 3 (Out of Band): Phase 2 Files → Transport → Copy to Redundant Storage

To verify: retrieve the files from redundant storage, decrypt them back to the original files, and check them against the Phase 2 records.
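
The verification step can be sketched in a few lines of Python. This is a minimal illustration, not the team's actual tooling: it assumes the retrieved files have already been decrypted into a local folder, and that the Phase 2 record is a plain-text list with one SHA-256 hash and one relative path per line. The function names `sha256_of`, `parse_manifest`, and `verify_tree` are hypothetical.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large video files never sit fully in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def parse_manifest(text: str) -> dict[str, str]:
    """Map each relative path to its recorded hash.

    Only the first run of whitespace is treated as a separator, because
    paths in the manifest may themselves contain spaces.
    """
    entries = {}
    for line in text.splitlines():
        if not line.strip():
            continue
        recorded_hash, rel_path = line.split(maxsplit=1)
        entries[rel_path] = recorded_hash.lower()
    return entries


def verify_tree(root: Path, manifest_text: str) -> dict[str, list[str]]:
    """Compare the files under `root` with the manifest and report differences."""
    report = {"ok": [], "mismatch": [], "missing": []}
    for rel_path, recorded_hash in parse_manifest(manifest_text).items():
        candidate = root / rel_path
        if not candidate.is_file():
            report["missing"].append(rel_path)
        elif sha256_of(candidate) == recorded_hash:
            report["ok"].append(rel_path)
        else:
            report["mismatch"].append(rel_path)
    return report
```

A clean result has empty `mismatch` and `missing` lists. The sketch only checks files named in the record; files present on disk but absent from the record would need a separate pass.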

Capture and Initial Processing

The capture phase focused on safely ingesting data from contributors and securing it for transport. The primary challenge was the trade-off between ideal security and the practical usability of tools for non-technical journalists operating under extreme stress.

Choice of Field Hardware

The core field kit included a new 2023 16-inch MacBook Pro and multiple Samsung T7 Shield SSDs. The MacBook Pro was specifically requested by a core contributor who was already familiar with the product from their video journalism work, minimizing the learning curve. It was chosen for its powerful graphic rendering and storage capabilities, which were essential for handling high-resolution media. Procuring a new device also ensured it would be compatible with software upgrades and other devices over a multi-year horizon. The Samsung T7 Shield SSDs were selected for their durability and built-in AES 256-bit hardware encryption, providing a crucial layer of security during transit.

Use of Encryption and Usability

We made a deliberate choice to prioritize simple, reliable encryption over more complex, error-prone alternatives. For the laptop, we relied on the MacBook’s native FileVault for "set-and-forget" full-disk encryption, which minimized the risk of human error under duress.

For the external SSDs, the team used symmetric encryption, storing the shared passwords in Bitwarden. While asymmetric encryption was considered (encrypting files separately for each person), it was rejected because it would have made key management too challenging across stakeholders. The symmetric approach was also more communal; since everyone had the same key, it made the team feel more comfortable, a deliberate trade-off for a more manageable and inclusive security posture.

Broad Use of Secure Communications

All team coordination and sensitive communications were conducted via Signal and WhatsApp (the most widely used messenger locally), both of which rely on the open-source Signal Protocol for end-to-end encryption.

Store

The storage strategy was built on the archival best practice of 3-2-1 (three copies, on two different media, with one off-site) to ensure redundancy and resilience against data loss. The choice of storage solutions was the result of a formal evaluation that prioritized security and censorship resistance.

| Criteria | Dropbox / Google Drive | Proton Drive / Tresorit | AWS S3 / B2 |
| --- | --- | --- | --- |
| Usability | High | Medium | Low |
| Privacy | Low (No E2EE) | High (E2EE by default) | Medium (User-configured) |
| Censorship Risk | High | Low (Swiss Jurisdiction) | High (US Jurisdiction) |

Based on this analysis, the following three-tiered storage model was implemented:

- Primary Cloud Storage: Proton Drive was selected for its default end-to-end encryption and its legal jurisdiction in Switzerland, which offers strong privacy protections. This served as the primary off-site copy.

- Local Device Storage: Two separate Mac laptops (one in Tigray, one in the US) held complete, encrypted copies of the archive.

- External Physical Media: A dedicated external hard drive stored the third copy, providing an additional layer of media diversity.
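
As a rough illustration, the 3-2-1 rule can be expressed as a small check over an inventory of copies. The sketch below is hypothetical: the `Copy` class, the `meets_3_2_1` function, the medium labels, and the `off_site` flags are assumptions made for the example (the primary site is taken to be Tigray, and the external drive is conservatively treated as on-site because its location is not stated here).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Copy:
    label: str      # human-readable name of the copy
    medium: str     # kind of storage, e.g. "cloud" or "external-drive"
    off_site: bool  # True if held away from the primary working site


def meets_3_2_1(copies: list[Copy]) -> bool:
    """True if there are >= 3 copies, on >= 2 media, with >= 1 off-site."""
    media = {copy.medium for copy in copies}
    has_off_site = any(copy.off_site for copy in copies)
    return len(copies) >= 3 and len(media) >= 2 and has_off_site


# Illustrative inventory loosely modeled on the storage tiers listed above.
# The medium strings and off_site values are assumptions, not project data.
archive_copies = [
    Copy("Proton Drive", "cloud", off_site=True),
    Copy("Mac laptop (Tigray)", "laptop-ssd", off_site=False),
    Copy("Mac laptop (US)", "laptop-ssd", off_site=True),
    Copy("External hard drive", "external-drive", off_site=False),
]

print(meets_3_2_1(archive_copies))
```

A check like this is only as good as the inventory behind it; its value is in making it obvious when the loss or seizure of one device would drop the archive below the rule.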

Verify Archiving or Publishing

The verification phase was designed to create a transparent, auditable record proving that the collected data was not tampered with after ingestion. This process established a cryptographic chain of custody for our "known universe" of data – from the moment we received it to its final archival.

Step 1: Hashing for Integrity

The first step was to create a unique cryptographic "fingerprint" for every single file. Using the CLI, we generated a complete manifest of SHA-256 hashes. This provided a baseline record of the dataset's exact state at the time of ingestion.

$ find . -type f -exec shasum -a 256 {} \; > TigrayData_V1_fingerprints.txt
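
Where `find` and `shasum` are not available, a roughly equivalent manifest can be produced with Python's standard library. This is a sketch under stated assumptions, not the command the team ran: the function name `build_manifest` and its `exclude` parameter are hypothetical, entries are sorted for reproducibility (which `find` does not guarantee), and the output mimics the `<hash>  ./<path>` layout that `shasum` prints.

```python
import hashlib
from pathlib import Path


def build_manifest(root: Path, exclude: frozenset[str] = frozenset()) -> str:
    """Return one `<sha256>  ./<relative path>` line per regular file under root.

    `exclude` holds relative POSIX paths to skip, for example the manifest
    file itself if it is written inside the same folder.
    """
    lines = []
    for path in sorted(p for p in root.rglob("*") if p.is_file()):
        rel = path.relative_to(root).as_posix()
        if rel in exclude:
            continue
        digest = hashlib.sha256()
        with path.open("rb") as handle:
            # Read in 1 MiB chunks so large media files are never fully loaded.
            for chunk in iter(lambda: handle.read(1 << 20), b""):
                digest.update(chunk)
        lines.append(f"{digest.hexdigest()}  ./{rel}")
    return "".join(line + "\n" for line in lines)
```

Writing the result to a file outside the dataset folder, or passing the manifest's own name in `exclude`, keeps the manifest from listing a hash of itself.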

Step 2: Signing for Authenticity

The resulting *_fingerprints.txt manifest was then cryptographically signed using an organizational PGP key. This signature acted as a digital seal, proving that the manifest itself is authentic and has not been altered since it was created by our team.

The plaintext signature remains publicly verifiable with the .sig file: anyone can verify that the signature was applied by this specific PGP key – without requiring decryption.

$ gpg --import keys/public.asc

key 55082AE8487FB65C: "Tigray Archive <tigrayarchive@proton.me>"

Total number processed: 1

$ gpg --verify signatures/TigrayData_V1_fingerprints.txt.sig data/TigrayData_V1_fingerprints.txt

gpg: Signature made Wed Oct 15 22:44:18 2025 CEST

using RSA key 9945877FA0290DB20547B81C55082AE8487FB65C

Good signature from "Tigray Archive <tigrayarchive@proton.me>"

(...)

Step 3: Timestamping for Proof of Existence

To establish an immutable, publicly verifiable record of when this dataset existed, the team used OpenTimestamps—a service that anchors cryptographic proofs to the Bitcoin blockchain.

During active conflict operations, the team timestamped the encrypted signed manifest (.sig.gpg file). This served dual purposes: it created a blockchain-anchored proof that a specific sealed dataset existed at a specific time, while keeping the file list confidential to protect sources, operational security, and the safety of individuals still documenting evidence under threat.

For public release and independent verification, the team is now publishing the unencrypted signed manifest with a new OpenTimestamps proof. This enables anyone – researchers, investigators, legal practitioners, journalists – to verify the exact file list and SHA-256 hashes without requiring decryption keys or trusting intermediaries.

$ cat TigrayData_V1_fingerprints.txt

a50d392fb51080c1052991e17cd1ac8b1d642fc36dc766a771a3ac2666773605 ./ttv jounalist/himemte shekore.mxf

a2a667af88b9f6443734011ade36f99c577f27f473bd263813ddf008cd500f62 ./ttv jounalist/emahoy tiemtu.MXF

...

Commits are signed:

commit 93b45d...13276897 (HEAD -> main, origin/main)

Signature made Sat Oct 25 09:05:30 2025 CEST

using RSA key B826CF0ED10B023C411879FD9B66FE79F29FFF9C

Good signature from "Tigray Archive <tigrayarchive@proton.me>" [ultimate]

Author: Basile Simon <tigrayarchive@proton.me>

Date: Sat Oct 25 09:05:30 2025 +0200

Initial commit

The companion GitHub repository to this case study holds the public cryptographic proofs that allow any third party to independently verify the integrity and provenance of the collected data. It contains four key files that work together to establish provenance:

- TigrayData_V1_fingerprints.txt – The complete manifest, listing the SHA-256 hash for every file in the dataset.

- TigrayData_V1_fingerprints.txt.sig – A GPG signature of the manifest file, creating a tamper-evident seal.

- TigrayData_V1_fingerprints.txt.sig.ots – An OpenTimestamps proof, which anchors the signature to the Bitcoin blockchain to prove when the manifest was signed.

- pubkey.asc – The Tigray Archive public GPG key, the counterpart of the private key used to create the signature. A user must import this key to verify the manifest's authenticity.

Learnings

Adapting to a Low-Tech, High-Risk Environment

This project underscored the necessity of adapting security protocols to the realities of the operating environment. The team learned that an emphasis on offline-first solutions was more effective than complex digital tools that could fail due to infrastructure limitations. This reliance on physical transport, robust hardware, and user-friendly encryption was successful for nearly every aspect of the workflow, with the notable exception of timestamping, which remained a challenge due to the severe connectivity issues.

The Importance of Trust in Community-Based Archiving

Trust was the cornerstone of this initiative. In a conflict affecting a tight-knit community like Tigray, every decision—from selecting tools to identifying data sources and training local journalists—was made collectively. Nothing proceeded without the full consensus of community members.

This collaborative structure was possible because the initiative grew from years of voluntary work and credibility earned through lived experience. With fewer than six million people and minimal degrees of separation between families, everyone in Tigray is traceable within the social and political landscape. This closeness fostered trust but introduced risks absent with outside actors. Local sharing carries cultural and social sensitivities, such as the fear of bringing shame to families of survivors, particularly in cases of sexual violence. It also risks being misinterpreted: grassroots evidence collection can be viewed as operating outside official channels, raising suspicion that shared data might be commodified or used for personal gain.

In this environment, consensus-based decision-making and trust as the organizing principle were essential and enabled the work.

This collaborative approach was critical for the project's success and the ethical stewardship of highly sensitive data. The project demonstrated that technology is merely a facilitator; the real work lies in building human networks of trust. Interestingly, diaspora and foreign entities were often trusted more than local journalists, as they were perceived to have greater influence in justice and accountability processes.

A Need for Accessible and Resilient Tools

The challenges faced in Tigray highlight a broader need for cheap, accessible, and open-source tools designed for evidence preservation in resource-constrained environments. The success of this project relied on commercially available, consumer-grade products that were simple to use.

The project’s most significant technical challenge was balancing security best practices with the reality of the operating environment. While tools like VeraCrypt (for creating hidden, encrypted volumes) and TailsOS (for anonymous browsing) were considered, they were ultimately rejected. In a high-stress conflict zone with non-technical users, compounded by the fact that English was the local team's second language, the complexity of these tools was deemed a liability; a single mistake in their use could lead to the permanent loss of critical data.

This led to a crucial and deliberate compromise. The team encountered errors when using OpenPGP to encrypt large individual files and folders, which would have provided the most granular, asset-level security. Instead of risking data corruption or overwhelming users with complex command-line workarounds, the team opted for simpler, device-level encryption using the native and user-friendly FileVault on the MacBooks. While technically less secure than encrypting each file individually, this approach provided robust, reliable protection for the entire dataset and, most importantly, minimized the risk of human error, which was identified as the greatest threat to the data.

Finally, the initial assumption that large, terabyte-sized drives would be optimal for storage provisioning proved incorrect. We initially provisioned drives ranging from 1TB to 4TB. However, optimal utilization dictated that drives with significantly smaller storage capacities, approximating 128GB, would have been more effective for field distribution. The rationale was twofold: the collected data was highly distributed, and local journalists required dedicated copies. Provisioning 1TB+ units resulted in low storage utilization and substantial empty space. The team ultimately accepted the compromise of larger drives, as deploying the necessary volume of 128GB units would have substantially increased the logistical burden and operational risk associated with transporting a higher equipment count.

User Experience as a Security Imperative

The project revealed that in a high-stakes field environment, user experience is a critical component of security. As noted above, we initially considered deploying gold-standard security tools such as TailsOS for anonymity and VeraCrypt for creating hidden encrypted containers.

However, in practice with non-technical journalists operating under extreme stress, these tools proved to be a liability. A complex interface with multiple steps increased the likelihood of a user making a mistake that could fail to protect the data, trigger unnecessary operational redundancy (increasing costs and harming team morale), or, worst of all, lead to permanent data loss. We learned that the greatest immediate risk was not a sophisticated adversary cracking our encryption, but a well-meaning user accidentally locking themselves out of a hidden volume forever.

As a result, we actively chose simpler, more intuitive tools. Showing a journalist how to enable the native FileVault encryption on their MacBook – a one-time, set-and-forget process – was infinitely more effective and secure in practice than training them on a complex, multi-step process they would have to remember under duress. This experience demonstrated that the most secure tool is often the one that a user can reliably operate correctly every single time. Crucially, the tools had to be zero-cost solutions. Given that the operating environment in Tigray is largely cash-based and lacks widespread digital payment infrastructure, any solution requiring a subscription or online purchase was deemed inherently sub-optimal.

The Human Cost and Cognitive Burden

Beyond the technical and logistical complexities, the project illuminated the human toll of evidence preservation in conflict settings. Civil society actors bore a dual burden: conducting high-risk documentation under constant security threats while also carrying the weight of their own trauma as survivors or witnesses and engaging directly with other survivors of violence. This required navigating a delicate balance between the imperative to document and the ethical obligation to center survivor dignity and sensitivity. High-stress conditions, fractured infrastructure, and perpetual risk of exposure compounded the psychological strain. In this context, user error and burnout were not incidental operational risks but outcomes of the challenging environment itself.

For future efforts, two areas for improvement stand out. First, dedicated Mental Health and Psychosocial Support (MHPSS) resources should be integrated into operational design to address the cumulative psychological burden on frontline teams. Second, establishing core staffing structures with individuals more operationally removed from the conflict can help sustain continuity, reduce direct exposure of local actors, and ensure the work is not solely borne by those most at risk.

Recognizing psychosocial resilience as inseparable from technical security is central to the integrity and sustainability of evidence preservation in conflict environments. The most important insight was that resilience and trust proved as critical to data integrity as any protocol or hardware. Technical safeguards alone could not succeed without deliberate attention to human and psychosocial dimensions.

Archive & Appendix: Case Summaries of Documented Atrocities

The following summaries detail five incidents documented in this dataset. This appendix is intended to serve as a resource for researchers, investigators, and legal practitioners by providing foundational information and leads for further inquiry into alleged war crimes and crimes against humanity. All listed incidents have been subject to public reporting. Utmost care must be taken to protect the identity of sources when utilizing any related data.

Researchers should reach out to info@starlinglab.org to discuss access to the documentation.

Case 1: Debre Abay Monastery Massacre

- Date: January 5-6, 2021

- Location: Debre Abay Monastery, Mai Harmaz, Tigray

- Incident: Massacre of civilians at the monastery. The events were recorded by members of the Ethiopian National Defense Forces (ENDF).

- Evidence Type: Videos

- Public Corroboration:

- https://www.tghat.com/tag/debre-abay-massacre/

- https://observers.france24.com/en/africa/20210312-ethiopia-tigray-video-massacre-war-mai-harmaz-investigation?ref=tw_i

- https://www.telegraph.co.uk/news/2021/02/19/should-have-finished-survivors-ethiopian-army-implicated-brutal/

- https://x.com/ViiHaakon/status/1358565080393203720

- https://www.ethiopiatigraywar.com/incident.php?id=I00108

- https://www.youtube.com/watch?v=xtiWchSghGE

Case 2: Togoga Massacre

- Date: June 22, 2021

- Location: Togoga, Debre Nazret, Enderta, Tigray

- Incident: Massacre of civilians following an airstrike on a local market.

- Evidence Type: Videos and Photos

- Public Corroboration: The bombing was reported by at least 12 international media outlets.

Case 3: Teka Tesfay Massacre

- Date: March 23, 2021

- Location: Teka-Tesfay, Sendada, Tsaeda Emba, Tigray

- Incident: Passengers from two minibuses traveling from Mekelle to Adigrat were stopped and summarily executed by ENDF soldiers. The aftermath was filmed by an individual in a passing vehicle.

- Evidence Type: Videos

- Public Corroboration:

Case 4: al-Nejashi Mosque Shelling and Looting

- Date: November 26, 2020

- Location: al-Nejashi Mosque, Negash, Kelete Awelallo, Tigray

- Incident: Shelling and subsequent looting of the historic al-Nejashi Mosque—a significant Islamic heritage site housing ancient artifacts—by Ethiopian and Eritrean troops.

- Evidence Type: Photos

- Public Corroboration:

Case 5: Destruction and Looting of Atse Yohannes High School

- Date: Ongoing during the period of military occupation.

- Location: Mekelle, Tigray

- Incident: The systematic destruction and looting of Atse Yohannes High School, which was occupied and used as a military base and detention site by Ethiopian government forces, representing a violation of the right to education and protection of civilian infrastructure.

- Evidence Type: Videos

- Public Corroboration:

Sam Gustman

Associate Dean, USC Libraries

CTO, Shoah Foundation

Sam Gustman has been chief technology officer (CTO) of the Shoah Foundation since 1994. Gustman is also associate dean and CTO at the USC Libraries where he oversees IT for the Libraries and started the USC Digital Repository.

As CTO of USC Shoah Foundation, Gustman provides technical leadership for the integration of the Institute’s digital archives into USC’s collection of electronic resources, ensuring the Archive’s accessibility for academic and research communities at USC and around the world. He is responsible for the operations, preservation and cataloging of the Institute’s 8-petabyte digital library, one of the largest public video databases in the world. He also manages the videography group responsible for collection of both traditional oral testimony and interactive AI testimony from genocide survivors and witnesses. His office offers technical support for universities and organizations that subscribe to the Institute’s Visual History Archive. His office also provides website support and duplication services for USC Shoah Foundation, which is part of the USC Dornsife College of Letters, Arts, and Sciences.

Gustman has twenty-eight years of leadership experience in information technology, twenty-six with USC Shoah Foundation. In addition to his responsibilities for USC Shoah Foundation, he has been the primary investigator on National Science Foundation research projects with a cumulative funding total of more than $8 million.

The Starling Lab for Data Integrity brings together scholars and industry to advance innovation in digital trust for journalism, history, and law.

Rahwa Berhe

Archive Accelerator Lead

Rahwa is a human rights defender, researcher, and archivist leveraging emerging technologies to champion freedom of expression, community memory, digital equity, and journalist safety. Collaborating with global NGOs, Rahwa has contributed to reports on human rights abuses and led programmatic efforts for government-funded transitional justice initiatives in post-conflict zones. Her work has been featured in the BBC documentary, “War Crimes: Are social media companies removing key video evidence?” In 2021, she founded Permachive, a technology, information and media organization democratizing the capacity to remember, reimagine, and reform for the benefit of humanity. Prior to this, Rahwa served as the SVP of Digital Assets and Markets at a global cryptocurrency exchange and has been in web3 since 2016. Rahwa’s current focus areas include censorship-resistant technologies, internet freedoms and safety, storytelling, and community archives.

Rahwa led the remote Archive Accelerator program, designed to empower community-based and community-led archives in protecting their digital assets from neglect and manipulation. She introduced the innovative Store Framework developed by Starling Lab, along with next-generation storage technologies, to help archivists assess the efficacy of decentralization and cryptography in enhancing their preservation strategies.

The Starling Lab for Data Integrity brings together individuals with experience in academic research, technological innovation, journalism, history, and law.

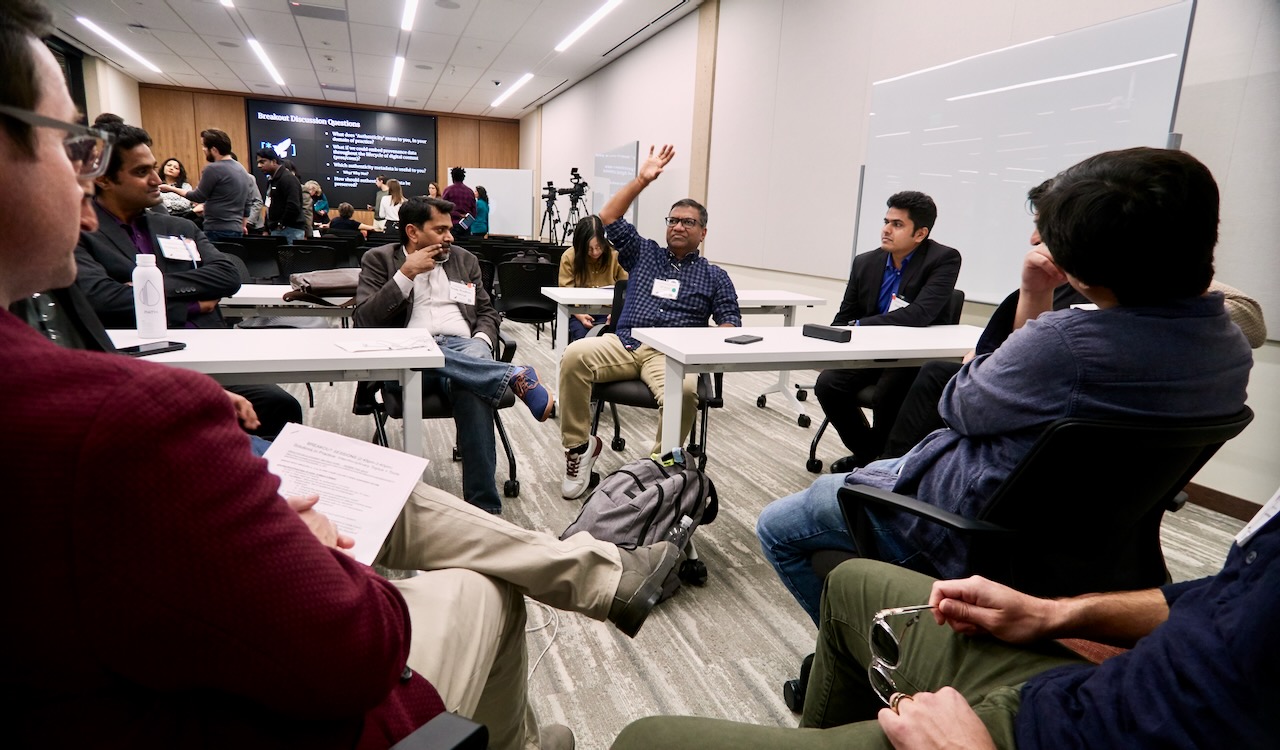

Building Trust in the Age of AI – A Starling & HAI Conference at Stanford

History

On October 22, the Starling Lab and Stanford HAI convened a diverse group of over 100 technologists, journalists, legal experts, and archivists at the Cecil H. Green Library for our conference, Trusting Digital Content in the Age of AI.

We want to extend a sincere thank you to everyone who took part – from speakers like Brewster Kahle and Zach Seward to attendees from the AP, BBC, and the Internet Archive. Together, we moved the conversation beyond the “arms race” of deepfake detection and toward “upstream” solutions: cryptography, provenance, and interoperable ecosystems of trust.

Thank you for helping us design a more authentic digital future. We look forward to continuing this vital work with you in 2025.

The event convened a diverse group of experts to address the erosion of trust in digital ecosystems. James Landay (Stanford HAI) and Tsachy Weissman (Starling Lab) opened the conference, framing the urgent need to design new systems for authenticity.

In the first general session, Jonathan Dotan moderated a discussion on building interoperable systems of trust. Zach Seward (New York Times) addressed how newsrooms are adapting to AI, while Riana Pfefferkorn (Stanford Cyber Policy Center) explored the legal challenges of deepfakes and the “liar’s dividend.” Brewster Kahle (Internet Archive) spoke on the critical mission of preserving digital history amidst technical threats.

A second panel, led by Vanessa Parli, reviewed the year in Generative AI. Michael Bernstein (Stanford) discussed the logic of social media platforms, Oren Etzioni (TrueMedia.org) presented on deepfake detection at scale, and Aimee Rinehart (Associated Press) shared insights on AI procurement and combating misinformation in journalism.

Later sessions focused on solutions, with Dan Boneh (Stanford) demonstrating cryptographic proofs for content authenticity, joined by Jeff Hancock and Margaret Hagan on the human and legal aspects of trust. Finally, Ann Grimes, Basile Simon, and Adam Rose led discipline-specific roundtables on tools for journalism, law, and archiving.

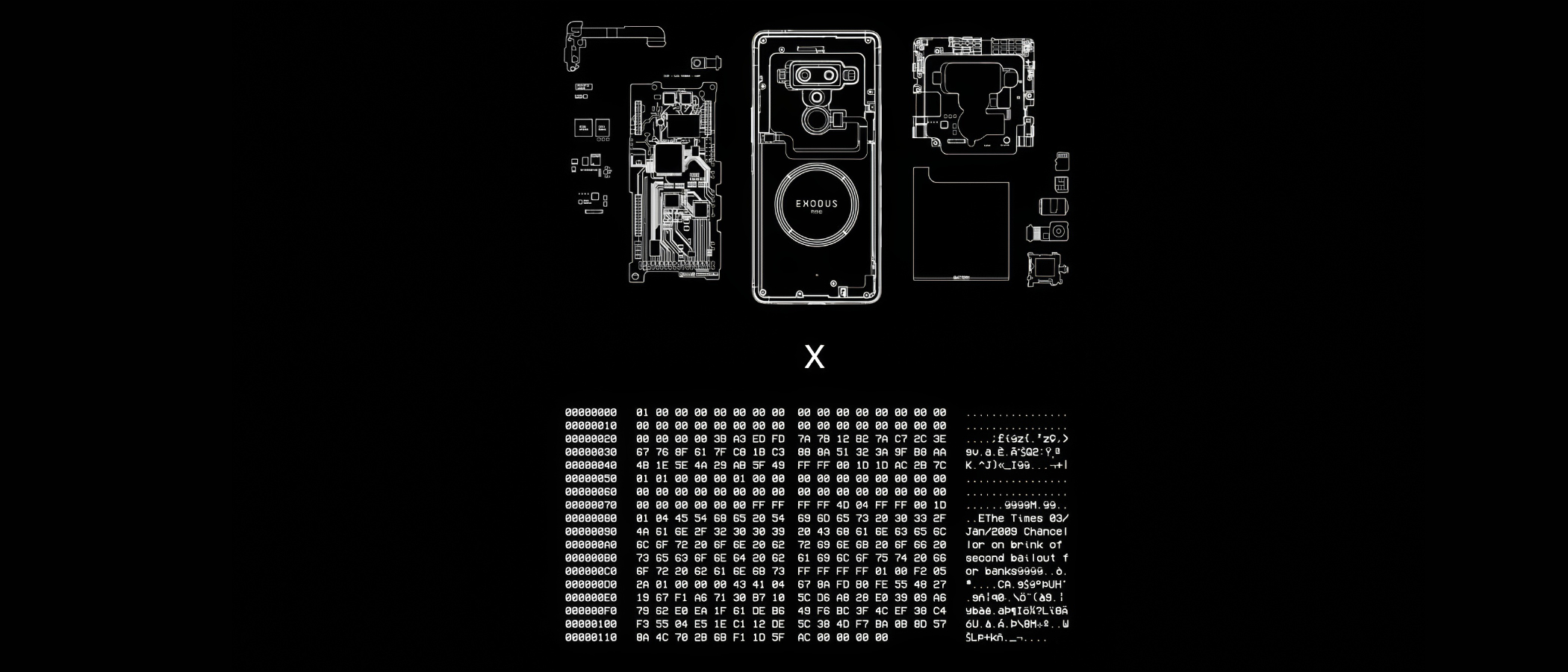

Secure Enclave Signing

History

This prototype establishes a hardware-based root of trust for digital media by cryptographically sealing assets inside a device’s protected silicon environment. It shifts the security boundary from vulnerable software to dedicated cryptographic processors, ensuring that signing keys remain inaccessible to external threats and that every asset is tied to an immutable hardware identity.

By anchoring provenance at the absolute point of capture, it creates a foundational “proof of origin” that is resilient against both digital manipulation and systemic distrust.

YEAR

2022

PARTNERS

HTC

Numbers and the Numbers Protocol

LINKS

– HTC Exodus 1S Phone

– The Starling Framework

The Problem

The ideal environment to manage digital signing is a cryptographic processor within the capture device, where the keys are never revealed and the system will only sign data within a predefined pathway. This ensures that all authenticated data carrying a signature from those keys unambiguously originates from the capture device. Unfortunately, hardware secure enclaves and similar technologies are not widely included in professional capture devices, or are implemented without firmware sufficient to support these digital signing use cases.

JOURNALISM

Anchoring trust in hardware rather than software helps shield reporters from deepfake accusations and gives them a digital “negative” as an origin record of their work.

HISTORY

By binding historical records to the unique physical identity of the capture device, it creates a resilient, verifiable archive that ensures the “first draft of history” cannot be silently altered by future actors.

LAW

Hardware-level signing establishes an airtight digital chain of custody and ensures cryptographic keys are physically isolated and never exposed, aiming to meet the most rigorous standards for legal admissibility.

The Solution

Starling Lab’s prototype utilizes Secure Enclaves (isolated cryptographic processors) to generate and store signing keys where they can never be revealed. This implementation creates a tethered workflow, pairing a digital camera with a secure-element-equipped device (such as the HTC Exodus 1S).

As media is captured, the system generates a cryptographic hash that is signed within the hardware’s protected environment, creating a tamper-evident record from the first millisecond of the asset’s existence.

This prototype serves as a technical blueprint for hardware vendors, advocating for a decentralized framework where privacy-respecting key management and data authentication are baked into the physical design of professional tools.

Distributed Storage

History

A decentralized infrastructure designed to ensure the long-term persistence and auditability of digital records by stripping centralized platforms of their outsized control over information.

Moving beyond fragile cloud silos, it cryptographically seals media and metadata across independent, multi-jurisdictional networks.

This framework shifts the preservation paradigm from blind trust in a single provider to a “proof of existence” model, where automated audits continuously verify that data remains untampered, replicated, and accessible.

YEAR

2021-25

PARTNERS

Filecoin

IPFS

Storacha

USC Libraries

The Problem

Traditional storage models rely on centralized cloud providers and social media platforms that exercise absolute authority over the availability and integrity of digital content. This creates a single point of failure: critical historical records can be silently modified, deleted due to shifting terms of service, or lost in jurisdictional disputes.

Standard databases also lack the transparency required for “chain-of-custody” documentation, making it difficult for archivists to prove that a file has not been altered since its initial preservation.

LINKS

– Case Study: Preserving 70 Years of Testimony with the USC Shoah Foundation

– Preserving Armenian Cultural Heritage on the Decentralized Web

– “Mom, I See War”, a collection of drawings from Ukrainian children, preserved on decentralised storage

The Solution

Starling Lab leads the world’s first academic center dedicated to using decentralized tools to advance human rights, backed by a multi-million dollar commitment from Protocol Labs and the Filecoin Foundation. We have moved beyond theoretical prototypes to large-scale implementations that safeguard humanity’s most sensitive digital records.

Our collaboration with the USC Shoah Foundation permanently preserves an archive of 55,000 video testimonies from genocide survivors. In tandem with the USC Digital Repository, a service of the USC Libraries, we run a 22-petabyte Filecoin node at USC – just one part of the Libraries’ deep expertise in preservation and archiving.

By housing this node within a leading research university, we combine the innovation of Web3 protocols with the rigorous preservation standards developed over decades by archivists and librarians.
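The audit idea behind the “proof of existence” model can be illustrated with a minimal sketch. It assumes a SHA-256 digest was recorded when a file was first preserved, and it re-checks each replica against that digest. The replica names and the `audit_replicas` helper are hypothetical; Filecoin's own storage proofs are considerably more involved than this simple fixity check.

```python
import hashlib
from typing import Dict, Optional


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the given bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


def audit_replicas(expected_digest: str,
                   replicas: Dict[str, Optional[bytes]]) -> Dict[str, str]:
    """Compare every replica with the digest recorded at preservation time.

    A replica value of None stands for a copy that could not be retrieved.
    The status labels are assumptions made for this illustration.
    """
    report = {}
    for name, content in replicas.items():
        if content is None:
            report[name] = "missing"
        elif sha256_hex(content) == expected_digest:
            report[name] = "ok"
        else:
            report[name] = "altered"
    return report


# Hypothetical record: the digest is computed once, when the file is preserved.
original = b"testimony-0001 video bytes"
recorded_digest = sha256_hex(original)

# Hypothetical replicas held by three independent storage providers.
replicas = {
    "node-a": original,            # intact copy
    "node-b": original + b"\x00",  # one extra byte: silently modified
    "node-c": None,                # copy no longer retrievable
}

report = audit_replicas(recorded_digest, replicas)
for node, status in report.items():
    print(node, status)
```

Because the recorded digest is kept independently of any single provider, a modified or missing copy is detected by comparison rather than by trusting the provider's own report.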

Still Photogrammetry

History

Still Photogrammetry integrates authenticated metadata with 3D spatial reconstruction to provide a verifiable record of physical environments and historical sites.

By utilizing a decentralized framework where every source image is treated as an independently verifiable “atom” of fact, this prototype allows investigators to build unalterable, three-dimensional timelines of evidence. It anchors complex digital twins to a cryptographic “proof of existence,” proving that the spatial data has not been tampered with since the moment of capture and restoring trust in digital primary sources for law and journalism.

YEAR

2022-26

PARTNERS

Mike Caronna

Pixelrace

Artem Ivanenko

LINKS

The New Horizon Lab

Starling Lab’s Spatial Digest

The Problem

Techniques such as photogrammetry (and more recently, NeRF and Gaussian Splatting) permit the reconstruction of a space in 3D by stitching together 2D photographs. These tools are key to both extended reality environments and to investigations driven by architectural practices. However, integrity and provenance data are lost in the computation that renders the 3D models.

The Solution

Starling experiments with capture techniques that support 3D reconstruction while including provenance and integrity markers. Using devices from smartphones to professional DSLRs, we test the technical constraints against the needs of photogrammetry workflows, which require a large number of photographs of the scanned location.

We are also prototyping user interfaces in virtual reality that aim to bridge the gap between what the viewer can see (the 3D model) and the original location (as per the 2D photographs). The viewer can navigate the space and select these authenticated “anchors” to interrogate the model, furthering their trust in the reconstruction.
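One way to treat every source image as an independently verifiable unit while still anchoring the whole scan to a single fingerprint is a Merkle tree. The sketch below is an illustration under that assumption, not a description of the prototype's actual design: the function names are hypothetical, and a production scheme would add domain separation between leaf and internal hashes.

```python
import hashlib


def h(data: bytes) -> bytes:
    """SHA-256 digest as raw bytes."""
    return hashlib.sha256(data).digest()


def merkle_root_and_proof(leaves, index):
    """Return (root, proof) for the leaf at `index` of a non-empty list.

    `proof` is a list of (sibling_hash, sibling_is_left) pairs, ordered
    from the leaf level up to the root.
    """
    level = [h(leaf) for leaf in leaves]
    proof = []
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # duplicate the last node on odd levels
        sibling = index ^ 1          # the neighbour within the same pair
        proof.append((level[sibling], sibling < index))
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return level[0], proof


def verify(leaf: bytes, proof, root: bytes) -> bool:
    """Recompute the path from one leaf to the root and compare."""
    node = h(leaf)
    for sibling, sibling_is_left in proof:
        node = h(sibling + node) if sibling_is_left else h(node + sibling)
    return node == root


# Hypothetical stand-ins for the raw photographs of one scanned site.
images = [b"img-%d" % i for i in range(5)]
root, proof = merkle_root_and_proof(images, 3)

print(verify(images[3], proof, root))    # the untouched photograph
print(verify(b"tampered", proof, root))  # a substituted photograph
```

Only the root would need to be registered as the scan's fingerprint. A viewer inspecting a single photograph can then check it with a short proof instead of re-hashing the entire capture set.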

SELECTED WRITING

Our 2025 paper: Verifiable Reality: Contrasting Approaches to Photorealistic VR Using NeRF Streaming and Gaussian Splatting Technologies

5G Network Attestation

History

Validating device metadata through fixed network infrastructure and reputable independent observers to establish trust in captured data.

YEAR

2024

PARTNERS

T-Mobile

Deutsche Telekom

Vonage

LINKS

– UN submission: Authenticity for human rights defenders in remote areas

The Problem

Mobile phones allow journalists to capture and transmit digital media with contextual information. However, since mobile phones are controlled by their end users, all forms of metadata can be manipulated; GPS spoofers, for example, can fake location data. It is not enough for the location data collected by the Starling Capture app to be derived from metadata recorded solely by that mobile phone; it must be cross-referenced against the data signals transmitted and received by GPS, WiFi, cell towers, and/or beacons so that there are third-party attestations that the mobile phone is reporting location data correctly.
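The cross-referencing described above can be sketched as a simple plausibility check: the device's self-reported position is compared with a position observed independently by the network. The coordinates, the 5 km tolerance, and the function names are all assumptions made for illustration; real cell coverage areas vary widely.

```python
import math

EARTH_RADIUS_KM = 6371.0


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def location_is_corroborated(device_fix, network_fix, max_km=5.0):
    """True when the device-reported fix lies within `max_km` of the
    position observed by the network (tolerance is an assumed value)."""
    return haversine_km(*device_fix, *network_fix) <= max_km


# Hypothetical serving cell tower position, as reported by the carrier.
tower = (50.0000, 36.2500)

honest_fix = (49.9935, 36.2304)   # under 2 km from the tower
spoofed_fix = (50.4501, 30.5234)  # hundreds of km away

print(location_is_corroborated(honest_fix, tower))
print(location_is_corroborated(spoofed_fix, tower))
```

A check like this does not prove the exact position; it only flags device claims that are inconsistent with what an independent observer recorded.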

The Solution

Starling Lab is moving the “trust anchor” from the individual handset to the fixed infrastructure of the 5G network. By utilizing the C1 Mini framework, we enable a new class of “Network Attestations” that treat the mobile carrier as a reputable, independent witness to the capture of digital evidence.

The core of this prototype is the C1 Mini application, which leverages the standardized CAMARA APIs (exposed via Vonage and Deutsche Telekom). Instead of relying on easily phished 2FA codes, the system uses Silent Network Authentication (SNA). This process cryptographically verifies the unique identity of the subscriber’s SIM card directly with the carrier’s core network in the background. This ensures that the data registration request is coming from a legitimate, physical device authenticated by the network operator, not a spoofed virtual instance.

To support practitioners in remote or high-risk areas, the C1 Mini facilitates the “expedited registration” of asset fingerprints. Users can transmit the cryptographic hash of a photo or video via a secure SMS tunnel to a Starling registration server. This allows for a “proof of existence” to be anchored on a decentralized ledger (such as Solana or Hedera) even in low-bandwidth environments where uploading large raw files is impossible. This creates a tamper-evident timestamp that is significantly harder to manipulate than a device’s internal system clock.
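To show why registering a fingerprint suits low-bandwidth settings, the following sketch hashes a multi-megabyte file in chunks and formats the digest as a short text message. The `REG sha256:` message format is hypothetical and is not the C1 Mini protocol. The sketch also assumes SHA-256 as the hash and the 160-character limit of a single GSM 7-bit SMS.

```python
import hashlib
import io

SINGLE_SMS_LIMIT = 160  # characters in one GSM 7-bit SMS


def file_sha256(stream, chunk_size=65536):
    """Hash a binary stream in chunks so large media never sits in memory."""
    digest = hashlib.sha256()
    for chunk in iter(lambda: stream.read(chunk_size), b""):
        digest.update(chunk)
    return digest.hexdigest()


def build_registration_sms(asset_hash, prefix="REG"):
    """Format a fingerprint as a text message (hypothetical layout)."""
    message = f"{prefix} sha256:{asset_hash}"
    if len(message) > SINGLE_SMS_LIMIT:
        raise ValueError("registration message does not fit in one SMS")
    return message


# Stand-in for a 5 MB video file captured in the field.
media = io.BytesIO(b"\x00" * 5_000_000)

sms = build_registration_sms(file_sha256(media))
print(len(sms), "characters:", sms)
```

Whatever the size of the original file, the message stays at 75 characters, so the fingerprint can be registered first and the raw file uploaded later, when connectivity allows.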

This creates a multi-layered defense against disinformation: a hardware-signed image, a network-verified location, and a decentralized timestamp, all established at the point of capture.

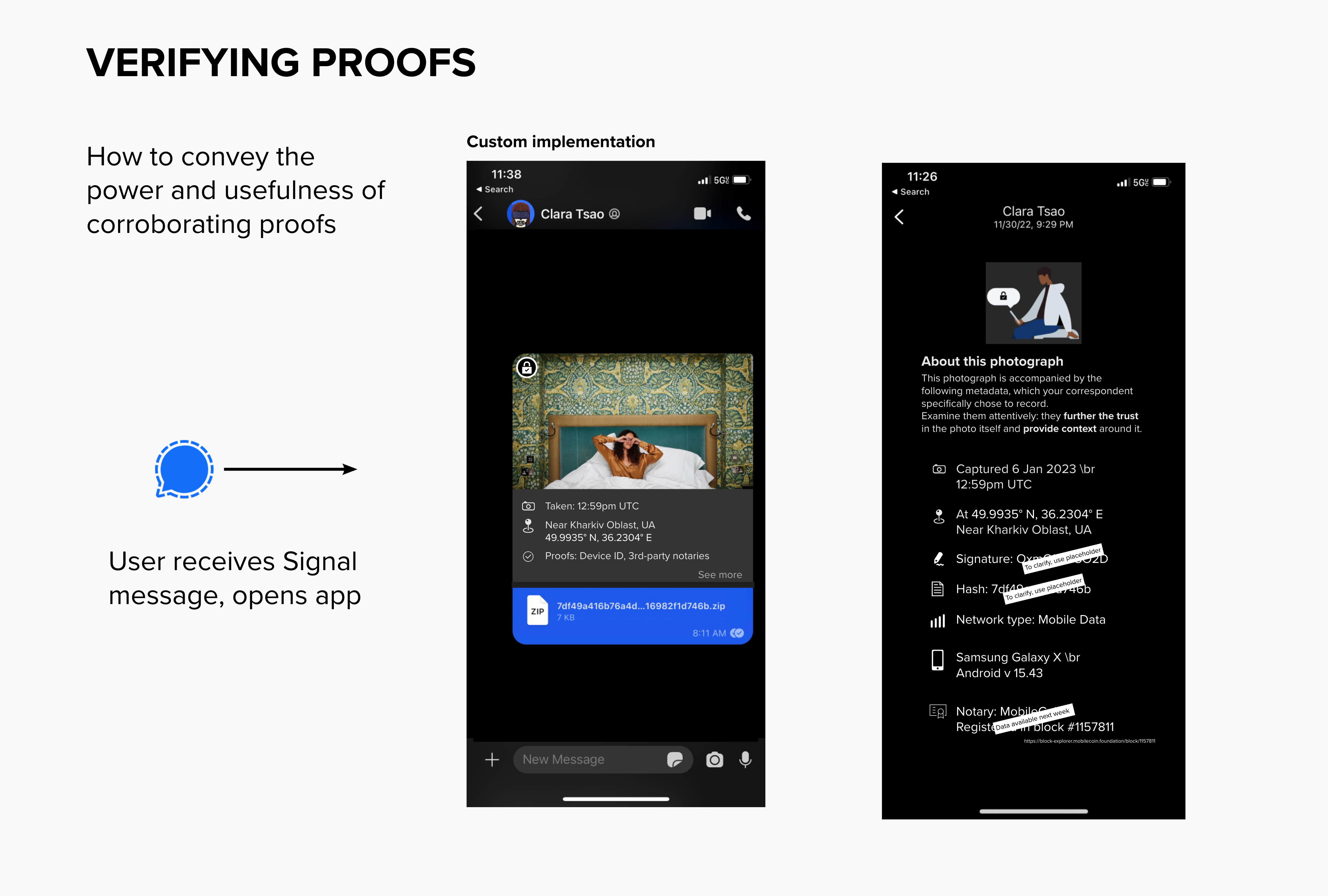

ProofMode Authentication

History

Experimenting with the integration of lightweight, forensic-grade verification into secure messaging workflows.

YEAR

2022-23

PARTNERS

Guardian Project

Hala Systems

Signal Messenger

LINKS

– Case Study: The Proof’s in your Pocket

– From the Guardian Project team: Integrating libProofMode

The Problem

Citizen-captured photos and videos are becoming powerful reporting tools. But faked footage, or footage with missing crucial context, threatens to break the trust between a newsroom and its audience. Professional journalists thus need to be able to vet the footage captured by citizens to ensure that the files sent in by citizen journalists are authentic and accurate representations of the depicted event.

The Solution

ProofMode (developed by the Guardian Project) promotes trust by providing a means to strongly authenticate multimedia at the point of capture.

We were the first to experiment with its distribution as a software library, under the name libProofMode. Starling Lab developed a bespoke fork of Signal Messenger that embeds authentication as a native feature. Users of this custom app can snap photographs directly within the app, which automatically generates a unique OpenPGP key pair to sign the media and its surrounding sensor metadata, including location, time, and cell tower environment.

At capture, media hashes are automatically registered on OpenTimestamps to create a “proof of existence” on the Bitcoin ledger. To ensure secure transport, every file sent via Signal triggers an automated MobileCoin micro-transaction; the first 16 digits of the “proof hash” are embedded in the transaction memo, allowing the recipient to cryptographically verify that the file received exactly matches the file captured in the field.
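The memo check can be sketched as follows. This is an illustration rather than the app's actual code: it assumes the “proof hash” is a SHA-256 digest written in hexadecimal and that “digits” means hex characters, and the helper names are hypothetical.

```python
import hashlib
import hmac

MEMO_PREFIX_LEN = 16  # number of leading hex digits carried in the memo


def proof_hash(media: bytes) -> str:
    """Hex digest of the media file (SHA-256 is an assumption here)."""
    return hashlib.sha256(media).hexdigest()


def memo_for(media: bytes) -> str:
    """Value the sender would embed in the transaction memo."""
    return proof_hash(media)[:MEMO_PREFIX_LEN]


def matches_memo(received_media: bytes, memo: str) -> bool:
    """Recipient side: recompute the prefix and compare it with the memo."""
    return hmac.compare_digest(memo_for(received_media), memo)


captured = b"photo bytes as captured in the field"
memo = memo_for(captured)

print(matches_memo(captured, memo))            # same file as captured
print(matches_memo(captured + b"\x00", memo))  # file altered in transit
```

Under these assumptions, sixteen hex digits carry 64 bits of the digest. That is enough to detect accidental corruption or a casual swap, while the full hash registered at capture remains the stronger reference.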

To reduce the burden on legal and journalistic investigators, the prototype features a visual layer of UI inside the Signal conversation view. Both sender and recipient can instantly surface contextual metadata snapshots and check them against immutable third-party record holders, such as the LikeCoin or Avalanche blockchains. This “glass-to-glass” approach ensures that technical authenticity markers are accessible and legible to the field practitioners who need them most.

HIGHLIGHT

In response to the shelling of Kharkiv’s schools, Starling Lab launched Project Dokaz (“Proof”). Local photographers were equipped with the custom Signal app to conduct “preventative documentation” in support of the Safe Schools Declaration. By capturing regular rounds of authenticated imagery, the team was able to verify the absence of military co-option at these sites, confirming their protected status under international law.

Read more about Project Dokaz →