Authenticated Attributes

Verify

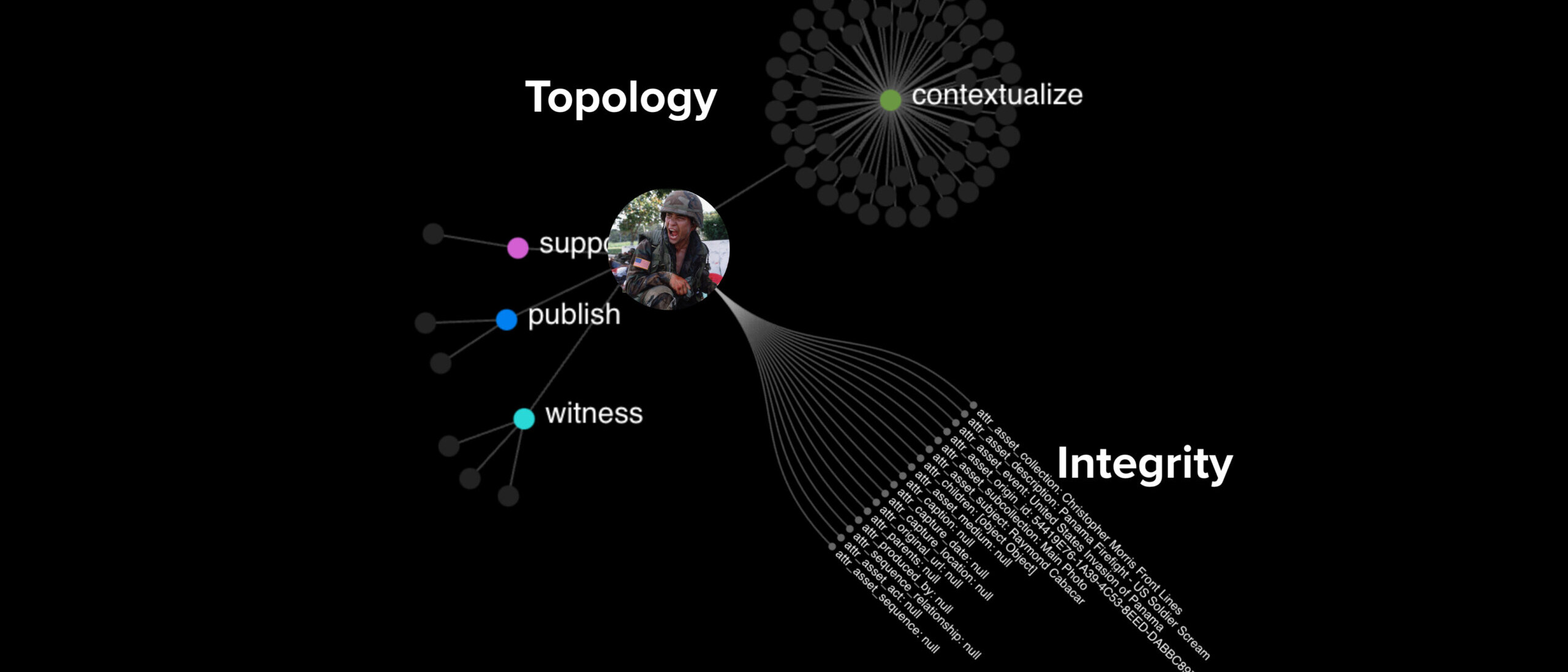

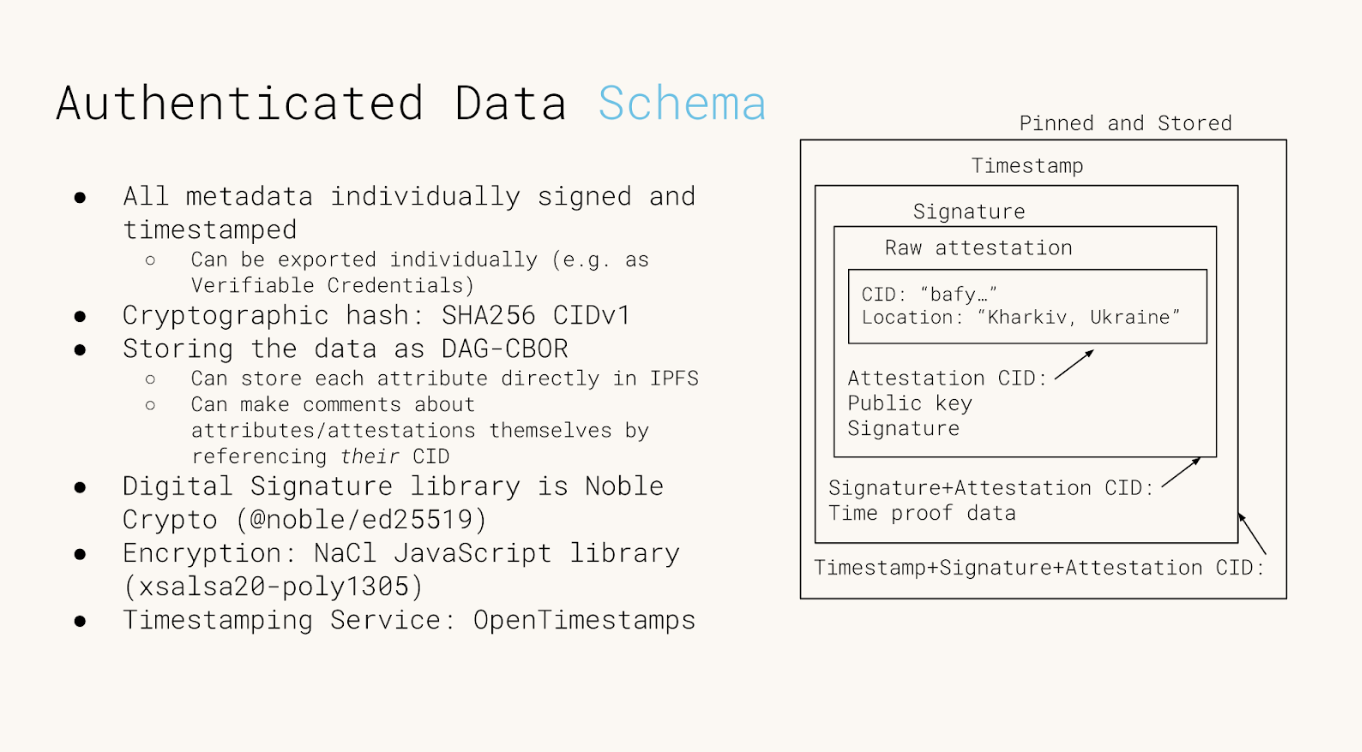

Authenticated Attributes (AA) is a decentralized infrastructure designed to restore trust in digital media by establishing the verifiable integrity of metadata. Moving beyond traditional, centralized databases, it treats every piece of information as an independently verifiable “atom” of fact, allowing investigators to construct tamper-evident timelines of evidence.

This concept shifts the verification paradigm from trying to detect AI-generated falsifications (deepfakes) to affirmatively proving authenticity. It anchors digital content to a “proof of existence,” using third-party timestamping to demonstrate that a file existed no later than a given time and has not been altered since.

The Problem

The traditional methods for trusting digital images and records are breaking down due to the rise of AI-generated content (deepfakes) and a general erosion of trust in institutions. Investigators and archivists face several specific challenges:

- There is no standard way to reference the same image or file across different organizations.

- Secondhand information is difficult to verify, and different sources often provide conflicting data.

- Centralized databases are vulnerable to tampering, often requiring strict access controls that limit collaboration.

JOURNALISM

Authenticated Attributes allow newsrooms to cryptographically bind specific details (like location or time) to content so audiences can independently verify the reporting’s origin without compromising sensitive sources.

HISTORY

By attaching unalterable proofs of existence to digital artifacts, AAs ensure that future historians inherit primary sources that are traceable and immune to revisionism.

LAW

AA creates a tamper-evident link between evidence and its metadata, allowing investigators to prove that critical details haven’t been altered since the moment of capture.

The Solution

Authenticated Attributes is a tool that allows investigators to verify digital content by sharing authenticated metadata and tamper-evident attestations. It restores trust through a decentralized framework:

- Cryptographic hashes and digital signatures uniquely identify files and authenticate sources.

- Third-party timestamping proves that data existed at a given point in time.

- Flexible reconciliation lets investigators weigh conflicting sources without discarding data.

- Peer-to-peer replication ensures data remains persistent and available.
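As a minimal illustration of how these pieces fit together, the Python sketch below identifies a file by its SHA-256 hash, records each claim about it as a separate attestation, and reconciles conflicting claims by ranking them instead of discarding any. This is a hypothetical example, not the data structure used by the Authenticated Attributes implementation: the `Attestation` fields, the per-source `weights`, the `reconcile` function, and the sample values are assumptions made for illustration. Signing and timestamping are left out; each record's `digest()` is the value one would sign and timestamp.

```python
import hashlib
import json
from collections import defaultdict
from dataclasses import asdict, dataclass


def content_id(data: bytes) -> str:
    """Identify a file by the SHA-256 of its bytes, so every organization derives the same ID."""
    return hashlib.sha256(data).hexdigest()


@dataclass(frozen=True)
class Attestation:
    """One 'atom' of fact: a single attribute asserted about a file by one source (hypothetical)."""
    file_id: str     # hash of the file the claim is about
    attribute: str   # e.g. "location" or "capture_time"
    value: str
    source: str      # who is making the claim

    def digest(self) -> str:
        """Hash of a canonical JSON encoding: the value one would sign and timestamp."""
        canonical = json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def reconcile(claims: list[Attestation], weights: dict[str, float]) -> dict:
    """Rank every value claimed for each (file, attribute) by the summed weight of its sources.

    Conflicting values are kept and ordered, never dropped.
    """
    scores = defaultdict(lambda: defaultdict(float))
    for c in claims:
        scores[(c.file_id, c.attribute)][c.value] += weights.get(c.source, 0.0)
    return {key: sorted(vals.items(), key=lambda kv: kv[1], reverse=True)
            for key, vals in scores.items()}


# Made-up example data.
photo = b"...raw image bytes..."
cid = content_id(photo)
claims = [
    Attestation(cid, "location", "Kyiv", "photographer"),
    Attestation(cid, "location", "Kyiv", "newsroom"),
    Attestation(cid, "location", "Lviv", "anonymous-forum"),
]
weights = {"photographer": 1.0, "newsroom": 0.8, "anonymous-forum": 0.1}
print(reconcile(claims, weights)[(cid, "location")])  # [('Kyiv', 1.8), ('Lviv', 0.1)]
```

Keeping every claim with a score, rather than overwriting the record with a single "winning" value, is what lets an investigator revisit the weighting later without having lost the minority source.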

—

The initial implementation of Authenticated Attributes expresses them using the following data structure (more details in the GitHub repo):

SELECTED WRITING

Web Archives as Evidence (using AA)

Slide deck and YouTube video

Working on Authenticated Data with AA

By Cole Capilongo

Time for (Trusted) Timestamping

By Cole Capilongo

An Alternative to Deepfake Detection

By Kate Sills

UN Submission on ‘an Index for Accountability’, a prototype built on AA

Finding Your Files in Decentralized Storage Just Got Easier

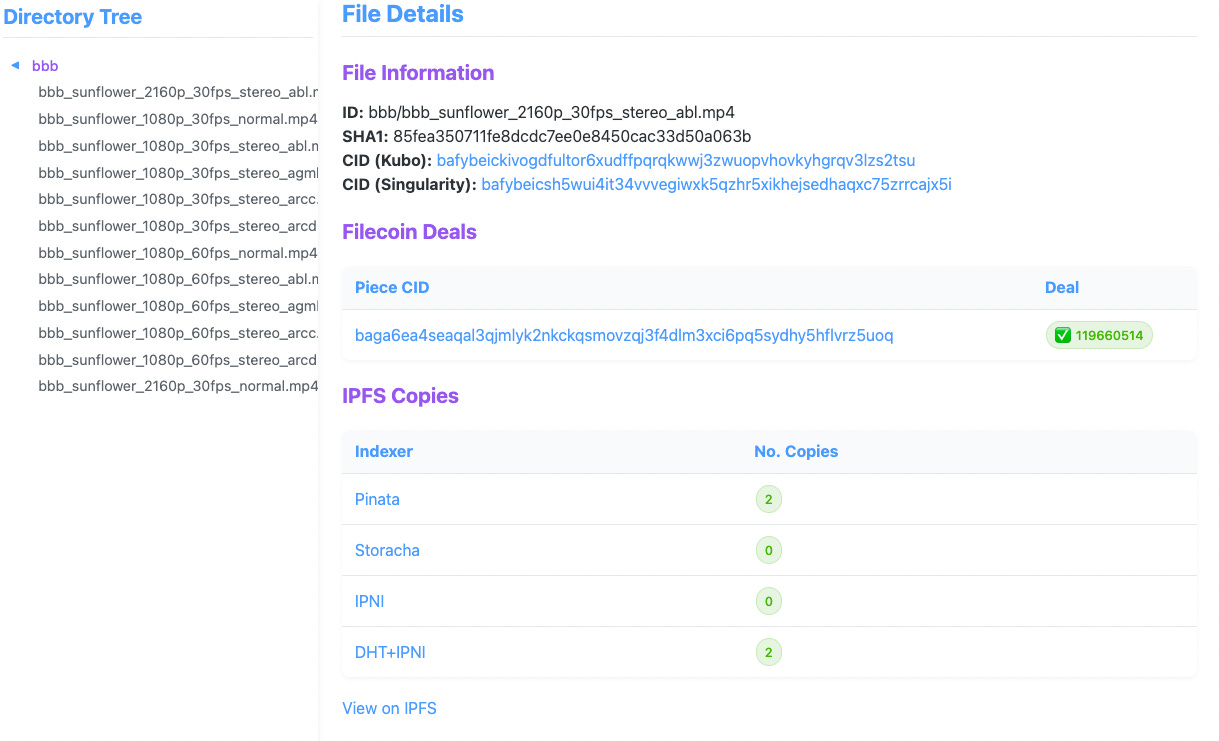

At Starling Lab, we’re dedicated to building tools that empower secure and verifiable data storage. Today, we’re thrilled to announce the CAR Content Locator, a tool originally developed to support our Filecoin archiving work at the USC Digital Repository. With support from the IPFS Implementations Grants program, we’ve evolved this browser-based tool beyond its original purpose – tracking private files on the USC Filecoin node – into a general-purpose content locator across Filecoin and IPFS networks.

Why We Built the CAR Content Locator

As decentralized storage solutions become more prevalent, the need for robust, user-friendly tools to manage and verify content availability is critical. Our work with the USC Digital Repository highlighted a significant challenge: tracking individual files within CAR files (Content Addressable aRchives) and verifying their continued preservation without relying on public indexing services.

Organizations storing large datasets on Filecoin and IPFS face a practical problem: once dozens of files are bundled into CAR archives, how do you later find and verify specific ones?

Existing public indexing services can’t help with private or institutional data, a gap that our work with the USC Digital Repository, which looks after more than 1.2 PiB of private archives, made clear.

This led us to develop a tool that:

- Maps file CIDs (Content Identifiers) to their locations within CAR files, especially when CAR metadata and file data exist in separate CAR files.

- Extracts specific files using either a file identifier or CID across multiple CAR files.

- Connects Piece CIDs and Deal IDs on the Filecoin network without requiring a full indexer.
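The core of the first bullet is an index from CIDs to byte ranges inside CAR files. The sketch below is not the CAR Content Locator's code; it is a minimal Python illustration assuming the CARv1 layout from the CAR specification (a varint-prefixed header followed by varint-prefixed sections, each holding a binary CID and then the block data). It skips the header without decoding it, does not handle CARv2 wrappers, and keys the index by hex-encoded binary CIDs rather than base32 strings to avoid a multibase dependency. All function names are hypothetical, and the CAR built at the end is synthetic test data.

```python
import hashlib


def read_varint(buf: bytes, pos: int) -> tuple[int, int]:
    """Decode an unsigned LEB128 varint (the multiformats encoding); return (value, next_pos)."""
    value, shift = 0, 0
    while True:
        byte = buf[pos]
        pos += 1
        value |= (byte & 0x7F) << shift
        if byte < 0x80:
            return value, pos
        shift += 7


def encode_varint(n: int) -> bytes:
    """Encode an unsigned LEB128 varint (used here only to build test data)."""
    out = bytearray()
    while True:
        low, n = n & 0x7F, n >> 7
        out.append(low | 0x80 if n else low)
        if not n:
            return bytes(out)


def cid_length(buf: bytes, pos: int) -> int:
    """Byte length of the binary CID starting at pos (CIDv0 or CIDv1)."""
    if buf[pos] == 0x12 and buf[pos + 1] == 0x20:
        return 34  # CIDv0: a bare sha2-256 multihash
    start = pos
    for _ in range(3):  # CIDv1: version, codec, multihash function code
        _, pos = read_varint(buf, pos)
    digest_len, pos = read_varint(buf, pos)
    return pos - start + digest_len


def index_car(buf: bytes) -> dict[str, tuple[int, int]]:
    """Map hex-encoded binary CIDs to (offset, length) of their block data in a CARv1 file."""
    header_len, pos = read_varint(buf, 0)
    pos += header_len  # skip the DAG-CBOR header without decoding it
    index = {}
    while pos < len(buf):
        section_len, pos = read_varint(buf, pos)
        end = pos + section_len
        cid_len = cid_length(buf, pos)
        index[buf[pos:pos + cid_len].hex()] = (pos + cid_len, end - pos - cid_len)
        pos = end
    return index


def raw_cid(data: bytes) -> bytes:
    """CIDv1 with the raw codec (0x55) and a sha2-256 multihash (0x12, 32-byte digest)."""
    return bytes([0x01, 0x55, 0x12, 0x20]) + hashlib.sha256(data).digest()


# Synthetic CAR: placeholder header bytes, then varint-prefixed (CID + data) sections.
blocks = [b"drawing-0001.jpg bytes", b"metadata-0001.json bytes"]
header = b"placeholder-header"  # a real CAR holds a DAG-CBOR {roots, version} map here
car = encode_varint(len(header)) + header
for block in blocks:
    section = raw_cid(block) + block
    car += encode_varint(len(section)) + section

index = index_car(car)
offset, length = index[raw_cid(blocks[1]).hex()]
print(car[offset:offset + length])  # b'metadata-0001.json bytes'
```

Because each CAR file gets its own index, lookups that span several CAR files (for example, metadata in one archive and file data in another) reduce to merging these dictionaries with the CAR file name added to each entry.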

Feature Highlights

The CAR Content Locator codebase is now published on GitHub under the MIT License, and can easily be deployed using Docker Compose. It features:

- A web-based file browser that displays file integrity information (i.e. CIDs) and their availability across Filecoin and IPFS networks;

- For Filecoin storage information, a Singularity-based workflow is required, as the tool relies on Singularity’s databases to keep track of Piece and Deal information;

- For IPFS content availability, the tool relies on delegated routing endpoints being available;

- Although the tool is preconfigured with several public indexers, such as Pinata and Storacha, it also supports private IPFS networks, and similarly, private Filecoin nodes with the required Singularity databases.
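The IPFS availability check can be illustrated with a short Python sketch against a delegated routing endpoint. It assumes the endpoint implements the IPFS Delegated Routing V1 HTTP API (`GET /routing/v1/providers/{cid}`, answering with a JSON object whose `Providers` list holds peer records); the function names are hypothetical, and this is not the CAR Content Locator's implementation. Only the URL building and response parsing run without network access.

```python
import json
import urllib.error
import urllib.request


def providers_url(endpoint: str, cid: str) -> str:
    """Build a Delegated Routing V1 'find providers' URL for a CID."""
    return f"{endpoint.rstrip('/')}/routing/v1/providers/{cid}"


def parse_providers(body: bytes) -> list[str]:
    """Return the peer IDs in a providers response (the list may be null, records may lack an ID)."""
    data = json.loads(body)
    return [p["ID"] for p in (data.get("Providers") or []) if isinstance(p, dict) and "ID" in p]


def find_providers(endpoint: str, cid: str, timeout: float = 10.0) -> list[str]:
    """Ask a delegated routing endpoint which peers advertise the CID."""
    req = urllib.request.Request(providers_url(endpoint, cid),
                                 headers={"Accept": "application/json"})
    try:
        with urllib.request.urlopen(req, timeout=timeout) as resp:
            return parse_providers(resp.read())
    except urllib.error.HTTPError as err:
        if err.code == 404:  # some servers answer 404 when they hold no provider records
            return []
        raise


# Offline example with a canned response body.
sample = b'{"Providers":[{"Schema":"peer","ID":"12D3KooWExamplePeer","Addrs":[]}]}'
print(parse_providers(sample))  # ['12D3KooWExamplePeer']
```

Calling `find_providers` with a public endpoint such as `https://delegated-ipfs.dev` would check public availability; a private IPFS network would substitute the URL of its own routing endpoint.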

Explore the tool in action at our demo instance!

Learnings & Next Steps

During development of the CAR Content Locator, we also conducted research on how different organizations – particularly those managing large datasets like the USC Digital Repository – address similar use cases for tracking their files across CAR-compatible networks.

We found little consistency in how users interact with Filecoin and IPFS; workflows and tooling are usually bespoke and tailored to specific organizational needs. We hope that publishing this open source tool will also contribute to the conversation on building common tooling across similar organizations.

We’d greatly appreciate your input:

- Share with us how your organization tracks files stored on Filecoin and/or IPFS;

- File tickets on starlinglab/car-content-locator on what features would make this tool helpful to you.

The CAR Content Locator’s development has been significantly shaped by the specific needs of the USC Libraries, and the tool continues to keep track of their 1.2 PiB+ of private archives on their Filecoin node. Our team also works closely, in parallel, with the Internet Archive’s Filecoin operations.

This work is made possible by the Open Impact Foundation and the Filecoin Foundation for the Decentralized Web, as well as those who advised or contributed feedback: Mosh, Robin, Bumblefudge, Lidel, Arkadiy, Casey, Sankara, Ian, and the whole USC Digital Repository and Libraries team.

Submission to the UN Special Rapporteur on Human Rights Defenders from our Brazil Coverage

We are honored to see our work featured in Mary Lawlor’s UN Special Rapporteur report to the Human Rights Council: “Out of Sight: Human Rights Defenders in Rural, Remote and Isolated Contexts.”

UPDATE: Since this was published, the UN Human Rights Council adopted a resolution on protecting human rights defenders in the digital age. It addresses several important issues that our Lab has been focused on. Scroll to the end of this page for more details.

The report notes how Starling Lab supported efforts in Brazil’s Pantanal wetlands with tools that work even in low-connectivity environments, where the “digital divide hits many human rights defenders very hard.”

In a speech this month presenting her findings, Lawlor observed how some of those most at-risk include “journalists covering human rights issues at the local level.” Starling’s methodology was used by journalists to document environmental destruction – and confront climate change denialism. Our submission to Lawlor’s office focused on data authentication, decentralized storage, and cryptographic verification. Together, these ensure documentation remains tamper-evident and accessible, even when governments seek to dismiss the truth.

Her report includes several valuable recommendations to governments and other international actors, two of which resonate with our work:

- “Expand access to the Internet and secure communication tools, including by increasing funding for such digital security resources as encrypted communication applications and secure reporting channels.”

- “Support efforts to enable human rights defenders to store and safeguard their information securely, without fear of unlawful surveillance or data breaches, including putting in place robust legal safeguards to prevent the misuse of digital tools to suppress dissent or target defenders and ensure that their digital rights are protected.”

We appreciate being included among so many other human rights defenders, and remain grateful to Pablo Albarenga for his photojournalism in Brazil, as well as to Inside Climate News for publishing this important coverage.

And we hope this underscores the vital role of trusted digital evidence in defending human rights and environmental justice.

🔗 Read the Special Rapporteur’s remarks;

📄 Read the full UN report;

📕 Don’t miss our own case study;

📰 And the Inside Climate News article

Starling Lab was previously referenced in a report to the UNHRC in 2023 by the Rapporteur on the right to education, who acknowledged similar methods used by the lab as emerging “good practices” for documenting war crimes against civilian objects like schools.

Update (April 16, 2025): Our submission is also echoed in a new resolution from the UN Human Rights Council, which addresses the protection of human rights defenders in the digital age (full text: A/HRC/58/L.27/Rev.1).

The resolution emphasizes “universal connectivity” and “meaningful connectivity” as essential for defenders’ work, calls for “technical solutions for strong encryption and anonymity,” and advocates for secure information storage “without fear of unlawful surveillance.” It specifically recognizes the “growing number of digital attacks” on defenders and acknowledges the “protection gap” caused by lack of accountability.

These address key points from our submission on data integrity and authentication technologies for remote areas.

We’re particularly encouraged by the HRC’s recognition that “new and emerging digital technologies can hold great potential for strengthening democratic institutions and the resilience of civil society, empowering civic engagement and enabling the work of human rights defenders, public participation and the open and free exchange of ideas, and for the exercise of all human rights.” This aligns perfectly with our mission at Starling Lab to harness technology to establish trust in digital records.

Our earlier submission outlined innovative approaches to produce documentary evidence and combat digital denialism. This includes cryptographic methods that authenticate evidence from the point of capture, enabling defenders in remote areas to establish credibility despite connectivity challenges. The submission also referenced ongoing work on telecommunication technologies, including 5G, to further enhance these capabilities.

Timestamp Verification

We use distributed ledgers to establish an immutable “proof of existence” for digital media and its metadata. Anchoring cryptographic fingerprints on public consensus networks creates a tamper-evident record showing that an asset existed no later than the moment it was anchored.

This “birth certificate” for digital data shifts the verification model from reactive detection to affirmative proof, ensuring that the origin and integrity of critical information remain indisputable against the threats of revisionism and synthetic manipulation.

YEAR

2020-24

PARTNERS

Hedera

ProvenDB

OpenTimestamps

Numbers Protocol

Solana

LINKS

– Time for Trusted Timestamping

– Reuters collaboration, ProvenDB anchored on Hedera

– ‘Mom I See War’, a collection of drawings from Ukrainian children, anchored on the NEAR blockchain using the Numbers Protocol.

The Problem

Establishing the originality of a piece of content, and that a given piece of media is the first known version, has been a conundrum since the invention of written communication. Whether one is looking to resolve disputes over which version came first, or to prove that media was created before the advent of certain AI technologies, a timestamp that can be verified by a trustworthy third party can help. Verifiable timestamping can be used as part of the digital media creation, preservation, and editing processes.

JOURNALISM

By anchoring field footage hashes on public ledgers, newsrooms can maintain an indisputable record of truth that survives both link rot and malicious denialism.

HISTORY

By registering high-fidelity fingerprints of historic records before the generative AI inflection point, institutions ensure that primary sources can be definitively distinguished from synthetic noise.

LAW

Third-party ledgers act as record holders from which to derive strong claims about integrity and point of origin of a digital item.

The Solution

Starling has used several Merkle tree-based technologies to efficiently create verifiable timestamps on public ledgers, and developed workflows using systems such as ProvenDB with Hedera Consensus Service anchors, OpenTimestamps proofs anchored on Bitcoin, as well as direct registration of media assets on many public blockchains. In all these cases, the block height is used to establish a verifiable timestamp for the registered digital media.

Adding an immutable proof of existence backed by distributed ledger consensus serves to establish a first known creation of digital media that is nearly impossible to refute.
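The efficiency of the Merkle tree approach comes from batching: many file hashes are folded into one root, only the root is written to the ledger, and each file keeps a short audit path back to it. Below is a minimal Python sketch, assuming SHA-256 and RFC 6962-style 0x00/0x01 prefixes that keep leaf and interior hashes distinct; it is not the exact tree format used by ProvenDB or OpenTimestamps, and the function names and sample files are hypothetical.

```python
import hashlib


def leaf_hash(data: bytes) -> bytes:
    return hashlib.sha256(b"\x00" + data).digest()  # 0x00 prefix marks leaves


def node_hash(left: bytes, right: bytes) -> bytes:
    return hashlib.sha256(b"\x01" + left + right).digest()  # 0x01 prefix marks interior nodes


def build_tree(items: list[bytes]) -> tuple[bytes, list[list[tuple[bytes, bool]]]]:
    """Return (root, proofs); proofs[i] lists (sibling_hash, sibling_is_left) from leaf i upward.

    Requires at least one item. An unpaired node is paired with a copy of itself.
    """
    level = [leaf_hash(item) for item in items]
    positions = list(range(len(items)))  # each item's ancestor index at the current level
    proofs = [[] for _ in items]
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])
        for i, pos in enumerate(positions):
            sibling = pos ^ 1
            proofs[i].append((level[sibling], sibling < pos))
            positions[i] = pos // 2
        level = [node_hash(level[j], level[j + 1]) for j in range(0, len(level), 2)]
    return level[0], proofs


def verify(item: bytes, proof: list[tuple[bytes, bool]], root: bytes) -> bool:
    """Recompute the path from one item to the root and compare with the anchored root."""
    node = leaf_hash(item)
    for sibling, sibling_is_left in proof:
        node = node_hash(sibling, node) if sibling_is_left else node_hash(node, sibling)
    return node == root


files = [b"photo-1.jpg", b"photo-2.jpg", b"video-1.mp4", b"metadata.json", b"notes.txt"]
root, proofs = build_tree(files)
# Only the 32-byte root would be anchored on a ledger; each file keeps its own short proof.
print(verify(files[3], proofs[3], root))   # True
print(verify(b"edited", proofs[3], root))  # False
```

A proof grows with the logarithm of the batch size, so thousands of assets can share one ledger transaction while each remains independently checkable.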

READ FURTHER

Further to this work, we have created a reference implementation of timestamped databases in a project called ‘Authenticated Attributes’ that aims to integrate with digital media user interfaces and collaboration tools.

We have also created an offline, SMS-based silent registration prototype based on 5G technology that integrates with the latest C2PA-capable cameras.

Four Corners Wordpress Plugin

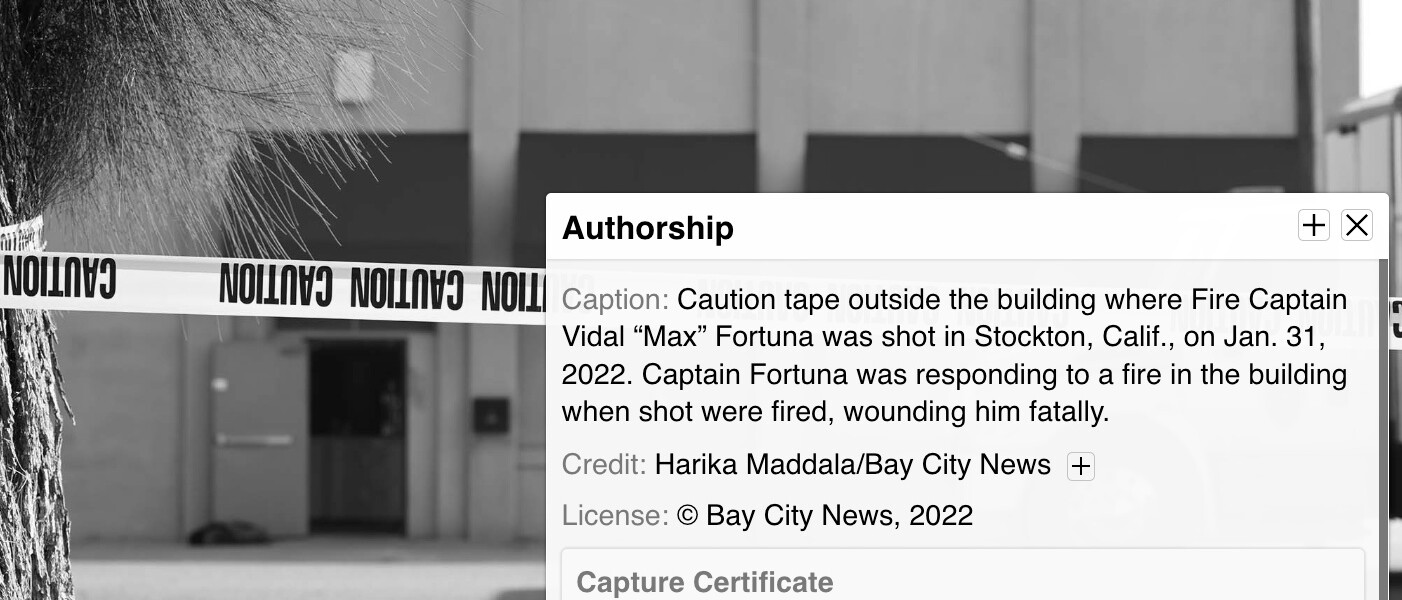

An embeddable display for photographs to show contextual and provenance information, such as photographer information, related images, and proof of existence on distributed ledgers.

YEAR

2022

PARTNERS

– Fred Ritchin

– The Four Corners Project

The Problem

Online platforms routinely strip metadata from images to protect user privacy – a necessary safeguard on the Web. However, this decontextualizes professional photojournalism, leaving viewers unable to verify a photo’s origin, time, or location. This creates a critical dilemma for photographers. They need a secure method to re-associate their work with this essential data, but require absolute control to do so.

They must be able to present this enriched, verifiable context only when they deem it safe, on platforms they trust – like their own website or a specific publication – to restore the full story behind the shot.

CASE STUDIES

– Setting the Record Straight in Brazil’s Burning Wetlands (with Inside Climate News)

– Documenting Stockton’s Homelessness (with Bay City News)

The Solution

As with many of Starling’s prototypes, the process begins at the moment of capture, when technical metadata such as time and location is cryptographically signed, creating a tamper-evident record of the photo’s context. This authenticated foundation still allows a photographer to later add richer contextual information, such as their byline, a narrative description, or related images.

Based on the Four Corners Project research and user interface, Starling Lab and Four Corners co-developed a WordPress plugin to bring this UI to the biggest blogging platform on the Web. This tool allows publishers to easily embed photos with an interactive layer, enabling viewers to explore the rich, attributable context and verify the circumstances of the photo’s origin.

As part of this work, Starling worked with the Four Corners team to develop a C2PA-compliant metadata schema for bundling rich contextual metadata in C2PA manifests. The schema covered all the metadata shown in each of the Four Corners toggles. This data is then included in a C2PA manifest, which the WordPress plugin parses to present contextual and provenance information on each article.

Overlaid on the photograph in each of the four corners are floating carets which, once clicked or hovered over, reveal additional data about the photograph.

Document Redaction

Establishing a cryptographic seal of transparency for sensitive digital records, moving beyond traditional “black-box” redaction.

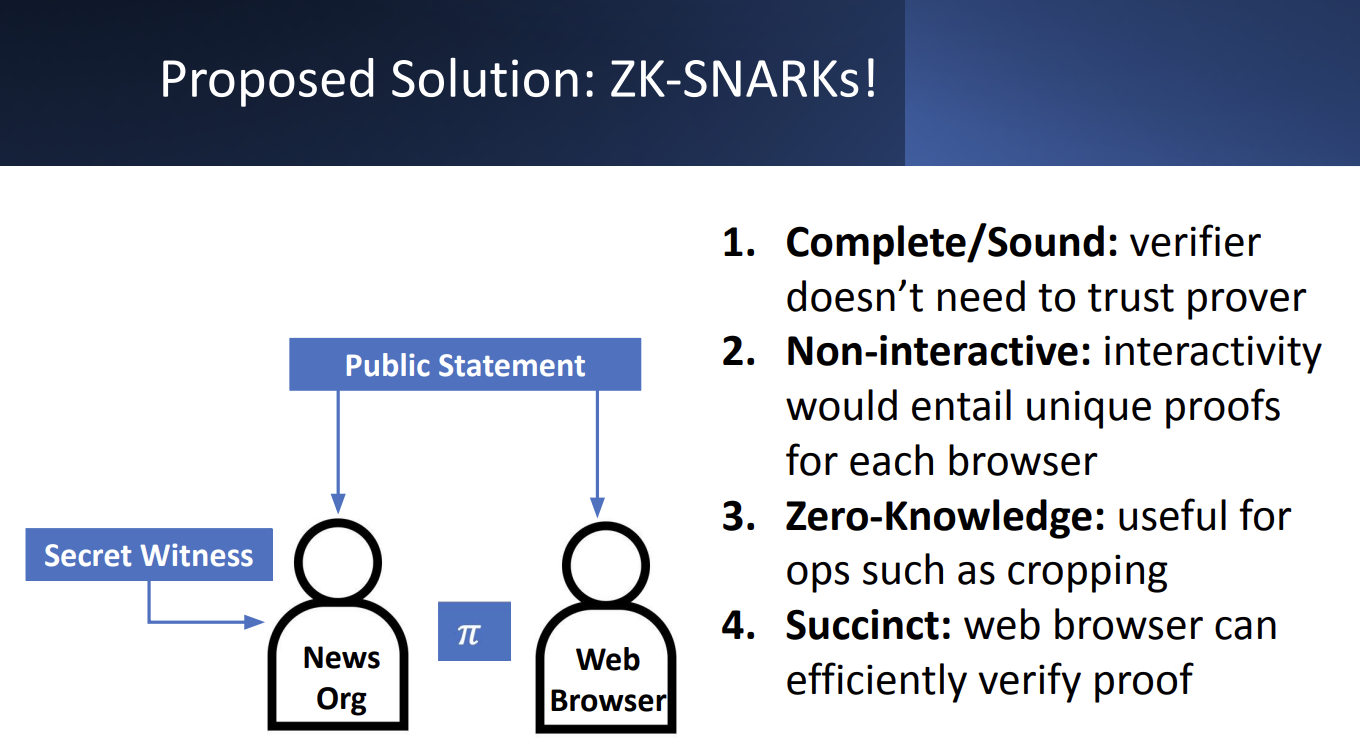

Zero-Knowledge Proofs (ZKP) allow investigators to obscure personally identifiable information (PII) while providing a mathematical guarantee that no other part of the document has been altered.

This concept shifts the trust model from requiring blind faith in a publisher’s edits to providing affirmative proof of a document’s integrity, ensuring that critical primary sources remain both ethically protected and legally robust.

YEAR

2022

PARTNERS

Stanford Applied Cryptography Group

LINKS

– Case Study: The DJ and the War Crimes

– Presentation from Trisha Datta and Dan Boneh

The Problem

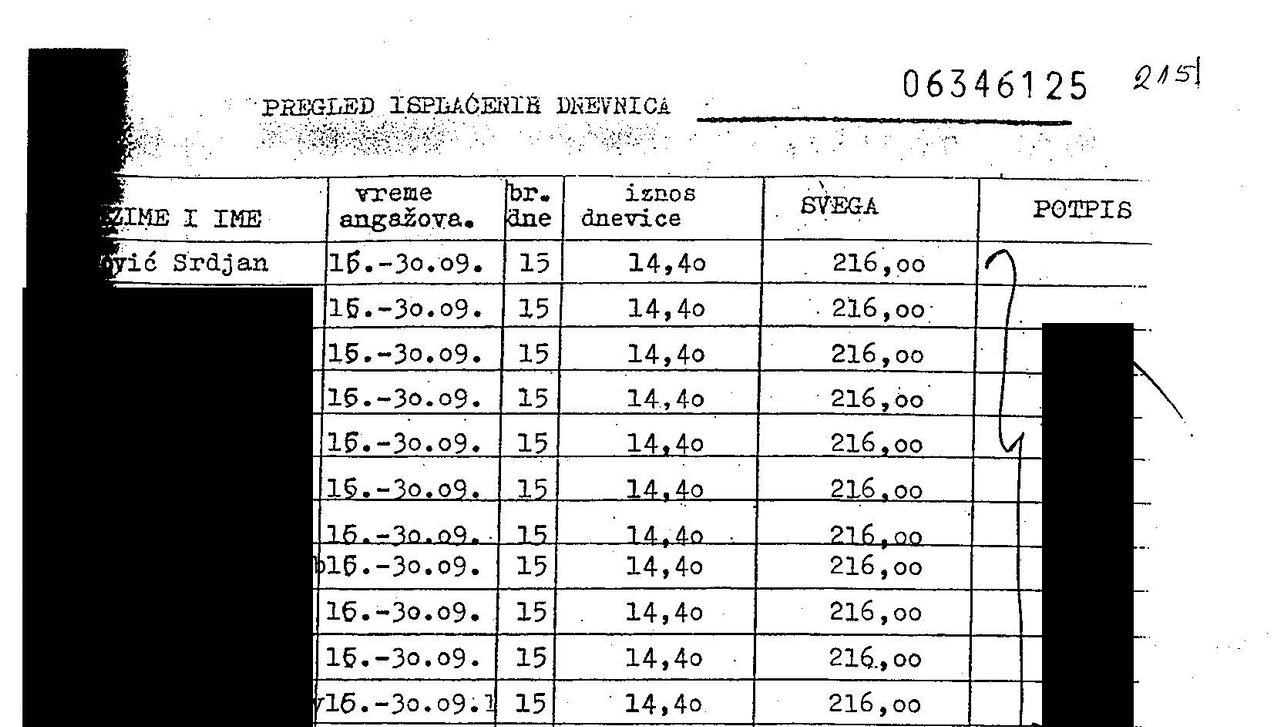

Accountability investigations often rely on digitized primary sources – such as the UN payroll records unearthed in our Bosnia war crimes probe – that contain sensitive PII of individuals not central to the investigation. While redacting this information is a journalistic and ethical necessity, it creates a “trust gap”. In an era of widespread denialism and “cheapfakes,” any manual modification to a source document can be weaponized by bad actors to claim the entire record is a forgery, undermining the evidentiary weight of critical testimonies.

The Solution

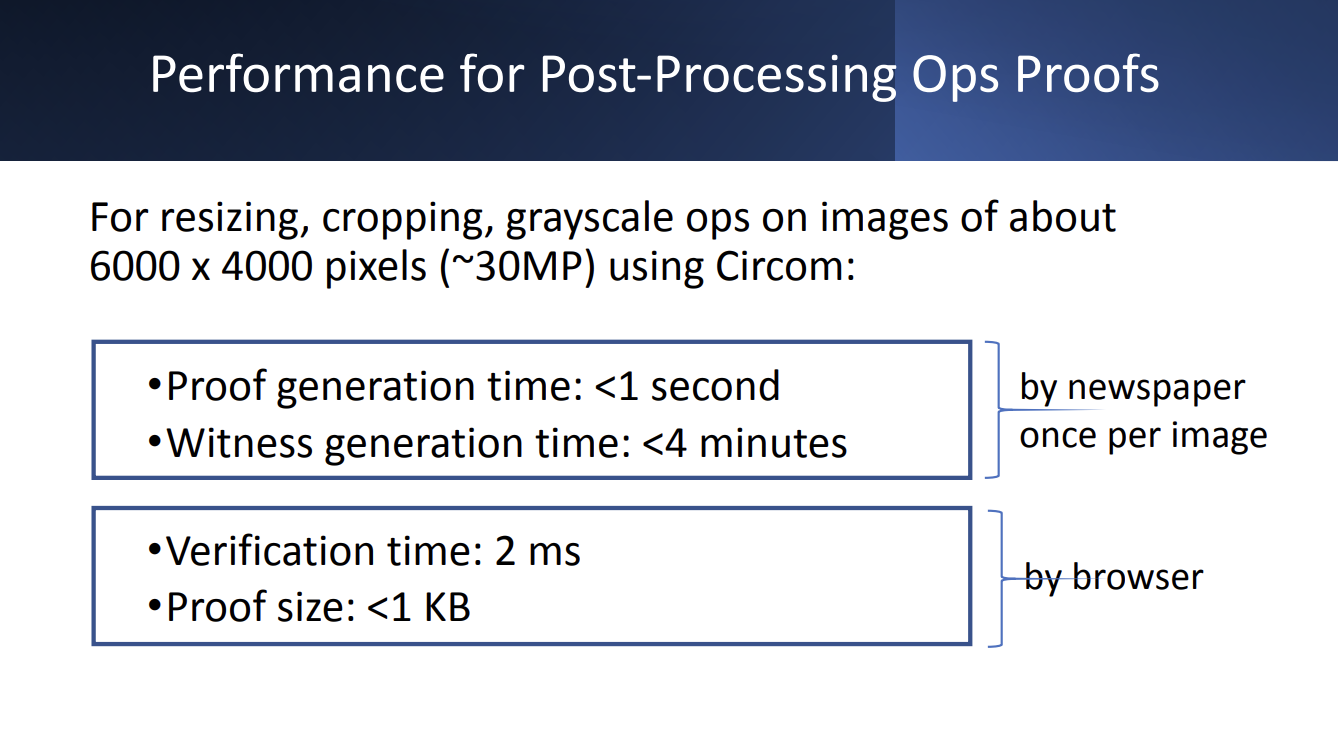

In partnership with students of Professor Dan Boneh, our principal investigator, from the Stanford Applied Cryptography Group, Starling developed a workflow that integrates forensic ingestion, cryptographic proof systems, and managed redactions.

It relies on a Zero-Knowledge Proof that certifies the relationship between the original and the redacted file. This technology generates a mathematical proof that the only changes made to the published PDF were the addition of black boxes over specific pixels. This allows third parties, such as expert witnesses, to “check the math” and verify that no text was altered or deleted, maintaining the document’s integrity while fulfilling privacy obligations.
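The ZKP proves a specific relation between the original and the redacted file without revealing the original. The Python sketch below checks that relation in the clear, on a toy grayscale pixel grid with rectangular boxes, to show what is being proven. It is an illustration under those assumptions, not the Stanford proof system; in a zero-knowledge setting the same check is expressed as a proof statement, so a verifier can be convinced it holds without seeing the original pixels. The names `is_valid_redaction`, `Box`, and `BLACK` are hypothetical.

```python
Box = tuple[int, int, int, int]  # (left, top, right, bottom); right and bottom are exclusive
BLACK = 0  # toy grayscale value used for redaction boxes


def in_any_box(x: int, y: int, boxes: list[Box]) -> bool:
    return any(left <= x < right and top <= y < bottom for left, top, right, bottom in boxes)


def is_valid_redaction(original: list[list[int]], redacted: list[list[int]], boxes: list[Box]) -> bool:
    """Check the relation a redaction proof attests to: same dimensions, every pixel
    inside a box is black, and every pixel outside the boxes is unchanged."""
    if len(original) != len(redacted):
        return False
    for y, (row_o, row_r) in enumerate(zip(original, redacted)):
        if len(row_o) != len(row_r):
            return False
        for x, (before, after) in enumerate(zip(row_o, row_r)):
            expected = BLACK if in_any_box(x, y, boxes) else before
            if after != expected:
                return False
    return True


original = [[10, 20, 30],
            [40, 50, 60]]
boxes = [(1, 0, 3, 1)]  # black out the two right-hand pixels of the top row
redacted = [[10, 0, 0],
            [40, 50, 60]]
tampered = [[10, 0, 0],
            [40, 99, 60]]  # also changes a pixel outside the box
print(is_valid_redaction(original, redacted, boxes))  # True
print(is_valid_redaction(original, tampered, boxes))  # False
```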

JOURNALISM

Verifiable Redaction allows newsrooms to protect the privacy of vulnerable bystanders without sacrificing the credibility of their reporting. By providing a cryptographic guarantee that only specific PII was obscured, journalists can defend their primary sources against bad-faith accusations of manipulation.

HISTORY

This technology safeguards the sanctity of historical records by ensuring that “anonymized” archives remain verifiable links to the past.

LAW

Verifiable Redaction establishes a court-admissible chain of custody for documents containing sensitive material. ZKPs can also facilitate the verification of proprietary forensic software, complex discovery datasets, and sensitive testimonial claims without compromising the underlying trade secrets or personal privacy that often create insurmountable disclosure dilemmas.

5G Network Attestation

Validating device metadata through fixed network infrastructure and reputable independent observers to establish trust in captured data.

YEAR

2024

PARTNERS

T-Mobile

Deutsche Telekom

Vonage

LINKS

– UN submission: Authenticity for human rights defenders in remote areas

The Problem

Mobile phones allow journalists to capture and transmit digital media with contextual information. However, because mobile phones are controlled by their users, every form of metadata they record can be manipulated; for example, GPS spoofers can fake location data. It is not enough for the location data collected by the Starling Capture app to come solely from the phone itself. It must be cross-referenced against the signals transmitted and received by GPS, Wi-Fi, cell towers, and/or beacons, so that third parties can attest that the phone is reporting its location correctly.
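To make the cross-referencing requirement concrete, the Python sketch below compares a handset's reported GPS fix with an independent, network-side location estimate and rejects the fix when the two are further apart than the network's stated accuracy plus a tolerance. The function names, the 500 m default margin, the sample coordinates, and the source of the network estimate are all assumptions for illustration; this is not the Starling Capture app's logic.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in meters between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def location_consistent(device: tuple[float, float],
                        network: tuple[float, float],
                        network_accuracy_m: float,
                        margin_m: float = 500.0) -> bool:
    """True if the handset's GPS fix falls within the network estimate's accuracy plus a margin."""
    return haversine_m(*device, *network) <= network_accuracy_m + margin_m


network_estimate = (-16.50, -56.70)  # sample coordinates, as if reported by the carrier
honest_fix = (-16.51, -56.70)        # roughly 1.1 km away
spoofed_fix = (-23.55, -46.63)       # more than 1,000 km away
print(location_consistent(honest_fix, network_estimate, network_accuracy_m=2000))   # True
print(location_consistent(spoofed_fix, network_estimate, network_accuracy_m=2000))  # False
```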

The Solution

Starling Lab is moving the “trust anchor” from the individual handset to the fixed infrastructure of the 5G network. By utilizing the C1 Mini framework, we enable a new class of “Network Attestations” that treat the mobile carrier as a reputable, independent witness to the capture of digital evidence.

The core of this prototype is the C1 Mini application, which leverages the standardized CAMARA APIs (exposed via Vonage and Deutsche Telekom). Instead of relying on easily phished 2FA codes, the system uses Silent Network Authentication (SNA). This process cryptographically verifies the unique identity of the subscriber’s SIM card directly with the carrier’s core network in the background. This ensures that the data registration request is coming from a legitimate, physical device authenticated by the network operator, not a spoofed virtual instance.

To support practitioners in remote or high-risk areas, the C1 Mini facilitates the “expedited registration” of asset fingerprints. Users can transmit the cryptographic hash of a photo or video via a secure SMS tunnel to a Starling registration server. This allows for a “proof of existence” to be anchored on a decentralized ledger (such as Solana or Hedera) even in low-bandwidth environments where uploading large raw files is impossible. This creates a tamper-evident timestamp that is significantly harder to manipulate than a device’s internal system clock.

This creates a multi-layered defense against disinformation: a hardware-signed image, a network-verified location, and a decentralized timestamp, all established at the point of capture.
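Expedited registration over SMS works because only the fingerprint travels, not the file: a SHA-256 digest is 32 bytes regardless of the size of the media, and in the URL-safe Base64 alphabet (whose characters all belong to the GSM-7 basic set) it fits easily in a single text message. In the Python sketch below, the `REG sha256:` payload format and the helper names are hypothetical and do not describe the C1 Mini's actual protocol.

```python
import base64
import hashlib

SINGLE_SMS_GSM7_CHARS = 160  # capacity of one unsegmented GSM-7 text message


def sms_payload(media: bytes, prefix: str = "REG") -> str:
    """Hypothetical compact message carrying only the SHA-256 fingerprint of a capture."""
    digest = hashlib.sha256(media).digest()  # 32 bytes, whatever the size of the media
    encoded = base64.urlsafe_b64encode(digest).decode("ascii").rstrip("=")
    return f"{prefix} sha256:{encoded}"


def digest_from_payload(payload: str) -> bytes:
    """Server side: recover the 32-byte digest before anchoring it on a ledger."""
    encoded = payload.split("sha256:", 1)[1]
    return base64.urlsafe_b64decode(encoded + "=" * (-len(encoded) % 4))


video = b"\x00" * 5_000_000  # stand-in for a large raw video file
msg = sms_payload(video)
print(len(msg), len(msg) <= SINGLE_SMS_GSM7_CHARS)  # 54 True
```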

Time for Trusted Timestamping

Most of Starling Lab’s work involves trusted timestamping. Normally timestamping is very easy: you simply add a timestamp field to your metadata and call a function such as time.Now(). Trusted timestamping, by contrast, is concerned with proving that the provided timestamp is actually correct, and wasn’t forged after the fact.

Proving one direction is simple: that some data was created after a certain point in time. This is easily achieved by including unguessable current information, such as news headlines and stock prices. A classic pop culture example is kidnappers proving a hostage is still alive by having them pose with that day’s newspaper.

But trusted timestamping is concerned with the opposite direction: proving data existed before a certain time. This is not so easily done, but it is possible, and the use cases are quite important.
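The asymmetry can be shown in a few lines of Python. Hashing data together with an unpredictable public value (a headline, say) shows the data was produced after that value was published; showing it existed before a time requires that someone outside your control recorded its hash at that time. Here `PublicLog` is a hypothetical in-memory stand-in for such a witness (an RFC 3161 authority or a blockchain), and all names are illustrative only.

```python
import hashlib
from datetime import datetime, timezone


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def bind_to_beacon(data: bytes, beacon: str) -> str:
    """'Created after': mix in unpredictable public information such as a headline."""
    return sha256_hex(beacon.encode("utf-8") + b"\x00" + data)


class PublicLog:
    """Hypothetical stand-in for an outside witness that records when it first saw a hash."""

    def __init__(self) -> None:
        self._first_seen: dict[str, datetime] = {}

    def publish(self, digest: str, at: datetime) -> None:
        # A real witness would use its own clock here rather than a caller-supplied time.
        self._first_seen.setdefault(digest, at)

    def existed_before(self, data: bytes, deadline: datetime) -> bool:
        """'Created before': true only if the witness saw this exact hash by the deadline."""
        seen = self._first_seen.get(sha256_hex(data))
        return seen is not None and seen <= deadline


log = PublicLog()
report = b"field report, final"
log.publish(sha256_hex(report), datetime(2024, 1, 15, tzinfo=timezone.utc))
deadline = datetime(2024, 6, 1, tzinfo=timezone.utc)
print(log.existed_before(report, deadline))                   # True
print(log.existed_before(b"field report, edited", deadline))  # False
print(bind_to_beacon(report, "headline A") != bind_to_beacon(report, "headline B"))  # True
```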

Purpose

There are three main reasons for doing trusted timestamping.

- Verifying old cryptographic signatures

- Data integrity

- Proof of technology ✨

In addition to these there is the obvious one: authenticity. Trusted timestamping can prove that a document that claims to have been made on a certain date was actually created on that date.

Verifying Signatures

The mainstream industry use case is verifying that a signature was added on a given date. For example, Apple requires timestamping as part of its code-signing process. The reasoning is simple: sometimes private keys get leaked or revoked. This scenario can break the guarantees signatures provide, namely that you can be sure of the author of the signed data.

Once a key is leaked, can we validate any previous signatures? They could have been made by a third party using the leaked key. But if the signature was timestamped, we can know whether the signature was created before the key was made invalid. This is a real problem that trusted timestamping solves.
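The decision described above reduces to an ordering check, sketched here in Python. It assumes the signature and the timestamp token have each already been cryptographically verified, and it uses the earliest time the key may have been compromised, which can precede the official revocation date. The function name and dates are hypothetical; this encodes the reasoning only, not Apple's or any other vendor's validation policy.

```python
from datetime import datetime, timezone
from typing import Optional


def signature_trusted(signature_valid: bool,
                      timestamped_at: Optional[datetime],
                      key_compromised_at: Optional[datetime]) -> bool:
    """Decide whether a signature made with a possibly leaked key can still be trusted."""
    if not signature_valid:
        return False
    if key_compromised_at is None:
        return True   # key never known to be compromised
    if timestamped_at is None:
        return False  # nothing proves the signature predates the leak
    return timestamped_at < key_compromised_at


leak = datetime(2023, 3, 1, tzinfo=timezone.utc)
print(signature_trusted(True, datetime(2022, 5, 10, tzinfo=timezone.utc), leak))  # True
print(signature_trusted(True, datetime(2023, 4, 2, tzinfo=timezone.utc), leak))   # False
print(signature_trusted(True, None, leak))                                        # False
```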

Data Integrity

A basic method of ensuring data integrity is storing hashes. But what if those hashes are tampered with? Using signatures instead allows us to verify that no one but a person/org we trust has modified the data. But what if we don’t want to trust that person, or their ability to guard their keys? Using signatures adds an extra moving part and security risk that in some cases may not even be needed. If we can agree that the data was valid and unmodified at a certain point in time, trusted timestamping will provide data integrity forever. An example use case is backups, or storing org-internal archival data like legal agreements.
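For an archive, one practical pattern is to hash every file into a manifest and timestamp a single digest of that manifest. Anyone who later alters a file must also alter the manifest, and the altered manifest's digest will no longer match the timestamped one. A minimal Python sketch; the helper names and sample files are hypothetical, and the manifest has to be stored alongside the timestamp proof.

```python
import hashlib
import json


def build_manifest(files: dict[str, bytes]) -> dict[str, str]:
    """Map each file name to the SHA-256 hex digest of its contents."""
    return {name: hashlib.sha256(data).hexdigest() for name, data in files.items()}


def manifest_digest(manifest: dict[str, str]) -> str:
    """One digest over a canonical JSON encoding: the single value to timestamp."""
    canonical = json.dumps(manifest, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def changed_files(manifest: dict[str, str], files: dict[str, bytes]) -> list[str]:
    """Names added, removed, or modified since the manifest was built."""
    current = build_manifest(files)
    return sorted(n for n in manifest.keys() | current.keys() if manifest.get(n) != current.get(n))


archive = {"agreement.pdf": b"%PDF signed terms", "minutes.txt": b"board meeting notes"}
manifest = build_manifest(archive)
to_timestamp = manifest_digest(manifest)  # submit this digest to a TSA or OpenTimestamps
archive["minutes.txt"] = b"board meeting notes (quietly edited)"
print(changed_files(manifest, archive))  # ['minutes.txt']
```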

Proof of Technology

This is a new, modern use case. As AI-generated content gets better and better, being able to prove that a piece of media was generated before certain AI software even existed will become extremely important. Trusted timestamping has the ability to end all these new uncomfortable arguments that such-and-such picture was created whole-cloth by some inscrutable algorithm, and instead bring us back to old school discussions about whether something looks “Photoshopped”.

Of course, it may be that AI content generation has already advanced too far for trusted timestamping to be useful. But the technology is not perfect, nor has it peaked. As the saying goes, the best time to plant a tree (here, to timestamp your data) was 20 years ago; the second best time is now.

Software

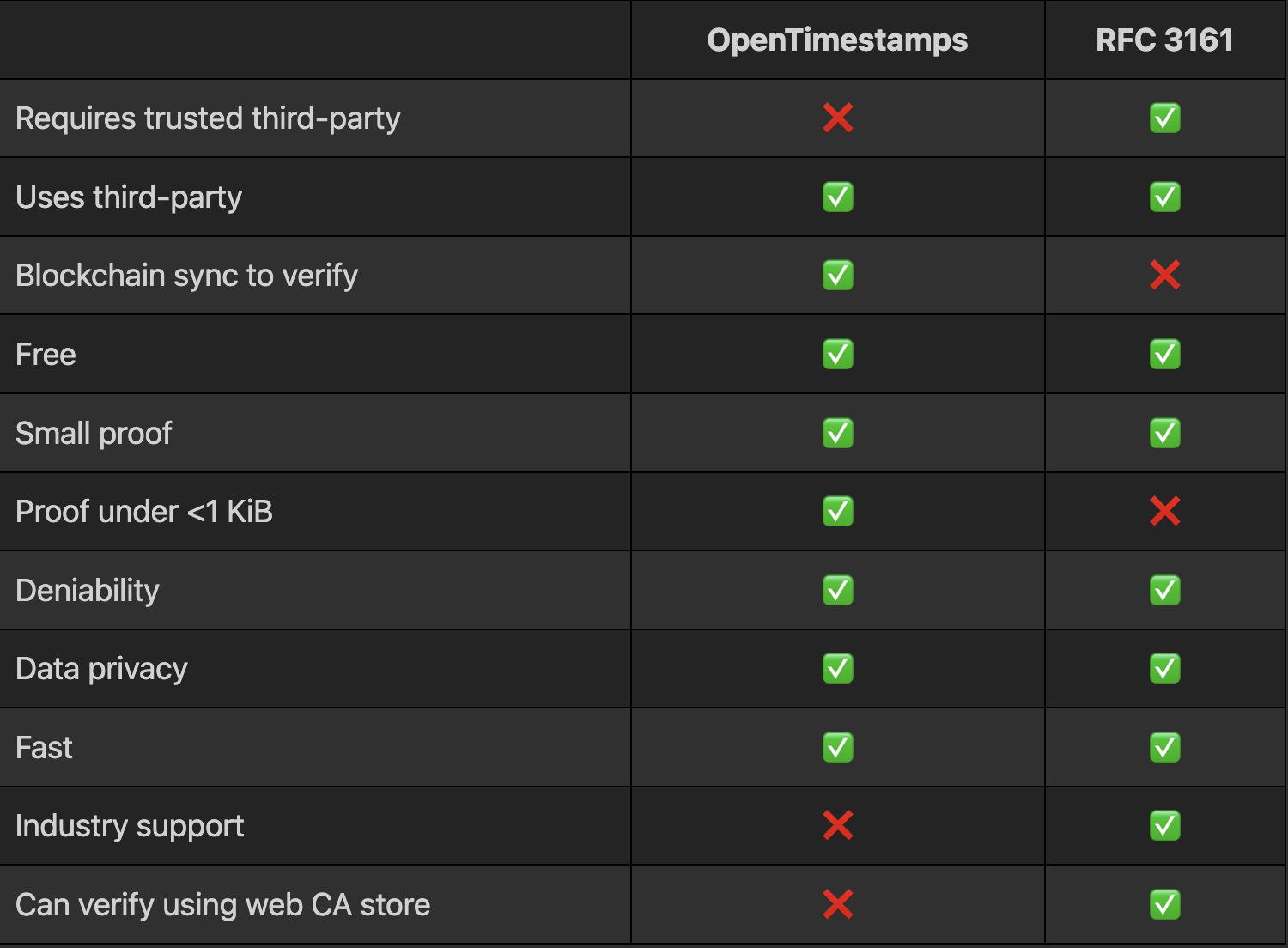

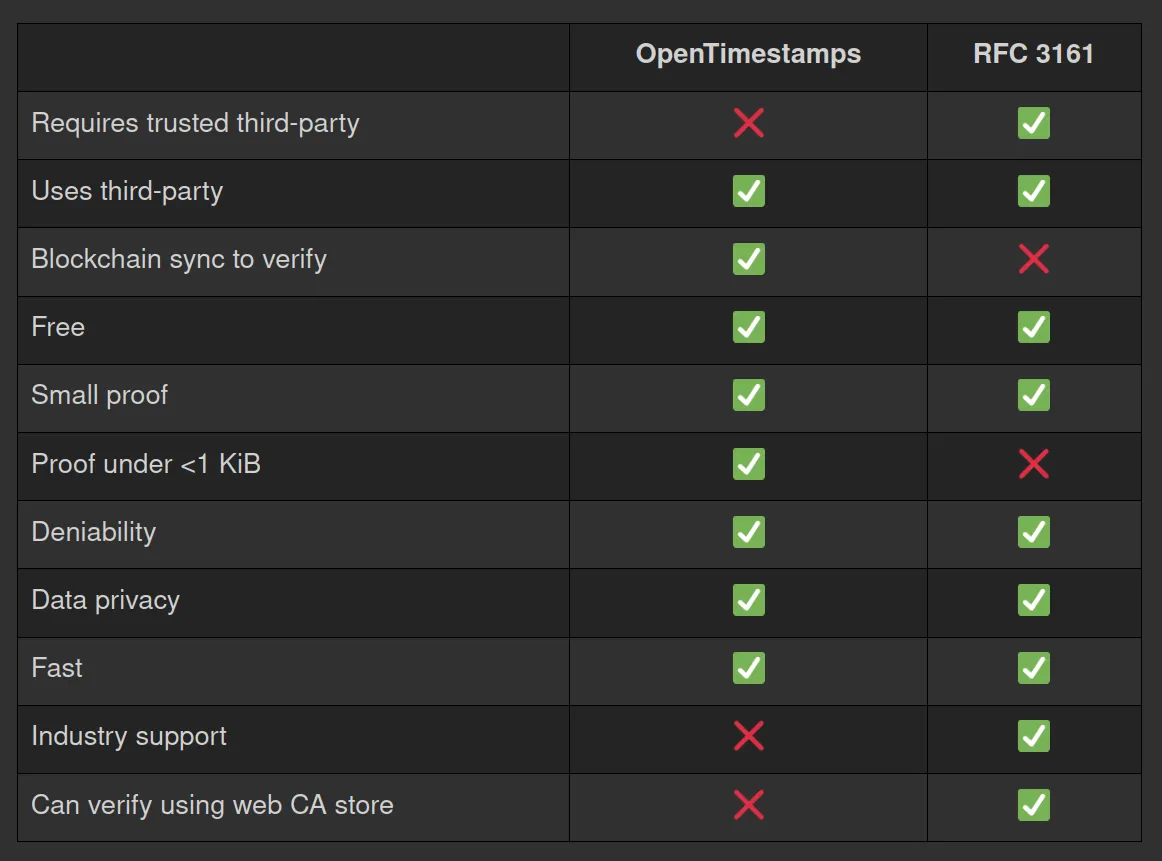

There currently exist two viable trusted timestamping methods that I am aware of. The first is OpenTimestamps, and the second is the Time-Stamp Protocol, or RFC 3161. The former timestamps using Bitcoin (efficiently, by aggregating many timestamps into a single transaction rather than recording each one separately) and the latter uses the signature of a trusted service (a Time Stamping Authority, or TSA).

Here is a quick comparison:

You can learn more about OpenTimestamps on its website. Beyond the RFC itself, you can learn more about RFC 3161 by experimenting with OpenSSL: on the command line, run man openssl-ts.

I’m not going to dive any deeper into these tools than the table above, but my general recommendation is to use RFC 3161 with a reputable third party, unless avoiding trust in any single party is an actual requirement for your project. And you could always do both!

Usage

OpenTimestamps has a CLI tool and libraries in several programming languages, see here. It also has a GUI on the website.

RFC 3161 is supported by OpenSSL as a CLI tool with the openssl ts command. To make RFC 3161 timestamping on the CLI easier (as it’s not just one OpenSSL command), I’ve created a bash script each for timestamping and verifying. You can get them here.

The only GUI option for RFC 3161 I’m aware of is on the freeTSA.org website, although personally I have no reason to trust freeTSA. Better than nothing though, for anyone who doesn’t use the command line.

For trusted third-parties there are various options, including reputable companies such as DigiCert.
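For readers who prefer to script the process, the Python sketch below wraps the same tools: it builds the `openssl ts` query and verify commands, posts the query over HTTP with the `application/timestamp-query` content type that RFC 3161 specifies for HTTP transport, and lists the `ots` client steps. It is not the bash scripts mentioned above; the file naming, helper names, and TSA URL are assumptions, and the CA and TSA certificate files must come from whichever authority you use. Only the command construction runs without network access.

```python
import subprocess
import urllib.request
from pathlib import Path


def tsq_command(data_file: str, query_file: str) -> list[str]:
    """RFC 3161 query over the file's SHA-256; -cert asks the TSA to include its certificate."""
    return ["openssl", "ts", "-query", "-data", data_file, "-sha256", "-cert", "-out", query_file]


def verify_command(data_file: str, reply_file: str, ca_file: str, tsa_cert: str) -> list[str]:
    """Check a reply against the data and the TSA's certificate chain."""
    return ["openssl", "ts", "-verify", "-data", data_file, "-in", reply_file,
            "-CAfile", ca_file, "-untrusted", tsa_cert]


def request_timestamp(data_file: str, tsa_url: str) -> Path:
    """Create a query, POST it to the TSA, and save the .tsr reply next to the file."""
    query = data_file + ".tsq"
    reply = Path(data_file + ".tsr")
    subprocess.run(tsq_command(data_file, query), check=True)
    req = urllib.request.Request(
        tsa_url,
        data=Path(query).read_bytes(),  # supplying data makes this a POST
        headers={"Content-Type": "application/timestamp-query"},
    )
    with urllib.request.urlopen(req, timeout=30) as resp:
        reply.write_bytes(resp.read())
    return reply


def ots_commands(data_file: str) -> list[list[str]]:
    """OpenTimestamps client steps: stamp now, upgrade once Bitcoin confirms, then verify."""
    proof = data_file + ".ots"
    return [["ots", "stamp", data_file], ["ots", "upgrade", proof], ["ots", "verify", proof]]


print(" ".join(tsq_command("report.pdf", "report.pdf.tsq")))
```

With OpenSSL installed, `request_timestamp("report.pdf", "https://freetsa.org/tsr")` followed by `subprocess.run(verify_command("report.pdf", "report.pdf.tsr", "cacert.pem", "tsa.crt"), check=True)` would timestamp and verify a file against freeTSA, using certificate files downloaded from that service.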

Conclusion

It’s time for trusted timestamping! The cost of this is basically zero (storing a few kilobytes of proof at most), but the advantage can be very large. It’s one of those things that you’ll wish you had done at the start.

Many groups can act on this by adding timestamps for their documents and media. In increasing order of importance:

- General public: timestamp your important documents and media to prove their authenticity. You can do this today on your phone with ProofMode, and on the desktop using CLI or GUI tools as discussed above.

- Archivists: timestamp everything you archive, for integrity and authenticity. For redundancy, consider using both methods mentioned above and several RFC 3161 Time Stamping Authorities.

- Developers: if you work on software where data or signature integrity is at all important, add trusted timestamping to your application. And if you use Git signing, you can easily start timestamping all those signatures, see here.

Mom, I See War: A Digital Archive by Numbers Protocol and Starling Lab

Mom, I See War (MISW) is the first known digital archive to document the way children experience war by preserving high-resolution JPEG scans of their drawings, metadata about the drawings, and encrypted versions of these that contain private, identifying information (the last names) of the children. MISW has a collection of 10,026 single-image assets from Russia’s invasion of Ukraine, which Starling Lab has registered on the NEAR blockchain and archived on distributed web protocols.

- First, records of these assets were hashed and registered on the NEAR blockchain using Numbers Protocol.

- Second, versions of these drawings and separate metadata files (with the full names of the children redacted) were stored on IPFS.

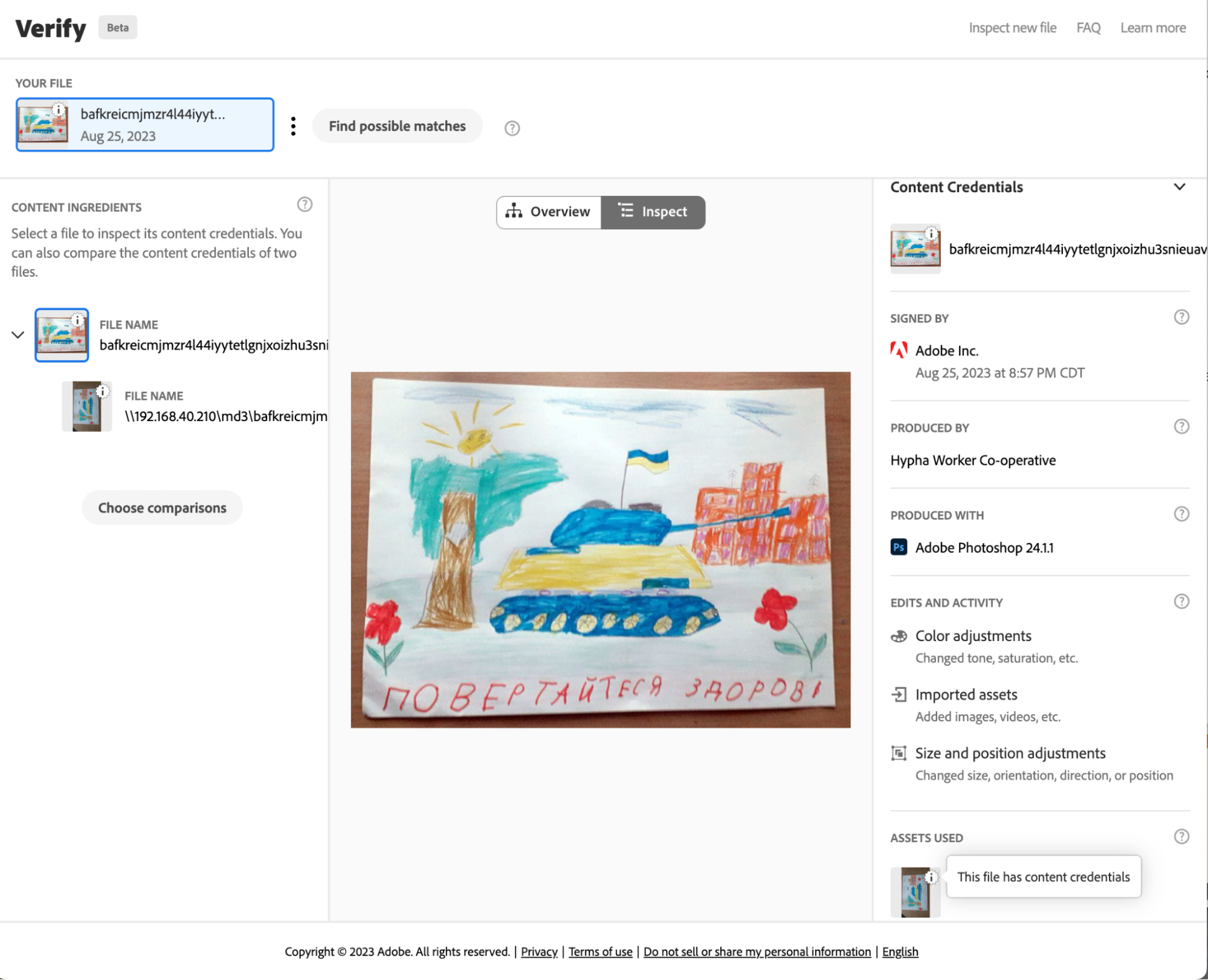

- Copies of the metadata were injected with information according to the C2PA standards, which enables users to inspect and understand more about what’s been done to these records, where it’s been, and who’s responsible.

- An encrypted archive of the drawings (assets) and full metadata were also sealed and preserved as archives on Filecoin.

These four versions of the records each serve a different purpose. Registering a hash (an immutable ‘fingerprint’ of the drawing and metadata) on the NEAR blockchain establishes a record of exactly what was stored, and the date it was stored, without revealing any other information.

The publication of the full drawings and metadata on IPFS (without individual identifying information like the last name of the child who made the drawing) makes these assets available on a publicly owned, peer-to-peer system which is resilient against infrastructure deprecation and various forms of censorship, and the assets can easily be hosted by anyone.

The C2PA version of the registered assets was created according to standards set by a coalition for standards development: The Coalition for Content Provenance and Authenticity (C2PA). These standards guide the development of tools, supported within Adobe products such as Photoshop, that create public and tamper-evident records that can be attached to an image. These images can then be used with different tools for inspecting and making edits that preserve the understanding of what’s been done to modify assets, where it’s been, and who’s responsible.

Finally, the archiving on Filecoin (drawings are bundled with full metadata and encrypted) helps preserve these drawings across multiple storage providers in a way that can outlast current media storage and publishing methods and technology.
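The division of labor between the registered fingerprint and the redacted public copy can be sketched in Python. Plain SHA-256 hex digests stand in for the IPFS CIDs that were actually registered, and the field names (`last_name` and so on), helper names, and sample values are hypothetical; this is not the Numbers Protocol or Starling Integrity pipeline code.

```python
import hashlib
import json

PRIVATE_FIELDS = {"last_name"}  # hypothetical name for the child's surname field


def fingerprint(data: bytes) -> str:
    """SHA-256 hex digest, used here as a stand-in for the CIDs actually registered."""
    return hashlib.sha256(data).hexdigest()


def public_metadata(metadata: dict) -> dict:
    """Redacted copy for public storage: private fields removed."""
    return {k: v for k, v in metadata.items() if k not in PRIVATE_FIELDS}


def canonical_json(obj: dict) -> bytes:
    """Stable byte encoding so the same metadata always hashes the same way."""
    return json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")


def registration_record(drawing: bytes, metadata: dict) -> dict:
    """Fingerprints of the drawing and its redacted metadata; the record itself reveals neither."""
    return {
        "drawing": fingerprint(drawing),
        "metadata": fingerprint(canonical_json(public_metadata(metadata))),
    }


# Made-up example values.
drawing = b"<jpeg scan bytes>"
meta = {"first_name": "Example", "last_name": "Private", "age": 8, "city": "Kharkiv"}
record = registration_record(drawing, meta)

# Anyone holding the public copies can recompute the fingerprints and compare.
published_meta = public_metadata(meta)
print("last_name" in published_meta)                                     # False
print(record["metadata"] == fingerprint(canonical_json(published_meta)))  # True
```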

Numbers Protocol Registration

Drawing & Metadata Registration

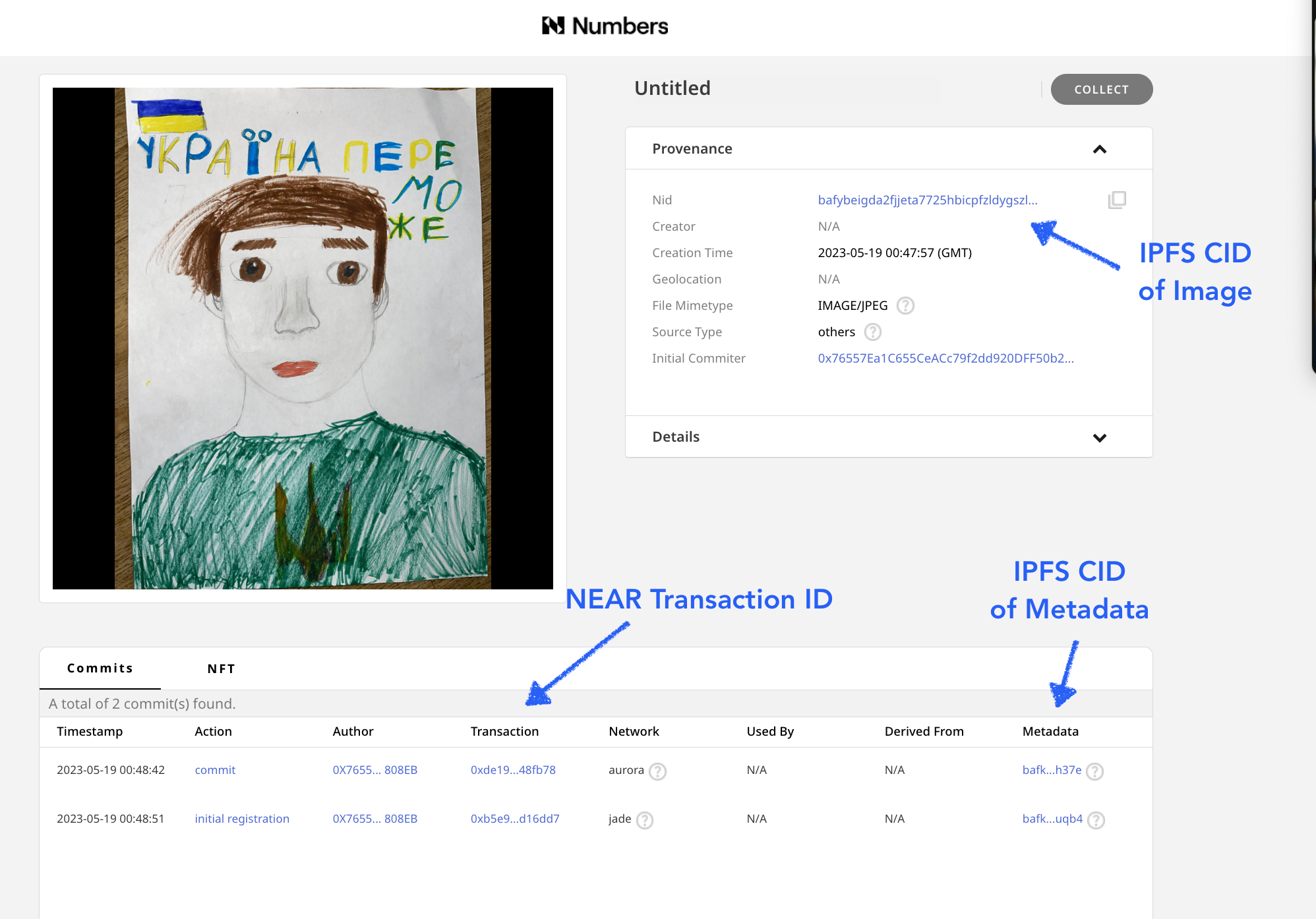

To register these assets, Starling Lab collaborated with the Numbers Protocol and also used the Starling Integrity pipeline to process the data and create publicly available registration records, publishing images and metadata records on IPFS. The records created include images of the drawings, along with a limited (redacted) set of metadata, which did not include certain identifying information, such as the children’s last names. See the example on Numbers Search.

The initial drawing and metadata records were both stored on IPFS, with their IPFS CIDs stored on the NEAR blockchain, and a registration of this record was added to Numbers Mainnet. Using Numbers Protocol search, we can use the drawing’s IPFS CID to find the transaction containing all the information.

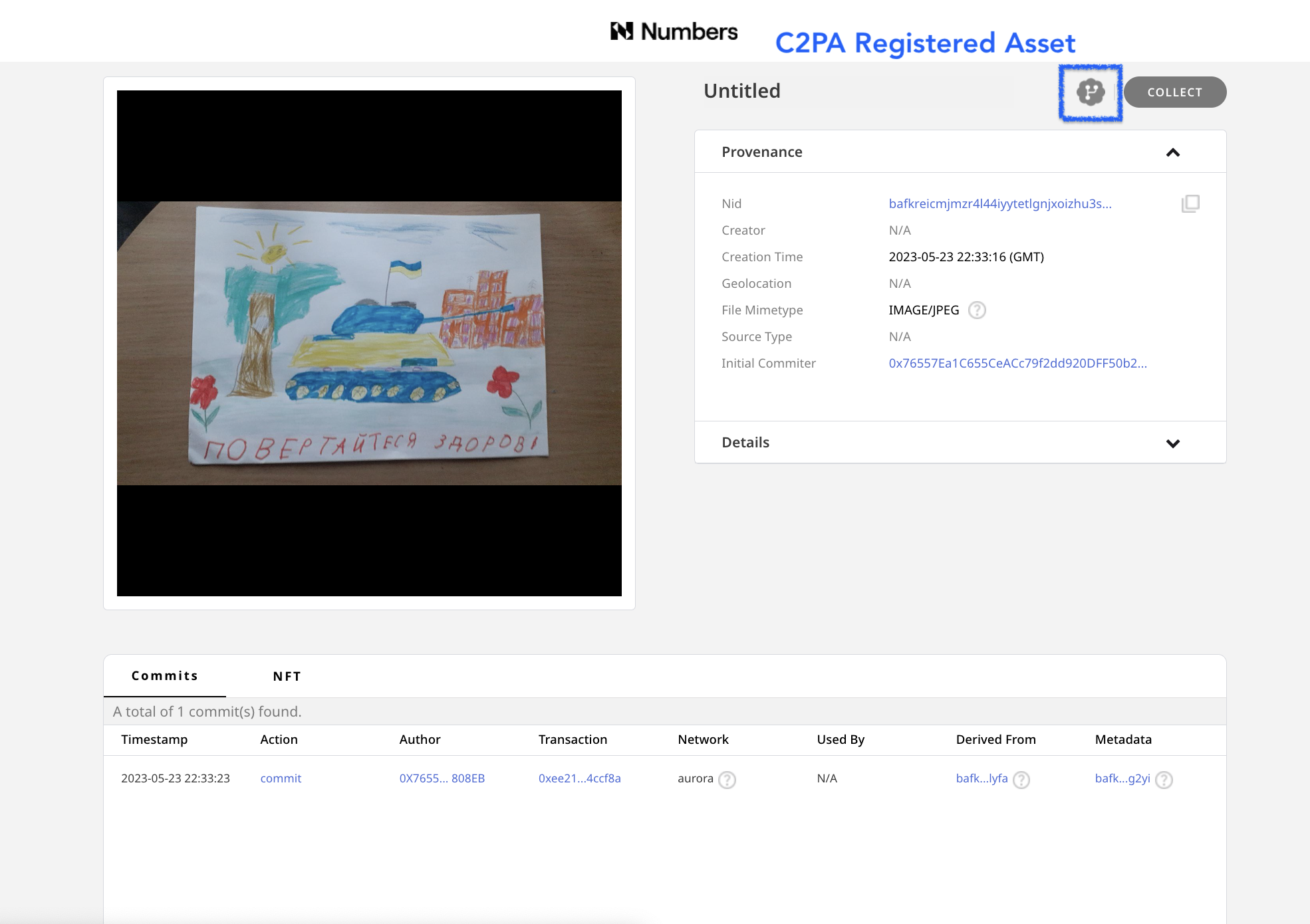

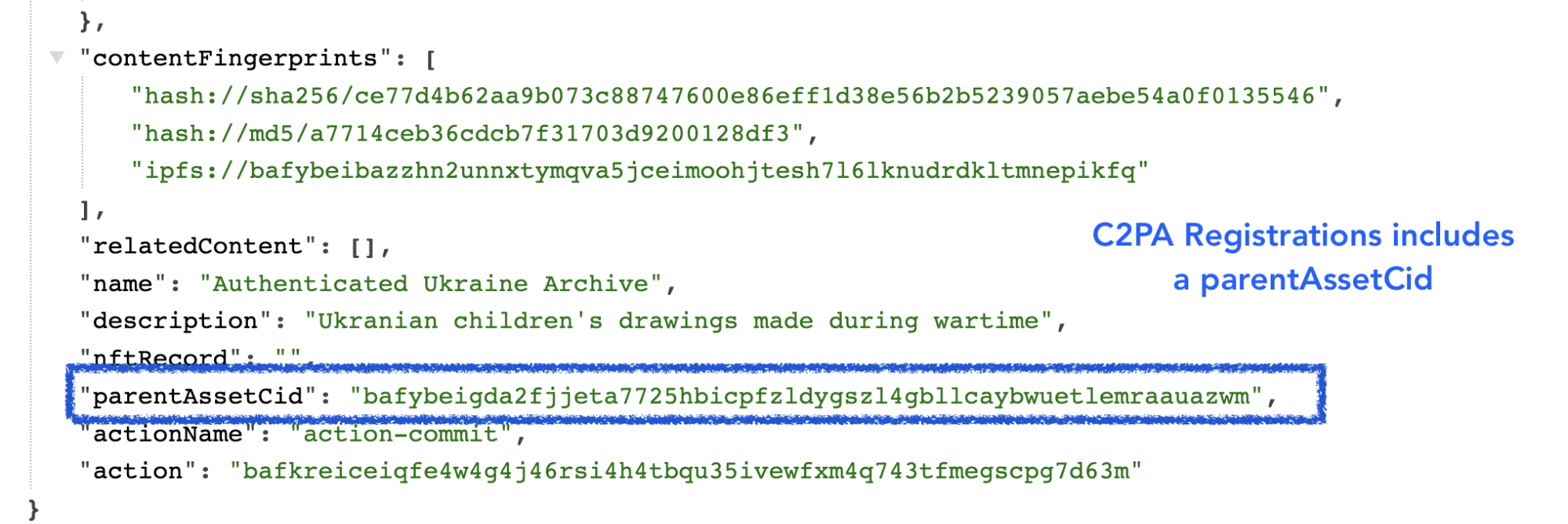

C2PA Registration

Once the regular versions of the drawing and metadata were registered, C2PA-compliant versions of these assets were also created. An icon next to an asset indicates that it carries C2PA metadata and links back to the initially registered asset. The C2PA standard is a way of adding metadata that points versions of an asset back to the original asset, creating an audit trail.

This allows anyone who wants to produce verifiable processed or modified versions of the drawings (for example, versions cropped or color-enhanced for publication) to connect them back to the original image, along with a record of every change made.

The C2PA metadata added to the image follows a standard supported by tools such as Adobe Photoshop, which lets each incremental edit add a signed thumbnail and edit log to the image.
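The audit trail described above can be pictured as a chain of records, each naming the hash of the asset it was derived from. The sketch below is a deliberately simplified, hypothetical model: ProvenanceRecord and trace_to_original are illustrative names, not the C2PA manifest format or any C2PA library API. In the real standard, each manifest is cryptographically signed and lists earlier assets as "ingredients"; signatures are omitted here.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Dict, List, Optional


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


@dataclass
class ProvenanceRecord:
    """Hypothetical, simplified stand-in for a C2PA manifest (not the real format)."""
    asset_hash: str
    parent_hash: Optional[str]  # None for the originally registered asset
    actions: List[str] = field(default_factory=list)


def trace_to_original(asset_hash: str,
                      records: Dict[str, ProvenanceRecord]) -> List[ProvenanceRecord]:
    """Follow parent links from an edited asset back to the original asset."""
    chain: List[ProvenanceRecord] = []
    seen = set()
    current: Optional[str] = asset_hash
    while current is not None:
        if current in seen:
            raise ValueError("cycle in provenance records")
        seen.add(current)
        record = records.get(current)
        if record is None:
            raise KeyError(f"no provenance record for {current}")
        chain.append(record)
        current = record.parent_hash
    return chain


if __name__ == "__main__":
    original = b"original drawing bytes"
    cropped = b"cropped drawing bytes"
    records = {
        sha256_hex(original): ProvenanceRecord(sha256_hex(original), None, ["registered"]),
        sha256_hex(cropped): ProvenanceRecord(sha256_hex(cropped), sha256_hex(original), ["crop"]),
    }
    chain = trace_to_original(sha256_hex(cropped), records)
    print([r.actions for r in chain])  # [['crop'], ['registered']]
```

Walking the chain from the cropped copy ends at the record with no parent, which plays the role of the originally registered drawing.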

Starling Integrity Pipeline

All of the assets for this project were also bundled together, then registered and preserved using the Starling Integrity pipeline. This is a data processing pipeline that Starling Lab uses to bundle images with metadata, add registration certificates, encrypt the bundles, and add them to various distributed storage and archival systems.
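The bundling step can be sketched as zipping assets together with a manifest of their SHA-256 hashes, so the bundle can later be checked for completeness and integrity. This is a hypothetical illustration, not the Starling Integrity pipeline's code: build_bundle, verify_bundle, and the manifest.json layout are assumptions, and the signing, timestamping, and encryption steps the pipeline performs are omitted because Python's standard library has no built-in symmetric cipher.

```python
import hashlib
import io
import json
import zipfile
from typing import Dict

MANIFEST_NAME = "manifest.json"  # hypothetical file name for the hash manifest


def build_bundle(files: Dict[str, bytes]) -> bytes:
    """Zip `files` together with a manifest of their SHA-256 hashes.

    Signatures, timestamps, and encryption are intentionally left out.
    """
    if MANIFEST_NAME in files:
        raise ValueError(f"{MANIFEST_NAME} is reserved for the manifest")
    manifest = {name: hashlib.sha256(data).hexdigest() for name, data in sorted(files.items())}
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w", compression=zipfile.ZIP_DEFLATED) as bundle:
        for name, data in sorted(files.items()):
            bundle.writestr(name, data)
        bundle.writestr(MANIFEST_NAME, json.dumps(manifest, indent=2))
    return buffer.getvalue()


def verify_bundle(bundle_bytes: bytes) -> bool:
    """Check that every file listed in the manifest is present and unmodified."""
    with zipfile.ZipFile(io.BytesIO(bundle_bytes)) as bundle:
        manifest = json.loads(bundle.read(MANIFEST_NAME))
        names = set(bundle.namelist()) - {MANIFEST_NAME}
        if names != set(manifest):
            return False
        return all(
            hashlib.sha256(bundle.read(name)).hexdigest() == expected
            for name, expected in manifest.items()
        )
```

Keeping the manifest inside the bundle means the hashes travel with the data, and a single hash of the whole bundle can then be signed or timestamped.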

For this project, we used the Starling Integrity pipeline to zip complete copies of the image assets together with private metadata, including the last names of the children who made the drawings, add authsign and OpenTimestamps certificates, and encrypt the file bundle. We then stored these signed and encrypted files on Filecoin, a cryptocurrency-collateralized archival storage system. The storage providers committed to keep the files for a set duration, in this case 18 months, and the network runs intermittent checks to verify that the data remains stored with the specified redundancy.
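OpenTimestamps proves that a file existed at a point in time by aggregating many file hashes into a Merkle tree whose root is committed to the Bitcoin blockchain; a timestamp certificate records the path from the file's hash up to that root. The sketch below shows only that underlying idea. The function names are hypothetical, and OpenTimestamps' actual proof format, hashing order, and nonce handling differ.

```python
import hashlib
from typing import List, Tuple

ProofStep = Tuple[bytes, bool]  # (sibling hash, sibling is on the left)


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_root_and_proof(leaves: List[bytes], index: int) -> Tuple[bytes, List[ProofStep]]:
    """Return the Merkle root of `leaves` and an inclusion proof for leaves[index].

    An unpaired node at the end of a level is paired with itself.
    """
    if not leaves:
        raise ValueError("need at least one leaf")
    level = [_h(leaf) for leaf in leaves]
    proof: List[ProofStep] = []
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])
        sibling = index ^ 1  # the other node in this pair
        proof.append((level[sibling], sibling < index))
        level = [_h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return level[0], proof


def verify_inclusion(leaf: bytes, proof: List[ProofStep], root: bytes) -> bool:
    """Recompute the path from `leaf` to the root and compare."""
    node = _h(leaf)
    for sibling, sibling_is_left in proof:
        node = _h(sibling + node) if sibling_is_left else _h(node + sibling)
    return node == root
```

Only the root needs to be anchored on-chain; each file's proof grows with the logarithm of the number of files aggregated, not with the total.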

The archive itself is registered on NEAR using Numbers Protocol, as well as on the LikeCoin blockchain with ISCN.

Searching and Viewing Assets

Starling Lab and Numbers Protocol archived the records for each drawing, including the CIDs and NEAR blockchain transaction IDs for the original drawing and the original metadata, as well as for the versions of these that carry C2PA credentials. The C2PA versions make it possible to understand the history and identity data attached to images that may need to be modified or edited.

For each drawing, you can view each of these four records using Numbers Search to:

- See the blockchain registration of these assets using a block explorer for NEAR or Numbers Mainnet.

- Inspect the images and sets of metadata on IPFS using the Numbers IPFS gateway.

- Use Filecoin explorer to see the archive of the assets.

- Download images and view C2PA-credentialed assets with the Verify tool.

Search for a Drawing and Metadata with a CID

To search for and view the NEAR blockchain registrations with the Numbers Search tool, you need the asset's CID from the list of archives. In a web browser, type https://nftsearch.site/asset-profile?nid= followed by a CID, such as bafybeibazzhn2unnxtymqva5jceimoohjtesh7l6lknudrdkltmnepikfq

The resulting URL of the above C2PA-credentialed asset would look like: https://nftsearch.site/asset-profile?nid=bafybeibazzhn2unnxtymqva5jceimoohjtesh7l6lknudrdkltmnepikfq
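The lookup above is just the CID appended as the nid query parameter, so it is easy to script when checking many drawings. A minimal sketch, assuming the URL pattern shown above stays stable; numbers_search_url is a hypothetical helper, and its rough check accepts only base32 CIDv1 strings (like those in this article), not older "Qm..." CIDv0 strings.

```python
import re
from urllib.parse import urlencode

NUMBERS_SEARCH_PROFILE = "https://nftsearch.site/asset-profile"

# Rough shape check for a base32 CIDv1: multibase prefix "b", then RFC 4648 lowercase base32.
_CIDV1_BASE32 = re.compile(r"^b[a-z2-7]+$")


def numbers_search_url(cid: str) -> str:
    """Build the Numbers Search asset-profile URL for a base32 CIDv1."""
    cid = cid.strip()
    if not _CIDV1_BASE32.match(cid):
        raise ValueError(f"not a base32 CIDv1: {cid!r}")
    return f"{NUMBERS_SEARCH_PROFILE}?{urlencode({'nid': cid})}"
```

Called with the CID above, it returns exactly the URL shown for the C2PA-credentialed asset.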

Blockchain Registrations

The assets were registered on two blockchains. First, basic information, consisting of the CID of the content stored on IPFS plus a hash of the data, was added to the Numbers Jade blockchain network. (The hash is a check you can use to see whether content you have, such as a copy of a drawing, is the same as the registered version.) Next, both the basic registration and a more complete version of the asset's metadata, which includes C2PA data, were registered on the NEAR Aurora blockchain.

The transactions containing these registrations are linked from the Numbers Search interface and can also be found by appending the transaction ID to the following URLs:

- Jade: https://mainnet.num.network/tx/<transaction ID>

- Aurora: https://explorer.mainnet.aurora.dev/tx/<transaction ID>

These registrations provide an immutable, timestamped record of the SHA-256 hash of the content, which can be used to verify whether any copy you have of a drawing or its metadata is identical to the version that Starling Lab and Numbers archived on the blockchain.
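Checking a copy against a registration means recomputing the SHA-256 hash of your file and comparing it with the hash recorded on-chain. A minimal sketch, assuming you have already copied the registered hash (as a hex string) from the Jade or Aurora transaction by hand; sha256_of_file and matches_registration are hypothetical helpers, and locating the hash within a given transaction is not covered here.

```python
import hashlib
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in 1 MiB chunks so large images need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_registration(path: Path, registered_hex: str) -> bool:
    """True if the file's SHA-256 equals the registered hash (case-insensitive hex)."""
    return sha256_of_file(path) == registered_hex.strip().lower()
```

Any change to the file, even re-saving the same image with different compression, produces a different hash, so a match shows the copy is byte-for-byte identical to the registered version.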

Viewing Data on IPFS

IPFS is a peer-to-peer protocol for sharing and hosting data and media. It offers a resilient alternative to the client-server, HTTP-based web2 internet, and it can be accessed from ordinary web browsers through a gateway that bridges the HTTP and IPFS networks.

Numbers Protocol hosts a gateway which you can use to view the IPFS copies of the drawings and metadata. To access these copies, you can click on a link in Numbers Search, or type in the gateway address along with the CID in any web browser: https://ipfs-pin.numbersprotocol.io/<CID>
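Because the gateway serves content at gateway/CID over plain HTTPS, a copy can also be fetched from a script and saved under a local file name, for example with a .jpg extension for the images. A minimal sketch, assuming the Numbers gateway remains available at the address above; gateway_url and download_from_ipfs are hypothetical helpers, and the downloaded bytes should still be hashed and compared with the on-chain registration rather than trusted because of where they came from.

```python
import urllib.request
from pathlib import Path

NUMBERS_GATEWAY = "https://ipfs-pin.numbersprotocol.io"


def gateway_url(cid: str, gateway: str = NUMBERS_GATEWAY) -> str:
    """Return the HTTP gateway address for a CID."""
    return f"{gateway.rstrip('/')}/{cid.strip()}"


def download_from_ipfs(cid: str, dest: Path, timeout: float = 30.0) -> Path:
    """Fetch the content behind `cid` through the gateway and write it to `dest`."""
    with urllib.request.urlopen(gateway_url(cid), timeout=timeout) as response:
        dest.write_bytes(response.read())
    return dest
```

For example, download_from_ipfs("bafybeibazzhn2unnxtymqva5jceimoohjtesh7l6lknudrdkltmnepikfq", Path("drawing.jpg")) would save that asset locally, provided the gateway is reachable.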

Publishing on IPFS makes it easy to retrieve and inspect the images and metadata that have been registered on the different blockchain networks.

Viewing Filecoin Registration

Filecoin is an archival storage system backed by a cryptocurrency (FIL). Storage on the network is provided by the miners of this cryptocurrency, also known as storage providers. The protocol runs intermittent checks against the archived data, and if the data is not being stored, the storage providers lose the FIL collateral they staked when they made the storage deal. The Starling Integrity pipeline used Filecoin to store the encrypted versions of the archive.
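The intermittent checks can be understood through a toy spot-check: an auditor remembers a hash of each chunk of the data, challenges the provider for a randomly chosen chunk, and fails the provider if the returned bytes do not match. This is only an intuition pump with hypothetical names (Verifier, StorageProvider, CHUNK_SIZE). Filecoin itself uses Proof-of-Replication and Proof-of-Spacetime with succinct zero-knowledge proofs, so verifiers do not need to keep per-chunk hashes or receive the data.

```python
import hashlib
import random
from typing import List

CHUNK_SIZE = 256  # bytes; an arbitrary size chosen for this illustration


def split_chunks(data: bytes, size: int = CHUNK_SIZE) -> List[bytes]:
    return [data[i:i + size] for i in range(0, len(data), size)] or [b""]


class Verifier:
    """Toy auditor: stores chunk hashes at deal time, then spot-checks random chunks."""

    def __init__(self, data: bytes, seed: int = 0):
        self.chunk_hashes = [hashlib.sha256(c).digest() for c in split_chunks(data)]
        self.rng = random.Random(seed)

    def challenge(self) -> int:
        return self.rng.randrange(len(self.chunk_hashes))

    def check(self, index: int, response: bytes) -> bool:
        return hashlib.sha256(response).digest() == self.chunk_hashes[index]


class StorageProvider:
    """Toy provider that answers challenges from whatever it still holds."""

    def __init__(self, data: bytes):
        self.chunks = split_chunks(data)

    def respond(self, index: int) -> bytes:
        return self.chunks[index]
```

A provider that discards or corrupts part of the data will eventually be challenged on a missing chunk, which in Filecoin's economic model is the point at which collateral is lost.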

With the Filecoin CID Checker, you can view information about an archive, such as the identity of the node storing the data, the status of the archive, the deal made for its preservation, and the immutable content identifier of the payload submitted for storage. See the information about the MISW collection archived on Filecoin (Filecoin piece CID baga6ea4seaqdqgywa3n55hughvnfkroou6nm6qisqalzew7vy2vdqe3jfcr6wci), which includes all files, metadata, and a spreadsheet listing all assets.

Viewing C2PA assets with the Verify Tool

The Content Authenticity Initiative (CAI) is a group working together to fight misinformation and add a layer of verifiable trust to all types of digital content. Members of this group include those from media and tech companies, NGOs, academics, and more.

In February 2021, Adobe, Arm, BBC, Intel, Microsoft, and Truepic launched a formal coalition for standards development: The Coalition for Content Provenance and Authenticity (C2PA). These standards guide the development of tools, supported within Adobe editing tools such as Photoshop, that create public and tamper-evident records that can be attached to an image to understand more about what’s been done to it, where it’s been, and who’s responsible. These same standards were used to create metadata and records for the MISW collection.

With the Verify tool, you can download an image from the MISW collection via IPFS (adding a .jpg file extension), drop it into the online tool, and inspect the image and the data added according to the C2PA standards. If you were to edit the image in Photoshop, its hash would change; however, the C2PA record lets you tie the edited image back to the original and show and compare any edits made in Photoshop.

Conclusion

The project demonstrates the archival process for a valuable set of assets that document the war in Ukraine. It helps create a reliable record of the past that future generations can use to understand this pivotal event, make claims, hold individuals and governments accountable, and build a better future for humanity.

This set of tools not only creates an immutable archive of these assets; it also demonstrates how to build an archive that is searchable and verifiable. The resulting assets can be used in journalistic, legal, and historical contexts, and anyone who wants to share them after minor edits, such as cropping or adjusting color, tone, and contrast, can do so in a way that lets the public assess the accuracy and veracity of those copies. Learn more about the collaboration with the Mom I See War Research and Numbers Protocol.

Publication of our whitepaper on Best Practices for Admissibility of Web Archives

Verify

In the autumn of 2022, we convened two pivotal workshops to focus on the evolving role of archiving web pages and social media in the context of international justice, particularly concerning Russia’s war against Ukraine. These workshops, one technical and the other legal, aimed to explore how recent advances in web archiving could support the collection, storage, authentication, and utilization of digital evidence in accountability proceedings for victims of the conflict.

The discussions formed the basis of a whitepaper, authored by Scott Martin (Global Justice Advisors) and Basile Simon (Starling Lab), and a set of best practices outlining the ideal characteristics of a web archive for use in court, drawing on the requirements of the Berkeley Protocol on Digital Open Source Investigations.

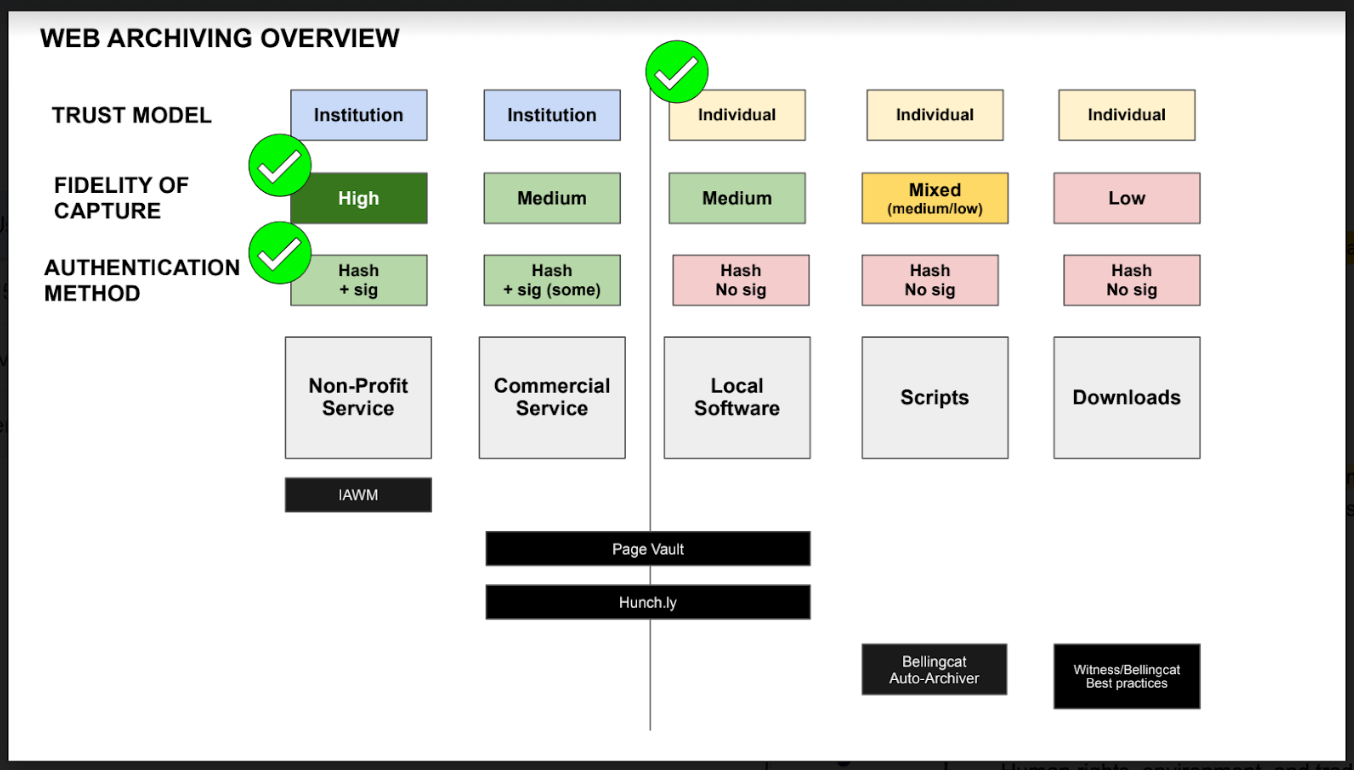

Best Practices for Web Archiving

According to this whitepaper, the ideal web archive demonstrates the following properties:

- It can be produced by anyone, notably by individual actors with tools they can grasp and control (as opposed to using a commercial service or being granted access to a platform). This is correlated, to an extent, with the use of open-source and local software.

- It is of high fidelity, meaning it was carried out by a tool that preserved most, if not all, of the original material.

- It includes the content itself, its surrounding metadata, and the metadata of the web scraping software. This includes cryptographic hashes of all website assets and a signature over these hashes that authenticates them to the author.

- Furthermore, the cryptographic hashes and signatures must be preserved, that is to say, stored securely and made available for the long term, just like the content itself.
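The hashing and signing properties above can be sketched as a deterministic manifest of per-asset SHA-256 hashes plus capture metadata, over which a signature is computed. This is a hypothetical illustration: the manifest layout and function names are assumptions, and HMAC-SHA256 is used only because it is in Python's standard library. It is a shared-secret stand-in; a real web archive would use a public-key signature (for example Ed25519) so that third parties can verify authorship without holding the key.

```python
import hashlib
import hmac
import json
from typing import Dict


def build_capture_manifest(assets: Dict[str, bytes], capture_metadata: Dict[str, str]) -> bytes:
    """Serialize per-asset SHA-256 hashes and capture metadata deterministically."""
    manifest = {
        "assets": {url: hashlib.sha256(body).hexdigest() for url, body in assets.items()},
        "capture": capture_metadata,
    }
    # sort_keys and fixed separators make the bytes (and thus the signature) reproducible.
    return json.dumps(manifest, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_manifest(manifest: bytes, key: bytes) -> str:
    # HMAC-SHA256 is a stand-in for a real public-key signature such as Ed25519.
    return hmac.new(key, manifest, hashlib.sha256).hexdigest()


def verify_manifest(manifest: bytes, signature: str, key: bytes) -> bool:
    return hmac.compare_digest(sign_manifest(manifest, key), signature)
```

Serializing deterministically matters: the same capture must always produce the same bytes, otherwise a valid signature could fail to verify years later.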

Establishing Clear Methodologies

To maximize the admissibility of a web archive as evidence, archivists and legal professionals must establish clear, detailed methodologies. These methodologies should document the provenance of the digital evidence — detailing where it comes from, how it was procured, who procured it, when it was procured, and the process followed. This includes documenting the chain of custody and demonstrating that the webpage has not been altered during archiving.

Key points include:

- Detailed Record-Keeping: Identify the person conducting the archiving, their qualifications, and the web collection protocols observed. Describe the hardware and software used, and explain the process for selecting and assessing websites and articles for credibility and resistance to manipulation.

- Storage Protocols: Describe measures against corruption, hacking, and other risks to ensure the integrity of the archives over time. This should be recorded in a chain of custody that tracks who has handled the document.
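A chain of custody can be made tamper-evident by having each entry commit to the hash of the previous one, so that altering any past entry breaks every later link. A minimal sketch with hypothetical names and illustrative field values; note that a hash chain only detects rewriting if the latest hash is also anchored somewhere independent, for example through a third-party timestamp.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(entry: Dict[str, str]) -> str:
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_event(log: List[Dict[str, str]], handler: str, action: str, timestamp: str) -> None:
    """Append a custody event that commits to the hash of the previous entry."""
    prev = log[-1]["hash"] if log else GENESIS
    entry = {"handler": handler, "action": action, "timestamp": timestamp, "prev": prev}
    entry["hash"] = _entry_hash(entry)  # hash covers every field except "hash" itself
    log.append(entry)


def verify_log(log: List[Dict[str, str]]) -> bool:
    """Recompute every link; any edited, removed, or reordered entry fails."""
    prev = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body.get("prev") != prev or _entry_hash(body) != entry.get("hash"):
            return False
        prev = entry["hash"]
    return True
```

Each handler appends an event when they receive, copy, or transfer the archive, and anyone holding the log can re-run verify_log.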

Background on Workshops

Technical Workshop: Enhancing the Integrity of Web Archives

On August 25, Starling brought together experts in web archiving to discuss methods to preserve information for accountability purposes in Ukraine. The workshop delved into various collection, authentication, and preservation strategies, emphasizing the technical aspects that ensure the integrity of recorded web pages and other digital materials.

Participants first examined existing web archiving practices and their operation on a technical level, then discussed the potential risks to these archives that could threaten their integrity. A significant focus was on the vulnerabilities of storing web archives using traditional archival models. The discussion highlighted how a shift towards more distributed and decentralized models could offer improved long-term resilience and availability, essential for maintaining the integrity of the archives in unpredictable environments.

We thank the following participants for their contributions:

- Mark Graham, from the Internet Archive;

- Ilya Kreymer, from WebRecorder;

- Michael Nelson, from Old Dominion University;

- Nicholas Taylor, expert witness on the Internet Archive Wayback Machine;

- Ed Summers, from the Stanford Libraries;

- And Cade Diehm, from the New Design Congress.

Legal Workshop: Web Archives in the Courtroom

Following the technical discussions, a roundtable of legal experts convened on September 27 to explore the legal dimensions of web archiving practices. This group included lawyers specializing in war crimes and legal professionals experienced with digital evidence. The goal was to identify potential legal vulnerabilities in current archiving practices and determine how such materials could be admitted into evidence in courtrooms, particularly in war crimes and other international criminal proceedings.

The legal experts articulated best practices to ensure that web archive data are preserved, produced, and authenticated in ways that maintain their integrity. This enhances their reliability, utility, and probative value as evidence in a judicial context. The roundtable discussed the characteristics and challenges of various web archiving practices and presented a framework to assess these methods.

We thank the following participants for their contributions:

- Scott Martin, from Global Justice Advisors;

- Melissa Bender, from Ropes and Gray LLC;

- Tim Parker, from Blackstone Barristers;

- Cari Spivack, from the Internet Archive;

- Karolina Aklamitowska, from Tallinn University;

- Clare Stanton, from Harvard Law School;

- Bastiaan van der Laaken, from the UN IIIM Syria.

Next Steps: Call for Contributions on Witness Servers

Finally, to improve on the process of entering web archives into evidence, Starling are formalizing the concept of “Witness Servers” as an additional layer of self-corroboration for web archives. A Witness Server is a service, hosted and run by an institution, which carries out web crawls on-demand on behalf of individuals conducting web archiving activities.

Participating institutions, e.g. the Stanford Libraries, WebRecorder, or the Harvard Library Innovation Lab, extend to the individuals or teams they agree to witness the trust that might be placed in the institutions themselves. The roundtable found that relying on the social trust placed in institutions could particularly strengthen the work of potentially vulnerable investigators and archivists.

Several Witness Servers act in concert on the instruction of a web archivist and simultaneously capture the same web page. This approach addresses the possibility that a web page varies slightly depending on locale (among many other potential anomalies), and it corroborates the contents of a website through replication, validating a web archive from several different locations and actors. To learn more, or to participate as an institution or a researcher, read the Call for Contributions.
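The corroboration step can be pictured as comparing the captures returned by several witnesses and checking whether enough of them agree. A minimal sketch, assuming each Witness Server returns the raw bytes of its capture; the server names, quorum parameter, and corroborate function are hypothetical. Exact hash comparison is deliberately strict: it surfaces locale or other variations as disagreements rather than resolving them, and a practical system would likely need normalization or finer-grained comparison.

```python
import hashlib
from collections import Counter
from typing import Dict, Tuple


def corroborate(captures: Dict[str, bytes], quorum: int) -> Tuple[bool, str, Dict[str, str]]:
    """Compare captures of one page from several witness servers.

    Returns (agreed, majority_hash, per_server_hash), where `agreed` is True
    when at least `quorum` servers produced byte-identical captures.
    """
    per_server = {server: hashlib.sha256(body).hexdigest() for server, body in captures.items()}
    if not per_server:
        return False, "", per_server
    majority_hash, votes = Counter(per_server.values()).most_common(1)[0]
    return votes >= quorum, majority_hash, per_server
```

The per-server hashes also show which witness diverged, which is itself useful evidence when a page is served differently by location.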