Immersive Worlds for Documentary Filmmaking

Contents

Background

Artificial intelligence is increasingly sophisticated in creating, understanding, and interacting in 3D environments. Known as “spatial intelligence,” this emerging field is being pioneered by researchers and engineers around the world. As our own contribution to the field, Starling fellow Fred Grinstein has begun to explore how this use of generative AI might be applied to documentary filmmaking and non-fiction narrative works to engage audiences.

As much as spatial technologies offer a promising future of immersive, informative experiences, they also blur lines between reality and simulation, posing risks to trust in records used in documentary filmmaking, as well as journalism, law, history, and other human rights fields that require verifiable ground truth.

Today’s AI-generated photos and videos, some convincing and others mired in the uncanny valley, share a common trait: the AI generating them has no real understanding of the world. Current generative AI is like a newborn baby: object permanence, cause and effect, and the behaviors of solids and liquids are complete mysteries to it, resulting in the inconsistent and sometimes amusing quirks of some of today’s AI videos seen online.

However, the next generation of more sophisticated AI understanding is already emerging. World models, which aim not just to visually approximate but to physically simulate the real world, are upon us. Whether announced or not, all companies behind today’s big foundational models are working on algorithms that will capitalize on opportunities in this field. These include industry titans like Nvidia and Meta’s Reality Labs, as well as overnight unicorn startups like World Labs. The technology is currently confined to the lab and a few first movers such as Odyssey and Marble, but soon anyone will be able to create utterly convincing 3D worlds from any photo, video, or text prompt as a starting point. When simulated worlds have visual quality and behavioral accuracy that renders them indistinguishable from reality, what will that mean for practitioners of journalism, history, and law?

As Starling Lab researchers, the core value we can offer is no longer the technical excellence of our 3D reconstructions of the world—that bar is collapsing under rapid commoditization—but philosophical, ethical, and epistemic clarity. Our focus must evolve from how beautifully we can reconstruct the world to how reliably we can verify what it represents.

Vital professions like law, journalism, and history are scrambling to understand the implications of artificial intelligence. They’re even less prepared for the emergence of spatial intelligence and related 3D reconstruction techniques using generative AI. These innovations will necessitate new approaches to ensure authenticity. Therefore, we are proposing to create a new center that leverages the most cutting-edge AI technologies to push the conventional boundaries of trust and to give practitioners a roadmap and recipe for bolstering authenticity in these new kinds of media.

Note: Following this project, we were informed that the building containing the murals had been leveled and the location cleaned up. The art is now gone in its physical form, but survives digitally.

Context

Starling Lab, co-founded by Stanford University and the University of Southern California, has an award-winning track record of working on data integrity and cryptographic authentication of digital records. In 2025, Starling Lab undertook a series of experiments exploring Gaussian splat reconstructions of archival media, transforming still and video assets into immersive, explorable 3D environments.

We partnered with documentary filmmaker David France, experimenting with scenes from his seminal story around the AIDS crisis in How to Survive a Plague.

We also dug into the U.S. Library of Congress collection of 1930s and 1940s historical photography of the Great Depression, Dust Bowl, migrant workers, and incarceration of Japanese-Americans during World War II.

At the outset of this project, our emphasis was on the technical R&D of creating Gaussian splat-based immersive experiences from fragile, analog-era materials. At that time, 3D reconstruction required at minimum scores, and usually hundreds or thousands, of technically perfect images. Even with unlimited images, many subjects (finely detailed, translucent, and thin materials such as hair, glass, and tree leaves) were difficult or impossible to reconstruct. For that reason, attempting to create 3D reconstructions from documentary films, or from photos and videos found in historical archives, was a significant technical challenge and area of focus.

A recently popularized technique, Gaussian splatting, made reconstructing those previously difficult subjects possible. Encouraged by initial results, we attempted to push the technique as far as it would go. Given that historical photos often offered only a single image of a scene, we asked the question: How might we 3D reconstruct a historical scene with only a single frame from a documentary film or a single black and white archive photo?

The broader goal has been to evaluate the creative and evidentiary potential of spatially reconstructed archives as a new mode of engagement with verified historical content. Would a 3D reconstruction of a historical event aid in understanding and open up new avenues to explore?

These experiments, which demonstrated that 3D reconstruction of a single historical image was possible, have validated early instincts: that 3D world generation from stills and low-dimensional media would soon become a ubiquitous capability. A year later, this has proven true: Apple, Nvidia, Google, OpenAI, World Labs, Odyssey Systems, and countless open-source and academic projects are now building similar pipelines.

As spatial intelligence opens new opportunities for journalists, lawyers, and historians, there are many unfolding questions about how these fields can authenticate these new AI assets:

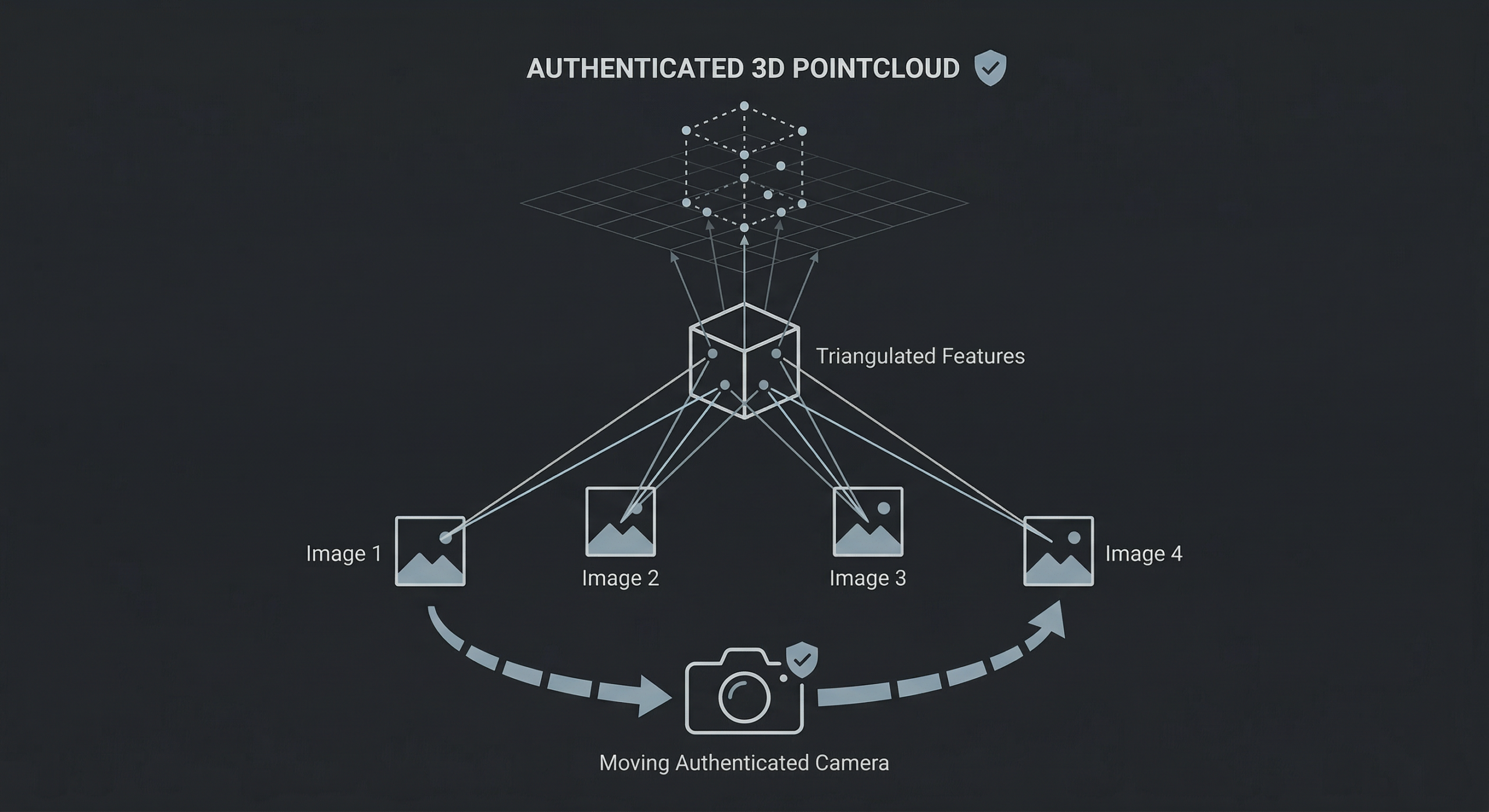

Building Trust in 3D Reconstruction Pipelines: At each step, as 3D creators ingest content and extend scenes with generative AI, trust needs to be built so that the authenticity of both the data and the models is assured.

Spatial Understanding: As 3D scenes use a variety of generative tools, there are opportunities to develop graphs of semantic understanding that can not only improve the fidelity of 3D scenes but also provide classification of objects and concepts in the scene.

User Experience: As 3D models are trusted to create digital twins for simulation or exploration, we need new user interfaces that allow for inspection of both the original captures and the synthetic objects.

Starling Lab fellow Fred Grinstein sought to establish new guidelines and develop and test solutions along the boundaries between authentic and synthetic media in 3D spaces, ensuring the reliability and trustworthiness of digital records.

Framework

The Challenge

When we began the project in 2025, the possibility of creating an immersive environment from a single photo or short clip of video was an open question. Our focus was technical: reading papers and trying out various approaches using AI diffusion models to create 3D environments. Progress was slow and labor-intensive.

Images were fed into video diffusion models in order to synthesize additional images showing more sides of the scene, which could then be fed back into the same models to create even more aspects of the scene until a complete 3D model could be created. The work was time-consuming and error-prone, as slight deviations from image to image would be amplified and thwart the 3D reconstruction.

However, while we were working on this problem, other groups in spatial intelligence were working on it too. With the launch of world model services such as World Labs’ Marble in [month] 2025, we came to the realization that, as Fred Grinstein put it, “Soon anyone will be able to create 3D worlds from anything.”

We realized that we could leave the technology to others and focus instead on philosophical, ethical, and epistemic clarity.

From Reconstruction to Verification

This leads to a renewed articulation of Starling Lab’s mission:

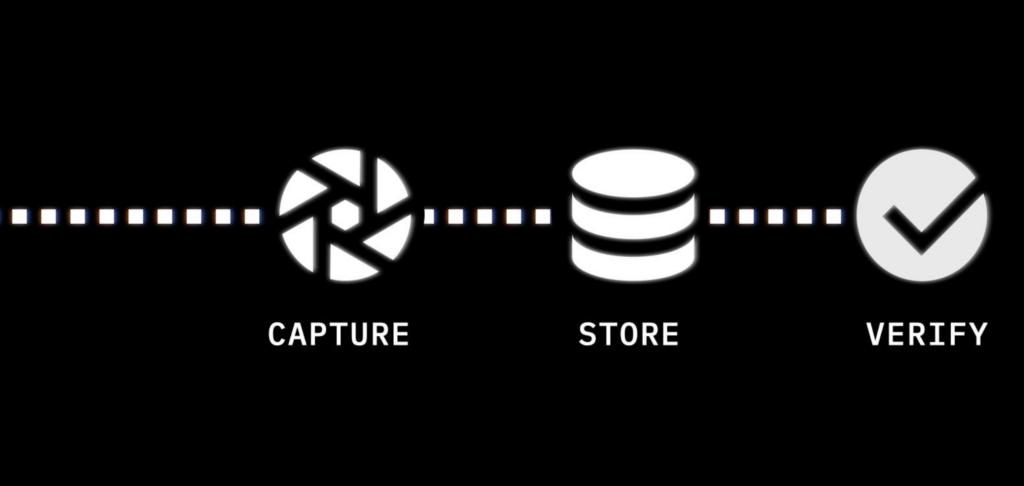

Capture → Store → Verify

Capture

We aim to establish a root of trust in the asset’s authenticity as early in its lifecycle as possible by binding authenticity metadata to an asset as close as possible to the moment it’s created. We promote the creation of stronger, richer digital items in two ways: by systematically hashing and signing digital media to preserve integrity, and by placing assets alongside metadata and provenance markers.
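As a rough illustration of the hashing and signing described above, the following minimal sketch binds a content hash and its metadata together under one signature. It is not Starling Lab's implementation: the function names and record layout are hypothetical, and HMAC with a shared key stands in for the public-key signature a real capture device would use, purely so the example runs on the Python standard library.

```python
import hashlib
import hmac
import json


def _canonical_bytes(payload: dict) -> bytes:
    # Canonical JSON so the same payload always serializes to the same bytes.
    return json.dumps(payload, sort_keys=True, separators=(",", ":")).encode("utf-8")


def make_capture_record(media_bytes: bytes, metadata: dict, signing_key: bytes) -> dict:
    """Bind a content hash and its metadata together under one signature."""
    payload = {
        "sha256": hashlib.sha256(media_bytes).hexdigest(),
        "metadata": metadata,
    }
    # ASSUMPTION: HMAC is a stand-in for a real public-key signature.
    signature = hmac.new(signing_key, _canonical_bytes(payload), hashlib.sha256).hexdigest()
    return {"payload": payload, "signature": signature}


def verify_capture_record(media_bytes: bytes, record: dict, signing_key: bytes) -> bool:
    """Return True only if both the media and its metadata are unchanged."""
    payload = record["payload"]
    if hashlib.sha256(media_bytes).hexdigest() != payload["sha256"]:
        return False  # the media itself was altered
    expected = hmac.new(signing_key, _canonical_bytes(payload), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, record["signature"])


if __name__ == "__main__":
    key = b"demo-key-not-for-production"
    record = make_capture_record(b"raw image bytes", {"device": "example-camera"}, key)
    print(verify_capture_record(b"raw image bytes", record, key))   # True
    print(verify_capture_record(b"edited image bytes", record, key))  # False
```

Signing the hash and the metadata together, rather than separately, means that swapping in different metadata invalidates the record just as editing the pixels does.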

Store

Digital assets decay. Even with the best of intentions, some of humanity’s most vital digital records have been lost due to hardware failure, media obsolescence, human error, malicious attacks, natural disasters, or economic collapse. We address this problem in the storage step by leveraging tamper-evident data structures and constant audits to ensure and demonstrate preservation of integrity over time and to expose tampering. We also redundantly place data in several locations and use distributed and peer-to-peer systems to lower the risks caused by too few critical points of failure.
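One common tamper-evident data structure is a hash chain, in which every entry commits to the one before it. The sketch below is an assumption about how such a log and its audit could look, not a description of the storage systems Starling Lab actually uses; the names `append_entry` and `audit` are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _entry_hash(prev_hash: str, record: dict) -> str:
    body = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(log: list, record: dict) -> None:
    """Append a record whose hash also covers the previous entry's hash."""
    prev_hash = log[-1]["entry_hash"] if log else GENESIS
    log.append({
        "record": record,
        "prev_hash": prev_hash,
        "entry_hash": _entry_hash(prev_hash, record),
    })


def audit(log: list) -> int:
    """Return the index of the first inconsistent entry, or -1 if the chain is intact."""
    prev_hash = GENESIS
    for index, entry in enumerate(log):
        if entry["prev_hash"] != prev_hash:
            return index
        if entry["entry_hash"] != _entry_hash(prev_hash, entry["record"]):
            return index
        prev_hash = entry["entry_hash"]
    return -1


if __name__ == "__main__":
    log = []
    for name in ("photo-001", "photo-002", "photo-003"):
        append_entry(log, {"asset": name})
    print(audit(log))  # -1
    log[1]["record"]["asset"] = "swapped"
    print(audit(log))  # 1
```

A chain like this only exposes tampering if someone holds a trustworthy copy of the latest hash, which is one reason to register it in several independent locations.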

Verify

Starling Lab embraces both the human and technological approaches to verification. We create audit trails akin to those evaluated by real-world notaries who stamp a document and record it in their physical ledger. Our workflows capture the attestations of both experts and others throughout a digital asset’s lifecycle. We surface cryptographic integrity evidence and immutable ledger registrations.

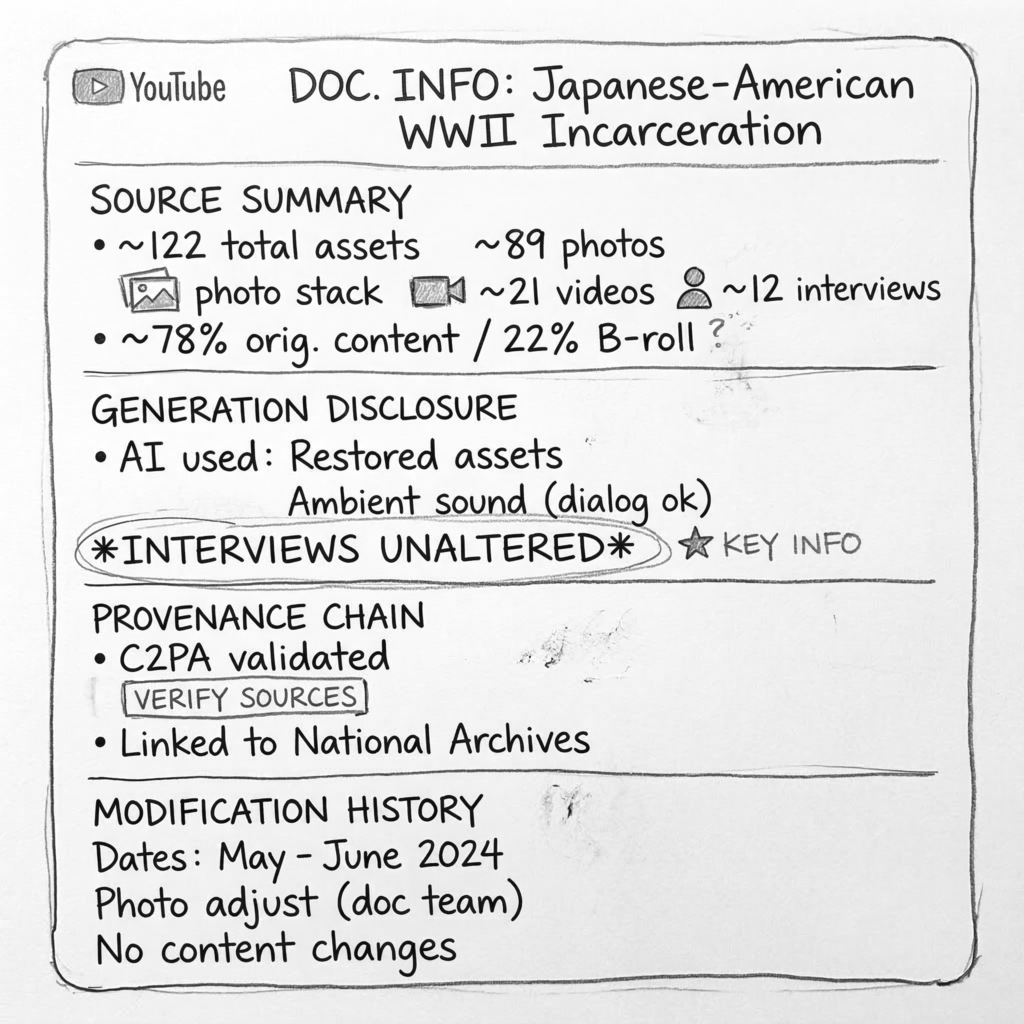

For this project we added an additional step that we refer to as a nutrition label for generative AI outputs.

Nutrition Label

The next phase emphasizes the creation of verification and authentication systems for synthetic or hybrid media—a kind of “nutrition label for digital reality.”

This system would communicate to audiences:

- What data sources a scene or image derives from

- What transformations were applied

- Which layers are verified, synthetic, or uncertain

- How provenance metadata persists through remixing and AI generation
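The four items above could map onto a small data structure. The following is a minimal sketch only: the class names, field names, and the three status values are assumptions made for illustration, not a published Starling Lab or C2PA schema.

```python
import json
from dataclasses import asdict, dataclass, field

STATUSES = ("verified", "synthetic", "uncertain")


@dataclass
class Layer:
    name: str
    status: str  # one of STATUSES

    def __post_init__(self) -> None:
        if self.status not in STATUSES:
            raise ValueError(f"unknown status: {self.status!r}")


@dataclass
class NutritionLabel:
    title: str
    sources: list = field(default_factory=list)          # what the work derives from
    transformations: list = field(default_factory=list)  # ordered processing steps
    layers: list = field(default_factory=list)           # Layer objects
    parents: list = field(default_factory=list)          # labels of remixed inputs

    def status_counts(self) -> dict:
        """Count how many layers fall under each status."""
        counts = {status: 0 for status in STATUSES}
        for layer in self.layers:
            counts[layer.status] += 1
        return counts

    def to_json(self) -> str:
        # asdict() recurses into nested Layer and NutritionLabel objects.
        return json.dumps(asdict(self), indent=2, sort_keys=True)


if __name__ == "__main__":
    photo = NutritionLabel(
        title="archival photo",
        sources=["example-archive/photo-001"],  # hypothetical identifier
        layers=[Layer("original scan", "verified")],
    )
    scene = NutritionLabel(
        title="3D scene",
        sources=["example-archive/photo-001"],
        transformations=["view synthesis (diffusion model)", "Gaussian splat training"],
        layers=[
            Layer("original camera view", "verified"),
            Layer("generated side views", "synthetic"),
            Layer("occluded background", "uncertain"),
        ],
        parents=[photo],
    )
    print(scene.status_counts())
    print(scene.to_json())
```

Nesting the parent labels inside the derived label is one way the provenance of an input could persist through remixing, at the cost of labels that grow with each generation.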

Such a “media ingredient label” could become a cultural expectation, much like nutritional transparency in food labeling—even if most people don’t read it, its presence builds trust.

The Prototype

ComfyUI Prototype

You can buy cameras from Leica, Nikon, Canon, or Sony that are capable of embedding C2PA provenance metadata into the photos they take (whether they allow you to use the feature is another matter). Google Pixel 10 cameras embed C2PA provenance metadata by default, as does OpenAI’s Sora. However, those implementations treat each photo or AI output as a discrete and independent object, and assume that the images won’t be used as ingredients in a larger work combining multiple media types and AI.

Through our work in this project we observed that photos, videos, and diffusion model outputs were often small individual components or ingredients that went into making a larger AI work. We saw a need for end users to be able to know everything that went into making a work – all the photos, videos, AI processes, and other transformations.

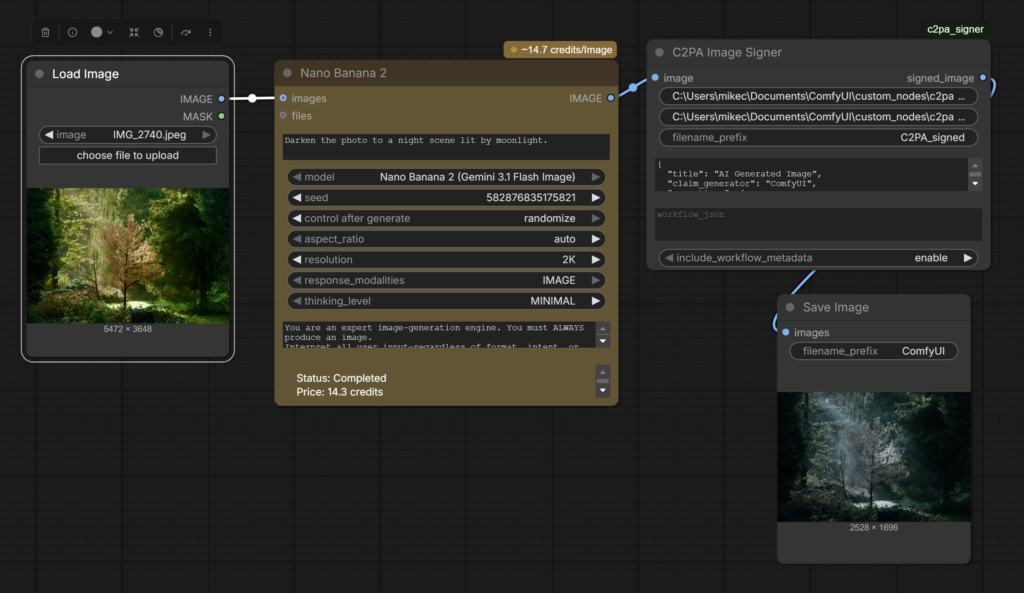

To that end we chose to target ComfyUI, an open source AI authoring suite that can be used for everything from upscaling and editing photos, to composing music, AI video creations, and complex workflows. We designed a prototype for ComfyUI that inserts C2PA credentials into AI creations, with the goal of providing information on everything that went into created media.

Our open-source ComfyUI node records the entire workflow in C2PA metadata, including all transformations, AI processes, the models used, and their specific settings. This not only informs users but also lets them recreate a work by following the embedded recipe, and so verify the process for themselves.
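To make the idea of an embedded recipe concrete, here is a hedged sketch that flattens a node-graph workflow into an ordered list of steps and hashes it. It is not the code of the node described above and it does not call any C2PA library. It assumes a ComfyUI-style export in which each node has a `class_type` and an `inputs` mapping, and in which a link to another node is written as `[source_node_id, output_index]`; the node type names and file names in the example are illustrative.

```python
import hashlib
import json


def is_link(value) -> bool:
    # ASSUMED convention: a link to another node is [source_node_id, output_index].
    return (
        isinstance(value, list)
        and len(value) == 2
        and isinstance(value[0], str)
        and isinstance(value[1], int)
    )


def build_recipe(workflow: dict) -> dict:
    """Summarize each node's type, literal settings, and upstream dependencies."""
    steps = []
    for node_id in sorted(workflow):
        node = workflow[node_id]
        inputs = node["inputs"]
        steps.append({
            "node": node_id,
            "type": node["class_type"],
            "settings": {k: v for k, v in inputs.items() if not is_link(v)},
            "depends_on": sorted({v[0] for v in inputs.values() if is_link(v)}),
        })
    canonical = json.dumps(steps, sort_keys=True, separators=(",", ":"))
    digest = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return {"steps": steps, "recipe_sha256": digest}


if __name__ == "__main__":
    # Illustrative workflow; node types and file names are hypothetical.
    workflow = {
        "1": {"class_type": "LoadImage", "inputs": {"image": "source.png"}},
        "2": {"class_type": "LoadModel", "inputs": {"model_name": "example-model.safetensors"}},
        "3": {"class_type": "Sampler", "inputs": {"model": ["2", 0], "image": ["1", 0], "seed": 42, "steps": 20}},
    }
    print(json.dumps(build_recipe(workflow), indent=2))
```

Because the hash covers every literal setting, changing even the seed produces a different recipe digest, which is what would let a reader check that a re-run followed the same recipe.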

Designing for Authenticity Class

Our fellows and classes at Stanford are working with a unique collection of 35,000 images and videos taken in North Korea. Captured by former AP and National Geographic photojournalist David Guttenfelder (now with The New York Times), these one-of-a-kind visuals document life at all levels in North Korea, from the leadership to people going about their daily lives. This serves as the foundation of an ongoing experiment to create immersive experiences from 2D photos.

These approaches are opening up new opportunities in experiential journalism. We’re also exploring collaborations with world-class journalists to better understand the impacts of runoff from abandoned mines in rural America and answer historical mysteries from the Vietnam War.

A unique opportunity emerged from the materials we’d been authenticating from North Korea, a place that is notorious for propaganda and that Western journalists have not visited for half a decade. We set out to test the limits of 3D reconstruction and see if audiences might be able to “visit” North Korea in virtual reality with as little as a single authenticated photograph. This material was already being presented in a Stanford course called Electrical Engineering 292J, “Designing for Authenticity.”

Designing for Authenticity students applied Starling Lab’s “Capture, Store, Verify” workflow to the unique set of photojournalistic images photographed in North Korea by Guttenfelder. Additionally, students were challenged to attempt to create explorable immersive 3D environments from the photographs using cutting-edge 3D visualization and AI techniques running locally on Nvidia GPU hardware. This often required taking a single photo, combining the information in it with information gathered from news reports and additional images, including satellite imagery, and then using AI to generate the additional visual materials necessary to create a 3D model. In doing so, students explored the tensions and boundaries of using AI and 3D visualization to tell journalistic stories, and proposed approaches for responsibly using these techniques while maintaining authenticity.

Technology

Evaluating Workflows and Algorithms for 3D Reconstruction

As mentioned earlier, it is typically necessary to have hundreds or thousands of images from a wide variety of angles of a scene in order to successfully reconstruct it in 3D with traditional techniques. The historical images we worked with often only had a limited number of views and sometimes only a single image of a given scene. The resolution and quality of the historical images varied greatly, from highly detailed black and white photography from the 1930s and 1940s to low resolution camcorder video filmed in the early 2000s.

- Gaussian Splatting – the most successful technique for creating photorealistic scenes from extremely sparse image sets

- Nvidia InstantSplat – this recently released technique (which also uses Gaussian Splats) showed tremendous promise, able to generate 3D from very sparse sets of images

- Neural Radiance Fields – also successful for reconstruction but less realistic than splats

- Photogrammetry – its high requirement for input images and its inability to render complex organic scenes or handle low-data situations made this technique appropriate only for buildings and other hard-edge scenes with ample input data.

- World Labs’ Marble – an all-in-one, cloud-based, paid reconstruction service built on a world model that creates small Gaussian splat worlds (marbles) from input images and videos.

AI Diffusion Models Evaluated:

- FramePack – this Stanford University-developed software allows Tencent’s open source GenAI video model, Hunyuan, to efficiently run on local Nvidia GPUs. The software was first released as an Alpha within days of the start of the course and quickly became the go-to software for generating additional images from a single source image because of its open-source nature, speed, reliability and controllability.

- Wan2.1 – this Alibaba-developed open source GenAI video model was evaluated but not used because of difficulty controlling output.

- Commercial GenAI models – Google Veo 2 and 3, Runway Gen4, Luma AI Dream Machine, Kling, Higgsfield, OpenAI’s Sora, and many other commercial tools were also evaluated. None of these were successful, for various reasons: some claimed a worldwide copyright in perpetuity to reuse any material submitted; some had opaque content controls that would randomly refuse to work on images deemed inappropriate; some were essentially uncontrollable and could not be used reliably; and for others, the quality of the visuals was cartoony and unrealistic.

Sample Outputs

Due to embedding limitations in this document, it’s best to visit the following samples via the provided web link. Once the experience loads, you can pan around and zoom in, experiencing these rare scenes in 3D—even though we only built them from an authenticated 2D image.

Learnings

These Starling experiments highlight where AI reconstruction meets the limits of historical fidelity:

A lost generation of video: A surprising result of our experimentation in making 3D reconstructions from historical imagery was the extreme challenge of video recorded from the 1980s through the early 2000s. At the outset of the project we began with black and white photos taken by photographers working for the U.S. government’s Farm Security Administration and War Relocation Authority in the 1930s and 1940s. The gelatin silver prints held by the Library of Congress are sharp, high-resolution (as high as 8K), and very information dense. Even Civil War photographs held enough detail to successfully 3D reconstruct using modern techniques. Diffusion models easily interpreted the scenes depicted in one-hundred-year-old photos and assisted us in creating additional viewpoints in order to 3D reconstruct.

Buoyed by these early successes, it seemed that any historical moment of any era captured by a camera could be brought to life in an immersive 3D experience. Surely if we succeeded with some of the oldest photos then more modern eras’ images benefitting from more modern technology would be even easier, we reasoned. We were wrong.

We soon realized not every era would be as easy when we tried to tackle the history of the AIDS crisis as depicted in David France’s seminal “How to Survive a Plague”. With the permission of France, we extracted stills from camcorder and broadcast video from the 1980s through the early 2000s. Video recordings were an attractive target. Lengthy videos gave us a huge selection of moments to choose from, and if the camera operators moved through a scene, that might give us the additional viewpoints we needed to make 3D reconstructions.

However, what we found was that in the video of the 1980s-2000s, standard definition ruled at roughly 720×480 pixels and not very sharp pixels at that. This was only a small fraction of the resolution of the black and white photography we had tried previously. Our attempts to create 3D reconstructions from the footage were stymied by mushy visuals and low resolution. Diffusion models failed to correctly interpret the scenes and had trouble understanding objects in the scene or even correctly identifying humans. We tried upscaling with commercial and open source upscaling tools, but given the lack of visual detail in the source material, file sizes and resolution got bigger, but the underlying information and detail in the scene was not enriched.
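The gap described above can be put in numbers. The short calculation below assumes that “8K” means a 7680×4320 frame, which is an assumption since archival scans are not in a video aspect ratio. It also includes a naive nearest-neighbor upscaler, used here only as a stand-in for the commercial and open source tools we tried, to show how the pixel count can grow while the set of distinct values does not.

```python
def pixel_count(width: int, height: int) -> int:
    return width * height


def upscale_nearest(rows: list, factor: int) -> list:
    """Naive upscaling: repeat every pixel `factor` times in both directions."""
    return [
        [value for value in row for _ in range(factor)]
        for row in rows
        for _ in range(factor)
    ]


if __name__ == "__main__":
    sd_pixels = pixel_count(720, 480)
    scan_pixels = pixel_count(7680, 4320)  # ASSUMPTION: "8K" taken as 7680x4320
    print(sd_pixels)                            # 345600
    print(scan_pixels)                          # 33177600
    print(scan_pixels / sd_pixels)              # 96.0
    print(f"{sd_pixels / scan_pixels:.1%}")     # 1.0%

    tile = [[10, 200], [60, 120]]               # a tiny 2x2 grayscale "image"
    bigger = upscale_nearest(tile, 2)
    print(len(bigger) * len(bigger[0]))         # 16
    print(sorted({v for row in bigger for v in row}))  # [10, 60, 120, 200]
```

Under that assumption, a standard definition frame carries roughly one percent of the pixels of the best archival scans. Learned upscalers do add plausible detail, but that detail is synthesized rather than recovered from the scene.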

Digital devices in the late 2000s would go on to deliver sharp, high-resolution footage, but the mushy analog camcorder footage from the 1980s to the early 2000s is what we dubbed a “lost generation” as far as 3D reconstruction was concerned.

Contextual truth: Reconstruction fidelity ≠ historical authenticity. Creating 3D reconstructions requires many views of a subject. The fewer source images you begin with, the more you need to recreate with AI. In our experiments creating 3D environments from single images, we relied heavily on diffusion models to fill in gaps, meaning that while our 3D environments are statistically probable, they are not necessarily accurate to the real-world scene.

As we were recreating the scene, one question arose: when does an immersive experience based on historical material cease to be informative and become misleading? It seems impossible to pick a precise point at which this threshold is crossed, and it likely differs depending on the person and the intended use of the simulation.

While not ideal, this tradeoff can be acceptable if it is made transparent so that audiences can make informed choices and decisions about what they are seeing. What matters most is the paper trail: knowing the provenance, source lineage, and process metadata.

The pace of technological development regularly outpaces our expectations: From “is it even possible?” to “it’s easy” in a matter of months. When we began this project, we weren’t sure that what we were proposing, creating immersive worlds from single images, was even possible. We focused on the technical, trying to establish the feasibility of doing this and exploring techniques to do so. While we achieved our goal, other groups were working on the same problem. In [date] World Labs released its beta of Marble, an easy-to-use online tool for creating 3D Gaussian splat worlds from a single image.

This becomes the storytelling pivot: not only how we rebuild the past, but how we prove what we’ve rebuilt.

Next Steps

The greatest challenge facing provenance and verification systems isn’t technical—it’s adoption. C2PA and related frameworks have struggled to gain traction because they impose costs on creators without offering commensurate benefits. Verification feels like homework rather than opportunity.

The Starling approach reframes this dynamic by aligning authenticity practices with creators’ existing motivations. The rise of generative AI has created an unexpected catalyst: creators are now deeply concerned about establishing clear ownership claims over their work, both to protect against unauthorized AI training and to defend their intellectual property in an increasingly murky legal landscape.

This concern creates a natural alignment. The same provenance documentation that establishes authenticity for journalistic or historical purposes also creates a defensible copyright trail. When a photographer cryptographically signs their original capture and logs it to an immutable ledger, they’re simultaneously creating evidence of authorship and evidence of authenticity.

Companies operating in adjacent spaces recognize this opportunity. Startups are building infrastructure specifically designed to help creators document and defend their copyright claims through rigorous provenance tracking. While their primary motivation is intellectual property protection rather than journalistic integrity, the underlying technical requirements overlap substantially.

This suggests a strategic opportunity that would benefit copyright holders as well as journalists, historians, and legal professionals: rather than advocating for verification as an ethical obligation, we can embed these practices directly into workflows creators are already motivated to adopt. Provenance becomes not a burden imposed from outside, but a natural byproduct of protecting one’s creative and economic interests.

The implications extend beyond individual creators. Media organizations, archives, and documentary filmmakers all face pressure to demonstrate the authenticity of their holdings—pressure that will only intensify as synthetic media becomes indistinguishable from captured reality. By connecting verification to financial self-interest, we transform provenance from a moral duty into a market advantage.

Emerging Vision: Synthetic Infinity

Generative AI is producing an unprecedented flood of visual content. The economic logic is relentless: synthetic imagery is cheaper, faster, and infinitely customizable compared to captured media. Within a few years, the majority of images encountered online will likely be generated rather than photographed.

This abundance creates a paradox. As synthetic media becomes ubiquitous, authenticated captures become more valuable, not less. In a world where anyone can generate a photorealistic image of any scene, the ability to prove that a particular image represents something that actually happened becomes a scarce and precious commodity.

Starling Lab’s work positions us at exactly this inflection point. Our experiments with 3D reconstruction from archival materials demonstrate both the promise and the peril: the same techniques that allow us to create immersive experiences from historical photographs can also be used to fabricate convincing false realities. The difference lies entirely in the paper trail.

Looking ahead, this project points toward several concrete directions. First, we aim to develop a functional “nutrition label” protocol that can be applied to any piece of media, displaying at a glance what sources it derives from, what transformations have been applied, and what confidence level viewers should assign to different elements. This label would be machine-readable as well as human-readable, allowing automated systems to assess and propagate trust through chains of derivative works.
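One way an automated system might assess a chain of derivative works is to walk back through everything a piece of media derives from and gather the statuses it finds. The sketch below is speculative: the asset table layout, the `derived_from` key, and the rule that a work counts as fully verified only when every ancestor is verified are all assumptions made for illustration, not part of any existing protocol.

```python
def collect_statuses(asset_id: str, assets: dict, _seen=None) -> set:
    """Gather the statuses of an asset and of everything it derives from."""
    seen = set() if _seen is None else _seen
    if asset_id in seen:
        return set()  # already visited; also guards against cycles
    seen.add(asset_id)
    asset = assets[asset_id]
    statuses = {asset["status"]}
    for parent_id in asset.get("derived_from", []):
        statuses |= collect_statuses(parent_id, assets, seen)
    return statuses


def is_fully_verified(asset_id: str, assets: dict) -> bool:
    # ASSUMED rule: one synthetic or uncertain ancestor is enough to flag the work.
    return collect_statuses(asset_id, assets) == {"verified"}


if __name__ == "__main__":
    # Hypothetical derivation chain: photo -> generated views -> 3D scene.
    assets = {
        "photo": {"status": "verified"},
        "views": {"status": "synthetic", "derived_from": ["photo"]},
        "scene": {"status": "synthetic", "derived_from": ["photo", "views"]},
    }
    print(sorted(collect_statuses("scene", assets)))  # ['synthetic', 'verified']
    print(is_fully_verified("photo", assets))         # True
    print(is_fully_verified("scene", assets))         # False
```

Reporting the full set of statuses, rather than a single score, keeps the label descriptive and leaves the judgment about acceptable use to the viewer.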

Second, we see significant potential in partnerships that span the archival, copyright, and provenance-technology sectors. Libraries and museums hold vast collections of authenticated historical materials that could serve as ground truth anchors for 3D reconstructions. Copyright-focused companies are building infrastructure that overlaps with our verification goals. By connecting these ecosystems, we can create network effects that accelerate adoption.

Third, we’re exploring methods to embed provenance tracking directly into creative pipelines, generating “paper trails” in real time as artists and journalists work. Rather than treating verification as a post-hoc audit, this approach makes authenticity documentation an invisible but persistent feature of the creative process itself.

Finally, none of these technical systems will matter without corresponding efforts in media literacy. Audiences need to understand what verification markers mean, how to interpret them, and why they matter. This educational component is essential to building the cultural expectation that authenticated media should be the norm rather than the exception.

Our belief is that with the adoption of authenticity markers, audiences will be able to make informed choices in their consumption of authentic and synthetic media. The tools have changed since we began this work, but the core insight remains: trust is built through transparency, and transparency requires authenticity infrastructure.

Near-Term Actions:

Building on the foundation established through these experiments, we propose the following near-term actions:

Prototype Development: Develop a working prototype of the “nutrition label” system applied to one archival 3D reconstruction. This prototype would demonstrate the full pipeline: authenticated source materials, documented AI transformations, and a user-facing interface that communicates provenance clearly.

Practitioner Guidelines: Produce documentation and guidelines for journalists and documentary filmmakers seeking to incorporate 3D reconstruction into their work responsibly. These guidelines would address questions of disclosure, labeling, and the ethical boundaries of generative enhancement.

Archive

Fred Grinstein

Fred Grinstein is a documentary filmmaker and television executive exploring the boundaries of non-fiction storytelling and AI.