Preserving History in the Age of AI: The Christopher Morris Panama Archive

How the Authenticated Attributes protocol creates an immutable ‘web of context’ to save a generation of photojournalism from being lost to AI or the archive.

Contents

Background

We live in an age of digital excess, yet we are losing the records of our past. Photojournalists collectively amass millions of images, with a single career producing many thousands, but without the support of repositories or preservation systems, this institutional memory is at risk of being lost or dismissed as AI-generated, a phenomenon known as “the Liar’s Dividend”. The sheer volume creates a massive preservation challenge: Adam Silvia, a photography curator at the Library of Congress, notes that the Library is approached by at least one photojournalist offering an archive every week but can acquire only one archive every five years, creating a critical bottleneck.

The rise of generative AI further complicates this reality. As Dr. Manny Ahmed, CEO of OpenOrigins, writes: “If you cannot prove that a piece of media existed before the AI inflection point, you can never again prove that it is real. This effectively drives the value of any archive to zero. Without trust in content, an archive holds no value as a documentation of history”.

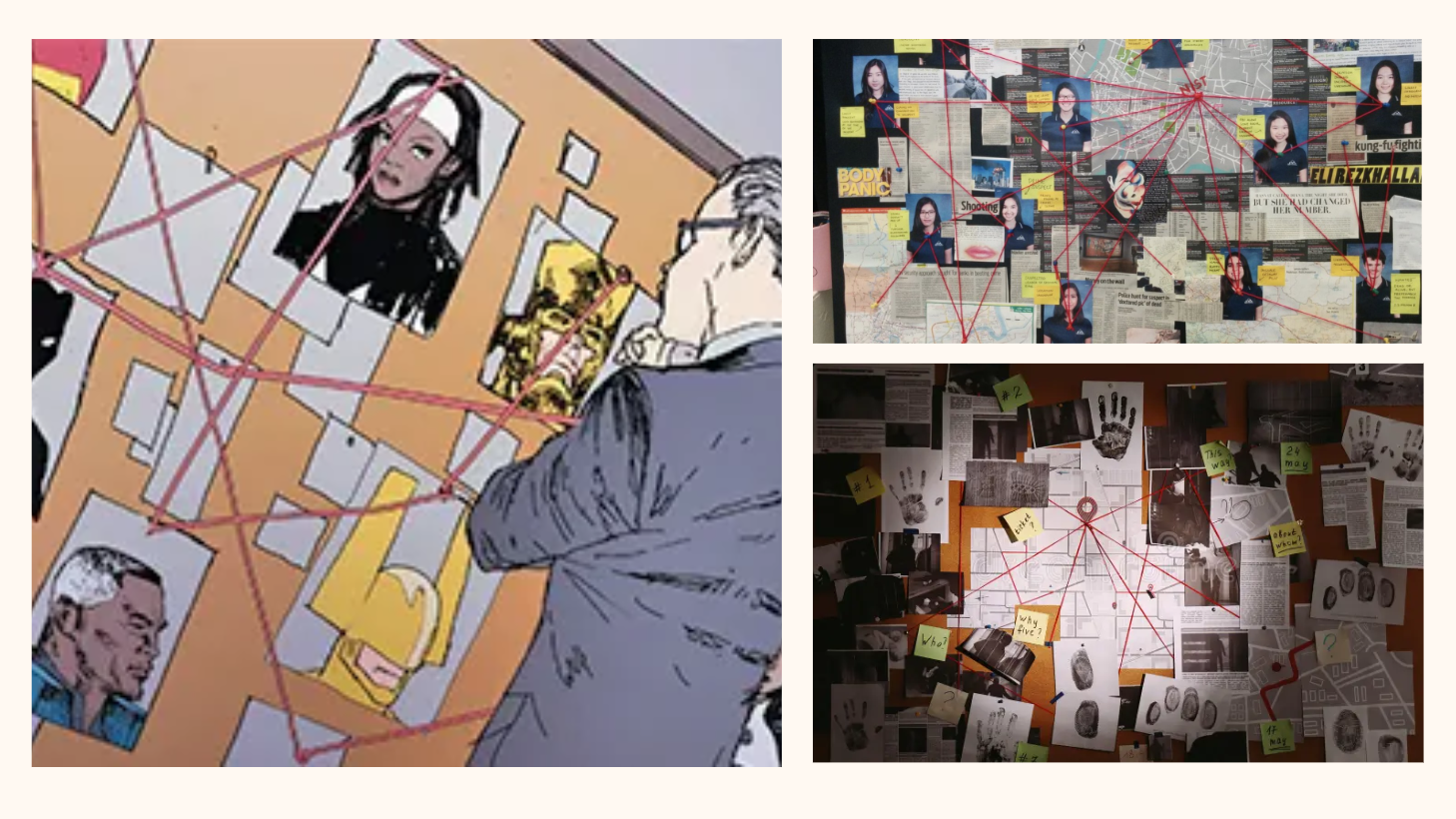

To confront this challenge, Starling Lab is moving beyond the industry standard of verifying single image files. We are experimenting with a Web of Context: a shift from asset integrity to relational integrity. By cryptographically linking an image not just to its own metadata, but to the historical documents, assignment cables, and peer testimonies surrounding it, we create a defensive layer of proof in which the linked assets corroborate one another.

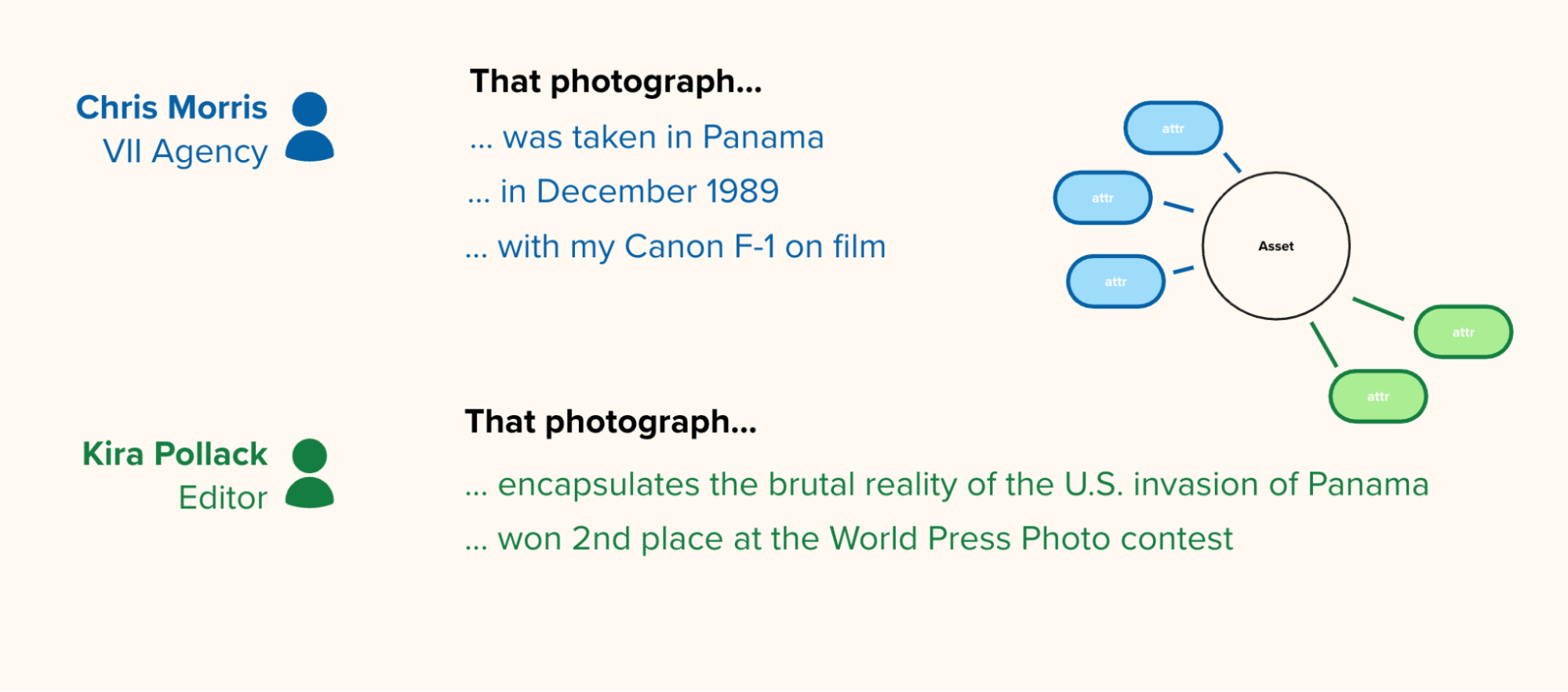

We partnered with two key figures:

- Kira Pollack: Starling Distinguished Fellow and former photo director at Time and Vanity Fair.

- Christopher Morris: An acclaimed conflict photographer whose nearly 700,000 images, taken over a 40-year career, are currently stored in filing cabinets “prepped to be loaded into a U-Haul truck very quickly, within one hour” due to environmental threats like hurricanes.

Below is a short documentary film in which Chris Morris recollects his experience photographing the scenes and places the images in context. Warning: it contains graphic and violent images.

Context

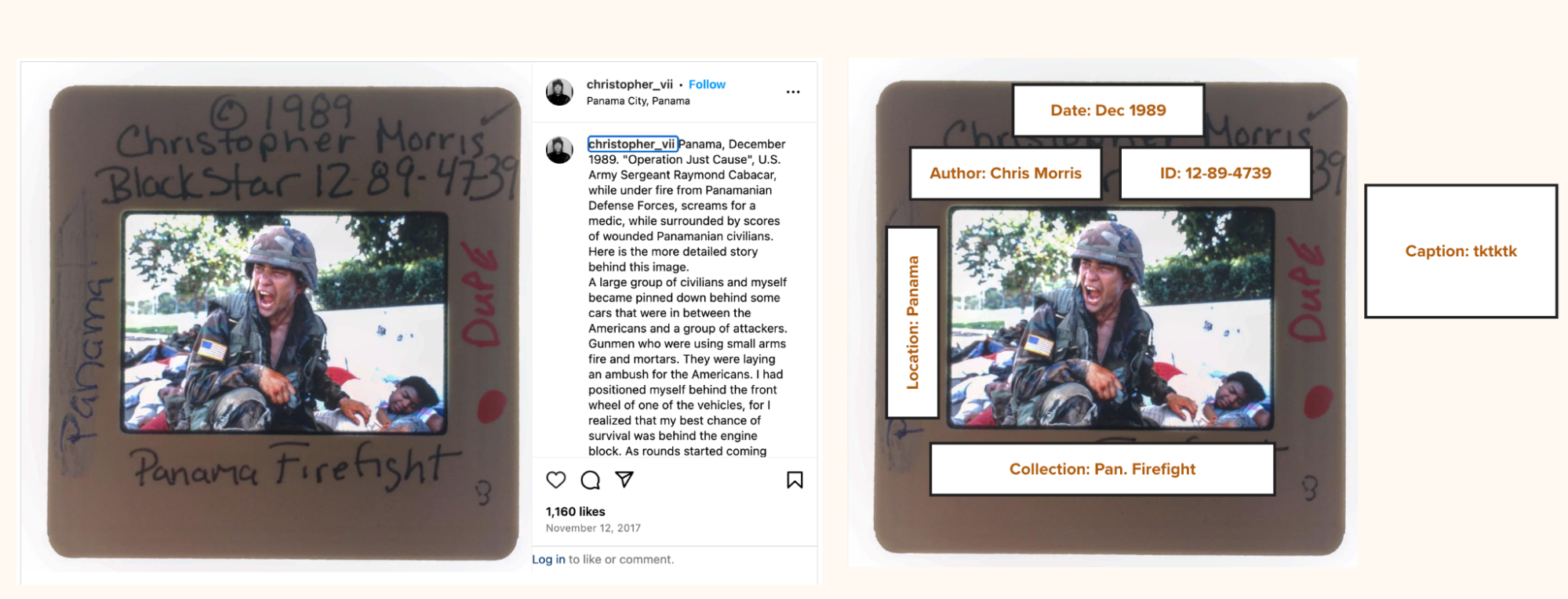

The project focuses on a specific historical event: the 1989 U.S. invasion of Panama. The investigation centers on Morris’s iconic image “Soldier Screaming”, which shows Sergeant Raymond D. Cabacar of the U.S. Army, and on the 1/250th of a second it took to capture.

To prove the image’s authenticity, the scope of the project included digitizing and connecting a vast inventory of assets. Supporting documents for the image detail Sergeant Cabacar’s heroic actions on the day of the firefight (Dec. 22, 1989), where he saved a woman and two children while under heavy enemy fire – actions for which he was approved for a Bronze Star with Valor.

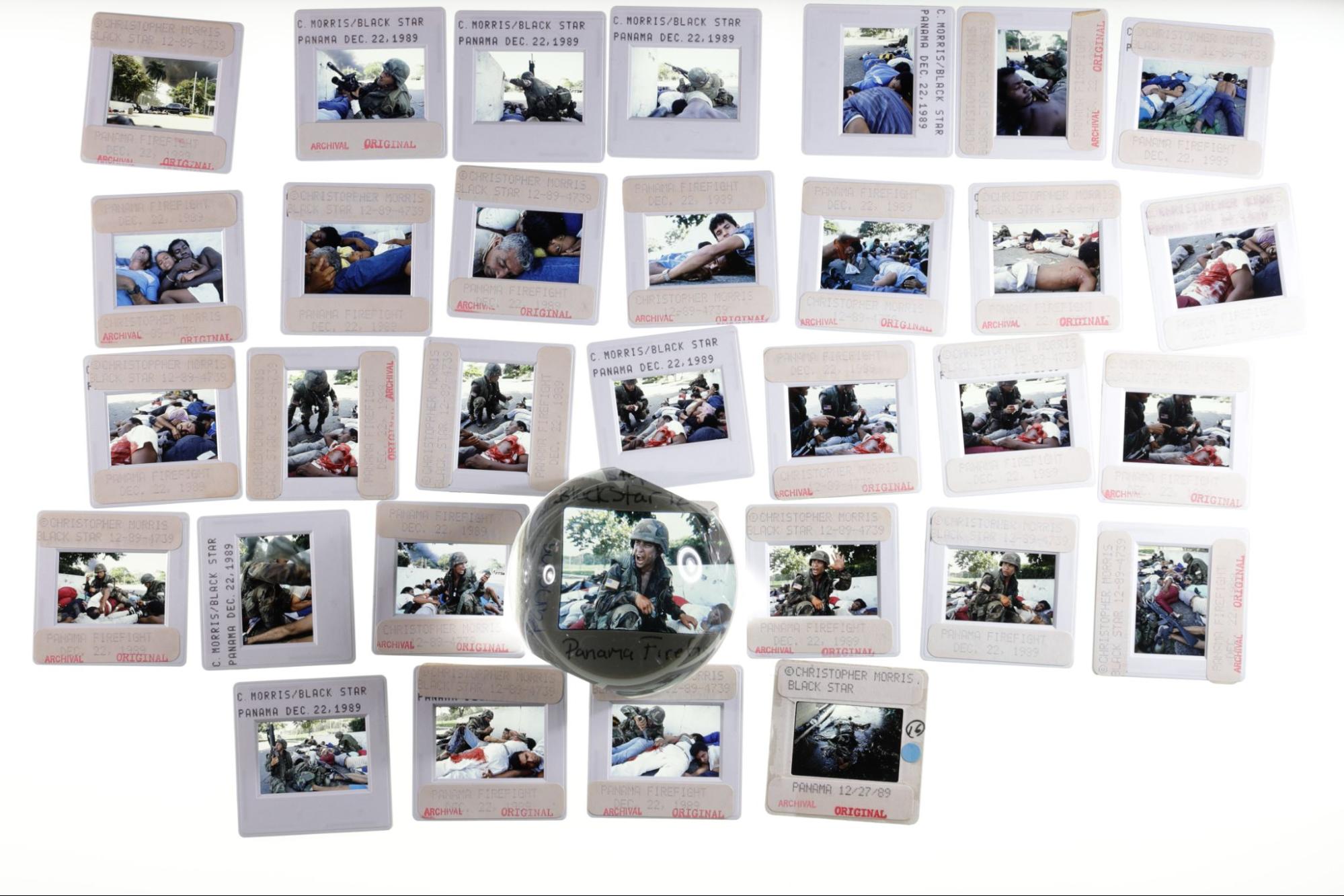

By moving beyond the single frame, the project captures the broader narrative. Specifically, the archive connects 131 Ektachrome stills, audio testimony of Morris describing sequences, related articles like Time magazine scans, and photos of the tools of his trade, such as his camera, passport stamps, and radio scanners.

Framework

The Challenge

In the realm of digital provenance, the industry has largely focused on verifying single assets: proving that a specific photograph was captured by a specific device at a specific time. While this is a critical first step, historical archives present a much more complex challenge: the problem of context.

An isolated photograph, even if cryptographically signed, is vulnerable to misattribution. If a bad actor strips a photo’s caption or falsely claims an image of the 1989 Panama invasion actually depicts a different conflict entirely, a simple digital signature on the pixels cannot defend the narrative. The truth of a photograph relies on the “web of context” surrounding it: the contact sheets showing the frames before and after, the press passes proving the photographer’s access, and the audio testimony of the photographer themselves.

The challenge for Starling Lab was twofold:

- Preservation: How do we transition a highly vulnerable, analog archive (currently sitting in filing cabinets susceptible to natural disasters) into a decentralized, immutable digital format before it is lost?

- Contextual Verification: How might we cryptographically bind a hero image (like “Soldier Screaming”) to its supporting evidence, ensuring that the relationships between these assets cannot be severed or manipulated by malicious actors or generative AI in the future?

We needed to build a system that didn’t just store files, but securely preserved the complex, relational truth of the photographer’s experience.

The Prototype

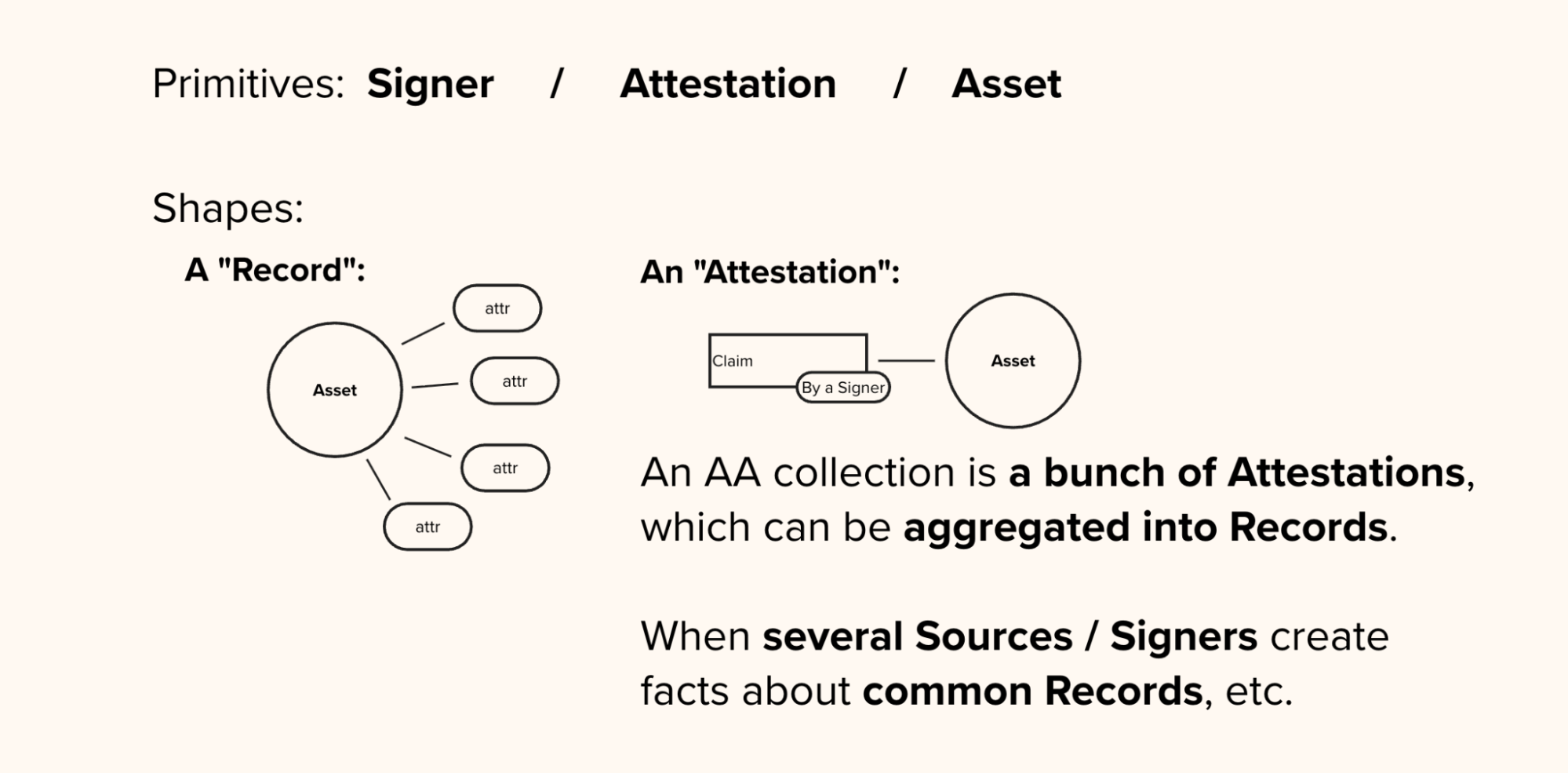

To solve the challenge of contextual verification, Starling Lab moved beyond single-file authentication to develop the Authenticated Attributes (AA) protocol and an accompanying web interface.

A “Web of Context” Concept

Instead of a linear article or a traditional folder of files, the prototype is a dynamic, “guided graph” explorer. It addresses the “Liar’s Dividend” by proving not just that a photo was taken, but how it relates to a supporting body of evidence.

Using the AA protocol, we cryptographically bound the hero image “Soldier Screaming” to its “parent” contact sheet, Christopher Morris’s audio testimony, and his scanned press credentials. If a bad actor attempts to strip the metadata or isolate the image to change its narrative, the cryptographic bond is broken, immediately signaling that the context has been tampered with.
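As a rough illustration of why isolating an image breaks the bond, the sketch below records each relationship as a pair of content hashes and then hashes the whole set of relationships. It is not the AA protocol itself: the function names, the placeholder bytes, and the "describes" relation are assumptions made for this example, and signing is left out entirely.

```python
import hashlib
import json


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as a hex string."""
    return hashlib.sha256(data).hexdigest()


def bind(subject: bytes, relation: str, obj: bytes) -> dict:
    """Record a relationship between two assets by their content hashes."""
    return {
        "subject": sha256_hex(subject),
        "relation": relation,
        "object": sha256_hex(obj),
    }


def context_digest(bindings: list) -> str:
    """Hash the full set of bindings in a canonical (sorted) JSON form."""
    ordered = sorted(
        bindings, key=lambda b: (b["subject"], b["relation"], b["object"])
    )
    canonical = json.dumps(ordered, sort_keys=True, separators=(",", ":"))
    return sha256_hex(canonical.encode("utf-8"))


# Placeholder bytes stand in for the real scans and recordings.
hero = b"soldier-screaming scan"
contact_sheet = b"contact sheet scan"
testimony = b"audio testimony"

bindings = [
    bind(contact_sheet, "is a contact sheet of", hero),
    bind(testimony, "describes", hero),  # hypothetical relation name
]
sealed = context_digest(bindings)

# Dropping one relationship (isolating the image) changes the digest.
stripped = context_digest(bindings[:1])
print(sealed != stripped)
```

Because the digest covers every relationship at once, removing or rewriting any single link yields a different value from the one that was originally sealed.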

Behind the scenes, the prototype is powered by a custom ingestion pipeline that moves away from traditional, editable databases. Instead, every piece of metadata (an “attribute”), such as “is a contact sheet of” or “was captured by”, is individually signed and recorded on an append-only cryptographic ledger. This creates an immutable, verifiable history of the archive’s creation. (A detailed breakdown of the cryptographic and storage infrastructure is available in the Technology section below).

A “Traffic Light” System for Trust

The front end of the prototype renders this complex data graph for a non-technical audience. It balances open exploration with a guided narrative.

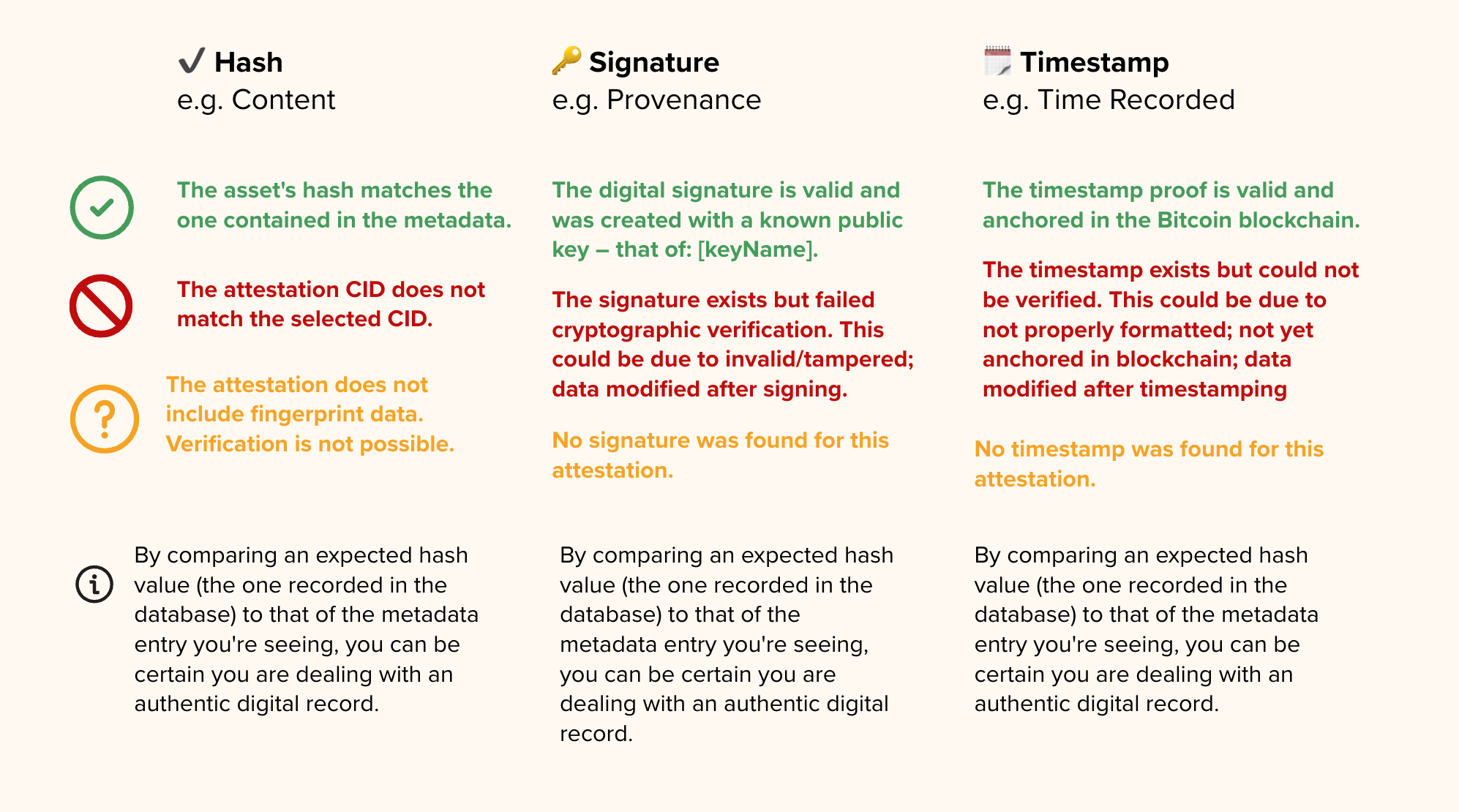

To make the cryptography intuitive, we implemented a “traffic light” verification system that evaluates Integrity (has the file changed?), Identity (who signed it?), and Time (when was it secured?).
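One way to picture how three checks might collapse into a single signal is sketched below. The policy here (red on any integrity failure, green only when all three checks pass, yellow otherwise) is an assumption for illustration; the prototype's actual rules and names may differ.

```python
from dataclasses import dataclass
from enum import Enum


class Light(Enum):
    GREEN = "green"
    YELLOW = "yellow"
    RED = "red"


@dataclass(frozen=True)
class Checks:
    integrity_ok: bool  # recomputed hash matches the recorded hash
    identity_ok: bool   # signature verifies against a recognized key
    time_ok: bool       # a timestamp proof exists and verifies


def traffic_light(checks: Checks) -> Light:
    """Collapse three checks into one signal (hypothetical policy)."""
    if not checks.integrity_ok:
        return Light.RED  # altered content is never trusted
    if checks.identity_ok and checks.time_ok:
        return Light.GREEN
    return Light.YELLOW  # intact file, but signer or time is unproven


print(traffic_light(Checks(True, True, False)).value)
```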

Technology

To establish a resilient and verifiable digital archive, the project relied on a custom technology stack that moves away from centralized, easily manipulated systems in favor of cryptography and decentralized networks. We organize this pipeline into three distinct phases: Capture, Store, and Verify.

Capture: Digitizing the Analog Record

The first step in preserving Christopher Morris’s physical archive involved bridging the gap to the digital realm without losing the chain of custody. The team digitized 131 Ektachrome still images, along with supporting analog assets like contact sheets, passport stamps, and newly recorded audio testimony.

As these analog records were digitized, they were immediately processed through a custom command-line tool known as the starling-integrity-cli. At the exact moment of ingestion, this interface generated a SHA-256 cryptographic hash for each file. This hash acts as a unique digital fingerprint, ensuring that if even a single pixel or audio byte is altered in the future, the hash will completely change and instantly flag the file as tampered.
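The fingerprinting step can be sketched with Python's standard library. This is a minimal illustration of SHA-256 hashing, not the starling-integrity-cli's own code; the `fingerprint` helper and the placeholder bytes are assumptions made for the example.

```python
import hashlib
import io


def fingerprint(stream, chunk_size: int = 1 << 20) -> str:
    """Return the SHA-256 hex digest of a binary stream, read in chunks."""
    digest = hashlib.sha256()
    while chunk := stream.read(chunk_size):
        digest.update(chunk)
    return digest.hexdigest()


original = bytes(range(256)) * 4  # placeholder for a scanned file
tampered = bytearray(original)
tampered[10] ^= 0x01              # flip a single bit

recorded = fingerprint(io.BytesIO(original))

# Re-hashing untouched bytes reproduces the recorded fingerprint.
print(fingerprint(io.BytesIO(original)) == recorded)
# Re-hashing the altered bytes does not.
print(fingerprint(io.BytesIO(bytes(tampered))) == recorded)
```

Reading in chunks keeps memory use flat, which matters for large TIFF scans and audio files.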

The output of a successful ingestion captures this fingerprint alongside the file’s essential provenance metadata in a single atomic record:

    {
      "file_name": "panama_001.tif",
      "media_type": "image/tiff",
      "file_size": 47382016,
      "sha256": "a3f4e1...c9d2b8",
      "blake3": "9e2c47...f1a034",
      "md5": "d41d8c...98f00b",
      "time_created": "1989-12-22T06:14:00Z",
      "last_modified": "1989-12-22T06:14:00Z",
      "asset_origin_id": "/panama-archive/ektachrome/panama_001.tif",
      "asset_origin_type": ["folder"],
      "project_id": "christopher-morris-panama"
    }

Store: Decentralized Preservation

Historical archives are highly susceptible to “link rot” (when a web page or file simply disappears) and to single points of failure (such as a centralized server crashing or being hacked). To guarantee the archive’s longevity, the project used decentralized storage networks. Instead of storing files at a specific location or URL, which can easily break, the assets are sharded and stored on the InterPlanetary File System (IPFS) using Pinata. IPFS uses content addressing, which means files are located by their cryptographic hash rather than by where they are hosted.
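The idea of content addressing can be shown with a toy in-memory store. This is only a sketch under simplifying assumptions: real IPFS addresses are CIDs built from chunked data rather than a bare SHA-256 hex string, and the `ContentStore` class is hypothetical.

```python
import hashlib


class ContentStore:
    """Toy content-addressed store: the key is derived from the bytes."""

    def __init__(self) -> None:
        self._blocks = {}

    def put(self, data: bytes) -> str:
        address = hashlib.sha256(data).hexdigest()
        self._blocks[address] = data  # identical content maps to one entry
        return address

    def get(self, address: str) -> bytes:
        data = self._blocks[address]
        # Any host can serve the block; the reader re-hashes to check it.
        if hashlib.sha256(data).hexdigest() != address:
            raise ValueError("content does not match its address")
        return data


store = ContentStore()
address = store.put(b"placeholder scan bytes")
print(store.get(address) == b"placeholder scan bytes")
```

Because the address is computed from the content, it stays valid wherever the bytes are hosted, and a reader can detect a swapped or corrupted file without trusting the host.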

For resilient, long-term archiving, this data is backed up on Filecoin, a decentralized network that uses cryptographic proofs to guarantee that storage providers are securely holding the data over time.

Finally, the root hash of each asset is registered on public, energy-efficient blockchains, such as Avalanche and Near, via the Numbers Protocol to create a permanent, public receipt of the file’s existence.

Each successful registration is itself logged back into the Authenticated Attributes database as a signed registrations entry, closing the loop between the file, its metadata, and its public receipt:

    {
      "chain": "numbers",
      "attrs": ["media_type", "sha256", "time_created"],
      "data": {
        "txHash": "0x78d30a...8f968d625",
        "assetCid": "bafybeibqzv26nf3i5lz...",
        "assetTreeCid": "bafkreigeszf54jrgvlt...",
        "order_id": "4dd7cd58-94f6-4aaa-8f24-b10bd41235d5"
      }
    }

Verify: Establishing the Chain of Trust

The verification phase is where the “Web of Context” is cryptographically sealed, allowing anyone to independently audit the archive’s integrity. The archive’s metadata (which provides the critical context defining how the photos, audio, and documents relate to each other) is stored using Hyperbee. Unlike traditional SQL databases, Hyperbee is a peer-to-peer, append-only B-tree database where historical entries cannot be overwritten; any change is added as a new, visible entry to create a transparent audit trail. Every attribute added to this database is signed using Ed25519 digital signatures via the Noble Crypto library, firmly binding the metadata to the recognized identity of the photographer or archiving institution.
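The append-only behavior described above can be approximated with a small hash-chained log. This is a conceptual sketch, not Hyperbee's API or data structure: the `AppendOnlyLog` class is hypothetical, hash chaining stands in for Hyperbee's own verification machinery, and Ed25519 signing is omitted because it is not available in Python's standard library.

```python
import hashlib
import json
from typing import Optional

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(body: dict) -> str:
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class AppendOnlyLog:
    """Toy append-only log: entries are added, never edited in place."""

    def __init__(self) -> None:
        self._entries = []

    def append(self, key: str, value: str) -> dict:
        prev = self._entries[-1]["hash"] if self._entries else GENESIS
        body = {"seq": len(self._entries), "key": key, "value": value, "prev": prev}
        entry = {**body, "hash": _entry_hash(body)}
        self._entries.append(entry)
        return entry

    def latest(self, key: str) -> Optional[str]:
        """Return the most recent value for key, or None if absent."""
        for entry in reversed(self._entries):
            if entry["key"] == key:
                return entry["value"]
        return None

    def history(self, key: str) -> list:
        """Return every value ever recorded for key, oldest first."""
        return [e["value"] for e in self._entries if e["key"] == key]

    def verify(self) -> bool:
        """Recompute the chain; any edited entry breaks it."""
        prev = GENESIS
        for seq, entry in enumerate(self._entries):
            body = {k: entry[k] for k in ("seq", "key", "value", "prev")}
            if entry["seq"] != seq or entry["prev"] != prev:
                return False
            if _entry_hash(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = AppendOnlyLog()
log.append("caption", "first draft")
log.append("caption", "corrected caption")
print(log.history("caption"))
```

A correction shows up as a second entry alongside the first, so the audit trail keeps both the old and the new value.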

Each piece of metadata – called an attribute – is stored not as a simple key-value pair in a traditional database, but as a full attestation record. Below is a simplified example of what the time_created attribute looks like for a digitized Ektachrome still:

    {
      "attestation": {
        "CID": "bafkrei...hxfq",
        "attribute": "time_created",
        "value": "1989-12-22T06:14:00Z",
        "timestamp": "2024-11-03T09:22:14Z",
        "encrypted": false
      },
      "signature": {
        "pubKey": <ed25519 public key bytes>,
        "sig": <signature over the attestation>,
        "msg": <CID of the signed content>
      },
      "timestamp": {
        "ots": {
          "proof": <OpenTimestamps proof bytes>,
          "upgraded": true,
          "msg": "Bitcoin block #868241 anchors this data"
        }
      }
    }

To prove exactly when the files and their context were secured, the system uses OpenTimestamps. This protocol bundles the cryptographic hashes and anchors them to the Bitcoin blockchain, providing mathematical proof that the data existed at a specific point in time and has not been backdated. Finally, to ensure this verification data travels with the files beyond a dedicated visualizer, the pipeline injects Coalition for Content Provenance and Authenticity (C2PA) manifests directly into the media files. This embeds the cryptographic history into the file itself, allowing it to be verified by external platforms and tools like Adobe’s Content Credentials viewer.
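The bundling of many hashes into one anchored value, as described for timestamping above, is commonly done with a Merkle tree. The sketch below shows the general technique only; it does not reproduce the OpenTimestamps proof format, and the helper names and the duplicate-last-node padding rule are simplifying assumptions.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_root(leaves: list) -> bytes:
    """Fold a non-empty list of leaf digests into a single root digest."""
    if not leaves:
        raise ValueError("need at least one leaf")
    level = list(leaves)
    while len(level) > 1:
        if len(level) % 2:  # odd count: pair the last node with itself
            level.append(level[-1])
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


def merkle_proof(leaves: list, index: int) -> list:
    """Return (sibling, sibling_is_on_right) pairs from leaf up to root."""
    proof = []
    level = list(leaves)
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])
        sibling = index ^ 1
        proof.append((level[sibling], sibling > index))
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return proof


def verify(leaf: bytes, proof: list, root: bytes) -> bool:
    """Rebuild the path from one leaf and compare it with the root."""
    node = leaf
    for sibling, sibling_on_right in proof:
        node = h(node + sibling) if sibling_on_right else h(sibling + node)
    return node == root


# Placeholder digests stand in for the hashes of archive files.
leaves = [h(f"asset-{i}".encode("utf-8")) for i in range(5)]
root = merkle_root(leaves)  # only this value would need anchoring
print(verify(leaves[2], merkle_proof(leaves, 2), root))
```

Only the root needs to be anchored on a blockchain, yet each file can later be checked against it using a short proof instead of the full set of hashes.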

Learnings

UX is the Bridge to Trust: Cryptographic proofs are only as effective as they are legible. The success of the Panama Archive relied on the “Traffic Light” visualization, which translates complex hashes into intuitive trust signals for non-technical researchers and historians.

The Power of Relational Knowledge: Standard flat databases fail to capture the nuance of journalistic context. We found that representing “historical truth” requires graph-like knowledge structures to map the complex relationships between photos, telegrams, and witness testimonies.

Solving for Computational Scale: While the prototype successfully secured a curated selection, the next milestone is automation. Future work must focus on anchoring millions of legacy assets to the blockchain without compromising the manual oversight required for historical accuracy.

Standardizing Metadata: The project highlighted a critical need for flexible, interoperable metadata schemas. For an archive to be truly “future-proof,” its technical structure must remain compatible with evolving web standards and emerging AI-detection tools.

Archive

- “Photos are disappearing, one archive at a time” opinion essay from Kira Pollack in the Washington Post

- Kira Pollack’s profile by World Press Photo

- VII Foundation’s interview with Kira Pollack and Chris Morris: “Expanding the Archive”