Authenticated Camera Capture

Capture

Authenticated Camera Capture establishes a root of trust at the absolute moment of creation by embedding cryptographically signed metadata directly into media files. Spearheaded by the C2PA standard and major manufacturers like Leica, Sony, and Nikon, these prototypes shift the verification paradigm from reactive deepfake detection to affirmative proof of origin.

They rely on hardware-backed secure enclaves to sign images with private keys, ensuring that every photo or video carries a tamper-evident record – a “birth certificate” – that traces back to the original sensor and time of capture.

YEAR

2020-26

PARTNERS

Canon Cameras

Reuters

Adobe (Content Authenticity Initiative)

Leica Cameras

The Problem

Photographs circulate globally, often years after capture, stripped of metadata and decontextualized. Viewers are left unsure of who captured an image, or when and where events occurred. This vulnerability is exploited by bad actors using AI to manipulate content, giving rise to the “Liar’s Dividend” where even authentic evidence can be dismissed as a deepfake.

Furthermore, existing “companion device” workflows (pairing a camera to a smartphone) often suffer from field challenges like Wi-Fi connection issues, battery drain, and the technical complexity of managing multiple devices in high-pressure conflict zones.

Finally, a core challenge in authenticating news media is accounting for the reality of permissible edits. In photojournalism, editing a raw file is not inherently deceptive; it is a necessary step in the editorial process. Photo editors routinely crop images to fit specific publication layouts, adjust exposure or color balance to ensure visual clarity, and append critical contextual metadata such as captions, location data, and copyright credits. While these routine, ethical adjustments do not alter the factual truth of the scene, they inherently change the digital fingerprint of the file.

CASE STUDIES

– Canon-Reuters Collaboration on Preserving Trust in Photojournalism

LINKS

– The Canon-Reuters prototype POC

– Petapixel: Canon and Reuters develop new photo authentication technology

– On offline benefits: our submission to the UN Special Rapporteur on Human Rights Defenders

The Solution

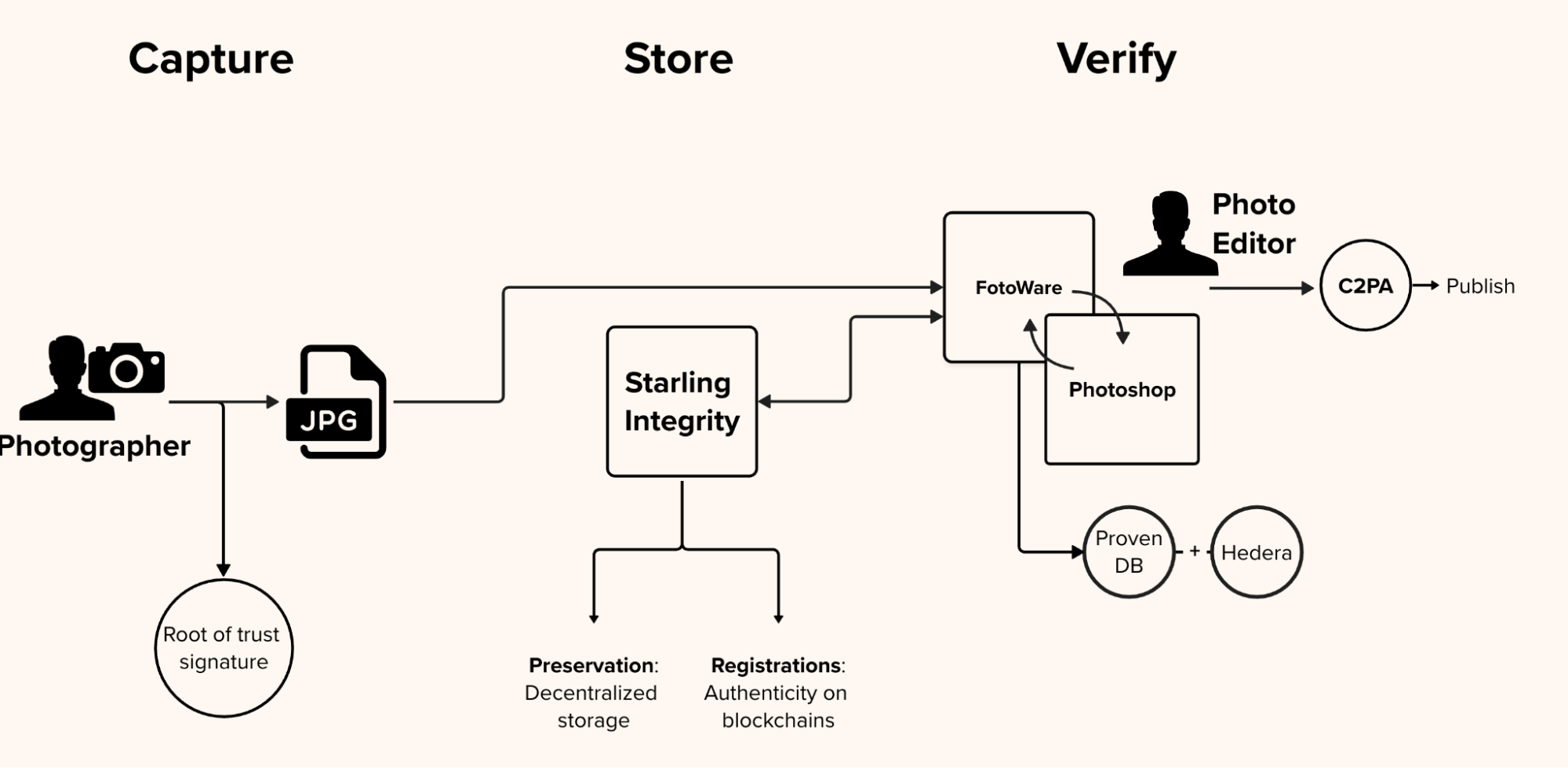

We experimented with several authenticated camera-centric workflows that enabled professional cameras to natively generate and sign C2PA manifests upon capture. By integrating signing keys into hardware-based secure enclaves (Trusted Platform Modules), the system ensures that private keys cannot be extracted or cloned, establishing a permanent “root-of-trust” within the device’s silicon.

From lens to a reader’s screen, this “glass-to-glass” chain of custody, pioneered with specialized firmware for Canon devices, injects rich, signed metadata—including server-acquired timestamps and GPS coordinates—directly into JPEG files. This ensures that every asset carries its own proof of integrity, allowing audiences to audit the steps taken from the initial shutter click to publication through standard inspection tools.

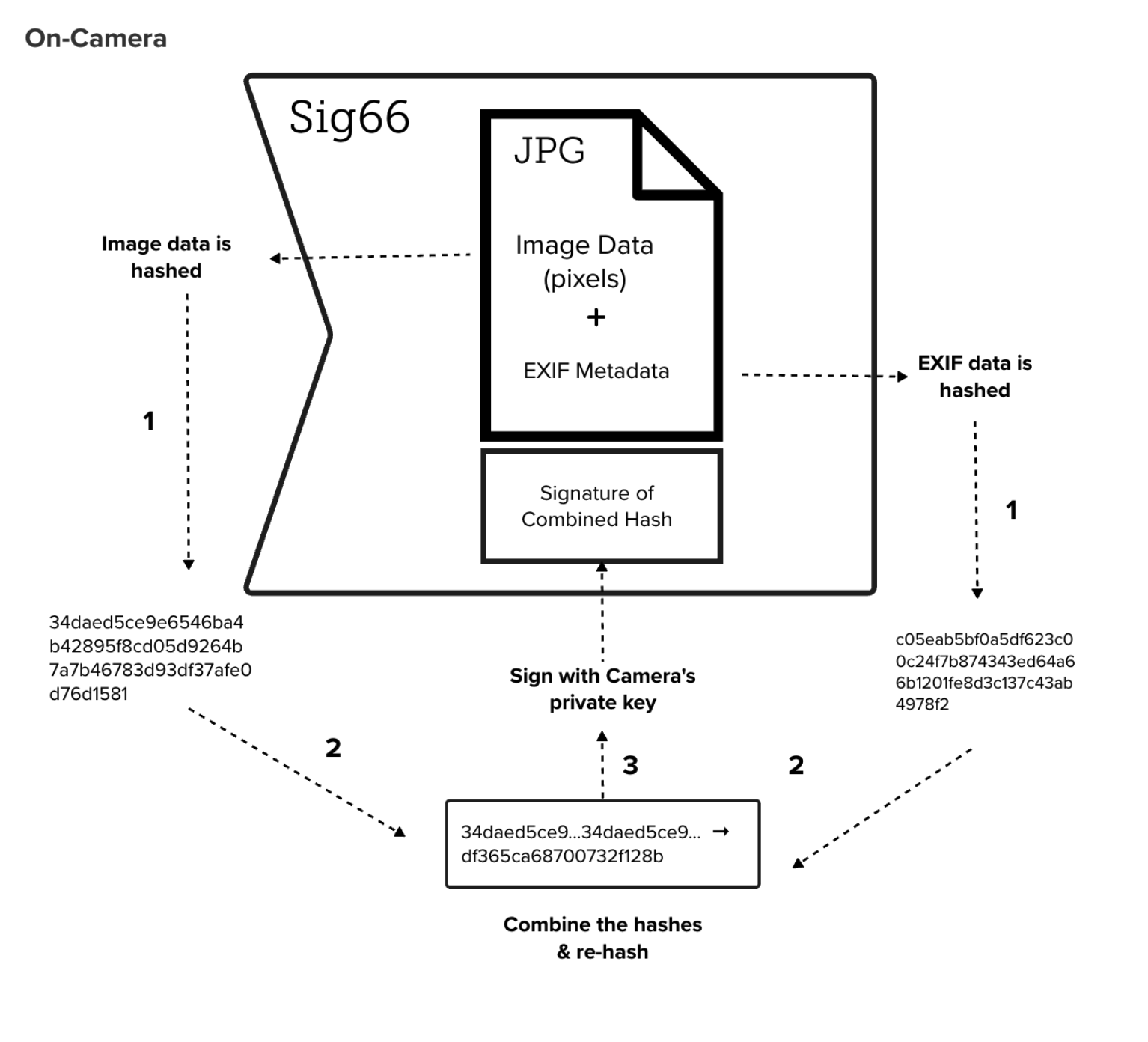

The on-camera process. The firmware computes a combination hash of image pixels and EXIF metadata, signs it with a unique factory-programmed private key, and appends the signature to the JPEG data.
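The on-camera step described above can be illustrated with a minimal sketch. This is not the Canon firmware. It assumes SHA-256 for the combined hash and, because the Python standard library has no asymmetric signing, it uses an HMAC with a made-up `DEVICE_KEY` as a stand-in for the factory-programmed private key. Appending the signature to the JPEG container is left out.

```python
import hashlib
import hmac

# Hypothetical stand-in for the factory-programmed private key. A real camera
# would use an asymmetric key held in secure hardware, not a shared secret.
DEVICE_KEY = b"example-device-key"


def combined_digest(pixels: bytes, exif: bytes) -> bytes:
    """Hash pixels and EXIF together, length-prefixing each part so bytes
    cannot be moved from one field to the other without changing the hash."""
    h = hashlib.sha256()
    for part in (pixels, exif):
        h.update(len(part).to_bytes(8, "big"))
        h.update(part)
    return h.digest()


def sign_capture(pixels: bytes, exif: bytes) -> bytes:
    """Return a keyed tag over the combined digest (HMAC as a signature stand-in)."""
    return hmac.new(DEVICE_KEY, combined_digest(pixels, exif), hashlib.sha256).digest()


def verify_capture(pixels: bytes, exif: bytes, signature: bytes) -> bool:
    """Recompute the tag and compare it in constant time."""
    return hmac.compare_digest(sign_capture(pixels, exif), signature)


pixels = b"\x00\x01\x02 raw sensor data"
exif = b"Make=Example;DateTime=2022:01:01 12:00:00"
signature = sign_capture(pixels, exif)
```

Changing either the pixels or the EXIF bytes after signing makes `verify_capture` return `False`, which is the tamper-evidence property the caption describes.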

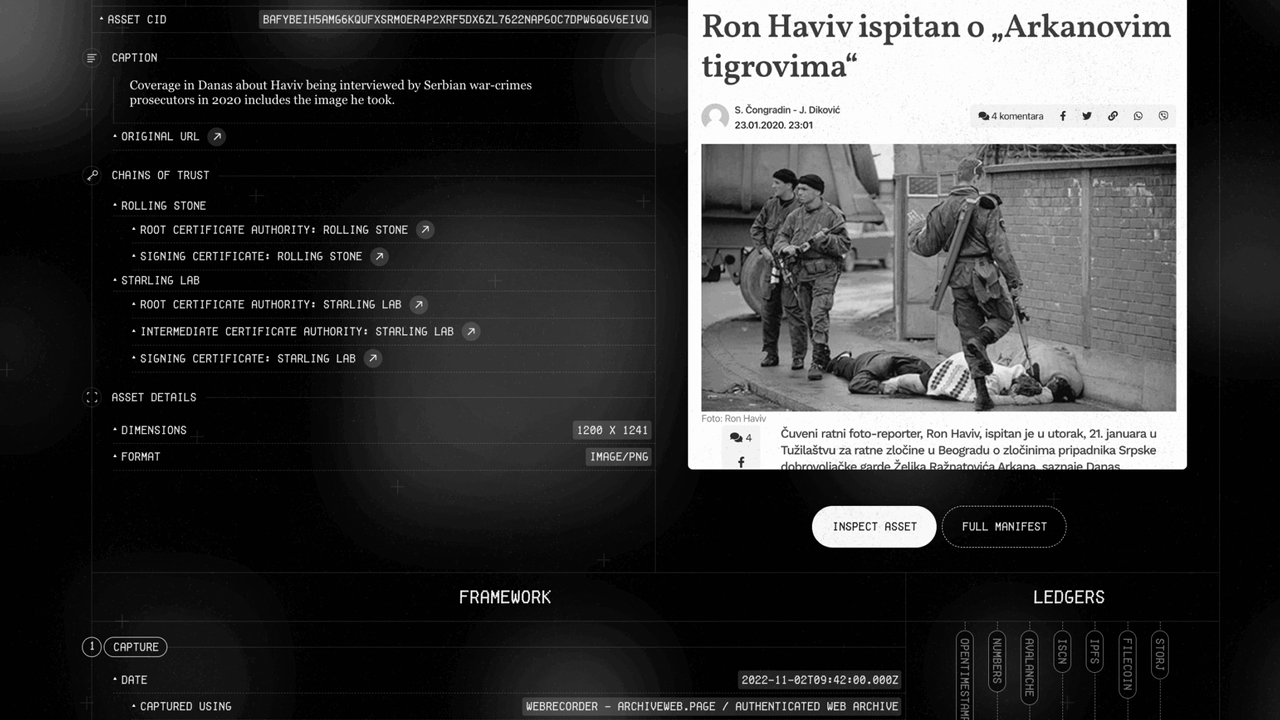

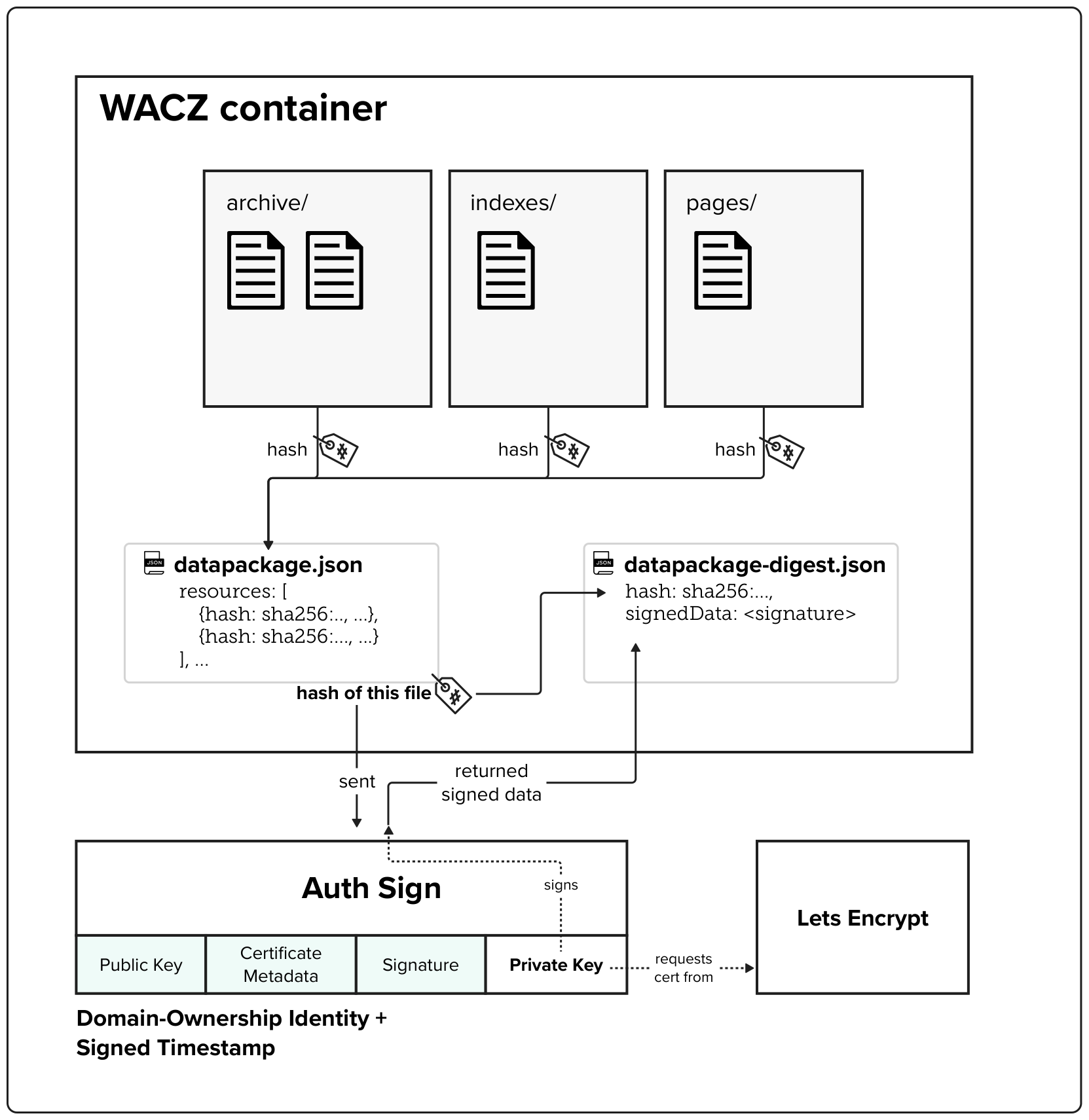

To bridge the gap between capture and publication, the Starling Integrity backend tracks permissible modifications in the background. Using webhooks within the Fotoware CMS, every edit, from caption updates to Photoshop adjustments, is recorded as a new entry in a C2PA manifest and anchored to the Hedera public ledger. This creates a mathematically provable, immutable audit trail that survives the industrial-scale processing of a global newsroom.
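One way to picture such an audit trail is a hash chain, in which each edit record commits to the record before it. The sketch below is only an illustration under that assumption. It does not use the C2PA manifest format, the Fotoware webhooks, or any Hedera API, and the field names are invented for the example.

```python
import hashlib
import json


def entry_digest(entry: dict) -> str:
    """Deterministic digest of one log entry (keys sorted for stability)."""
    return hashlib.sha256(json.dumps(entry, sort_keys=True).encode()).hexdigest()


def append_edit(log: list, action: str, asset: bytes) -> None:
    """Record an edit, linking it to the digest of the previous entry."""
    prev = entry_digest(log[-1]) if log else None
    log.append({
        "action": action,
        "asset_sha256": hashlib.sha256(asset).hexdigest(),
        "prev": prev,
    })


def chain_is_intact(log: list) -> bool:
    """Check that every entry still points at the digest of its predecessor."""
    for i, entry in enumerate(log):
        expected = entry_digest(log[i - 1]) if i else None
        if entry["prev"] != expected:
            return False
    return True


log = []
append_edit(log, "capture", b"original jpeg bytes")
append_edit(log, "crop", b"cropped jpeg bytes")
append_edit(log, "caption-update", b"cropped jpeg bytes")
head = entry_digest(log[-1])  # the value one would anchor externally
```

Only `head` needs to be published to an external ledger: rewriting any earlier entry breaks the links, and rewriting the last entry changes `head`.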

Companion Secure Enclave Authentication

Capture

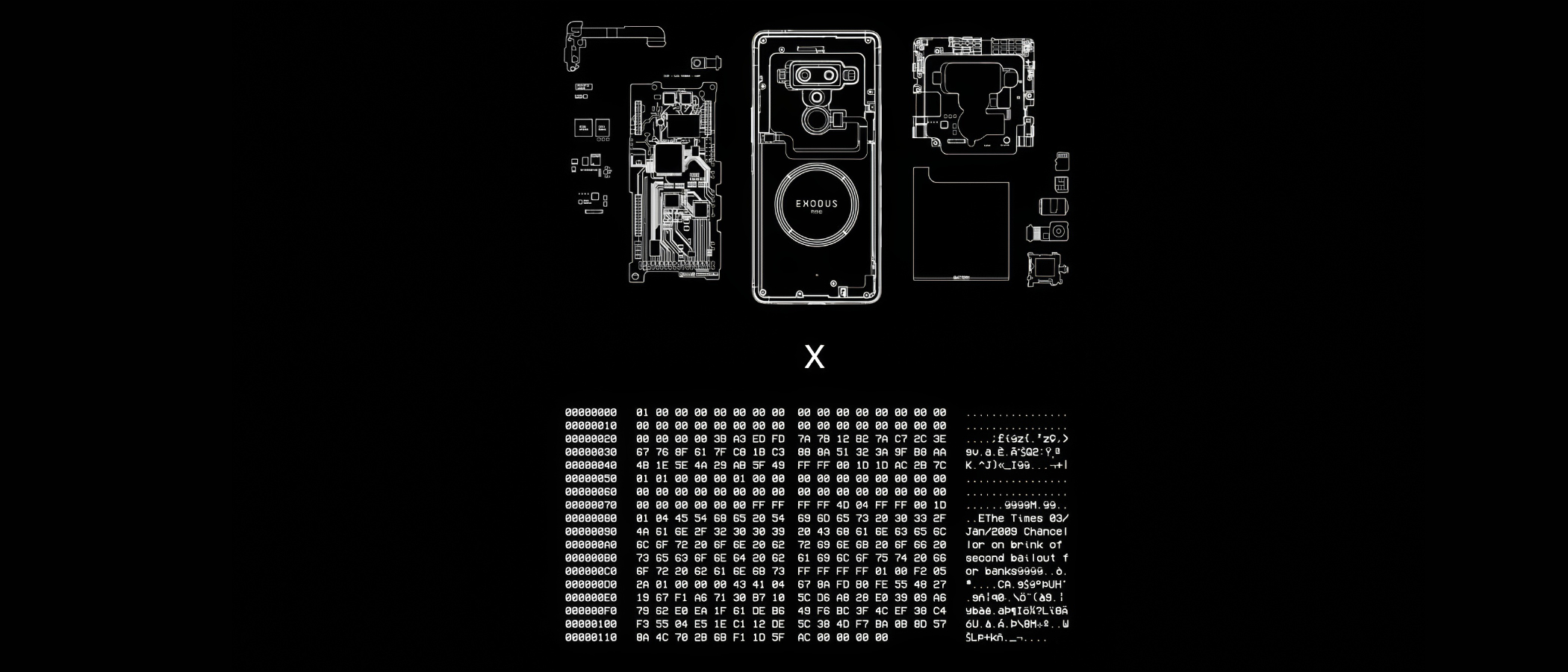

Companion Secure Enclave Authentication provides a “secure bridge” for professional photojournalism by tethering standalone cameras to mobile devices with hardware-level security. By pairing a professional camera with a smartphone’s secure enclave (such as the HTC Zion Vault), this prototype establishes a root-of-trust for images that traditional cameras cannot natively sign.

This method ensures that every photo is cryptographically sealed with a unique digital signature and sensor-rich metadata at the exact location and time of capture, creating an unalterable record of reality.

YEAR

2020-24

PARTNERS

HTC

Inside Climate News

Bay City News

Numbers

The Problem

Most professional cameras used in the field lack the internal hardware necessary to cryptographically sign assets or protect signing keys. Without a tamper-evident seal, digital photographs and their metadata (such as GPS and timestamps) are vulnerable to manipulation by AI tools or bad actors.

As these unverified images circulate, they lose their essential context, making it nearly impossible to determine the original version or defend against cheap- or deepfake allegations that distort the facts reported by photojournalists.

The Solution

Starling Lab pioneered a workflow that utilizes the hardware secure enclave of a companion smartphone to sign media from high-end cameras.

By tethering a professional camera (such as a Canon R5) to an HTC Exodus 1S phone via WiFi or USB, the Starling Capture app (co-developed with Numbers) instantly receives captured media. The phone’s Zion Vault hardware-secured signer then generates a cryptographic hash of the image and its associated sensor data (barometer, gyroscope, and GPS), sealing it with a private key that never leaves the device’s protected silicon.

CASE STUDIES

Stockton Homelessness: In 2022, Bay City News photojournalists documented the homelessness crisis in Stockton, CA, using Canon R5 cameras paired with HTC devices. These “authenticated time capsules” provided a verifiable record that challenged official statements and misinformation surrounding local funding disparities.

Brazil Pantanal: Photographer Felipe Albarenga documented the 2020 wildfires in the world’s largest wetland. By using the companion secure enclave, Albarenga created a tamper-evident archive of the devastation that could withstand the propaganda and denialism prevalent during the Brazilian presidential election.

Authenticated Web Archives

Capture

Accurate, reliable, simple to use, and secure workflows for archiving web content.

The Problem

Online content disappears rapidly, erasing critical evidence for investigative journalism, accountability, and cultural preservation. Social media platforms and hosting providers face pressure to implement stricter content moderation, with automated filters and human moderators making rapid decisions about what stays online. Records documenting potential crimes – especially those with violent imagery – risk being permanently deleted. Restoring content is often impossible: original posters may be arrested, lose device access, or no longer be alive when investigations begin.

Existing archiving methods face three challenges: platforms actively block automated crawlers, preserved content lacks the cryptographic verification and chain-of-custody documentation required for legal admissibility, and saved material becomes unsearchable across large collections.

JOURNALISM

Strong web archives provide a tamper-evident way to capture online evidence, safeguarding reporting against censorship and the erosion of digital sources.

HISTORY

These archives create a trustworthy and resilient collection of digital primary sources, ensuring that the ephemeral nature of the web does not erase our collective memory.

LAW

This technology establishes an unbreakable digital chain of custody, transforming fleeting web content into verifiable, court-admissible evidence.

The Solution

Starling is developing workflows using open source software for archiving web content to ensure the preserved archives are accurate and reliable, taking into consideration the sensitivity of the data. We draw from the considerable expertise deployed by national libraries and legal deposits from around the world.

Our case studies have experimented with forensically-sound web archiving, focusing on capturing broad contextual snapshots of web material.

The WACZ standard and file format

The Web Archive Collection Zipped (WACZ) standard provides a portable packaging format for web archives that bundles WARC data, indexes, metadata, and verification information into a single ZIP file. Unlike traditional WARC files that lack contextual information and require complex server infrastructure for viewing, WACZ enables efficient browser-based rendering by organizing content with indexes that allow random access to only the data needed for each page.

Built-in Integrity Through Cryptographic Hashing

Every WACZ file includes a datapackage.json manifest that contains cryptographic hashes of all resources within the archive, providing a verifiable fingerprint to detect any unauthorized modifications. This hash-based integrity checking ensures that archived content remains tamper-evident throughout its lifecycle.
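A minimal sketch of this check follows. It assumes each entry in the manifest's `resources` list carries a `path` and a `hash` of the form `sha256:<hex>`, which is how we read the WACZ specification. The tiny archive built by `build_demo_wacz` is a made-up fixture, not a valid web archive.

```python
import hashlib
import io
import json
import zipfile


def verify_wacz(wacz: zipfile.ZipFile) -> dict:
    """Map each resource path listed in datapackage.json to True/False."""
    manifest = json.loads(wacz.read("datapackage.json"))
    results = {}
    for resource in manifest["resources"]:
        algorithm, _, expected = resource["hash"].partition(":")
        actual = hashlib.new(algorithm, wacz.read(resource["path"])).hexdigest()
        results[resource["path"]] = actual == expected
    return results


def build_demo_wacz(tamper: bool = False) -> zipfile.ZipFile:
    """Build an in-memory, WACZ-like ZIP for demonstration purposes only."""
    payload = b"WARC/1.1 demo record"
    manifest = {
        "profile": "data-package",
        "resources": [{
            "name": "data.warc",
            "path": "archive/data.warc",
            "hash": "sha256:" + hashlib.sha256(payload).hexdigest(),
            "bytes": len(payload),
        }],
    }
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w") as zf:
        zf.writestr("datapackage.json", json.dumps(manifest))
        # Optionally store altered bytes so the recorded hash no longer matches.
        zf.writestr("archive/data.warc", payload + (b"!" if tamper else b""))
    buffer.seek(0)
    return zipfile.ZipFile(buffer)


clean_report = verify_wacz(build_demo_wacz())
tampered_report = verify_wacz(build_demo_wacz(tamper=True))
```

Hashes alone only detect changes to the listed files. Someone who can rewrite the manifest can also rewrite the hashes, which is why the manifest itself needs to be signed or timestamped.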

Authentication Through Digital Signatures

The specification adds optional authentication capabilities by allowing creators to digitally sign archives – notably using TLS certificates. These signatures both validate the identity of the entity creating the archive (using X.509 SSL certificates) and establish a trusted timestamp for when the capture occurred.

Time for Trusted Timestamping

Capture

Most of Starling Lab’s work involves trusted timestamping. Normally timestamping is very easy: you simply add a timestamp field to your metadata and call a function such as time.Now(). Trusted timestamping, by contrast, is concerned with proving that the provided timestamp is actually correct, and wasn’t forged after the fact.

This is simple to do in one direction, in order to prove some data existed after a certain point in time. That can be easily achieved by including unguessable current information, such as news headlines and stock prices. A classic pop culture example is kidnappers proving a hostage is still alive by having them pose with that day’s newspaper.

But trusted timestamping is concerned with the opposite direction: proving data existed before a certain time. This is not so easily done, but it is possible, and the use cases are quite important.

Purpose

There are three main reasons for doing trusted timestamping.

- Verifying old cryptographic signatures

- Data integrity

- Proof of technology ✨

In addition to these there is the obvious one: authenticity. Trusted timestamping can prove that a document that claims to have been made on a certain date was actually created on that date.

Verifying Signatures

The mainstream industry use case is verifying that a signature was added on a given date. For example, Apple requires timestamping as part of its code-signing process. The reasoning is simple: sometimes private keys get leaked or revoked. This scenario can break the guarantees signatures provide, namely that you can be sure of the author of the signed data.

Once a key is leaked, can we validate any previous signatures? They could have been made by a third party using the leaked key. But if the signature was timestamped, we can know whether the signature was created before the key was made invalid. This is a real problem that trusted timestamping solves.
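The decision rule can be written down in a few lines. This is a simplified sketch with hypothetical names. It assumes the timestamp has already been verified as trustworthy and ignores certificate expiry and other policy checks.

```python
from datetime import datetime, timezone
from typing import Optional


def signature_still_trusted(
    timestamped_at: Optional[datetime],
    key_revoked_at: Optional[datetime],
) -> bool:
    """Decide whether a signature survives the revocation of its key."""
    if key_revoked_at is None:
        return True   # key never revoked: nothing to worry about
    if timestamped_at is None:
        return False  # no proof of when the signature was made
    return timestamped_at < key_revoked_at


revoked = datetime(2023, 6, 1, tzinfo=timezone.utc)
before = datetime(2023, 1, 15, tzinfo=timezone.utc)
after = datetime(2023, 9, 30, tzinfo=timezone.utc)
```

A signature with a trusted timestamp of `before` remains usable. One timestamped `after` the revocation, or with no timestamp at all, does not.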

Data Integrity

A basic method of ensuring data integrity is storing hashes. But what if those hashes are tampered with? Using signatures instead allows us to verify that no one but a person/org we trust has modified the data. But what if we don’t want to trust that person, or their ability to guard their keys? Using signatures adds an extra moving part and security risk that in some cases may not even be needed. If we can agree that the data was valid and unmodified at a certain point in time, trusted timestamping will provide data integrity forever. An example use case is backups, or storing org-internal archival data like legal agreements.

Proof of Technology

This is a new, modern use case. As AI-generated content gets better and better, being able to prove that a piece of media was generated before certain AI software even existed will become extremely important. Trusted timestamping has the ability to end all these new uncomfortable arguments that such-and-such picture was created whole-cloth by some inscrutable algorithm, and instead bring us back into old school discussions about whether something looks “PhotoShopped”.

Of course, it may be that AI content generation has already advanced too far for trusted timestamping to help. But the technology is not perfect, nor has it peaked. To adapt the saying about planting trees: the best time to timestamp data was 20 years ago, and the second best time is now.

Software

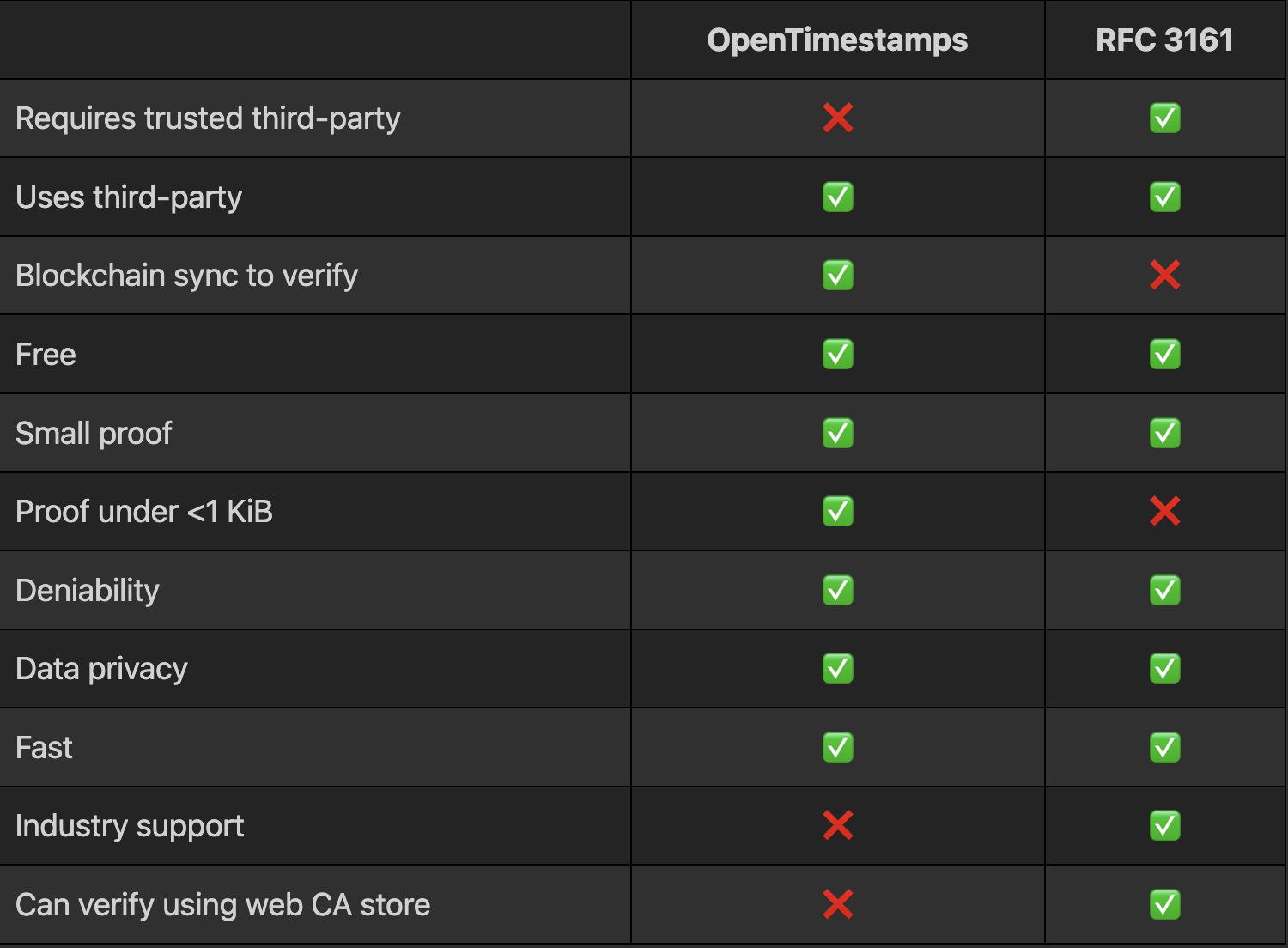

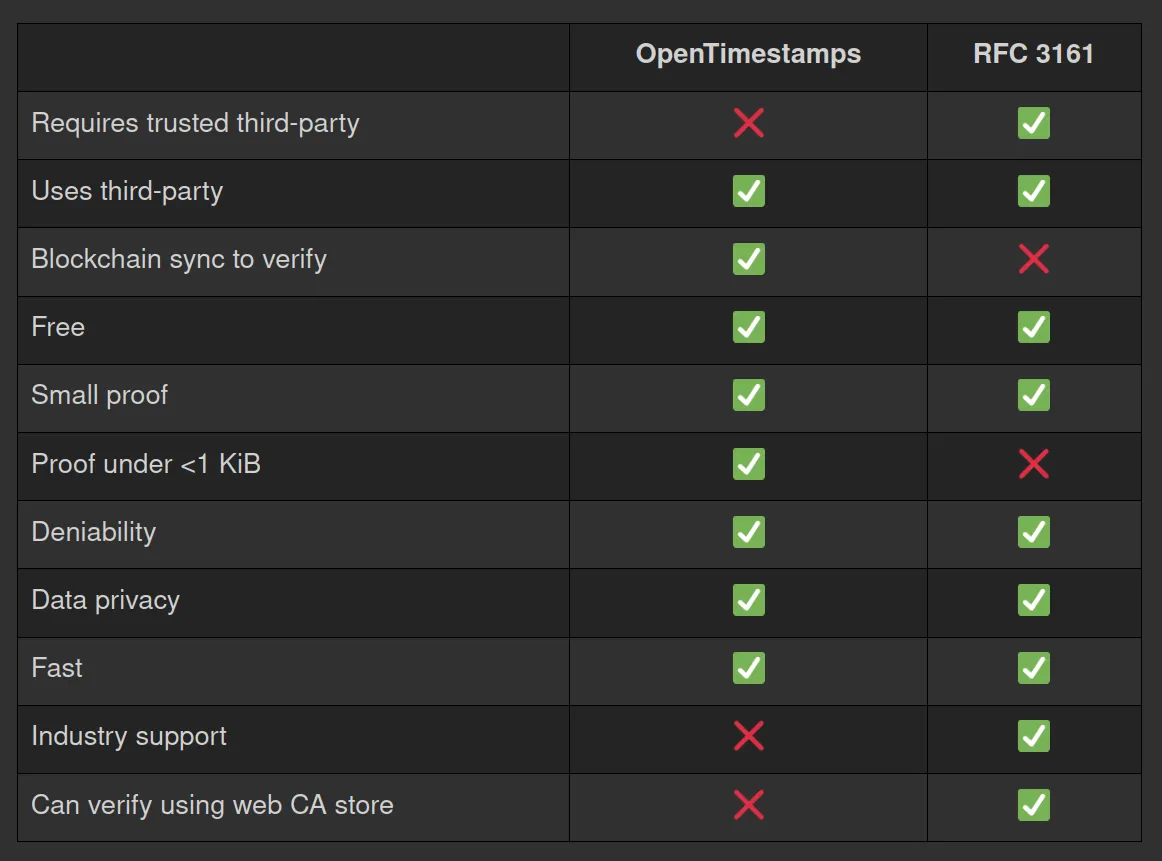

There currently exist two viable trusted timestamping methods I am aware of. The first is OpenTimestamps, and the second is the Time-Stamp Protocol, or RFC 3161. The former timestamps using Bitcoin (but efficiently, not one timestamp per block) and the latter uses the signature of a trusted service (Time Stamp Authority).
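The "efficiently" part rests on Merkle trees: many digests are combined into a single root, only the root needs to be committed, and each document keeps a short proof linking it to that root. The sketch below shows that general idea only. It is not OpenTimestamps' actual proof format, and duplicating the last node on odd-sized levels is a simplification chosen for brevity.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_root_and_proof(leaves: list, index: int):
    """Return (root, proof) for leaves[index]; leaves must be non-empty.

    The proof is a list of (sibling_hash, sibling_is_left) pairs.
    """
    level = [h(leaf) for leaf in leaves]
    proof = []
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])  # pad odd levels by repeating the last node
        sibling = index ^ 1          # neighbour within the same pair
        proof.append((level[sibling], sibling < index))
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return level[0], proof


def verify_proof(leaf: bytes, proof: list, root: bytes) -> bool:
    """Rebuild the path from one leaf up to the root."""
    node = h(leaf)
    for sibling, sibling_is_left in proof:
        node = h(sibling + node) if sibling_is_left else h(node + sibling)
    return node == root


documents = [b"doc-a", b"doc-b", b"doc-c", b"doc-d", b"doc-e"]
root, proof = merkle_root_and_proof(documents, 4)
```

For five documents the proof holds three hashes, and the proof length grows with the logarithm of the number of documents. That is why a single committed root can cover a very large batch.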

Here is a quick comparison:

You can learn more about OpenTimestamps on the website. Beyond the actual RFC linked above, you can learn more about RFC 3161 by playing around with OpenSSL: on the command line, run man openssl-ts.

I’m not going to dive any deeper into these tools than the above table, but my general recommendation is to use RFC 3161 with a good third party unless not needing to trust anyone is actually a requirement for your project. And you could always do both!

Usage

OpenTimestamps has a CLI tool and libraries in several programming languages, see here. It also has a GUI on the website.

RFC 3161 is supported by OpenSSL as a CLI tool with the openssl ts command. To make RFC 3161 timestamping on the CLI easier (as it’s not just one OpenSSL command), I’ve created a bash script each for timestamping and verifying. You can get them here.
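To show why this takes more than one command, here is a rough sketch that only assembles the usual three steps (create a query, send it to a Time Stamp Authority, verify the reply) as argument lists. It is not the bash scripts mentioned above. The TSA URL, CA file, and data file names are placeholders, and nothing is executed.

```python
from typing import List


def rfc3161_commands(data_file: str, tsa_url: str, ca_file: str) -> List[List[str]]:
    """Return the three command lines for an RFC 3161 round trip."""
    query = data_file + ".tsq"   # timestamp request
    reply = data_file + ".tsr"   # timestamp response (the proof to keep)
    return [
        # 1. Hash the file and build a request asking the TSA to include its cert.
        ["openssl", "ts", "-query", "-data", data_file, "-sha256", "-cert", "-out", query],
        # 2. POST the request to the TSA and save the signed response.
        ["curl", "-sS", "-H", "Content-Type: application/timestamp-query",
         "--data-binary", "@" + query, "-o", reply, tsa_url],
        # 3. Check the response against the file and the TSA's CA certificate.
        #    Some TSAs also need their intermediate certificates via -untrusted.
        ["openssl", "ts", "-verify", "-data", data_file, "-in", reply, "-CAfile", ca_file],
    ]


commands = rfc3161_commands("report.pdf", "https://tsa.example.com/tsr", "tsa-ca.pem")
```

Each list could be passed to `subprocess.run` once a real TSA endpoint and its CA certificate are substituted for the placeholders.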

The only GUI option for RFC 3161 I’m aware of is on the freeTSA.org website, although personally I have no reason to trust freeTSA. Better than nothing though, for anyone who doesn’t use the command line.

For trusted third parties there are various options, including reputable companies such as DigiCert.

Conclusion

It’s time for trusted timestamping! The cost of this is basically zero (storing a few kilobytes of proof at most), but the advantage can be very large. It’s one of those things that you’ll wish you had done at the start.

Many groups can act on this by adding timestamps for their documents and media. In increasing order of importance:

- General public: timestamp your important documents and media to prove their authenticity. You can do this today on your phone with ProofMode, and on the desktop using CLI or GUI tools as discussed above.

- Archivists: timestamp everything you archive, for integrity and authenticity. For redundancy consider using both methods mentioned above and multiple RFC 3161 timestamp authorities.

- Developers: if you work on software where data or signature integrity is at all important, add trusted timestamping to your application. And if you use Git signing, you can easily start timestamping all those signatures, see here.

Archiving 10,000 Web Pages of Weaponized Narratives in support of the DFRLab

Capture

We are thrilled to announce our participation in an extensive archiving initiative supporting the Atlantic Council’s Digital Forensic Research Lab (DFRLab). This significant project involves preserving 10,000 web pages as part of the research for the “Narrative Warfare” report, providing a robust resource for understanding the complex landscape of digital misinformation and information warfare.

The “Narrative Warfare” report, published by the DFRLab, delves into the intricacies of how narratives are weaponized in the digital age. The report uncovers how state and non-state actors manipulate narratives to influence public perception and destabilize societies.

The dataset of 10,000 web pages represents pro-Kremlin news publications from the 70 days prior to the ground invasion (Dec 16, 2021 to Feb 24, 2022). The team at the DFRLab identified and tracked five primary narratives pushed in support and ahead of the invasion of Ukraine.

Starling Lab contributed its experience in data integrity and digital preservation to this initiative, ensuring that the archived web pages are preserved and remain accessible for future research and analysis. This collaboration underscores the Lab’s commitment to safeguarding digital content and promoting transparency in the digital realm.

Methodology

Because the material was at risk of disappearing once the report was published, we preserved and durably stored it at the request of the DFRLab. Director Andy Carvin’s team shared a spreadsheet containing, for each URL, original research metadata such as the associated broad narrative, the source pushing this narrative, and the article’s reach on social media.

Each page was crawled individually, meaning that all its content (media, code, style) was downloaded into a package that allows it to be “replayed” in the future and offline, regardless of whether the original content is deleted. Archives were prepared and packaged using the cloud tools provided by WebRecorder, and all the content was cryptographically signed with Starling Lab’s SSL certificate. For more details on these authenticated web archives, read our Dispatch on WACZ files.

The resulting dataset, totalling 400 GB, can be accessed by members of the “Dokaz” initiative on request. While each page can be individually browsed as it was captured at the time, regardless of whether the original has since been taken down, we are looking for support to produce an explorable, searchable interface to facilitate access for researchers.

Conclusion

In conjunction with the DFRLab’s “Dokaz” initiative, which focuses on preserving and verifying evidence in conflict zones, this archiving effort marks a significant step forward in the fight against digital misinformation. The “Dokaz” initiative, supported by Starling Lab, aims to provide reliable and verifiable digital evidence that can withstand scrutiny and support investigative journalism and research.

This collaborative effort between Starling Lab and DFRLab highlights the power of interdisciplinary partnerships in addressing the challenges posed by digital misinformation. By combining expertise in data integrity, digital forensics, and narrative analysis, this project not only enhances our understanding of narrative warfare but also strengthens our ability to counteract its harmful effects.

For more information, visit the Atlantic Council’s Narrative Warfare Report.

Publication of our whitepaper on Best Practices for Admissibility of Web Archives

Capture

In the autumn of 2022, we convened two pivotal workshops to focus on the evolving role of archiving web pages and social media in the context of international justice, particularly concerning Russia’s war against Ukraine. These workshops, one technical and the other legal, aimed to explore how recent advances in web archiving could support the collection, storage, authentication, and utilization of digital evidence in accountability proceedings for victims of the conflict.

The discussions formed the basis of a whitepaper, authored by Scott Martin (Global Justice Advisors) and Basile Simon (Starling Lab), and of a set of best practices that outline the ideal characteristics of a web archive for use in court, drawing on the requirements of the Berkeley Protocol on Digital Open Source Investigations.

Best Practices for Web Archiving

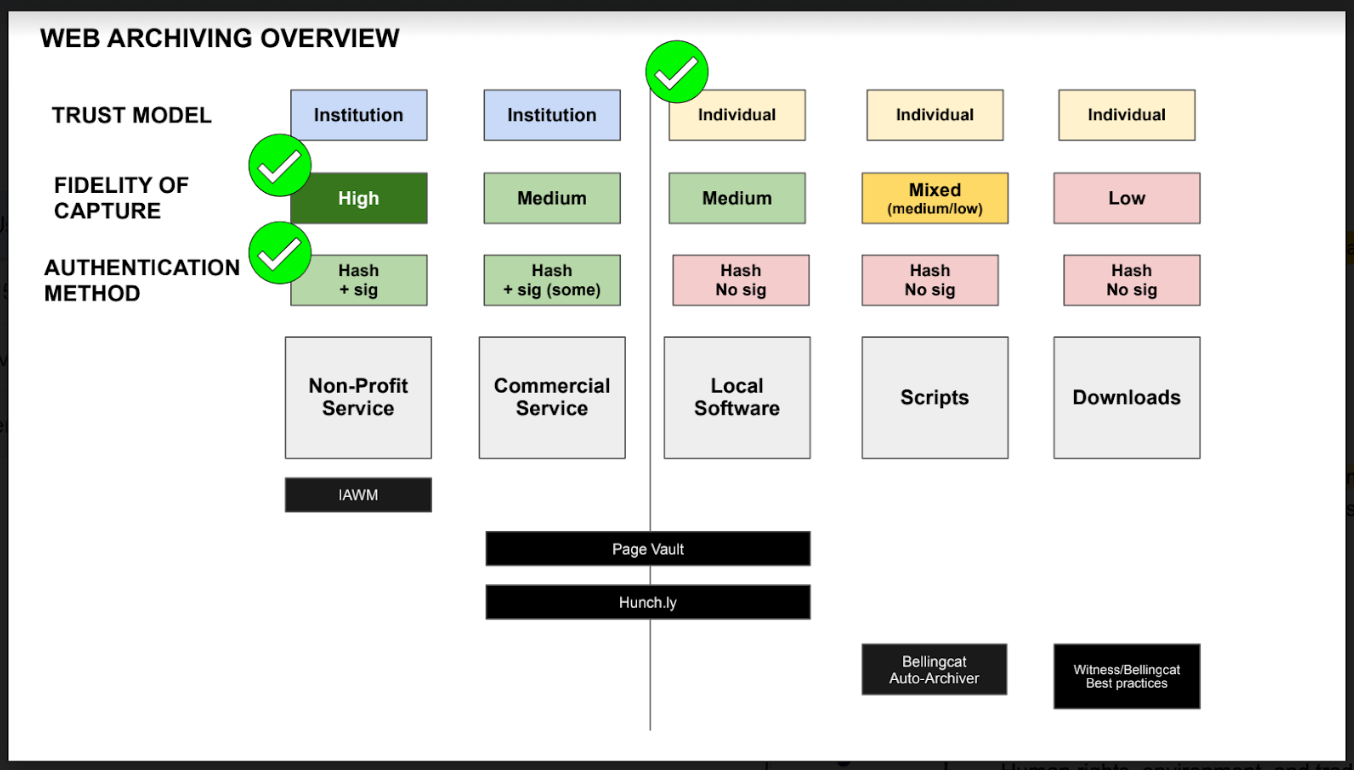

According to this whitepaper, the ideal web archive demonstrates the following properties:

- It can be produced by anyone, notably by individual actors with tools they can grasp and control (as opposed to using a commercial service or being granted access to a platform). This is correlated, to an extent, with the use of open-source and local software.

- It is of high fidelity, meaning it was carried out by a tool that preserved most, if not all, of the original material.

- It includes the content itself, its surrounding metadata, and the metadata of the web scraping software. This includes cryptographic hashes of all website assets and the signature of these hashes authenticating it to the author.

- Furthermore, cryptographic hashes and signatures must be preserved, that is to say, stored securely and made available for the long term, as would the content itself.

Establishing Clear Methodologies

To maximize the admissibility of a web archive as evidence, archivists and legal professionals must establish clear, detailed methodologies. These methodologies should document the provenance of the digital evidence — detailing where it comes from, how it was procured, who procured it, when it was procured, and the process followed. This includes documenting the chain of custody and demonstrating that the webpage has not been altered during archiving.

Key points include:

- Detailed Record-Keeping: Identify the person conducting the archiving, their qualifications, and the web collection protocols observed. Describe the hardware and software used, and explain the process for selecting and assessing websites and articles for credibility and resistance to manipulation.

- Storage Protocols: Describe measures against corruption, hacking, and other risks to ensure the integrity of the archives over time. This should be recorded in a chain of custody that tracks who has handled the document.

Background on Workshops

Technical Workshop: Enhancing the Integrity of Web Archives

On August 25, Starling brought together experts in web archiving to discuss methods to preserve information for accountability purposes in Ukraine. The workshop delved into various collection, authentication, and preservation strategies, emphasizing the technical aspects that ensure the integrity of recorded web pages and other digital materials.

Participants first examined existing web archiving practices and their operation on a technical level, then discussed the potential risks to these archives that could threaten their integrity. A significant focus was on the vulnerabilities of storing web archives using traditional archival models. The discussion highlighted how a shift towards more distributed and decentralized models could offer improved long-term resilience and availability, essential for maintaining the integrity of the archives in unpredictable environments.

We thank the following participants for their contributions:

- Mark Graham, from the Internet Archive;

- Ilya Kreymer, from WebRecorder;

- Michael Nelson, from Old Dominion University;

- Nicholas Taylor, expert witness on the Internet Archive Wayback Machine;

- Ed Summers, from the Stanford Libraries;

- And Cade Diehm, from the New Design Congress.

Legal Workshop: Web Archives in the Courtroom

Following the technical discussions, a roundtable of legal experts convened on September 27 to explore the legal dimensions of web archiving practices. This group included lawyers specializing in war crimes and legal professionals experienced with digital evidence. The goal was to identify potential legal vulnerabilities in current archiving practices and determine how such materials could be admitted into evidence in courtrooms, particularly in war crimes and other international criminal proceedings.

The legal experts articulated best practices to ensure that web archive data are preserved, produced, and authenticated in ways that maintain their integrity. This enhances their reliability, utility, and probative value as evidence in a judicial context. The roundtable discussed the characteristics and challenges of various web archiving practices and presented a framework to assess these methods.

We thank the following participants for their contributions:

- Scott Martin, from Global Justice Advisors;

- Melissa Bender, from Ropes & Gray LLP;

- Tim Parker, from Blackstone Barristers;

- Cari Spivack, from the Internet Archive;

- Karolina Aklamitowska, from Tallinn University;

- Clare Stanton, from Harvard Law School;

- Bastiaan van der Laaken, from the UN IIIM Syria.

Next Steps: Call for Contributions on Witness Servers

Finally, to improve the process of entering web archives into evidence, Starling is formalizing the concept of “Witness Servers” as an additional layer of self-corroboration for web archives. A Witness Server is a service, hosted and run by an institution, which carries out web crawls on demand on behalf of individuals conducting web archiving activities.

Participating institutions, e.g. the Stanford Libraries, WebRecorder, or the Harvard Library Innovation Lab, lend the individuals or teams they agree to witness the trust that might be placed in the institutions themselves. The roundtable found that relying on the social trust placed in institutions is particularly helpful in strengthening the work of potentially vulnerable investigators and archivists.

Several Witness Servers act in concert on the instruction of a web archivist and simultaneously capture the same web page. Such an approach addresses the possibility of a webpage having slight variations depending on locale (and many other potential anomalies) and works to otherwise corroborate the contents of a website through a replication process that validates the contents of a web archive from several different locations and actors.

To learn more, or to participate as an institution or a researcher, read the Call for Contributions.
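The corroboration idea can be sketched as a comparison of digests reported by independent witnesses. Everything here is hypothetical: the witness names, the quorum rule, and the assumption that captures can be compared byte for byte. In practice pages differ by locale, ads, and timing, so a real comparison would need some normalization first.

```python
import hashlib
from collections import Counter


def corroborate(captures: dict, quorum: int) -> dict:
    """Compare captures of the same page from several witnesses.

    `captures` maps a witness name to the bytes it captured.
    """
    digests = {name: hashlib.sha256(body).hexdigest() for name, body in captures.items()}
    digest, votes = Counter(digests.values()).most_common(1)[0]
    agreeing = sorted(name for name, d in digests.items() if d == digest)
    dissenting = sorted(name for name in digests if name not in agreeing)
    return {
        "corroborated": votes >= quorum,
        "digest": digest,
        "agreeing": agreeing,
        "dissenting": dissenting,
    }


page = b"<html><body>Article text</body></html>"
report = corroborate(
    {
        "witness-a": page,
        "witness-b": page,
        "witness-c": page.replace(b"Article", b"Localized article"),
    },
    quorum=2,
)
```

Here two of three witnesses agree, so the capture counts as corroborated, while the dissenting witness is recorded rather than discarded, since a locale-specific variant may itself be of interest.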

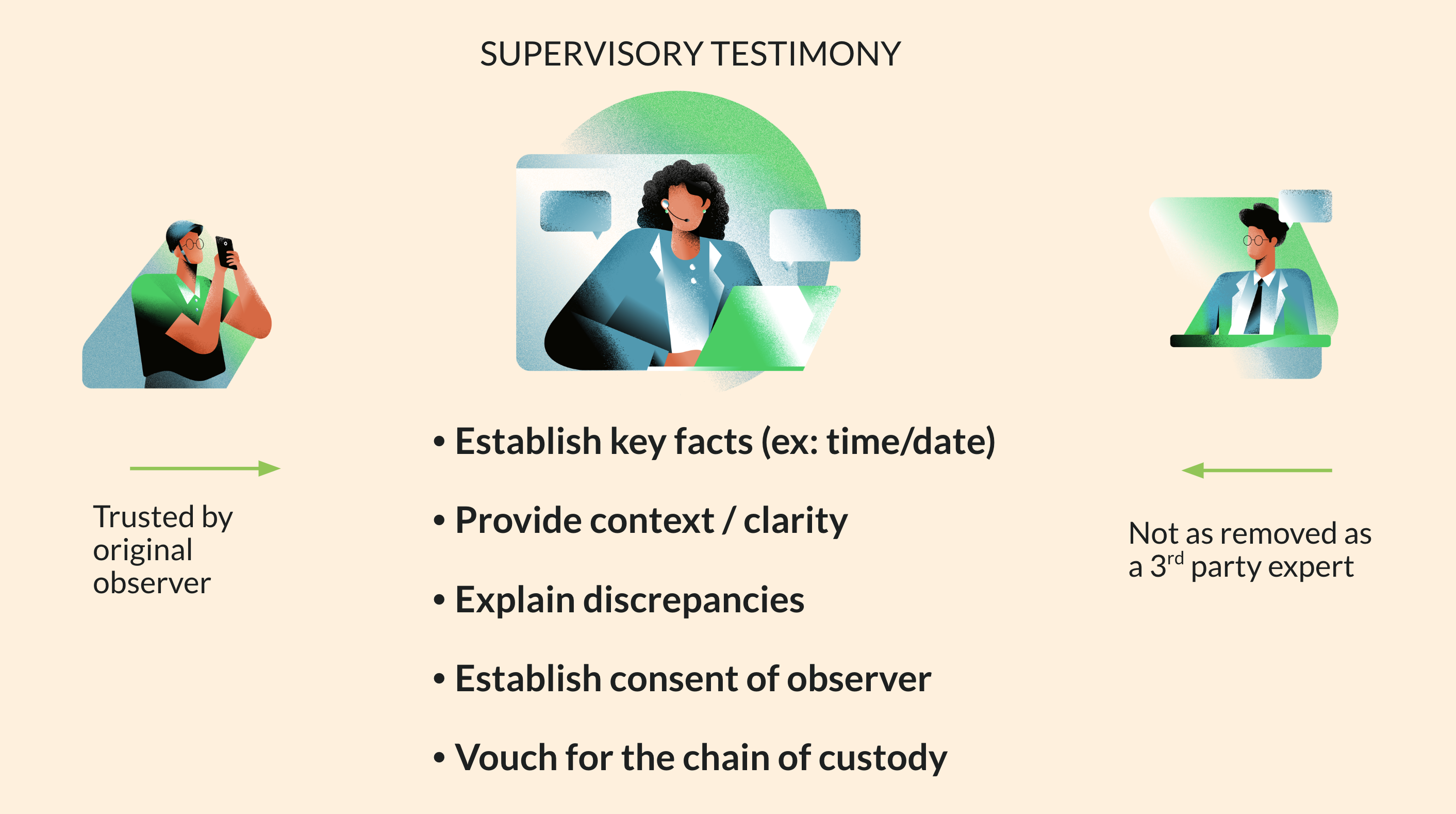

Supervisory Testimony as a Novel Tool for Accountability

Capture

The testimony of a primary observer’s supervisor could help bridge evidentiary gaps. Supervisory testimony would consolidate the institutional knowledge of the chain of custody of specific pieces of digital evidence with a single person.

Criminal investigations depend on evidence gathered in the field. Unfortunately, these scenes can be difficult to access (e.g. war zones, disaster areas) and individuals on the ground may not be accessible to courts (e.g. threats to safety, loss of contact). Emerging technologies could help overcome such challenges, providing novel solutions to weigh probative value and establish authenticity.

In the summer of 2021, and as part of the Human Rights and International Justice Policy Lab at Stanford Law School, the Starling Lab and Hala Systems convened a group of international experts on digital evidence and war crimes prosecutions to solicit feedback on their proposed model of Supervisory Testimony.

The gathering was chaired by Beth Van Schaack (then visiting professor at Stanford), assisted by Mackenzie D Austin (then Lab associate).

Problem: Authentication and chain of custody of digital evidence registered on a distributed ledger

Data (including multimedia assets like photo and video) that is collected by field observers can present a number of evidentiary and admissibility issues when that data is submitted in accountability settings. Because of the ad hoc nature of evidence collection in conflict zones, the chain of custody of individual pieces of digital evidence can be especially hard to trace. Furthermore, a variety of custodians might have handled evidence before it reaches a host organization or tribunal, which can be difficult to track as well. The extended period of time between evidence collection, storage, and admission into evidence also presents the possibility that many primary observers and custodians may no longer be reachable by the time that accountability processes take place. Thus, those individuals would not be able to provide critical affidavits or testimony as to the veracity and authenticity of the digital evidence at the time of trial. All of these factors threaten the admission of digital evidence in a court of law.

Proposed Solution: Supervisory Testimony

Supervisory testimony, or the testimony of a primary observer’s supervisor (who may participate remotely), could help bridge the evidentiary gap. Rather than requiring a different witness to account for each individual link in the chain of custody, a single supervisor could account for the entire chain of custody. Supervisory testimony would consolidate the institutional knowledge of the chain of custody of specific pieces of digital evidence in a single person.

For example, a supervisor would be tasked with training and overseeing a set of field observers. During a years-long conflict, the supervisor would keep track of the provenance of the swaths of digital evidence submitted by their cohort of observers. Once an accountability mechanism (e.g. a tribunal) is initiated, that single supervisor would present a consolidated bundle of data (e.g. photographs) obtained by their field observers. As a witness, the supervisor would testify as to the chain of custody of specific pieces of evidence, having been present as a supervisor during the collection and storage process. That supervisor would also explain the general process of capture and transfer of all recorded data functions, including the training of field observers. Most importantly, that supervisor would provide a blanket certification of authenticity of the digital evidence. Ultimately, a single supervisory witness could stand in for the dozens of field observers who collected the data over an extended conflict and account for the entire lifecycle of digital evidence from the moment of capture to its presentation in court. This strikes an important balance, streamlining several obstacles to evidence admission while still allowing a defendant an appropriate party to cross-examine.

Technological solutions like cryptographic hashes and distributed ledger entries have been pitched as solutions for chain of custody concerns, serving a bit like a notary system. However, those solutions may be insufficient to adequately account for the human observers whose testimony may still be necessary to authenticate digital evidence. Instead, the combination of technological authenticity markers and supervisory testimony would help shore up any gaps in the chain of custody and enhance authentication for accountability purposes. While technological solutions were discussed, the workshop primarily explored how both human and technological protocols are essential. Together, these robust protocols could be a new frontier in authentication.

Possible Implementation Methods

- Asynchronous supervision: A supervisor would train new cohorts of field observers with an established protocol to ensure the authenticity of the collected data and reliability of the collection methods. This could include corroborating data, like photos or videos of the field process itself. A supervisor could also conduct debriefs with their observers to affirm that the collection protocol was followed at the time that specific evidence was collected.

- Direct supervision synchronous with the moment of capture: For example, a supervisor may text back and forth with a field observer at the moment they collect evidence. Alternatively, the field observer may livestream the evidence collection with their supervisor.

- Development of uniform collection protocols: Hosting organizations that employ supervisors must develop uniform collection protocols to be used in the field. As part of these protocols, organizations should also contemplate ways to maintain impartiality and neutrality in the collection of their data, and consider common or likely rules of evidence.
