Data Integrity in the AI Era: Key 2026 Legislation to Watch

Law

Last updated May 2026

International

- EU AI Act — Article 50 (Transparency Obligations) (Upcoming — effective Aug. 2, 2026). Requires machine-readable marking of all AI-generated outputs and visible disclosure of deepfakes by GPAI providers and deployers, with fines up to €15M or 3% of global turnover.

- China — Measures for the Labelling of AI-Generated and Synthetic Content (In effect — Sept. 1, 2025). Mandates both explicit user-facing labels and implicit metadata labels on AI-generated text, images, audio, and video; paired with the 2023 Deep Synthesis Provisions and GB/T 45654-2025 data-provenance standard.

United States — Federal

- NO FAKES Act (H.R. 2794 / S. 1213, 119th Congress) (Upcoming / pending). Federal private right of action against unauthorized AI voice and visual-likeness replicas; live First Amendment fight (FIRE has flagged it).

- COPIED Act (Content Origin Protection and Integrity from Edited and Deepfaked Media Act, S. 1396) (Upcoming / pending). Directs NIST to set provenance, watermarking, and synthetic-content detection standards; bars training on content with provenance information without consent.

- Take It Down Act (In effect — signed May 2025). Federal takedown obligation for non-consensual intimate imagery, including AI-generated and AI-altered content; the only enacted federal piece in this cluster.

- NIST AI Safety Institute / NIST GenAI program guidance on synthetic content provenance (In effect — ongoing rolling guidance). Non-binding but increasingly referenced by state statutes.

United States — State

- California — AI Transparency Act (SB 942) + AB 853 amendments (Upcoming — operative date deferred to Aug. 2, 2026). Latent and manifest disclosures, AI-detection tool requirement, capture-device provenance option, and platform-side provenance surfacing for large online platforms.

- New York — Stop Deepfakes Act (S6954A / A6540A) (Upcoming / pending in legislature). Three-part provenance regime: synthetic-content providers attach metadata, social platforms preserve it, state agencies attach it where practicable; AG rulemaking authority.

- New York — Synthetic Performer Disclosure (GBL § 396-b) (Upcoming — effective June 9, 2026). Conspicuous disclosure of synthetic performers in advertising; $1,000 first-offense / $5,000 subsequent civil penalties.

- Tennessee — ELVIS Act + Digital Content Provenance Pilot Program (In effect — July 1, 2024). Civil cause of action for AI voice/likeness misuse, plus a state-run provenance pilot for emergency-management content and election-content provenance requirements.

Beth Van Schaack

Former Ambassador-at-Large for Global Criminal Justice

Distinguished Fellow, Center for Human Rights and International Justice at Stanford University

Prior to returning to Stanford University, Dr. Van Schaack served as Ambassador-at-Large for Global Criminal Justice (GCJ) in the U.S. State Department office where she once served as Deputy. GCJ advised the Secretary of State and the Under Secretary of State for Civilian Security, Democracy, and Human Rights on issues related to war crimes, crimes against humanity, and genocide and the deployment of the whole range of transitional justice mechanisms in states emerging from violence or repression. Prior to returning to public service, Dr. Van Schaack was the Leah Kaplan Visiting Professor in Human Rights at Stanford Law School, where she taught international criminal law, human rights, human trafficking, and a policy lab on Legal & Policy Tools for Preventing Atrocities. In addition, she directed Stanford’s International Human Rights & Conflict Resolution Clinic. Ambassador Van Schaack has published numerous articles and papers on international human rights and justice issues, including her 2020 thesis, Imagining Justice for Syria (Oxford University Press). From 2014 to 2022, she served as Executive Editor for Just Security, an online forum for the analysis of national security, foreign policy, and rights.

In addition to her work as a Distinguished Fellow with Stanford’s Center for Human Rights & International Justice, Dr. Van Schaack is a Commissioner with the International Commission of Jurists (ICJ), a Senior Peace Fellow with the Public International Law & Policy Group, a Distinguished Fellow with the Atlantic Council’s Strategic Litigation Project, and a Distinguished Fellow with the Georgetown Institute for Women, Peace & Security. With seven other senior U.S. government human rights mandate holders, she is a co-founder of The Alliance for Diplomacy & Justice, which works to center human rights within U.S. foreign policy.

Earlier in her career, she was a practicing lawyer at Morrison & Foerster, LLP; the Center for Justice & Accountability, a human rights law firm; and the Office of the Prosecutor of the International Criminal Tribunals for Rwanda and the Former Yugoslavia in The Hague. Dr. Van Schaack is a graduate of Stanford (BA), Yale (JD) and Leiden (PhD) Universities.

The Starling Lab for Data Integrity brings scholars and industry to advance innovation in digital trust for journalism, history, and law.

Training British Barristers in Interrogating Authenticated Digital Evidence

Law

“Never ask a question in court you don’t know the answer to” is advice lawyers heed. On Saturday 19 July 2025, I took part in a training session for British barristers built as a mock hearing. The objective: to practice cross-examining an expert witness on open-source intelligence (OSINT) used as evidence.

OSINT is increasingly available to prosecutors thanks to the popularity of cell phone cameras and social media, but finding the photographer may prove difficult – if not impossible. Generative AI creates additional challenges to admissibility.

The fictional scenario we designed involved all the staples of open-source methodologies (geolocation, chronolocation, etc.) and was the first time C2PA metadata was being interrogated in a court-like setting for its reliability and probative weight.

The Case Itself

The fictional case derives from an alleged war crime in Yemen (in fact, an active investigation by Bellingcat). In our training scenario, the prosecution argues that a jet fighter pilot conducted a “double-tap” airstrike on June 12, 2023 at approximately 10:30 AM – first dropping one bomb, then returning to strike again and killing at least six civilians who were conducting rescue operations. Mr. Smith, the defendant, claims he conducted only a single, legitimate military strike at 9:00 AM targeting a military communications center when no civilians were present.

Ultimately, the case is about the second strike (which makes it a war crime). If its criminal nature can’t be established beyond a reasonable doubt, Smith won’t face consequences for an alleged strike against a possibly legitimate target.

The Evidence Presented

The mainstays of open-source investigations are relied upon to interrogate when the acts might have taken place:

– Firstly, chronolocation aims to reliably and accurately estimate the time of the incident.

– Secondly, contextual verification is put forward by the prosecution to corroborate a piece of open-source evidence with other open-source evidence. (The scenario deliberately doesn’t include direct testimony, to focus the trainees on open-source investigations only.)

– Finally, and most relevantly for us at Starling Lab, some material includes C2PA metadata, a technical standard for provenance which is presently untested in judicial settings. We presented the trainees with a video including two Manifests: one with the media’s authentication metadata produced straight from the C2PA-compatible Sony camera that captured it; the second added to the video after the fact by an investigative journalism collective, claiming to have verified the video, in a similar fashion to what BBC Verify have experimented with.

In addition to contributing to the scenario design and the case papers, I took part in the training as an expert witness examined and cross-examined by a group of six barristers (three on each side). I testified to the whole of the prosecution expert report as though the case were real and the report my own. It was an intense four hours!

Impressions and takeaways

I found it fascinating to get an insight into what barristers – with no pre-existing familiarity with digital investigations methods – make of this bundle of evidence. It appeared quite corroborating, all-pointing-in-one-direction, but perhaps somewhat circular. In a trial, nothing is taken for granted.

As an expert witness, the reliability of what you say is under assault – all answers contribute to your own credibility on the stand, and in your report.

The experience highlighted the paramount importance of intellectual honesty for an expert witness. Rather than serving the interests of the party who commissioned their report, the expert’s primary responsibility lies with the court. This means providing unbiased, accurate, and complete information, even if certain nuances or findings might not directly support the commissioning party’s case. The mock trial served as a practical exercise in upholding this principle, underscoring that the expert’s credibility and the integrity of the judicial process depend on their unwavering commitment to the truth, irrespective of who is paying their fees.

The purpose of relying on expert witness testimony is to inform the court, but it comes with the responsibility of having to effectively communicate findings and opinions. Too long and you’ll be cut off; too in the weeds and jargony and you’ll lose the room. Analogies and relatable explanations are the name of the game there.

From a substance perspective, I found myself unable to get to some of the nuances I wanted to touch on. Because the exercise kept strictly to the time allocated to the metadata questions, we got somewhat stuck on how C2PA data can be removed from an image, and how difficult it might be to acquire an X.509 certificate.

I had laid a few hooks for the defense to pick up on, and left a question or two unanswered. For example, the prosecution expert did not include a detailed verification report of the second organisation’s cert (they really should have).

Prior to this mock trial, I took a week-long “Courtroom Testimony for Expert Witnesses” course from LEVA and Jonathan Hak KC. This gave me a hunch that I was trying to be too clever: as a training exercise it’s not optimal to leave stones unturned, since no-one in the court is more of an expert than we are. In other words: The training aims to bring the lawyers to having some familiarity with the substance, and to practice the techniques – it is not for the defense to pick up on minute, detailed, very precise concerns from the report themselves.

Indeed, we did not stray far from the expert reports themselves due to lack of time. I’ll be proposing a different, much more back-and-forth structure in a future training, so that we can derive must-dos and mainstays of _any_ C2PA verification.

I’m very grateful to the following people and organisations for giving me this opportunity to contribute to this exercise, and for inviting me to take part on the day. Walking the small alleyways of Temple, Blackfriars, and Fleet St is so humbling for this former law undergrad who spent a bit of time in various newsrooms, and in Hackney. So a huge thank you to:

– Professor Yvonne McDermott from Swansea University and the TRUE Project;

– Judge Korner KC, presently at the International Criminal Court (ICC)

Legal Experts Are Racing to Keep International Courts Ahead of AI

Law

Recent policy changes in the United States, which is retreating from leading positions in international efforts and aid, have given new relevance to the already important questions we covered in September 2024 at Georgetown University in Washington, DC, with Ambassador Van Schaack.

At a high level, the question remains: When it comes to digital evidence, what changes (if anything) with widespread, cheap, and accessible generative AI?

In addition to a health check of our information environments and of their pollution (or not) by deepfakes and AI slop, we must return to existing protocols and guidelines – and interrogate whether and how they should be updated.

Starling did just that on July 11, 2025, teaming up with Fénix Foundation, a non-profit that leverages technology to support international justice, peace, and accountability through legal AI tools and judicial training programs.

We have previously worked with the co-founders of Fénix, Sabrina Rewald and Emma Irving, on the Hala Protocol for the Collection, Processing, and Transfer of Audio Data (they are the main drafters, and I am an adviser). Emma is one of the authors of the Leiden Guidelines on Digitally-Derived Evidence, and Sabrina supported the running of our Washington event in 2024.

Joined by a few additional friends from the academic world, we gathered at Leiden University’s The Hague campus to draft a short-term response to the rapid advances in AI’s sophistication and accessibility – and the resulting implications for legal evidence. Our efforts led to a set of “Preliminary Guiding Principles” for immediate use by courts, investigators, lawyers, and fact-finders.

In addition, we are seeking support for an AI and Digital Evidence Knowledge Hub – an open-source, go-to resource that would contain cases and relevant procedural rules from various jurisdictions around the world. Using a decentralized approach to knowledge collection, it will rely on a wide network of contributors to populate the hub with case law, legal provisions, and best practices.

Notably, the meeting moved beyond a US-centric conversation, incorporating diverse legal traditions and regional experiences to address the complexities of AI-generated content in atrocity crimes prosecutions worldwide.

As legal authorities in Europe and universal jurisdiction countries undertake their important work, we hope to assist them by identifying specific areas where digital evidence guidelines need updating, as well as where professional training can be beneficial.

Submission to the UN Special Rapporteur on Human Rights Defenders from our Brazil Coverage

Law

We are honored to see our work featured in Mary Lawlor’s UN Special Rapporteur report to the Human Rights Council: “Out of Sight: Human Rights Defenders in Rural, Remote and Isolated Contexts.”

UPDATE: Since this was published, the UN Human Rights Council adopted a resolution on protecting human rights defenders in the digital age. It addresses several important issues that our Lab has been focused on. Scroll to the end of this page for more details.

The report notes how Starling Lab supported efforts in Brazil’s Pantanal wetlands with tools that work even in low-connectivity environments, which are needed where the “digital divide hits many human rights defenders very hard.”

In a speech this month presenting her findings, Lawlor observed how some of those most at-risk include “journalists covering human rights issues at the local level.” Starling’s methodology was used by journalists to document environmental destruction – and confront climate change denialism. Our submission to Lawlor’s office focused on data authentication, decentralized storage, and cryptographic verification. Together, these ensure documentation remains tamper-evident and accessible, even when governments seek to dismiss the truth.

Her report includes several valuable recommendations to governments and other international actors, two of which resonate with our work:

- “Expand access to the Internet and secure communication tools, including by increasing funding for such digital security resources as encrypted communication applications and secure reporting channels.”

- “Support efforts to enable human rights defenders to store and safeguard their information securely, without fear of unlawful surveillance or data breaches, including putting in place robust legal safeguards to prevent the misuse of digital tools to suppress dissent or target defenders and ensure that their digital rights are protected.”

We appreciate being included among so many other human rights defenders, and remain grateful to Pablo Albarenga for his photojournalism in Brazil, as well as to Inside Climate News for publishing this important coverage.

And we hope this underscores the vital role of trusted digital evidence in defending human rights and environmental justice.

🔗 Read the Special Rapporteur’s remarks;

📄 Read the full UN report;

📕 Don’t miss our own case study;

📰 And the Inside Climate News article

Starling Lab was previously referenced in a report to the UNHRC in 2023 by the Rapporteur on the right to education, who acknowledged similar methods used by the lab as emerging “good practices” for documenting war crimes against civilian objects like schools.

Update (April 16, 2025): Our submission is also echoed in a new resolution from the UN Human Rights Council, which addresses the protection of human rights defenders in the digital age (full text: A/HRC/58/L.27/Rev.1).

The resolution emphasizes “universal connectivity” and “meaningful connectivity” as essential for defenders’ work, calls for “technical solutions for strong encryption and anonymity,” and advocates for secure information storage “without fear of unlawful surveillance.” It specifically recognizes the “growing number of digital attacks” on defenders and acknowledges the “protection gap” caused by lack of accountability.

These address key points from our submission on data integrity and authentication technologies for remote areas.

We’re particularly encouraged by the HRC’s recognition that “new and emerging digital technologies can hold great potential for strengthening democratic institutions and the resilience of civil society, empowering civic engagement and enabling the work of human rights defenders, public participation and the open and free exchange of ideas, and for the exercise of all human rights.” This aligns perfectly with our mission at Starling Lab to harness technology to establish trust in digital records.

Our earlier submission outlined innovative approaches to produce documentary evidence and combat digital denialism. This includes cryptographic methods that authenticate evidence from the point of capture, enabling defenders in remote areas to establish credibility despite connectivity challenges. The submission also referenced ongoing work on telecommunication technologies, including 5G, to further enhance these capabilities.

Basile Simon

Director, Law Program, Special Projects

Fellow, Stanford EE

Basile is a multi-disciplinary researcher bridging engineering, law, and journalism to promote accountability for harm caused to civilians. In addition to policy and advocacy, he has worked as a data-journalist at the BBC, Reuters, and the Times and Sunday Times.

He is the technical co-founder of Airwars, a human rights watchdog, and is now part of its board. He is also a resident at the European Center for Constitutional and Human Rights, a law firm in Berlin, as part of the Forensis / Investigative Commons collective.

Basile heads the Lab’s law program and leads the Ukraine rapid response and the Lab’s special projects. He looked after formulating the initial product and engineering roadmaps, and the technical delivery thereof.

The Starling Lab for Data Integrity brings together individuals with experience in academic research, technological innovation, journalism, history, and law.

Building Trust in the Age of AI – A Starling & HAI Conference at Stanford

Law

On October 22, the Starling Lab and Stanford HAI convened a diverse group of over 100 technologists, journalists, legal experts, and archivists at the Cecil H. Green Library for our conference, Trusting Digital Content in the Age of AI.

We want to extend a sincere thank you to everyone who took part – from speakers like Brewster Kahle and Zach Seward to attendees from the AP, BBC, and the Internet Archive. Together, we moved the conversation beyond the “arms race” of deepfake detection and toward “upstream” solutions: cryptography, provenance, and interoperable ecosystems of trust.

Thank you for helping us design a more authentic digital future. We look forward to continuing this vital work with you in 2025.

The event convened a diverse group of experts to address the erosion of trust in digital ecosystems. James Landay (Stanford HAI) and Tsachy Weissman (Starling Lab) opened the conference, framing the urgent need to design new systems for authenticity.

In the first general session, Jonathan Dotan moderated a discussion on building interoperable systems of trust. Zach Seward (New York Times) addressed how newsrooms are adapting to AI, while Riana Pfefferkorn (Stanford Cyber Policy Center) explored the legal challenges of deepfakes and the “liar’s dividend.” Brewster Kahle (Internet Archive) spoke on the critical mission of preserving digital history amidst technical threats.

A second panel, led by Vanessa Parli, reviewed the year in Generative AI. Michael Bernstein (Stanford) discussed the logic of social media platforms, Oren Etzioni (TrueMedia.org) presented on deepfake detection at scale, and Aimee Rinehart (Associated Press) shared insights on AI procurement and combating misinformation in journalism.

Later sessions focused on solutions, with Dan Boneh (Stanford) demonstrating cryptographic proofs for content authenticity, joined by Jeff Hancock and Margaret Hagan on the human and legal aspects of trust. Finally, Ann Grimes, Basile Simon, and Adam Rose led discipline-specific roundtables on tools for journalism, law, and archiving.

The Proof's in Your Pocket: ProofMode inside Signal

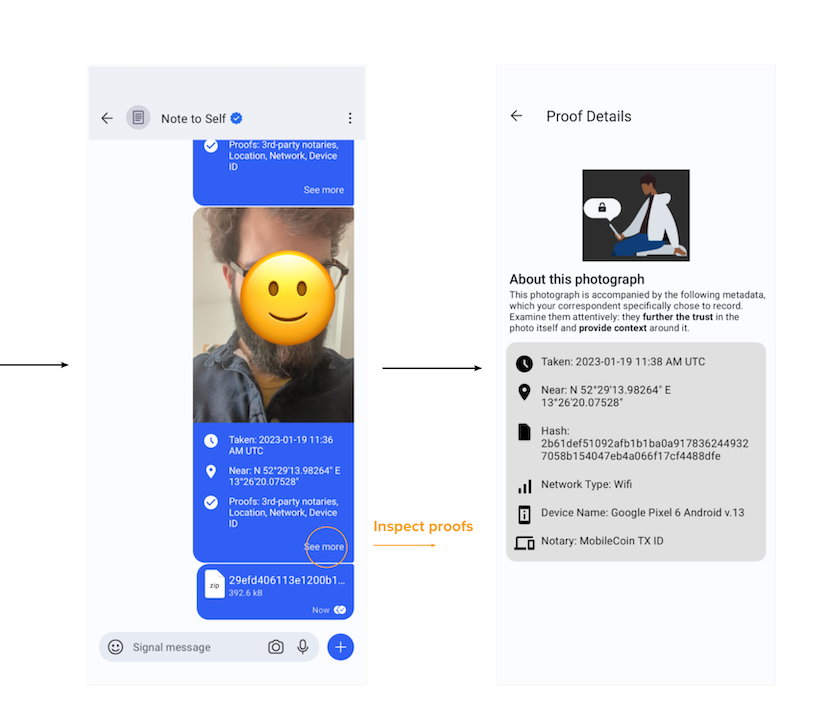

What could authentication data look like in your favorite messaging app? We built an easy-to-deploy secure camera for crowdsourcing documentation of Ukrainian schools by integrating ProofMode with the popular Signal messaging app.

Basile Simon

Prototypes

Background

Following Russia’s large-scale invasion of Ukraine, the Lab started a broad project cutting across all three of our research areas: Journalism, Archiving, and Law. Under the project called Dokaz (“Доказ”, Ukrainian for proof), a loose coalition of organizations has shared material and ideas related to the support of Ukraine against aggression. In the context of this new large-scale conflict, documentation has been a cornerstone concern of our research into the creation of stronger digital material:

- The Lab’s law program led the submission of originally-collected field and remote evidence to the International Criminal Court;

- On the journalism side, we have supported the first demonstration of at-source cryptographic authentication, directly in-camera, for Reuters photojournalists;

- And we have prototyped the integration of metadata-rich, authenticated photography in Signal Messenger, most notably for the use case of crowdsourced citizen documentation.

Quite a few of these projects were aimed at professionals and specific uses of information. However, as the specter of deepfakes gives way to the arrival of mainstream photorealistic generative AI, we are faced with even faster-moving challenges that require us to consider how new camera hardware, software, standards, and user experience (UX) can help establish what is an accurate depiction of the real world. This challenge concerns everyone, extending to our day-to-day consumption of media. Simply put, in the near future, just seeing digital photos may not be a reliable means of believing them.

In order to make digital proof more accessible, we set out to incorporate a powerful authenticity tool called ProofMode with a popular communications tool likely already in your pocket – Signal Messenger.

Our collective thanks go to the project partners:

- The team at Guardian Project (makers of ProofMode), who work tirelessly to bring their ideas to the world from the ground up, driven by the “right” approach and choices, with free and open software.

- Our collaborators and Dokaz members Hala Systems, who provided the operational framework ensuring the support of the photographers, notably by red-teaming the risk assessment.

- Our local photographers in Kharkiv who once again went out to document their city.

- The Forté Group, who provided ad-hoc engineering resources for the integration delivery.

- The Signal team, who kindly heard our pitch for adopting this approach, and who make their code open source for others to build on.

- And finally, the entire Starling Lab team, involving notably Basile Simon on project direction, engineering management and in-country deployment; Alisha Seam on technical advice to the prototype; and Yurko Jaremko on operating the preservation pipeline.

Context

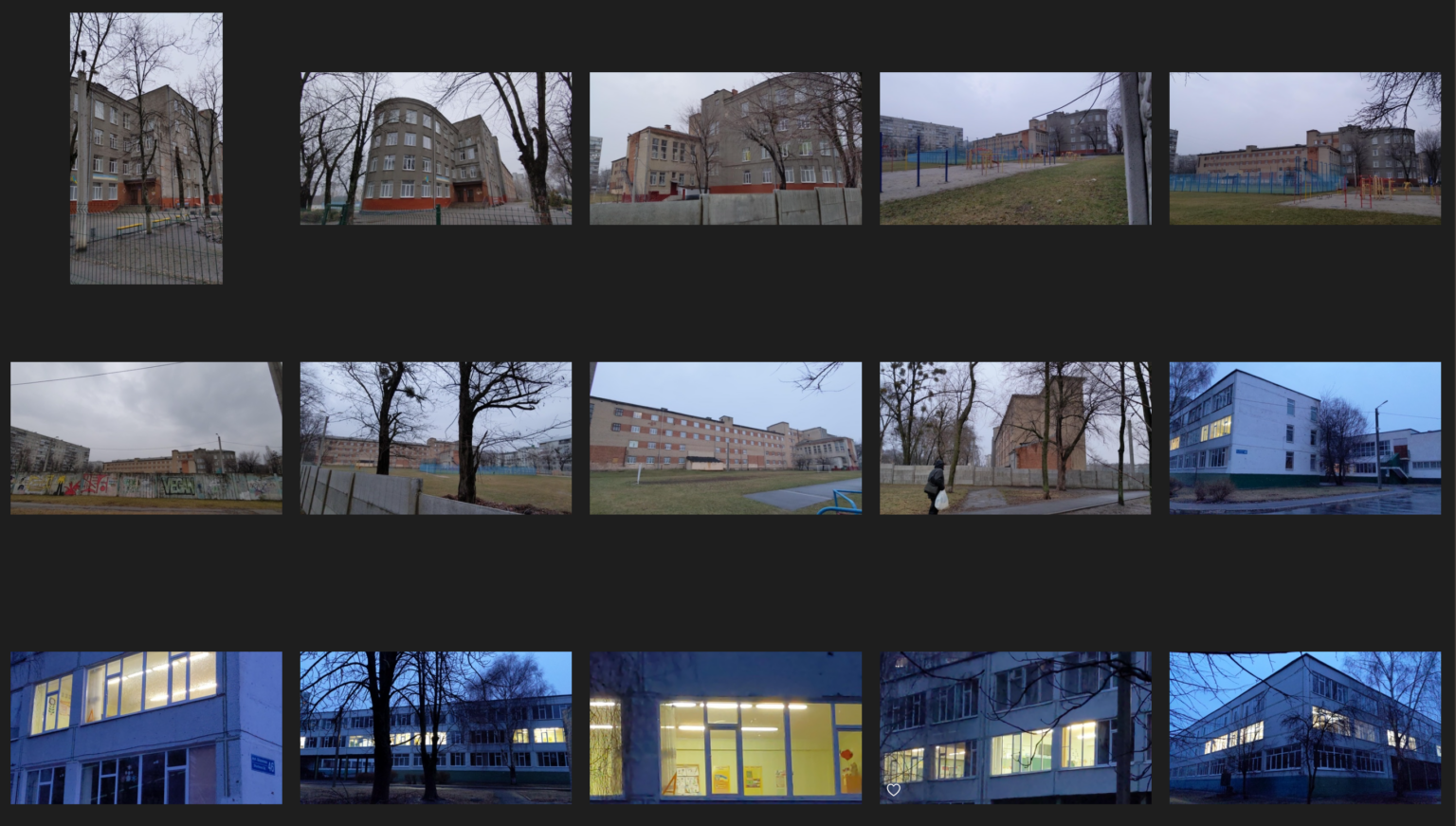

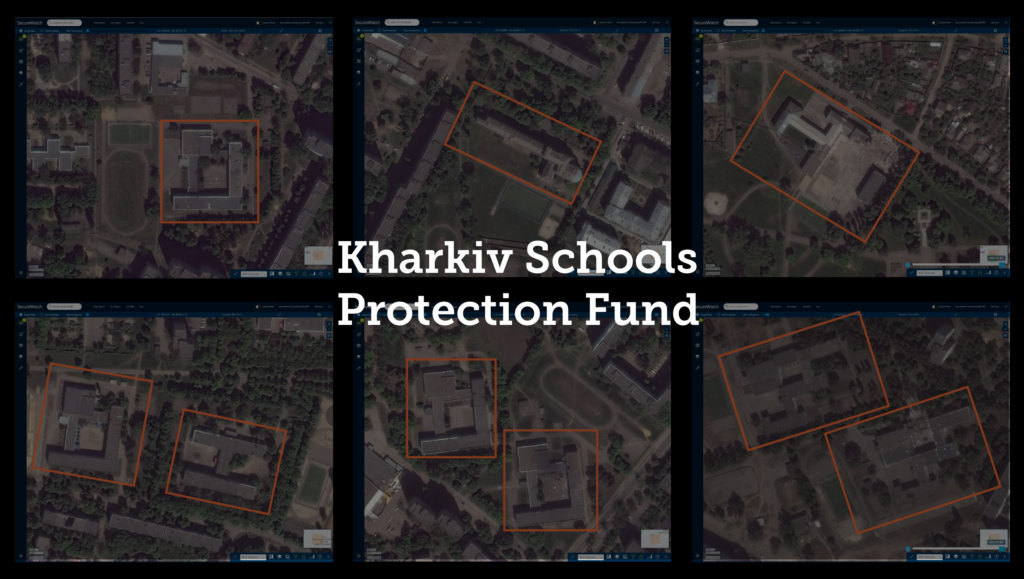

The first investigations Starling Lab supported in Ukraine were related to attacks against schools in the city of Kharkiv. With our grounding in higher education, we found the words of residents and witnesses especially disturbing:

“Where will the kids go to learn? Can they actually bomb these places?”

To address potential disinformation by Russia, the work of our legal team was to investigate and confirm that facilities had not been co-opted by Ukrainian military personnel – an after-the-fact task fraught with challenges.

In support of the Safe Schools Declaration, an initiative joined by over 100 countries, we sought to empower local communities to lead or crowdsource their own preventative documentation of schools. This entailed frequently visiting campus surroundings in order to document the absence of armed forces and thus show that the schools remain protected under international humanitarian law.

As a pilot, we organized two weeks of regular “rounds” at several schools in Kharkiv by designated photographers, who were tasked to document the surroundings and insides of the schools. This approach followed recommendations of the Declaration to “make every effort at a national level to collect reliable relevant data on attacks on educational facilities, on the victims of attacks, and on military use of schools and universities during armed conflict,” as well as its numerous guidelines, which in short recommend that armed forces of a country at war never use schools or educational facilities.

However, broadening the pool of documenters poses questions regarding how easily they can adopt technologies with robust authentication features. Our research questions turned to tool ease of use and accessibility with minimal training – important as we seek to understand the burdens placed on both the viewer of authenticated media and the creator.

The prototype was piloted in January 2023 in Kharkiv, where Starling engaged two local photographers. They went into the field with only their Android smartphones. Our prototype app was side-loaded onto each phone as a custom APK. This setup let them preserve their message history and contacts while filing their photographs with the Starling Lab.

The field work was completed in time to present the Lab’s findings and methodologies in a joint submission with Hala to the United Nations Special Rapporteur on the right to education, as well as in thematic presentations at the World Economic Forum 2023.

Framework

The Challenge

This project’s aims were two-fold:

- Can we devise a lightweight solution permitting the secure capture and transport of authenticated photos and videos?

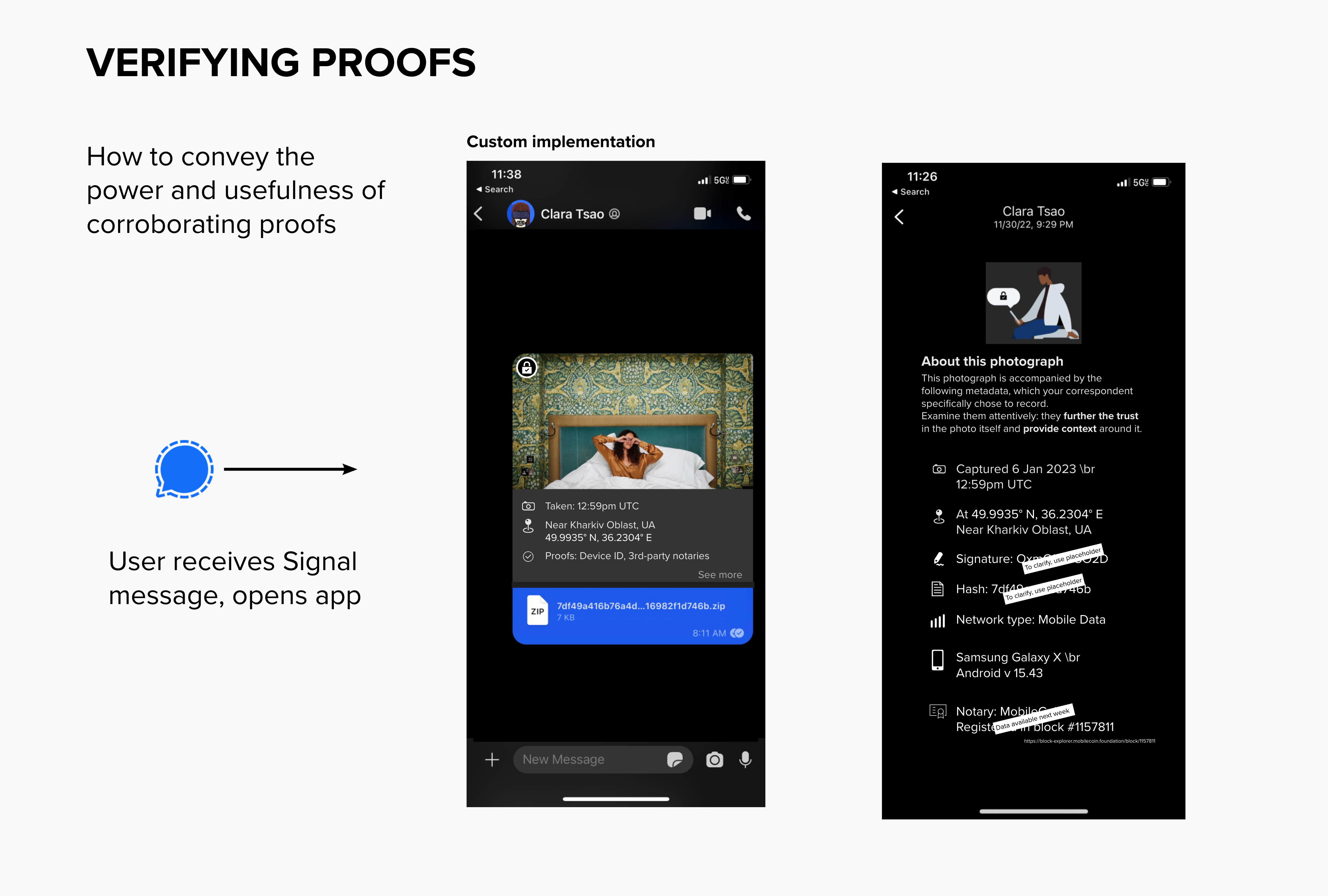

- What could a user interface presenting contextual and authentication information look like, inside a social messaging application? What data points, if any, further a person’s trust in what they’re seeing?

These questions demonstrate our commitment to approach information systems with the perspective of Authenticity-by-Design.

The Prototype

ProofMode is a software tool and smartphone app for authenticated media capture, developed by the Guardian Project, Okthanks and WITNESS. It was already used in several of our Lab’s projects when its developers released it as a software library for integration into any app. This spurred us to build a proof-of-concept combining its technology with a fork of the code for Signal Messenger. Signal is a popular global messaging service known for offering end-to-end encryption and open source code, and has also been used in Lab projects for secure communications and media transport.

At its core, ProofMode reverses a common approach to combating misinformation and deepfakes. Rather than identifying and debunking fake content, ProofMode promotes trust in what is genuine by providing a means to strongly authenticate the multimedia it generates. It accomplishes this by recording numerous pieces of metadata, which serve as corroborating information supporting media captured in the field. In short, ProofMode helps users to trust the real rather than questioning the fake.

By incorporating the recently-released libProofMode library, we were able to introduce a streamlined experience natively within Signal to create verifiable, provenance-laden media using the app’s camera function.

The resulting app enabled us to deliver strongly-authenticated photographs with industry-leading privacy for both sender and recipient. In this system, multimedia content could be authenticated at the point of capture on a smartphone, then later verified by a recipient. It utilizes enhanced sensor-driven metadata, hardware fingerprinting, cryptographic signing, and third-party notaries to enable a pseudonymous, decentralized approach to the need for chain-of-custody and “proof” by activists and everyday people alike.

Technology

Capture

Strongly-authenticated photographs are captured directly in the custom Signal app. Tapping the in-app camera icon opens the camera, and the resulting captured media is co-located with surrounding metadata through the integration of ProofMode.

Ahead of capture, when the app was first loaded on the phone, a digital identity was created by generating an OpenPGP key pair unique to the app / device combination. This key pair is used to sign the ProofMode data files, and this signature in turn permits later attribution of a ProofMode bundle to the person who has custody of that OpenPGP key pair.

By default, ProofMode collects the following surrounding metadata at the moment of capture: hash of the capture media, information about the phone / device used, information about the device connectivity including nearest cell tower environment, device IP address, GPS coordinates and accuracy thereof, timestamp of the geolocation, and a Google SafetyCheck signature of the media attesting the integrity of the Android environment which ran the app.
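As a rough illustration of the kind of record described above, the sketch below gathers a media hash and a few surrounding data points into one structure. The field names, the `build_record` helper, the sample values, and the choice of SHA-256 are assumptions made for illustration; this is not ProofMode's actual schema.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


@dataclass
class CaptureRecord:
    """Hypothetical capture-time record; field names are illustrative only."""
    media_sha256: str
    device_model: str
    network_type: str
    ip_address: str
    latitude: float
    longitude: float
    location_accuracy_m: float
    location_timestamp: str  # ISO 8601, UTC


def build_record(media: bytes, **context) -> CaptureRecord:
    """Hash the media bytes and attach the surrounding context."""
    return CaptureRecord(media_sha256=hashlib.sha256(media).hexdigest(), **context)


record = build_record(
    b"example image bytes",
    device_model="example-phone",
    network_type="LTE",
    ip_address="192.0.2.10",  # address from the documentation-only range
    latitude=49.99,
    longitude=36.23,
    location_accuracy_m=12.0,
    location_timestamp="2023-01-15T09:30:00Z",
)
print(json.dumps(asdict(record), indent=2))
```

Keeping the media hash inside the same record as the context means that a later signature over the record also commits to the exact file it describes.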

The media hash is cryptographically registered on OpenTimestamps (a process ProofMode also calls “notarization”). The resulting anchoring on the ledger permits the demonstration that “this media existed at this time.”

After capture, the photograph and its associated ProofMode bundle are shared with a contact or group on Signal, following the app’s native UI and workflow. The resulting bundle of data, consisting of the photograph and its surrounding metadata, including the above timestamping receipt, is hashed and cryptographically signed.

Each media item shared this way is followed by an automated MobileCoin transaction, with a memo field containing the first 16 digits of the hash value of the ProofMode bundle (called “proof hash”). This permits the recipient to confirm that the ZIP shared with them is the one the sender meant to send, by comparing the hash of the data received with the hash registered on MobileCoin by the sender. MobileCoin is a micro-payments system and cryptographic ledger natively available in Signal Messenger.
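The comparison described above can be sketched as follows. This assumes the proof hash is a SHA-256 digest rendered as hexadecimal and that the memo carries its first 16 characters; the function names are hypothetical, and no MobileCoin or Signal API is called.

```python
import hashlib
import hmac

MEMO_LENGTH = 16  # leading hash characters carried in the memo, per the text


def proof_hash(bundle: bytes) -> str:
    """Hex digest of the bundle (SHA-256 is an assumption)."""
    return hashlib.sha256(bundle).hexdigest()


def memo_for(bundle: bytes) -> str:
    """Sender side: the value that would be placed in the transaction memo."""
    return proof_hash(bundle)[:MEMO_LENGTH]


def matches_memo(received_bundle: bytes, memo: str) -> bool:
    """Recipient side: recompute the hash and compare its prefix to the memo."""
    expected = proof_hash(received_bundle)[:MEMO_LENGTH]
    return hmac.compare_digest(expected, memo)


sent = b"zip bytes of the bundle"
memo = memo_for(sent)
print(matches_memo(sent, memo))                # True
print(matches_memo(sent + b"tampered", memo))  # False
```

Under the hexadecimal assumption, a 16-character prefix carries 64 bits of the digest: enough to catch a mix-up or a casual substitution, though weaker than comparing the full hash.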

For the specific purposes of this project and prototype, these features can complement Signal’s feature set: to not only encrypt, but also authenticate self-generated assets using cryptographic hashes and signatures. Embedded natively in the app, these road-tested tools can protect and notarize photos at their source so they have a better chance of being trusted as they move through chaotic information environments.

Store

This project focused on the Capture and Verify phases, without requiring integration of a long-term preservation strategy for the multimedia assets. Beyond the proof of concept and prototype, however, routine storage considerations were addressed:

The files shared by the in-country team with Starling were automatically validated upon receipt by our signald Signal client. Custody of the files was asserted and matched to the photographer’s previously provisioned JSON Web Token (JWT). After these authenticated bundles were validated, they were preserved and encrypted at rest in Starling’s storage pools.

Non-critical metadata (hash values of the media and bundles) was registered on cryptographic ledgers, acting as immutable third-party record holders and timestamp anchors. This included the Numbers blockchain, Avalanche, and the International Standard Content Number through LikeCoin.

Verify

We designed a bespoke user interface inside Signal’s conversation view to demonstrate what surfacing contextual metadata about photos shared in-app could look like for all users. This data comes from ProofMode’s own surrounding environment metadata snapshots, as well as from several third-party record holders (called notaries by ProofMode).

A key element in demonstrating that the files have not been tampered with is the aforementioned distribution of integrity data on third-party distributed (and immutable) ledgers. This permits verification of hash values and signatures at a later stage, by comparing the present file hash with the expected values registered with third parties.
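
As an illustration of this later-stage comparison, the Python sketch below checks a file's current hash against values previously registered with several record holders. It assumes SHA-256 and assumes the registered values have already been retrieved into a dictionary; how each ledger is queried is out of scope, and `check_registrations` is a hypothetical helper, not an API of any ledger named here.

```python
import hashlib
from typing import Dict


def check_registrations(file_bytes: bytes, registered: Dict[str, str]) -> Dict[str, bool]:
    """Compare the file's current hash with the value each ledger recorded.

    `registered` maps a ledger name to the hex digest retrieved from it.
    """
    current = hashlib.sha256(file_bytes).hexdigest()
    return {ledger: value.strip().lower() == current
            for ledger, value in registered.items()}
```

Reporting a result per ledger, rather than a single pass/fail value, lets an inspector see which record holders still agree with the file in hand.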

While our use case involved communicating with an automated Signal client, we designed a rich verification and inspection UI into Signal Messenger. Both sender and receiver are presented with metadata in their normal conversation thread. Further metadata, including hashes and cryptographic signatures, is displayed in a separate “See more” screen.

This visual layer of verification, together with the micro-transaction on MobileCoin, provides an accessible, always-visible tool that presents background information about the shared media.

Learnings

Ease of use

Leveraging the widely tested and familiar user experience of Signal resulted in a prototype that was intuitive for users. The response was dramatic. Investigators in the field, lawyers, and in particular, leaders at the Department of State, indicated to us that direct Signal integration could be transformative for non-governmental organizations and citizen journalists.

Nathan Freitas, Director of Guardian Project and the ProofMode team, has over twenty years of experience providing digital security tools and training for human rights defenders around the world. He said: “Activists and journalists are already burdened with intense physical and digital threats through their work. Asking them to learn a whole new app often can be too much, or put them at more risk. Integrating provenance and authentication features into Signal means they get more benefit from an audited, vetted app most of them already have and rely upon everyday. Less is more!”

A best practice?

Starling Lab made a submission to officials at the UN’s Human Rights Council which outlined the work enabled by this prototype. The Special Rapporteur on the Right to Education noted to the Council that our efforts, along with those of collaborator Hala Systems, were an emerging good practice for documenting evidence.

At the World Economic Forum in January 2023, Starling presented the project as a means to illustrate the need for the continuation of this documentation effort – itself made possible partly by the easy deployment of a top-tier documentation tool.

Cost of maintenance

There are rolling costs to keeping the fork up to date with changes to Signal and, potentially, to ProofMode itself. Lagging behind means being shut out of the Signal main network. These are important factors to consider when starting an initiative beyond the prototype stage.

Archive

Materials related to this case study are under review and kept private for now.